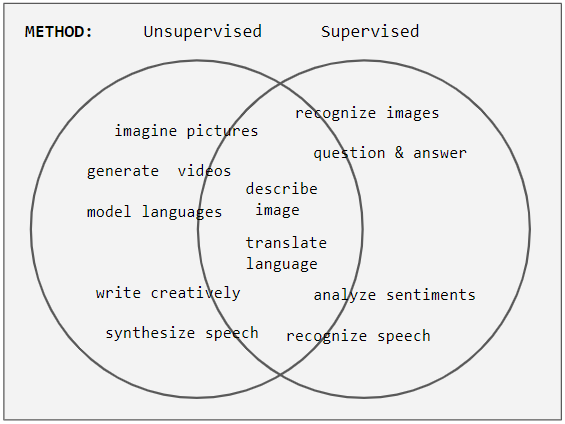

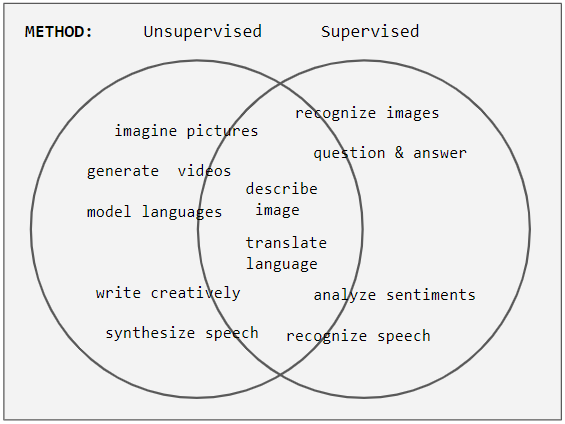

In machine learning, supervised learning (SL) is a paradigm where a model is trained using input objects (e.g. a vector of predictor variables) and desired output values (also known as a ''supervisory signal''), which are often human-made labels. The training process builds a function that maps new data to expected output values. In the optimal scenario, the algorithm accurately determines output values for unseen instances. This requires the learning algorithm to generalize from the training data to unseen situations in a reasonable way (see inductive bias). This statistical quality of an algorithm is measured via a ''generalization error''.

Steps to follow

To solve a given supervised learning problem, the following steps must be performed:

# Determine the type of training samples. Before doing anything else, the user should decide what kind of data is to be used as a training set. In the case of handwriting analysis, for example, this might be a single handwritten character, an entire handwritten word, an entire sentence of handwriting, or a full paragraph of handwriting.

# Gather a training set. The training set needs to be representative of the real-world use of the function. Thus, a set of input objects is gathered together with corresponding outputs, either from human experts or from measurements.

# Determine the input feature representation of the learned function. The accuracy of the learned function depends strongly on how the input object is represented. Typically, the input object is transformed into a feature vector, which contains a number of features that are descriptive of the object. The number of features should not be too large, because of the curse of dimensionality, but should contain enough information to accurately predict the output.

# Determine the structure of the learned function and corresponding learning algorithm. For example, one may choose to use support-vector machines or decision trees.

# Complete the design. Run the learning algorithm on the gathered training set. Some supervised learning algorithms require the user to determine certain control parameters. These parameters may be adjusted by optimizing performance on a subset (called a ''validation set'') of the training set, or via cross-validation.

# Evaluate the accuracy of the learned function. After parameter adjustment and learning, the performance of the resulting function should be measured on a test set that is separate from the training set.
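The steps above can be sketched end to end on synthetic data. The following is a minimal illustration, not a reference implementation: the two-blob data set, the 60/20/20 split, the candidate values of ''k'', and the helper names `knn_predict` and `accuracy` are all assumptions made for this example.

```python
import numpy as np

def knn_predict(X_train, y_train, X_query, k):
    """Predict labels by majority vote among the k nearest training points."""
    # Pairwise squared Euclidean distances, shape (n_query, n_train).
    d2 = ((X_query[:, None, :] - X_train[None, :, :]) ** 2).sum(axis=2)
    nearest = np.argsort(d2, axis=1)[:, :k]
    votes = y_train[nearest]  # labels of the k neighbours of each query point
    return np.array([np.bincount(row).argmax() for row in votes])

def accuracy(y_true, y_pred):
    return float(np.mean(y_true == y_pred))

rng = np.random.default_rng(0)

# Gather a (synthetic) labelled data set: two Gaussian blobs in 2-D.
n_per_class = 150
X = np.vstack([rng.normal(-2.0, 1.0, size=(n_per_class, 2)),
               rng.normal(+2.0, 1.0, size=(n_per_class, 2))])
y = np.array([0] * n_per_class + [1] * n_per_class)

# Shuffle, then split into training, validation and test sets (60/20/20).
order = rng.permutation(len(X))
X, y = X[order], y[order]
n_train, n_val = 180, 60
X_train, y_train = X[:n_train], y[:n_train]
X_val, y_val = X[n_train:n_train + n_val], y[n_train:n_train + n_val]
X_test, y_test = X[n_train + n_val:], y[n_train + n_val:]

# Adjust the control parameter k using the validation set only.
candidate_ks = [1, 3, 5, 9, 15]
val_scores = {k: accuracy(y_val, knn_predict(X_train, y_train, X_val, k))
              for k in candidate_ks}
best_k = max(val_scores, key=val_scores.get)

# Measure performance once on the separate test set.
test_accuracy = accuracy(y_test, knn_predict(X_train, y_train, X_test, best_k))
print(f"best k = {best_k}, test accuracy = {test_accuracy:.3f}")
```

Keeping the test set out of the parameter search is what makes the final number an estimate of performance on unseen data rather than a by-product of tuning.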

Algorithm choice

A wide range of supervised learning algorithms are available, each with its strengths and weaknesses. There is no single learning algorithm that works best on all supervised learning problems (see the No free lunch theorem). There are four major issues to consider in supervised learning:

Bias–variance tradeoff

A first issue is the tradeoff between ''bias'' and ''variance''. Imagine that we have available several different, but equally good, training data sets. A learning algorithm is biased for a particular input $x$ if, when trained on each of these data sets, it is systematically incorrect when predicting the correct output for $x$. A learning algorithm has high variance for a particular input $x$ if it predicts different output values when trained on different training sets. The prediction error of a learned classifier is related to the sum of the bias and the variance of the learning algorithm. Generally, there is a tradeoff between bias and variance. A learning algorithm with low bias must be "flexible" so that it can fit the data well. But if the learning algorithm is too flexible, it will fit each training data set differently, and hence have high variance. A key aspect of many supervised learning methods is that they are able to adjust this tradeoff between bias and variance (either automatically or by providing a bias/variance parameter that the user can adjust).

Function complexity and amount of training data

The second issue is the amount of training data available relative to the complexity of the "true" function (classifier or regression function). If the true function is simple, then an "inflexible" learning algorithm with high bias and low variance will be able to learn it from a small amount of data. But if the true function is highly complex (e.g., because it involves complex interactions among many different input features and behaves differently in different parts of the input space), then the function can only be learned from a large amount of training data paired with a "flexible" learning algorithm with low bias and high variance.

Dimensionality of the input space

A third issue is the dimensionality of the input space. If the input feature vectors have large dimensions, learning the function can be difficult even if the true function only depends on a small number of those features. This is because the many "extra" dimensions can confuse the learning algorithm and cause it to have high variance. Hence, input data of large dimensions typically requires tuning the classifier to have low variance and high bias. In practice, if the engineer can manually remove irrelevant features from the input data, it will likely improve the accuracy of the learned function. In addition, there are many algorithms for feature selection that seek to identify the relevant features and discard the irrelevant ones. This is an instance of the more general strategy of dimensionality reduction, which seeks to map the input data into a lower-dimensional space prior to running the supervised learning algorithm.
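As one possible illustration of mapping inputs into a lower-dimensional space, the sketch below uses principal component analysis computed with a singular value decomposition. PCA is chosen here only as a familiar example of dimensionality reduction; the text above does not prescribe it. The helper name `pca_project` and the synthetic data (two informative directions embedded in ten dimensions) are assumptions.

```python
import numpy as np

def pca_project(X, n_components):
    """Project the rows of X onto their top principal components."""
    X_centered = X - X.mean(axis=0)
    # Rows of Vt are the principal directions, ordered by singular value.
    _, singular_values, Vt = np.linalg.svd(X_centered, full_matrices=False)
    components = Vt[:n_components]
    explained = singular_values ** 2 / np.sum(singular_values ** 2)
    return X_centered @ components.T, explained[:n_components]

rng = np.random.default_rng(0)
latent = rng.normal(size=(200, 2))   # two informative directions
mixing = rng.normal(size=(2, 10))    # embedded linearly into 10 dimensions
X = latent @ mixing

Z, explained_ratio = pca_project(X, n_components=2)
print(Z.shape, explained_ratio.sum())
```

Because the synthetic data lie exactly in a two-dimensional subspace, the two retained components account for essentially all of the variance. Real data rarely behave this cleanly, so the number of components is normally treated as a control parameter to be tuned.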

Noise in the output values

A fourth issue is the degree of noise in the desired output values (the supervisory target variables). If the desired output values are often incorrect (because of human error or sensor errors), then the learning algorithm should not attempt to find a function that exactly matches the training examples. Attempting to fit the data too carefully leads to overfitting. It is possible to overfit even when there are no measurement errors (stochastic noise) if the function being learned is too complex for the learning model. In such a situation, the part of the target function that cannot be modeled "corrupts" the training data; this phenomenon has been called deterministic noise. When either type of noise is present, it is better to go with a higher bias, lower variance estimator.
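The bias and variance terms used in this section can be made concrete with a small simulation. The sketch below is illustrative only: the sine target, the noise level, the polynomial degrees, and the function name `bias_variance` are assumptions chosen for the example. It repeatedly draws noisy training sets, fits a polynomial of a given degree, and estimates the squared bias and the variance of the predictions.

```python
import numpy as np

def true_function(x):
    return np.sin(2 * np.pi * x)

def bias_variance(degree, noise_std, n_trials=200, seed=0):
    """Estimate squared bias and variance of polynomial least-squares fits."""
    rng = np.random.default_rng(seed)
    x = np.linspace(0.0, 1.0, 20)  # fixed inputs shared by all training sets
    target = true_function(x)
    predictions = np.empty((n_trials, x.size))
    for t in range(n_trials):
        # Each trial is a fresh training set with independent output noise.
        y_noisy = target + rng.normal(0.0, noise_std, size=x.size)
        coeffs = np.polyfit(x, y_noisy, degree)
        predictions[t] = np.polyval(coeffs, x)
    mean_prediction = predictions.mean(axis=0)
    bias_sq = float(np.mean((mean_prediction - target) ** 2))
    variance = float(np.mean(predictions.var(axis=0)))
    return bias_sq, variance

rigid = bias_variance(degree=1, noise_std=0.3)
flexible = bias_variance(degree=5, noise_std=0.3)
print("degree 1: bias^2 = %.4f, variance = %.4f" % rigid)
print("degree 5: bias^2 = %.4f, variance = %.4f" % flexible)
```

In this setup the straight-line fit has the larger squared bias and the degree-5 fit has the larger variance. Raising `noise_std` inflates the variance term of both, with the flexible model paying more, which is the situation in which a higher-bias estimator becomes preferable.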

In practice, there are several approaches to alleviate noise in the output values, such as early stopping to prevent overfitting, as well as detecting and removing the noisy training examples prior to training the supervised learning algorithm. There are several algorithms that identify noisy training examples, and removing the suspected noisy training examples prior to training has decreased generalization error with statistical significance.

Other factors to consider

Other factors to consider when choosing and applying a learning algorithm include the following:

* Heterogeneity of the data. If the feature vectors include features of many different kinds (discrete, discrete ordered, counts, continuous values), some algorithms are easier to apply than others. Many algorithms, including support-vector machines, linear regression, logistic regression, neural networks, and nearest neighbor methods, require that the input features be numerical and scaled to similar ranges (e.g., to the [-1, 1] interval). Methods that employ a distance function, such as nearest neighbor methods and support-vector machines with Gaussian kernels, are particularly sensitive to this. An advantage of decision trees is that they easily handle heterogeneous data.

* Redundancy in the data. If the input features contain redundant information (e.g., highly correlated features), some learning algorithms (e.g., linear regression, logistic regression, and distance-based methods) will perform poorly because of numerical instabilities. These problems can often be solved by imposing some form of regularization.

* Presence of interactions and non-linearities. If each of the features makes an independent contribution to the output, then algorithms based on linear functions (e.g., linear regression, logistic regression, support-vector machines, naive Bayes) and distance functions (e.g., nearest neighbor methods, support-vector machines with Gaussian kernels) generally perform well. However, if there are complex interactions among features, then algorithms such as decision trees and neural networks work better, because they are specifically designed to discover these interactions. Linear methods can also be applied, but the engineer must manually specify the interactions when using them.
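The requirement that numerical features be scaled to similar ranges can be illustrated with a simple min-max rescaling to the [-1, 1] interval. This is a sketch under assumptions: the function names `fit_minmax` and `scale_to_unit_interval` and the toy data are hypothetical, and other schemes (such as standardizing to zero mean and unit variance) are equally common.

```python
import numpy as np

def fit_minmax(X_train):
    """Record the per-feature minimum and maximum of the training data."""
    return X_train.min(axis=0), X_train.max(axis=0)

def scale_to_unit_interval(X, feature_min, feature_max):
    """Map each feature linearly so that the training range becomes [-1, 1]."""
    # Constant features get a span of 1 to avoid dividing by zero.
    span = np.where(feature_max > feature_min, feature_max - feature_min, 1.0)
    return 2.0 * (X - feature_min) / span - 1.0

# Two features on very different scales.
X_train = np.array([[1.0, 1000.0],
                    [2.0, 3000.0],
                    [3.0, 5000.0]])
feature_min, feature_max = fit_minmax(X_train)
X_scaled = scale_to_unit_interval(X_train, feature_min, feature_max)
print(X_scaled)
```

The minimum and maximum are taken from the training set and then reused for any later data, so that validation and test inputs are transformed in exactly the same way.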

When considering a new application, the engineer can compare multiple learning algorithms and experimentally determine which one works best on the problem at hand (see cross-validation). Tuning the performance of a learning algorithm can be very time-consuming. Given fixed resources, it is often better to spend more time collecting additional training data and more informative features than it is to spend extra time tuning the learning algorithms.

Algorithms

The most widely used learning algorithms are:

* Support-vector machines

* Linear regression

* Logistic regression

* Naive Bayes

* Linear discriminant analysis

* Decision trees

* ''k''-nearest neighbors algorithm

* Neural networks (e.g., Multilayer perceptron)

* Similarity learning

How supervised learning algorithms work

Given a set of $N$ training examples of the form $\{(x_1, y_1), \ldots, (x_N, y_N)\}$ such that $x_i$ is the feature vector of the $i$-th example and $y_i$ is its label (i.e., class), a learning algorithm seeks a function $g : X \to Y$, where $X$ is the input space and $Y$ is the output space. The function $g$ is an element of some space of possible functions $G$, usually called the ''hypothesis space''. It is sometimes convenient to represent $g$ using a scoring function $f : X \times Y \to \mathbb{R}$ such that $g$ is defined as returning the $y$ value that gives the highest score: $g(x) = \arg\max_{y} f(x, y)$. Let $F$ denote the space of scoring functions.

Although $G$ and $F$ can be any space of functions, many learning algorithms are probabilistic models where $g$ takes the form of a conditional probability model $g(x) = \arg\max_{y} P(y \mid x)$, or $f$ takes the form of a joint probability model $f(x, y) = P(x, y)$. For example, naive Bayes and linear discriminant analysis are joint probability models, whereas logistic regression is a conditional probability model.

There are two basic approaches to choosing $f$ or $g$: empirical risk minimization and structural risk minimization. Empirical risk minimization seeks the function that best fits the training data. Structural risk minimization includes a ''penalty function'' that controls the bias/variance tradeoff.

In both cases, it is assumed that the training set consists of a sample of independent and identically distributed pairs, $(x_i, y_i)$. In order to measure how well a function fits the training data, a loss function $L : Y \times Y \to \mathbb{R}^{\geq 0}$ is defined. For training example $(x_i, y_i)$, the loss of predicting the value $\hat{y}$ is $L(y_i, \hat{y})$.

The ''risk'' $R(g)$ of function $g$ is defined as the expected loss of $g$. This can be estimated from the training data as

:$R_{emp}(g) = \frac{1}{N} \sum_{i=1}^{N} L(y_i, g(x_i))$.
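The empirical risk is simply the average loss over the training pairs, which can be sketched in a few lines. The loss functions, the toy examples, and the two predictors below are assumptions introduced for illustration.

```python
def zero_one_loss(y_true, y_pred):
    """Loss of 1 for a wrong label, 0 for a correct one (classification)."""
    return 0.0 if y_true == y_pred else 1.0

def squared_loss(y_true, y_pred):
    """Squared difference between target and prediction (regression)."""
    return (y_true - y_pred) ** 2

def empirical_risk(g, examples, loss):
    """Average loss of predictor g over a list of (x, y) training pairs."""
    return sum(loss(y, g(x)) for x, y in examples) / len(examples)

examples = [(0.0, 0), (1.0, 0), (2.0, 1), (3.0, 1)]

def threshold_classifier(x):
    return 1 if x >= 1.0 else 0

def linear_predictor(x):
    return 0.5 * x - 0.5

print(empirical_risk(threshold_classifier, examples, zero_one_loss))  # 0.25
print(empirical_risk(linear_predictor, examples, squared_loss))       # 0.125
```

The threshold classifier mislabels only the example at `x = 1.0`, giving a risk of one in four; the linear predictor is off by 0.5 on two of the four examples, giving a mean squared loss of 0.125.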

Empirical risk minimization

In empirical risk minimization, the supervised learning algorithm seeks the function $g$ that minimizes $R_{emp}(g)$. Hence, a supervised learning algorithm can be constructed by applying an optimization algorithm to find $g$.

When $g$ is a conditional probability distribution $P(y \mid x)$ and the loss function is the negative log likelihood, $L(y, \hat{y}) = -\log P(y \mid x)$, then empirical risk minimization is equivalent to maximum likelihood estimation.
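The equivalence can be spelled out in a short derivation. The sketch below writes the model distribution as $P_g(y \mid x)$, a notation introduced here only to make the dependence on $g$ explicit, and relies on the i.i.d. assumption stated earlier and on the logarithm being monotonically increasing.

```latex
\begin{aligned}
\hat{g} &= \arg\min_{g \in G} \frac{1}{N} \sum_{i=1}^{N} -\log P_g(y_i \mid x_i) \\
        &= \arg\max_{g \in G} \sum_{i=1}^{N} \log P_g(y_i \mid x_i) \\
        &= \arg\max_{g \in G} \prod_{i=1}^{N} P_g(y_i \mid x_i)
\end{aligned}
```

The last line is the likelihood of the observed labels under the model, so the minimizer of the empirical risk is the maximum likelihood estimate.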

When $G$ contains many candidate functions or the training set is not sufficiently large, empirical risk minimization leads to high variance and poor generalization. The learning algorithm is able to memorize the training examples without generalizing well (overfitting).

Structural risk minimization

Structural risk minimization seeks to prevent overfitting by incorporating a regularization penalty into the optimization. The regularization penalty can be viewed as implementing a form of Occam's razor that prefers simpler functions over more complex ones.

A wide variety of penalties have been employed that correspond to different definitions of complexity. For example, consider the case where the function $g$ is a linear function of the form

:$g(x) = \sum_{j=1}^{d} \beta_j x_j$.

A popular regularization penalty is $\sum_j \beta_j^2$, which is the squared Euclidean norm of the weights, also known as the $L_2$ norm. Other norms include the $L_1$ norm, $\sum_j |\beta_j|$, and the $L_0$ "norm", which is the number of non-zero $\beta_j$s. The penalty will be denoted by $C(g)$.

The supervised learning optimization problem is to find the function $g$ that minimizes

:$J(g) = R_{emp}(g) + \lambda C(g)$.

The parameter $\lambda$ controls the bias-variance tradeoff. When $\lambda = 0$, this gives empirical risk minimization with low bias and high variance. When $\lambda$ is large, the learning algorithm will have high bias and low variance. The value of $\lambda$ can be chosen empirically via cross-validation.
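For a linear function with squared loss and the squared Euclidean norm as penalty, the minimizer of the penalized objective has a closed form (ridge regression). The sketch below is one illustration of how the penalty weight shapes the solution; the synthetic data, the grid of penalty values, and the function names `ridge_fit` and `empirical_risk` are assumptions.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Minimise (1/N) * ||X b - y||^2 + lam * ||b||^2 in closed form."""
    n_samples, n_features = X.shape
    # Setting the gradient to zero gives (X^T X / N + lam * I) b = X^T y / N.
    A = X.T @ X / n_samples + lam * np.eye(n_features)
    return np.linalg.solve(A, X.T @ y / n_samples)

def empirical_risk(X, y, beta):
    return float(np.mean((X @ beta - y) ** 2))

rng = np.random.default_rng(0)
X = rng.normal(size=(30, 10))
true_beta = np.zeros(10)
true_beta[:3] = [2.0, -1.0, 0.5]  # only three features matter
y = X @ true_beta + rng.normal(0.0, 0.5, size=30)

lambdas = [0.0, 0.1, 1.0, 10.0]
norms, risks = [], []
for lam in lambdas:
    beta = ridge_fit(X, y, lam)
    norms.append(float(np.sum(beta ** 2)))
    risks.append(empirical_risk(X, y, beta))
    print(f"lambda = {lam:5.1f}  penalty = {norms[-1]:.3f}  training risk = {risks[-1]:.3f}")
```

As the penalty weight grows, the squared norm of the weights shrinks and the training risk rises: the fit becomes less flexible, trading variance for bias. Which value generalizes best cannot be read off the training risk and is usually decided on held-out data.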

The complexity penalty has a Bayesian interpretation as the negative log prior probability of $g$, $-\log P(g)$, in which case minimizing $J(g)$ with a negative log likelihood loss corresponds to maximizing the posterior probability of $g$.

Generative training

The training methods described above are ''discriminative training'' methods, because they seek to find a function $g$ that discriminates well between the different output values (see discriminative model). For the special case where $f(x, y) = P(x, y)$ is a joint probability distribution and the loss function is the negative log likelihood $-\sum_i \log P(x_i, y_i)$, a risk minimization algorithm is said to perform ''generative training'', because $f$ can be regarded as a generative model that explains how the data were generated. Generative training algorithms are often simpler and more computationally efficient than discriminative training algorithms. In some cases, the solution can be computed in closed form, as in naive Bayes and linear discriminant analysis.
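A closed-form generative fit can be sketched with a Gaussian naive Bayes model, in which training reduces to computing per-class priors, means, and variances. The Gaussian form of the class-conditional densities, the toy data, and the function names are assumptions made for this example; other naive Bayes variants use different densities.

```python
import numpy as np

def fit_gaussian_nb(X, y):
    """Closed-form maximum-likelihood estimates for Gaussian naive Bayes."""
    params = {}
    for label in np.unique(y):
        rows = X[y == label]
        params[int(label)] = {
            "prior": len(rows) / len(X),
            "mean": rows.mean(axis=0),
            "var": rows.var(axis=0) + 1e-9,  # small floor avoids division by zero
        }
    return params

def log_joint(x, p):
    """log P(x, y) = log P(y) + sum_j log N(x_j; mean_j, var_j)."""
    log_likelihood = -0.5 * np.sum(np.log(2 * np.pi * p["var"])
                                   + (x - p["mean"]) ** 2 / p["var"])
    return np.log(p["prior"]) + log_likelihood

def predict(x, params):
    """Return the label whose joint score log P(x, y) is highest."""
    return max(params, key=lambda label: log_joint(x, params[label]))

X = np.array([[0, 0], [1, 0], [0, 1], [1, 1],
              [5, 5], [6, 5], [5, 6], [6, 6]], dtype=float)
y = np.array([0, 0, 0, 0, 1, 1, 1, 1])

params = fit_gaussian_nb(X, y)
print(predict(np.array([0.5, 0.5]), params), predict(np.array([5.5, 5.5]), params))  # 0 1
```

No iterative optimization is involved: the parameters are sample statistics, and prediction selects the label with the highest joint score, in line with the scoring-function view of a joint probability model.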

Generalizations

There are several ways in which the standard supervised learning problem can be generalized:

* Semi-supervised learning or weak supervision: the desired output values are provided only for a subset of the training data. The remaining data is unlabeled or imprecisely labeled.

* Active learning: Instead of assuming that all of the training examples are given at the start, active learning algorithms interactively collect new examples, typically by making queries to a human user. Often, the queries are based on unlabeled data, which is a scenario that combines semi-supervised learning with active learning.

* Structured prediction: When the desired output value is a complex object, such as a parse tree or a labeled graph, then standard methods must be extended.

* Learning to rank: When the input is a set of objects and the desired output is a ranking of those objects, then again the standard methods must be extended.

Approaches and algorithms

* Analytical learning *Artificial neural network

In machine learning, a neural network (also artificial neural network or neural net, abbreviated ANN or NN) is a computational model inspired by the structure and functions of biological neural networks.

A neural network consists of connected ...

* Backpropagation

In machine learning, backpropagation is a gradient computation method commonly used for training a neural network to compute its parameter updates.

It is an efficient application of the chain rule to neural networks. Backpropagation computes th ...

* Boosting (meta-algorithm)

In machine learning (ML), boosting is an ensemble metaheuristic for primarily reducing bias (as opposed to variance). It can also improve the stability and accuracy of ML classification and regression algorithms. Hence, it is prevalent in supe ...

* Bayesian statistics

Bayesian statistics ( or ) is a theory in the field of statistics based on the Bayesian interpretation of probability, where probability expresses a ''degree of belief'' in an event. The degree of belief may be based on prior knowledge about ...

* Case-based reasoning

Case-based reasoning (CBR), broadly construed, is the process of solving new problems based on the solutions of similar past problems.

In everyday life, an auto mechanic who fixes an engine by recalling another car that exhibited similar sympto ...

* Decision tree learning

Decision tree learning is a supervised learning approach used in statistics, data mining and machine learning. In this formalism, a classification or regression decision tree is used as a predictive model to draw conclusions about a set of obser ...

* Inductive logic programming

Inductive logic programming (ILP) is a subfield of symbolic artificial intelligence which uses logic programming as a uniform representation for examples, background knowledge and hypotheses. The term "''inductive''" here refers to philosophical ...

* Gaussian process regression

In statistics, originally in geostatistics, kriging or Kriging, also known as Gaussian process regression, is a method of interpolation based on a Gaussian process governed by prior covariances. Under suitable assumptions of the prior, kriging g ...

* Genetic programming

Genetic programming (GP) is an evolutionary algorithm, an artificial intelligence technique mimicking natural evolution, which operates on a population of programs. It applies the genetic operators selection a ...

* Group method of data handling

* Kernel estimators

* Learning automata

* Learning classifier systems

* Learning vector quantization

* Minimum message length (decision trees, decision graphs, etc.)

A decision tree is a decision support recursive partitioning structure that uses a tree-like model of decisions and their possible consequences, including chance event ou ...

* Multilinear subspace learning

Multilinear subspace learning is an approach for disentangling the causal factor of data formation and performing dimensionality reduction. [M. A. O. Vasilescu, D. Terzopoulos (2003), "Multilinear Subspace Analysis of Image Ensembles", Proceedings of ...]

* Naive Bayes classifier

In statistics, naive (sometimes simple or idiot's) Bayes classifiers are a family of "probabilistic classifiers" which assume that the features are conditionally independent, given the target class. In other words, a naive Bayes model assumes th ...

* Maximum entropy classifier

* Conditional random field

Conditional random fields (CRFs) are a class of statistical modeling methods often applied in pattern recognition and machine learning and used for structured prediction. Whereas a classifier predicts a label for a single sample without consi ...

* Nearest neighbor algorithm

* Probably approximately correct (PAC) learning

In computational learning theory, probably approximately correct (PAC) learning is a framework for mathematical analysis of machine learning. It was proposed in 1984 by Leslie Valiant. [L. Valiant, "A theory of the learnable", Communications of the ...]

* Ripple down rules, a knowledge acquisition methodology

Ripple-down rules (RDR) are a way of approaching knowledge acquisition. Knowledge acquisition refers to the transfer of knowledge from human experts to knowledge-based systems.

Ripple-down rules are an incremental approach ...

* Symbolic machine learning algorithms

* Subsymbolic machine learning algorithms

* Support vector machines

In machine learning, support vector machines (SVMs, also support vector networks) are supervised max-margin models with associated learning algorithms that analyze data for classification and regression analysis. Developed at AT&T Bell Laborato ...

* Minimum complexity machines (MCM)

* Random forests

Random forests or random decision forests is an ensemble learning method for classification, regression and other tasks that works by creating a multitude of decision trees ...

* Ensembles of classifiers

* Ordinal classification

* Data pre-processing

* Handling imbalanced datasets

* Statistical relational learning

Statistical relational learning (SRL) is a subdiscipline of artificial intelligence and machine learning that is concerned with domain models that exhibit both uncertainty (which can be dealt with using statistical methods) and complex, relational ...

* Proaftn, a multicriteria classification algorithm
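As one concrete illustration of the approaches listed above, the conditional independence assumption behind the naive Bayes classifier can be sketched in a few lines of plain Python. This is a minimal sketch, not a reference implementation: the function names `train_naive_bayes` and `predict_naive_bayes`, the toy weather data, and the use of add-one (Laplace) smoothing are all assumptions made for the example and do not come from the text.

```python
import math
from collections import Counter, defaultdict


def train_naive_bayes(samples, labels):
    """Count class frequencies and per-class feature-value frequencies."""
    class_counts = Counter(labels)
    # value_counts[label][feature_index][value] -> number of occurrences
    value_counts = defaultdict(lambda: defaultdict(Counter))
    # vocab[feature_index] -> set of distinct values seen for that feature
    vocab = defaultdict(set)
    for features, label in zip(samples, labels):
        for i, value in enumerate(features):
            value_counts[label][i][value] += 1
            vocab[i].add(value)
    return class_counts, value_counts, vocab


def predict_naive_bayes(model, features):
    """Return the class with the highest log-posterior score."""
    class_counts, value_counts, vocab = model
    total = sum(class_counts.values())
    best_label, best_score = None, -math.inf
    for label, count in class_counts.items():
        # Log prior of the class.
        score = math.log(count / total)
        # Naive assumption: features are independent given the class,
        # so their log-likelihoods simply add up.
        for i, value in enumerate(features):
            numerator = value_counts[label][i][value] + 1  # add-one smoothing
            denominator = count + len(vocab[i])
            score += math.log(numerator / denominator)
        if score > best_score:
            best_label, best_score = label, score
    return best_label


# Hypothetical toy data: (outlook, temperature) -> play outside?
samples = [("sunny", "hot"), ("sunny", "mild"), ("rainy", "mild"), ("rainy", "cool")]
labels = ["no", "no", "yes", "yes"]
model = train_naive_bayes(samples, labels)
print(predict_naive_bayes(model, ("rainy", "cool")))
print(predict_naive_bayes(model, ("sunny", "hot")))
```

Working in log space avoids numerical underflow when many feature likelihoods are multiplied, and the smoothing term keeps an unseen feature value from forcing a class probability to zero.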

Applications

* Bioinformatics

Bioinformatics is an interdisciplinary field of science that develops methods and software tools for understanding biological data, especially when the data sets are large and complex. Bioinformatics uses biology, ...

* Cheminformatics

Cheminformatics (also known as chemoinformatics) refers to the use of physical chemistry theory with computer and information science techniques (so-called "''in silico''" techniques) in application to a range of descriptive and prescriptive ...

** Quantitative structure–activity relationship

Quantitative structure–activity relationship models (QSAR models) are regression or classification models used in the chemical and biological sciences and engineering. Like other regression models, QSAR regression models relate a set of "predi ...

* Database marketing

Database marketing is a form of direct marketing that uses databases of customers or potential customers to generate personalized communications in order to promote a product or service for marketing purposes. The method of communication can be an ...

* Handwriting recognition

Handwriting recognition (HWR), also known as handwritten text recognition (HTR), is the ability of a computer to receive and interpret intelligible handwritten input from sources such as paper documents, photographs, touch-screens ...

* Information retrieval

Information retrieval (IR) in computing and information science is the task of identifying and retrieving information system resources that are relevant to an information need. The information need can be specified in the form ...

** Learning to rank

Learning to rank [slides from Tie-Yan Liu's talk at the WWW 2009 conference are available online] or machine-learned ranking (MLR) is the application of machine learning, typically supervised, semi-supervi ...

* Information extraction

* Object recognition in computer vision

Computer vision tasks include methods for acquiring, processing, analyzing, and understanding digital images, and extraction of high-dimensional data from the real world in order to produce numerical ...

* Optical character recognition

Optical character recognition or optical character reader (OCR) is the electronic or mechanical conversion of images of typed, handwritten or printed text into machine-encoded text, whether from a scanned document, a photo ...

* Spam detection

* Pattern recognition

Pattern recognition is the task of assigning a class to an observation based on patterns extracted from data. While similar, pattern recognition (PR) is not to be confused with pattern machines (PM) which may possess PR capabilities but their p ...

* Speech recognition

Speech recognition is an interdisciplinary subfield of computer science and computational linguistics that develops methodologies and technologies that enable the recognition and translation of spoken language into text by computers. It is also ...

* Supervised learning is a special case of downward causation in biological systems

In philosophy, downward causation is a causal relationship from higher levels of a system to lower-level parts of that system: for example, mental events acting to cause physical events. The term was originally coined in 1974 by the philosopher and ...

* Landform classification using satellite imagery

Satellite images (also Earth observation imagery, spaceborne photography, or simply satellite photo) are images of Earth collected by imaging satellites operated by governments and businesses around the world. Satellite imaging companies sell im ...

* Spend classification in procurement processes

Procurement is the process of locating and agreeing to terms and purchasing goods, services, or other works from an external source, often with the use of a tendering or competitive bidding process. The term may also refer to a contractual ...

General issues

* Computational learning theory

In computer science, computational learning theory (or just learning theory) is a subfield of artificial intelligence devoted to studying the design and analysis of machine learning algorithms.

Theoretical results in machine learning m ...

* Inductive bias

* Overfitting

In mathematical modeling, overfitting is "the production of an analysis that corresponds too closely or exactly to a particular set of data, and may therefore fail to fit to additional data or predict future observations reliably". An overfi ...

* (Uncalibrated) class membership probabilities

* Version spaces

Version space learning is a logical approach to machine learning, specifically binary classification. Version space learning algorithms search a predefined space of hypotheses, viewed as a set of logical sentences. Formally, the hypothesis space i ...
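The overfitting issue listed above can be made concrete with a small experiment. The sketch below is only an illustration under stated assumptions: the data (a linear trend `y = 2x` with alternating noise of plus or minus one), the helper names `fit_line`, `fit_memorizer` and `mse`, and the choice of a nearest-point lookup as the overfitting model are all hypothetical and not taken from the text. It compares a model that reproduces the training set exactly with an ordinary least-squares line, on both the training points and held-out points.

```python
def mse(model, xs, ys):
    """Mean squared error of a model (a callable) on the given points."""
    return sum((model(x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def fit_line(xs, ys):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return lambda x: slope * x + intercept


def fit_memorizer(xs, ys):
    """Return the stored target of the closest training input (fits noise exactly)."""
    table = list(zip(xs, ys))
    return lambda x: min(table, key=lambda pair: abs(pair[0] - x))[1]


# Hypothetical data: underlying trend y = 2x with noise alternating +1 / -1.
train_x = [0, 2, 4, 6, 8, 10]
train_y = [1, 3, 9, 11, 17, 19]
test_x = [0.5, 2.5, 4.5, 6.5, 8.5]
test_y = [0, 6, 8, 14, 16]

line = fit_line(train_x, train_y)
memorizer = fit_memorizer(train_x, train_y)

for name, model in [("line", line), ("memorizer", memorizer)]:
    print(name,
          "train MSE:", round(mse(model, train_x, train_y), 3),
          "test MSE:", round(mse(model, test_x, test_y), 3))
```

The memorizing model reaches zero error on the training set but a higher error than the line on the held-out points, which is the pattern the term overfitting describes: the fit corresponds too closely to one particular data set and predicts new observations less reliably.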

See also

* List of datasets for machine-learning research

These datasets are used in machine learning (ML) research and have been cited in peer-reviewed academic journals. Datasets are an integral part of the field of machine learning. Major advances in this field can result from advances in learni ...

* Unsupervised learning

Unsupervised learning is a framework in machine learning where, in contrast to supervised learning, algorithms learn patterns exclusively from unlabeled data. Other frameworks in the spectrum of supervisions include weak- or semi-supervision, wh ...

External links

Machine Learning Open Source Software (MLOSS)
