Bioinformatics is an interdisciplinary field that develops methods and software tools for understanding biological data, in particular when the data sets are large and complex. As an interdisciplinary field of science, bioinformatics combines biology, chemistry, physics, computer science, information engineering, mathematics and statistics to analyze and interpret biological data. Bioinformatics has been used for ''in silico'' analyses of biological queries using computational and statistical techniques.

Bioinformatics includes biological studies that use computer programming as part of their methodology, as well as specific analysis "pipelines" that are repeatedly used, particularly in the field of genomics. Common uses of bioinformatics include the identification of candidate genes and single nucleotide polymorphisms (SNPs). Often, such identification is made with the aim to better understand the genetic basis of disease, unique adaptations, desirable properties (esp. in agricultural species), or differences between populations. In a less formal way, bioinformatics also tries to understand the organizational principles within nucleic acid and protein sequences, called proteomics.

Image and signal processing allow extraction of useful results from large amounts of raw data. In the field of genetics, bioinformatics aids in sequencing and annotating genomes and their observed mutations. It plays a role in the text mining of biological literature and the development of biological and gene ontologies to organize and query biological data. It also plays a role in the analysis of gene and protein expression and regulation. Bioinformatics tools aid in comparing, analyzing and interpreting genetic and genomic data and more generally in the understanding of evolutionary aspects of molecular biology. At a more integrative level, it helps analyze and catalogue the biological pathways and networks that are an important part of systems biology. In structural biology, it aids in the simulation and modeling of DNA, RNA, and proteins as well as biomolecular interactions.

History

Historically, the term ''bioinformatics'' did not mean what it means today. Paulien Hogeweg and Ben Hesper coined it in 1970 to refer to the study of information processes in biotic systems. This definition placed bioinformatics as a field parallel to biochemistry (the study of chemical processes in biological systems).

Sequences

There has been a tremendous advance in speed and cost reduction since the completion of the Human Genome Project, with some labs able to sequence over 100,000 billion bases each year, and a full genome can be sequenced for a thousand dollars or less. Computers became essential in molecular biology when protein sequences became available after Frederick Sanger determined the sequence of insulin in the early 1950s. Comparing multiple sequences manually turned out to be impractical. A pioneer in the field was Margaret Oakley Dayhoff. She compiled one of the first protein sequence databases, initially published as books, and pioneered methods of sequence alignment and molecular evolution.

Another early contributor to bioinformatics was Elvin A. Kabat, who pioneered biological sequence analysis in 1970 with his comprehensive volumes of antibody sequences released with Tai Te Wu between 1980 and 1991.

In the 1970s, new techniques for sequencing DNA were applied to bacteriophage MS2 and øX174, and the extended nucleotide sequences were then parsed with informational and statistical algorithms. These studies illustrated that well-known features, such as the coding segments and the triplet code, are revealed in straightforward statistical analyses, and they thus served as proof of concept that bioinformatics would be insightful.

Goals

To study how normal cellular activities are altered in different disease states, the biological data must be combined to form a comprehensive picture of these activities. Therefore, the field of bioinformatics has evolved such that the most pressing task now involves the analysis and interpretation of various types of data. This also includes nucleotide and amino acid sequences, protein domains, and protein structures. The actual process of analyzing and interpreting data is referred to as computational biology. Important sub-disciplines within bioinformatics and computational biology include:

* Development and implementation of computer programs that enable efficient access to, management, and use of, various types of information.

* Development of new algorithms (mathematical formulas) and statistical measures that assess relationships among members of large data sets. For example, there are methods to locate a gene within a sequence, to predict protein structure and/or function, and to cluster protein sequences into families of related sequences.

The primary goal of bioinformatics is to increase the understanding of biological processes. What sets it apart from other approaches, however, is its focus on developing and applying computationally intensive techniques to achieve this goal. Examples include: pattern recognition, data mining, machine learning algorithms, and visualization. Major research efforts in the field include sequence alignment, gene finding, genome assembly, drug design, drug discovery, protein structure alignment, protein structure prediction, prediction of gene expression and protein–protein interactions, genome-wide association studies, the modeling of evolution and cell division/mitosis.

Bioinformatics now entails the creation and advancement of databases, algorithms, computational and statistical techniques, and theory to solve formal and practical problems arising from the management and analysis of biological data.

Over the past few decades, rapid developments in genomic and other molecular research technologies and developments in information technologies have combined to produce a tremendous amount of information related to molecular biology. Bioinformatics is the name given to these mathematical and computing approaches used to glean understanding of biological processes.

Common activities in bioinformatics include mapping and analyzing DNA and protein sequences, aligning DNA and protein sequences to compare them, and creating and viewing 3-D models of protein structures.
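As a rough illustration of what "aligning sequences to compare them" can mean in practice, the sketch below computes a global alignment score with the classic Needleman-Wunsch dynamic programme. The text above does not name a particular algorithm, so this choice, the function name `global_alignment_score`, and the scoring values (match +1, mismatch -1, gap -2) are all assumptions made for the example.

```python
def global_alignment_score(a: str, b: str,
                           match: int = 1,
                           mismatch: int = -1,
                           gap: int = -2) -> int:
    """Score of the best global alignment of a and b (linear gap penalty).

    Scoring values are illustrative assumptions, not recommended defaults.
    """
    # prev[j] holds the best score for aligning a[:i-1] with b[:j].
    prev = [j * gap for j in range(len(b) + 1)]
    for i in range(1, len(a) + 1):
        curr = [i * gap]  # aligning a[:i] against an empty prefix of b
        for j in range(1, len(b) + 1):
            diagonal = prev[j - 1] + (match if a[i - 1] == b[j - 1] else mismatch)
            gap_in_b = prev[j] + gap      # a[i-1] aligned to a gap
            gap_in_a = curr[j - 1] + gap  # b[j-1] aligned to a gap
            curr.append(max(diagonal, gap_in_b, gap_in_a))
        prev = curr
    return prev[-1]


if __name__ == "__main__":
    print(global_alignment_score("GATTACA", "GATTACA"))
    print(global_alignment_score("ACGT", "AGT"))
```

Only two rows of the score table are kept, so memory grows with the shorter dimension rather than with the product of both lengths. Recovering the alignment itself, rather than just its score, would require keeping the full table or traceback pointers.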

Relation to other fields

Bioinformatics is a science field that is similar to but distinct from biological computation, while it is often considered synonymous to computational biology. Biological computation uses bioengineering and biology to build biological computers, whereas bioinformatics uses computation to better understand biology. Bioinformatics and computational biology involve the analysis of biological data, particularly DNA, RNA, and protein sequences. The field of bioinformatics experienced explosive growth starting in the mid-1990s, driven largely by the Human Genome Project and by rapid advances in DNA sequencing technology.

Analyzing biological data to produce meaningful information involves writing and running software programs that use algorithms from graph theory, artificial intelligence, soft computing, data mining, image processing, and computer simulation. The algorithms in turn depend on theoretical foundations such as discrete mathematics, control theory, system theory, information theory, and statistics.

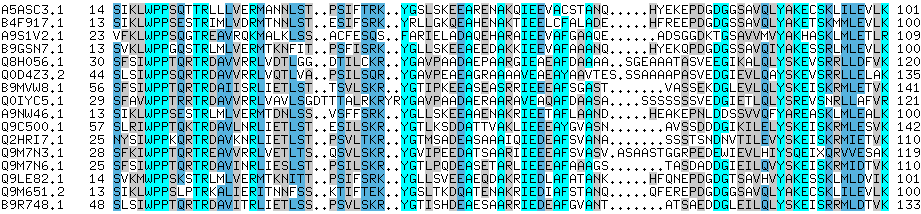

Sequence analysis

Since the Phage Φ-X174 was sequenced in 1977, the DNA sequences of thousands of organisms have been decoded and stored in databases. This sequence information is analyzed to determine genes that encode proteins, RNA genes, regulatory sequences, structural motifs, and repetitive sequences. A comparison of genes within a species or between different species can show similarities between protein functions, or relations between species (the use of molecular systematics to construct phylogenetic trees). With the growing amount of data, it long ago became impractical to analyze DNA sequences manually. Computer programs such as BLAST are used routinely to search sequences; as of 2008, these came from more than 260,000 organisms and contained over 190 billion nucleotides.

DNA sequencing

Before sequences can be analyzed, they have to be obtained from a data storage bank such as GenBank. DNA sequencing is still a non-trivial problem as the raw data may be noisy or affected by weak signals. Algorithms have been developed for base calling for the various experimental approaches to DNA sequencing.

Sequence assembly

Most DNA sequencing techniques produce short fragments of sequence that need to be assembled to obtain complete gene or genome sequences. The so-called shotgun sequencing technique (which was used, for example, by The Institute for Genomic Research (TIGR) to sequence the first bacterial genome, ''Haemophilus influenzae'') generates the sequences of many thousands of small DNA fragments (ranging from 35 to 900 nucleotides long, depending on the sequencing technology). The ends of these fragments overlap and, when aligned properly by a genome assembly program, can be used to reconstruct the complete genome. Shotgun sequencing yields sequence data quickly, but the task of assembling the fragments can be quite complicated for larger genomes. For a genome as large as the human genome, it may take many days of CPU time on large-memory, multiprocessor computers to assemble the fragments, and the resulting assembly usually contains numerous gaps that must be filled in later. Shotgun sequencing is the method of choice for virtually all genomes sequenced today, and genome assembly algorithms are a critical area of bioinformatics research.
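The idea of reconstructing a sequence from overlapping fragment ends can be sketched with a toy greedy merger. This is a deliberately naive illustration, not how production assemblers work: it assumes error-free reads from a single strand, ignores repeats and reads contained inside other reads, and compares every pair of contigs on each pass. The function names and the `min_len` parameter are hypothetical.

```python
from __future__ import annotations


def overlap(a: str, b: str, min_len: int = 3) -> int:
    """Length of the longest suffix of a that equals a prefix of b.

    Returns 0 when no overlap of at least min_len characters exists.
    """
    for n in range(min(len(a), len(b)), min_len - 1, -1):
        if a.endswith(b[:n]):
            return n
    return 0


def greedy_assemble(reads: list[str], min_len: int = 3) -> list[str]:
    """Repeatedly merge the pair of contigs with the largest overlap."""
    contigs = list(dict.fromkeys(reads))  # drop exact duplicates, keep order
    while True:
        best_n, best_i, best_j = 0, -1, -1
        for i, a in enumerate(contigs):
            for j, b in enumerate(contigs):
                if i != j:
                    n = overlap(a, b, min_len)
                    if n > best_n:
                        best_n, best_i, best_j = n, i, j
        if best_n == 0:
            return contigs  # nothing left to merge
        merged = contigs[best_i] + contigs[best_j][best_n:]
        contigs = [c for k, c in enumerate(contigs)
                   if k not in (best_i, best_j)] + [merged]


if __name__ == "__main__":
    print(greedy_assemble(["ATGGCC", "GCCTTA", "TTAGGC"]))
```

When the reads do not all connect, the function returns several contigs, which loosely mirrors the gaps mentioned above. The all-pairs comparison repeated on every pass is one reason this naive form would not scale to large genomes.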

Genome annotation

In the context of genomics, annotation is the process of marking the genes and other biological features in a DNA sequence. This process needs to be automated because most genomes are too large to annotate by hand, not to mention the desire to annotate as many genomes as possible, as the rate of sequencing has ceased to pose a bottleneck. Annotation is made possible by the fact that genes have recognisable start and stop regions, although the exact sequence found in these regions can vary between genes.
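A very simplified view of "recognisable start and stop regions" is an open reading frame (ORF) scan: look for an ATG start codon followed in the same frame by a stop codon. The sketch below assumes the standard genetic code's stop codons, scans only the forward strand, and uses a hypothetical `min_codons` cutoff. Real gene finders rely on much richer statistical models.

```python
from __future__ import annotations

STOP_CODONS = {"TAA", "TAG", "TGA"}  # stop codons of the standard genetic code


def find_orfs(seq: str, min_codons: int = 3) -> list[tuple[int, int]]:
    """Return (start, end) index pairs of forward-strand ORFs.

    start is the index of the A in ATG; end is exclusive and includes the
    stop codon. min_codons counts codons before the stop and is an
    illustrative threshold, not a biological constant.
    """
    seq = seq.upper()
    orfs = []
    for frame in range(3):
        start = None
        for pos in range(frame, len(seq) - 2, 3):
            codon = seq[pos:pos + 3]
            if start is None and codon == "ATG":
                start = pos  # first start codon opens the ORF
            elif start is not None and codon in STOP_CODONS:
                if (pos - start) // 3 >= min_codons:
                    orfs.append((start, pos + 3))
                start = None  # look for the next ORF in this frame
    return orfs


if __name__ == "__main__":
    dna = "CCATGAAACCCGGGTAGTT"
    for start, end in find_orfs(dna):
        print(start, end, dna[start:end])
```

Scanning the reverse complement as well would cover the other strand. That step is left out here to keep the example short.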

Genome annotation can be classified into three levels: the nucleotide, protein, and process levels.

Gene finding is a chief aspect of nucleotide-level annotation. For complex genomes, the most successful methods use a combination of ab initio gene prediction and sequence comparison with expressed sequence databases and other organisms. Nucleotide-level annotation also allows the integration of genome sequence with other genetic and physical maps of the genome.

The principal aim of protein-level annotation is to assign function to the products of the genome. Databases of protein sequences and functional domains and motifs are powerful resources for this type of annotation. Nevertheless, half of the predicted proteins in a new genome sequence tend to have no obvious function.

Understanding the function of genes and their products in the context of cellular and organismal physiology is the goal of process-level annotation. One of the obstacles to this level of annotation has been the inconsistency of terms used by different model systems. The Gene Ontology Consortium is helping to solve this problem.

The first description of a comprehensive genome annotation system was published in 1995 by the team at The Institute for Genomic Research that performed the first complete sequencing and analysis of the genome of a free-living organism, the bacterium ''Haemophilus influenzae''. Owen White designed and built a software system to identify the genes encoding all proteins, transfer RNAs, ribosomal RNAs (and other sites) and to make initial functional assignments. Most current genome annotation systems work similarly, but the programs available for analysis of genomic DNA, such as the GeneMark program trained and used to find protein-coding genes in ''Haemophilus influenzae'', are constantly changing and improving.

To pursue the goals that the Human Genome Project left unachieved at its closure in 2003, the National Human Genome Research Institute in the U.S. developed a new project. The so-called ENCODE project is a collaborative data collection of the functional elements of the human genome that uses next-generation DNA-sequencing technologies and genomic tiling arrays, technologies able to automatically generate large amounts of data at a dramatically reduced per-base cost but with the same accuracy (base call error) and fidelity (assembly error).

Gene function prediction

While genome annotation is primarily based on sequence similarity (and thus homology), other properties of sequences can be used to predict the function of genes. In fact, most ''gene'' function prediction methods focus on ''protein'' sequences as they are more informative and more feature-rich. For instance, the distribution of hydrophobic amino acids predicts transmembrane segments in proteins. However, protein function prediction can also use external information such as gene (or protein) expression data, protein structure, or protein-protein interactions.
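The link between hydrophobic residues and transmembrane segments can be illustrated with a sliding-window hydropathy profile. The sketch below uses the Kyte-Doolittle hydropathy scale. The window length of 19 and the cutoff of 1.6 are commonly quoted heuristics and are treated here as assumptions, as are the function names. Dedicated predictors are considerably more sophisticated.

```python
from __future__ import annotations

# Kyte-Doolittle hydropathy values for the 20 standard amino acids.
KYTE_DOOLITTLE = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5,
    "Q": -3.5, "E": -3.5, "G": -0.4, "H": -3.2, "I": 4.5,
    "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8, "P": -1.6,
    "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}


def hydropathy_profile(protein: str, window: int = 19) -> list[float]:
    """Mean hydropathy of every window of the given length.

    Raises KeyError for residues outside the 20 standard amino acids.
    Returns an empty list if the protein is shorter than the window.
    """
    scores = [KYTE_DOOLITTLE[aa] for aa in protein.upper()]
    return [sum(scores[i:i + window]) / window
            for i in range(len(scores) - window + 1)]


def candidate_tm_windows(protein: str, window: int = 19,
                         threshold: float = 1.6) -> list[int]:
    """Start indices of windows whose mean hydropathy exceeds the threshold."""
    profile = hydropathy_profile(protein, window)
    return [i for i, score in enumerate(profile) if score > threshold]


if __name__ == "__main__":
    toy = "DDDDD" + "L" * 19 + "DDDDD"  # made-up sequence, not a real protein
    profile = hydropathy_profile(toy)
    print(profile.index(max(profile)), max(profile))
```

Windows that exceed the threshold are only candidates. Adjacent hits would normally be merged into one segment and checked against other evidence.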

Computational evolutionary biology

Evolutionary biology is the study of the origin and descent of species, as well as their change over time.

Informatics has assisted evolutionary biologists by enabling researchers to:

* trace the evolution of a large number of organisms by measuring changes in their DNA, rather than through physical taxonomy or physiological observations alone,

* compare entire genomes, which permits the study of more complex evolutionary events, such as gene duplication, horizontal gene transfer, and the prediction of factors important in bacterial speciation,

* build complex computational population genetics models to predict the outcome of the system over time,

* track and share information on an increasingly large number of species and organisms.

Future work endeavours to reconstruct the now more complex tree of life.

The area of research within computer science that uses genetic algorithms is sometimes confused with computational evolutionary biology, but the two areas are not necessarily related.

Comparative genomics

The core of comparative genome analysis is the establishment of the correspondence between genes (orthology analysis) or other genomic features in different organisms. It is these intergenomic maps that make it possible to trace the evolutionary processes responsible for the divergence of two genomes. A multitude of evolutionary events acting at various organizational levels shape genome evolution. At the lowest level, point mutations affect individual nucleotides. At a higher level, large chromosomal segments undergo duplication, lateral transfer, inversion, transposition, deletion and insertion. Ultimately, whole genomes are involved in processes of hybridization, polyploidization and endosymbiosis, often leading to rapid speciation. The complexity of genome evolution poses many exciting challenges to developers of mathematical models and algorithms, who have recourse to a spectrum of algorithmic, statistical and mathematical techniques, ranging from exact, heuristic, fixed-parameter and approximation algorithms for problems based on parsimony models to Markov chain Monte Carlo algorithms for Bayesian analysis of problems based on probabilistic models.

Many of these studies are based on the detection of sequence homology to assign sequences to protein families.

Pan genomics

Pan genomics is a concept introduced in 2005 by Tettelin and Medini which eventually took root in bioinformatics. The pan genome is the complete gene repertoire of a particular taxonomic group: although initially applied to closely related strains of a species, it can be applied to a larger context like genus, phylum, etc. It is divided into two parts: the core genome, the set of genes common to all the genomes under study (these are often housekeeping genes vital for survival), and the dispensable or flexible genome, the set of genes present in only one or some of the genomes under study. A bioinformatics tool, BPGA, can be used to characterize the pan genome of bacterial species.
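The core and dispensable parts of a pan genome map directly onto set operations. The sketch below assumes that genes have already been grouped into comparable families and that each genome is given as a set of family identifiers; in practice, that grouping is the hard part. The strain names and gene contents are made-up toy data, and this is not how BPGA itself is implemented.

```python
from __future__ import annotations


def partition_pan_genome(
    genomes: dict[str, set[str]],
) -> tuple[set[str], set[str], set[str]]:
    """Return (pan, core, dispensable) gene sets for the given genomes.

    pan: genes found in at least one genome
    core: genes found in every genome
    dispensable: genes found in some but not all genomes
    """
    gene_sets = list(genomes.values())
    pan = set().union(*gene_sets)
    core = set.intersection(*gene_sets) if gene_sets else set()
    return pan, core, pan - core


if __name__ == "__main__":
    # Hypothetical presence/absence data for three strains.
    toy = {
        "strainA": {"dnaA", "gyrB", "blaZ"},
        "strainB": {"dnaA", "gyrB", "mecA"},
        "strainC": {"dnaA", "gyrB"},
    }
    pan, core, dispensable = partition_pan_genome(toy)
    print(sorted(pan), sorted(core), sorted(dispensable))
```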

Genetics of disease

With the advent of next-generation sequencing we are obtaining enough sequence data to map the genes of complex diseases including infertility, breast cancer or Alzheimer's disease.

Genome-wide association studies are a useful approach to pinpoint the mutations responsible for such complex diseases.

Through these studies, thousands of DNA variants have been identified that are associated with similar diseases and traits. Furthermore, the possibility of using genes in prognosis, diagnosis or treatment is one of the most essential applications. Many studies discuss both promising ways to choose the genes to be used and the problems and pitfalls of using genes to predict disease presence or prognosis.

Genome-wide association studies have successfully identified thousands of common genetic variants for complex diseases and traits; however, these common variants only explain a small fraction of heritability.

Rare variants may account for some of the missing heritability. Large-scale whole genome sequencing studies have rapidly sequenced millions of whole genomes, and such studies have identified hundreds of millions of rare variants.

Functional annotations predict the effect or function of a genetic variant and help to prioritize rare functional variants, and incorporating these annotations can effectively boost the power of rare-variant association analysis in whole genome sequencing studies. Some tools have been developed to provide all-in-one rare variant association analysis for whole-genome sequencing data, including integration of genotype data and their functional annotations, association analysis, result summary and visualization.

Analysis of mutations in cancer

In cancer, the genomes of affected cells are rearranged in complex or even unpredictable ways. Massive sequencing efforts are used to identify previously unknown point mutations in a variety of genes in cancer. Bioinformaticians continue to produce specialized automated systems to manage the sheer volume of sequence data produced, and they create new algorithms and software to compare the sequencing results to the growing collection of human genome sequences and germline polymorphisms. New physical detection technologies are employed, such as oligonucleotide microarrays to identify chromosomal gains and losses (called comparative genomic hybridization), and single-nucleotide polymorphism arrays to detect known ''point mutations''. These detection methods simultaneously measure several hundred thousand sites throughout the genome, and when used in high-throughput to measure thousands of samples, generate terabytes of data per experiment. Again the massive amounts and new types of data generate new opportunities for bioinformaticians. The data is often found to contain considerable variability, or noise, and thus hidden Markov model and change-point analysis methods are being developed to infer real copy number changes.
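As a rough illustration of change-point analysis on noisy copy-number measurements, the sketch below looks for the single split that minimises the within-segment squared error of a series of log2 ratios. The function name and the toy data are assumptions made for this example; practical tools segment into many regions and model noise more carefully (for instance with hidden Markov models).

```python
from statistics import mean


def best_single_change_point(values):
    """Return the index k that best splits values into values[:k] and values[k:].

    "Best" means the smallest total squared deviation of each segment
    from its own mean (a least-squares, single change-point criterion).
    """
    if len(values) < 2:
        raise ValueError("need at least two observations")

    def sse(segment):
        m = mean(segment)
        return sum((x - m) ** 2 for x in segment)

    best_k, best_cost = None, float("inf")
    for k in range(1, len(values)):
        cost = sse(values[:k]) + sse(values[k:])
        if cost < best_cost:
            best_k, best_cost = k, cost
    return best_k


# Toy log2 ratios along a chromosome: a gain starts at the fifth probe.
log2_ratios = [0.05, -0.02, 0.01, 0.03, 0.61, 0.55, 0.58, 0.63]
change_at = best_single_change_point(log2_ratios)
```

Applying the same search recursively to each resulting segment is one simple way to extend this idea to several change points.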

Two important principles guide the bioinformatic analysis of cancer genomes, particularly the identification of mutations in the

exome. First, cancer is a disease of accumulated somatic mutations in genes. Second, cancer contains driver mutations, which need to be distinguished from passenger mutations.

With the breakthroughs that next-generation sequencing technology is providing to the field of bioinformatics, cancer genomics could change drastically. These new methods and software allow bioinformaticians to sequence many cancer genomes quickly and affordably. This could create a more flexible process for classifying types of cancer by analysis of the driver mutations in the genome. Furthermore, it may become possible to track patients as the disease progresses by sequencing cancer samples over time.

Another type of data that requires novel informatics development is the analysis of lesions found to be recurrent among many tumors.

Gene and protein expression

Analysis of gene expression

The expression of many genes can be determined by measuring mRNA levels with multiple techniques including microarrays, expressed cDNA sequence tag (EST) sequencing, serial analysis of gene expression (SAGE) tag sequencing, massively parallel signature sequencing (MPSS), RNA-Seq, also known as "Whole Transcriptome Shotgun Sequencing" (WTSS), or various applications of multiplexed in-situ hybridization. All of these techniques are extremely noise-prone and/or subject to bias in the biological measurement, and a major research area in computational biology involves developing statistical tools to separate signal from noise in high-throughput gene expression studies. Such studies are often used to determine the genes implicated in a disorder: one might compare microarray data from cancerous epithelial cells to data from non-cancerous cells to determine the transcripts that are up-regulated and down-regulated in a particular population of cancer cells.
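The comparison described above can be sketched as a per-gene log2 fold change between the two groups. This is only a minimal illustration: the gene names, expression values, pseudocount and threshold are all assumptions, and a real analysis would add normalisation and a statistical test to separate signal from noise.

```python
import math


def log2_fold_changes(tumour, normal, pseudocount=1.0):
    """Per-gene log2 ratio of mean tumour expression to mean normal expression."""
    result = {}
    for gene in tumour.keys() & normal.keys():
        t = sum(tumour[gene]) / len(tumour[gene])
        n = sum(normal[gene]) / len(normal[gene])
        # The pseudocount avoids division by zero for unexpressed genes.
        result[gene] = math.log2((t + pseudocount) / (n + pseudocount))
    return result


def classify(fold_changes, threshold=1.0):
    """Split genes into up- and down-regulated lists by a log2 threshold."""
    up = sorted(g for g, fc in fold_changes.items() if fc >= threshold)
    down = sorted(g for g, fc in fold_changes.items() if fc <= -threshold)
    return up, down


# Hypothetical expression values, two replicates per condition.
tumour = {"GENE_A": [40.0, 44.0], "GENE_B": [10.0, 12.0], "GENE_C": [2.0, 4.0]}
normal = {"GENE_A": [9.0, 11.0], "GENE_B": [10.0, 11.0], "GENE_C": [15.0, 15.0]}
up, down = classify(log2_fold_changes(tumour, normal))
```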

Analysis of protein expression

Protein microarrays and high throughput (HT) mass spectrometry (MS) can provide a snapshot of the proteins present in a biological sample. Bioinformatics is very much involved in making sense of protein microarray and HT MS data; the former approach faces problems similar to those of microarrays targeted at mRNA, while the latter involves matching large amounts of mass data against predicted masses from protein sequence databases, and the complicated statistical analysis of samples in which multiple but incomplete peptides from each protein are detected. Cellular protein localization in a tissue context can be achieved through affinity proteomics displayed as spatial data based on immunohistochemistry and tissue microarrays.

Analysis of regulation

Gene regulation is the complex orchestration of events by which a signal, potentially an extracellular signal such as a hormone, eventually leads to an increase or decrease in the activity of one or more proteins. Bioinformatics techniques have been applied to explore various steps in this process.

For example, gene expression can be regulated by nearby elements in the genome. Promoter analysis involves the identification and study of sequence motifs in the DNA surrounding the coding region of a gene. These motifs influence the extent to which that region is transcribed into mRNA.
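A very simple form of motif search scans an upstream sequence for a consensus pattern written in IUPAC nucleotide codes. The sketch below is an illustration only: the upstream sequence is made up, and the TATA-box-like consensus `TATAWAW` is used as an assumed example pattern. Real promoter analysis usually relies on position weight matrices rather than exact consensus matching.

```python
import re

# IUPAC nucleotide codes expanded to regular-expression character classes.
IUPAC = {
    "A": "A", "C": "C", "G": "G", "T": "T",
    "R": "[AG]", "Y": "[CT]", "S": "[CG]", "W": "[AT]",
    "K": "[GT]", "M": "[AC]", "B": "[CGT]", "D": "[AGT]",
    "H": "[ACT]", "V": "[ACG]", "N": "[ACGT]",
}


def find_motif(sequence, motif):
    """Return the 0-based start positions of every (possibly overlapping) match."""
    pattern = "".join(IUPAC[base] for base in motif.upper())
    # A lookahead keeps matches zero-width, so overlapping hits are reported.
    return [m.start() for m in re.finditer(f"(?={pattern})", sequence.upper())]


upstream = "GGCGCTATAAAAGGCTCCGTATATATGC"  # hypothetical promoter region
hits = find_motif(upstream, "TATAWAW")
```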

Enhancer elements far away from the promoter can also regulate gene expression, through three-dimensional looping interactions. These interactions can be determined by bioinformatic analysis of chromosome conformation capture experiments.

Expression data can be used to infer gene regulation: one might compare microarray data from a wide variety of states of an organism to form hypotheses about the genes involved in each state. In a single-cell organism, one might compare stages of the cell cycle, along with various stress conditions (heat shock, starvation, etc.). One can then apply clustering algorithms to that expression data to determine which genes are co-expressed. For example, the upstream regions (promoters) of co-expressed genes can be searched for over-represented regulatory elements. Examples of clustering algorithms applied in gene clustering are k-means clustering, self-organizing maps (SOMs), hierarchical clustering, and consensus clustering methods.
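To make the idea of grouping co-expressed genes concrete, here is a minimal k-means sketch over expression profiles. The gene names and values are invented, and the initialisation (the first k genes in sorted order) is a deliberately naive assumption chosen to keep the example deterministic; library implementations use better seeding and convergence checks.

```python
import math


def kmeans(profiles, k, iterations=20):
    """Assign each gene's expression profile to one of k clusters.

    profiles maps a gene name to a list of expression values,
    one value per condition. Returns a dict of gene -> cluster index.
    """
    names = sorted(profiles)
    # Naive deterministic initialisation: the first k profiles.
    centroids = [list(profiles[n]) for n in names[:k]]
    assignment = {}
    for _ in range(iterations):
        # Assignment step: each gene joins its nearest centroid.
        assignment = {
            n: min(range(k), key=lambda c: math.dist(profiles[n], centroids[c]))
            for n in names
        }
        # Update step: each centroid moves to the mean of its members.
        for c in range(k):
            members = [profiles[n] for n in names if assignment[n] == c]
            if members:
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return assignment


# Hypothetical profiles across four conditions.
profiles = {
    "geneA": [1.0, 1.1, 5.0, 5.2],
    "geneB": [0.9, 1.0, 5.1, 5.0],
    "geneC": [5.0, 5.1, 1.0, 0.9],
    "geneD": [5.2, 4.9, 1.1, 1.0],
}
clusters = kmeans(profiles, k=2)
```

Genes that end up in the same cluster are candidates for co-regulation, and their upstream regions could then be scanned for shared regulatory elements.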

Analysis of cellular organization

Several approaches have been developed to analyze the location of organelles, genes, proteins, and other components within cells. This is relevant as the location of these components affects the events within a cell and thus helps us to predict the behavior of biological systems. A gene ontology category, ''cellular component'', has been devised to capture subcellular localization in many biological databases.

Microscopy and image analysis

Microscopic images allow us to locate both organelles and molecules. They may also help us to distinguish between normal and abnormal cells, e.g. in cancer.

Protein localization

The localization of proteins helps us to evaluate the role of a protein. For instance, if a protein is found in the nucleus it may be involved in gene regulation or splicing. By contrast, if a protein is found in mitochondria, it may be involved in respiration or other metabolic processes. Protein localization is thus an important component of protein function prediction. There are well developed protein subcellular localization prediction resources available, including protein subcellular location databases and prediction tools.

Nuclear organization of chromatin

Data from high-throughput chromosome conformation capture experiments, such as Hi-C and ChIA-PET, can provide information on the spatial proximity of DNA loci. Analysis of these experiments can determine the three-dimensional structure and nuclear organization of chromatin. Bioinformatic challenges in this field include partitioning the genome into domains, such as Topologically Associating Domains (TADs), that are organised together in three-dimensional space.

Structural bioinformatics

Protein structure prediction is another important application of bioinformatics. The amino acid sequence of a protein, the so-called primary structure, can be easily determined from the sequence of the gene that codes for it. In the vast majority of cases, this primary structure uniquely determines a structure in its native environment. (Of course, there are exceptions, such as the bovine spongiform encephalopathy (mad cow disease) prion.) Knowledge of this structure is vital in understanding the function of the protein. Structural information is usually classified as one of ''secondary'', ''tertiary'' and ''quaternary'' structure. A viable general solution to such predictions remains an open problem. Most efforts have so far been directed towards heuristics that work most of the time.

One of the key ideas in bioinformatics is the notion of homology. In the genomic branch of bioinformatics, homology is used to predict the function of a gene: if the sequence of gene ''A'', whose function is known, is homologous to the sequence of gene ''B'', whose function is unknown, one could infer that B may share A's function. In the structural branch of bioinformatics, homology is used to determine which parts of a protein are important in structure formation and interaction with other proteins. In a technique called homology modeling, this information is used to predict the structure of a protein once the structure of a homologous protein is known. Until recently, this remained the only way to predict protein structures reliably. However, a breakthrough came with the release of AlphaFold, deep-learning-based software developed by a team within DeepMind, Google's A.I. research arm. During the 14th Critical Assessment of protein Structure Prediction (CASP14), a competition for computational protein structure prediction software, AlphaFold became the first contender to deliver predictions with accuracy competitive with experimental structures in a majority of cases, greatly outperforming all other prediction methods up to that point. AlphaFold has since released the predicted structures for hundreds of millions of proteins.

One example of structural homology is hemoglobin in humans and the hemoglobin in legumes (leghemoglobin), which are distant relatives from the same protein superfamily. Both serve the same purpose of transporting oxygen in the organism. Although these proteins have completely different amino acid sequences, their protein structures are virtually identical, which reflects their near identical purposes and shared ancestor.

Other techniques for predicting protein structure include protein threading and ''de novo'' (from scratch) physics-based modeling.

Another aspect of structural bioinformatics includes the use of protein structures for Virtual Screening models such as Quantitative Structure-Activity Relationship models and proteochemometric models (PCM). Furthermore, a protein's crystal structure can be used in simulations, for example of ligand binding, and in ''in silico'' mutagenesis studies.

Network and systems biology

''Network analysis'' seeks to understand the relationships within biological networks such as metabolic or protein–protein interaction networks. Although biological networks can be constructed from a single type of molecule or entity (such as genes), network biology often attempts to integrate many different data types, such as proteins, small molecules, gene expression data, and others, which are all connected physically, functionally, or both.
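A protein–protein interaction network can be represented as an undirected graph and queried for highly connected nodes. The sketch below is a minimal illustration under assumed inputs: the protein identifiers and the interaction list are hypothetical, and node degree is only one of many network measures.

```python
from collections import defaultdict


def build_network(interactions):
    """Build an undirected adjacency map from (protein, protein) pairs."""
    adjacency = defaultdict(set)
    for a, b in interactions:
        if a == b:
            continue  # ignore self-interactions in this sketch
        adjacency[a].add(b)
        adjacency[b].add(a)
    return adjacency


def hubs(adjacency, min_degree):
    """Return proteins with at least min_degree distinct partners, sorted by name."""
    return sorted(n for n, partners in adjacency.items() if len(partners) >= min_degree)


# Hypothetical interaction pairs.
interactions = [("P1", "P2"), ("P1", "P3"), ("P1", "P4"), ("P2", "P3"), ("P5", "P4")]
network = build_network(interactions)
hub_proteins = hubs(network, min_degree=3)
```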

''Systems biology'' involves the use of computer simulations of cellular subsystems (such as the networks of metabolites and enzymes that comprise metabolism, signal transduction pathways and gene regulatory networks) to both analyze and visualize the complex connections of these cellular processes.

Artificial life or virtual evolution attempts to understand evolutionary processes via the computer simulation of simple (artificial) life forms.

Molecular interaction networks

Tens of thousands of three-dimensional protein structures have been determined by X-ray crystallography and protein nuclear magnetic resonance spectroscopy (protein NMR), and a central question in structural bioinformatics is whether it is practical to predict possible protein–protein interactions based only on these 3D shapes, without performing protein–protein interaction experiments. A variety of methods have been developed to tackle the protein–protein docking problem, though it seems that there is still much work to be done in this field.

Other interactions encountered in the field include protein–ligand (including drug) and protein–peptide. Molecular dynamic simulation of movement of atoms about rotatable bonds is the fundamental principle behind computational algorithms, termed docking algorithms, for studying molecular interactions.

Others

Literature analysis

The growth in the volume of published literature makes it virtually impossible to read every paper, resulting in disjointed sub-fields of research. Literature analysis aims to employ computational and statistical linguistics to mine this growing library of text resources. For example:

* Abbreviation recognition – identify the long-form and abbreviation of biological terms

* Named-entity recognition – recognizing biological terms such as gene names

* Protein–protein interaction – identify which proteins interact with which proteins from text
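As an illustration of the first task in the list above, abbreviation recognition, the sketch below pairs a parenthesised upper-case abbreviation with the words immediately before it when their initials match. This is a simple heuristic assumed for the example, not a description of any particular published tool, and it will miss abbreviations that skip words or use inner letters.

```python
import re


def find_abbreviations(text):
    """Map each abbreviation of the form '(ABC)' to its preceding long form.

    A pair is accepted only if the initials of the words directly before
    the parenthesis spell out the abbreviation.
    """
    pairs = {}
    for match in re.finditer(r"\(([A-Z]{2,10})\)", text):
        abbr = match.group(1)
        words = re.findall(r"[A-Za-z]+", text[:match.start()])
        candidate = words[-len(abbr):]
        initials = "".join(w[0].upper() for w in candidate)
        if len(candidate) == len(abbr) and initials == abbr:
            pairs[abbr] = " ".join(candidate)
    return pairs


sentence = (
    "Reads were mapped before hidden Markov model (HMM) segmentation and "
    "polymerase chain reaction (PCR) validation (see Methods)."
)
found = find_abbreviations(sentence)
```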

The area of research draws from statistics and computational linguistics.

High-throughput image analysis

Computational technologies are used to accelerate or fully automate the processing, quantification and analysis of large amounts of high-information-content biomedical imagery. Modern image analysis systems augment an observer's ability to make measurements from a large or complex set of images, by improving accuracy, objectivity, or speed. A fully developed analysis system may completely replace the observer. Although these systems are not unique to biomedical imagery, biomedical imaging is becoming more important for both diagnostics and research. Some examples are:

* high-throughput and high-fidelity quantification and sub-cellular localization (high-content screening, cytohistopathology, bioimage informatics)

* morphometrics

* clinical image analysis and visualization

* determining the real-time air-flow patterns in breathing lungs of living animals

* quantifying occlusion size in real-time imagery from the development of and recovery during arterial injury

* making behavioral observations from extended video recordings of laboratory animals

* infrared measurements for metabolic activity determination

* inferring clone overlaps in DNA mapping, e.g. the Sulston score

High-throughput single cell data analysis

Computational techniques are used to analyse high-throughput, low-measurement single cell data, such as that obtained from flow cytometry. These methods typically involve finding populations of cells that are relevant to a particular disease state or experimental condition.

Biodiversity informatics

Biodiversity informatics deals with the collection and analysis of biodiversity data, such as taxonomic databases, or microbiome data. Examples of such analyses include phylogenetics, niche modelling, species richness mapping, DNA barcoding, or species identification tools.

Ontologies and data integration

Biological ontologies are directed acyclic graphs of controlled vocabularies. They are designed to capture biological concepts and descriptions in a way that can be easily categorised and analysed with computers. When categorised in this way, it is possible to gain added value from holistic and integrated analysis.
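Because an ontology is a directed acyclic graph, a term can have several parents, and annotating something with a term implicitly annotates it with every ancestor. The sketch below collects all ancestors of a term by walking parent links. The term names and relationships form a toy hierarchy assumed for illustration and are not taken from any actual ontology release.

```python
def ancestors(term, parents):
    """Return every term reachable from `term` by following parent links."""
    seen = set()
    stack = [term]
    while stack:
        current = stack.pop()
        for parent in parents.get(current, ()):
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return seen


# Toy ontology: each term maps to its direct parents (a DAG, not a tree).
parents = {
    "mitochondrial inner membrane": ["mitochondrion", "organelle membrane"],
    "mitochondrion": ["organelle"],
    "organelle membrane": ["organelle", "membrane"],
    "organelle": ["cellular component"],
    "membrane": ["cellular component"],
}
inherited = ancestors("mitochondrial inner membrane", parents)
```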

The OBO Foundry was an effort to standardise certain ontologies. One of the most widespread is the Gene ontology, which describes gene function. There are also ontologies which describe phenotypes.

Databases

Databases are essential for bioinformatics research and applications. Many databases exist, covering various information types: for example, DNA and protein sequences, molecular structures, phenotypes and biodiversity. Databases may contain empirical data (obtained directly from experiments), predicted data (obtained from analysis), or, most commonly, both. They may be specific to a particular organism, pathway or molecule of interest. Alternatively, they can incorporate data compiled from multiple other databases. These databases vary in their format, access mechanism, and whether they are public or not.

Some of the most commonly used databases are listed below.

* Used in biological sequence analysis: GenBank, UniProt

* Used in structure analysis: Protein Data Bank (PDB)

* Used in finding protein families and motif finding: InterPro, Pfam

* Used for next-generation sequencing: Sequence Read Archive

* Used in network analysis: metabolic pathway databases (KEGG, BioCyc), interaction analysis databases, functional networks

* Used in design of synthetic genetic circuits: GenoCAD

Software and tools

Software tools for bioinformatics range from simple command-line tools to more complex graphical programs and standalone web services available from various bioinformatics companies or public institutions.

Open-source bioinformatics software

Many free and open-source software tools have existed and continued to grow since the 1980s.

The combination of a continued need for new algorithms for the analysis of emerging types of biological readouts, the potential for innovative ''in silico'' experiments, and freely available open code bases has helped to create opportunities for all research groups to contribute to both bioinformatics and the range of open-source software available, regardless of their funding arrangements. The open source tools often act as incubators of ideas, or community-supported plug-ins in commercial applications. They may also provide ''de facto'' standards and shared object models for assisting with the challenge of bioinformation integration.

The range of open-source software packages includes titles such as Bioconductor, BioPerl, Biopython, BioJava, BioJS, BioRuby, Bioclipse, EMBOSS, .NET Bio, Orange with its bioinformatics add-on, Apache Taverna, UGENE and GenoCAD. To maintain this tradition and create further opportunities, the non-profit Open Bioinformatics Foundation has supported the annual Bioinformatics Open Source Conference (BOSC) since 2000.

Web services in bioinformatics

SOAP- and REST-based interfaces have been developed for a wide variety of bioinformatics applications, allowing an application running on one computer in one part of the world to use algorithms, data and computing resources on servers in other parts of the world. The main advantages derive from the fact that end users do not have to deal with software and database maintenance overheads.

Basic bioinformatics services are classified by the EBI into three categories: SSS (Sequence Search Services), MSA (Multiple Sequence Alignment), and BSA (Biological Sequence Analysis). The availability of these service-oriented bioinformatics resources demonstrates the applicability of web-based bioinformatics solutions. They range from a collection of standalone tools with a common data format under a single, standalone or web-based interface, to integrative, distributed and extensible bioinformatics workflow management systems.

Bioinformatics workflow management systems

A bioinformatics workflow management system is a specialized form of a workflow management system designed specifically to compose and execute a series of computational or data manipulation steps, or a workflow, in a bioinformatics application. Such systems are designed to

* provide an easy-to-use environment for individual application scientists themselves to create their own workflows,

* provide interactive tools for the scientists enabling them to execute their workflows and view their results in real-time,

* simplify the process of sharing and reusing workflows between the scientists, and

* enable scientists to track the provenance of the workflow execution results and the workflow creation steps.
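The goals listed above, composing steps and tracking provenance, can be sketched in a few lines. The example below is a toy under stated assumptions: the step names, the record fields and the use of a truncated SHA-256 digest are all choices made for illustration and do not reflect how any particular workflow system stores provenance.

```python
import hashlib
import json


def digest(value):
    """Short, stable fingerprint of a JSON-serialisable value."""
    encoded = json.dumps(value, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()[:12]


def run_workflow(steps, data):
    """Run (name, function) steps in order and record what each one consumed and produced."""
    provenance = []
    for name, func in steps:
        result = func(data)
        provenance.append(
            {"step": name, "input_digest": digest(data), "output_digest": digest(result)}
        )
        data = result
    return data, provenance


def gc_content(seq):
    """Fraction of G and C bases in a sequence."""
    return sum(base in "GC" for base in seq) / len(seq)


# Hypothetical three-step workflow over a list of sequences.
steps = [
    ("uppercase", lambda seqs: [s.upper() for s in seqs]),
    ("drop_short", lambda seqs: [s for s in seqs if len(s) >= 4]),
    ("gc_content", lambda seqs: [gc_content(s) for s in seqs]),
]
result, provenance = run_workflow(steps, ["acgt", "gg", "ggcc"])
```

Because each record's input digest equals the previous record's output digest, the chain can be checked later to confirm that results were produced by the recorded sequence of steps.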

Some of the platforms providing this service: Galaxy, Kepler, Taverna, UGENE, Anduril, HIVE.

BioCompute and BioCompute Objects

In 2014, the US Food and Drug Administration sponsored a conference held at the National Institutes of Health Bethesda Campus to discuss reproducibility in bioinformatics. Over the next three years, a consortium of stakeholders met regularly to discuss what would become the BioCompute paradigm. These stakeholders included representatives from government, industry, and academic entities. Session leaders represented numerous branches of the FDA and NIH Institutes and Centers, non-profit entities including the Human Variome Project and the European Federation for Medical Informatics, and research institutions including Stanford, the New York Genome Center, and the George Washington University.

It was decided that the BioCompute paradigm would take the form of digital 'lab notebooks' which allow for the reproducibility, replication, review, and reuse of bioinformatics protocols. This was proposed to enable greater continuity within a research group over the course of normal personnel flux while furthering the exchange of ideas between groups. The US FDA funded this work so that information on pipelines would be more transparent and accessible to their regulatory staff.

In 2016, the group reconvened at the NIH in Bethesda and discussed the potential for a BioCompute Object, an instance of the BioCompute paradigm. This work was released as both a "standard trial use" document and a preprint paper uploaded to bioRxiv. The BioCompute Object allows for the JSON-ized record to be shared among employees, collaborators, and regulators.
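As a rough illustration of what sharing a JSON-ized record can look like, the Python sketch below serializes a small pipeline description and attaches a checksum so a recipient can confirm it arrived unchanged. The field names (`name`, `steps`, `parameters`, `checksum`, `body`) and the functions `make_record` and `verify_record` are assumptions made for this example; they are not the official BioCompute Object schema.

```python
import hashlib
import json


def canonical_json(body: dict) -> str:
    """Serialize with sorted keys so the same content always hashes the same."""
    return json.dumps(body, sort_keys=True, separators=(",", ":"))


def make_record(name: str, steps: list, parameters: dict) -> dict:
    """Build a hypothetical pipeline record with a SHA-256 checksum."""
    body = {"name": name, "steps": steps, "parameters": parameters}
    checksum = hashlib.sha256(canonical_json(body).encode("utf-8")).hexdigest()
    return {"checksum": checksum, "body": body}


def verify_record(record: dict) -> bool:
    """Recompute the checksum of the body and compare it to the stored one."""
    expected = hashlib.sha256(
        canonical_json(record["body"]).encode("utf-8")
    ).hexdigest()
    return expected == record["checksum"]


record = make_record(
    name="example-variant-calling",  # hypothetical pipeline name
    steps=["align_reads", "call_variants", "annotate"],
    parameters={"min_quality": 30},
)

# The JSON text is what would be exchanged between collaborators or reviewers.
serialized = json.dumps(record, indent=2)
received = json.loads(serialized)
print(verify_record(received))
```

Hashing a canonical serialization rather than the pretty-printed text means that whitespace or key-order changes made in transit do not invalidate the checksum, while any change to the recorded steps or parameters does.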

Education platforms

In addition to the in-person master's degree courses taught at many universities, the computational nature of bioinformatics lends itself to computer-aided and online learning. Software platforms designed to teach bioinformatics concepts and methods include Rosalind and online courses offered through the Swiss Institute of Bioinformatics Training Portal. The Canadian Bioinformatics Workshops provides videos and slides from training workshops on its website under a Creative Commons license. The 4273π project or 4273pi project also offers open source educational materials for free. The course runs on low cost Raspberry Pi computers and has been used to teach adults and school pupils. 4273π is actively developed by a consortium of academics and research staff who have run research level bioinformatics using Raspberry Pi computers and the 4273π operating system.

MOOC platforms also provide online certifications in bioinformatics and related disciplines, including Coursera's Bioinformatics Specialization (UC San Diego) and Genomic Data Science Specialization (Johns Hopkins) as well as edX's Data Analysis for Life Sciences XSeries (Harvard).

Conferences

There are several large conferences that are concerned with bioinformatics. Some of the most notable examples are Intelligent Systems for Molecular Biology (ISMB), European Conference on Computational Biology (ECCB), and Research in Computational Molecular Biology (RECOMB).
