In mathematical statistics, the Kullback–Leibler (KL) divergence (also called relative entropy and I-divergence), denoted $D_\text{KL}(P \parallel Q)$, is a type of statistical distance: a measure of how much a model probability distribution $Q$ differs from a true probability distribution $P$.

Mathematically, it is defined as

$$D_\text{KL}(P \parallel Q) = \sum_{x \in \mathcal{X}} P(x) \log \frac{P(x)}{Q(x)}.$$

A simple interpretation of the KL divergence of $P$ from $Q$ is the expected excess surprise from using $Q$ as a model instead of $P$ when the actual distribution is $P$. While it is a measure of how different two distributions are and is thus a distance in some sense, it is not actually a metric, which is the most familiar and formal type of distance. In particular, it is not symmetric in the two distributions (in contrast to variation of information), and does not satisfy the triangle inequality. Instead, in terms of information geometry, it is a type of divergence, a generalization of squared distance, and for certain classes of distributions (notably an exponential family), it satisfies a generalized Pythagorean theorem (which applies to squared distances).

Relative entropy is always a non-negative real number, with value 0 if and only if the two distributions in question are identical. It has diverse applications, both theoretical, such as characterizing the relative (Shannon) entropy in information systems, randomness in continuous time-series, and information gain when comparing statistical models of inference; and practical, such as applied statistics, fluid mechanics, neuroscience, bioinformatics, and machine learning.

Introduction and context

Consider two probability distributions $P$ and $Q$. Usually, $P$ represents the data, the observations, or a measured probability distribution. Distribution $Q$ represents instead a theory, a model, a description or an approximation of $P$. The Kullback–Leibler divergence $D_\text{KL}(P \parallel Q)$ is then interpreted as the average difference of the number of bits required for encoding samples of $P$ using a code optimized for $Q$ rather than one optimized for $P$. Note that the roles of $P$ and $Q$ can be reversed in some situations where that is easier to compute, such as with the expectation–maximization algorithm (EM) and evidence lower bound (ELBO) computations.

Etymology

The relative entropy was introduced by Solomon Kullback and Richard Leibler in 1951 as "the mean information for discrimination between $H_1$ and $H_2$ per observation from $\mu_1$", where one is comparing two probability measures $\mu_1, \mu_2$, and $H_1, H_2$ are the hypotheses that one is selecting from measure $\mu_1, \mu_2$ (respectively). They denoted this by $I(1:2)$, and defined the "'divergence' between $\mu_1$ and $\mu_2$" as the symmetrized quantity $J(1,2) = I(1:2) + I(2:1)$, which had already been defined and used by Harold Jeffreys in 1948. In Kullback (1959), the symmetrized form is again referred to as the "divergence", and the relative entropies in each direction are referred to as the "directed divergences" between two distributions; Kullback preferred the term discrimination information.

The term "divergence" is in contrast to a distance (metric), since the symmetrized divergence does not satisfy the triangle inequality. Numerous references to earlier uses of the symmetrized divergence and to other statistical distances are given in Kullback (1959). The asymmetric "directed divergence" has come to be known as the Kullback–Leibler divergence, while the symmetrized "divergence" is now referred to as the Jeffreys divergence.

Definition

For discrete probability distributions $P$ and $Q$ defined on the same sample space $\mathcal{X}$, the relative entropy from $Q$ to $P$ is defined to be

$$D_\text{KL}(P \parallel Q) = \sum_{x \in \mathcal{X}} P(x) \log \frac{P(x)}{Q(x)},$$

which is equivalent to

$$D_\text{KL}(P \parallel Q) = -\sum_{x \in \mathcal{X}} P(x) \log \frac{Q(x)}{P(x)}.$$

In other words, it is the expectation of the logarithmic difference between the probabilities $P$ and $Q$, where the expectation is taken using the probabilities $P$.

Relative entropy is only defined in this way if, for all $x$, $Q(x) = 0$ implies $P(x) = 0$ (absolute continuity). Otherwise, it is often defined as $+\infty$, but the value $+\infty$ is possible even if $Q(x) \neq 0$ everywhere, provided that $\mathcal{X}$ is infinite in extent. Analogous comments apply to the continuous and general measure cases defined below.
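The discrete definition and its edge cases can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `kl_divergence`, its `base` parameter and the example vectors are assumptions made here. It returns infinity when absolute continuity fails and skips terms where the first distribution assigns zero probability.

```python
import math

def kl_divergence(p, q, base=math.e):
    """D_KL(P || Q) for discrete distributions given as equal-length sequences.

    base=math.e gives nats, base=2 gives bits.
    """
    if len(p) != len(q):
        raise ValueError("p and q must be defined on the same sample space")
    total = 0.0
    for px, qx in zip(p, q):
        if px == 0.0:
            continue            # a term with P(x) = 0 contributes zero
        if qx == 0.0:
            return math.inf     # Q(x) = 0 but P(x) > 0: not absolutely continuous
        total += px * math.log(px / qx)
    return total / math.log(base)

p = [0.5, 0.5, 0.0]
q = [0.25, 0.25, 0.5]
print(kl_divergence(p, q, base=2))  # divergence of P from Q, in bits
print(kl_divergence(q, p))          # infinite: Q puts mass where P has none
```

Swapping the arguments changes the result, which illustrates that the quantity is not symmetric.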

Whenever $P(x)$ is zero the contribution of the corresponding term is interpreted as zero because $\lim_{t \to 0^+} t \log t = 0$.

For distributions $P$ and $Q$ of a continuous random variable, relative entropy is defined to be the integral

$$D_\text{KL}(P \parallel Q) = \int_{-\infty}^{\infty} p(x) \log \frac{p(x)}{q(x)} \, dx,$$

where $p$ and $q$ denote the probability densities of $P$ and $Q$.

More generally, if $P$ and $Q$ are probability measures on a measurable space $\mathcal{X}$ and $P$ is absolutely continuous with respect to $Q$, then the relative entropy from $Q$ to $P$ is defined as

$$D_\text{KL}(P \parallel Q) = \int_{x \in \mathcal{X}} \log \frac{P(dx)}{Q(dx)} \, P(dx),$$

where $\frac{P(dx)}{Q(dx)}$ is the Radon–Nikodym derivative of $P$ with respect to $Q$, i.e. the unique $Q$-almost everywhere defined function $r$ on $\mathcal{X}$ such that $P(dx) = r(x) \, Q(dx)$, which exists because $P$ is absolutely continuous with respect to $Q$. Also we assume the expression on the right-hand side exists. Equivalently (by the chain rule), this can be written as

$$D_\text{KL}(P \parallel Q) = \int_{x \in \mathcal{X}} \frac{P(dx)}{Q(dx)} \log \frac{P(dx)}{Q(dx)} \, Q(dx),$$

which is the entropy of $P$ relative to $Q$. Continuing in this case, if $\mu$ is any measure on $\mathcal{X}$ for which densities $p$ and $q$ with $P(dx) = p(x) \, \mu(dx)$ and $Q(dx) = q(x) \, \mu(dx)$ exist (meaning that $P$ and $Q$ are both absolutely continuous with respect to $\mu$), then the relative entropy from $Q$ to $P$ is given as

$$D_\text{KL}(P \parallel Q) = \int_{x \in \mathcal{X}} p(x) \log \frac{p(x)}{q(x)} \, \mu(dx).$$

Note that such a measure $\mu$ for which densities can be defined always exists, since one can take $\mu = \frac{1}{2}(P + Q)$, although in practice it will usually be one that applies in the context, such as counting measure for discrete distributions, or Lebesgue measure or a convenient variant thereof such as Gaussian measure, the uniform measure on the sphere, or Haar measure on a Lie group, for continuous distributions.

The logarithms in these formulae are usually taken to base 2 if information is measured in units of bits, or to base $e$ if information is measured in nats. Most formulas involving relative entropy hold regardless of the base of the logarithm.

Various conventions exist for referring to $D_\text{KL}(P \parallel Q)$ in words. Often it is referred to as the divergence ''between'' $P$ and $Q$, but this fails to convey the fundamental asymmetry in the relation. Sometimes, as in this article, it may be described as the divergence of $P$ ''from'' $Q$ or as the divergence ''from'' $Q$ ''to'' $P$. This reflects the asymmetry in Bayesian inference, which starts ''from'' a prior $Q$ and updates ''to'' the posterior $P$. Another common way to refer to $D_\text{KL}(P \parallel Q)$ is as the relative entropy of $P$ ''with respect to'' $Q$ or the information gain from $P$ over $Q$.

Basic example

Kullback gives the following example (Table 2.1, Example 2.1). Let $P$ and $Q$ be the distributions shown in the table and figure. $P$ is the distribution on the left side of the figure, a binomial distribution with $N = 2$ and $p = 0.4$, so that $P(0) = 9/25$, $P(1) = 12/25$ and $P(2) = 4/25$. $Q$ is the distribution on the right side of the figure, a discrete uniform distribution with the three possible outcomes $x = 0, 1, 2$ (i.e. $\mathcal{X} = \{0, 1, 2\}$), each with probability $1/3$.

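Relative entropies $D_\text{KL}(P \parallel Q)$ and $D_\text{KL}(Q \parallel P)$ are calculated as follows. This example uses the natural log with base $e$, designated $\ln$, to get results in nats (see units of information):

$$D_\text{KL}(P \parallel Q) = \sum_{x \in \mathcal{X}} P(x) \ln \frac{P(x)}{Q(x)} = \frac{9}{25} \ln \frac{9/25}{1/3} + \frac{12}{25} \ln \frac{12/25}{1/3} + \frac{4}{25} \ln \frac{4/25}{1/3} = \frac{1}{25} \left( 32 \ln 2 + 55 \ln 3 - 50 \ln 5 \right) \approx 0.0853,$$

$$D_\text{KL}(Q \parallel P) = \sum_{x \in \mathcal{X}} Q(x) \ln \frac{Q(x)}{P(x)} = \frac{1}{3} \ln \frac{1/3}{9/25} + \frac{1}{3} \ln \frac{1/3}{12/25} + \frac{1}{3} \ln \frac{1/3}{4/25} = \frac{1}{3} \left( -4 \ln 2 - 6 \ln 3 + 6 \ln 5 \right) \approx 0.0975.$$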
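The two relative entropies of this example can be checked numerically. The sketch below assumes the binomial parameters are $N = 2$ and $p = 0.4$ and that the uniform distribution has three outcomes; the helper name `kl_nats` is chosen here for illustration.

```python
import math
from fractions import Fraction

# Assumed parameters of the example: Binomial(N=2, p=0.4) versus uniform on {0, 1, 2}.
n, prob = 2, Fraction(2, 5)
P = [math.comb(n, k) * prob**k * (1 - prob)**(n - k) for k in range(n + 1)]
Q = [Fraction(1, 3)] * 3

def kl_nats(a, b):
    """Relative entropy of a with respect to b, natural logarithm (nats)."""
    return sum(float(x) * math.log(float(x / y)) for x, y in zip(a, b))

print(P)                          # exact probabilities 9/25, 12/25, 4/25
print(round(kl_nats(P, Q), 4))    # D_KL(P || Q)
print(round(kl_nats(Q, P), 4))    # D_KL(Q || P)
```

The two directions give different values, so even in this small case the divergence is visibly asymmetric.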

Interpretations

Statistics

In the field of statistics, the Neyman–Pearson lemma states that the most powerful way to distinguish between the two distributions $P$ and $Q$ based on an observation $Y$ (drawn from one of them) is through the log of the ratio of their likelihoods: $\log P(Y) - \log Q(Y)$. The KL divergence is the expected value of this statistic if $Y$ is actually drawn from $P$. Kullback motivated the statistic as an expected log likelihood ratio.

Coding

In the context of coding theory, $D_\text{KL}(P \parallel Q)$ can be constructed by measuring the expected number of extra bits required to code samples from $P$ using a code optimized for $Q$ rather than the code optimized for $P$.

Inference

In the context of machine learning, $D_\text{KL}(P \parallel Q)$ is often called the information gain achieved if $P$ would be used instead of $Q$, which is currently used. By analogy with information theory, it is called the ''relative entropy'' of $P$ with respect to $Q$.

Expressed in the language of Bayesian inference, $D_\text{KL}(P \parallel Q)$ is a measure of the information gained by revising one's beliefs from the prior probability distribution $Q$ to the posterior probability distribution $P$. In other words, it is the amount of information lost when $Q$ is used to approximate $P$.

Information geometry

In applications, $P$ typically represents the "true" distribution of data, observations, or a precisely calculated theoretical distribution, while $Q$ typically represents a theory, model, description, or approximation of $P$. In order to find a distribution $Q$ that is closest to $P$, we can minimize the KL divergence and compute an information projection.

While it is a statistical distance, it is not a metric, the most familiar type of distance, but instead it is a divergence. While metrics are symmetric and generalize ''linear'' distance, satisfying the triangle inequality, divergences are asymmetric and generalize ''squared'' distance, in some cases satisfying a generalized Pythagorean theorem. In general $D_\text{KL}(P \parallel Q)$ does not equal $D_\text{KL}(Q \parallel P)$, and the asymmetry is an important part of the geometry. The infinitesimal form of relative entropy, specifically its Hessian, gives a metric tensor that equals the Fisher information metric. The Fisher information metric on a given family of probability distributions determines the natural gradient used in information-geometric optimization algorithms. Its quantum version is the Fubini–Study metric. Relative entropy satisfies a generalized Pythagorean theorem for exponential families (geometrically interpreted as dually flat manifolds), and this allows one to minimize relative entropy by geometric means, for example by information projection and in maximum likelihood estimation.
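The link between the Hessian of relative entropy and the Fisher information can be checked numerically on a one-parameter family. The sketch below uses the Bernoulli family as an illustrative assumption: its Fisher information is $1/(\theta(1-\theta))$, so for a small shift $\varepsilon$ one expects $D_\text{KL}(p_\theta \parallel p_{\theta+\varepsilon}) \approx \tfrac{1}{2} \varepsilon^2 / (\theta(1-\theta))$. The function and variable names are hypothetical.

```python
import math

def kl_bernoulli(a, b):
    """D_KL(Bernoulli(a) || Bernoulli(b)) in nats, for 0 < a, b < 1."""
    return a * math.log(a / b) + (1 - a) * math.log((1 - a) / (1 - b))

theta = 0.3
fisher = 1.0 / (theta * (1.0 - theta))   # Fisher information of Bernoulli(theta)

ratios = {}
for eps in (1e-1, 1e-2, 1e-3):
    exact = kl_bernoulli(theta, theta + eps)
    quadratic = 0.5 * fisher * eps ** 2  # second-order (Hessian) approximation
    ratios[eps] = exact / quadratic
    print(f"eps={eps:g}  KL={exact:.3e}  quadratic={quadratic:.3e}  ratio={ratios[eps]:.4f}")
```

The ratio approaches 1 as the shift shrinks, which is the sense in which the Fisher information metric is the infinitesimal form of the divergence.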

The relative entropy is the Bregman divergence generated by the negative entropy, but it is also of the form of an $f$-divergence. For probabilities over a finite alphabet, it is unique in being a member of both of these classes of statistical divergences. An application of Bregman divergences can be found in mirror descent.

Finance (game theory)

Consider a growth-optimizing investor in a fair game with mutually exclusive outcomes (e.g. a “horse race” in which the official odds add up to one). The rate of return expected by such an investor is equal to the relative entropy between the investor's believed probabilities and the official odds. This is a special case of a much more general connection between financial returns and divergence measures.

Financial risks are connected to $D_\text{KL}$ via information geometry. Investors' views, the prevailing market view, and risky scenarios form triangles on the relevant manifold of probability distributions. The shape of the triangles determines key financial risks (both qualitatively and quantitatively). For instance, obtuse triangles in which investors' views and risk scenarios appear on “opposite sides” relative to the market describe negative risks, acute triangles describe positive exposure, and the right-angled situation in the middle corresponds to zero risk. Extending this concept, relative entropy can be hypothetically utilised to identify the behaviour of informed investors, if one takes this to be represented by the magnitude and deviations away from the prior expectations of fund flows, for example.

Motivation

In information theory, the Kraft–McMillan theorem establishes that any directly decodable coding scheme for coding a message to identify one value $x_i$ out of a set of possibilities $X$ can be seen as representing an implicit probability distribution $q(x_i) = 2^{-\ell_i}$ over $X$, where $\ell_i$ is the length of the code for $x_i$ in bits. Therefore, relative entropy can be interpreted as the expected extra message-length per datum that must be communicated if a code that is optimal for a given (wrong) distribution $Q$ is used, compared to using a code based on the true distribution $P$: it is the ''excess'' entropy.

$$D_\text{KL}(P \parallel Q) = \sum_{x \in \mathcal{X}} p(x) \log \frac{1}{q(x)} - \sum_{x \in \mathcal{X}} p(x) \log \frac{1}{p(x)} = \mathrm{H}(P, Q) - \mathrm{H}(P)$$

where $\mathrm{H}(P, Q)$ is the cross entropy of $Q$ relative to $P$ and $\mathrm{H}(P)$ is the entropy of $P$ (which is the same as the cross-entropy of $P$ with itself).
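The coding interpretation can be illustrated with a toy prefix code. In this sketch the code lengths, the true distribution and all names are assumptions made for the example: the lengths define the implicit distribution $q(x_i) = 2^{-\ell_i}$, and the excess of the expected code length over the entropy of the true distribution is compared with cross entropy minus entropy.

```python
import math

def entropy(p):
    """Shannon entropy in bits."""
    return -sum(x * math.log2(x) for x in p if x > 0)

def cross_entropy(p, q):
    """Expected code length in bits when samples from p are coded for q."""
    return -sum(x * math.log2(y) for x, y in zip(p, q) if x > 0)

# Hypothetical code lengths (bits) of a prefix code designed for the wrong distribution Q.
lengths = [1, 2, 3, 3]
q = [2.0 ** -l for l in lengths]      # implicit distribution q(x_i) = 2^(-l_i)
p = [0.25, 0.25, 0.25, 0.25]          # assumed true distribution P

kraft_sum = sum(q)                    # equals 1 for a complete prefix code
expected_length = sum(px * l for px, l in zip(p, lengths))
excess_bits = cross_entropy(p, q) - entropy(p)

print(kraft_sum, expected_length, entropy(p), excess_bits)
```

Here the expected length equals the cross entropy, and the excess over the entropy of the true distribution is the relative entropy in bits.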

The relative entropy $D_\text{KL}(P \parallel Q)$ can be thought of geometrically as a statistical distance, a measure of how far the distribution $Q$ is from the distribution $P$. Geometrically it is a divergence: an asymmetric, generalized form of squared distance. The cross-entropy $\mathrm{H}(P, Q)$ is itself such a measurement (formally a loss function), but it cannot be thought of as a distance, since $\mathrm{H}(P, P) = \mathrm{H}(P)$ is not zero. This can be fixed by subtracting $\mathrm{H}(P)$ to make $D_\text{KL}(P \parallel Q)$ agree more closely with our notion of distance, as the ''excess'' loss. The resulting function is asymmetric, and while this can be symmetrized, the asymmetric form is more useful. See the information geometry interpretation above for more on the geometric interpretation.

Relative entropy relates to the "rate function" in the theory of large deviations. [Novak S.Y. (2011), ''Extreme Value Methods with Applications to Finance'', ch. 14.5 (Chapman & Hall).]

Arthur Hobson proved that relative entropy is the only measure of difference between probability distributions that satisfies some desired properties, which are the canonical extension to those appearing in a commonly used characterization of entropy. Consequently, mutual information is the only measure of mutual dependence that obeys certain related conditions, since it can be defined in terms of Kullback–Leibler divergence.

Properties

* Relative entropy is always non-negative, $D_\text{KL}(P \parallel Q) \geq 0$, a result known as Gibbs' inequality, with $D_\text{KL}(P \parallel Q) = 0$ if and only if $P = Q$ as measures. In particular, if $P(dx) = p(x) \, \mu(dx)$ and $Q(dx) = q(x) \, \mu(dx)$, then $p(x) = q(x)$ $\mu$-almost everywhere. The entropy $\mathrm{H}(P)$ thus sets a minimum value for the cross-entropy $\mathrm{H}(P, Q)$, the expected number of bits required when using a code based on $Q$ rather than $P$; and the Kullback–Leibler divergence therefore represents the expected number of extra bits that must be transmitted to identify a value $x$ drawn from $X$, if a code is used corresponding to the probability distribution $Q$, rather than the "true" distribution $P$.

* No upper bound exists for the general case. However, it has been shown that if $P$ and $Q$ are two discrete probability distributions built by distributing the same discrete quantity, then the maximum value of $D_\text{KL}(P \parallel Q)$ can be calculated.

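* Relative entropy remains well-defined for continuous distributions, and furthermore is invariant under parameter transformations. For example, if a transformation is made from variable $x$ to variable $y(x)$, then, since $P(dx) = p(x) \, dx = \tilde{p}(y) \, dy = \tilde{p}(y(x)) \left| \tfrac{dy}{dx}(x) \right| dx$ and $Q(dx) = q(x) \, dx = \tilde{q}(y) \, dy = \tilde{q}(y(x)) \left| \tfrac{dy}{dx}(x) \right| dx$, where $\left| \tfrac{dy}{dx}(x) \right|$ is the absolute value of the derivative or more generally of the Jacobian, the relative entropy may be rewritten:

$$D_\text{KL}(P \parallel Q) = \int_{x_a}^{x_b} p(x) \log \frac{p(x)}{q(x)} \, dx = \int_{x_a}^{x_b} \tilde{p}(y(x)) \left| \tfrac{dy}{dx}(x) \right| \log \frac{\tilde{p}(y(x)) \left| \tfrac{dy}{dx}(x) \right|}{\tilde{q}(y(x)) \left| \tfrac{dy}{dx}(x) \right|} \, dx = \int_{y_a}^{y_b} \tilde{p}(y) \log \frac{\tilde{p}(y)}{\tilde{q}(y)} \, dy$$

where $y_a = y(x_a)$ and $y_b = y(x_b)$. Although it was assumed that the transformation was continuous, this need not be the case. This also shows that the relative entropy produces a dimensionally consistent quantity, since if $x$ is a dimensioned variable, $p(x)$ and $q(x)$ are also dimensioned, since e.g. $P(dx) = p(x) \, dx$ is dimensionless. The argument of the logarithmic term is and remains dimensionless, as it must. It can therefore be seen as in some ways a more fundamental quantity than some other properties in information theory (such as self-information or Shannon entropy), which can become undefined or negative for non-discrete probabilities. [See the section "differential entropy – 4" in the ''Relative Entropy'' video lecture by Sergio Verdú, NIPS 2009.]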

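* Relative entropy is additive for independent distributions in much the same way as Shannon entropy. If $P_1, P_2$ are independent distributions, and $P(dx, dy) = P_1(dx) P_2(dy)$, and likewise $Q(dx, dy) = Q_1(dx) Q_2(dy)$ for independent distributions $Q_1, Q_2$, then

$$D_\text{KL}(P \parallel Q) = D_\text{KL}(P_1 \parallel Q_1) + D_\text{KL}(P_2 \parallel Q_2).$$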

* Relative entropy $D_\text{KL}(P \parallel Q)$ is convex in the pair of probability measures $(P, Q)$, i.e. if $(P_1, Q_1)$ and $(P_2, Q_2)$ are two pairs of probability measures then

$$D_\text{KL}(\lambda P_1 + (1 - \lambda) P_2 \parallel \lambda Q_1 + (1 - \lambda) Q_2) \leq \lambda D_\text{KL}(P_1 \parallel Q_1) + (1 - \lambda) D_\text{KL}(P_2 \parallel Q_2) \quad \text{for } 0 \leq \lambda \leq 1.$$

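* $D_\text{KL}(P \parallel Q)$ may be Taylor expanded about its minimum (i.e. $P = Q$) as

$$D_\text{KL}(P \parallel Q) = \sum_{n=2}^{\infty} \frac{1}{n(n-1)} \sum_{x \in \mathcal{X}} \frac{(Q(x) - P(x))^n}{Q(x)^{n-1}},$$

which converges if and only if $P \leq 2Q$ almost surely w.r.t. $Q$.

Denote $f(\alpha) := D_\text{KL}((1 - \alpha) Q + \alpha P \parallel Q)$ and note that $D_\text{KL}(P \parallel Q) = f(1)$. The first derivative of $f$ may be derived and evaluated as follows:

$$f'(\alpha) = \sum_{x \in \mathcal{X}} (P(x) - Q(x)) \left( \log \frac{(1 - \alpha) Q(x) + \alpha P(x)}{Q(x)} + 1 \right) = \sum_{x \in \mathcal{X}} (P(x) - Q(x)) \log \frac{(1 - \alpha) Q(x) + \alpha P(x)}{Q(x)}, \qquad f'(0) = 0.$$

Further derivatives may be derived and evaluated as follows, for $n \geq 2$:

$$f^{(n)}(\alpha) = (-1)^n (n-2)! \sum_{x \in \mathcal{X}} \frac{(P(x) - Q(x))^n}{((1 - \alpha) Q(x) + \alpha P(x))^{n-1}}, \qquad f^{(n)}(0) = (-1)^n (n-2)! \sum_{x \in \mathcal{X}} \frac{(P(x) - Q(x))^n}{Q(x)^{n-1}}.$$

Hence solving for $D_\text{KL}(P \parallel Q)$ via the Taylor expansion of $f$ about $0$ evaluated at $\alpha = 1$ yields

$$D_\text{KL}(P \parallel Q) = \sum_{n=0}^{\infty} \frac{f^{(n)}(0)}{n!} = \sum_{n=2}^{\infty} \frac{1}{n(n-1)} \sum_{x \in \mathcal{X}} \frac{(Q(x) - P(x))^n}{Q(x)^{n-1}}.$$

$P \leq 2Q$ a.s. is a sufficient condition for convergence of the series by the following absolute convergence argument:

$$\sum_{n=2}^{\infty} \left| \frac{1}{n(n-1)} \sum_{x \in \mathcal{X}} \frac{(Q(x) - P(x))^n}{Q(x)^{n-1}} \right| \leq \sum_{n=2}^{\infty} \frac{1}{n(n-1)} \sum_{x \in \mathcal{X}} |Q(x) - P(x)| \left| 1 - \frac{P(x)}{Q(x)} \right|^{n-1} \leq \sum_{n=2}^{\infty} \frac{1}{n(n-1)} \sum_{x \in \mathcal{X}} |Q(x) - P(x)| \leq 2 \sum_{n=2}^{\infty} \frac{1}{n(n-1)} = 2.$$

$P \leq 2Q$ a.s. is also a necessary condition for convergence of the series by the following proof by contradiction. Assume that $P > 2Q$ with measure strictly greater than $0$. It then follows that there must exist some values $\varepsilon > 0$, $\rho > 0$, and $U < \infty$ such that $P \geq 2Q + \varepsilon$ and $Q \leq U$ with measure $\rho$. The previous proof of sufficiency demonstrated that the measure $1 - \rho$ component of the series where $P \leq 2Q$ is bounded, so we need only concern ourselves with the behavior of the measure $\rho$ component of the series where $P \geq 2Q + \varepsilon$. The absolute value of the $n$th term of this component of the series is then lower bounded by $\frac{1}{n(n-1)} \rho \left( 1 + \frac{\varepsilon}{U} \right)^n$, which is unbounded as $n \to \infty$, so the series diverges.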
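Several of the properties listed above can be spot-checked numerically on small discrete distributions. The following sketch is illustrative only: the example distributions, the mixing weight and the helper names are assumptions, and the Taylor series is written in the form $\sum_{n \geq 2} \frac{1}{n(n-1)} \sum_x (Q(x) - P(x))^n / Q(x)^{n-1}$, truncated after a fixed number of terms.

```python
import math
from itertools import product

def kl(p, q):
    """Relative entropy in nats for strictly positive q."""
    return sum(x * math.log(x / y) for x, y in zip(p, q) if x > 0)

def mix(u, v, lam):
    """Pointwise mixture lam * u + (1 - lam) * v."""
    return [lam * a + (1 - lam) * b for a, b in zip(u, v)]

def taylor_series(p, q, terms):
    """Partial sum of the Taylor expansion of D_KL(P || Q) about P = Q."""
    return sum(
        sum((y - x) ** n / y ** (n - 1) for x, y in zip(p, q)) / (n * (n - 1))
        for n in range(2, terms + 2)
    )

p1, q1 = [0.2, 0.8], [0.5, 0.5]            # satisfies P <= 2Q pointwise
p2, q2 = [0.1, 0.6, 0.3], [0.3, 0.3, 0.4]

# Additivity: product distributions on the joint sample space.
p_joint = [a * b for a, b in product(p1, p2)]
q_joint = [a * b for a, b in product(q1, q2)]
additivity_gap = abs(kl(p_joint, q_joint) - (kl(p1, q1) + kl(p2, q2)))

# Joint convexity with an arbitrary mixing weight.
lam = 0.3
pa, qa, pb, qb = [0.2, 0.8], [0.5, 0.5], [0.9, 0.1], [0.6, 0.4]
lhs = kl(mix(pa, pb, lam), mix(qa, qb, lam))
rhs = lam * kl(pa, qa) + (1 - lam) * kl(pb, qb)

series = taylor_series(p1, q1, terms=60)

print(kl(p1, q1) >= 0, additivity_gap, lhs <= rhs, abs(series - kl(p1, q1)))
```

A check like this does not prove the properties, but it catches sign and indexing mistakes when implementing the formulas.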

Duality formula for variational inference

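The following result, due to Donsker and Varadhan, is known as Donsker and Varadhan's variational formula. For probability measures $P$ and $Q$ on the same measurable space, with $P$ absolutely continuous with respect to $Q$,

$$D_\text{KL}(P \parallel Q) = \sup_{f} \left\{ \mathbb{E}_P[f] - \log \mathbb{E}_Q\left[ e^{f} \right] \right\},$$

where the supremum is taken over bounded measurable functions $f$.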
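On a finite sample space the variational formula can be explored directly. The sketch below assumes the formula in its standard form, $D_\text{KL}(P \parallel Q) = \sup_f \{ \mathbb{E}_P[f] - \log \mathbb{E}_Q[e^f] \}$, and checks two things: the choice $f = \log(P/Q)$ attains the divergence, and randomly drawn functions never exceed it. The distributions, the random search and all names are illustrative assumptions.

```python
import math
import random

def kl(p, q):
    """Relative entropy in nats."""
    return sum(x * math.log(x / y) for x, y in zip(p, q) if x > 0)

def dv_objective(f, p, q):
    """E_P[f] - log E_Q[exp(f)] for a function f given by its values on a finite set."""
    mean_p = sum(px * fx for px, fx in zip(p, f))
    log_mgf_q = math.log(sum(qx * math.exp(fx) for qx, fx in zip(q, f)))
    return mean_p - log_mgf_q

p = [0.1, 0.6, 0.3]
q = [0.3, 0.3, 0.4]

f_star = [math.log(px / qx) for px, qx in zip(p, q)]   # log-density ratio
at_optimum = dv_objective(f_star, p, q)

rng = random.Random(0)                                  # fixed seed for repeatability
best_random = max(
    dv_objective([rng.uniform(-3.0, 3.0) for _ in p], p, q) for _ in range(10000)
)

print(kl(p, q), at_optimum, best_random)
```

This lower-bound structure is what makes the formula useful in variational inference: any test function gives a bound, and improving the function tightens it.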

Examples

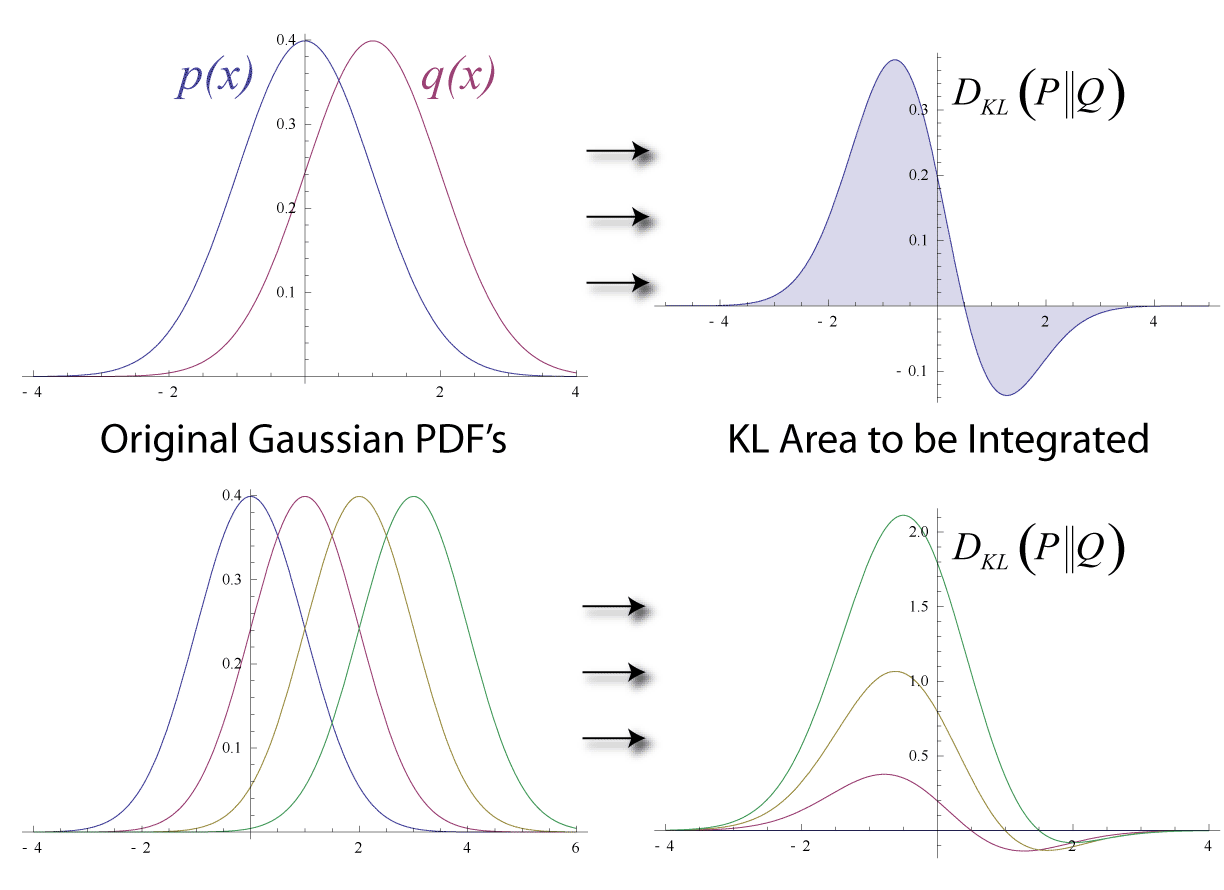

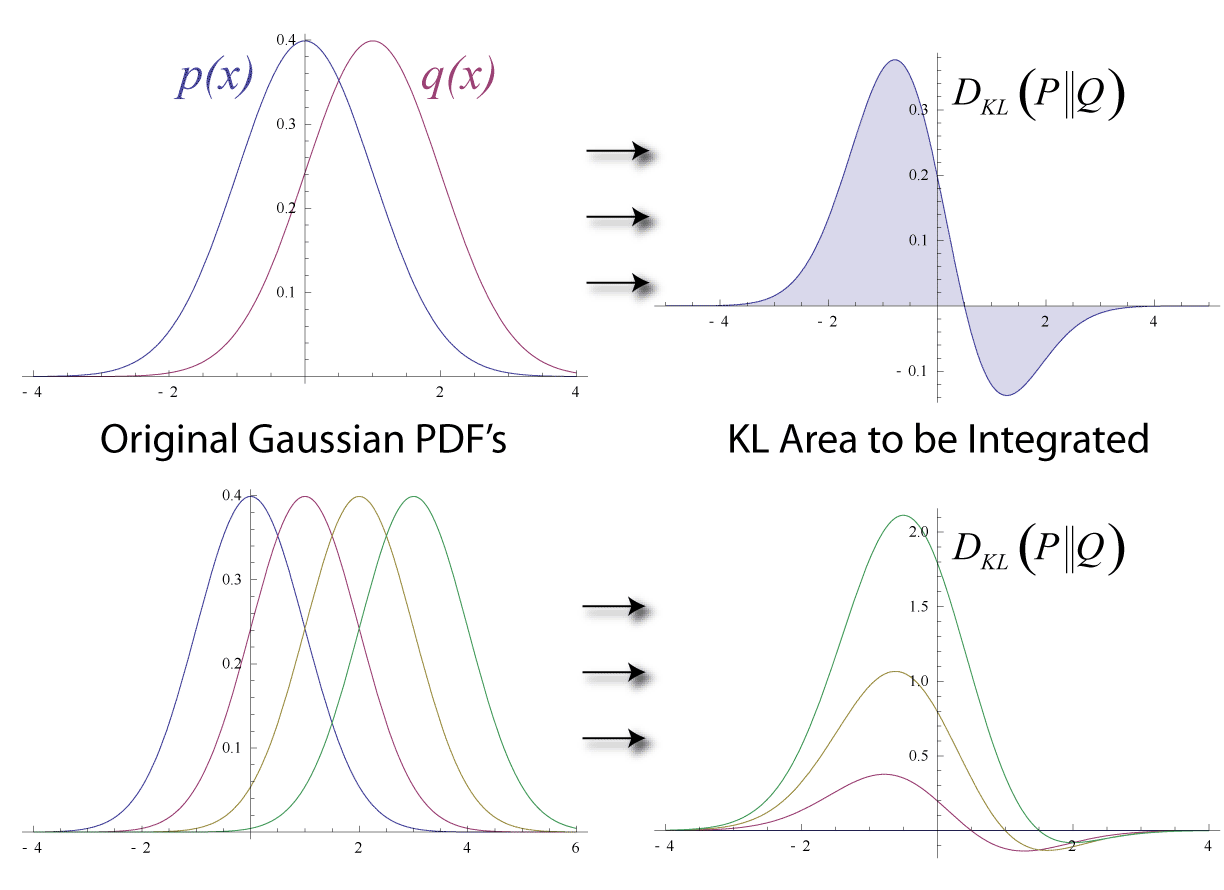

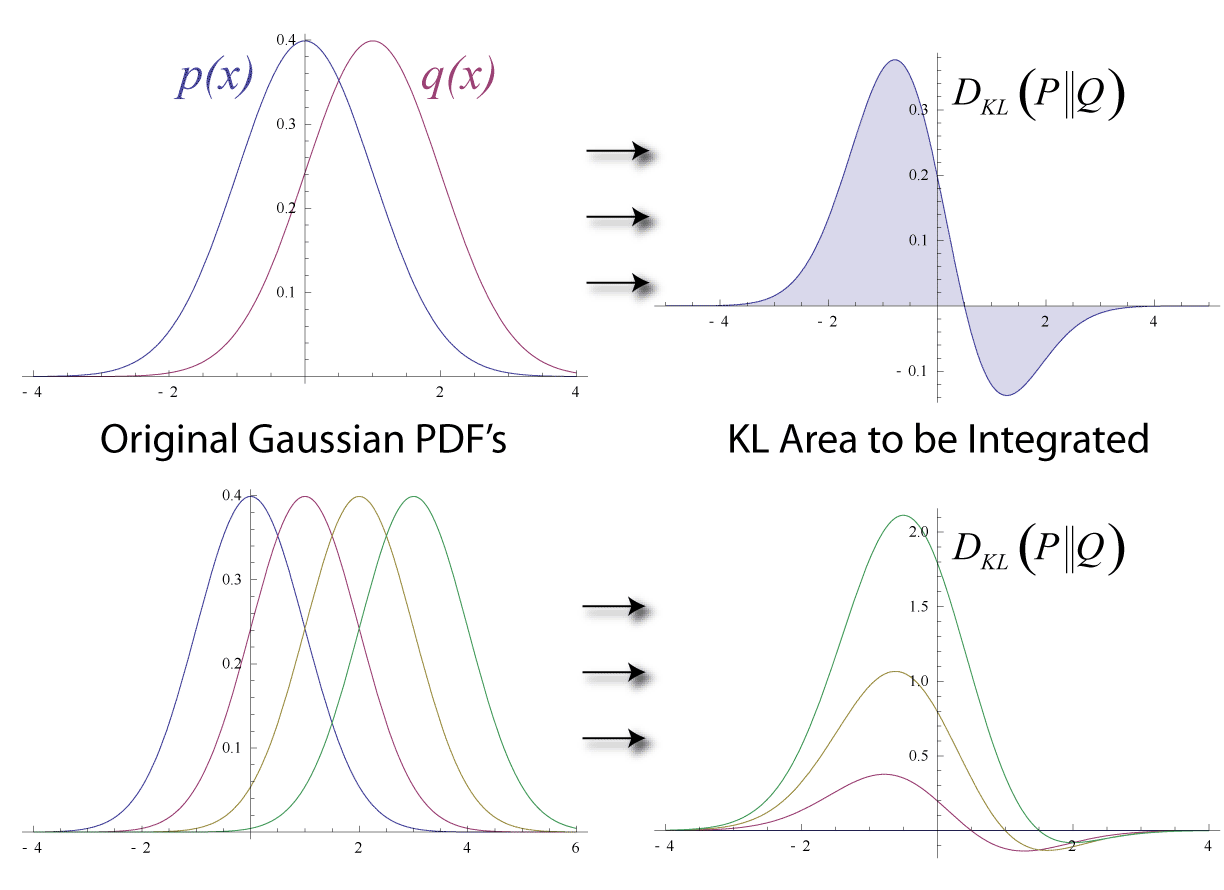

Multivariate normal distributions

Suppose that we have two multivariate normal distributions, with means $\mu_0, \mu_1$ and with (non-singular) covariance matrices $\Sigma_0, \Sigma_1$. If the two distributions have the same dimension, $k$, then the relative entropy between the distributions is as follows:

$$D_\text{KL}(\mathcal{N}_0 \parallel \mathcal{N}_1) = \frac{1}{2} \left[ \operatorname{tr}\left( \Sigma_1^{-1} \Sigma_0 \right) - k + (\mu_1 - \mu_0)^\mathsf{T} \Sigma_1^{-1} (\mu_1 - \mu_0) + \ln \frac{\det \Sigma_1}{\det \Sigma_0} \right].$$

The logarithm in the last term must be taken to base $e$ since all terms apart from the last are base-$e$ logarithms of expressions that are either factors of the density function or otherwise arise naturally. The equation therefore gives a result measured in nats. Dividing the entire expression above by $\ln(2)$ yields the divergence in bits.

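In a numerical implementation, it is helpful to express the result in terms of the Cholesky decompositions $L_0, L_1$ such that $\Sigma_0 = L_0 L_0^\mathsf{T}$ and $\Sigma_1 = L_1 L_1^\mathsf{T}$. Then with $M$ and $y$ solutions to the triangular linear systems $L_1 M = L_0$ and $L_1 y = \mu_1 - \mu_0$,

$$D_\text{KL}(\mathcal{N}_0 \parallel \mathcal{N}_1) = \frac{1}{2} \left( \sum_{i,j=1}^{k} (M_{ij})^2 - k + |y|^2 + 2 \sum_{i=1}^{k} \ln \frac{(L_1)_{ii}}{(L_0)_{ii}} \right).$$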

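A special case, and a common quantity in variational inference, is the relative entropy between a diagonal multivariate normal and a standard normal distribution (with zero mean and unit variance):

$$D_\text{KL}\left( \mathcal{N}\left( (\mu_1, \ldots, \mu_k)^\mathsf{T}, \operatorname{diag}(\sigma_1^2, \ldots, \sigma_k^2) \right) \parallel \mathcal{N}(\mathbf{0}, \mathbf{I}) \right) = \frac{1}{2} \sum_{i=1}^{k} \left[ \sigma_i^2 + \mu_i^2 - 1 - \ln\left( \sigma_i^2 \right) \right].$$

For two univariate normal distributions $p = \mathcal{N}(\mu_0, \sigma_0^2)$ and $q = \mathcal{N}(\mu_1, \sigma_1^2)$ the above simplifies to

$$D_\text{KL}(p \parallel q) = \ln \frac{\sigma_1}{\sigma_0} + \frac{\sigma_0^2 + (\mu_0 - \mu_1)^2}{2 \sigma_1^2} - \frac{1}{2}.$$

In the case of co-centered normal distributions ($\mu_0 = \mu_1$) with $k = \sigma_1 / \sigma_0$ (here $k$ denotes the ratio of standard deviations, not the dimension), this simplifies to:

$$D_\text{KL}(p \parallel q) = \log_2 k + \frac{k^{-2} - 1}{2 \ln 2} \ \text{bits}.$$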
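The Cholesky-based evaluation of the Gaussian relative entropy can be sketched without external libraries. This is a minimal illustration under stated assumptions: the formula is taken to be $\frac{1}{2}\left( \lVert M \rVert_F^2 - k + |y|^2 + 2 \sum_i \ln \frac{(L_1)_{ii}}{(L_0)_{ii}} \right)$ with $L_1 M = L_0$ and $L_1 y = \mu_1 - \mu_0$, and the helper names `cholesky`, `forward_solve` and `kl_mvn` are hypothetical. A production version would more likely call a linear algebra library.

```python
import math

def cholesky(a):
    """Lower-triangular L with a = L L^T, for a symmetric positive-definite matrix."""
    n = len(a)
    low = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = a[i][j] - sum(low[i][m] * low[j][m] for m in range(j))
            low[i][j] = math.sqrt(s) if i == j else s / low[j][j]
    return low

def forward_solve(low, b):
    """Solve low @ x = b for lower-triangular low by forward substitution."""
    x = []
    for i, row in enumerate(low):
        x.append((b[i] - sum(row[m] * x[m] for m in range(i))) / row[i])
    return x

def kl_mvn(mu0, cov0, mu1, cov1):
    """D_KL(N(mu0, cov0) || N(mu1, cov1)) in nats, via Cholesky factors."""
    k = len(mu0)
    l0, l1 = cholesky(cov0), cholesky(cov1)
    # Each column of M solves L1 m_j = (column j of L0); y solves L1 y = mu1 - mu0.
    cols = [forward_solve(l1, [l0[i][j] for i in range(k)]) for j in range(k)]
    y = forward_solve(l1, [b - a for a, b in zip(mu0, mu1)])
    frobenius_sq = sum(v * v for col in cols for v in col)
    log_det_ratio = 2.0 * sum(math.log(l1[i][i] / l0[i][i]) for i in range(k))
    return 0.5 * (frobenius_sq - k + sum(v * v for v in y) + log_det_ratio)

# Univariate check against ln(s1/s0) + (s0^2 + (m0 - m1)^2) / (2 s1^2) - 1/2.
uni = kl_mvn([0.0], [[1.0]], [1.0], [[4.0]])
closed_form = math.log(2.0) + (1.0 + 1.0) / (2.0 * 4.0) - 0.5

# Diagonal Gaussian against the standard normal.
diag = kl_mvn([1.0, -2.0], [[0.5, 0.0], [0.0, 2.0]], [0.0, 0.0], [[1.0, 0.0], [0.0, 1.0]])

print(uni, closed_form, diag)
```

Working with triangular solves avoids forming an explicit matrix inverse or determinant, which is the main numerical motivation for the Cholesky form.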

Uniform distributions

Consider two uniform distributions, with the support of
