Deep learning (also known as deep structured learning) is part of a broader family of machine learning methods based on artificial neural networks with representation learning. Learning can be supervised, semi-supervised or unsupervised.

Deep-learning architectures such as deep neural networks, deep belief networks, deep reinforcement learning, recurrent neural networks, convolutional neural networks and Transformers have been applied to fields including computer vision, speech recognition, natural language processing, machine translation, bioinformatics, drug design, medical image analysis, climate science, material inspection and board game programs, where they have produced results comparable to and in some cases surpassing human expert performance.

Artificial neural networks (ANNs) were inspired by information processing and distributed communication nodes in biological systems. ANNs have various differences from biological brains. Specifically, artificial neural networks tend to be static and symbolic, while the biological brain of most living organisms is dynamic (plastic) and analog.

The adjective "deep" in deep learning refers to the use of multiple layers in the network. Early work showed that a linear perceptron cannot be a universal classifier, but that a network with a nonpolynomial activation function with one hidden layer of unbounded width can. Deep learning is a modern variation which is concerned with an unbounded number of layers of bounded size, which permits practical application and optimized implementation, while retaining theoretical universality under mild conditions. In deep learning the layers are also permitted to be heterogeneous and to deviate widely from biologically informed connectionist models, for the sake of efficiency, trainability and understandability, hence the "structured" part.

Definition

Deep learning is a class of

machine learning

Machine learning (ML) is a field of inquiry devoted to understanding and building methods that 'learn', that is, methods that leverage data to improve performance on some set of tasks. It is seen as a part of artificial intelligence.

Machine ...

algorithm

In mathematics and computer science, an algorithm () is a finite sequence of rigorous instructions, typically used to solve a class of specific problems or to perform a computation. Algorithms are used as specifications for performing ...

s that

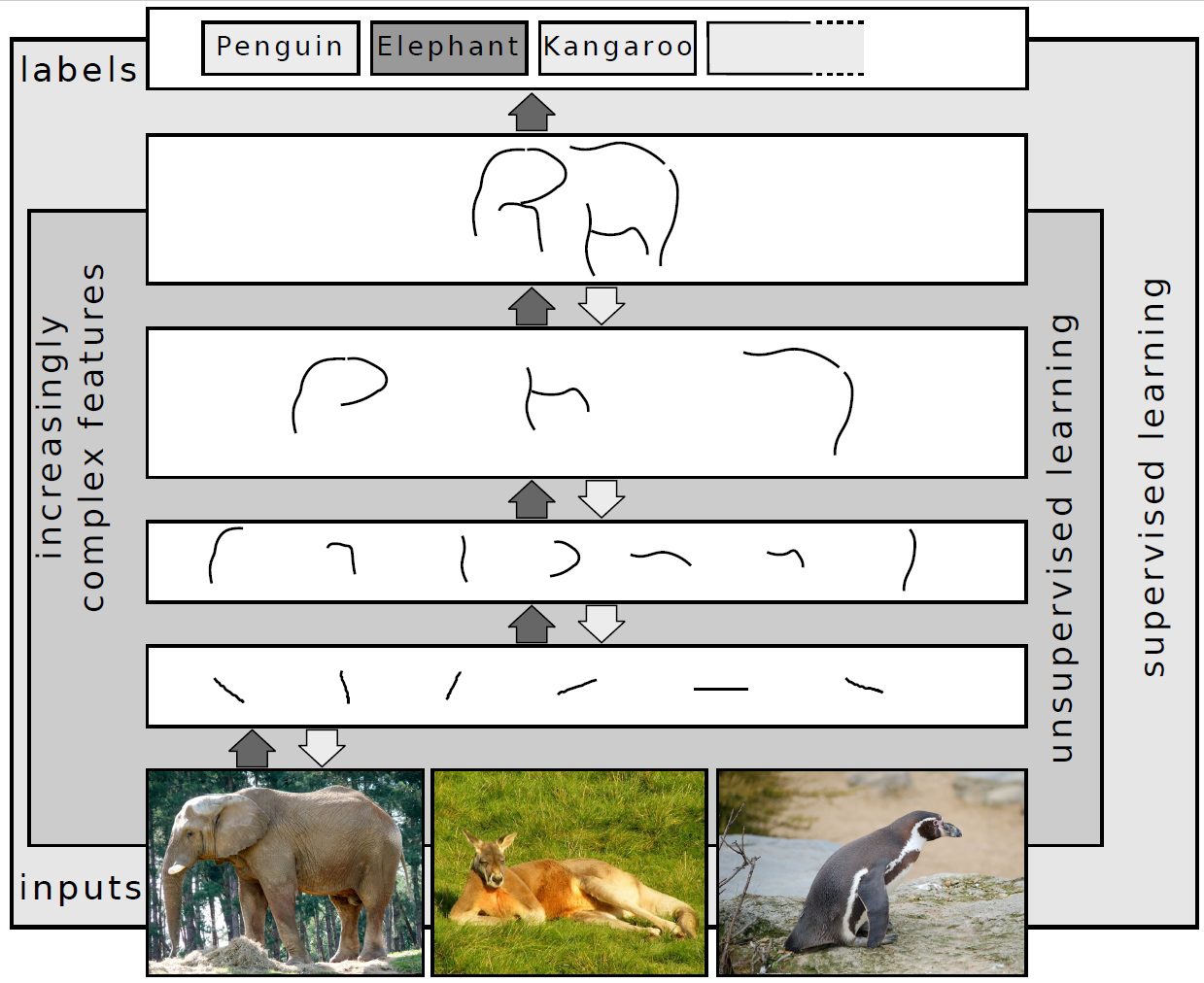

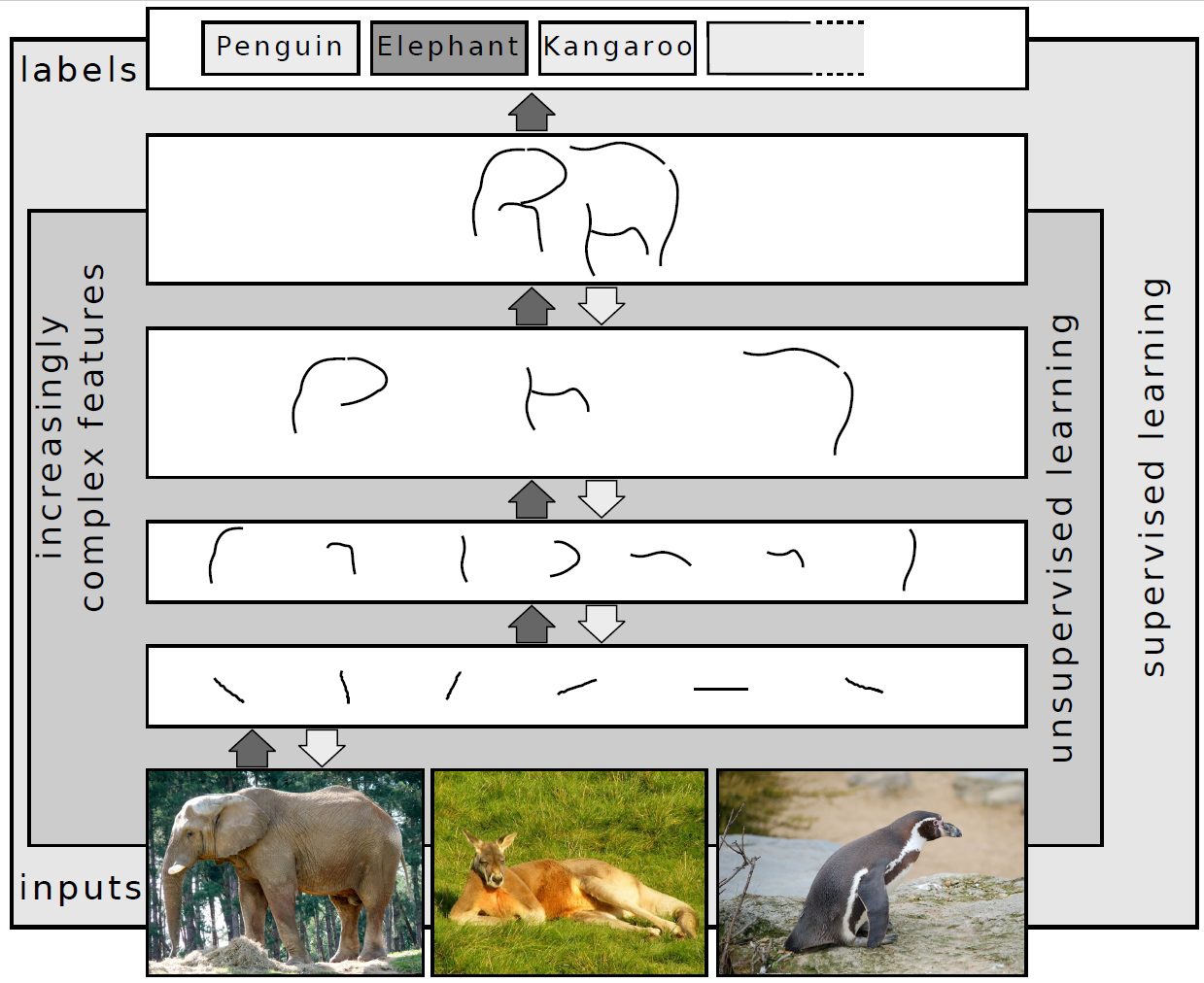

uses multiple layers to progressively extract higher-level features from the raw input. For example, in

image processing, lower layers may identify edges, while higher layers may identify the concepts relevant to a human such as digits or letters or faces.

Overview

Most modern deep learning models are based on artificial neural networks, specifically convolutional neural networks (CNNs), although they can also include propositional formulas or latent variables organized layer-wise in deep generative models such as the nodes in deep belief networks and deep Boltzmann machines.

In deep learning, each level learns to transform its input data into a slightly more abstract and composite representation. In an image recognition application, the raw input may be a matrix of pixels; the first representational layer may abstract the pixels and encode edges; the second layer may compose and encode arrangements of edges; the third layer may encode a nose and eyes; and the fourth layer may recognize that the image contains a face. Importantly, a deep learning process can learn which features to optimally place in which level ''on its own''. This does not eliminate the need for hand-tuning; for example, varying numbers of layers and layer sizes can provide different degrees of abstraction.
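The layer-by-layer transformation described above can be sketched in a few lines of plain Python. This is only an illustrative forward pass with random, untrained weights: the layer sizes, the helper names (`dense`, `init_layer`, `forward`) and the choice of ReLU are assumptions made for the example, and no learning takes place.

```python
import random


def relu(values):
    """Element-wise rectified linear unit."""
    return [max(0.0, v) for v in values]


def dense(x, weights, biases):
    """Affine map: one output per row of `weights`."""
    return [sum(w * v for w, v in zip(row, x)) + b
            for row, b in zip(weights, biases)]


def init_layer(n_in, n_out, rng):
    """Random (untrained) parameters for one layer; purely illustrative."""
    weights = [[rng.uniform(-1.0, 1.0) for _ in range(n_in)]
               for _ in range(n_out)]
    biases = [0.0] * n_out
    return weights, biases


def forward(x, layers):
    """Return the intermediate representation produced by every layer."""
    representations = []
    for weights, biases in layers:
        x = relu(dense(x, weights, biases))
        representations.append(x)
    return representations


rng = random.Random(0)
sizes = [16, 8, 4, 2]  # hypothetical: 16 "pixels" -> 8 -> 4 -> 2 features
layers = [init_layer(n_in, n_out, rng)
          for n_in, n_out in zip(sizes, sizes[1:])]
pixels = [rng.random() for _ in range(sizes[0])]
reps = forward(pixels, layers)
print([len(r) for r in reps])  # sizes of the successive representations
```

Each entry of `reps` is the input re-expressed by one more layer. In a trained network these successive representations would correspond to the increasingly abstract features (edges, arrangements of edges, parts, objects) described in the text.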

The word "deep" in "deep learning" refers to the number of layers through which the data is transformed. More precisely, deep learning systems have a substantial ''credit assignment path'' (CAP) depth. The CAP is the chain of transformations from input to output. CAPs describe potentially causal connections between input and output. For a feedforward neural network, the depth of the CAPs is that of the network and is the number of hidden layers plus one (as the output layer is also parameterized). For recurrent neural networks, in which a signal may propagate through a layer more than once, the CAP depth is potentially unlimited.

No universally agreed-upon threshold of depth divides shallow learning from deep learning, but most researchers agree that deep learning involves CAP depth higher than 2. CAP of depth 2 has been shown to be a universal approximator in the sense that it can emulate any function. Beyond that, more layers do not add to the function approximator ability of the network. Deep models (CAP > 2) are able to extract better features than shallow models and hence, extra layers help in learning the features effectively.
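As a small worked example of the CAP arithmetic for feedforward networks, the sketch below computes the depth as "hidden layers plus one" and applies the "CAP depth higher than 2" rule of thumb. The function names are hypothetical, and the threshold is the convention quoted in the text rather than a formal definition.

```python
def feedforward_cap_depth(hidden_layers: int) -> int:
    """CAP depth of a feedforward net: hidden layers plus the output layer."""
    if hidden_layers < 0:
        raise ValueError("hidden_layers must be non-negative")
    return hidden_layers + 1


def is_deep(cap_depth: int, threshold: int = 2) -> bool:
    """Treat a model as 'deep' when its CAP depth exceeds the threshold.

    The default of 2 follows the rule of thumb in the text; it is a
    convention, not a universally agreed definition.
    """
    return cap_depth > threshold


for hidden in (0, 1, 2, 5):
    depth = feedforward_cap_depth(hidden)
    print(hidden, depth, is_deep(depth))
```

Under this convention a network with a single hidden layer (CAP depth 2) counts as shallow, and one with two or more hidden layers counts as deep.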

Deep learning architectures can be constructed with a greedy layer-by-layer method. Deep learning helps to disentangle these abstractions and pick out which features improve performance.

For supervised learning tasks, deep learning methods eliminate feature engineering by translating the data into compact intermediate representations akin to principal components, and derive layered structures that remove redundancy in representation.

Deep learning algorithms can be applied to unsupervised learning tasks. This is an important benefit because unlabeled data are more abundant than labeled data. Examples of deep structures that can be trained in an unsupervised manner are deep belief networks.

Interpretations

Deep neural networks are generally interpreted in terms of the universal approximation theorem[Lu, Z., Pu, H., Wang, F., Hu, Z., & Wang, L. (2017). The Expressive Power of Neural Networks: A View from the Width. Neural Information Processing Systems, 6231-6239.] or probabilistic inference.

The classic universal approximation theorem concerns the capacity of feedforward neural networks with a single hidden layer of finite size to approximate continuous functions.

In 1989, the first proof was published by George Cybenko for sigmoid activation functions and was generalised to feed-forward multi-layer architectures in 1991 by Kurt Hornik.

Recent work showed that universal approximation also holds for unbounded activation functions such as the rectified linear unit.
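To make the single-hidden-layer statement concrete, the sketch below builds a one-hidden-layer ReLU network by hand so that it interpolates a one-dimensional continuous function at evenly spaced knots. This is an illustrative construction chosen for the example, not Cybenko's or Hornik's proof. The target function (`math.sin` on `[0, π]`) and the helper names `fit_one_hidden_layer` and `max_error` are assumptions.

```python
import math


def relu(z):
    return max(0.0, z)


def fit_one_hidden_layer(f, a, b, hidden_units):
    """Build a 1-hidden-layer ReLU net that interpolates f at evenly spaced knots.

    Hidden unit i computes relu(x - knot_i); its output weight is the change
    in slope of the piecewise-linear interpolant at that knot.
    """
    step = (b - a) / hidden_units
    knots = [a + i * step for i in range(hidden_units + 1)]
    values = [f(x) for x in knots]
    slopes = [(values[i + 1] - values[i]) / step for i in range(hidden_units)]
    out_weights = [slopes[0]] + [slopes[i] - slopes[i - 1]
                                 for i in range(1, hidden_units)]
    bias = values[0]

    def network(x):
        # zip stops at the last weight, so the final knot (x = b) is unused.
        return bias + sum(w * relu(x - k) for w, k in zip(out_weights, knots))

    return network


def max_error(f, g, a, b, samples=1000):
    """Largest absolute difference between f and g on a uniform grid."""
    grid = (a + (b - a) * i / samples for i in range(samples + 1))
    return max(abs(f(x) - g(x)) for x in grid)


for units in (4, 16, 64):
    net = fit_one_hidden_layer(math.sin, 0.0, math.pi, units)
    print(units, max_error(math.sin, net, 0.0, math.pi))
```

The approximation error shrinks as the hidden layer gets wider, which is the qualitative behaviour the theorem guarantees. The theorem itself is an existence result and does not prescribe this particular construction.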

The universal approximation theorem for deep neural networks concerns the capacity of networks with bounded width whose depth is allowed to grow. Lu et al. proved that if the width of a deep neural network with ReLU activation is strictly larger than the input dimension, then the network can approximate any Lebesgue integrable function; if the width is smaller than or equal to the input dimension, then a deep neural network is not a universal approximator.
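The ReLU activation and the width condition from the result above can be written down directly. This is a minimal sketch: `relu` implements f(x) = max(0, x), and `meets_width_condition` is a hypothetical helper that encodes only the condition as stated in the text (width strictly larger than the input dimension), not the precise bounds or the proof from the cited paper.

```python
def relu(x: float) -> float:
    """Rectified linear unit: the positive part of its argument."""
    return max(0.0, x)


def meets_width_condition(width: int, input_dim: int) -> bool:
    """True when the width is strictly larger than the input dimension.

    Encodes the condition as quoted in the text; networks at or below the
    input dimension are, per that statement, not universal approximators.
    """
    return width > input_dim


print(relu(-2.5), relu(3.0))
print(meets_width_condition(width=5, input_dim=4))
print(meets_width_condition(width=4, input_dim=4))
```

The check says nothing about how deep the network must be. In the depth-bounded-width setting the depth is the quantity that is allowed to grow.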

The probabilistic interpretation derives from the field of machine learning. It features inference, as well as the optimization concepts of training and testing, related to fitting and generalization, respectively. More specifically, the probabilistic interpretation considers the activation nonlinearity as a cumulative distribution function. It was popularized in surveys such as the one by Bishop.

History

Some sources point out that Frank Rosenblatt developed and explored all of the basic ingredients of the deep learning systems of today. The first general, working learning algorithm for supervised, deep, feedforward, multilayer perceptrons was published by Alexey Ivakhnenko and Lapa in 1967. A 1971 paper described a deep network with eight layers trained by the group method of data handling. Other deep learning working architectures, specifically those built for computer vision, began with the Neocognitron introduced by Kunihiko Fukushima in 1980.

The term ''deep learning'' was introduced to the machine learning community by Rina Dechter in 1986,[Rina Dechter (1986). Learning while searching in constraint-satisfaction problems. University of California, Computer Science Department, Cognitive Systems Laboratory.] and to artificial neural networks by Igor Aizenberg and colleagues in 2000.[Igor Aizenberg, Naum N. Aizenberg, Joos P.L. Vandewalle (2000). Multi-Valued and Universal Binary Neurons: Theory, Learning and Applications. Springer Science & Business Media.]

In 1989, Yann LeCun et al. applied the standard backpropagation algorithm, which had been around as the reverse mode of automatic differentiation since 1970,[Seppo Linnainmaa (1970). The representation of the cumulative rounding error of an algorithm as a Taylor expansion of the local rounding errors. Master's Thesis (in Finnish), Univ. Helsinki, 6-7.] to a deep neural network with the purpose of recognizing handwritten ZIP codes on mail.[LeCun ''et al.'', "Backpropagation Applied to Handwritten Zip Code Recognition," ''Neural Computation'', 1, pp. 541–551, 1989.]

Independently in 1988, Wei Zhang et al. applied the backpropagation algorithm to a convolutional neural network (a simplified Neocognitron that keeps only the convolutional interconnections between the image feature layers and the last fully connected layer) for alphabet recognition, and also proposed an implementation of the CNN with an optical computing system. In 1995, Brendan Frey demonstrated that it was possible to train (over two days) a network containing six fully connected layers and several hundred hidden units using the wake-sleep algorithm, co-developed with Peter Dayan and Hinton. Many factors contribute to the slow speed, including the vanishing gradient problem analyzed in 1991 by Sepp Hochreiter.[S. Hochreiter, "Untersuchungen zu dynamischen neuronalen Netzen," ''Diploma thesis. Institut f. Informatik, Technische Univ. Munich. Advisor: J. Schmidhuber'', 1991.]

Simpler models that use task-specific handcrafted features such as Gabor filters and support vector machines (SVMs) were a popular choice in the 1990s and 2000s, because of the computational cost of artificial neural networks (ANNs) and a lack of understanding of how the brain wires its biological networks.

Both shallow and deep learning (e.g., recurrent nets) of ANNs have been explored for many years. These methods never outperformed non-uniform internal-handcrafting Gaussian mixture model/Hidden Markov model (GMM-HMM) technology based on generative models of speech trained discriminatively. Most speech recognition researchers moved away from neural nets to pursue generative modeling. An exception was at SRI International in the late 1990s. Funded by the US government's NSA and DARPA, SRI studied deep neural networks in speech and speaker recognition. The speaker recognition team led by Larry Heck reported significant success with deep neural networks in speech processing in the 1998 National Institute of Standards and Technology Speaker Recognition evaluation. The raw features of speech, waveforms, later produced excellent larger-scale results.

Many aspects of speech recognition were taken over by a deep learning method called long short-term memory (LSTM), a recurrent neural network published by Hochreiter and Schmidhuber in 1997. LSTM was later combined with connectionist temporal classification (CTC).[Santiago Fernandez, Alex Graves, and Jürgen Schmidhuber (2007). An application of recurrent neural networks to discriminative keyword spotting. Proceedings of ICANN (2), pp. 220–229.] In 2015, Google's speech recognition reportedly experienced a dramatic performance jump of 49% through CTC-trained LSTM, which they made available through Google Voice Search.

In 2006, publications by Geoff Hinton, Ruslan Salakhutdinov, Osindero and Teh showed how a many-layered feedforward neural network could be effectively pre-trained one layer at a time, treating each layer in turn as an unsupervised restricted Boltzmann machine, then fine-tuning it using supervised backpropagation.[G. E. Hinton, "Learning multiple layers of representation," ''Trends in Cognitive Sciences'', 11, pp. 428–434, 2007.] The papers referred to ''learning'' for ''deep belief nets.''

Deep learning is part of state-of-the-art systems in various disciplines, particularly computer vision and automatic speech recognition (ASR). Results on commonly used evaluation sets such as TIMIT (ASR) and MNIST (image classification), as well as a range of large-vocabulary speech recognition tasks, have steadily improved. Convolutional neural networks (CNNs) were superseded for ASR by CTC-trained LSTM.

The impact of deep learning in industry began in the early 2000s, when CNNs already processed an estimated 10% to 20% of all the checks written in the US, according to Yann LeCun.[Yann LeCun (2016). Slides on Deep Learning.] It was later discovered that replacing pre-training with large amounts of training data for straightforward backpropagation when using DNNs with large, context-dependent output layers produced error rates dramatically lower than then-state-of-the-art Gaussian mixture model (GMM)/Hidden Markov Model (HMM) and also than more-advanced generative model-based systems.

In 2009, Nvidia was involved in what was called the "big bang" of deep learning, "as deep-learning neural networks were trained with Nvidia graphics processing units (GPUs)." That year, Andrew Ng determined that GPUs could increase the speed of deep-learning systems by about 100 times. In particular, GPUs are well-suited for the matrix/vector computations involved in machine learning.

Deep learning revolution

In 2012, a team led by George E. Dahl won the "Merck Molecular Activity Challenge" using multi-task deep neural networks to predict the biomolecular target of one drug.

... NIH, FDA and NCATS.["Toxicology in the 21st century Data Challenge"]

... backpropagation had been around for decades, and GPU implementations of NNs for years, including CNNs, fast implementations of CNNs on GPUs were needed to progress on computer vision.

Some researchers state that the October 2012 ImageNet victory anchored the start of a "deep learning revolution" that has transformed the AI industry.

In March 2019, Yoshua Bengio, Geoffrey Hinton and Yann LeCun were awarded the Turing Award for conceptual and engineering breakthroughs that have made deep neural networks a critical component of computing.

Neural networks

Artificial neural networks

Artificial neural networks (ANNs) or connectionist systems are computing systems inspired by the biological neural networks that constitute animal brains. Such systems learn (progressively improve their ability) to do tasks by considering examples, generally without task-specific programming. For example, in image recognition, they might learn to identify images that contain cats by analyzing example images that have been manually labeled as "cat" or "no cat" and using the analytic results to identify cats in other images. They have found most use in applications difficult to express with a traditional computer algorithm using rule-based programming.

An ANN is based on a collection of connected units called artificial neurons (analogous to biological neurons in a biological brain). Each connection (synapse) between neurons can transmit a signal to another neuron. The receiving (postsynaptic) neuron can process the signal(s) and then signal downstream neurons connected to it. Neurons may have state, generally represented by real numbers, typically between 0 and 1. Neurons and synapses may also have a weight that varies as learning proceeds, which can increase or decrease the strength of the signal that is sent downstream.
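
The behaviour of a single unit described above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the function name `neuron_output`, the choice of a logistic (sigmoid) activation to keep the state between 0 and 1, and the example weights are all assumptions made for the sketch.

```python
import math

def neuron_output(inputs, weights, bias=0.0):
    """Weighted sum of incoming signals, squashed into (0, 1) by a sigmoid.

    The sigmoid is one common activation choice (an assumption here); the
    text only says the state is typically a real number between 0 and 1.
    """
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1.0 / (1.0 + math.exp(-total))

# Hypothetical example: two upstream neurons, one positive and one negative weight.
signal = neuron_output([1.0, 0.5], [0.8, -0.4])
```

Changing a weight changes how strongly the corresponding upstream signal contributes to `signal`, which is the quantity that learning adjusts.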

Typically, neurons are organized in layers. Different layers may perform different kinds of transformations on their inputs. Signals travel from the first (input) layer to the last (output) layer, possibly after traversing the layers multiple times.

The original goal of the neural network approach was to solve problems in the same way that a human brain would. Over time, attention focused on matching specific mental abilities, leading to deviations from biology such as backpropagation, or passing information in the reverse direction and adjusting the network to reflect that information.

Neural networks have been used on a variety of tasks, including computer vision, speech recognition, machine translation, social network filtering, playing board and video games and medical diagnosis.

As of 2017, neural networks typically have a few thousand to a few million units and millions of connections. Despite this number being several orders of magnitude less than the number of neurons in a human brain, these networks can perform many tasks at a level beyond that of humans (e.g., recognizing faces, or playing "Go").

Deep neural networks

A deep neural network (DNN) is an artificial neural network (ANN) with multiple layers between the input and output layers. ... multivariate polynomials are exponentially easier to approximate with DNNs than with shallow networks.

Deep architectures include many variants of a few basic approaches. Each architecture has found success in specific domains. It is not always possible to compare the performance of multiple architectures, unless they have been evaluated on the same data sets.

DNNs are typically feedforward networks in which data flows from the input layer to the output layer without looping back. At first, the DNN creates a map of virtual neurons and assigns random numerical values, or "weights", to connections between them. The weights and inputs are multiplied and return an output between 0 and 1. If the network did not accurately recognize a particular pattern, an algorithm would adjust the weights. That way the algorithm can make certain parameters more influential, until it determines the correct mathematical manipulation to fully process the data.
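
The process described above can be made concrete with a toy feedforward network. The sketch below rests on several assumptions: sigmoid units, a squared-error loss, plain gradient descent (backpropagation) as the weight-adjusting algorithm, and hypothetical helper names (`init_layer`, `forward`, `train_step`). It is meant only to show random initial weights, outputs between 0 and 1, and weights being adjusted when the output is wrong.

```python
import math
import random

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def init_layer(n_in, n_out, rng):
    # Random starting weights, one row per unit; the last entry of each row is a bias.
    return [[rng.uniform(-1.0, 1.0) for _ in range(n_in + 1)] for _ in range(n_out)]

def forward(network, inputs):
    # Returns the activations of every layer, starting with the inputs themselves.
    activations = [inputs]
    for layer in network:
        prev = activations[-1]
        activations.append(
            [sigmoid(sum(w * x for w, x in zip(row, prev)) + row[-1]) for row in layer]
        )
    return activations

def train_step(network, inputs, target, lr=0.5):
    """One gradient-descent update on squared error for a single example."""
    acts = forward(network, inputs)
    # Error signal at the output layer.
    deltas = [(a - t) * a * (1.0 - a) for a, t in zip(acts[-1], target)]
    for i in reversed(range(len(network))):
        layer, layer_in = network[i], acts[i]
        # Error signal for the layer below, computed before this layer's weights change.
        below = []
        if i > 0:
            below = [sum(d * row[j] for d, row in zip(deltas, layer)) * x * (1.0 - x)
                     for j, x in enumerate(layer_in)]
        for d, row in zip(deltas, layer):
            for j, x in enumerate(layer_in):
                row[j] -= lr * d * x
            row[-1] -= lr * d
        deltas = below

rng = random.Random(0)
net = [init_layer(2, 3, rng), init_layer(3, 1, rng)]   # 2 inputs, 3 hidden units, 1 output
before = forward(net, [1.0, 0.0])[-1][0]
for _ in range(200):
    train_step(net, [1.0, 0.0], [1.0])
after = forward(net, [1.0, 0.0])[-1][0]
```

After the updates, `after` lies closer to the target value 1.0 than `before` does, while both remain strictly between 0 and 1.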

Recurrent neural networks (RNNs), in which data can flow in any direction, are used for applications such as language modeling.

Challenges

As with ANNs, many issues can arise with naively trained DNNs. Two common issues are overfitting and computation time.

DNNs are prone to overfitting because of the added layers of abstraction, which allow them to model rare dependencies in the training data. Regularization methods such as Ivakhnenko's unit pruning or sparsity (ℓ1-regularization) can be applied during training to combat overfitting. Alternatively, dropout regularization randomly omits units from the hidden layers during training. This helps to exclude rare dependencies. ... learning rate, and initial weights. Sweeping through the parameter space for optimal parameters may not be feasible due to the cost in time and computational resources. Various tricks, such as batching (computing the gradient on several training examples at once rather than individual examples) ...[Ting Qin, et al. "A learning algorithm of CMAC based on RLS." Neural Processing Letters 19.1 (2004): 49-61.][Ting Qin, et al. "Continuous CMAC-QRLS and its systolic array." Neural Processing Letters 22.1 (2005): 1-16.]
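
Two of the techniques mentioned above, dropout and batching, can be sketched as follows. These are simplified illustrations under stated assumptions: the helper names (`dropout`, `batch_gradient`) are hypothetical, the dropout shown is the common "inverted" variant that rescales surviving units, and the gradient is for a one-parameter linear model with squared error rather than a full network.

```python
import random

def dropout(activations, drop_prob, rng):
    """Randomly zero hidden-unit activations during training.

    Surviving units are scaled by 1 / (1 - drop_prob) so that the expected
    activation is unchanged (the "inverted dropout" convention, an
    assumption of this sketch).
    """
    keep = 1.0 - drop_prob
    return [a / keep if rng.random() < keep else 0.0 for a in activations]

def batch_gradient(w, batch):
    """Mean gradient of 0.5 * (w * x - y) ** 2 over a batch of (x, y) pairs."""
    return sum((w * x - y) * x for x, y in batch) / len(batch)

rng = random.Random(0)
hidden = [0.2, 0.9, 0.5, 0.7]
dropped = dropout(hidden, drop_prob=0.5, rng=rng)

# One mini-batch update for the toy model y = w * x (the data follow w = 2).
data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]
w = 0.0
w -= 0.1 * batch_gradient(w, data)
```

Averaging the gradient over the batch gives one update per batch instead of one per example, which is where the time saving comes from.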

Hardware

Since the 2010s, advances in both machine learning algorithms and computer hardware have led to more efficient methods for training deep neural networks that contain many layers of non-linear hidden units and a very large output layer. By 2019, graphics processing units (GPUs), often with AI-specific enhancements, had displaced CPUs as the dominant method of training large-scale commercial cloud AI. OpenAI estimated the hardware computation used in the largest deep learning projects from AlexNet (2012) to AlphaZero (2017), and found a 300,000-fold increase in the amount of computation required, with a doubling-time trendline of 3.4 months.
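
As a rough consistency check on these figures, the number of doublings implied by a 300,000-fold increase can be multiplied by the 3.4-month doubling time. The variable names below are illustrative, and the calculation assumes steady exponential growth over the whole period.

```python
import math

growth_factor = 300_000      # reported increase in compute, AlexNet to AlphaZero
doubling_time_months = 3.4   # reported trendline doubling time

doublings = math.log2(growth_factor)                # about 18.2 doublings
implied_months = doublings * doubling_time_months   # about 62 months, roughly 5 years
```

The result of roughly five years agrees with the 2012 to 2017 span quoted above.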

Special electronic circuits called deep learning processors were designed to speed up deep learning algorithms. Deep learning processors include neural processing units (NPUs) in Huawei cellphones and cloud computing servers such as tensor processing units (TPUs) in the Google Cloud Platform. Cerebras Systems has also built a dedicated system to handle large deep learning models, the CS-2, based on the largest processor in the industry, the second-generation Wafer Scale Engine (WSE-2).

Atomically thin semiconductors are considered promising for energy-efficient deep learning hardware where the same basic device structure is used for both logic operations and data storage.

In 2020, Marega et al. published experiments with a large-area active channel material for developing logic-in-memory devices and circuits based on floating-gate field-effect transistors (FGFETs).

... photonic hardware accelerator for parallel convolutional processing. ... wavelength division multiplexing in conjunction with frequency combs, and (2) extremely high data modulation speeds. ... photonics in data-heavy AI applications.

Applications

Automatic speech recognition

Large-scale automatic speech recognition is the first and most convincing successful case of deep learning. LSTM RNNs can learn "Very Deep Learning" tasks ... dialects of American English, where each speaker reads 10 sentences.[''TIMIT Acoustic-Phonetic Continuous Speech Corpus'' Linguistic Data Consortium, Philadelphia.] Its small size lets many configurations be tried. More importantly, the TIMIT task concerns phone-sequence recognition, which, unlike word-sequence recognition, allows weak phone bigram language models. This lets the strength of the acoustic modeling aspects of speech recognition be more easily analyzed. The error rates listed below, including these early results and measured as percent phone error rates (PER), have been summarized since 1991.
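
Phone error rate is conventionally computed in the same way as word error rate: the edit distance between the recognized and reference phone sequences, divided by the reference length. The sketch below assumes that definition; the function names and the example phone strings are illustrative and are not taken from TIMIT.

```python
def edit_distance(ref, hyp):
    """Levenshtein distance: minimum substitutions, deletions and insertions."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            curr.append(min(prev[j] + 1,              # deletion
                            curr[j - 1] + 1,          # insertion
                            prev[j - 1] + (r != h)))  # substitution or match
        prev = curr
    return prev[-1]

def phone_error_rate(ref, hyp):
    """PER in percent: edit distance divided by reference length."""
    return 100.0 * edit_distance(ref, hyp) / len(ref)

reference = ["sh", "iy", "hh", "ae", "d"]
hypothesis = ["sh", "iy", "ae", "t"]
per = phone_error_rate(reference, hypothesis)  # one deletion + one substitution -> 40.0
```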

The debut of DNNs for speaker recognition in the late 1990s and speech recognition around 2009–2011, and of LSTM around 2003–2007, accelerated progress in eight major areas:

* ... transfer learning by DNNs and related deep models

* CNNs and how to design them to best exploit domain knowledge of speech

* RNN and its rich LSTM variants

* Other types of deep models including tensor-based models and integrated deep generative/discriminative models.

All major commercial speech recognition systems (e.g., Microsoft Cortana, Xbox, Skype Translator, Amazon Alexa, Google Now, Apple Siri, Baidu and iFlyTek voice search, and a range of Nuance speech products, etc.) are based on deep learning.

Image recognition

A common evaluation set for image classification is the MNIST database. MNIST is composed of handwritten digits and includes 60,000 training examples and 10,000 test examples. As with TIMIT, its small size lets users test multiple configurations. A comprehensive list of results on this set is available.

Visual art processing

Closely related to the progress that has been made in image recognition is the increasing application of deep learning techniques to various visual art tasks. DNNs have proven themselves capable, for example, of

* identifying the style period of a given painting

* Neural Style Transfer: capturing the style of a given artwork and applying it in a visually pleasing manner to an arbitrary photograph or video

* generating striking imagery based on random visual input fields.

Natural language processing

Neural networks have been used for implementing language models since the early 2000s. ... word embedding. Word embedding, such as ''word2vec'', can be thought of as a representational layer in a deep learning architecture that transforms an atomic word into a positional representation of the word relative to other words in the dataset; the position is represented as a point in a vector space. Using word embedding as an RNN input layer allows the network to parse sentences and phrases using an effective compositional vector grammar. A compositional vector grammar can be thought of as a probabilistic context-free grammar (PCFG) implemented by an RNN. ... sentiment analysis, information retrieval, spoken language understanding, ... word embedding to sentence embedding.
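
The idea that an embedding places each word at a point in a vector space, with related words lying closer together, can be illustrated with cosine similarity. The three-dimensional vectors below are made-up values for illustration only; real word2vec embeddings are learned from a corpus and usually have far more dimensions.

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

# Hypothetical toy embeddings (not learned from data).
embedding = {
    "cat": [0.9, 0.1, 0.0],
    "dog": [0.8, 0.2, 0.1],
    "car": [0.0, 0.1, 0.9],
}

sim_cat_dog = cosine_similarity(embedding["cat"], embedding["dog"])
sim_cat_car = cosine_similarity(embedding["cat"], embedding["car"])
```

With these toy values, "cat" is much closer to "dog" than to "car", which is the kind of relative position a learned embedding is meant to capture.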

Google Translate (GT) uses a large end-to-end long short-term memory (LSTM) network. ... example-based machine translation method in which the system "learns from millions of examples."

Drug discovery and toxicology

A large percentage of candidate drugs fail to win regulatory approval. These failures are caused by insufficient efficacy (on-target effect), undesired interactions (off-target effects), or unanticipated toxic effects. ... biomolecular targets, ... rational drug design. AtomNet was used to predict novel candidate biomolecules for disease targets such as the Ebola virus and multiple sclerosis.

In 2017, graph neural networks were used for the first time to predict various properties of molecules in a large toxicology data set. In 2019, generative neural networks were used to produce molecules that were validated experimentally all the way into mice.

Customer relationship management

Deep reinforcement learning has been used to approximate the value of possible direct marketing actions, defined in terms of RFM variables. The estimated value function was shown to have a natural interpretation as customer lifetime value.

Recommendation systems

Recommendation systems have used deep learning to extract meaningful features for a latent factor model for content-based music and journal recommendations. Multi-view deep learning has been applied for learning user preferences from multiple domains. The model uses a hybrid collaborative and content-based approach and enhances recommendations in multiple tasks.

Bioinformatics

An autoencoder ANN was used in bioinformatics to predict gene ontology annotations and gene-function relationships.

In medical informatics, deep learning was used to predict sleep quality based on data from wearables and to predict health complications from electronic health record data.

Medical image analysis

Deep learning has been shown to produce competitive results in medical applications such as cancer cell classification, lesion detection, organ segmentation and image enhancement. Modern deep learning tools detect various diseases with high accuracy and can help specialists improve diagnostic efficiency.

Mobile advertising

Finding the appropriate mobile audience for mobile advertising is always challenging, since many data points must be considered and analyzed before a target segment can be created and used in ad serving by any ad server. Deep learning has been used to interpret large, many-dimensioned advertising datasets. Many data points are collected during the request/serve/click internet advertising cycle. This information can form the basis of machine learning to improve ad selection.

Image restoration

Deep learning has been successfully applied to inverse problems such as denoising, super-resolution, inpainting, and film colorization. These applications include learning methods such as "Shrinkage Fields for Effective Image Restoration", which trains on an image dataset, and Deep Image Prior, which trains on the image that needs restoration.

Financial fraud detection

Deep learning is being successfully applied to financial fraud detection, tax evasion detection, and anti-money laundering. A potentially impressive demonstration of unsupervised learning as prosecution of financial crime is required to produce training data.

Also of note is that while the state-of-the-art model in automated financial crime detection has existed for quite some time, the applications for deep learning referred to here dramatically underperform much simpler theoretical models. One such yet-to-be-implemented model is the Sensor Location Heuristic and Simple Any Human Detection for Financial Crimes (SLHSAHDFC).

The model works with the simple heuristic of choosing where it gets its input data. By placing the sensors by places frequented by large concentrations of wealth and power and then simply identifying any live human being, it turns out that the automated detection of financial crime is accomplished at very high accuracies and very high confidence levels. Even better, the model has shown to be extremely effective at identifying not just crime but large, very destructive and egregious crime. Due to the effectiveness of such models it is highly likely that applications to financial crime detection by deep learning will never be able to compete.

Military

The United States Department of Defense applied deep learning to train robots in new tasks through observation.

Partial differential equations

Physics-informed neural networks have been used to solve partial differential equations in both forward and inverse problems in a data-driven manner. One example is the reconstruction of fluid flow governed by the Navier-Stokes equations. Physics-informed neural networks do not require the often expensive mesh generation on which conventional CFD methods rely.
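The idea of training against the governing equation at mesh-free sample points can be sketched in a few lines of Python. The sketch below is a simplification under stated assumptions, not a Navier-Stokes solver: the toy equation u'(x) + u(x) = 0 with u(0) = 1 stands in for a PDE, a cubic polynomial stands in for the neural network, and the names `residual`, `physics_loss`, and `train` are hypothetical. What it keeps from the physics-informed approach is the loss, which is the mean squared equation residual at collocation points.

```python
import math

# Collocation points: plain samples of the domain [0, 1]; no mesh connectivity is needed.
POINTS = [i / 20 for i in range(21)]


def u(x, a):
    """Surrogate solution; the leading 1 enforces the condition u(0) = 1 exactly."""
    return 1.0 + a[0] * x + a[1] * x**2 + a[2] * x**3


def du_dx(x, a):
    """Analytic derivative of the surrogate (a neural network would use autodiff)."""
    return a[0] + 2.0 * a[1] * x + 3.0 * a[2] * x**2


def residual(x, a):
    """How badly the surrogate violates the toy equation u' + u = 0 at x."""
    return du_dx(x, a) + u(x, a)


def physics_loss(a):
    """Mean squared equation residual over the collocation points."""
    return sum(residual(x, a) ** 2 for x in POINTS) / len(POINTS)


def train(steps=10000, lr=0.05):
    """Plain gradient descent on the physics loss, starting from zero coefficients."""
    a = [0.0, 0.0, 0.0]
    for _ in range(steps):
        grad = [0.0, 0.0, 0.0]
        for x in POINTS:
            r = residual(x, a)
            # Derivative of the residual with respect to each coefficient.
            basis = (1.0 + x, 2.0 * x + x**2, 3.0 * x**2 + x**3)
            for k in range(3):
                grad[k] += 2.0 * r * basis[k] / len(POINTS)
        a = [a[k] - lr * grad[k] for k in range(3)]
    return a


initial_loss = physics_loss([0.0, 0.0, 0.0])
coeffs = train()
final_loss = physics_loss(coeffs)
```

No solution data is used during training; the equation itself supplies the training signal. The fitted `u(1.0, coeffs)` can be compared with the exact value `math.exp(-1.0)` as a sanity check.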

Image reconstruction

Image reconstruction is the recovery of underlying images from image-related measurements. Several works have shown that deep learning methods outperform analytical methods in various applications, e.g., spectral imaging and ultrasound imaging.

Epigenetic clock

For more information, see Epigenetic clock.

An epigenetic clock is a biochemical test that can be used to measure age. Galkin et al. used deep neural networks to train an epigenetic aging clock of unprecedented accuracy using more than 6,000 blood samples. The clock uses information from 1000 CpG sites and predicts people with certain conditions (IBD, frontotemporal dementia, ovarian cancer, obesity) to be older than healthy controls. The aging clock is planned to be released for public use in 2021 by Deep Longevity, an Insilico Medicine spinoff company.

Relation to human cognitive and brain development

Deep learning is closely related to a class of theories of brain development (specifically, neocortical development) proposed by cognitive neuroscientists in the early 1990s. The developmental models in these theories support a form of self-organization somewhat analogous to the neural networks utilized in deep learning models. Like the neocortex, neural networks employ a hierarchy of layered filters in which each layer considers information from a prior layer (or the operating environment), and then passes its output (and possibly the original input) to other layers. This process yields a self-organizing stack of transducers, well-tuned to their operating environment. A 1995 description stated, "...the infant's brain seems to organize itself under the influence of waves of so-called trophic-factors ... different regions of the brain become connected sequentially, with one layer of tissue maturing before another and so on until the whole brain is mature."[S. Blakeslee, "In brain's early growth, timetable may be critical," The New York Times, Science Section, pp. B5–B6, 1995.]
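The layered arrangement described above, in which each layer reads the previous layer's output and may also receive the original input, can be illustrated with a small Python sketch. The three filter functions and the `run_stack` helper are hypothetical and chosen only to show the data flow; they are not a model of the neocortex or of any particular network.

```python
def smooth(previous, original):
    """First filter: average each value with its right-hand neighbour."""
    return [(a + b) / 2 for a, b in zip(previous, previous[1:])]


def edges(previous, original):
    """Second filter: difference between neighbouring values."""
    return [b - a for a, b in zip(previous, previous[1:])]


def rescale(previous, original):
    """Third filter: uses the original input, passed along unchanged, to normalise."""
    peak = max(abs(v) for v in original) or 1.0
    return [v / peak for v in previous]


def run_stack(layers, original):
    """Feed each layer the previous layer's output together with the original input."""
    current = original  # the first layer reads the operating environment directly
    trace = []
    for layer in layers:
        current = layer(current, original)
        trace.append(current)
    return trace


raw = [0.0, 0.0, 4.0, 4.0]
trace = run_stack([smooth, edges, rescale], raw)
```

Here `trace` holds the output of every layer in order, so the step-by-step transformation of `raw` can be inspected.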

A variety of approaches have been used to investigate the plausibility of deep learning models from a neurobiological perspective. On the one hand, several variants of the backpropagation algorithm have been proposed in order to increase its processing realism. Other researchers have argued that unsupervised forms of deep learning, such as those based on hierarchical generative models and deep belief networks, may be closer to biological reality. In this respect, generative neural network models have been related to neurobiological evidence about sampling-based processing in the cerebral cortex.

Although a systematic comparison between the human brain organization and the neuronal encoding in deep networks has not yet been established, several analogies have been reported. For example, the computations performed by deep learning units could be similar to those of actual neurons and neural populations. Similarly, the representations developed by deep learning models are similar to those measured in the primate visual system both at the single-unit and at the population levels.

Commercial activity

Facebook's AI lab performs tasks such as automatically tagging uploaded pictures with the names of the people in them.

DeepMind Technologies, a subsidiary of Google, developed the AlphaGo system, which learned the game of Go well enough to beat a professional Go player. Google Translate uses a neural network to translate between more than 100 languages.

In 2017, Covariant.ai was launched, which focuses on integrating deep learning into factories.

As of 2008, researchers at The University of Texas at Austin (UT) developed a machine learning framework called Training an Agent Manually via Evaluative Reinforcement, or TAMER, which proposed new methods for robots or computer programs to learn how to perform tasks by interacting with a human instructor.
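Learning from a human instructor's evaluative feedback can be illustrated with a toy loop in Python. The sketch is only in the spirit of the description above and is not the published TAMER algorithm: the running-average update, the greedy action choice, the action names, and the scripted `human_feedback` function standing in for a person are all assumptions made for illustration.

```python
ACTIONS = ["left", "right", "wait"]


def human_feedback(action):
    """Hypothetical stand-in for a human instructor who approves only of 'right'."""
    return 1.0 if action == "right" else -1.0


def train(rounds=10):
    """Learn a per-action estimate of the instructor's feedback and act greedily on it."""
    estimate = {a: 0.0 for a in ACTIONS}
    counts = {a: 0 for a in ACTIONS}
    history = []
    for _ in range(rounds):
        # Pick the action with the highest predicted feedback (first one wins ties).
        action = max(ACTIONS, key=lambda a: estimate[a])
        signal = human_feedback(action)
        counts[action] += 1
        # Running average of the feedback received for this action.
        estimate[action] += (signal - estimate[action]) / counts[action]
        history.append(action)
    return estimate, history


estimate, history = train()
```

After one disapproved attempt, the agent in this toy setting settles on the action the instructor rewards.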

Criticism and comment

Deep learning has attracted both criticism and comment, in some cases from outside the field of computer science.

Theory

A main criticism concerns the lack of theory surrounding some methods. Learning in the most common deep architectures is implemented using well-understood gradient descent. However, the theory surrounding other algorithms, such as contrastive divergence, is less clear (e.g., does it converge? If so, how fast? What is it approximating?). Deep learning methods are often looked at as a black box, with most confirmations done empirically rather than theoretically.

"Realistically, deep learning is only part of the larger challenge of building intelligent machines. Such techniques lack ways of representing causal relationships (...) have no obvious ways of performing logical inferences, and they are also still a long way from integrating abstract knowledge, such as information about what objects are, what they are for, and how they are typically used. The most powerful A.I. systems, like Watson (...) use techniques like deep learning as just one element in a very complicated ensemble of techniques, ranging from the statistical technique of Bayesian inference to deductive reasoning."

In further reference to the idea that artistic sensitivity might be inherent in relatively low levels of the cognitive hierarchy, a published series of graphic representations of the internal states of deep (20-30 layers) neural networks attempting to discern within essentially random data the images on which they were trained demonstrates a visual appeal: the original research notice received well over 1,000 comments, and was the subject of what was for a time the most frequently accessed article on The Guardian's website.

Errors

Some deep learning architectures display problematic behaviors, [...] artificial general intelligence (AGI) architectures. [...] commonsense reasoning that operates on concepts in terms of grammatical production rules and is a basic goal of both human language acquisition and artificial intelligence (AI).

Cyber threat

As deep learning moves from the lab into the world, research and experience show that artificial neural networks are vulnerable to hacks and deception. By identifying patterns that these systems use to function, attackers can modify inputs to ANNs in such a way that the ANN finds a match that human observers would not recognize. For example, an attacker can make subtle changes to an image such that the ANN finds a match even though the image looks to a human nothing like the search target. Such manipulation is termed an “adversarial attack.”
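A minimal Python sketch of such an input modification follows. It assumes a hypothetical four-feature logistic classifier with made-up weights and uses a fast-gradient-sign-style step, which is one well-known way to build adversarial inputs; the text above does not say which method any particular attacker used. Every feature moves by at most `eps`, yet the predicted class flips.

```python
import math

# Hypothetical, hand-picked model: a logistic classifier over four input features.
WEIGHTS = [0.5, -0.3, 0.8, -0.6]
BIAS = 0.0


def predict_proba(x):
    """Probability that x belongs to class 1."""
    score = sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS
    return 1.0 / (1.0 + math.exp(-score))


def predict(x):
    return 1 if predict_proba(x) >= 0.5 else 0


def sign(v):
    return (v > 0) - (v < 0)


def adversarial_example(x, true_label, eps):
    """Move each feature by eps in the direction that increases the loss."""
    p = predict_proba(x)
    # Gradient of the cross-entropy loss with respect to the input is (p - y) * w.
    grad = [(p - true_label) * w for w in WEIGHTS]
    return [xi + eps * sign(g) for xi, g in zip(x, grad)]


x = [0.6, 0.2, 0.5, 0.4]
x_adv = adversarial_example(x, true_label=1, eps=0.25)
original_class = predict(x)
adversarial_class = predict(x_adv)
```

With only four features the step has to be fairly large to flip the decision. In high-dimensional inputs such as images, many small per-pixel changes add up, so a much smaller `eps` can often have the same effect.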

In 2016 researchers used one ANN to doctor images in trial-and-error fashion, identify another's focal points, and thereby generate images that deceived it. The modified images looked no different to human eyes. Another group showed that printouts of doctored images, when photographed, successfully tricked an image classification system. [...] facial recognition system into thinking ordinary people were celebrities, potentially allowing one person to impersonate another. In 2017 researchers added stickers to stop signs and caused an ANN to misclassify them. [...] Google Now voice command system open a particular web address, and hypothesized that this could "serve as a stepping stone for further attacks (e.g., opening a web page hosting drive-by malware)."

Reliance on human microwork

Most deep learning systems rely on training and verification data that is generated and/or annotated by humans. It has been argued in media philosophy that not only is low-paid clickwork (e.g., on Amazon Mechanical Turk) regularly deployed for this purpose, but so are implicit forms of human microwork that are often not recognized as such. [...] gamification
