
Backpropagation

In machine learning, backpropagation is a gradient computation method commonly used when training a neural network to compute its parameter updates. It is an efficient application of the chain rule to neural networks. Backpropagation computes the gradient of a loss function with respect to the weights of the network for a single input–output example. It does so efficiently by computing the gradient one layer at a time, iterating backward from the last layer to avoid redundant calculations of intermediate terms in the chain rule; this can be derived through dynamic programming. Strictly speaking, the term *backpropagation* refers only to an algorithm for efficiently computing the gradient, not to how the gradient is used. The term is often used loosely, however, to refer to the entire learning algorithm, including how the gradient is used, such as by stochastic gradient descent or as an intermediate step in a more complicated optimizer such as Adaptive Moment Estimation. The ...
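The layer-by-layer backward pass described above can be illustrated with a minimal sketch. The tiny scalar network (`x -> tanh(w1*x) -> w2*h`), the squared-error loss, and the function names `forward`, `loss`, and `backprop` are all assumptions chosen for illustration; they are not taken from the article.

```python
import math

def forward(w1, w2, x):
    """Forward pass of a tiny two-layer scalar network (illustrative only)."""
    h = math.tanh(w1 * x)   # hidden activation
    y = w2 * h              # network output
    return h, y

def loss(w1, w2, x, t):
    """Squared-error loss for a single input-output example (x, t)."""
    _, y = forward(w1, w2, x)
    return 0.5 * (y - t) ** 2

def backprop(w1, w2, x, t):
    """Gradient of the loss w.r.t. (w1, w2), computed from the last layer backward."""
    h, y = forward(w1, w2, x)
    dy = y - t                # dL/dy
    dw2 = dy * h              # dL/dw2 (last layer)
    dh = dy * w2              # dL/dh, reused by the earlier layer
    dz = dh * (1.0 - h * h)   # through tanh: d tanh(z)/dz = 1 - tanh(z)^2
    dw1 = dz * x              # dL/dw1 (first layer)
    return dw1, dw2
```

Each intermediate term (`dy`, `dh`, `dz`) is computed once and reused, which is the redundancy that the backward iteration avoids. A finite-difference comparison against `loss` is a common way to sanity-check such a hand-written gradient.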

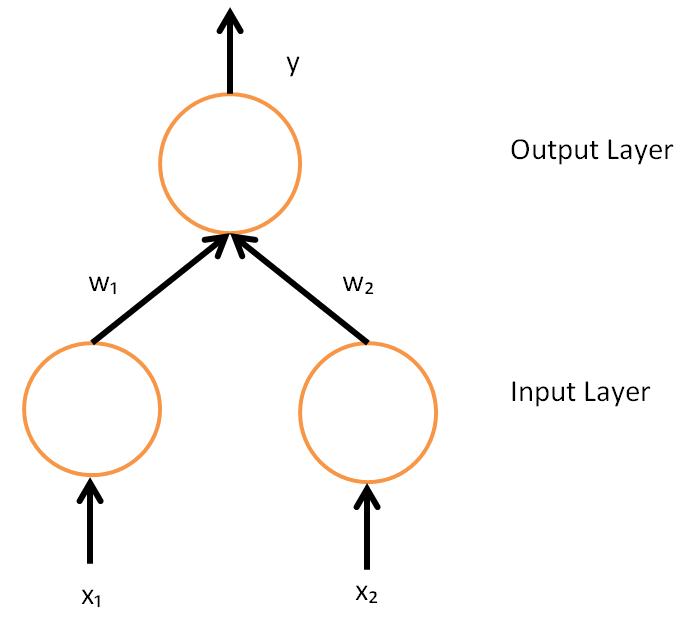

Neural Network (Machine Learning)

In machine learning, a neural network (also artificial neural network or neural net, abbreviated ANN or NN) is a computational model inspired by the structure and functions of biological neural networks. A neural network consists of connected units or nodes called *artificial neurons*, which loosely model the neurons in the brain. These are connected by *edges*, which model the synapses in the brain. Artificial neuron models that mimic biological neurons more closely have also been investigated recently and shown to significantly improve performance. Each artificial neuron receives signals from connected neurons, then processes them and sends a signal to other connected neurons. The "signal" is a real number, and the output of each neuron is computed by some non-linear function of the sum of its inputs, called the *activation function*. The strength of the signal at each connection is determined by a *weight*, which adjusts during the learning process. Typically, neuron ...

Softmax Function

The softmax function, also known as softargmax or normalized exponential function, converts a tuple of real numbers into a probability distribution over possible outcomes. It is a generalization of the logistic function to multiple dimensions, and is used in multinomial logistic regression. The softmax function is often used as the last activation function of a neural network to normalize the output of the network to a probability distribution over predicted output classes. Definition: The softmax function takes as input a tuple of real numbers, and normalizes it into a probability distribution consisting of probabilities proportional to the exponentials of the input numbers. That is, prior to applying softmax, some tuple components could be negative or greater than one, and they might not sum to 1; after applying softmax, each component will be in the interval (0, 1), and the components will add up to 1, so that they can be interpreted as probabilities. Furthermore, the la ...
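As a minimal sketch of the definition above, the following computes probabilities proportional to the exponentials of the inputs. The function name `softmax` and the max-subtraction step (a common numerical-stability trick that does not change the result) are choices made for this example, not details from the excerpt.

```python
import math

def softmax(z):
    """Map a sequence of real numbers to probabilities proportional to exp(z_i)."""
    m = max(z)                            # shift by the max to avoid overflow in exp
    exps = [math.exp(v - m) for v in z]   # exponentials of the shifted inputs
    total = sum(exps)
    return [e / total for e in exps]      # normalize so the components sum to 1

# Inputs may be negative or greater than one; outputs lie in (0, 1) and sum to 1.
probs = softmax([-1.0, 0.0, 3.0])
```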

Stochastic Gradient Descent

Stochastic gradient descent (often abbreviated SGD) is an iterative method for optimizing an objective function with suitable smoothness properties (e.g. differentiable or subdifferentiable). It can be regarded as a stochastic approximation of gradient descent optimization, since it replaces the actual gradient (calculated from the entire data set) by an estimate thereof (calculated from a randomly selected subset of the data). Especially in high-dimensional optimization problems, this reduces the very high computational burden, achieving faster iterations in exchange for a lower convergence rate. The basic idea behind stochastic approximation can be traced back to the Robbins–Monro algorithm of the 1950s. Today, stochastic gradient descent has become an important optimization method in machine learning. Background: Both statistical estimation and ma ...
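The idea of replacing the full-data gradient with an estimate from a random subset can be sketched as follows. The one-parameter least-squares problem, the synthetic data, the function name `sgd_fit_slope`, and the hyperparameter values are all assumptions made for illustration.

```python
import random

def sgd_fit_slope(xs, ys, lr=0.1, steps=500, batch_size=10, seed=0):
    """Fit y ~ w * x by SGD on the mean squared error (illustrative sketch)."""
    rng = random.Random(seed)
    indices = list(range(len(xs)))
    w = 0.0
    for _ in range(steps):
        batch = rng.sample(indices, batch_size)   # randomly selected subset
        # Gradient estimate of mean(0.5 * (w*x - y)^2) from the batch only.
        grad = sum((w * xs[i] - ys[i]) * xs[i] for i in batch) / batch_size
        w -= lr * grad                            # gradient step
    return w

# Synthetic, noise-free data with true slope 3 (an assumption for the demo).
data_rng = random.Random(42)
xs = [data_rng.uniform(-1.0, 1.0) for _ in range(100)]
ys = [3.0 * x for x in xs]
w_hat = sgd_fit_slope(xs, ys)
```

Each step touches only `batch_size` of the 100 points, which is where the cheaper iterations come from; the price is a noisier gradient than the full-data one.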

Logistic Function

A logistic function or logistic curve is a common S-shaped curve (sigmoid curve) with the equation $f(x) = \frac{L}{1 + e^{-k(x - x_0)}}$, where $L$ is the supremum of the function's values, $k$ is the logistic growth rate (the steepness of the curve), and $x_0$ is the $x$ value of the function's midpoint. The logistic function has domain the real numbers, the limit as $x \to -\infty$ is 0, and the limit as $x \to +\infty$ is $L$. The exponential function with negated argument ($e^{-x}$) is used to define the standard logistic function, where $L = 1$, $k = 1$, $x_0 = 0$, which has the equation $f(x) = \frac{1}{1 + e^{-x}}$ and is sometimes simply called the sigmoid. It is also sometimes called the expit, being the inverse function of the logit. The logistic function finds applications in a range of fields, including biology (especially ecology), biomathematics, chemistry, demography, economics, geoscience, mathematical psychology, probability, sociology, political science, linguistics, statistics, and artificial neural networks. There are various generalizations, depending on the field. History: The logistic function was introduced in a series of three papers by Pier ...
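A minimal sketch of the general logistic function and its limits, assuming the parameter names `L`, `k`, and `x0` from the text; the function name `logistic` is chosen for this example.

```python
import math

def logistic(x, L=1.0, k=1.0, x0=0.0):
    """General logistic curve; the defaults give the standard logistic (sigmoid)."""
    # Note: math.exp can overflow for very large negative k * (x - x0);
    # this sketch does not guard against that.
    return L / (1.0 + math.exp(-k * (x - x0)))

midpoint = logistic(0.0)            # the standard logistic at its midpoint
upper = logistic(50.0, L=2.0)       # approaches L for large x
lower = logistic(-50.0, L=2.0)      # approaches 0 for very negative x
```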

Swish Function

The swish function is a family of mathematical functions defined as follows: $\operatorname{swish}_\beta(x) = x \operatorname{sigmoid}(\beta x) = \frac{x}{1 + e^{-\beta x}}$, where $\beta$ can be constant (usually set to 1) or trainable and "sigmoid" refers to the logistic function. The swish family was designed to smoothly interpolate between a linear function and the ReLU function. When considering positive values, Swish is a particular case of a doubly parameterized sigmoid shrinkage function. Variants of the swish function include Mish. Special values: For $\beta = 0$, the function is linear: $f(x) = x/2$. For $\beta = 1$, the function is the Sigmoid Linear Unit (SiLU). With $\beta \to \infty$, the function converges to ReLU. Thus, the swish family smoothly interpolates between a linear function and the ReLU function. Since $\operatorname{swish}_\beta(x) = \operatorname{swish}_1(\beta x) / \beta$, all instances of swish have the same shape as the default $\operatorname{swish}_1$, zoomed by $\beta$. ...
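The special values and the scaling identity above can be checked numerically with a short sketch. The function names `sigmoid`, `swish`, and `relu` are chosen for this example, and `beta = 50` stands in for "large β" as an assumption.

```python
import math

def sigmoid(x):
    """Standard logistic function."""
    return 1.0 / (1.0 + math.exp(-x))

def swish(x, beta=1.0):
    """swish_beta(x) = x * sigmoid(beta * x)."""
    return x * sigmoid(beta * x)

def relu(x):
    """Rectified linear unit, max(0, x)."""
    return max(0.0, x)

linear_case = swish(2.0, beta=0.0)         # beta = 0 gives x / 2
near_relu_pos = swish(2.0, beta=50.0)      # large beta approaches ReLU
near_relu_neg = swish(-2.0, beta=50.0)
scaled = swish(2.0 * 0.7, beta=1.0) / 2.0  # swish_1(beta * x) / beta with beta = 2
```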

Machine Learning

Machine learning (ML) is a field of study in artificial intelligence concerned with the development and study of statistical algorithms that can learn from data and generalise to unseen data, and thus perform tasks without explicit instructions. Within a subdiscipline of machine learning, advances in the field of deep learning have allowed neural networks, a class of statistical algorithms, to surpass many previous machine learning approaches in performance. ML finds application in many fields, including natural language processing, computer vision, speech recognition, email filtering, agriculture, and medicine. The application of ML to business problems is known as predictive analytics. Statistics and mathematical optimisation (mathematical programming) methods comprise the foundations of machine learning. Data mining is a related field of study, focusing on exploratory data analysi ...

ReLU

In the context of artificial neural networks, the rectifier or ReLU (rectified linear unit) activation function is an activation function defined as the non-negative part of its argument, i.e., the ramp function: $\operatorname{ReLU}(x) = x^+ = \max(0, x) = \frac{x + |x|}{2} = \begin{cases} x & \text{if } x > 0, \\ 0 & x \le 0 \end{cases}$ where $x$ is the input to a neuron. This is analogous to half-wave rectification in electrical engineering. ReLU is one of the most popular activation functions for artificial neural networks, and finds application in computer vision and speech recognition using deep neural nets (Andrew L. Maas, Awni Y. Hannun, Andrew Y. Ng (2014), "Rectifier Nonlinearities Improve Neural Network Acoustic Models"), and in computational neuroscience. History: The ReLU was first used by Alston Householder in 1941 as a mathematical abstraction of biological neural networks. Kunihiko Fukushima in 1969 used R ...
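The equivalent forms of the definition above can be compared directly in a small sketch; the function names `relu_max` and `relu_abs` are chosen here for illustration.

```python
def relu_max(x):
    """ReLU as the non-negative part of x: max(0, x)."""
    return max(0.0, x)

def relu_abs(x):
    """ReLU written as (x + |x|) / 2."""
    return (x + abs(x)) / 2.0

samples = [-3.5, -1.0, 0.0, 2.25]
outputs = [relu_max(x) for x in samples]   # negatives map to 0, positives pass through
```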

Reverse Accumulation

In mathematics and computer algebra, automatic differentiation (auto-differentiation, autodiff, or AD), also called algorithmic differentiation, computational differentiation, and differentiation arithmetic [Hend Dawood and Nefertiti Megahed (2023), "Automatic differentiation of uncertainties: an interval computational differentiation for first and higher derivatives with implementation", PeerJ Computer Science 9:e1301, https://doi.org/10.7717/peerj-cs.1301; Hend Dawood and Nefertiti Megahed (2019), "A Consistent and Categorical Axiomatization of Differentiation Arithmetic Applicable to First and Higher Order Derivatives", Punjab University Journal of Mathematics 51(11), pp. 77-100, http://doi.org/10.5281/zenodo.3479546], is a set of techniques to evaluate the partial derivative of a function specified by a computer program. Automatic differentiation is a subtle and central tool to automate the simultaneous computation of the numerical values of arbitrarily ...
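To make the idea of differentiating a function specified by a program concrete, here is a minimal sketch of reverse accumulation (reverse-mode automatic differentiation). The `Var` class, the `backward` function, and the restriction to addition and multiplication are all assumptions made for this example; real AD systems are considerably more general.

```python
class Var:
    """A value in a computation graph, with links to the values it was built from."""
    def __init__(self, value, parents=()):
        self.value = value
        self.parents = parents   # tuple of (parent Var, local partial derivative)
        self.grad = 0.0          # accumulated d(output)/d(this)

    def __add__(self, other):
        return Var(self.value + other.value, ((self, 1.0), (other, 1.0)))

    def __mul__(self, other):
        return Var(self.value * other.value,
                   ((self, other.value), (other, self.value)))

def backward(output):
    """Propagate derivatives from the output back to every input."""
    order, seen = [], set()
    def visit(node):             # post-order DFS: inputs are listed before consumers
        if id(node) in seen:
            return
        seen.add(id(node))
        for parent, _ in node.parents:
            visit(parent)
        order.append(node)
    visit(output)
    output.grad = 1.0
    for node in reversed(order): # consumers first, so node.grad is complete when used
        for parent, local in node.parents:
            parent.grad += node.grad * local   # chain rule, accumulated over paths

x, y = Var(3.0), Var(4.0)
f = x * y + x * x                # f(x, y) = x*y + x^2
backward(f)                      # fills x.grad = df/dx and y.grad = df/dy
```

A single backward sweep yields the partial derivatives with respect to all inputs at once, which is why reverse accumulation suits functions with many inputs and one output, such as a neural network loss.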

Rectifier (Neural Networks)

In the context of artificial neural networks, the rectifier or ReLU (rectified linear unit) activation function is an activation function defined as the non-negative part of its argument, i.e., the ramp function: $\operatorname{ReLU}(x) = x^+ = \max(0, x) = \frac{x + |x|}{2} = \begin{cases} x & \text{if } x > 0, \\ 0 & x \le 0 \end{cases}$ where $x$ is the input to a neuron. This is analogous to half-wave rectification in electrical engineering. ReLU is one of the most popular activation functions for artificial neural networks, and finds application in computer vision and speech recognition using deep neural nets (Andrew L. Maas, Awni Y. Hannun, Andrew Y. Ng (2014), "Rectifier Nonlinearities Improve Neural Network Acoustic Models"), and in computational neuroscience. History: The ReLU was first used by Alston Householder in 1941 as a mathematical abstraction of biological neural networks. Kunihiko Fukushima in 1969 used R ...

Sigmoid Function

A sigmoid function is any mathematical function whose graph has a characteristic S-shaped or sigmoid curve. A common example of a sigmoid function is the logistic function, which is defined by the formula $\sigma(x) = \frac{1}{1 + e^{-x}} = \frac{e^x}{1 + e^x} = 1 - \sigma(-x)$. Other sigmoid functions are given in the Examples section. In some fields, most notably in the context of artificial neural networks, the term "sigmoid function" is used as a synonym for "logistic function". Special cases of the sigmoid function include the Gompertz curve (used in modeling systems that saturate at large values of $x$) and the ogee curve (used in the spillway of some dams). Sigmoid functions have a domain of all real numbers, with a return (response) value that is commonly monotonically increasing but may be decreasing. Sigmoid functions most often show a return value ($y$ axis) in the range 0 to 1. Another commonly used range is from −1 to 1. A wide variety of sigmoid functions ...

Automatic Differentiation

In mathematics and computer algebra, automatic differentiation (auto-differentiation, autodiff, or AD), also called algorithmic differentiation, computational differentiation, and differentiation arithmetic [Hend Dawood and Nefertiti Megahed (2023), "Automatic differentiation of uncertainties: an interval computational differentiation for first and higher derivatives with implementation", PeerJ Computer Science 9:e1301, https://doi.org/10.7717/peerj-cs.1301; Hend Dawood and Nefertiti Megahed (2019), "A Consistent and Categorical Axiomatization of Differentiation Arithmetic Applicable to First and Higher Order Derivatives", Punjab University Journal of Mathematics 51(11), pp. 77-100, http://doi.org/10.5281/zenodo.3479546], is a set of techniques to evaluate the partial derivative of a function specified by a computer program. Automatic differentiation is a subtle and central tool to automate the simultaneous computation of the numerical values of arbitrarily ...