Bayesian inference is a method of statistical inference in which Bayes' theorem is used to update the probability for a hypothesis as more evidence or information becomes available. Bayesian inference is an important technique in statistics, and especially in mathematical statistics. Bayesian updating is particularly important in the dynamic analysis of a sequence of data. Bayesian inference has found application in a wide range of activities, including science, engineering, philosophy, medicine, sport, and law. In the philosophy of decision theory, Bayesian inference is closely related to subjective probability, often called "Bayesian probability".

Introduction to Bayes' rule

Formal explanation

Bayesian inference derives the posterior probability as a consequence of two antecedents: a prior probability and a "likelihood function" derived from a statistical model for the observed data. Bayesian inference computes the posterior probability according to Bayes' theorem:

$$P(H \mid E) = \frac{P(E \mid H) \cdot P(H)}{P(E)},$$

where

* $H$ stands for any ''hypothesis'' whose probability may be affected by data (called ''evidence'' below). Often there are competing hypotheses, and the task is to determine which is the most probable.
* $P(H)$, the ''prior probability'', is the estimate of the probability of the hypothesis $H$ ''before'' the data $E$, the current evidence, is observed.
* $E$, the ''evidence'', corresponds to new data that were not used in computing the prior probability.
* $P(H \mid E)$, the ''posterior probability'', is the probability of $H$ ''given'' $E$, i.e., ''after'' $E$ is observed. This is what we want to know: the probability of a hypothesis ''given'' the observed evidence.
* $P(E \mid H)$ is the probability of observing $E$ ''given'' $H$, and is called the ''likelihood''. As a function of $E$ with $H$ fixed, it indicates the compatibility of the evidence with the given hypothesis. The likelihood function is a function of the evidence, $E$, while the posterior probability is a function of the hypothesis, $H$.
* $P(E)$ is sometimes termed the marginal likelihood or "model evidence". This factor is the same for all possible hypotheses being considered (as is evident from the fact that the hypothesis $H$ does not appear anywhere in the symbol, unlike for all the other factors), so this factor does not enter into determining the relative probabilities of different hypotheses.

For different values of $H$, only the factors $P(H)$ and $P(E \mid H)$, both in the numerator, affect the value of $P(H \mid E)$: the posterior probability of a hypothesis is proportional to its prior probability (its inherent likeliness) and the newly acquired likelihood (its compatibility with the new observed evidence).

Bayes' rule can also be written as follows:

$$P(H \mid E) = \frac{P(E \mid H)\,P(H)}{P(E)} = \frac{P(E \mid H)\,P(H)}{P(E \mid H)\,P(H) + P(E \mid \neg H)\,P(\neg H)} = \frac{1}{1 + \left(\frac{1}{P(H)} - 1\right)\frac{P(E \mid \neg H)}{P(E \mid H)}}$$

because

$$P(E) = P(E \mid H)\,P(H) + P(E \mid \neg H)\,P(\neg H)$$

and

$$P(H) + P(\neg H) = 1,$$

where $\neg H$ is "not $H$", the logical negation of $H$.
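As a minimal sketch, the direct form of Bayes' rule and the form written in terms of the ratio of the two likelihoods can be checked against each other numerically. The function names and the three input probabilities below are hypothetical, chosen only for illustration; the second form assumes a non-zero prior and a non-zero likelihood under the hypothesis.

```python
def posterior(prior_h: float, lik_h: float, lik_not_h: float) -> float:
    """P(H | E) from P(H), P(E | H) and P(E | not H)."""
    # Law of total probability gives the evidence term P(E).
    evidence = lik_h * prior_h + lik_not_h * (1.0 - prior_h)
    return lik_h * prior_h / evidence


def posterior_ratio_form(prior_h: float, lik_h: float, lik_not_h: float) -> float:
    """Same quantity, written as 1 / (1 + (1/P(H) - 1) * P(E|not H) / P(E|H))."""
    return 1.0 / (1.0 + (1.0 / prior_h - 1.0) * lik_not_h / lik_h)


# Hypothetical inputs (not taken from the article).
prior_h, lik_h, lik_not_h = 0.3, 0.8, 0.1

p_direct = posterior(prior_h, lik_h, lik_not_h)
p_ratio = posterior_ratio_form(prior_h, lik_h, lik_not_h)
print(p_direct, p_ratio)
```

Both calls should agree up to floating-point rounding, since the second expression is obtained by dividing the numerator and denominator of the first by the product of the likelihood and the prior.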

One quick and easy way to remember the equation is to use the rule of multiplication:

$$P(E \cap H) = P(E \mid H)\,P(H) = P(H \mid E)\,P(E).$$

Alternatives to Bayesian updating

Bayesian updating is widely used and computationally convenient. However, it is not the only updating rule that might be considered rational.

Ian Hacking noted that traditional "Dutch book" arguments did not specify Bayesian updating: they left open the possibility that non-Bayesian updating rules could avoid Dutch books. Hacking wrote "And neither the Dutch book argument nor any other in the personalist arsenal of proofs of the probability axioms entails the dynamic assumption. Not one entails Bayesianism. So the personalist requires the dynamic assumption to be Bayesian. It is true that in consistency a personalist could abandon the Bayesian model of learning from experience. Salt could lose its savour."

Indeed, there are non-Bayesian updating rules that also avoid Dutch books (as discussed in the literature on "probability kinematics") following the publication of Richard C. Jeffrey's rule, which applies Bayes' rule to the case where the evidence itself is assigned a probability. The additional hypotheses needed to uniquely require Bayesian updating have been deemed to be substantial, complicated, and unsatisfactory.

Inference over exclusive and exhaustive possibilities

If evidence is simultaneously used to update belief over a set of exclusive and exhaustive propositions, Bayesian inference may be thought of as acting on this belief distribution as a whole.

General formulation

Suppose a process is generating independent and identically distributed events $E_n,\ n = 1, 2, 3, \ldots$, but the probability distribution is unknown. Let the event space $\Omega$ represent the current state of belief for this process. Each model is represented by event $M_m$. The conditional probabilities $P(E_n \mid M_m)$ are specified to define the models. $P(M_m)$ is the degree of belief in $M_m$. Before the first inference step, $\{P(M_m)\}$ is a set of ''initial prior probabilities''. These must sum to 1, but are otherwise arbitrary.

Suppose that the process is observed to generate $E \in \{E_n\}$. For each $M \in \{M_m\}$, the prior $P(M)$ is updated to the posterior $P(M \mid E)$. From Bayes' theorem:

$$P(M \mid E) = \frac{P(E \mid M)}{\sum_m P(E \mid M_m)\,P(M_m)} \cdot P(M).$$

Upon observation of further evidence, this procedure may be repeated.

Multiple observations

For a sequence of independent and identically distributed observations $\mathbf{E} = (e_1, \dots, e_n)$, it can be shown by induction that repeated application of the above is equivalent to

$$P(M \mid \mathbf{E}) = \frac{P(\mathbf{E} \mid M)}{\sum_m P(\mathbf{E} \mid M_m)\,P(M_m)} \cdot P(M),$$

where

$$P(\mathbf{E} \mid M) = \prod_k P(e_k \mid M).$$
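The equivalence between updating one observation at a time and updating once with the whole sequence can be illustrated with a small sketch. The three "coin" models, their head probabilities, and the observation sequence are hypothetical, introduced only for this example.

```python
from math import prod


def update(prior, likelihoods):
    """One Bayesian step over exclusive and exhaustive models.

    prior[m] is the current belief in model m; likelihoods[m] is the
    probability of the observed evidence under model m.
    """
    unnorm = [lik * p for lik, p in zip(likelihoods, prior)]
    evidence = sum(unnorm)  # probability of the evidence under current belief
    return [u / evidence for u in unnorm]


def lik(e, q):
    """Probability of outcome e (1 = heads, 0 = tails) for a coin with P(heads) = q."""
    return q if e == 1 else 1.0 - q


# Hypothetical models: three coins with different head probabilities.
heads_prob = [0.2, 0.5, 0.8]
prior = [1 / 3, 1 / 3, 1 / 3]
observations = [1, 1, 0, 1]

# Sequential updating: the posterior after each observation is the next prior.
sequential = prior
for e in observations:
    sequential = update(sequential, [lik(e, q) for q in heads_prob])

# Batch updating: one step, using the product of per-observation likelihoods.
batch = update(prior, [prod(lik(e, q) for e in observations) for q in heads_prob])

print(sequential)
print(batch)
```

The two results agree up to rounding because each sequential step rescales the beliefs by a constant that cancels in the final normalisation.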

Parametric formulation: motivating the formal description

By parameterizing the space of models, the belief in all models may be updated in a single step. The distribution of belief over the model space may then be thought of as a distribution of belief over the parameter space. The distributions in this section are expressed as continuous, represented by probability densities, as this is the usual situation. The technique is however equally applicable to discrete distributions.

Let the vector $\boldsymbol{\theta}$ span the parameter space. Let the initial prior distribution over $\boldsymbol{\theta}$ be $p(\boldsymbol{\theta} \mid \boldsymbol{\alpha})$, where $\boldsymbol{\alpha}$ is a set of parameters to the prior itself, or ''hyperparameters''. Let $\mathbf{E} = (e_1, \dots, e_n)$ be a sequence of independent and identically distributed event observations, where all $e_i$ are distributed as $p(e \mid \boldsymbol{\theta})$ for some $\boldsymbol{\theta}$. Bayes' theorem is applied to find the posterior distribution over $\boldsymbol{\theta}$:

$$p(\boldsymbol{\theta} \mid \mathbf{E}, \boldsymbol{\alpha}) = \frac{p(\mathbf{E} \mid \boldsymbol{\theta}, \boldsymbol{\alpha})}{p(\mathbf{E} \mid \boldsymbol{\alpha})} \cdot p(\boldsymbol{\theta} \mid \boldsymbol{\alpha}) = \frac{p(\mathbf{E} \mid \boldsymbol{\theta}, \boldsymbol{\alpha})}{\int p(\mathbf{E} \mid \boldsymbol{\theta}, \boldsymbol{\alpha})\,p(\boldsymbol{\theta} \mid \boldsymbol{\alpha})\,d\boldsymbol{\theta}} \cdot p(\boldsymbol{\theta} \mid \boldsymbol{\alpha}),$$

where

$$p(\mathbf{E} \mid \boldsymbol{\theta}, \boldsymbol{\alpha}) = \prod_k p(e_k \mid \boldsymbol{\theta}).$$

Formal description of Bayesian inference

Definitions

* $x$, a data point in general. This may in fact be a vector of values.
* $\theta$, the parameter of the data point's distribution, i.e., $x \sim p(x \mid \theta)$. This may be a vector of parameters.
* $\alpha$, the hyperparameter of the parameter distribution, i.e., $\theta \sim p(\theta \mid \alpha)$. This may be a vector of hyperparameters.
* $\mathbf{X}$ is the sample, a set of $n$ observed data points, i.e., $x_1, \ldots, x_n$.
* $\tilde{x}$, a new data point whose distribution is to be predicted.

Bayesian inference

* The prior distribution is the distribution of the parameter(s) before any data is observed, i.e. $p(\theta \mid \alpha)$. The prior distribution might not be easily determined; in such a case, one possibility may be to use the Jeffreys prior to obtain a prior distribution before updating it with newer observations.
* The sampling distribution is the distribution of the observed data conditional on its parameters, i.e. $p(\mathbf{X} \mid \theta)$. This is also termed the likelihood, especially when viewed as a function of the parameter(s), sometimes written $\operatorname{L}(\theta \mid \mathbf{X}) = p(\mathbf{X} \mid \theta)$.
* The marginal likelihood (sometimes also termed the ''evidence'') is the distribution of the observed data marginalized over the parameter(s), i.e.
  $$p(\mathbf{X} \mid \alpha) = \int p(\mathbf{X} \mid \theta)\,p(\theta \mid \alpha)\,d\theta.$$
  It quantifies the agreement between data and expert opinion, in a geometric sense that can be made precise.
* The posterior distribution is the distribution of the parameter(s) after taking into account the observed data. This is determined by Bayes' rule, which forms the heart of Bayesian inference:
  $$p(\theta \mid \mathbf{X}, \alpha) = \frac{p(\theta, \mathbf{X}, \alpha)}{p(\mathbf{X}, \alpha)} = \frac{p(\mathbf{X} \mid \theta, \alpha)\,p(\theta, \alpha)}{p(\mathbf{X} \mid \alpha)\,p(\alpha)} = \frac{p(\mathbf{X} \mid \theta, \alpha)\,p(\theta \mid \alpha)}{p(\mathbf{X} \mid \alpha)} \propto p(\mathbf{X} \mid \theta, \alpha)\,p(\theta \mid \alpha).$$
  This is expressed in words as "posterior is proportional to likelihood times prior", or sometimes as "posterior = likelihood times prior, over evidence".
* In practice, for almost all complex Bayesian models used in machine learning, the posterior distribution $p(\theta \mid \mathbf{X}, \alpha)$ is not obtained in closed form, mainly because the parameter space for $\theta$ can be very high-dimensional, or because the Bayesian model retains a certain hierarchical structure formulated from the observations $\mathbf{X}$ and parameter $\theta$. In such situations, we need to resort to approximation techniques.
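One of the simplest approximation techniques, practical only for low-dimensional parameters, is to evaluate "likelihood times prior" on a grid and normalise numerically. The sketch below assumes a single Bernoulli parameter, a flat prior on the unit interval, and a hypothetical data set of zeros and ones; none of these choices come from the article.

```python
def trapezoid(values, step):
    """Trapezoidal rule on an evenly spaced grid."""
    return step * (sum(values) - 0.5 * (values[0] + values[-1]))


def grid_posterior(data, prior_pdf, n_grid=2001):
    """Approximate the posterior density of a Bernoulli parameter on [0, 1]."""
    thetas = [i / (n_grid - 1) for i in range(n_grid)]
    step = 1.0 / (n_grid - 1)
    unnorm = []
    for t in thetas:
        likelihood = 1.0
        for x in data:
            likelihood *= t if x == 1 else 1.0 - t
        unnorm.append(likelihood * prior_pdf(t))
    evidence = trapezoid(unnorm, step)  # numerical marginal likelihood
    density = [u / evidence for u in unnorm]
    return thetas, density, evidence, step


# Hypothetical sample: 5 successes in 7 trials, with a flat prior.
data = [1, 0, 1, 1, 0, 1, 1]
thetas, density, evidence, step = grid_posterior(data, lambda t: 1.0)

posterior_mean = trapezoid([t * d for t, d in zip(thetas, density)], step)
print(evidence, posterior_mean)
```

For this particular setup the exact posterior is a Beta(6, 3) distribution, so the printed values can be compared with the exact marginal likelihood 1/168 and the exact posterior mean 2/3. Grid methods scale poorly with dimension, which is one reason sampling-based and variational approximations are used for larger models.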

Bayesian prediction

* The posterior predictive distribution is the distribution of a new data point, marginalized over the posterior:
  $$p(\tilde{x} \mid \mathbf{X}, \alpha) = \int p(\tilde{x} \mid \theta)\,p(\theta \mid \mathbf{X}, \alpha)\,d\theta$$
* The prior predictive distribution is the distribution of a new data point, marginalized over the prior:
  $$p(\tilde{x} \mid \alpha) = \int p(\tilde{x} \mid \theta)\,p(\theta \mid \alpha)\,d\theta$$

Bayesian theory calls for the use of the posterior predictive distribution to do predictive inference, i.e., to predict the distribution of a new, unobserved data point. That is, instead of a fixed point as a prediction, a distribution over possible points is returned. Only this way is the entire posterior distribution of the parameter(s) used. By comparison, prediction in frequentist statistics often involves finding an optimum point estimate of the parameter(s), e.g., by maximum likelihood or maximum a posteriori estimation (MAP), and then plugging this estimate into the formula for the distribution of a data point. This has the disadvantage that it does not account for any uncertainty in the value of the parameter, and hence will underestimate the variance of the predictive distribution.
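The variance gap between a plug-in prediction and the posterior predictive distribution can be made concrete with a small sketch. It assumes a Beta(6, 3) posterior over a success probability and a forecast of the number of successes in 10 new trials; these numbers and the function names are hypothetical. With a Beta posterior, the posterior predictive count follows a beta-binomial distribution.

```python
from math import comb, exp, lgamma


def log_beta(a, b):
    """Logarithm of the Beta function B(a, b)."""
    return lgamma(a) + lgamma(b) - lgamma(a + b)


def posterior_predictive_pmf(k, m, a, b):
    """Beta-binomial: P(k successes in m new trials) under a Beta(a, b) posterior."""
    return comb(m, k) * exp(log_beta(a + k, b + m - k) - log_beta(a, b))


def plug_in_pmf(k, m, theta_hat):
    """Binomial pmf with the parameter fixed at a point estimate."""
    return comb(m, k) * theta_hat**k * (1.0 - theta_hat) ** (m - k)


def variance(pmf_values):
    """Variance of a count distribution given as [P(0), P(1), ...]."""
    mean = sum(k * p for k, p in enumerate(pmf_values))
    return sum((k - mean) ** 2 * p for k, p in enumerate(pmf_values))


a, b = 6.0, 3.0                     # hypothetical posterior hyperparameters
m = 10                              # number of future trials to predict
theta_map = (a - 1) / (a + b - 2)   # mode of Beta(a, b), valid for a, b > 1

predictive = [posterior_predictive_pmf(k, m, a, b) for k in range(m + 1)]
plug_in = [plug_in_pmf(k, m, theta_map) for k in range(m + 1)]

var_predictive = variance(predictive)
var_plug_in = variance(plug_in)
print(var_predictive, var_plug_in)
```

Under these assumptions the plug-in variance is noticeably smaller than the predictive variance, because the plug-in forecast treats the point estimate as if it were known exactly.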

In some instances, frequentist statistics can work around this problem. For example, confidence intervals and prediction intervals in frequentist statistics, when constructed from a normal distribution with unknown mean and variance, are constructed using a Student's t-distribution. This correctly estimates the variance, due to the facts that (1) the average of normally distributed random variables is also normally distributed, and (2) the predictive distribution of a normally distributed data point with unknown mean and variance, using conjugate or uninformative priors, has a Student's t-distribution. In Bayesian statistics, however, the posterior predictive distribution can always be determined exactly, or at least to an arbitrary level of precision when numerical methods are used.

Both types of predictive distributions have the form of a compound probability distribution (as does the marginal likelihood). In fact, if the prior distribution is a conjugate prior, such that the prior and posterior distributions come from the same family, it can be seen that both prior and posterior predictive distributions also come from the same family of compound distributions. The only difference is that the posterior predictive distribution uses the updated values of the hyperparameters (applying the Bayesian update rules given in the conjugate prior article), while the prior predictive distribution uses the values of the hyperparameters that appear in the prior distribution.

Mathematical properties

Interpretation of factor

$\frac{P(E \mid M)}{P(E)} > 1 \Rightarrow P(E \mid M) > P(E)$. That is, if the model were true, the evidence would be more likely than is predicted by the current state of belief. The reverse applies for a decrease in belief. If the belief does not change, $\frac{P(E \mid M)}{P(E)} = 1 \Rightarrow P(E \mid M) = P(E)$. That is, the evidence is independent of the model. If the model were true, the evidence would be exactly as likely as predicted by the current state of belief.

Cromwell's rule

If $P(M) = 0$ then $P(M \mid E) = 0$. If $P(M) = 1$, then $P(M \mid E) = 1$. This can be interpreted to mean that hard convictions are insensitive to counter-evidence.

The former follows directly from Bayes' theorem. The latter can be derived by applying the first rule to the event "not $M$" in place of "$M$", yielding "if $1 - P(M) = 0$, then $1 - P(M \mid E) = 0$", from which the result immediately follows.

Asymptotic behaviour of posterior

Consider the behaviour of a belief distribution as it is updated a large number of times with independent and identically distributed trials. For sufficiently nice prior probabilities, the Bernstein-von Mises theorem gives that in the limit of infinite trials, the posterior converges to a Gaussian distribution independent of the initial prior under some conditions first outlined and rigorously proven by Joseph L. Doob in 1948, namely if the random variable in consideration has a finite probability space. More general results were obtained later by the statistician David A. Freedman, who established in two seminal research papers in 1963 and 1965 when and under what circumstances the asymptotic behaviour of the posterior is guaranteed. His 1963 paper treats, like Doob (1949), the finite case and comes to a satisfactory conclusion. However, if the random variable has an infinite but countable probability space (i.e., corresponding to a die with infinitely many faces), the 1965 paper demonstrates that for a dense subset of priors the Bernstein-von Mises theorem is not applicable. In this case there is almost surely no asymptotic convergence. Later, in the 1980s and 1990s, Freedman and Persi Diaconis continued to work on the case of infinite countable probability spaces. To summarise, there may be insufficient trials to suppress the effects of the initial choice, and especially for large (but finite) systems the convergence might be very slow.

Conjugate priors

In parameterized form, the prior distribution is often assumed to come from a family of distributions called conjugate priors. The usefulness of a conjugate prior is that the corresponding posterior distribution will be in the same family, and the calculation may be expressed in closed form.
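A standard illustration of conjugacy is the Beta prior for a Bernoulli success probability: the posterior is again a Beta distribution, and the update reduces to adding counts to the hyperparameters. The sketch below uses a hypothetical flat Beta(1, 1) prior and a hypothetical data set; the function name is introduced here for illustration only.

```python
def beta_bernoulli_update(a, b, data):
    """Posterior hyperparameters of a Beta(a, b) prior after observing 0/1 data."""
    successes = sum(data)
    failures = len(data) - successes
    return a + successes, b + failures


prior = (1.0, 1.0)                  # flat prior on the success probability
data = [1, 0, 1, 1, 0, 1, 1]        # hypothetical observations

# Closed-form batch update.
posterior = beta_bernoulli_update(*prior, data)

# The same result, one observation at a time.
a, b = prior
for x in data:
    a, b = beta_bernoulli_update(a, b, [x])

posterior_mean = posterior[0] / (posterior[0] + posterior[1])
print(posterior, (a, b), posterior_mean)
```

No integration is needed here: the hyperparameters carry all the information from the data, which is what makes conjugate families convenient for sequential updating.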

Estimates of parameters and predictions

It is often desired to use a posterior distribution to estimate a parameter or variable. Several methods of Bayesian estimation select measurements of central tendency from the posterior distribution.

For one-dimensional problems, a unique median exists for practical continuous problems. The posterior median is attractive as a robust estimator.

If there exists a finite mean for the posterior distribution, then the posterior mean can be used as an estimate.

Taking a value with the greatest probability defines maximum ''a posteriori'' (MAP) estimates:

$$\{\theta_{\text{MAP}}\} \subset \arg\max_{\theta} p(\theta \mid \mathbf{X}, \alpha).$$

There are examples where no maximum is attained, in which case the set of MAP estimates is empty.

There are other methods of estimation that minimize the posterior ''risk'' (expected-posterior loss) with respect to a loss function, and these are of interest to statistical decision theory using the sampling distribution ("frequentist statistics").

The posterior predictive distribution of a new observation $\tilde{x}$ (that is independent of previous observations) is determined by

$$p(\tilde{x} \mid \mathbf{X}, \alpha) = \int p(\tilde{x}, \theta \mid \mathbf{X}, \alpha)\,d\theta = \int p(\tilde{x} \mid \theta)\,p(\theta \mid \mathbf{X}, \alpha)\,d\theta.$$
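The three point estimates discussed in this section (posterior mean, posterior median, and MAP) can be compared on a simple one-dimensional posterior. The sketch below assumes a hypothetical Beta(6, 3) posterior and approximates it on an evenly spaced grid; the function name and grid size are illustrative choices.

```python
def posterior_summaries(a, b, n_grid=10001):
    """Grid-based mean, median and mode of a Beta(a, b) posterior (a, b > 1)."""
    thetas = [i / (n_grid - 1) for i in range(n_grid)]
    # Unnormalised Beta density; the normalising constant cancels below.
    unnorm = [t ** (a - 1) * (1.0 - t) ** (b - 1) for t in thetas]
    total = sum(unnorm)
    weights = [u / total for u in unnorm]

    mean = sum(t * w for t, w in zip(thetas, weights))

    # Median: first grid point where the cumulative weight reaches one half.
    cumulative = 0.0
    median = thetas[-1]
    for t, w in zip(thetas, weights):
        cumulative += w
        if cumulative >= 0.5:
            median = t
            break

    # MAP: grid point with the highest density.
    map_estimate = thetas[max(range(n_grid), key=unnorm.__getitem__)]
    return mean, median, map_estimate


mean, median, map_estimate = posterior_summaries(6.0, 3.0)
print(mean, median, map_estimate)
```

For this left-skewed posterior the three estimates differ, with the mean below the median and the median below the mode, so the choice of estimator (equivalently, of loss function) matters.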

Examples

Probability of a hypothesis

Suppose there are two full bowls of cookies. Bowl #1 has 10 chocolate chip and 30 plain cookies, while bowl #2 has 20 of each. Our friend Fred picks a bowl at random, and then picks a cookie at random. We may assume there is no reason to believe Fred treats one bowl differently from another, likewise for the cookies. The cookie turns out to be a plain one. How probable is it that Fred picked it out of bowl #1?

Intuitively, it seems clear that the answer should be more than a half, since there are more plain cookies in bowl #1. The precise answer is given by Bayes' theorem. Let $H_1$ correspond to bowl #1, and $H_2$ to bowl #2. It is given that the bowls are identical from Fred's point of view, thus $P(H_1) = P(H_2)$, and the two must add up to 1, so both are equal to 0.5. The event $E$ is the observation of a plain cookie. From the contents of the bowls, we know that $P(E \mid H_1) = 30/40 = 0.75$ and $P(E \mid H_2) = 20/40 = 0.5$. Bayes' formula then yields

$$P(H_1 \mid E) = \frac{P(E \mid H_1)\,P(H_1)}{P(E \mid H_1)\,P(H_1) + P(E \mid H_2)\,P(H_2)} = \frac{0.75 \times 0.5}{0.75 \times 0.5 + 0.5 \times 0.5} = 0.6.$$

Before we observed the cookie, the probability we assigned for Fred having chosen bowl #1 was the prior probability, $P(H_1)$, which was 0.5. After observing the cookie, we must revise the probability to $P(H_1 \mid E)$, which is 0.6.
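The cookie calculation can be checked with a few lines of Python. This is only a sketch: the function name `posterior` and the labels `bowl1` and `bowl2` are illustrative, and exact fractions are used so that the result is not affected by floating-point rounding.

```python
from fractions import Fraction


def posterior(priors, likelihoods):
    """Apply Bayes' theorem to a finite set of hypotheses.

    priors[h] is P(h); likelihoods[h] is P(evidence | h).
    Returns a dict mapping each hypothesis h to P(h | evidence).
    """
    joint = {h: priors[h] * likelihoods[h] for h in priors}
    evidence = sum(joint.values())  # P(evidence), by total probability
    return {h: joint[h] / evidence for h in joint}


# Each bowl is equally likely to be picked.
priors = {"bowl1": Fraction(1, 2), "bowl2": Fraction(1, 2)}
# Probability of drawing a plain cookie from each bowl.
likelihoods = {"bowl1": Fraction(30, 40), "bowl2": Fraction(20, 40)}

post = posterior(priors, likelihoods)
print(post["bowl1"])  # 3/5
```

The same function handles more than two hypotheses, since the denominator is just the sum of prior times likelihood over all of them.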

Making a prediction

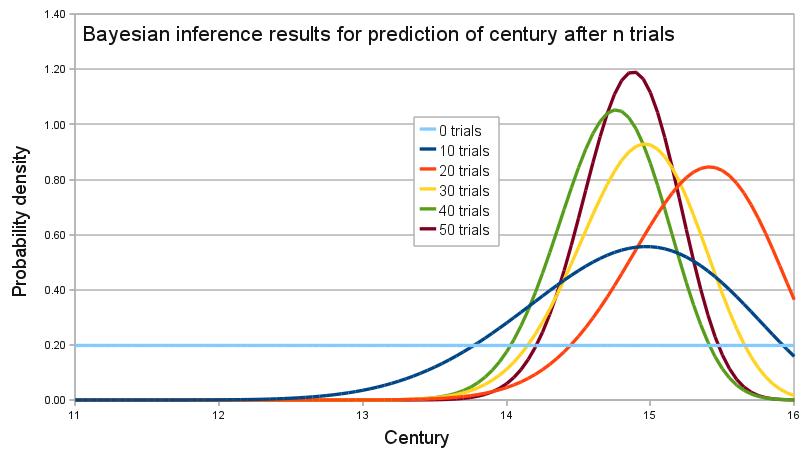

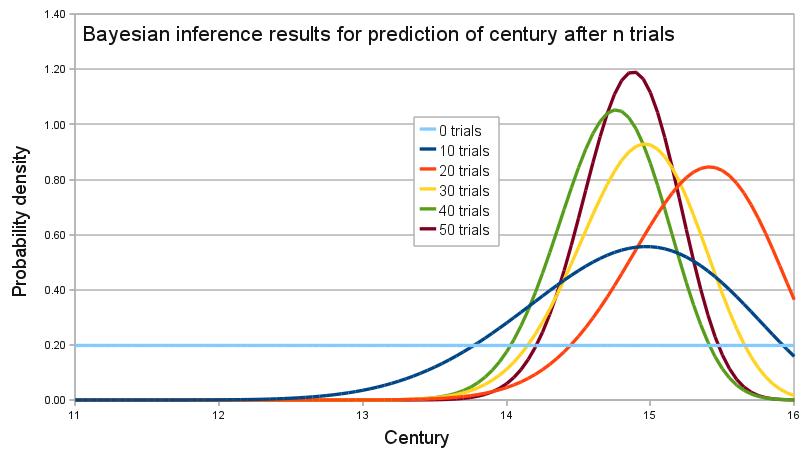

An archaeologist is working at a site thought to be from the medieval period, from the 11th century to the 16th century. However, it is uncertain exactly when in this period the site was inhabited. Fragments of pottery are found, some of which are glazed and some of which are decorated. It is expected that if the site were inhabited during the early medieval period, then 1% of the pottery would be glazed and 50% of its area decorated, whereas if it had been inhabited in the late medieval period then 81% would be glazed and 5% of its area decorated. How confident can the archaeologist be in the date of inhabitation as fragments are unearthed?

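The degree of belief in the continuous variable $C$ (century) is to be calculated, with the discrete set of events $\{GD, G\bar{D}, \bar{G}D, \bar{G}\bar{D}\}$ as evidence, where $G$ denotes a glazed fragment, $D$ a decorated one, and a bar the absence of that feature. Assuming linear variation of glaze and decoration with time, and that these variables are independent,

$$P(E = GD \mid C = c) = \left(0.01 + \frac{0.81 - 0.01}{16 - 11}(c - 11)\right)\left(0.5 - \frac{0.5 - 0.05}{16 - 11}(c - 11)\right)$$

$$P(E = G\bar{D} \mid C = c) = \left(0.01 + \frac{0.81 - 0.01}{16 - 11}(c - 11)\right)\left(0.5 + \frac{0.5 - 0.05}{16 - 11}(c - 11)\right)$$

$$P(E = \bar{G}D \mid C = c) = \left((1 - 0.01) - \frac{0.81 - 0.01}{16 - 11}(c - 11)\right)\left(0.5 - \frac{0.5 - 0.05}{16 - 11}(c - 11)\right)$$

$$P(E = \bar{G}\bar{D} \mid C = c) = \left((1 - 0.01) - \frac{0.81 - 0.01}{16 - 11}(c - 11)\right)\left(0.5 + \frac{0.5 - 0.05}{16 - 11}(c - 11)\right)$$

Assume a uniform prior of $f_C(c) = 0.2$, and that trials are independent and identically distributed. When a new fragment of type $e$ is discovered, Bayes' theorem is applied to update the degree of belief for each $c$:

$$f_C(c \mid E = e) = \frac{P(E = e \mid C = c)}{P(E = e)}\, f_C(c) = \frac{P(E = e \mid C = c)}{\int_{11}^{16} P(E = e \mid C = c)\, f_C(c)\, dc}\, f_C(c)$$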

A computer simulation of the changing belief as 50 fragments are unearthed is shown on the graph. In the simulation, the site was inhabited around 1420, or $c = 15.2$. By calculating the area under the relevant portion of the graph for 50 trials, the archaeologist can say that there is practically no chance the site was inhabited in the 11th and 12th centuries, about 1% chance that it was inhabited during the 13th century, 63% chance during the 14th century and 36% during the 15th century. The Bernstein-von Mises theorem asserts here the asymptotic convergence to the "true" distribution because the probability space corresponding to the discrete set of events $\{GD, G\bar{D}, \bar{G}D, \bar{G}\bar{D}\}$ is finite (see above section on asymptotic behaviour of the posterior).
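A simulation of this kind can be sketched as follows. This is not the code behind the graph; it is a minimal grid approximation under stated assumptions: the century variable is discretized into 500 midpoints on [11, 16], the glaze and decoration probabilities vary linearly between the percentages given in the text, and the random seed, grid size and all function names are arbitrary choices made for illustration. Because the fragments are drawn at random, the printed masses will not reproduce the figures quoted above.

```python
import random

C_MIN, C_MAX = 11.0, 16.0  # century range from the text
N = 500                    # grid resolution (arbitrary choice)
H = (C_MAX - C_MIN) / N    # width of one grid cell
GRID = [C_MIN + (i + 0.5) * H for i in range(N)]  # cell midpoints


def p_glazed(c):
    """Linear interpolation from 1% at c = 11 to 81% at c = 16."""
    return 0.01 + (0.81 - 0.01) / (C_MAX - C_MIN) * (c - C_MIN)


def p_decorated(c):
    """Linear interpolation from 50% at c = 11 to 5% at c = 16."""
    return 0.50 - (0.50 - 0.05) / (C_MAX - C_MIN) * (c - C_MIN)


def likelihood(glazed, decorated, c):
    """P(fragment type | C = c), treating glaze and decoration as independent."""
    pg = p_glazed(c) if glazed else 1.0 - p_glazed(c)
    pd = p_decorated(c) if decorated else 1.0 - p_decorated(c)
    return pg * pd


def update(density, glazed, decorated):
    """One Bayesian update of the gridded density for a single fragment."""
    unnorm = [likelihood(glazed, decorated, c) * f for c, f in zip(GRID, density)]
    evidence = sum(unnorm) * H  # Riemann-sum approximation of P(E = e)
    return [u / evidence for u in unnorm]


def simulate(c_true=15.2, n_fragments=50, seed=0):
    """Draw fragments from the model at c_true and update after each one."""
    rng = random.Random(seed)
    density = [1.0 / (C_MAX - C_MIN)] * N  # uniform prior, f_C(c) = 0.2
    for _ in range(n_fragments):
        glazed = rng.random() < p_glazed(c_true)
        decorated = rng.random() < p_decorated(c_true)
        density = update(density, glazed, decorated)
    return density


def mass(density, lo, hi):
    """Posterior probability that lo <= C < hi."""
    return sum(f for c, f in zip(GRID, density) if lo <= c < hi) * H


posterior = simulate()
for lo in range(11, 16):
    print(f"c in [{lo}, {lo + 1}): {mass(posterior, lo, lo + 1):.3f}")
```

Normalizing after every fragment keeps the density well scaled, so the product of many small likelihoods does not underflow.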

In frequentist statistics and decision theory

A decision-theoretic justification of the use of Bayesian inference was given by Abraham Wald, who proved that every unique Bayesian procedure is admissible. Conversely, every admissible statistical procedure is either a Bayesian procedure or a limit of Bayesian procedures.

[Bickel & Doksum (2001, p. 32)]

Wald characterized admissible procedures as Bayesian procedures (and limits of Bayesian procedures), making the Bayesian formalism a central technique in such areas of frequentist inference as parameter estimation, hypothesis testing, and computing confidence intervals. For example:

* "Under some conditions, all admissible procedures are either Bayes procedures or limits of Bayes procedures (in various senses). These remarkable results, at least in their original form, are due essentially to Wald. They are useful because the property of being Bayes is easier to analyze than admissibility."

* "In decision theory, a quite general method for proving admissibility consists in exhibiting a procedure as a unique Bayes solution."

* "In the first chapters of this work, prior distributions with finite support and the corresponding Bayes procedures were used to establish some of the main theorems relating to the comparison of experiments. Bayes procedures with respect to more general prior distributions have played a very important role in the development of statistics, including its asymptotic theory." "There are many problems where a glance at posterior distributions, for suitable priors, yields immediately interesting information. Also, this technique can hardly be avoided in sequential analysis."

* "A useful fact is that any Bayes decision rule obtained by taking a proper prior over the whole parameter space must be admissible"

* "An important area of investigation in the development of admissibility ideas has been that of conventional sampling-theory procedures, and many interesting results have been obtained."

Model selection

Bayesian methodology also plays a role in model selection, where the aim is to select one model from a set of competing models that represents most closely the underlying process that generated the observed data. In Bayesian model comparison, the model with the highest posterior probability given the data is selected. The posterior probability of a model depends on the evidence, or marginal likelihood, which reflects the probability that the data is generated by the model, and on the prior belief of the model. When two competing models are a priori considered to be equiprobable, the ratio of their posterior probabilities corresponds to the Bayes factor. Since Bayesian model comparison is aimed at selecting the model with the highest posterior probability, this methodology is also referred to as the maximum a posteriori (MAP) selection rule or the MAP probability rule.
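To make the relationship between marginal likelihoods, the Bayes factor and model posteriors concrete, here is a small sketch on an invented example that is not part of the text: a particular sequence of 10 coin flips containing 5 heads, compared under a hypothetical model M1 (heads probability fixed at 1/2) and a hypothetical model M2 (heads probability with a uniform prior). All function names are illustrative.

```python
from fractions import Fraction
from math import factorial


def evidence_fair(n, k):
    """Marginal likelihood of one specific sequence under M1: p = 1/2."""
    return Fraction(1, 2) ** n


def evidence_uniform(n, k):
    """Marginal likelihood under M2: p ~ Uniform(0, 1).

    Integrating p**k * (1 - p)**(n - k) over [0, 1] gives
    k! (n - k)! / (n + 1)!.
    """
    return Fraction(factorial(k) * factorial(n - k), factorial(n + 1))


def model_posteriors(evidences, priors):
    """Posterior model probabilities from marginal likelihoods and priors."""
    joint = [e * p for e, p in zip(evidences, priors)]
    total = sum(joint)
    return [j / total for j in joint]


n, k = 10, 5  # illustrative data: 5 heads in 10 flips
e1, e2 = evidence_fair(n, k), evidence_uniform(n, k)
bayes_factor = e1 / e2

# With equal prior model probabilities, the posterior ratio equals the Bayes factor.
post = model_posteriors([e1, e2], [Fraction(1, 2), Fraction(1, 2)])
print(float(bayes_factor), float(post[0] / post[1]))
```

With unequal model priors the posterior ratio would instead be the Bayes factor multiplied by the prior ratio.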

Probabilistic programming

While conceptually simple, Bayesian methods can be mathematically and numerically challenging. Probabilistic programming languages (PPLs) implement functions to easily build Bayesian models together with efficient automatic inference methods. This helps separate the model building from the inference, allowing practitioners to focus on their specific problems and leaving PPLs to handle the computational details for them.

Applications

Statistical data analysis

See the separate Wikipedia entry on Bayesian Statistics, specifically the Statistical modeling section in that page.

Computer applications

Bayesian inference has applications in artificial intelligence and expert systems. Bayesian inference techniques have been a fundamental part of computerized pattern recognition techniques since the late 1950s. There is also an ever-growing connection between Bayesian methods and simulation-based Monte Carlo techniques, since complex models cannot be processed in closed form by a Bayesian analysis, while a graphical model structure ''may'' allow for efficient simulation algorithms such as Gibbs sampling and other Metropolis–Hastings algorithm schemes. Recently Bayesian inference has gained popularity among the phylogenetics community for these reasons; a number of applications allow many demographic and evolutionary parameters to be estimated simultaneously.

As applied to statistical classification, Bayesian inference has been used to develop algorithms for identifying e-mail spam. Applications which make use of Bayesian inference for spam filtering include CRM114, DSPAM, Bogofilter, SpamAssassin, SpamBayes, Mozilla, XEAMS, and others. Spam classification is treated in more detail in the article on the naïve Bayes classifier.

Solomonoff's inductive inference is the theory of prediction based on observations; for example, predicting the next symbol based upon a given series of symbols. The only assumption is that the environment follows some unknown but computable probability distribution. It is a formal inductive framework that combines two well-studied principles of inductive inference: Bayesian statistics and Occam's Razor. Solomonoff's universal prior probability of any prefix ''p'' of a computable sequence ''x'' is the sum of the probabilities of all programs (for a universal computer) that compute something starting with ''p''. Given some ''p'' and any computable but unknown probability distribution from which ''x'' is sampled, the universal prior and Bayes' theorem can be used to predict the yet unseen parts of ''x'' in optimal fashion.

Bioinformatics and healthcare applications

Bayesian inference has been applied in various bioinformatics applications, including differential gene expression analysis. [Robinson, Mark D & McCarthy, Davis J & Smyth, Gordon K edgeR: a Bioconductor package for differential expression analysis of digital gene expression data, Bioinformatics.] Bayesian inference is also used in a general cancer risk model, called CIRI (Continuous Individualized Risk Index), where serial measurements are incorporated to update a Bayesian model which is primarily built from prior knowledge.

In the courtroom

Bayesian inference can be used by jurors to coherently accumulate the evidence for and against a defendant, and to see whether, in totality, it meets their personal threshold for 'beyond a reasonable doubt'. Bayes' theorem is applied successively to all evidence presented, with the posterior from one stage becoming the prior for the next. The benefit of a Bayesian approach is that it gives the juror an unbiased, rational mechanism for combining evidence. It may be appropriate to explain Bayes' theorem to jurors in odds form, as betting odds are more widely understood than probabilities. Alternatively, a logarithmic approach, replacing multiplication with addition, might be easier for a jury to handle.

If the existence of the crime is not in doubt, only the identity of the culprit, it has been suggested that the prior should be uniform over the qualifying population. For example, if 1,000 people could have committed the crime, the prior probability of guilt would be 1/1000.
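The odds form and the logarithmic form of successive updating can be sketched as below. The likelihood ratios are hypothetical numbers chosen purely for illustration (each is P(evidence | guilty) divided by P(evidence | innocent)), the function names are not from any source, and the items of evidence are assumed to be conditionally independent so that their ratios can simply be multiplied.

```python
import math


def prior_odds_uniform(population):
    """Prior odds of guilt under a uniform prior over `population` suspects."""
    return 1.0 / (population - 1)


def update_odds(odds, likelihood_ratios):
    """Odds form: multiply the odds by each likelihood ratio in turn."""
    for lr in likelihood_ratios:
        odds *= lr
    return odds


def update_log_odds(log_odds, likelihood_ratios):
    """Logarithmic form: add the base-10 log of each likelihood ratio."""
    return log_odds + sum(math.log10(lr) for lr in likelihood_ratios)


def odds_to_probability(odds):
    """Convert odds in favour of a proposition to a probability."""
    return odds / (1.0 + odds)


# Hypothetical likelihood ratios for three pieces of evidence.
ratios = [100.0, 20.0, 0.5]

prior = prior_odds_uniform(1000)  # 1 to 999, i.e. probability 1/1000
odds = update_odds(prior, ratios)
log_odds = update_log_odds(math.log10(prior), ratios)

print(odds_to_probability(odds))  # posterior probability of guilt
print(10 ** log_odds)             # same posterior odds, recovered from the log form
```

A ratio below 1, such as the third one here, represents evidence that favours innocence and lowers the odds.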

The use of Bayes' theorem by jurors is controversial. In the United Kingdom, a defence expert witness explained Bayes' theorem to the jury in ''R v Adams''. The jury convicted, but the case went to appeal on the basis that no means of accumulating evidence had been provided for jurors who did not wish to use Bayes' theorem. The Court of Appeal upheld the conviction, but it also gave the opinion that "To introduce Bayes' Theorem, or any similar method, into a criminal trial plunges the jury into inappropriate and unnecessary realms of theory and complexity, deflecting them from their proper task."

Gardner-Medwin argues that the criterion on which a verdict in a criminal trial should be based is ''not'' the probability of guilt, but rather the ''probability of the evidence, given that the defendant is innocent'' (akin to a frequentist p-value). He argues that if the posterior probability of guilt is to be computed by Bayes' theorem, the prior probability of guilt must be known. This will depend on the incidence of the crime, which is an unusual piece of evidence to consider in a criminal trial. Consider the following three propositions:

* A: The known facts and testimony could have arisen if the defendant is guilty.

* B: The known facts and testimony could have arisen if the defendant is innocent.

* C: The defendant is guilty.

Gardner-Medwin argues that the jury should believe both A and not-B in order to convict. A and not-B implies the truth of C, but the reverse is not true. It is possible that B and C are both true, but in this case he argues that a jury should acquit, even though they know that they will be letting some guilty people go free. See also Lindley's paradox.

Bayesian epistemology

Bayesian epistemology is a movement that advocates for Bayesian inference as a means of justifying the rules of inductive logic.

Karl Popper and David Miller have rejected the idea of Bayesian rationalism, i.e. using Bayes' rule to make epistemological inferences: it is prone to the same vicious circle as any other justificationist epistemology, because it presupposes what it attempts to justify. According to this view, a rational interpretation of Bayesian inference would see it merely as a probabilistic version of falsification, rejecting the belief, commonly held by Bayesians, that high likelihood achieved by a series of Bayesian updates would prove the hypothesis beyond any reasonable doubt, or even with likelihood greater than 0.

Other

* The scientific method is sometimes interpreted as an application of Bayesian inference. In this view, Bayes' rule guides (or should guide) the updating of probabilities about hypotheses conditional on new observations or experiments. Bayesian inference has also been applied to treat stochastic scheduling problems with incomplete information by Cai et al. (2009).

* Bayesian search theory is used to search for lost objects.

* Bayesian inference in phylogeny

* Bayesian tool for methylation analysis

* Bayesian approaches to brain function investigate the brain as a Bayesian mechanism.

* Bayesian inference in ecological studies

* Bayesian inference is used to estimate parameters in stochastic chemical kinetic models

* Bayesian inference in econophysics for currency or stock market prediction

* Bayesian inference in marketing

* Bayesian inference in motor learning

* Bayesian inference is used in probabilistic numerics to solve numerical problems

Bayes and Bayesian inference

The problem considered by Bayes in Proposition 9 of his essay, "An Essay towards solving a Problem in the Doctrine of Chances", is the posterior distribution for the parameter ''a'' (the success rate) of the binomial distribution.

History

The term ''Bayesian'' refers to Thomas Bayes (1701–1761), who proved that probabilistic limits could be placed on an unknown event. However, it was Pierre-Simon Laplace (1749–1827) who introduced (as Principle VI) what is now called Bayes' theorem and used it to address problems in celestial mechanics, medical statistics, reliability, and jurisprudence. Early Bayesian inference, which used uniform priors following Laplace's principle of insufficient reason, was called "inverse probability" (because it infers backwards from observations to parameters, or from effects to causes). After the 1920s, "inverse probability" was largely supplanted by a collection of methods that came to be called frequentist statistics.

In the 20th century, the ideas of Laplace were further developed in two different directions, giving rise to ''objective'' and ''subjective'' currents in Bayesian practice. In the objective or "non-informative" current, the statistical analysis depends on only the model assumed, the data analyzed,


References


Sources

* Aster, Richard; Borchers, Brian, and Thurber, Clifford (2012). ''Parameter Estimation and Inverse Problems'', Second Edition, Elsevier.

* Box, G. E. P. and Tiao, G. C. (1973) ''Bayesian Inference in Statistical Analysis'', Wiley.

* Jaynes, E. T. (2003) ''Probability Theory: The Logic of Science'', CUP. Link to Fragmentary Edition of March 1996.

Further reading

* For a full report on the history of Bayesian statistics and the debates with frequentist approaches, read

Elementary

The following books are listed in ascending order of probabilistic sophistication:

* Stone, JV (2013), "Bayes' Rule: A Tutorial Introduction to Bayesian Analysis", Sebtel Press, England.

* Bolstad, William M. (2007) ''Introduction to Bayesian Statistics'': Second Edition, John Wiley

* Updated classic textbook. Bayesian theory clearly presented.

* Lee, Peter M. ''Bayesian Statistics: An Introduction''. Fourth Edition (2012), John Wiley

Intermediate or advanced

* DeGroot, Morris H., ''Optimal Statistical Decisions''. Wiley Classics Library. 2004. (Originally published (1970) by McGraw-Hill.)

* Jaynes, E. T. (1998) ''Probability Theory: The Logic of Science''

* O'Hagan, A. and Forster, J. (2003) ''Kendall's Advanced Theory of Statistics'', Volume 2B: ''Bayesian Inference''. Arnold, New York.

* Glenn Shafer and Pearl, Judea, eds. (1988) ''Probabilistic Reasoning in Intelligent Systems'', San Mateo, CA: Morgan Kaufmann.

* Pierre Bessière et al. (2013), Bayesian Programming, CRC Press.

* Francisco J. Samaniego (2010), "A Comparison of the Bayesian and Frequentist Approaches to Estimation" Springer, New York

External links

* Bayesian Statistics from Scholarpedia.

from Queen Mary University of London

* http://cocosci.berkeley.edu/tom/bayes.html Bayesian reading list, categorized and annotated by Tom Griffiths

* A. Hajek and S. Hartmann, Bayesian Epistemology, in: J. Dancy et al. (eds.), A Companion to Epistemology. Oxford: Blackwell 2010, 93–106.

* S. Hartmann and J. Sprenger, Bayesian Epistemology, in: S. Bernecker and D. Pritchard (eds.), Routledge Companion to Epistemology. London: Routledge 2010, 609–620.

* ''Stanford Encyclopedia of Philosophy'': "Inductive Logic"

* Bayesian Confirmation Theory
