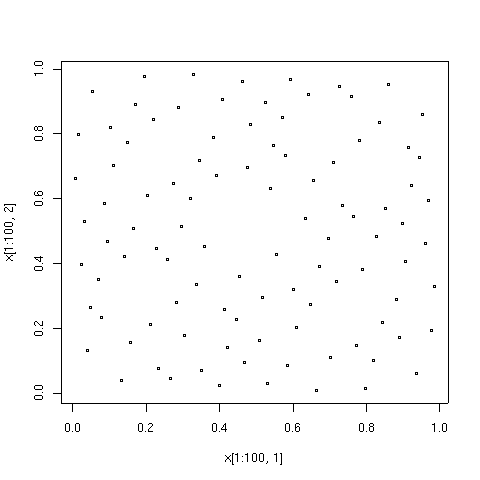

Quasi-random

In mathematics, a low-discrepancy sequence is a sequence with the property that for all values of N, its subsequence x_1, \ldots, x_N has a low discrepancy. Roughly speaking, the discrepancy of a sequence is low if the proportion of points in the sequence falling into an arbitrary set B is close to proportional to the measure of B, as would happen on average (but not for particular samples) in the case of an equidistributed sequence. Specific definitions of discrepancy differ regarding the choice of B (hyperspheres, hypercubes, etc.) and how the discrepancy for every B is computed (usually normalized) and combined (usually by taking the worst value). Low-discrepancy sequences are also called quasirandom sequences, due to their common use as a replacement for uniformly distributed random numbers. The "quasi" modifier is used to denote more clearly that the values of a low-discrepancy sequence are neither random nor pseudorandom, but such sequences share some propertie ...
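As a rough illustration of the idea, the sketch below generates the base-2 van der Corput sequence (one standard low-discrepancy sequence, chosen here as an example and not named in the abstract above) and measures its one-dimensional star discrepancy, taking B to be the intervals [0, t) and combining by the worst value. The function names are hypothetical, and the closed-form expression for the sorted points is the standard one-dimensional formula.

```python
def van_der_corput(n, base=2):
    """Return the n-th van der Corput point (n >= 1) by reversing the digits of n."""
    q, denom = 0.0, 1.0
    while n > 0:
        n, digit = divmod(n, base)
        denom *= base
        q += digit / denom
    return q


def star_discrepancy_1d(points):
    """Star discrepancy of points in [0, 1], using intervals [0, t) as test sets.

    For sorted points x_(1) <= ... <= x_(N) the worst-case value is
    1/(2N) + max_i |x_(i) - (2i - 1)/(2N)|.
    """
    xs = sorted(points)
    n = len(xs)
    worst = max(abs(x - (2 * i - 1) / (2 * n)) for i, x in enumerate(xs, start=1))
    return 1.0 / (2 * n) + worst


# The first seven points are exactly the multiples of 1/8 in (0, 1).
first_seven = [van_der_corput(i) for i in range(1, 8)]
print(sorted(first_seven))
print(star_discrepancy_1d(first_seven))
```

A set of N independent uniform random points typically has a noticeably larger discrepancy than the first N points of such a sequence, which is the motivation for using them in place of random numbers.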

Quasi-Monte Carlo Method

In numerical analysis, the quasi-Monte Carlo method is a method for numerical integration and solving some other problems using low-discrepancy sequences (also called quasi-random sequences or sub-random sequences) to achieve variance reduction. This is in contrast to the regular Monte Carlo method or Monte Carlo integration, which are based on sequences of pseudorandom numbers. Monte Carlo and quasi-Monte Carlo methods are stated in a similar way. The problem is to approximate the integral of a function f as the average of the function evaluated at a set of points x_1, \ldots, x_N: \int_{[0,1]^s} f(u)\,\mathrm{d}u \approx \frac{1}{N}\,\sum_{i=1}^N f(x_i). Since we are integrating over the s-dimensional unit cube, each x_i is a vector of s elements. The difference between quasi-Monte Carlo and Monte Carlo is the way the x_i are chosen. Quasi-Monte Carlo uses a low-discrepancy sequence such as the Halton sequence, the Sobol sequence, or the Faure sequence, whereas Mont ...
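The averaging formula above can be sketched with a two-dimensional Halton sequence. This is a minimal illustration under stated assumptions: the bases (2, 3), the integrand f(u) = u_1 u_2 (exact integral 1/4 over the unit square), and all function names are choices made here, not part of the source.

```python
def radical_inverse(i, base):
    """Mirror the base-`base` digits of i about the radix point."""
    inv, denom = 0.0, 1.0
    while i > 0:
        i, digit = divmod(i, base)
        denom *= base
        inv += digit / denom
    return inv


def halton_point(i, bases=(2, 3)):
    """i-th Halton point: one radical inverse per coordinate, with coprime bases."""
    return tuple(radical_inverse(i, b) for b in bases)


def qmc_integrate(f, n, bases=(2, 3)):
    """Approximate the integral of f over the unit cube by averaging f(x_i)."""
    total = 0.0
    for i in range(1, n + 1):
        total += f(halton_point(i, bases))
    return total / n


def product(u):
    """Example integrand u_1 * u_2, whose exact integral over [0,1]^2 is 0.25."""
    return u[0] * u[1]


print(qmc_integrate(product, 1024))
```

Replacing `halton_point` with pseudorandom draws turns the same loop into ordinary Monte Carlo integration; only the choice of the x_i changes.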

Subrandom Kurtosis

In mathematics, a low-discrepancy sequence is a sequence with the property that for all values of N, its subsequence x_1, \ldots, x_N has a low discrepancy. Roughly speaking, the discrepancy of a sequence is low if the proportion of points in the sequence falling into an arbitrary set B is close to proportional to the measure of B, as would happen on average (but not for particular samples) in the case of an equidistributed sequence. Specific definitions of discrepancy differ regarding the choice of B (hyperspheres, hypercubes, etc.) and how the discrepancy for every B is computed (usually normalized) and combined (usually by taking the worst value). Low-discrepancy sequences are also called quasirandom sequences, due to their common use as a replacement for uniformly distributed random numbers. The "quasi" modifier is used to denote more clearly that the values of a low-discrepancy sequence are neither random nor pseudorandom, but such sequences share some propertie ...

Mathematics

Mathematics is a field of study that discovers and organizes methods, theories and theorems that are developed and proved for the needs of empirical sciences and mathematics itself. There are many areas of mathematics, which include number theory (the study of numbers), algebra (the study of formulas and related structures), geometry (the study of shapes and spaces that contain them), analysis (the study of continuous changes), and set theory (presently used as a foundation for all mathematics). Mathematics involves the description and manipulation of abstract objects that consist of either abstractions from nature or, in modern mathematics, purely abstract entities that are stipulated to have certain properties, called axioms. Mathematics uses pure reason to prove properties of objects, a proof consisting of a succession of applications of in ...

Kurtosis

In probability theory and statistics, kurtosis (from Greek: κυρτός, kyrtos or kurtos, meaning "curved, arching") refers to the degree of "tailedness" in the probability distribution of a real-valued random variable. Similar to skewness, kurtosis provides insight into specific characteristics of a distribution. Various methods exist for quantifying kurtosis in theoretical distributions, and corresponding techniques allow estimation based on sample data from a population. Different measures of kurtosis can yield varying interpretations. The standard measure of a distribution's kurtosis, originating with Karl Pearson, is a scaled version of the fourth moment of the distribution. This number is related to the tails of the distribution, not its peak; hence, the sometimes-seen characterization of kurtosis as "peakedness" is incorrect. For this measure, higher kurtosis corresponds to greater extremity of deviations (or outliers), and not the configur ...
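To make the "scaled fourth moment" concrete, here is a minimal sketch of Pearson's measure computed from data as m_4 / m_2^2, where m_k is the k-th central moment. It assumes the population (divide-by-n) form with no bias correction; the function name is hypothetical.

```python
def kurtosis(data):
    """Pearson kurtosis: fourth central moment divided by the squared variance.

    Uses population moments (divide by n); sample-corrected estimators differ.
    """
    n = len(data)
    mean = sum(data) / n
    m2 = sum((x - mean) ** 2 for x in data) / n
    m4 = sum((x - mean) ** 4 for x in data) / n
    return m4 / (m2 * m2)


print(kurtosis([1, 2, 3, 4, 5]))        # evenly spread values, light tails
print(kurtosis([0, 0, 0, 0, 0, 0, 10])) # one outlier dominates the fourth moment
```

Because deviations are raised to the fourth power, a single extreme value raises the result far more than any reshaping of the center does, which matches the tail-based reading given above.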

Lebesgue Measure

In measure theory, a branch of mathematics, the Lebesgue measure, named after French mathematician Henri Lebesgue, is the standard way of assigning a measure to subsets of higher-dimensional Euclidean n-spaces. For lower dimensions n = 1, 2, or 3, it coincides with the standard measure of length, area, or volume. In general, it is also called n-dimensional volume, n-volume, hypervolume, or simply volume. It is used throughout real analysis, in particular to define Lebesgue integration. Sets that can be assigned a Lebesgue measure are called Lebesgue-measurable; the measure of the Lebesgue-measurable set A is here denoted by \lambda(A). Henri Lebesgue described this measure in 1901 and, a year later, followed it with his description of the Lebesgue integral. Both were published as part of his dissertation in 1902. Definition: For any interval I = [a, b], or I = (a, b), in the set \mathbb{R} of real numbers, let \ell(I) = b - a denote its length. For any subset E\subseteq ...

Harald Niederreiter

Harald G. Niederreiter (born June 7, 1944) is an Austrian mathematician known for his work in discrepancy theory, algebraic geometry, quasi-Monte Carlo methods, and cryptography. Education and career: Niederreiter was born on June 7, 1944, in Vienna, and grew up in Salzburg. He began studying mathematics at the University of Vienna in 1963, and finished his doctorate there in 1969, with a thesis on discrepancy in compact abelian groups supervised by Edmund Hlawka. He began his academic career as an assistant professor at the University of Vienna, but soon moved to Southern Illinois University. During this period he also visited the University of Illinois at Urbana-Champaign, Institute for Advanced Study, and University of California, Los Angeles. In 1978 he moved again, becoming the head of a new mathematics department at the University of the West Indies in Jamaica. In 1981 he returned to Austria for a post at the Austrian Academy of Sciences, where from 1989 to 2000 he serve ...

Monte Carlo Method

Monte Carlo methods, or Monte Carlo experiments, are a broad class of computational algorithms that rely on repeated random sampling to obtain numerical results. The underlying concept is to use randomness to solve problems that might be deterministic in principle. The name comes from the Monte Carlo Casino in Monaco, where the primary developer of the method, mathematician Stanisław Ulam, was inspired by his uncle's gambling habits. Monte Carlo methods are mainly used in three distinct problem classes: optimization, numerical integration, and generating draws from a probability distribution. They can also be used to model phenomena with significant uncertainty in inputs, such as calculating the risk of a nuclear power plant failure. Monte Carlo methods are often implemented using computer simulations, and they can provide approximate solutions to problems that are otherwise intractable or too complex to analyze mathematically. Monte Carlo methods are widely used in va ...
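A classic small example of using randomness on a deterministic problem is estimating \pi from the fraction of uniform random points in the unit square that land inside the quarter disc. The sketch below is illustrative only; the function name, the fixed seed, and the sample size are assumptions made for reproducibility.

```python
import math
import random


def estimate_pi(n, seed=0):
    """Estimate pi by repeated random sampling in the unit square."""
    rng = random.Random(seed)  # private generator so results are repeatable
    inside = 0
    for _ in range(n):
        x, y = rng.random(), rng.random()
        if x * x + y * y <= 1.0:  # point falls inside the quarter disc
            inside += 1
    # The quarter disc has area pi/4, so the hit fraction approximates pi/4.
    return 4.0 * inside / n


approx = estimate_pi(100_000)
print(approx, abs(approx - math.pi))
```

The error shrinks roughly like 1/sqrt(n), so each additional digit of accuracy costs about a hundred times more samples.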

Numerical Integration

In analysis, numerical integration comprises a broad family of algorithms for calculating the numerical value of a definite integral. The term numerical quadrature (often abbreviated to quadrature) is more or less a synonym for "numerical integration", especially as applied to one-dimensional integrals. Some authors refer to numerical integration over more than one dimension as cubature; others take "quadrature" to include higher-dimensional integration. The basic problem in numerical integration is to compute an approximate solution to a definite integral \int_a^b f(x)\,dx to a given degree of accuracy. If f(x) is a smooth function integrated over a small number of dimensions, and the domain of integration is bounded, there are many methods for approximating the integral to the desired precision. Numerical integration has roots in the geometrical problem of finding a square with the same area as a given plane figure (quadrature or squaring), as in the quadrature of t ...
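One of the simplest methods for the basic problem \int_a^b f(x)\,dx is the composite trapezoidal rule, sketched below. It is picked here only as an example of the "many methods" mentioned above; the function name and the test integrand are assumptions.

```python
def trapezoid(f, a, b, n):
    """Composite trapezoidal rule with n equal subintervals on [a, b]."""
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b))  # endpoints carry half weight
    for k in range(1, n):
        total += f(a + k * h)    # interior nodes carry full weight
    return total * h


# Integral of x^2 over [0, 1] is exactly 1/3.
print(trapezoid(lambda x: x * x, 0.0, 1.0, 1000))
```

For a smooth integrand the error falls like h^2, so doubling n cuts it by about a factor of four.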

Local Minima

In mathematical analysis, the maximum and minimum of a function are, respectively, the greatest and least value taken by the function. Known generically as extrema, they may be defined either within a given range (the local or relative extrema) or on the entire domain (the global or absolute extrema) of a function. Pierre de Fermat was one of the first mathematicians to propose a general technique, adequality, for finding the maxima and minima of functions. As defined in set theory, the maximum and minimum of a set are the greatest and least elements in the set, respectively. Unbounded infinite sets, such as the set of real numbers, have no minimum or maximum. In statistics, the corresponding concept is the sample maximum and minimum. Definition: A real-valued function f defined on a domain X has a global (or absolute) maximum point at x∗ if f(x∗) ≥ f(x) for all x in X. Similarly, the function has a global (or absolute) minimum point at x∗, ...

Cumulative Distribution Function

In probability theory and statistics, the cumulative distribution function (CDF) of a real-valued random variable X, or just distribution function of X, evaluated at x, is the probability that X will take a value less than or equal to x. Every probability distribution supported on the real numbers, discrete or "mixed" as well as continuous, is uniquely identified by a right-continuous monotone increasing function (a càdlàg function) F \colon \mathbb{R} \rightarrow [0,1] satisfying \lim_{x \to -\infty} F(x) = 0 and \lim_{x \to \infty} F(x) = 1. In the case of a scalar continuous distribution, it gives the area under the probability density function from negative infinity to x. Cumulative distribution functions are also used to specify the distribution of multivariate random variables. Definition: The cumulative distribution function of a real-valued random variable X is the function given by F_X(x) = \operatorname{P}(X \leq x), where the right-hand side represents the probability ...
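For a finite sample, the same "probability of a value less than or equal to x" idea gives the empirical CDF: the fraction of observations not exceeding x. The sketch below is a minimal illustration; `empirical_cdf` is a hypothetical helper name, not a standard library function.

```python
from bisect import bisect_right


def empirical_cdf(sample):
    """Return F with F(x) = (number of observations <= x) / n."""
    xs = sorted(sample)
    n = len(xs)

    def F(x):
        # bisect_right counts the elements <= x, which makes F right-continuous.
        return bisect_right(xs, x) / n

    return F


F = empirical_cdf([3, 1, 2, 2])
print(F(0), F(1), F(2), F(2.5), F(3))
```

The resulting step function is monotone increasing, tends to 0 on the left and 1 on the right, and jumps at each observed value, mirroring the properties listed above.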

Median

The median of a set of numbers is the value separating the higher half from the lower half of a data sample, a population, or a probability distribution. For a data set, it may be thought of as the "middle" value. The basic feature of the median in describing data compared to the mean (often simply described as the "average") is that it is not skewed by a small proportion of extremely large or small values, and therefore provides a better representation of the center. Median income, for example, may be a better way to describe the center of the income distribution because increases in the largest incomes alone have no effect on the median. For this reason, the median is of central importance in robust statistics. The median is a 2-quantile; it is the value that partitions a set into two equal parts. Finite set of numbers: The median of a finite list of numbers is the "middle" number, when those numbers are liste ...
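The "middle value" description can be sketched directly: sort the numbers and take the central one. The handling of even-length lists below (averaging the two central values) is the common convention and is an assumption here, since the abstract is cut off before stating it.

```python
def median(values):
    """Median of a finite list: the middle element after sorting.

    For an even count, average the two central elements (assumed convention).
    """
    xs = sorted(values)
    n = len(xs)
    if n == 0:
        raise ValueError("median of an empty list is undefined")
    mid = n // 2
    if n % 2 == 1:
        return xs[mid]
    return (xs[mid - 1] + xs[mid]) / 2


incomes = [1, 2, 3, 4, 5]
print(median(incomes), sum(incomes) / len(incomes))

# Raising only the largest value moves the mean but not the median.
incomes[-1] = 1000
print(median(incomes), sum(incomes) / len(incomes))
```

Python's standard library offers `statistics.median` with the same behavior for numeric data; the hand-written version is shown only to expose the steps.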