
Elastic Map

Elastic maps provide a tool for nonlinear dimensionality reduction. By their construction, they are a system of elastic springs embedded in the data space. This system approximates a low-dimensional manifold. The elastic coefficients of this system allow a switch from completely unstructured k-means clustering (zero elasticity) to estimators located close to linear PCA manifolds (for high bending and low stretching moduli). With some intermediate values of the elasticity coefficients, this system effectively approximates non-linear principal manifolds. This approach is based on a mechanical analogy between principal manifolds, which pass through "the middle" of the data distribution, and elastic membranes and plates. The method was developed by A.N. Gorban, A.Y. Zinovyev and A.A. Pitenko in 1996–1998. Energy of elastic map: Let S be a data set in a finite-dimensional Euclidean space. An elastic map is represented by a set of nodes w_j in the same space. Each datapo ...

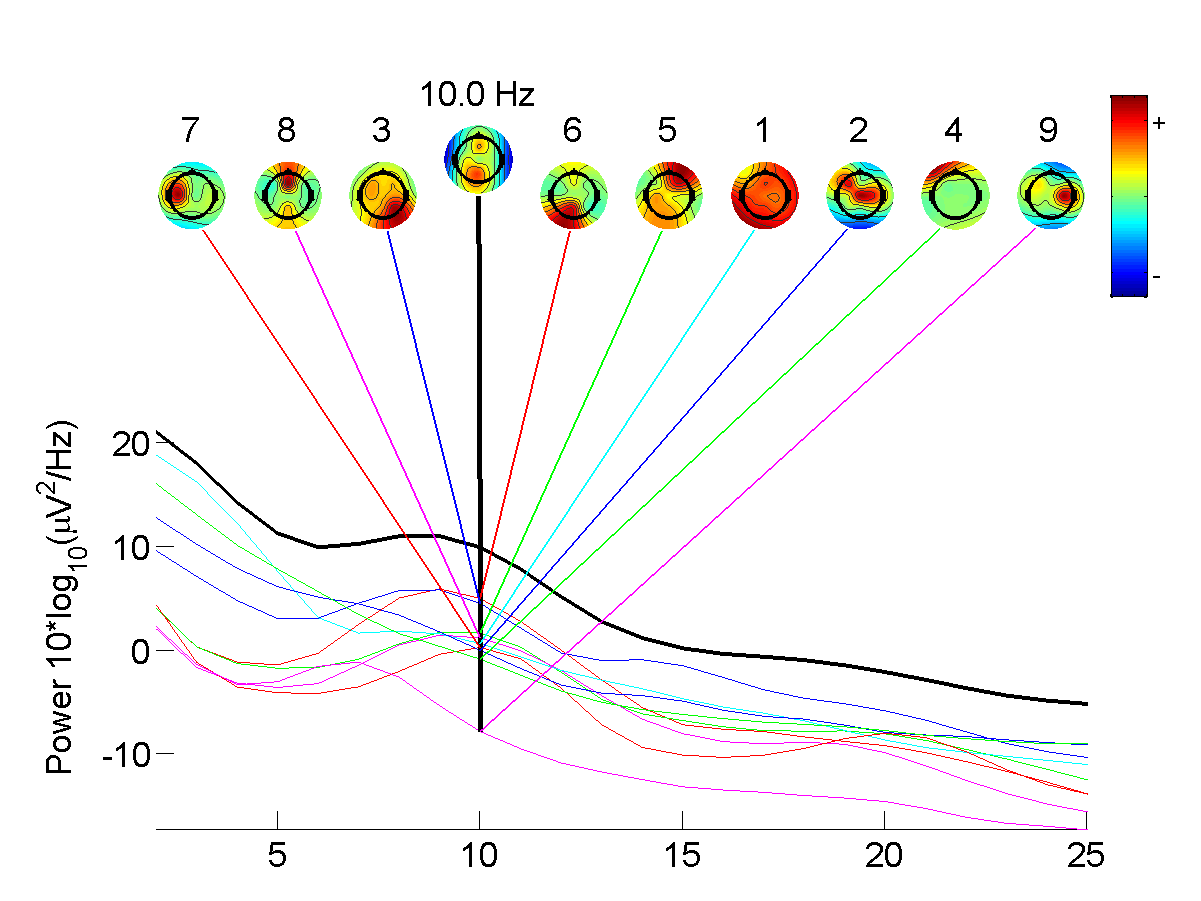

Independent Component Analysis

In signal processing, independent component analysis (ICA) is a computational method for separating a multivariate signal into additive subcomponents. This is done by assuming that at most one subcomponent is Gaussian and that the subcomponents are statistically independent from each other. ICA was invented by Jeanny Hérault and Christian Jutten in 1985. ICA is a special case of blind source separation. A common example application of ICA is the "cocktail party problem" of listening in on one person's speech in a noisy room. Introduction: Independent component analysis attempts to decompose a multivariate signal into independent non-Gaussian signals. As an example, sound is usually a signal that is composed of the numerical addition, at each time t, of signals from several sources. The question then is whether it is possible to separate these contributing sources from the observed total signal. When the statistical independence ...

Principal Component Analysis

Principal component analysis (PCA) is a linear dimensionality reduction technique with applications in exploratory data analysis, visualization and data preprocessing. The data is linearly transformed onto a new coordinate system such that the directions (principal components) capturing the largest variation in the data can be easily identified. The principal components of a collection of points in a real coordinate space are a sequence of p unit vectors, where the i-th vector is the direction of a line that best fits the data while being orthogonal to the first i-1 vectors. Here, a best-fitting line is defined as one that minimizes the average squared perpendicular distance from the points to the line. These directions (i.e., principal components) constitute an orthonormal basis in which different individual dimensions of the data are linearly uncorrelated. Many studies use the first two principal components in order to plot the data in two dimensions and to visually identi ...
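As a rough illustration of the "direction of largest variation" described above, the following sketch estimates only the first principal component by power iteration on the sample covariance matrix. The function name `first_principal_component`, the iteration count and the sample points are illustrative assumptions, not taken from the summary; production code would normally use an eigendecomposition or SVD routine from a numerical library.

```python
import math


def first_principal_component(points, iterations=200):
    """Estimate the unit vector along the direction of largest variance.

    Hypothetical helper for illustration: power iteration on the sample
    covariance matrix, written with the standard library only.
    """
    n = len(points)
    dim = len(points[0])
    # Center the data so that variance is measured around the mean.
    mean = [sum(p[k] for p in points) / n for k in range(dim)]
    centered = [[p[k] - mean[k] for k in range(dim)] for p in points]
    # Sample covariance matrix (dim x dim).
    cov = [
        [sum(row[a] * row[b] for row in centered) / (n - 1) for b in range(dim)]
        for a in range(dim)
    ]
    # Start from a normalized all-ones vector (an arbitrary choice; a start
    # vector exactly orthogonal to the leading direction would not converge).
    v = [1.0 / math.sqrt(dim)] * dim
    for _ in range(iterations):
        w = [sum(cov[a][b] * v[b] for b in range(dim)) for a in range(dim)]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0.0:
            break  # Degenerate case: no variance along the current vector.
        v = [x / norm for x in w]
    return v


# Points lying exactly on the line y = 2x: the best-fitting direction
# is proportional to (1, 2).
direction = first_principal_component([(0, 0), (1, 2), (2, 4), (3, 6)])
```

Projecting the centered points onto `direction` would give their coordinate along the first principal component; later components could be found by repeating the procedure after removing that projection.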

Self-organizing Map

A self-organizing map (SOM) or self-organizing feature map (SOFM) is an unsupervised machine learning technique used to produce a low-dimensional (typically two-dimensional) representation of a higher-dimensional data set while preserving the topological structure of the data. For example, a data set with p variables measured in n observations could be represented as clusters of observations with similar values for the variables. These clusters then could be visualized as a two-dimensional "map" such that observations in proximal clusters have more similar values than observations in distal clusters. This can make high-dimensional data easier to visualize and analyze. An SOM is a type of artificial neural network but is trained using competitive learning rather than the error-correction learning (e.g., backpropagation with gradient descent) used by other artificial neural networks. The SOM was introduced by the Finnish professor Teuvo Kohonen in the 1980s and therefore is some ...

Support Vector Machine

In machine learning, support vector machines (SVMs, also support vector networks) are supervised max-margin models with associated learning algorithms that analyze data for classification and regression analysis. Developed at AT&T Bell Laboratories, SVMs are one of the most studied models, being based on statistical learning frameworks of VC theory proposed by Vapnik (1982, 1995) and Chervonenkis (1974). In addition to performing linear classification, SVMs can efficiently perform non-linear classification using the "kernel trick", representing the data only through a set of pairwise similarity comparisons between the original data points using a kernel function, which transforms them into coordinates in a higher-dimensional feature space. Thus, SVMs use the kernel trick to implicitly map their inputs into high-dimensional feature spaces, where linear classification can be performed. Being max-margin models, SVMs are resilient to noisy data (e.g., misclassified examples). ...
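The implicit mapping mentioned above can be checked by hand for one simple kernel. The sketch below assumes a homogeneous degree-2 polynomial kernel on 2-D inputs, chosen here only because its feature map is small enough to write out; the function names are illustrative and it is not an SVM implementation.

```python
import math


def poly2_kernel(x, y):
    """Degree-2 polynomial kernel k(x, y) = (x . y)^2 for 2-D inputs."""
    return (x[0] * y[0] + x[1] * y[1]) ** 2


def poly2_features(x):
    """Explicit 3-D feature map whose dot product reproduces poly2_kernel."""
    return (x[0] ** 2, math.sqrt(2) * x[0] * x[1], x[1] ** 2)


def dot(a, b):
    """Plain Euclidean dot product."""
    return sum(p * q for p, q in zip(a, b))


x, y = (1.0, 2.0), (3.0, 4.0)
implicit = poly2_kernel(x, y)                          # computed in the input space
explicit = dot(poly2_features(x), poly2_features(y))   # computed in the feature space
```

Both values agree up to floating-point rounding, which is the point of the trick: the kernel gives the feature-space similarity without ever constructing the feature vectors.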

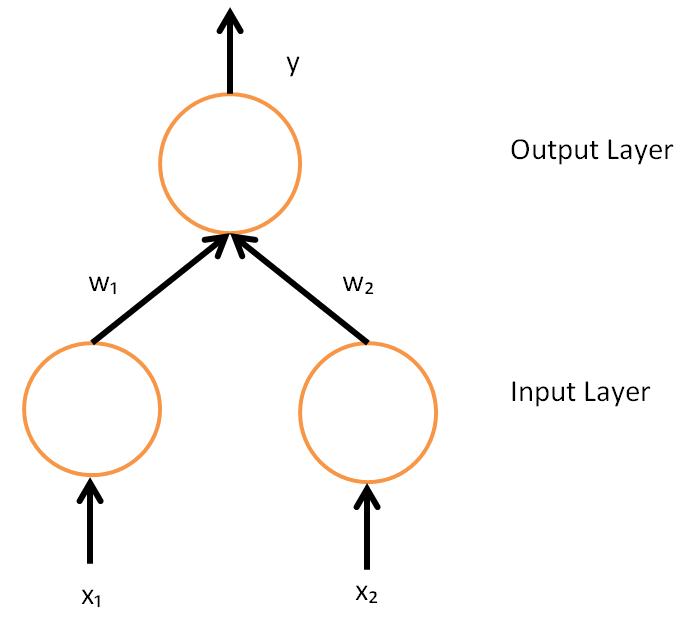

Artificial Neural Network

In machine learning, a neural network (also artificial neural network or neural net, abbreviated ANN or NN) is a computational model inspired by the structure and functions of biological neural networks. A neural network consists of connected units or nodes called "artificial neurons", which loosely model the neurons in the brain. Artificial neuron models that mimic biological neurons more closely have also been recently investigated and shown to significantly improve performance. The neurons are connected by "edges", which model the synapses in the brain. Each artificial neuron receives signals from connected neurons, then processes them and sends a signal to other connected neurons. The "signal" is a real number, and the output of each neuron is computed by some non-linear function of the sum of its inputs, called the "activation function". The strength of the signal at each connection is determined by a "weight", which is adjusted during the learning process. Typically, ne ...
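A single artificial neuron of the kind described above can be sketched in a few lines. The choice of `tanh` as the activation function, the optional `bias` term and the function name `neuron_output` are assumptions made for this illustration; the summary itself does not fix any of them.

```python
import math


def neuron_output(inputs, weights, bias=0.0, activation=math.tanh):
    """Weighted sum of the incoming signals followed by a non-linear activation.

    The bias term and the tanh default are illustrative assumptions.
    """
    if len(inputs) != len(weights):
        raise ValueError("each input needs exactly one weight")
    # Each connection scales its signal by the connection weight.
    total = sum(w * s for w, s in zip(weights, inputs)) + bias
    # The activation function turns the sum into the outgoing signal.
    return activation(total)


# Two incoming signals whose weighted contributions cancel: 0.5*1.0 + (-0.25)*2.0 = 0.
signal = neuron_output([1.0, 2.0], [0.5, -0.25])
```

Learning then amounts to adjusting `weights` (and `bias`, if used) so that the outputs move closer to the desired values.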

Backpropagation

In machine learning, backpropagation is a gradient computation method commonly used for training a neural network to compute its parameter updates. It is an efficient application of the chain rule to neural networks. Backpropagation computes the gradient of a loss function with respect to the weights of the network for a single input–output example, and does so efficiently, computing the gradient one layer at a time, iterating backward from the last layer to avoid redundant calculations of intermediate terms in the chain rule; this can be derived through dynamic programming. Strictly speaking, the term "backpropagation" refers only to an algorithm for efficiently computing the gradient, not how the gradient is used; but the term is often used loosely to refer to the entire learning algorithm – including how the gradient is used, such as by stochastic gradient descent, or as an intermediate step in a more complicated optimizer, such as Adaptive Moment Estimation. The ...
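The layer-by-layer use of the chain rule can be seen on a deliberately tiny network. The sketch below assumes a scalar model y = w2 * tanh(w1 * x) with a squared-error loss; the architecture, the loss and all names are illustrative choices, not something the summary prescribes.

```python
import math


def forward_backward(x, target, w1, w2):
    """Return the loss and its gradients with respect to w1 and w2.

    Illustrative two-weight network: y = w2 * tanh(w1 * x),
    loss = 0.5 * (y - target)^2.
    """
    # Forward pass: keep the intermediate values for reuse below.
    z = w1 * x
    h = math.tanh(z)
    y = w2 * h
    loss = 0.5 * (y - target) ** 2

    # Backward pass: start at the output and move toward the input,
    # reusing each upstream gradient instead of recomputing it.
    dL_dy = y - target
    dL_dw2 = dL_dy * h             # last layer's weight
    dL_dh = dL_dy * w2             # gradient passed back to the hidden unit
    dL_dz = dL_dh * (1.0 - h * h)  # derivative of tanh is 1 - tanh^2
    dL_dw1 = dL_dz * x             # first layer's weight
    return loss, dL_dw1, dL_dw2


loss, grad_w1, grad_w2 = forward_backward(x=0.5, target=1.0, w1=0.3, w2=-0.7)
```

How the gradients are then used, for example `w1 -= learning_rate * grad_w1` in plain gradient descent, is a separate choice made by the optimizer.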

Multiphase Flow

In fluid mechanics, multiphase flow is the simultaneous flow of materials with two or more thermodynamic phases. Virtually all processing technologies from cavitating pumps and turbines to paper-making and the construction of plastics involve some form of multiphase flow. It is also prevalent in many natural phenomena. These phases may consist of one chemical component (e.g. flow of water and water vapour), or several different chemical components (e.g. flow of oil and water). A phase is classified as "continuous" if it occupies a continually connected region of space (as opposed to "disperse" if the phase occupies disconnected regions of space). The continuous phase may be either gaseous or a liquid. The disperse phase can consist of a solid, liquid or gas. Two general topologies can be identified: "disperse" flows and "separated" flows. The former consists of finite particles, drops or bubbles di ...

Machine Learning

Machine learning (ML) is a field of study in artificial intelligence concerned with the development and study of statistical algorithms that can learn from data and generalise to unseen data, and thus perform tasks without explicit instructions. Within a subdiscipline in machine learning, advances in the field of deep learning have allowed neural networks, a class of statistical algorithms, to surpass many previous machine learning approaches in performance. ML finds application in many fields, including natural language processing, computer vision, speech recognition, email filtering, agriculture, and medicine. The application of ML to business problems is known as predictive analytics. Statistics and mathematical optimisation (mathematical programming) methods comprise the foundations of machine learning. Data mining is a related field of study, focusing on exploratory data analysi ...

Financial Portfolio

In finance, a portfolio is a collection of investments. Definition: The term "portfolio" refers to any combination of financial assets such as stocks, bonds and cash. Portfolios may be held by individual investors or managed by financial professionals, hedge funds, banks and other financial institutions. It is a generally accepted principle that a portfolio is designed according to the investor's risk tolerance, time frame and investment objectives. The monetary value of each asset may influence the risk/reward ratio of the portfolio. When determining asset allocation, the aim is to maximise the expected return and minimise the risk. This is an example of a multi-objective optimization problem: many efficient solutions are available and the preferred solution must be selected by considering a tradeoff between risk and return. In particular, a portfolio A is dominated by another portfolio A' if A' has a greater expected gain and a lesser risk than A. If no portfolio dominates A, ...
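The dominance relation stated above translates directly into a comparison on two numbers per portfolio. In the sketch below, the `Portfolio` type, the function names and the three sample portfolios with their return and risk figures are all made up for illustration.

```python
from typing import List, NamedTuple


class Portfolio(NamedTuple):
    name: str
    expected_return: float
    risk: float


def dominates(a: Portfolio, b: Portfolio) -> bool:
    """True if `a` has a greater expected return and a lesser risk than `b`."""
    return a.expected_return > b.expected_return and a.risk < b.risk


def non_dominated(portfolios: List[Portfolio]) -> List[Portfolio]:
    """Keep the portfolios that no other portfolio in the list dominates."""
    return [
        p for p in portfolios
        if not any(dominates(q, p) for q in portfolios)
    ]


# Hypothetical figures, chosen only to exercise the definition.
candidates = [
    Portfolio("A", expected_return=0.05, risk=0.10),
    Portfolio("B", expected_return=0.07, risk=0.08),  # dominates A
    Portfolio("C", expected_return=0.09, risk=0.20),  # higher return, higher risk
]
survivors = [p.name for p in non_dominated(candidates)]
```

Here B and C both survive: neither dominates the other, so choosing between them is exactly the risk/return tradeoff the summary describes.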

Trichomes

Trichomes are fine outgrowths or appendages on plants, algae, lichens, and certain protists. They are of diverse structure and function. Examples are hairs, glandular hairs, scales, and papillae. A covering of any kind of hair on a plant is an indumentum, and the surface bearing them is said to be pubescent. Algal trichomes: Certain, usually filamentous, algae have the terminal cell produced into an elongate hair-like structure called a trichome. The same term is applied to such structures in some cyanobacteria, such as Spirulina and Oscillatoria. The trichomes of cyanobacteria may be unsheathed, as in Oscillatoria, or sheathed, as in Calothrix. These structures play an important role in preventing soil erosion, particularly in cold desert climates. The filamentous sheaths form a persistent sticky network that helps maintain soil structure. Plant trichomes: Plant trichomes have many diff ...