In probability theory and statistics, a probability distribution is a function that gives the probabilities of occurrence of possible events for an experiment. It is a mathematical description of a random phenomenon in terms of its sample space and the probabilities of events (subsets of the sample space).

For instance, if $X$ is used to denote the outcome of a coin toss ("the experiment"), then the probability distribution of $X$ would take the value 0.5 (1 in 2 or 1/2) for $X = \text{heads}$, and 0.5 for $X = \text{tails}$ (assuming that the coin is fair). More commonly, probability distributions are used to compare the relative occurrence of many different random values.

Probability distributions can be defined in different ways and for discrete or for continuous variables. Distributions with special properties or for especially important applications are given specific names.

Introduction

A probability distribution is a mathematical description of the probabilities of events, subsets of the sample space. The sample space, often represented in notation by $\Omega$, is the set of all possible outcomes of a random phenomenon being observed. The sample space may be any set: a set of real numbers, a set of descriptive labels, a set of vectors, a set of arbitrary non-numerical values, etc. For example, the sample space of a coin flip could be $\Omega = \{\text{heads}, \text{tails}\}$.

To define probability distributions for the specific case of random variables (so the sample space can be seen as a numeric set), it is common to distinguish between discrete and continuous random variables. In the discrete case, it is sufficient to specify a probability mass function $p$ assigning a probability to each possible outcome (e.g. when throwing a fair die, each of the six digits $1$ to $6$, corresponding to the number of dots on the die, has probability $\tfrac{1}{6}$). The probability of an event is then defined to be the sum of the probabilities of all outcomes that satisfy the event; for example, the probability of the event "the die rolls an even value" is $p(2) + p(4) + p(6) = \tfrac{1}{6} + \tfrac{1}{6} + \tfrac{1}{6} = \tfrac{1}{2}$.
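The fair-die example can be sketched in a few lines of Python. The names `pmf` and `event_probability` are illustrative choices, not notation from the text, and exact fractions are used so that the sums involve no rounding.

```python
from fractions import Fraction

# Probability mass function of a fair six-sided die: each face has probability 1/6.
pmf = {face: Fraction(1, 6) for face in range(1, 7)}


def event_probability(pmf, event):
    """Sum the probabilities of all outcomes that satisfy the event (a predicate)."""
    return sum((p for outcome, p in pmf.items() if event(outcome)), Fraction(0))


p_even = event_probability(pmf, lambda face: face % 2 == 0)
print(p_even)  # 1/2
```

Representing the event as a predicate keeps the sketch close to the definition: the probability of an event is the sum over the outcomes that satisfy it.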

In contrast, when a random variable takes values from a continuum then by convention, any individual outcome is assigned probability zero. For such continuous random variables, only events that include infinitely many outcomes such as intervals have probability greater than 0.

For example, consider measuring the weight of a piece of ham in the supermarket, and assume the scale can provide arbitrarily many digits of precision. Then, the probability that it weighs ''exactly'' 500 g must be zero because no matter how high the level of precision chosen, it cannot be assumed that there are no non-zero decimal digits in the remaining omitted digits ignored by the precision level.

However, for the same use case, it is possible to meet quality control requirements such as that a package of "500 g" of ham must weigh between 490 g and 510 g with at least 98% probability. This is possible because this measurement does not require as much precision from the underlying equipment.
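As a rough illustration of the ham example, suppose the package weight followed a normal distribution with mean 500 g and standard deviation 4 g. Both the choice of a normal model and these parameter values are assumptions made only for this sketch; the text does not specify a distribution.

```python
from statistics import NormalDist

# Hypothetical model: package weight in grams, normal with mean 500 and standard deviation 4.
weight = NormalDist(mu=500.0, sigma=4.0)

# The probability of an interval is a difference of cumulative distribution values.
p_within_tolerance = weight.cdf(510.0) - weight.cdf(490.0)

# A single point is an interval of zero width, so its probability is zero.
p_exactly_500 = weight.cdf(500.0) - weight.cdf(500.0)

print(f"P(490 <= W <= 510) = {p_within_tolerance:.4f}")
print(f"P(W = 500) = {p_exactly_500}")
```

Under these assumed parameters the interval probability exceeds the 98% requirement, while the probability of any exact weight is zero.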

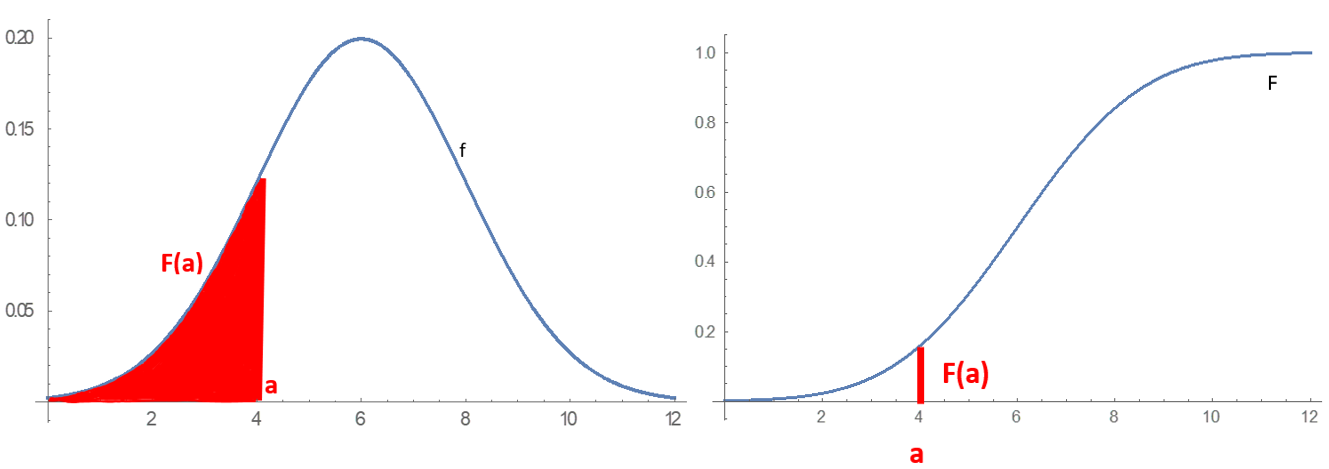

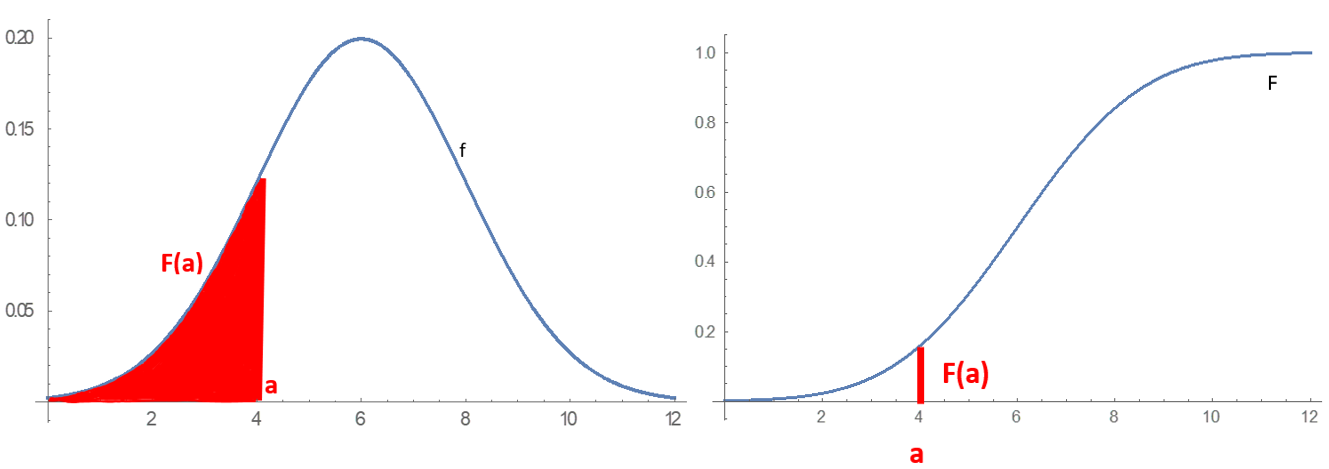

Continuous probability distributions can be described by means of the cumulative distribution function, which describes the probability that the random variable is no larger than a given value (i.e., $P(X \le x)$ for some $x$). The cumulative distribution function is the area under the probability density function from $-\infty$ to $x$, as shown in figure 1.

Most continuous probability distributions encountered in practice are not only continuous but also absolutely continuous. Such distributions can be described by their probability density function. Informally, the probability density $f$ of a random variable $X$ describes the infinitesimal probability that $X$ takes any value $x$; that is, $P(x \le X < x + \Delta x) \approx f(x)\,\Delta x$ as $\Delta x > 0$ becomes arbitrarily small. The probability that $X$ lies in a given interval can be computed rigorously by integrating the probability density function over that interval.
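The relationship between density, interval probability and cumulative distribution function can be checked numerically. The sketch below assumes a standard normal distribution as the example and uses a simple trapezoidal rule; the helper `integrate` is a hypothetical name introduced here.

```python
from statistics import NormalDist


def integrate(f, a, b, steps=10_000):
    """Approximate the integral of f over [a, b] with the composite trapezoidal rule."""
    h = (b - a) / steps
    total = 0.5 * (f(a) + f(b))
    for i in range(1, steps):
        total += f(a + i * h)
    return total * h


X = NormalDist(mu=0.0, sigma=1.0)  # assumed example distribution

# Interval probability: integrating the density agrees with a difference of CDF values.
a, b = -1.0, 2.0
p_by_integration = integrate(X.pdf, a, b)
p_by_cdf = X.cdf(b) - X.cdf(a)

# Small-interval approximation: P(x <= X < x + dx) is close to f(x) * dx.
x, dx = 0.5, 1e-4
p_small = X.cdf(x + dx) - X.cdf(x)
p_density = X.pdf(x) * dx
```

The two interval probabilities agree up to the discretization error of the trapezoidal rule, and the small-interval probability approaches the density times the interval width as the width shrinks.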

General probability definition

Let $(\Omega, \mathcal{F}, P)$ be a probability space, $(E, \mathcal{E})$ be a measurable space, and $X : \Omega \to E$ be a $(E, \mathcal{E})$-valued random variable. Then the probability distribution of $X$ is the pushforward measure of the probability measure $P$ onto $(E, \mathcal{E})$ induced by $X$. Explicitly, this pushforward measure on $(E, \mathcal{E})$ is given by

$$X_{*}(P)(B) = P\left(X^{-1}(B)\right)$$

for $B \in \mathcal{E}$.

Any probability distribution is a probability measure on $(E, \mathcal{E})$ (in general different from $P$, unless $X$ happens to be the identity map).
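On a finite sample space the pushforward construction reduces to summing the probabilities of a preimage. The following sketch assumes two fair dice as the underlying probability space and takes the sum of the faces as the random variable; the function name `pushforward` is illustrative.

```python
from fractions import Fraction
from itertools import product

# Sample space Omega: ordered pairs of faces from two fair dice, each with probability 1/36.
omega = list(product(range(1, 7), repeat=2))
P = {w: Fraction(1, 36) for w in omega}


def X(w):
    """Random variable: the sum of the two faces."""
    return w[0] + w[1]


def pushforward(P, X, B):
    """Return P(X^{-1}(B)): the total probability of the outcomes that X maps into B."""
    return sum((p for w, p in P.items() if X(w) in B), Fraction(0))


p_seven = pushforward(P, X, {7})
p_at_most_four = pushforward(P, X, {2, 3, 4})
```

The distribution of the sum lives on the set of values 2 to 12, which differs from the original space of 36 ordered pairs; this is the sense in which the distribution is in general a different measure from the one on the sample space.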

A probability distribution can be described in various forms, such as by a probability mass function or a cumulative distribution function. One of the most general descriptions, which applies for absolutely continuous and discrete variables, is by means of a probability function $P$ whose input space is a σ-algebra, and which gives a real number probability as its output, particularly, a number in $[0, 1] \subseteq \mathbb{R}$.

The probability function $P$ can take as argument subsets of the sample space itself, as in the coin toss example, where the function $P$ was defined so that $P(\text{heads}) = 0.5$ and $P(\text{tails}) = 0.5$. However, because of the widespread use of random variables, which transform the sample space into a set of numbers (e.g., $\mathbb{R}$, $\mathbb{N}$), it is more common to study probability distributions whose arguments are subsets of these particular kinds of sets (number sets), and all probability distributions discussed in this article are of this type. It is common to denote as $P(X \in E)$ the probability that a certain value of the variable $X$ belongs to a certain event $E$.

The above probability function only characterizes a probability distribution if it satisfies all the Kolmogorov axioms, that is:

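# $P(X \in E) \ge 0 \;\; \forall E \in \mathcal{A}$, so the probability is non-negative

# $P(X \in E) \le 1 \;\; \forall E \in \mathcal{A}$, so no probability exceeds $1$

# $P\left(X \in \bigcup_i E_i\right) = \sum_i P(X \in E_i)$ for any countable disjoint family of sets $\{E_i\}$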
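For a finite set of outcomes, the conditions above can be checked by brute force over all events. The sketch below is only a finite illustration: it tests additivity for pairs of disjoint events rather than countable families, and it also checks that the whole outcome set has probability 1, which is the usual normalization condition. All function names are hypothetical.

```python
from fractions import Fraction
from itertools import chain, combinations


def make_measure(pmf):
    """Build P(E) = sum of pmf over the outcomes in E, for events E given as sets."""
    return lambda event: sum((pmf[w] for w in event), Fraction(0))


def all_events(outcomes):
    """Enumerate every subset of a finite outcome set (the power set)."""
    items = list(outcomes)
    subsets = chain.from_iterable(combinations(items, r) for r in range(len(items) + 1))
    return [frozenset(s) for s in subsets]


def satisfies_axioms(P, outcomes):
    """Check bounds, normalization and pairwise additivity on a finite outcome set."""
    events = all_events(outcomes)
    in_unit_interval = all(0 <= P(e) <= 1 for e in events)
    total_is_one = P(frozenset(outcomes)) == 1
    additive = all(
        P(a | b) == P(a) + P(b)
        for a in events for b in events if not (a & b)
    )
    return in_unit_interval and total_is_one and additive


coin = {"heads": Fraction(1, 2), "tails": Fraction(1, 2)}
print(satisfies_axioms(make_measure(coin), coin.keys()))  # True
```

A set of weights that does not sum to 1 fails the check, since the event containing every outcome then has probability different from 1.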

The concept of probability function is made more rigorous by defining it as the element of a probability space $(X, \mathcal{A}, P)$, where $X$ is the set of possible outcomes, $\mathcal{A}$ is the set of all subsets $E \subset X$ whose probability can be measured, and $P$ is the probability function, or probability measure, that assigns a probability to each of these measurable subsets $E \in \mathcal{A}$.

Probability distributions usually belong to one of two classes. A discrete probability distribution is applicable to the scenarios where the set of possible outcomes is discrete (e.g. a coin toss, a roll of a die) and the probabilities are encoded by a discrete list of the probabilities of the outcomes; in this case the discrete probability distribution is known as a probability mass function. On the other hand, absolutely continuous probability distributions are applicable to scenarios where the set of possible outcomes can take on values in a continuous range (e.g. real numbers), such as the temperature on a given day. In the absolutely continuous case, probabilities are described by a probability density function, and the probability distribution is by definition the integral of the probability density function. The normal distribution is a commonly encountered absolutely continuous probability distribution. More complex experiments, such as those involving stochastic processes defined in continuous time, may demand the use of more general probability measures.

A probability distribution whose sample space is one-dimensional (for example real numbers, list of labels, ordered labels or binary) is called univariate, while a distribution whose sample space is a vector space of dimension 2 or more is called multivariate. A univariate distribution gives the probabilities of a single random variable taking on various different values; a multivariate distribution (a joint probability distribution) gives the probabilities of a random vector (a list of two or more random variables) taking on various combinations of values. Important and commonly encountered univariate probability distributions include the binomial distribution, the hypergeometric distribution, and the normal distribution. A commonly encountered multivariate distribution is the multivariate normal distribution.

Besides the probability function, the cumulative distribution function, the probability mass function and the probability density function, the moment generating function and the characteristic function also serve to identify a probability distribution, as they uniquely determine an underlying cumulative distribution function.

Terminology

Some key concepts and terms, widely used in the literature on the topic of probability distributions, are listed below.

Basic terms

*''Random variable'': takes values from a sample space; probabilities describe which values and sets of values are more likely to be taken.

*''Event'': set of possible values (outcomes) of a random variable that occurs with a certain probability.

*''Probability function'' or ''probability measure'': describes the probability $P(X \in E)$ that the event $E$ occurs.

*''Cumulative distribution function'': function evaluating the probability that $X$ will take a value less than or equal to $x$ for a random variable (only for real-valued random variables).

*''Quantile function'': the inverse of the cumulative distribution function. Gives $x$ such that, with probability $q$, $X$ will not exceed $x$.
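The inverse relationship between the cumulative distribution function and the quantile function can be seen with Python's standard library, where `NormalDist.inv_cdf` plays the role of the quantile function. The standard normal distribution and the level 0.975 are arbitrary choices for this sketch.

```python
from statistics import NormalDist

X = NormalDist(mu=0.0, sigma=1.0)  # assumed example distribution

q = 0.975
x = X.inv_cdf(q)       # quantile function: the x with P(X <= x) = q
round_trip = X.cdf(x)  # applying the CDF recovers q, up to floating-point error
```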

Discrete probability distributions

*Discrete probability distribution: for many random variables with finitely or countably infinitely many values.

*''Probability mass function'' (''pmf''): function that gives the probability that a discrete random variable is equal to some value.

*''Frequency distribution'': a table that displays the frequency of various outcomes.

*''Relative frequency distribution'': a frequency distribution where each value has been divided (normalized) by the number of outcomes in a sample (i.e. sample size).

*''Categorical distribution'': for discrete random variables with a finite set of values.
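The difference between a frequency distribution and a relative frequency distribution is easy to see on a small sample. The sample values below are made up for illustration.

```python
from collections import Counter
from fractions import Fraction

# Hypothetical sample of observed die rolls.
sample = [3, 1, 6, 3, 2, 3, 6, 1, 5, 3]

frequency = Counter(sample)  # absolute frequency of each outcome
n = len(sample)              # sample size
relative_frequency = {value: Fraction(count, n) for value, count in frequency.items()}
```

Dividing by the sample size makes the relative frequencies sum to 1, so they can be read as an empirical probability mass function.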

Absolutely continuous probability distributions

*Absolutely continuous probability distribution: for many random variables with uncountably many values.

*''Probability density function'' (''pdf'') or ''probability density'': function whose value at any given sample (or point) in the sample space (the set of possible values taken by the random variable) can be interpreted as providing a ''relative likelihood'' that the value of the random variable would equal that sample.

Related terms

*''Support'': set of values that can be assumed with non-zero probability (or probability density in the case of a continuous distribution) by the random variable. For a random variable $X$, it is sometimes denoted as $R_X$.

*Tail: the regions close to the bounds of the random variable, if the pmf or pdf are relatively low therein. Usually has the form $X > a$, $X < b$ or a union thereof. More information and examples can be found in the articles Heavy-tailed distribution, Long-tailed distribution, and Fat-tailed distribution.

*Head: the region where the pmf or pdf is relatively high. Usually has the form $a < X < b$.

*''Expected value'' or ''mean'': the weighted average of the possible values, using their probabilities as their weights; or the continuous analog thereof.

*''Median'': the value such that the set of values less than the median, and the set greater than the median, each have probabilities no greater than one-half.

*''Mode'': for a discrete random variable, the value with highest probability; for an absolutely continuous random variable, a location at which the probability density function has a local peak.

*''Quantile'': the q-quantile is the value $x$ such that $P(X < x) = q$.

*''Variance'': the second moment of the pmf or pdf about the mean; an important measure of the dispersion of the distribution.

*''Standard deviation'': the square root of the variance, and hence another measure of dispersion.

*''Symmetry'': a property of some distributions in which the portion of the distribution to the left of a specific value (usually the median) is a mirror image of the portion to its right.

*''Skewness'': a measure of the extent to which a pmf or pdf "leans" to one side of its mean. The third standardized moment of the distribution.

*''Kurtosis'': a measure of the "fatness" of the tails of a pmf or pdf. The fourth standardized moment of the distribution.
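Several of the summary quantities listed above can be computed directly from a probability mass function. The sketch below uses a fair die as an assumed example and a hypothetical helper `central_moment`; skewness and kurtosis are computed as the third and fourth standardized moments.

```python
from fractions import Fraction
from math import sqrt

pmf = {face: Fraction(1, 6) for face in range(1, 7)}  # fair die, used as an example


def central_moment(pmf, mean, k):
    """k-th moment about the mean for a finite pmf."""
    return sum(p * (x - mean) ** k for x, p in pmf.items())


mean = sum(p * x for x, p in pmf.items())      # probability-weighted average
variance = central_moment(pmf, mean, 2)        # second moment about the mean
std_dev = sqrt(variance)                       # square root of the variance
skewness = float(central_moment(pmf, mean, 3)) / std_dev ** 3
kurtosis = float(central_moment(pmf, mean, 4) / variance ** 2)
```

For this symmetric distribution the skewness is zero, and the kurtosis is below 3, the value for a normal distribution, reflecting the absence of tails beyond the six faces.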

Cumulative distribution function

In the special case of a real-valued random variable, the probability distribution can equivalently be represented by a cumulative distribution function instead of a probability measure. The cumulative distribution function of a random variable $X$ with regard to a probability distribution $p$ is defined as

$$F(x) = P(X \le x).$$

The cumulative distribution function of any real-valued random variable has the properties:

* $F(x)$ is non-decreasing;

* $F(x)$ is right-continuous;

* $0 \le F(x) \le 1$;

* $\lim_{x \to -\infty} F(x) = 0$ and $\lim_{x \to \infty} F(x) = 1$; and

* $\Pr(a < X \le b) = F(b) - F(a)$.

Conversely, any function $F : \mathbb{R} \to \mathbb{R}$ that satisfies the first four of the properties above is the cumulative distribution function of some probability distribution on the real numbers.

Any probability distribution can be decomposed as the mixture of a discrete, an absolutely continuous and a singular continuous distribution, and thus any cumulative distribution function admits a decomposition as the convex sum of the three corresponding cumulative distribution functions.
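A small numerical sketch can make the idea of a convex sum of cumulative distribution functions concrete. It assumes a mixture of a point mass at 0 (discrete part, weight 0.3) and an exponential distribution (absolutely continuous part, weight 0.7), with no singular continuous part; these components and weights are chosen only for illustration.

```python
from math import exp


def cdf_point_mass(x, at=0.0):
    """CDF of a one-point (discrete) distribution concentrated at `at`."""
    return 1.0 if x >= at else 0.0


def cdf_exponential(x, rate=1.0):
    """CDF of an exponential distribution (absolutely continuous)."""
    return 1.0 - exp(-rate * x) if x >= 0 else 0.0


def cdf_mixture(x, weight_discrete=0.3):
    """Convex combination of the two CDFs above; the weights sum to 1."""
    return weight_discrete * cdf_point_mass(x) + (1 - weight_discrete) * cdf_exponential(x)


# Check the listed CDF properties on a grid of points from -5 to 20.
grid = [i / 10 for i in range(-50, 201)]
values = [cdf_mixture(x) for x in grid]
is_non_decreasing = all(a <= b for a, b in zip(values, values[1:]))
stays_in_unit_interval = all(0.0 <= v <= 1.0 for v in values)
jump_at_zero = cdf_mixture(0.0) - cdf_mixture(-1e-12)  # size of the discrete jump
```

The mixture has a jump equal to the discrete weight at 0 and increases smoothly afterwards, while remaining non-decreasing and bounded between 0 and 1.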

Discrete probability distribution

A discrete probability distribution is the probability distribution of a random variable that can take on only a countable number of values (almost surely), which means that the probability of any event $E$ can be expressed as a (finite or countably infinite) sum:

$$P(X \in E) = \sum_{\omega \in A \cap E} P(X = \omega),$$

where $A$ is a countable set with $P(X \in A) = 1$. Thus the discrete random variables (i.e. random variables whose probability distribution is discrete) are exactly those with a probability mass function $p(x) = P(X = x)$. In the case where the range of values is countably infinite, these values have to decline to zero fast enough for the probabilities to add up to 1. For example, if $p(n) = \tfrac{1}{2^n}$ for $n = 1, 2, \ldots$, the sum of probabilities would be $\tfrac{1}{2} + \tfrac{1}{4} + \tfrac{1}{8} + \dots = 1$.
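The convergence of the probabilities $1/2^n$ to a total of 1 can be observed through partial sums. The function names below are illustrative, and exact fractions are used so that the remaining gap is visible without rounding.

```python
from fractions import Fraction


def p(n):
    """Probability mass function p(n) = 1 / 2**n for n = 1, 2, 3, ..."""
    return Fraction(1, 2 ** n)


def partial_sum(N):
    """Sum of p(1) + ... + p(N); equals 1 - 1/2**N."""
    return sum((p(n) for n in range(1, N + 1)), Fraction(0))


for N in (1, 2, 10, 50):
    print(N, float(partial_sum(N)))
```

Each partial sum falls short of 1 by exactly $1/2^N$, so the gap shrinks to zero as more terms are included.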

Well-known discrete probability distributions used in statistical modeling include the Poisson distribution, the Bernoulli distribution, the binomial distribution, the geometric distribution, the negative binomial distribution and the categorical distribution. When a sample (a set of observations) is drawn from a larger population, the sample points have an empirical distribution that is discrete, and which provides information about the population distribution. Additionally, the discrete uniform distribution is commonly used in computer programs that make equal-probability random selections between a number of choices.

Cumulative distribution function

A real-valued discrete random variable can equivalently be defined as a random variable whose cumulative distribution function increases only by jump discontinuities; that is, its cdf increases only where it "jumps" to a higher value, and is constant in intervals without jumps. The points where jumps occur are precisely the values which the random variable may take. Thus the cumulative distribution function has the form

$$F(x) = P(X \le x) = \sum_{\omega \le x} p(\omega).$$

The points where the cdf jumps always form a countable set; this may be any countable set and thus may even be dense in the real numbers.

Dirac delta representation

A discrete probability distribution is often represented with Dirac measures, also called one-point distributions (see below), the probability distributions of deterministic random variables. For any outcome $\omega$, let $\delta_\omega$ be the Dirac measure concentrated at $\omega$. Given a discrete probability distribution, there is a countable set $A$ with $P(X \in A) = 1$ and a probability mass function $p$. If $E$ is any event, then

$$P(X \in E) = \sum_{\omega \in A} p(\omega)\,\delta_\omega(E),$$

or in short,

$$P_X = \sum_{\omega \in A} p(\omega)\,\delta_\omega.$$

Similarly, discrete distributions can be represented with the Dirac delta function as a generalized probability density function $f$, where

$$f(x) = \sum_{\omega \in A} p(\omega)\,\delta(x - \omega),$$

which means

$$P(X \in E) = \int_E f(x)\,dx = \sum_{\omega \in A} p(\omega) \int_E \delta(x - \omega)\,dx = \sum_{\omega \in A \cap E} p(\omega)$$

for any event $E$.
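The representation of a discrete distribution as a weighted sum of Dirac measures can be sketched for a finite set of outcomes. The probability mass function below is an arbitrary example, and `dirac` is a hypothetical helper name.

```python
from fractions import Fraction


def dirac(omega):
    """Dirac measure at omega: assigns 1 to sets containing omega and 0 otherwise."""
    return lambda event: 1 if omega in event else 0


pmf = {0: Fraction(1, 4), 1: Fraction(1, 2), 2: Fraction(1, 4)}  # assumed example


def P(event):
    """P(X in E) as the weighted sum of Dirac measures: sum of p(w) * delta_w(E)."""
    return sum(p * dirac(w)(event) for w, p in pmf.items())


print(P({0, 1}))  # 3/4
```

Only the outcomes that lie in the event contribute, so the weighted sum of Dirac measures reduces to the sum of the probability mass function over the event.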

Indicator-function representation

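For a discrete random variable $X$, let $u_0, u_1, \dots$ be the values it can take with non-zero probability. Denote

$$\Omega_i = X^{-1}(u_i) = \{\omega : X(\omega) = u_i\}, \quad i = 0, 1, 2, \dots$$

These are disjoint sets, and for such sets

$$P\left(\bigcup_i \Omega_i\right) = \sum_i P(\Omega_i) = \sum_i P(X = u_i) = 1.$$

It follows that the probability that $X$ takes any value except for $u_0, u_1, \dots$ is zero, and thus one can write $X$ as

$$X(\omega) = \sum_i u_i 1_{\Omega_i}(\omega)$$

except on a set of probability zero, where $1_A$ is the indicator function of $A$. This may serve as an alternative definition of discrete random variables.

One-point distribution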

A special case is the discrete distribution of a random variable that can take on only one fixed value, in other words, a Dirac measure. Expressed formally, the random variable $X$ has a one-point distribution if it has a possible outcome $x$ such that $P(X = x) = 1$. All other possible outcomes then have probability 0. Its cumulative distribution function jumps immediately from 0 before $x$ to 1 at $x$. It is closely related to a deterministic distribution, which cannot take on any other value, while a one-point distribution can take other values, though only with probability 0. For most practical purposes the two notions are equivalent.

Absolutely continuous probability distribution

An absolutely continuous probability distribution is a probability distribution on the real numbers with uncountably many possible values, such as a whole interval in the real line, and where the probability of any event can be expressed as an integral. More precisely, a real random variable $X$ has an absolutely continuous probability distribution if there is a function