Self-organizing map

A self-organizing map (SOM) or self-organizing feature map (SOFM) is an

unsupervised

''Unsupervised'' is an American adult animated sitcom created by David Hornsby, Rob Rosell, and Scott Marder which ran on FX from January 19 to December 20, 2012. The show was created, and for the most part, written by David Hornsby, Scott Marder ...

machine learning

Machine learning (ML) is a field of inquiry devoted to understanding and building methods that 'learn', that is, methods that leverage data to improve performance on some set of tasks. It is seen as a part of artificial intelligence.

Machine ...

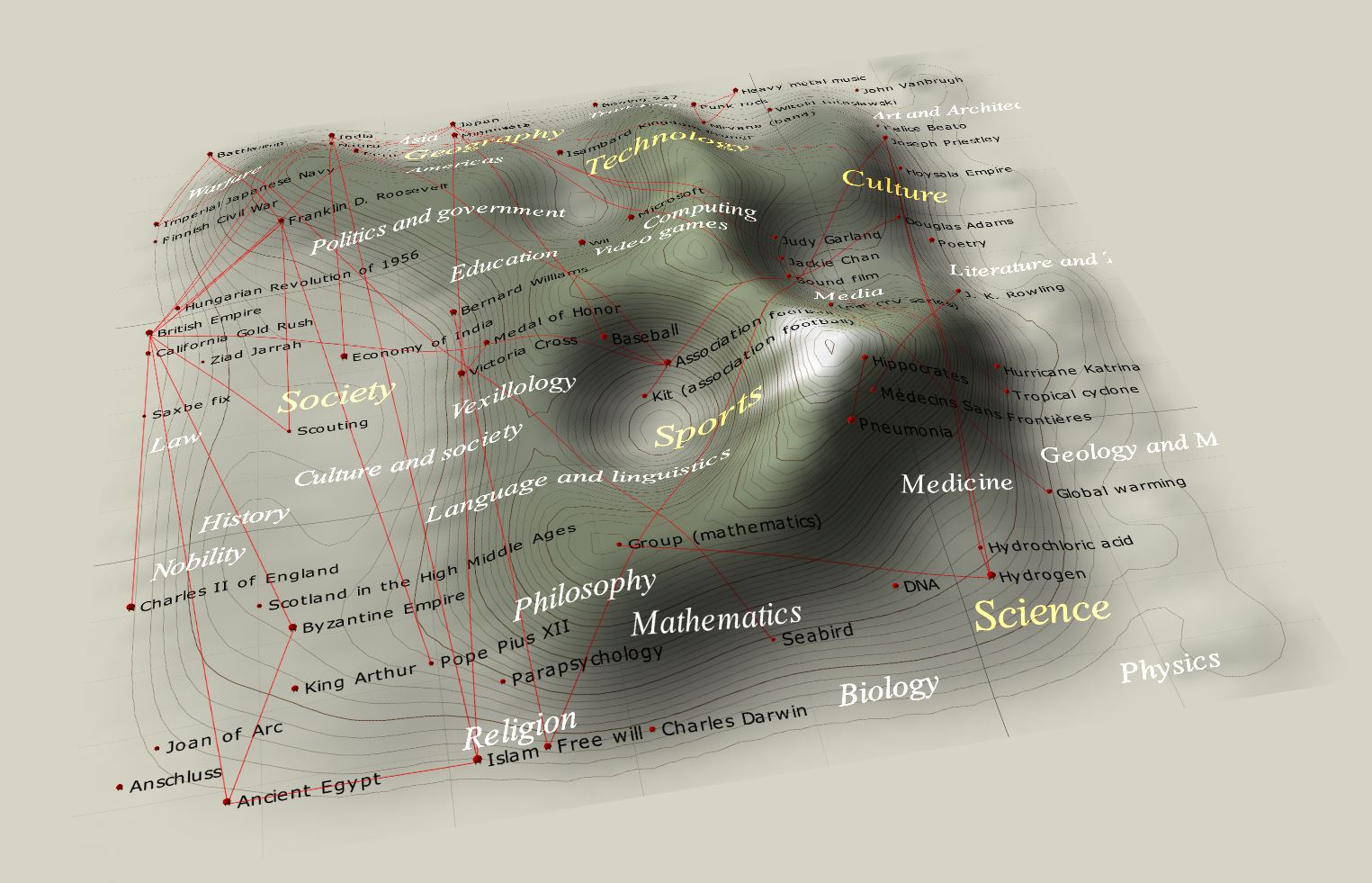

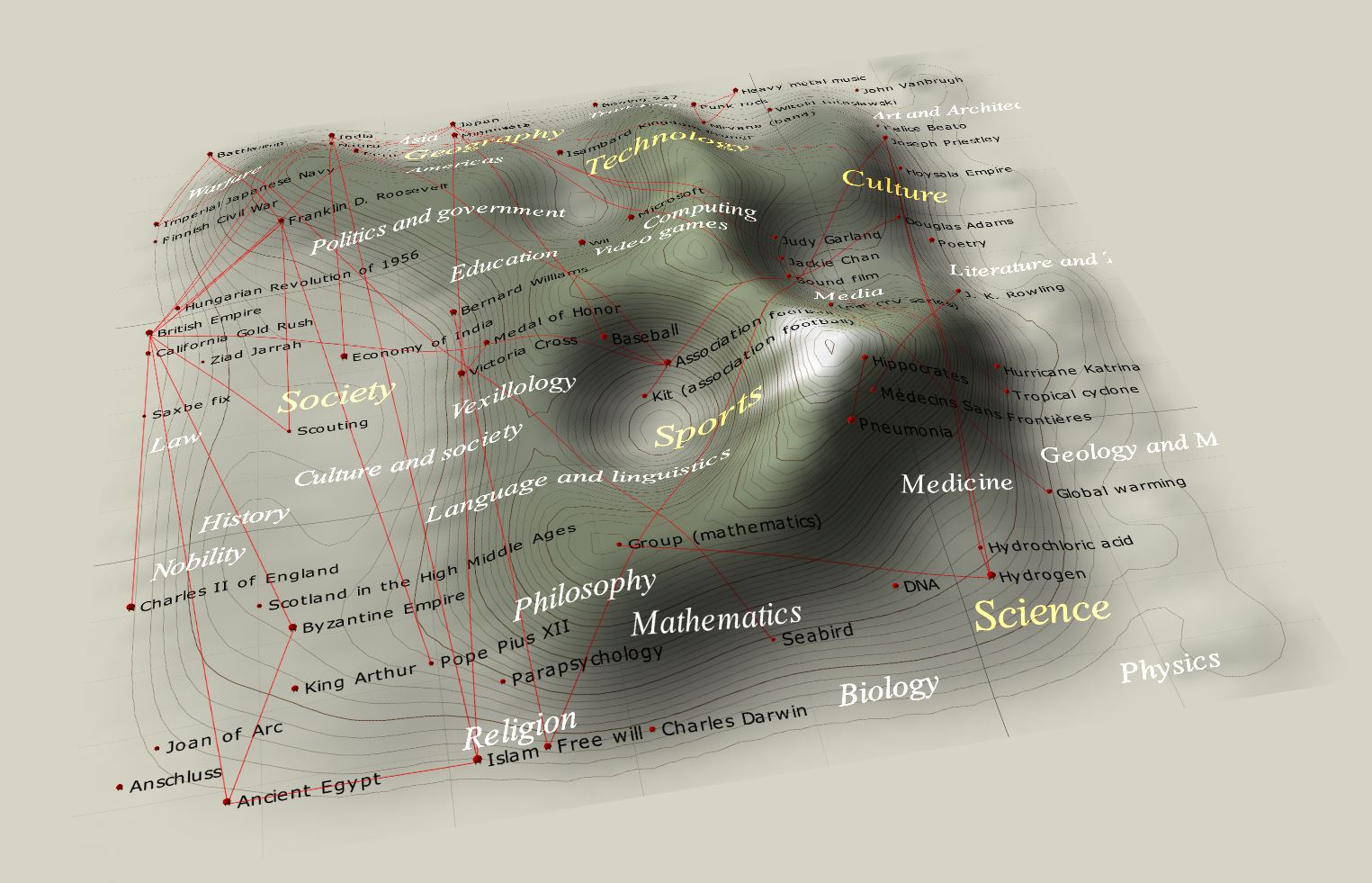

technique used to produce a low-dimensional (typically two-dimensional) representation of a higher dimensional data set while preserving the topological structure

In mathematics, a topological space is, roughly speaking, a geometrical space in which closeness is defined but cannot necessarily be measured by a numeric distance. More specifically, a topological space is a set whose elements are called point ...

of the data. For example, a data set with variables measured in observations could be represented as clusters of observations with similar values for the variables. These clusters then could be visualized as a two-dimensional "map" such that observations in proximal clusters have more similar values than observations in distal clusters. This can make high-dimensional data easier to visualize and analyze.

An SOM is a type of artificial neural network but is trained using competitive learning rather than the error-correction learning (e.g., backpropagation with gradient descent) used by other artificial neural networks. The SOM was introduced by the Finnish professor Teuvo Kohonen in the 1980s and is therefore sometimes called a Kohonen map or Kohonen network. The Kohonen map or network is a computationally convenient abstraction building on biological models of neural systems from the 1970s and morphogenesis models dating back to Alan Turing in the 1950s.

Overview

Self-organizing maps, like most artificial neural networks, operate in two modes: training and mapping. First, training uses an input data set (the "input space") to generate a lower-dimensional representation of the input data (the "map space"). Second, mapping classifies additional input data using the generated map. In most cases, the goal of training is to represent an input space with ''p'' dimensions as a map space with two dimensions; an input space with ''p'' variables is said to have ''p'' dimensions. A map space consists of components called "nodes" or "neurons", which are arranged as a hexagonal or rectangular grid with two dimensions. The number of nodes and their arrangement are specified beforehand based on the larger goals of the analysis and exploration of the data.

Each node in the map space is associated with a "weight" vector, which is the position of the node in the input space. While nodes in the map space stay fixed, training consists of moving weight vectors toward the input data (reducing a distance metric such as Euclidean distance) without spoiling the topology induced from the map space. After training, the map can be used to classify additional observations for the input space by finding the node with the closest weight vector (smallest distance metric) to the input space vector.
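The split between the two spaces can be made concrete with a small sketch. It assumes NumPy, a rectangular grid (the hexagonal layout is not shown) and Euclidean distance; the helper names `make_grid` and `best_matching_unit` are hypothetical. Each node has a fixed map-space coordinate and a weight vector that lives in the input space, and mapping a new observation means finding the node with the nearest weight vector.

```python
import numpy as np


def make_grid(rows: int, cols: int) -> np.ndarray:
    """Map-space coordinates of a rectangular rows x cols grid, shape (rows*cols, 2)."""
    r, c = np.meshgrid(np.arange(rows), np.arange(cols), indexing="ij")
    return np.column_stack([r.ravel(), c.ravel()]).astype(float)


def best_matching_unit(weights: np.ndarray, x: np.ndarray) -> int:
    """Index of the node whose weight vector is closest to x in Euclidean distance."""
    distances = np.linalg.norm(weights - x, axis=1)
    return int(np.argmin(distances))


# A 3 x 4 map over a p = 5 dimensional input space.
rng = np.random.default_rng(0)
grid = make_grid(3, 4)            # map space: node positions never change
weights = rng.random((12, 5))     # input space: one weight vector per node
x = weights[7].copy()             # a new observation identical to node 7's weight
bmu = best_matching_unit(weights, x)
print(bmu, grid[bmu])             # 7 [1. 3.]
```

Nodes are numbered row by row, so node 7 of a 3 x 4 grid sits at row 1, column 3.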

Learning algorithm

The goal of learning in the self-organizing map is to cause different parts of the network to respond similarly to certain input patterns. This is partly motivated by how visual, auditory or other sensory information is handled in separate parts of the cerebral cortex in the human brain.

The weights of the neurons are initialized either to small random values or sampled evenly from the subspace spanned by the two largest principal component eigenvectors. With the latter alternative, learning is much faster because the initial weights already give a good approximation of SOM weights.
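The principal-component option can be sketched as follows, assuming NumPy and a rectangular grid; `pca_init` is a hypothetical name. The nodes are laid out evenly on the plane spanned by the two leading principal directions (obtained here from an SVD of the centred data). How far the lattice extends, ±2 standard deviations along each component, is an assumption of this sketch rather than part of the method.

```python
import numpy as np


def pca_init(data: np.ndarray, rows: int, cols: int, span: float = 2.0) -> np.ndarray:
    """Initial weights spread evenly over the plane of the first two principal components.

    Nodes are placed on a rows x cols lattice centred on the data mean, covering
    +/- `span` standard deviations along each component (the range is an assumption).
    Returns an array of shape (rows * cols, n_features).
    """
    mean = data.mean(axis=0)
    # Rows of vt are the principal directions, ordered by decreasing singular value.
    _, sing, vt = np.linalg.svd(data - mean, full_matrices=False)
    std = sing[:2] / np.sqrt(len(data) - 1)   # standard deviation along PC1 and PC2
    a = np.linspace(-span, span, rows) * std[0]
    b = np.linspace(-span, span, cols) * std[1]
    A, B = np.meshgrid(a, b, indexing="ij")   # one (a, b) coefficient pair per node
    return mean + A.reshape(-1, 1) * vt[0] + B.reshape(-1, 1) * vt[1]


rng = np.random.default_rng(1)
data = rng.normal(size=(200, 4)) * np.array([5.0, 2.0, 0.5, 0.1]) + 3.0
weights = pca_init(data, rows=6, cols=8)
print(weights.shape)   # (48, 4)
```

Because the lattice is deterministic given the data, this initialization gives reproducible results, unlike random initialization.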

The network must be fed a large number of example vectors that represent, as closely as possible, the kinds of vectors expected during mapping. The examples are usually administered several times as iterations.

The training utilizes competitive learning. When a training example is fed to the network, its Euclidean distance to all weight vectors is computed. The neuron whose weight vector is most similar to the input is called the best matching unit (BMU). The weights of the BMU and neurons close to it in the SOM grid are adjusted towards the input vector. The magnitude of the change decreases with time and with the grid-distance from the BMU. The update formula for a neuron ''v'' with weight vector W<sub>''v''</sub>(''s'') is

: $W_v(s+1) = W_v(s) + \theta(u, v, s) \cdot \alpha(s) \cdot \big(D(t) - W_v(s)\big)$,

where ''s'' is the step index, ''t'' is an index into the training sample, ''u'' is the index of the BMU for the input vector D(''t''), ''α''(''s'') is a monotonically decreasing learning coefficient, and ''θ''(''u'', ''v'', ''s'') is the neighborhood function, which weights the update according to the grid-distance between neuron ''u'' and neuron ''v'' in step ''s''. Depending on the implementation, ''t'' can scan the training data set systematically (''t'' is 0, 1, 2, ..., ''T''−1, then repeat, ''T'' being the training sample's size), be randomly drawn from the data set (bootstrap sampling), or be chosen by some other sampling method (such as jackknifing).
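A single application of the update formula can be sketched with NumPy, vectorised over all nodes at once. The function name `som_update` is hypothetical, and the neighborhood values ''θ''(''u'', ''v'', ''s'') are assumed to have been computed beforehand, one per node, with the BMU ''u'' getting the value 1.

```python
import numpy as np


def som_update(weights: np.ndarray, x: np.ndarray, theta: np.ndarray, alpha: float) -> np.ndarray:
    """Apply W_v(s+1) = W_v(s) + theta(u, v, s) * alpha(s) * (D(t) - W_v(s)) to every node v.

    weights: W(s), shape (n_nodes, p); x: the input vector D(t), shape (p,)
    theta:   neighbourhood value theta(u, v, s) for each node v, shape (n_nodes,)
    alpha:   learning coefficient alpha(s)
    """
    return weights + (alpha * theta)[:, np.newaxis] * (x - weights)


weights = np.array([[0.0, 0.0], [1.0, 1.0], [4.0, 4.0]])
x = np.array([2.0, 2.0])
theta = np.array([0.0, 1.0, 0.5])   # node 1 is the BMU; node 0 is outside the neighbourhood
result = som_update(weights, x, theta, alpha=0.5)
print(result)
# node 0 stays at (0, 0); node 1 moves halfway to (1.5, 1.5); node 2 moves a quarter of the way to (3.5, 3.5)
```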

The neighborhood function ''θ''(''u'', ''v'', ''s'') (also called the ''function of lateral interaction'') depends on the grid-distance between the BMU (neuron ''u'') and neuron ''v''. In the simplest form, it is 1 for all neurons close enough to the BMU and 0 for others, but Gaussian and Mexican-hat functions are common choices, too. Regardless of the functional form, the neighborhood function shrinks with time. At the beginning, when the neighborhood is broad, self-organization takes place on the global scale. When the neighborhood has shrunk to just a couple of neurons, the weights converge to local estimates. In some implementations, the learning coefficient ''α'' and the neighborhood function ''θ'' decrease steadily with increasing ''s''; in others (in particular those where ''t'' scans the training data set) they decrease in step-wise fashion, once every ''T'' steps.
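The three neighborhood shapes named above can be written as functions of the squared grid-distance to the BMU. This is a sketch assuming NumPy and a radius parameter `sigma`. The Mexican hat is taken here to be the Ricker wavelet, and the exponential shrinking schedule in `shrinking_sigma` is one possible choice among many. All function names are hypothetical.

```python
import numpy as np


def grid_sq_dist(grid: np.ndarray, u: int) -> np.ndarray:
    """Squared grid-distance from the BMU u to every node, using map-space coordinates."""
    return np.sum((grid - grid[u]) ** 2, axis=1)


def bubble(d2: np.ndarray, sigma: float) -> np.ndarray:
    """Simplest form: 1 for nodes within radius sigma of the BMU, 0 elsewhere."""
    return (d2 <= sigma ** 2).astype(float)


def gaussian(d2: np.ndarray, sigma: float) -> np.ndarray:
    return np.exp(-d2 / (2.0 * sigma ** 2))


def mexican_hat(d2: np.ndarray, sigma: float) -> np.ndarray:
    """Ricker wavelet: positive near the BMU, negative in a ring further out."""
    r2 = d2 / sigma ** 2
    return (1.0 - r2) * np.exp(-r2 / 2.0)


def shrinking_sigma(s: int, n_steps: int, sigma0: float, sigma_end: float = 0.5) -> float:
    """Exponential decay from sigma0 at s = 0 to sigma_end at s = n_steps."""
    return sigma0 * (sigma_end / sigma0) ** (s / n_steps)


grid = np.array([[0.0, float(i)] for i in range(5)])   # a 1 x 5 map
d2 = grid_sq_dist(grid, u=2)                            # values 4, 1, 0, 1, 4
print(bubble(d2, 1.0), gaussian(d2, 1.0).round(3), mexican_hat(d2, 1.0).round(3))
```

The negative lobe of the Mexican hat pushes distant nodes slightly away from the input, a crude model of lateral inhibition.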

This process is repeated for each input vector for a (usually large) number of cycles λ. The network winds up associating output nodes with groups or patterns in the input data set. If these patterns can be named, the names can be attached to the associated nodes in the trained net.

During mapping, there will be one single ''winning'' neuron: the neuron whose weight vector lies closest to the input vector. This can be determined simply by calculating the Euclidean distance between the input vector and each weight vector.

While representing input data as vectors has been emphasized in this article, any kind of object which can be represented digitally, which has an appropriate distance measure associated with it, and in which the necessary operations for training are possible can be used to construct a self-organizing map. This includes matrices, continuous functions or even other self-organizing maps.


Variables

These are the variables needed, with vectors in bold:
* ''s'' is the current iteration
* ''λ'' is the iteration limit
* ''t'' is the index of the target input data vector in the input data set '''D'''
* '''D'''(''t'') is a target input data vector
* ''v'' is the index of the node in the map
* '''W'''<sub>''v''</sub> is the current weight vector of node ''v''
* ''u'' is the index of the best matching unit (BMU) in the map
* ''θ''(''u'', ''v'', ''s'') is a restraint due to distance from the BMU, usually called the neighbourhood function
* ''α''(''s'') is a learning restraint due to iteration progress

Algorithm

# Randomize the node weight vectors in a map
# Randomly pick an input vector '''D'''(''t'')
# Traverse each node in the map
## Use the Euclidean distance formula to find the similarity between the input vector and the map's node's weight vector
## Track the node that produces the smallest distance (this node is the best matching unit, BMU)
# Update the weight vectors of the nodes in the neighborhood of the BMU (including the BMU itself) by pulling them closer to the input vector
## $W_v(s+1) = W_v(s) + \theta(u, v, s) \cdot \alpha(s) \cdot \big(D(t) - W_v(s)\big)$
# Increase ''s'' and repeat from step 2 while ''s'' < ''λ''
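The numbered steps of this algorithm can be sketched end to end in NumPy. Several choices in the sketch are assumptions rather than part of the algorithm: a rectangular grid, a Gaussian neighborhood, a linearly decaying ''α''(''s''), an exponentially shrinking radius, and initial weights drawn uniformly from the bounding box of the data. The name `train_som` is hypothetical.

```python
import numpy as np


def train_som(data, rows, cols, n_steps=2000, alpha0=0.5, sigma_end=0.5, seed=0):
    """Random-sampling SOM training; n_steps plays the role of lambda. Returns (grid, weights)."""
    rng = np.random.default_rng(seed)
    r, c = np.meshgrid(np.arange(rows), np.arange(cols), indexing="ij")
    grid = np.column_stack([r.ravel(), c.ravel()]).astype(float)
    # Step 1: random weights inside the bounding box of the data (assumed range).
    weights = rng.uniform(data.min(axis=0), data.max(axis=0), size=(rows * cols, data.shape[1]))
    sigma0 = max(rows, cols) / 2.0
    for s in range(n_steps):                               # step 5: repeat while s < lambda
        frac = s / n_steps
        alpha = alpha0 * (1.0 - frac)                      # monotonically decreasing alpha(s)
        sigma = sigma0 * (sigma_end / sigma0) ** frac      # shrinking neighbourhood radius
        x = data[rng.integers(len(data))]                  # step 2: random input D(t)
        u = int(np.argmin(np.linalg.norm(weights - x, axis=1)))   # step 3: BMU
        d2 = np.sum((grid - grid[u]) ** 2, axis=1)         # squared grid-distance to the BMU
        theta = np.exp(-d2 / (2.0 * sigma ** 2))           # Gaussian theta(u, v, s)
        weights += (alpha * theta)[:, None] * (x - weights)        # step 4: update
    return grid, weights


data = np.random.default_rng(42).random((1000, 2))   # points in the unit square
grid, weights = train_som(data, 5, 5)
```

After training, nodes that are neighbors on the grid should hold nearby weight vectors, so the map spreads over the unit square roughly as a regular lattice.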

Alternative algorithm

# Randomize the map's nodes' weight vectors
# Traverse each input vector in the input data set
## Traverse each node in the map
### Use the Euclidean distance formula to find the similarity between the input vector and the map's node's weight vector
### Track the node that produces the smallest distance (this node is the best matching unit, BMU)
## Update the nodes in the neighborhood of the BMU (including the BMU itself) by pulling them closer to the input vector
### $W_v(s+1) = W_v(s) + \theta(u, v, s) \cdot \alpha(s) \cdot \big(D(t) - W_v(s)\big)$
# Increase ''s'' and repeat from step 2 while ''s'' < ''λ''
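The alternative algorithm differs mainly in how ''t'' is chosen: each pass traverses the whole data set in order. Following the step-wise variant described earlier, ''α'' and the neighborhood radius are lowered only once per pass, that is, once every ''T'' steps. The sketch below assumes NumPy, a caller-supplied grid and initial weights, and a Gaussian neighborhood with linear decay schedules. The name `train_som_epochs` and its defaults are hypothetical.

```python
import numpy as np


def train_som_epochs(data, grid, weights, n_epochs=20, alpha0=0.5, sigma0=2.5, sigma_min=0.5):
    """Every epoch traverses the data set in order (t = 0, 1, ..., T-1); alpha and
    sigma are lowered once per epoch, i.e. every T steps. Returns new weights."""
    weights = weights.copy()                       # leave the caller's initial weights intact
    for epoch in range(n_epochs):
        frac = epoch / n_epochs
        alpha = alpha0 * (1.0 - frac)              # step-wise decreasing learning coefficient
        sigma = max(sigma0 * (1.0 - frac), sigma_min)
        for t in range(len(data)):                 # traverse each input vector
            x = data[t]
            u = int(np.argmin(np.linalg.norm(weights - x, axis=1)))   # BMU
            d2 = np.sum((grid - grid[u]) ** 2, axis=1)
            weights += (alpha * np.exp(-d2 / (2.0 * sigma ** 2)))[:, None] * (x - weights)
    return weights


rng = np.random.default_rng(7)
data = rng.random((400, 2))
r, c = np.meshgrid(np.arange(5), np.arange(5), indexing="ij")
grid = np.column_stack([r.ravel(), c.ravel()]).astype(float)
w0 = rng.random((25, 2))                           # step 1: random initial weights
w = train_som_epochs(data, grid, w0)
```

If the stored data happen to be sorted, a fixed scan order can bias training, so implementations often shuffle between passes; the sketch keeps the plain order described in the text.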

Initialization options

Selection of initial weights as good approximations of the final weights is a well-known problem for all iterative methods of artificial neural networks, including self-organizing maps. Kohonen originally proposed random initialization of weights. (This approach is reflected by the algorithms described above.) More recently, principal component initialization, in which initial map weights are chosen from the space of the first principal components, has become popular due to the exact reproducibility of the results. A careful comparison of random initialization to principal component initialization for a one-dimensional map, however, found that the advantages of principal component initialization are not universal. The best initialization method depends on the geometry of the specific dataset. Principal component initialization was preferable (for a one-dimensional map) when the principal curve approximating the dataset could be univalently and linearly projected on the first principal component (quasilinear sets). For nonlinear datasets, however, random initialization performed better.

Interpretation

There are two ways to interpret an SOM. Because in the training phase weights of the whole neighborhood are moved in the same direction, similar items tend to excite adjacent neurons. Therefore, SOM forms a semantic map where similar samples are mapped close together and dissimilar ones apart. This may be visualized by a U-Matrix (Euclidean distance between weight vectors of neighboring cells) of the SOM (Ultsch, Alfred (2003); ''U*-Matrix: A tool to visualize clusters in high dimensional data'', Department of Computer Science, University of Marburg, Technical Report Nr. 36:1-12). The other way is to think of neuronal weights as pointers to the input space. They form a discrete approximation of the distribution of training samples. More neurons point to regions with high training sample concentration and fewer where the samples are scarce. SOM may be considered a nonlinear generalization of principal components analysis (PCA). It has been shown, using both artificial and real geophysical data, that SOM has many advantages over the conventional feature extraction methods such as Empirical Orthogonal Functions (EOF) or PCA.
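A simplified U-matrix can be sketched for a rectangular grid with NumPy. This version stores one value per node, the mean Euclidean distance from that node's weight vector to those of its up to four grid neighbors. It is an assumption-laden simplification: Ultsch's U-matrix also has cells between nodes, and hexagonal grids need six neighbors. High values mark borders between clusters; low values mark cluster interiors. The name `u_matrix` is hypothetical, and the map must have more than one node.

```python
import numpy as np


def u_matrix(weights: np.ndarray, rows: int, cols: int) -> np.ndarray:
    """Mean distance from each node's weight vector to its 4-connected grid neighbours."""
    w = weights.reshape(rows, cols, -1)
    total = np.zeros((rows, cols))
    count = np.zeros((rows, cols))
    dv = np.linalg.norm(w[1:] - w[:-1], axis=2)        # vertical neighbour pairs
    total[1:] += dv
    total[:-1] += dv
    count[1:] += 1
    count[:-1] += 1
    dh = np.linalg.norm(w[:, 1:] - w[:, :-1], axis=2)  # horizontal neighbour pairs
    total[:, 1:] += dh
    total[:, :-1] += dh
    count[:, 1:] += 1
    count[:, :-1] += 1
    return total / count


# A 2 x 2 map with one-dimensional weights; node (1, 1) differs most from its neighbours.
weights = np.array([[0.0], [0.0], [0.0], [3.0]])
print(u_matrix(weights, 2, 2))
```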

Originally, SOM was not formulated as a solution to an optimisation problem. Nevertheless, there have been several attempts to modify the definition of SOM and to formulate an optimisation problem which gives similar results. For example, elastic maps use the mechanical metaphor of elasticity to approximate principal manifolds: the analogy is an elastic membrane and plate.

Examples

Fisher's iris flower data

Consider an array of nodes, each of which contains a weight vector and is aware of its location in the array. Each weight vector has the same dimension as the node's input vector. The weights may initially be set to random values. Now we need input to feed the map. Colors can be represented by their red, green, and blue components. Consequently, we will represent colors as vectors in the unit cube of the free vector space over ℝ generated by the basis:

:R = <255, 0, 0>

:G = <0, 255, 0>

:B = <0, 0, 255>

The diagram shown compares the results of training on the data sets (these data sets are not normalized; normalization would be necessary to train the SOM)

:threeColors = [255, 0, 0], [0, 255, 0], [0, 0, 255]

:eightColors = [0, 0, 0], [255, 0, 0], [0, 255, 0], [0, 0, 255], [255, 255, 0], [0, 255, 255], [255, 0, 255], [255, 255, 255]

and the original images. Note the striking resemblance between the two.
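The color experiment can be reproduced in outline with a self-contained NumPy sketch. Following the normalization note, the eightColors vectors are divided by 255 so that every component lies in [0, 1]. The map size, number of steps, Gaussian neighborhood and decay schedules are all assumptions, and `train_color_map` is a hypothetical name. The result is a 20 x 20 RGB image with one pixel per node.

```python
import numpy as np

# eightColors from the text, divided by 255 so each component lies in [0, 1].
EIGHT_COLORS = np.array([
    [0, 0, 0], [255, 0, 0], [0, 255, 0], [0, 0, 255],
    [255, 255, 0], [0, 255, 255], [255, 0, 255], [255, 255, 255],
], dtype=float) / 255.0


def train_color_map(colors, rows=20, cols=20, n_steps=3000, alpha0=0.5, seed=0):
    rng = np.random.default_rng(seed)
    r, c = np.meshgrid(np.arange(rows), np.arange(cols), indexing="ij")
    grid = np.column_stack([r.ravel(), c.ravel()]).astype(float)
    weights = rng.random((rows * cols, 3))          # random initial colours
    sigma0 = max(rows, cols) / 2.0
    for s in range(n_steps):
        frac = s / n_steps
        alpha = alpha0 * (1.0 - frac)
        sigma = sigma0 * (1.0 / sigma0) ** frac     # shrinks from sigma0 towards 1
        x = colors[rng.integers(len(colors))]       # random training colour
        u = int(np.argmin(np.linalg.norm(weights - x, axis=1)))
        theta = np.exp(-np.sum((grid - grid[u]) ** 2, axis=1) / (2.0 * sigma ** 2))
        weights += (alpha * theta)[:, None] * (x - weights)
    return weights.reshape(rows, cols, 3)           # an RGB image, one pixel per node


image = train_color_map(EIGHT_COLORS)
print(image.shape)   # (20, 20, 3)
```

Displaying `image`, for instance with matplotlib's `imshow`, should show patches of the eight training colors with smooth blends between neighboring patches.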

Similarly, after training a grid of neurons for 250 iterations with a learning rate of 0.1 on Fisher's Iris, the map can already detect the main differences between species.

Other

* Project prioritization and selection
* Seismic facies analysis for oil and gas exploration
* Failure mode and effects analysis
* Creation of artwork
* Finding representative data in large datasets (e.g., representative species for ecological communities, representative days for energy system models)

Alternatives

* The generative topographic map (GTM) is a potential alternative to SOMs. In the sense that a GTM explicitly requires a smooth and continuous mapping from the input space to the map space, it is topology preserving. However, in a practical sense, this measure of topological preservation is lacking.

* The time adaptive self-organizing map (TASOM) network is an extension of the basic SOM. The TASOM employs adaptive learning rates and neighborhood functions. It also includes a scaling parameter to make the network invariant to scaling, translation and rotation of the input space. The TASOM and its variants have been used in several applications including adaptive clustering, multilevel thresholding, input space approximation, and active contour modeling. Moreover, a Binary Tree TASOM or BTASOM, resembling a binary natural tree having nodes composed of TASOM networks has been proposed where the number of its levels and the number of its nodes are adaptive with its environment.

* The growing self-organizing map (GSOM) is a growing variant of the self-organizing map. The GSOM was developed to address the issue of identifying a suitable map size in the SOM. It starts with a minimal number of nodes (usually four) and grows new nodes on the boundary based on a heuristic. By using a value called the ''spread factor'', the data analyst has the ability to control the growth of the GSOM.

* The elastic map approach borrows from spline interpolation the idea of minimizing the elastic energy. In learning, it minimizes the sum of quadratic bending and stretching energy with the least squares approximation error.

* The conformal approach uses conformal mapping to interpolate each training sample between grid nodes in a continuous surface. A one-to-one smooth mapping is possible in this approach.

* The oriented and scalable map (OS-Map) generalises the neighborhood function and the winner selection. The homogeneous Gaussian neighborhood function is replaced with the matrix exponential, so the orientation can be specified either in the map space or in the data space. SOM has a fixed scale (=1), so that the maps "optimally describe the domain of observation". A map that covers the domain twice, or ''n''-fold, requires a notion of scale. The OS-Map regards the scale as a statistical description of how many best-matching nodes an input has in the map.
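The energy that the elastic map approach minimizes can be illustrated for the simplest case, a one-dimensional chain of nodes, in NumPy. Three terms are combined. The least-squares approximation error attaches each data point to its nearest node. The stretching energy uses squared edge lengths between consecutive nodes. The bending energy uses squared second differences over triples of consecutive nodes. The exact weighting, the coefficient values and the name `elastic_energy` are assumptions of this sketch, not a definitive formulation.

```python
import numpy as np


def elastic_energy(data, nodes, stretch=0.01, bend=0.1):
    """Approximation error + stretching + bending energy of a chain of nodes (1-D elastic map)."""
    # Least-squares approximation error: each data point is attached to its nearest node.
    d2 = np.sum((data[:, None, :] - nodes[None, :, :]) ** 2, axis=2)
    approx = d2.min(axis=1).mean()
    # Stretching energy: squared lengths of the edges between consecutive nodes.
    stretching = stretch * np.sum((nodes[1:] - nodes[:-1]) ** 2)
    # Bending energy: squared second differences over triples of consecutive nodes.
    bending = bend * np.sum((nodes[2:] - 2.0 * nodes[1:-1] + nodes[:-2]) ** 2)
    return approx + stretching + bending


straight = np.array([[0.0, 0.0], [1.0, 0.0], [2.0, 0.0]])
bent = np.array([[0.0, 0.0], [1.0, 1.0], [2.0, 0.0]])
print(elastic_energy(straight, straight), elastic_energy(bent, bent))
```

A straight, evenly spaced chain has no bending energy. Bending the middle node raises both the stretching and the bending terms, which is why minimizing this energy favors smooth, regular node layouts.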

See also

* Neural gas
* Learning Vector Quantization
* Liquid state machine
* Hybrid Kohonen SOM
* Sparse coding
* Sparse distributed memory
* Deep learning
* Neocognitron
* Topological data analysis

* Rustum, Rabee, Adebayo Adeloye, and Aurore Simala. "Kohonen self-organising map (KSOM) extracted features for enhancing MLP-ANN prediction models of BOD5." In International Symposium: Quantification and Reduction of Predictive Uncertainty for Sustainable Water Resources Management-24th General Assembly of the International Union of Geodesy and Geophysics (IUGG), pp. 181-187. 2007.
