Memory is the faculty of the mind by which data or information is encoded, stored, and retrieved when needed. It is the retention of information over time for the purpose of influencing future action. If past events could not be remembered, it would be impossible for language, relationships, or personal identity to develop. Memory loss is usually described as forgetfulness or amnesia.

Memory is often understood as an informational processing system with explicit and implicit functioning that is made up of a sensory processor, short-term (or working) memory, and long-term memory. These components can ultimately be related to the activity of neurons.

The sensory processor allows information from the outside world to be sensed in the form of chemical and physical stimuli and attended to with various levels of focus and intent. Working memory serves as an encoding and retrieval processor. Information in the form of stimuli is encoded in accordance with explicit or implicit functions by the working memory processor. The working memory also retrieves information from previously stored material. Finally, the function of long-term memory is to store information through various categorical models or systems.

Declarative, or explicit, memory is the conscious storage and recollection of data. Under declarative memory reside semantic and episodic memory. Semantic memory refers to memory that is encoded with specific meaning. Meanwhile, episodic memory refers to information that is encoded along a spatial and temporal plane. Declarative memory is usually the primary process thought of when referencing memory.

Non-declarative, or implicit, memory is the unconscious storage and recollection of information. An example of a non-declarative process would be the unconscious learning or retrieval of information by way of procedural memory, or a priming phenomenon. Priming is the process of subliminally arousing specific responses from memory and shows that not all memory is consciously activated, whereas procedural memory is the slow and gradual learning of skills that often occurs without conscious attention to learning.

Memory is not a perfect processor and is affected by many factors. The ways by which information is encoded, stored, and retrieved can all be corrupted. Pain, for example, has been identified as a physical condition that impairs memory, an effect noted in animal models as well as in chronic pain patients. The amount of attention given to new stimuli can diminish the amount of information that becomes encoded for storage. Also, the storage process can become corrupted by physical damage to areas of the brain that are associated with memory storage, such as the hippocampus. Finally, the retrieval of information from long-term memory can be disrupted because of decay within long-term memory. Normal functioning, decay over time, and brain damage all affect the accuracy and capacity of memory.

Sensory memory

Sensory memory holds information derived from the senses for less than one second after an item is perceived. The ability to look at an item and remember what it looked like with just a split second of observation, or memorization, is an example of sensory memory. It is out of cognitive control and is an automatic response. With very short presentations, participants often report that they seem to "see" more than they can actually report. The first precise experiments exploring this form of sensory memory were conducted by George Sperling (1963) using the "partial report paradigm." Subjects were presented with a grid of 12 letters, arranged into three rows of four. After a brief presentation, subjects were played either a high, medium or low tone, cuing them which of the rows to report. Based on these partial report experiments, Sperling was able to show that the capacity of sensory memory was approximately 12 items, but that it degraded very quickly (within a few hundred milliseconds). Because this form of memory degrades so quickly, participants would see the display but be unable to report all of the items (12 in the "whole report" procedure) before they decayed. This type of memory cannot be prolonged via rehearsal.
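The logic of the partial report paradigm can be expressed as a simple estimate: because subjects cannot know in advance which row will be cued, the fraction of the cued row they report is taken as the fraction of the whole grid still available in sensory memory. The sketch below assumes the 3 × 4 grid described above; the function name and the trial values are hypothetical illustrations, not Sperling's data.

```python
def partial_report_estimate(recalled_in_cued_row: float,
                            items_per_row: int = 4,
                            total_items: int = 12) -> float:
    """Estimate how many items of the display were available in sensory memory.

    The cue arrives only after the display disappears, so the proportion of
    the cued row that is reported is treated as the proportion of the whole
    display that was still available at cue time.
    """
    if not 0 <= recalled_in_cued_row <= items_per_row:
        raise ValueError("recalled items must lie between 0 and items_per_row")
    proportion_available = recalled_in_cued_row / items_per_row
    return proportion_available * total_items


# Hypothetical average scores for a cued row, not published results.
print(partial_report_estimate(4))  # 12.0: the whole display was available
print(partial_report_estimate(3))  # 9.0
```

The same row score that looks small in isolation (three or four letters) implies a much larger store, which is why the partial report can exceed what the whole report procedure recovers.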

Three types of sensory memory exist. Iconic memory is a fast-decaying store of visual information, a type of sensory memory that briefly stores an image that has been perceived for a short duration. Echoic memory is a fast-decaying store of auditory information, also a sensory memory that briefly stores sounds that have been perceived for short durations. Haptic memory is a type of sensory memory that serves as a store for touch stimuli.

Short-term memory

Short-term memory, not to be confused with working memory, allows recall for a period of several seconds to a minute without rehearsal. Its capacity, however, is very limited. In 1956, George A. Miller (1920–2012), when working at Bell Laboratories, conducted experiments showing that the store of short-term memory was 7±2 items (hence the title of his famous paper, "The Magical Number Seven, Plus or Minus Two"). Modern perspectives estimate the capacity of short-term memory to be lower, typically on the order of 4–5 items, or argue for a more flexible limit based on information instead of items. Memory capacity can be increased through a process called chunking. For example, in recalling a ten-digit telephone number, a person could chunk the digits into three groups: first, the area code (such as 123), then a three-digit chunk (456), and, last, a four-digit chunk (7890). This method of remembering telephone numbers is far more effective than attempting to remember a string of 10 digits, because the information is grouped into meaningful chunks. This is reflected in some countries' tendency to display telephone numbers as several chunks of two to four digits.
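Chunking can be pictured as a regrouping step that reduces the number of units that must be held at once. The toy Python sketch below splits the example number from the text into the 3-3-4 grouping; the function name chunk_digits is hypothetical, and the code only illustrates the change in item count, not how the brain actually forms chunks.

```python
def chunk_digits(digits: str, sizes: list[int]) -> list[str]:
    """Split a digit string into consecutive groups of the given sizes."""
    if sum(sizes) != len(digits):
        raise ValueError("group sizes must add up to the number of digits")
    chunks, start = [], 0
    for size in sizes:
        chunks.append(digits[start:start + size])
        start += size
    return chunks


number = "1234567890"
chunks = chunk_digits(number, [3, 3, 4])  # area code, three digits, four digits
print(len(number), "separate digits")     # 10 separate digits
print(len(chunks), "chunks:", chunks)     # 3 chunks: ['123', '456', '7890']
```

Ten separate digits exceed the 4–5 item estimate quoted above, whereas three chunks fall comfortably within it.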

Short-term memory is believed to rely mostly on an acoustic code for storing information, and to a lesser extent on a visual code. Conrad (1964) found that test subjects had more difficulty recalling collections of letters that were acoustically similar, e.g., E, P, D. Confusion with recalling acoustically similar letters rather than visually similar letters implies that the letters were encoded acoustically. Conrad's (1964) study, however, deals with the encoding of written text. Thus, while the memory of written language may rely on acoustic components, generalizations to all forms of memory cannot be made.

Long-term memory

The storage in sensory memory and short-term memory generally has a strictly limited capacity and duration. This means that information is not retained indefinitely. By contrast, while the total capacity of long-term memory has yet to be established, it can store much larger quantities of information. Furthermore, it can store this information for a much longer duration, potentially for a whole life span. For example, given a random seven-digit number, one may remember it for only a few seconds before forgetting, suggesting it was stored in short-term memory. On the other hand, one can remember telephone numbers for many years through repetition; this information is said to be stored in long-term memory.

While short-term memory encodes information acoustically, long-term memory encodes it semantically: Baddeley (1966) discovered that, after 20 minutes, test subjects had the most difficulty recalling a collection of words that had similar meanings (e.g. big, large, great, huge). Another part of long-term memory is episodic memory, which attempts to capture information such as "what", "when" and "where". With episodic memory, individuals are able to recall specific events such as birthday parties and weddings.

Short-term memory is supported by transient patterns of neuronal communication, dependent on regions of the frontal lobe (especially the dorsolateral prefrontal cortex) and the parietal lobe. Long-term memory, on the other hand, is maintained by more stable and permanent changes in neural connections widely spread throughout the brain. The hippocampus is essential (for learning new information) to the consolidation of information from short-term to long-term memory, although it does not seem to store information itself. It was thought that without the hippocampus new memories could not be stored in long-term memory and that there would be a very short attention span, as first gleaned from patient Henry Molaison after what was thought to be the full removal of both his hippocampi. More recent post-mortem examination of his brain shows that the hippocampus was more intact than first thought, throwing theories drawn from the initial data into question. The hippocampus may be involved in changing neural connections for a period of three months or more after the initial learning.

Research has suggested that long-term memory storage in humans may be maintained by DNA methylation and by the 'prion' gene.

Further research investigated the molecular basis for long-term memory. By 2015 it had become clear that long-term memory requires gene transcription activation and de novo protein synthesis. Long-term memory formation depends on both the activation of memory-promoting genes and the inhibition of memory suppressor genes, and DNA methylation/DNA demethylation was found to be a major mechanism for achieving this dual regulation.

Rats with a new, strong long-term memory due to contextual fear conditioning have reduced expression of about 1,000 genes and increased expression of about 500 genes in the hippocampus 24 hours after training, thus exhibiting modified expression of 9.17% of the rat hippocampal genome. Reduced gene expression was associated with methylation of those genes.

Considerable further research into long-term memory has illuminated the molecular mechanisms by which methylations are established or removed, as reviewed in 2022. These mechanisms include, for instance, signal-responsive TOP2B-induced double-strand breaks in immediate early genes. In addition, the messenger RNAs of many genes that had been subjected to methylation-controlled increases or decreases are transported by neural granules (messenger RNPs) to the dendritic spines. At these locations the messenger RNAs can be translated into the proteins that control signaling at neuronal synapses.

Memory consolidation

The transition of a memory from short term to long term is called memory consolidation. Little is known about the physiological processes involved. Two proposals for how the brain achieves this task are backpropagation (backprop) and positive feedback from the endocrine system. Backprop has been proposed as a mechanism the brain uses to achieve memory consolidation and has been used, for example by Geoffrey E. Hinton, Nobel Prize laureate in Physics in 2024, to build AI software. It implies feedback to the neurons consolidating a given memory, erasing that information when the brain learns that it is misleading or wrong. However, no empirical evidence that such a mechanism exists in the brain is available.

By contrast, positive feedback acting on a short-term memory registered in neurons, and judged useful by the neuroendocrine system, causes that short-term memory to consolidate into a permanent one. This was first shown experimentally in insects, which use arginine and nitric oxide levels in their brains and endorphin receptors for this task. The involvement of arginine and nitric oxide in memory consolidation has been confirmed in birds, mammals and other creatures, including humans.

Glial cells also play an important role in memory formation, although how they do so remains to be uncovered.

Other mechanisms for memory consolidation cannot be ruled out.

Multi-store model

The multi-store model (also known as the Atkinson–Shiffrin memory model) was first described in 1968 by Atkinson and Shiffrin.

The multi-store model has been criticised for being too simplistic. For instance, long-term memory is believed to be made up of multiple subcomponents, such as episodic and procedural memory. The model also proposes that rehearsal is the only mechanism by which information eventually reaches long-term storage, but evidence shows that people are capable of remembering things without rehearsal.

The model also treats each memory store as a single unit, whereas research suggests otherwise. For example, short-term memory can be broken up into different units such as visual information and acoustic information. In a study by Zlonoga and Gerber (1986), patient 'KF' demonstrated certain deviations from the Atkinson–Shiffrin model. Patient KF was brain damaged and displayed difficulties with short-term memory. Recognition of sounds such as spoken numbers, letters, words, and easily identifiable noises (such as doorbells and cats meowing) was impaired. Visual short-term memory was unaffected, suggesting a dichotomy between visual and auditory memory.
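The stage-to-stage flow the multi-store model proposes, including the rehearsal-only route into long-term storage that critics dispute, can be sketched as a toy data structure. The Python below is a hypothetical illustration: the class and method names, the small capacity and the oldest-out displacement rule are assumptions chosen for clarity, not parameters from Atkinson and Shiffrin (1968).

```python
from collections import deque


class MultiStoreMemory:
    """Toy sketch of the Atkinson–Shiffrin flow: sensory -> short-term -> long-term.

    Illustrative assumptions: attention moves an item from the sensory
    register into short-term memory, short-term memory holds a fixed number
    of items and displaces the oldest when full, and rehearsal is the only
    route into long-term memory, as the model proposes.
    """

    def __init__(self, stm_capacity: int = 7):
        self.sensory_register: list[str] = []
        self.short_term: deque[str] = deque(maxlen=stm_capacity)
        self.long_term: set[str] = set()

    def perceive(self, items: list[str]) -> None:
        # The register keeps only the latest input; unattended items are
        # lost when the next input replaces them.
        self.sensory_register = list(items)

    def attend(self, item: str) -> None:
        # deque(maxlen=...) silently drops the oldest item once full,
        # standing in for displacement from short-term memory.
        if item in self.sensory_register:
            self.short_term.append(item)

    def rehearse(self, item: str) -> None:
        # Only an item still held in short-term memory can be rehearsed
        # into long-term storage.
        if item in self.short_term:
            self.long_term.add(item)


memory = MultiStoreMemory(stm_capacity=3)
memory.perceive(["cat", "dog", "sun", "map"])
for word in ["cat", "dog", "sun", "map"]:
    memory.attend(word)
memory.rehearse("map")
memory.rehearse("cat")           # already displaced, so nothing is stored
print(list(memory.short_term))  # ['dog', 'sun', 'map']
print(memory.long_term)         # {'map'}
```

Because the sketch uses a single short-term store, it cannot represent the split between auditory and visual short-term memory seen in patient KF; that limitation mirrors the criticism of the model described above.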

Working memory

In 1974 Baddeley and Hitch proposed a "working memory model" that replaced the general concept of short-term memory with active maintenance of information in short-term storage. In this model, working memory consists of three basic stores: the central executive, the phonological loop, and the visuospatial sketchpad. In 2000 this model was expanded with the multimodal episodic buffer (see Baddeley's model of working memory).

The central executive essentially acts as an attention sensory store. It channels information to the three component processes: the phonological loop, the visuospatial sketchpad, and the episodic buffer.

The phonological loop stores auditory information by silently rehearsing sounds or words in a continuous loop: the articulatory process (for example, the repetition of a telephone number over and over again). A short list of data is easier to remember. The phonological loop is occasionally disrupted: irrelevant speech or background noise can impede it. Articulatory suppression, the process of inhibiting memory performance by speaking while being presented with an item to remember, can also confuse encoding, and words that sound similar can be switched or misremembered through the phonological similarity effect. The phonological loop also has a limit to how much it can hold at once, which means that it is easier to remember many short words than many long words, according to the word length effect.
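One common reading of the word length effect is that the phonological loop holds roughly as much speech as can be rehearsed within a fixed time window, often cited as about two seconds. The sketch below turns that reading into arithmetic; the two-second window, the function name and the per-word articulation times are illustrative assumptions, not measured values.

```python
def estimated_span(articulation_time_s: float, window_s: float = 2.0) -> int:
    """Whole words that fit in the loop's rehearsal window.

    Assumes, for illustration only, a fixed rehearsal window of about two
    seconds; real spans also depend on other factors.
    """
    if articulation_time_s <= 0:
        raise ValueError("articulation time must be positive")
    return int(window_s // articulation_time_s)


# Hypothetical articulation times per word, in seconds.
short_words = estimated_span(0.25)  # e.g. one-syllable words
long_words = estimated_span(0.5)    # e.g. longer, multi-syllable words
print(short_words, long_words)      # 8 4
```

Under this assumption, halving the time needed to say each word doubles the predicted span, which is the direction of the word length effect.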

The visuospatial sketchpad stores visual and spatial information. It is engaged when performing spatial tasks (such as judging distances) or visual ones (such as counting the windows on a house or imagining images). Those with aphantasia will not be able to engage the visuospatial sketchpad.

The episodic buffer is dedicated to linking information across domains to form integrated units of visual, spatial, and verbal information and chronological ordering (e.g., the memory of a story or a movie scene). The episodic buffer is also assumed to have links to long-term memory and semantic meaning.

The working memory model explains many practical observations, such as why it is easier to do two different tasks, one verbal and one visual, than two similar tasks, and the aforementioned word length effect. Working memory also underlies everyday activities that involve thought: it is the part of memory where we carry out thought processes and use them to learn and reason about topics.
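The dual-task observation follows from the model's structure: tasks that load the same subsystem compete for it, while tasks handled by different subsystems can run side by side. The toy Python sketch below encodes only that rule; the task names and their mapping to subsystems are hypothetical, the central executive is not modeled, and real interference is graded rather than all-or-nothing.

```python
# Hypothetical mapping from task to the working-memory subsystem it loads.
SUBSYSTEM = {
    "repeat digits aloud": "phonological loop",
    "remember a word list": "phonological loop",
    "track a moving dot": "visuospatial sketchpad",
    "imagine a route": "visuospatial sketchpad",
}


def tasks_interfere(task_a: str, task_b: str) -> bool:
    """Predict interference when both tasks rely on the same subsystem."""
    return SUBSYSTEM[task_a] == SUBSYSTEM[task_b]


print(tasks_interfere("remember a word list", "repeat digits aloud"))  # True
print(tasks_interfere("remember a word list", "track a moving dot"))   # False
```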

Types

Researchers distinguish between recognition and recall memory. Recognition memory tasks require individuals to indicate whether they have encountered a stimulus (such as a picture or a word) before. Recall memory tasks require participants to retrieve previously learned information. For example, individuals might be asked to produce a series of actions they have seen before or to say a list of words they have heard before.

By information type

Topographical memory involves the ability to orient oneself in space, to recognize and follow an itinerary, or to recognize familiar places. Getting lost when traveling alone is an example of the failure of topographic memory. Flashbulb memories are clear episodic memories of unique and highly emotional events. People's memories of where they were or what they were doing when they first heard the news of President Kennedy's assassination, the Sydney Siege or the 9/11 attacks are examples of flashbulb memories.

Long-term

Anderson (1976) divides long-term memory into declarative (explicit) and procedural (implicit) memories.

Declarative

Declarative memory requires conscious recall, in that some conscious process must call back the information. It is sometimes called explicit memory, since it consists of information that is explicitly stored and retrieved. Declarative memory can be further subdivided into semantic memory, concerning principles and facts taken independent of context, and episodic memory, concerning information specific to a particular context, such as a time and place. Semantic memory allows the encoding of abstract knowledge about the world, such as "Paris is the capital of France". Episodic memory, on the other hand, is used for more personal memories, such as the sensations, emotions, and personal associations of a particular place or time. Episodic memories often reflect the "firsts" in life, such as a first kiss, first day of school or first time winning a championship. These are key events in one's life that can be remembered clearly.

Research suggests that declarative memory is supported by several functions of the medial temporal lobe system, which includes the hippocampus. Autobiographical memory (memory for particular events within one's own life) is generally viewed as either equivalent to, or a subset of, episodic memory. Visual memory is the part of memory that preserves some characteristics of our senses pertaining to visual experience. One is able to place in memory information that resembles objects, places, animals or people in a sort of mental image. Visual memory can result in priming, and it is assumed that some kind of perceptual representational system underlies this phenomenon.

Procedural

In contrast, procedural memory (or implicit memory) is not based on the conscious recall of information, but on implicit learning. It can best be summarized as remembering how to do something. Procedural memory is primarily used in learning motor skills and can be considered a subset of implicit memory. It is revealed when one does better in a given task due only to repetition: no new explicit memories have been formed, but one is unconsciously accessing aspects of those previous experiences. Procedural memory involved in motor learning depends on the cerebellum and basal ganglia.

A characteristic of procedural memory is that the things remembered are automatically translated into actions, and thus sometimes difficult to describe. Some examples of procedural memory include the ability to ride a bike or tie shoelaces.

By temporal direction

Another major way to distinguish different memory functions is whether the content to be remembered is in the past, retrospective memory, or in the future, prospective memory. John Meacham introduced this distinction in a paper presented at the 1975 American Psychological Association annual meeting, and Ulric Neisser subsequently included it in his 1982 edited volume, Memory Observed: Remembering in Natural Contexts. Retrospective memory as a category includes semantic, episodic and autobiographical memory. In contrast, prospective memory is memory for future intentions, or "remembering to remember" (Winograd, 1988). Prospective memory can be further broken down into event- and time-based prospective remembering. Time-based prospective memories are triggered by a time cue, such as going to the doctor (action) at 4pm (cue). Event-based prospective memories are intentions triggered by cues, such as remembering to post a letter (action) after seeing a mailbox (cue). Cues need not be related to the action (unlike in the mailbox/letter example), and lists, sticky notes, knotted handkerchiefs, or string around the finger all exemplify cues that people use as strategies to enhance prospective memory.

Study techniques

To assess infants

Infants do not have the language ability to report on their memories, so verbal reports cannot be used to assess very young children's memory. Over the years, however, researchers have adapted and developed a number of measures for assessing both infants' recognition memory and their recall memory. Habituation and operant conditioning techniques have been used to assess infants' recognition memory, and the deferred and elicited imitation techniques have been used to assess infants' recall memory.

Techniques used to assess infants' recognition memory include the following:

* Visual paired comparison procedure (relies on habituation): infants are first presented with pairs of visual stimuli, such as two black-and-white photos of human faces, for a fixed amount of time; then, after being familiarized with the two photos, they are presented with the "familiar" photo and a new photo. The time spent looking at each photo is recorded. Looking longer at the new photo indicates that they remember the "familiar" one. Studies using this procedure have found that 5- to 6-month-olds can retain information for as long as fourteen days.

* Operant conditioning technique: infants are placed in a crib and a ribbon that is connected to a mobile overhead is tied to one of their feet. Infants notice that when they kick their foot the mobile moves, and the rate of kicking increases dramatically within minutes. Studies using this technique have revealed that infants' memory substantially improves over the first 18 months. Whereas 2- to 3-month-olds can retain an operant response (such as activating the mobile by kicking their foot) for a week, 6-month-olds can retain it for two weeks, and 18-month-olds can retain a similar operant response for as long as 13 weeks.
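The looking times recorded in the visual paired comparison procedure described above are often summarized as a single novelty preference score. The sketch below assumes the common convention of dividing looking time on the new stimulus by total looking time, so that 0.5 means no preference and higher values suggest the familiar stimulus was remembered; the function name and the trial values are hypothetical, for illustration only.

```python
def novelty_preference(novel_looking_s: float, familiar_looking_s: float) -> float:
    """Return the proportion of total looking time spent on the novel stimulus.

    Assumed convention (not taken from the text above): score = novel / (novel + familiar).
    A score of 0.5 corresponds to chance; scores above 0.5 indicate a preference
    for the new photo, which is read as memory for the familiar one.
    """
    if novel_looking_s < 0 or familiar_looking_s < 0:
        raise ValueError("looking times must be non-negative")
    total = novel_looking_s + familiar_looking_s
    if total == 0:
        raise ValueError("infant did not look at either stimulus")
    return novel_looking_s / total


if __name__ == "__main__":
    # Hypothetical trial: 12 s spent on the new face, 8 s on the familiar face.
    score = novelty_preference(12.0, 8.0)
    print(f"novelty preference = {score:.2f}")  # prints 0.60, above chance (0.50)
```

In practice a single infant's score is not decisive on its own; whether a group's scores reliably exceed 0.5 would be settled by a statistical comparison against chance, which this sketch leaves out.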

Techniques used to assess infants' recall memory include the following:

* Deferred imitation technique: an experimenter shows infants a unique sequence of actions (such as using a stick to push a button on a box) and then, after a delay, asks the infants to imitate the actions. Studies using deferred imitation have shown that 14-month-olds' memories for the sequence of actions can last for as long as four months.

* Elicited imitation technique: very similar to the deferred imitation technique; the difference is that infants are allowed to imitate the actions before the delay. Studies using the elicited imitation technique have shown that 20-month-olds can recall the action sequences twelve months later.

To assess children and older adults

Researchers use a variety of tasks to assess older children's and adults' memory. Some examples are:

* Paired associate learning: one learns to associate one specific word with another. For example, when given a word such as "safe", one must learn to say another specific word, such as "green". This is stimulus and response.
* Free recall: the subject studies a list of words and is later asked to recall or write down as many words as they can remember, similar to free-response questions. Earlier items are affected by retroactive interference (RI), which means that the longer the list, the greater the interference and the less likely those items are to be recalled. On the other hand, items presented last suffer little RI but suffer a great deal from proactive interference (PI), which means that the longer the delay in recall, the more likely the items will be lost (Baddeley, Alan D., The Psychology of Memory, pp. 131–132, Basic Books, New York, 1976).
* Cued recall: one is given significant hints to help retrieve information that has previously been encoded into memory; typically this involves a word related to the information to be remembered. This is similar to fill-in-the-blank assessments used in classrooms.
* Recognition: subjects are asked to remember a list of words or pictures, after which they are asked to identify the previously presented words or pictures from among a list of alternatives that were not presented in the original list. This is similar to multiple-choice assessments.
* Detection paradigm: individuals are shown a number of objects and color samples during a certain period of time. They are then tested on their visual ability to remember as much as they can by looking at test items and indicating whether the test items are similar to the sample, or whether any change is present.
* Savings method: compares the speed of original learning with the speed of relearning the same material. The amount of time saved measures memory.
* Implicit-memory tasks: information is drawn from memory without conscious realization.

Failures

* Transience: memories degrade with the passing of time. This occurs in the storage stage of memory, after the information has been stored and before it is retrieved. This can happen in sensory, short-term, and long-term storage. It follows a general pattern where the information is rapidly forgotten during the first couple of days or years, followed by small losses in later days or years.
* Absent-mindedness: memory failure due to a lack of attention. Attention plays a key role in storing information into long-term memory; without proper attention, the information might not be stored, making it impossible to retrieve later.

Physiology

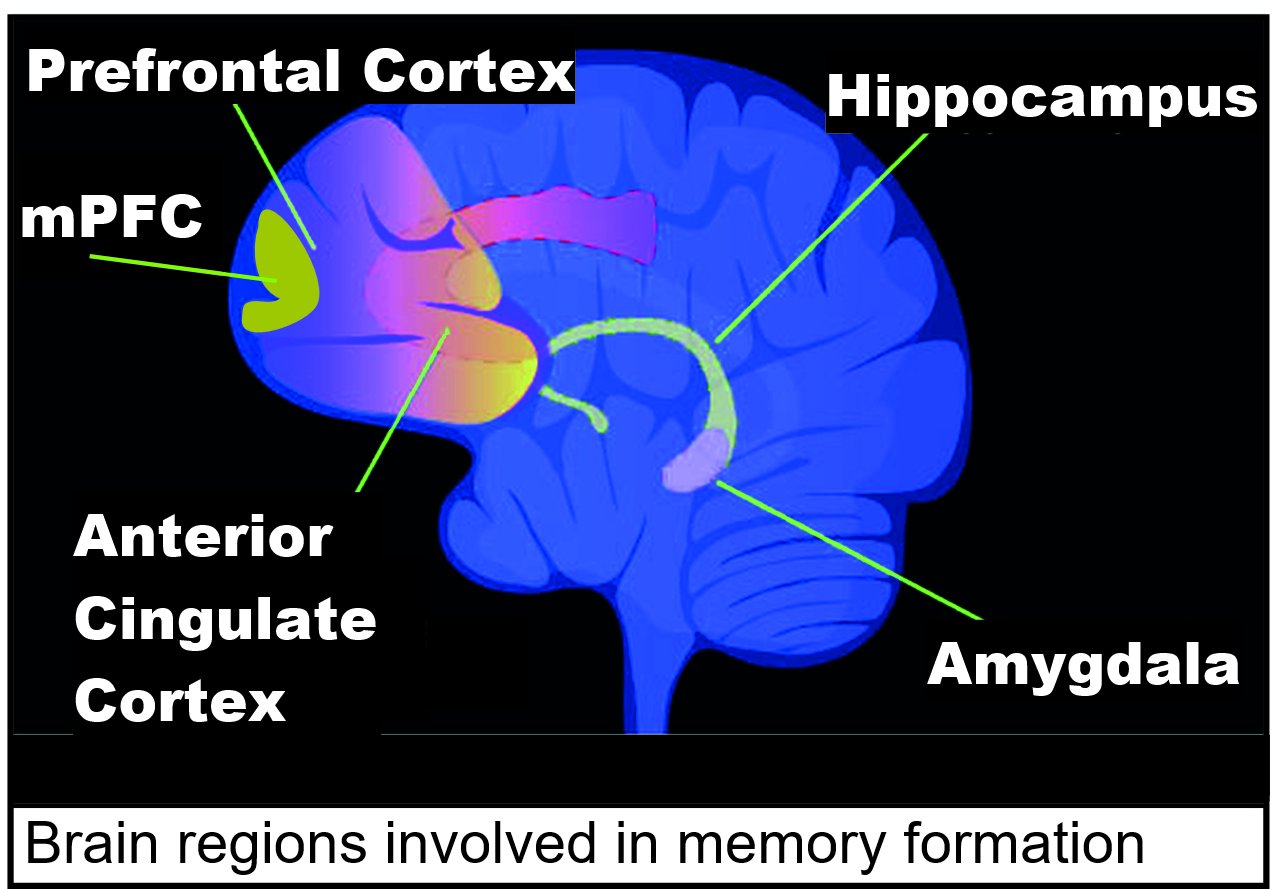

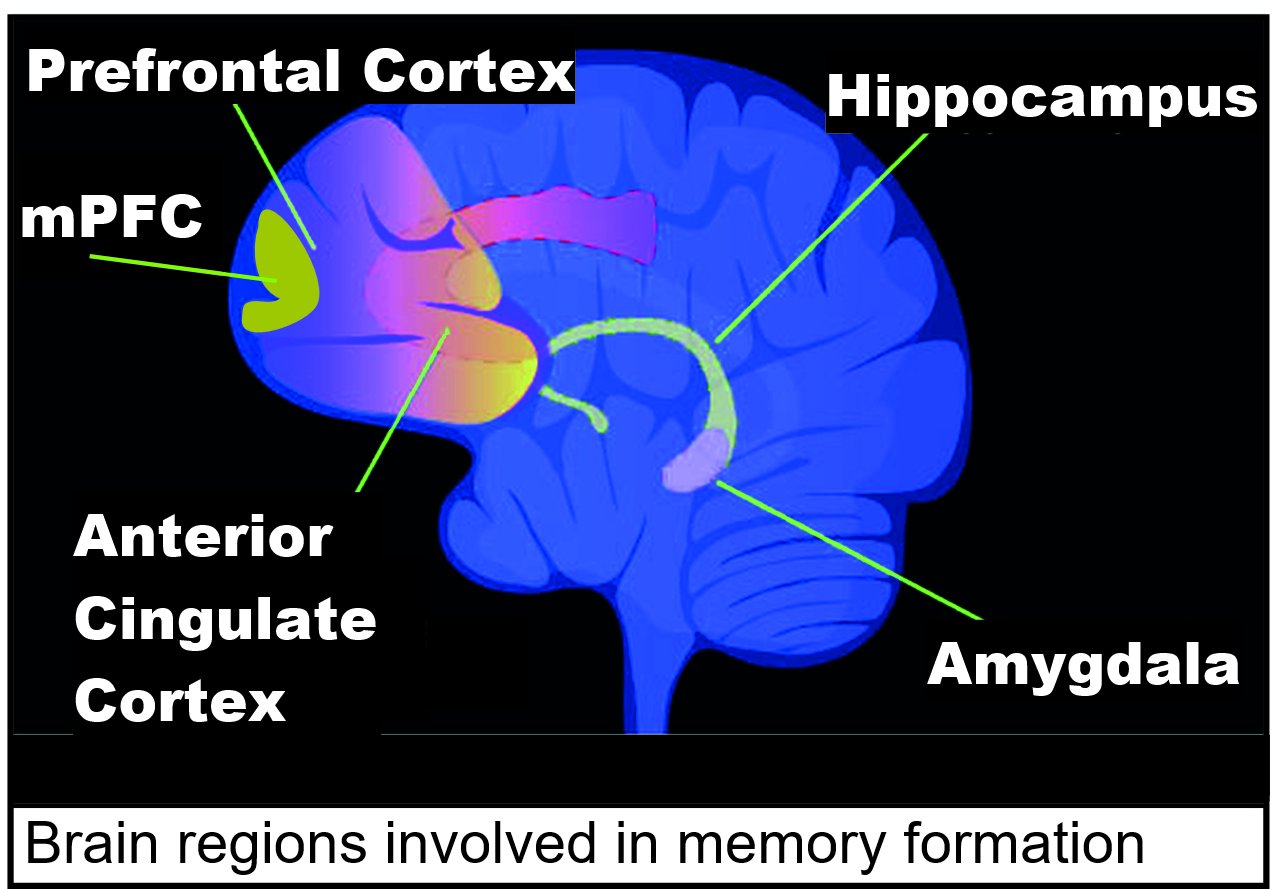

Brain areas involved in the neuroanatomy of memory, such as the hippocampus, the amygdala, the striatum, or the mammillary bodies, are thought to be involved in specific types of memory. For example, the hippocampus is believed to be involved in spatial learning and declarative learning, while the amygdala is thought to be involved in emotional memory.

Damage to certain areas in patients and animal models, together with the memory deficits that follow, is a primary source of information. However, rather than implicating a specific area, it could be that damage to adjacent areas, or to a pathway traveling through the area, is actually responsible for the observed deficit. Further, it is not sufficient to describe memory, and its counterpart, learning, as solely dependent on specific brain regions. Learning and memory are usually attributed to changes in neuronal synapses, thought to be mediated by long-term potentiation and long-term depression.

In general, the more emotionally charged an event or experience is, the better it is remembered; this phenomenon is known as the memory enhancement effect. Patients with amygdala damage, however, do not show a memory enhancement effect.

Hebb distinguished between short-term and long-term memory. He postulated that any memory that stayed in short-term storage for a long enough time would be consolidated into a long-term memory. Later research showed this to be false. Research has shown that direct injections of cortisol or epinephrine help the storage of recent experiences. This is also true for stimulation of the amygdala. This suggests that excitement enhances memory through the release of hormones that affect the amygdala. Excessive or prolonged stress (with prolonged cortisol elevation) may hurt memory storage. Patients with amygdalar damage are no more likely to remember emotionally charged words than nonemotionally charged ones.

The hippocampus is important for explicit memory and for memory consolidation. It receives input from different parts of the cortex and sends its output to different parts of the brain as well. The input comes from secondary and tertiary sensory areas that have already processed the information extensively. Hippocampal damage may also cause memory loss and problems with memory storage. This memory loss includes retrograde amnesia, which is the loss of memory for events that occurred shortly before the time of brain damage.

Cognitive neuroscience

Cognitive neuroscientists consider memory as the retention, reactivation, and reconstruction of the experience-independent internal representation. The term internal representation implies that such a definition of memory contains two components: the expression of memory at the behavioral or conscious level, and the underpinning physical neural changes (Dudai 2007). The latter component is also called engram or memory traces (Semon 1904). Some neuroscientists and psychologists mistakenly equate the concept of engram and memory, broadly conceiving all persisting after-effects of experiences as memory; others argue against this notion, holding that memory does not exist until it is revealed in behavior or thought (Moscovitch 2007). One question that is crucial in cognitive neuroscience is how information and mental experiences are coded and represented in the brain. Scientists have gained much knowledge about the neuronal codes from studies of plasticity, but most such research has focused on simple learning in simple neuronal circuits; considerably less is known about the neuronal changes involved in more complex examples of memory, particularly declarative memory, which requires the storage of facts and events (Byrne 2007).

Convergence-divergence zones (Damasio 1989) might be the neural networks where memories are stored and retrieved. Considering that there are several kinds of memory, depending on types of represented knowledge, underlying mechanisms, processes, functions, and modes of acquisition, it is likely that different brain areas support different memory systems and that they are in mutual relationships in neuronal networks: "components of memory representation are distributed widely across different parts of the brain as mediated by multiple neocortical circuits".

* Encoding. Encoding of working memory involves the spiking of individual neurons induced by sensory input, which persists even after the sensory input disappears (Jensen and Lisman 2005; Fransen et al. 2002). Encoding of episodic memory involves persistent changes in molecular structures that alter synaptic transmission between neurons. Examples of such structural changes include long-term potentiation (LTP) or spike-timing-dependent plasticity (STDP). The persistent spiking in working memory can enhance the synaptic and cellular changes in the encoding of episodic memory (Jensen and Lisman 2005).

* Working memory. Recent functional imaging studies detected working memory signals in both the medial temporal lobe (MTL), a brain area strongly associated with long-term memory, and the prefrontal cortex (Ranganath et al. 2005), suggesting a strong relationship between working memory and long-term memory. However, the substantially stronger working memory signals seen in the prefrontal lobe suggest that this area plays a more important role in working memory than the MTL (Suzuki 2007).

* Consolidation and reconsolidation. Short-term memory (STM) is temporary and subject to disruption, while long-term memory (LTM), once consolidated, is persistent and stable. Consolidation of STM into LTM at the molecular level presumably involves two processes: synaptic consolidation and system consolidation. The former involves a protein synthesis process in the medial temporal lobe (MTL), whereas the latter transforms the MTL-dependent memory into an MTL-independent memory over months to years (Ledoux 2007). In recent years, this traditional consolidation dogma has been re-evaluated as a result of studies on reconsolidation. These studies showed that interfering with a memory after its retrieval affects subsequent retrieval of that memory (Sara 2000). Further studies have shown that post-retrieval treatment with protein synthesis inhibitors and many other compounds can lead to an amnestic state (Nadel et al. 2000b; Alberini 2005; Dudai 2006). These findings on reconsolidation fit with the behavioral evidence that retrieved memory is not a carbon copy of the initial experiences, and that memories are updated during retrieval.

Genetics

Study of the genetics of human memory is in its infancy, though many genes have been investigated for their association with memory in humans and non-human animals. A notable initial success was the association of APOE with memory dysfunction in Alzheimer's disease. The search for genes associated with normally varying memory continues. One of the first candidates for normal variation in memory is the protein KIBRA, which appears to be associated with the rate at which material is forgotten over a delay period. There has been some evidence that memories are stored in the nucleus of neurons.

Genetic underpinnings

Several genes, proteins and enzymes have been extensively researched for their association with memory. Long-term memory, unlike short-term memory, is dependent upon the synthesis of new proteins. This occurs within the cell body, and concerns the particular transmitters, receptors, and new synapse pathways that reinforce the communicative strength between neurons. The production of new proteins devoted to synapse reinforcement is triggered after the release of certain signaling substances (such as calcium within hippocampal neurons) in the cell. In the case of hippocampal cells, this release depends on the expulsion of magnesium ions, which block the NMDA receptor channel, after significant and repetitive synaptic signaling. The temporary expulsion of magnesium frees NMDA receptors to let calcium into the cell, a signal that leads to gene transcription and the construction of reinforcing proteins. For more information, see long-term potentiation (LTP).

One of the newly synthesized proteins in LTP is also critical for maintaining long-term memory. This protein is an autonomously active form of the enzyme protein kinase C (PKC), known as PKMζ. PKMζ maintains the activity-dependent enhancement of synaptic strength, and inhibiting PKMζ erases established long-term memories without affecting short-term memory; once the inhibitor is eliminated, the ability to encode and store new long-term memories is restored. BDNF is also important for the persistence of long-term memories.

The long-term stabilization of synaptic changes is also determined by a parallel increase of pre- and postsynaptic structures such as axonal boutons, dendritic spines, and the postsynaptic density.

On the molecular level, an increase of the postsynaptic scaffolding proteins PSD-95 and HOMER1c has been shown to correlate with the stabilization of synaptic enlargement. The cAMP response element-binding protein (CREB) is a transcription factor which is believed to be important in consolidating short-term into long-term memories, and which is believed to be downregulated in Alzheimer's disease.

DNA methylation and demethylation

Rats exposed to an intense learning event may retain a life-long memory of the event, even after a single training session. The long-term memory of such an event appears to be initially stored in the hippocampus, but this storage is transient. Much of the long-term storage of the memory seems to take place in the anterior cingulate cortex. When such an exposure was experimentally applied, more than 5,000 differentially methylated DNA regions appeared in the hippocampal neuronal genome of the rats at one hour and at 24 hours after training. These alterations in methylation pattern occurred at many genes that were downregulated, often due to the formation of new 5-methylcytosine sites in CpG-rich regions of the genome. Furthermore, many other genes were upregulated, likely often due to hypomethylation. Hypomethylation often results from the removal of methyl groups from previously existing 5-methylcytosines in DNA. Demethylation is carried out by several proteins acting in concert, including the TET enzymes as well as enzymes of the DNA base excision repair pathway (see Epigenetics in learning and memory). The pattern of induced and repressed genes in brain neurons following an intense learning event likely provides the molecular basis for a long-term memory of the event.

Epigenetics

Studies of the molecular basis for memory formation indicate that epigenetic mechanisms operating in neurons in the brain play a central role in determining this capability. Key epigenetic mechanisms involved in memory include the methylation and demethylation of neuronal DNA, as well as modifications of histone proteins, including methylations, acetylations and deacetylations.

Stimulation of brain activity in memory formation is often accompanied by damage to neuronal DNA, followed by repair that is associated with persistent epigenetic alterations. In particular, the DNA repair processes of non-homologous end joining and base excision repair are employed in memory formation.

DNA topoisomerase 2-beta in learning and memory

During a new learning experience, a set of genes is rapidly expressed in the brain. This induced gene expression is considered to be essential for processing the information being learned. Such genes are referred to as immediate early genes (IEGs). DNA topoisomerase 2-beta (TOP2B) activity is essential for the expression of IEGs in a type of learning experience in mice termed associative fear memory. Such a learning experience appears to rapidly trigger TOP2B to induce double-strand breaks in the promoter DNA of IEGs that function in neuroplasticity. Repair of these induced breaks is associated with DNA demethylation of IEG promoters, allowing immediate expression of these IEGs.

The double-strand breaks that are induced during a learning experience are not immediately repaired. About 600 regulatory sequences in promoters and about 800 regulatory sequences in enhancers appear to depend on double-strand breaks initiated by topoisomerase 2-beta (TOP2B) for activation. The induction of particular double-strand breaks is specific to the inducing signal. When neurons are activated in vitro, just 22 TOP2B-induced double-strand breaks occur in their genomes.

Such TOP2B-induced double-strand breaks are accompanied by at least four enzymes of the non-homologous end joining (NHEJ) DNA repair pathway (DNA-PKcs, KU70, KU80, and DNA ligase IV) (see Figure). These enzymes repair the double-strand breaks within about 15 minutes to two hours. The double-strand breaks in the promoter are thus associated with TOP2B and at least these four repair enzymes. These proteins are present simultaneously on a single promoter nucleosome (about 147 base pairs of DNA are wrapped around a single nucleosome) located near the transcription start site of their target gene.

The double-strand break introduced by TOP2B apparently frees the part of the promoter at an RNA polymerase-bound transcription start site to physically move to its associated enhancer (see regulatory sequence). This allows the enhancer, with its bound transcription factors and mediator proteins, to interact directly with the RNA polymerase paused at the transcription start site and start transcription.

Contextual fear conditioning in the mouse causes the mouse to have a long-term memory and fear of the location in which it occurred. Contextual fear conditioning causes hundreds of double-strand breaks (DSBs) in neurons of the mouse medial prefrontal cortex (mPFC) and hippocampus (see Figure: Brain regions involved in memory formation). These DSBs predominantly activate genes involved in synaptic processes that are important for learning and memory.

In infancy

Until the mid-1980s it was assumed that infants could not encode, retain, and retrieve information. A growing body of research now indicates that infants as young as 6 months can recall information after a 24-hour delay. Research has also revealed that as infants grow older they can store information for longer periods of time: 6-month-olds can recall information after a 24-hour period, 9-month-olds after up to five weeks, and 20-month-olds after as long as twelve months. In addition, studies have shown that with age, infants can store information faster. Whereas 14-month-olds can recall a three-step sequence after being exposed to it once, 6-month-olds need approximately six exposures in order to remember it. Although 6-month-olds can recall information over the short term, they have difficulty recalling the temporal order of information. Only by 9 months of age can infants recall the actions of a two-step sequence in the correct temporal order, that is, recalling step 1 and then step 2. For example, when asked to imitate a two-step action sequence (such as putting a toy car in the base and pushing in the plunger to make the toy roll to the other end), 9-month-olds tend to imitate the actions in the correct order (step 1 and then step 2), whereas younger infants (6-month-olds) can recall only one step of a two-step sequence. Researchers have suggested that these age differences are probably because the dentate gyrus of the hippocampus and the frontal components of the neural network are not fully developed at the age of 6 months.

The term 'infantile amnesia' refers to the phenomenon of accelerated forgetting during infancy. Importantly, infantile amnesia is not unique to humans, and preclinical research using rodent models provides insight into the precise neurobiology of this phenomenon. A review of the literature by behavioral neuroscientist Jee Hyun Kim suggests that accelerated forgetting during early life is at least partly due to rapid brain growth during this period.

Aging

One of the key concerns of older adults is the experience of memory loss, especially as it is one of the hallmark symptoms of Alzheimer's disease. However, memory loss in normal aging is qualitatively different from the kind of memory loss associated with a diagnosis of Alzheimer's (Budson & Price, 2005). Research has revealed that individuals' performance on memory tasks that rely on frontal regions declines with age. Older adults tend to exhibit deficits on tasks that involve knowing the temporal order in which they learned information; source memory tasks, which require them to remember the specific circumstances or context in which they learned information; and prospective memory tasks, which involve remembering to perform an act at a future time. Older adults can manage their problems with prospective memory by using appointment books, for example.

Gene transcription profiles were determined for the human frontal cortex