In

The introduction of the term ''database'' coincided with the availability of direct-access storage (disks and drums) from the mid-1960s onwards. The term represented a contrast with the tape-based systems of the past, allowing shared interactive use rather than daily

The introduction of the term ''database'' coincided with the availability of direct-access storage (disks and drums) from the mid-1960s onwards. The term represented a contrast with the tape-based systems of the past, allowing shared interactive use rather than daily

The use of primary keys (user-oriented identifiers) to represent cross-table relationships, rather than disk addresses, had two primary motivations. From an engineering perspective, it enabled tables to be relocated and resized without expensive database reorganization. But Codd was more interested in the difference in semantics: the use of explicit identifiers made it easier to define update operations with clean mathematical definitions, and it also enabled query operations to be defined in terms of the established discipline of

The use of primary keys (user-oriented identifiers) to represent cross-table relationships, rather than disk addresses, had two primary motivations. From an engineering perspective, it enabled tables to be relocated and resized without expensive database reorganization. But Codd was more interested in the difference in semantics: the use of explicit identifiers made it easier to define update operations with clean mathematical definitions, and it also enabled query operations to be defined in terms of the established discipline of

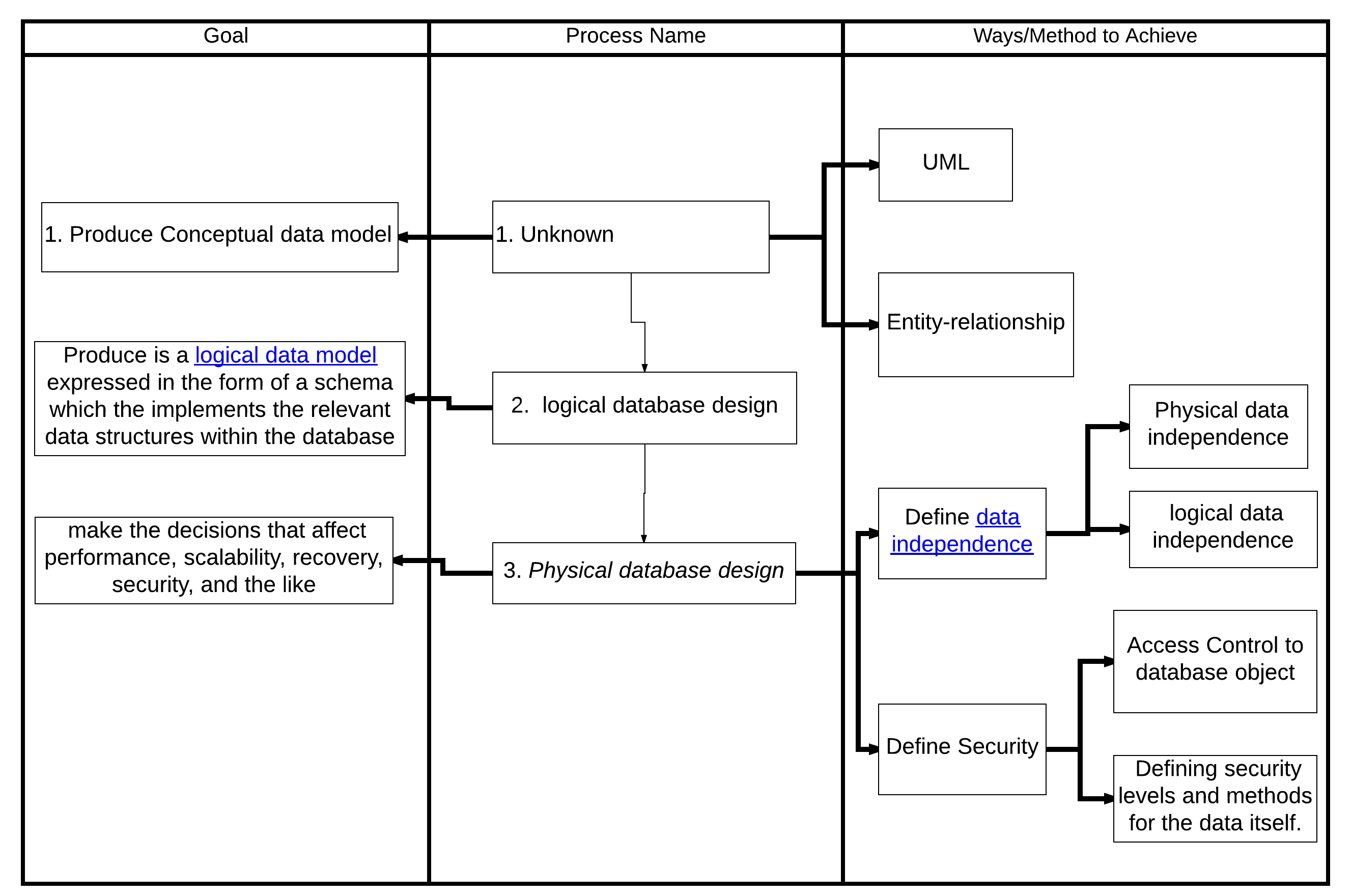

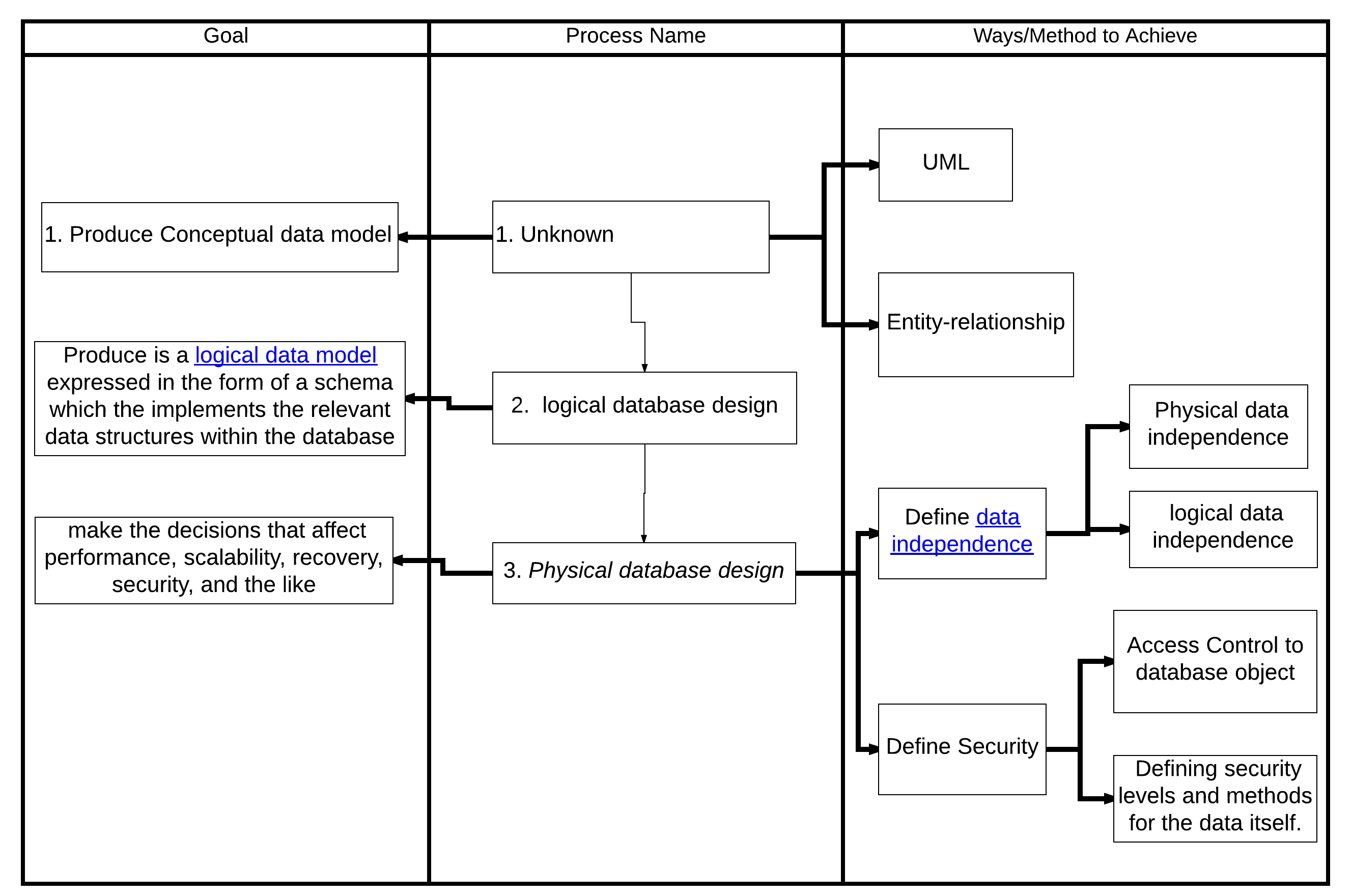

The first task of a database designer is to produce a

The first task of a database designer is to produce a

A database model is a type of data model that determines the logical structure of a database and fundamentally determines in which manner

A database model is a type of data model that determines the logical structure of a database and fundamentally determines in which manner

Encyclopedia of Database Systems

4100 p. 60 illus. . * Gray, J. and Reuter, A. ''Transaction Processing: Concepts and Techniques'', 1st edition, Morgan Kaufmann Publishers, 1992. * Kroenke, David M. and David J. Auer. ''Database Concepts.'' 3rd ed. New York: Prentice, 2007. *

Database Management Systems

'. *

Database System Concepts

'. * * Teorey, T.; Lightstone, S. and Nadeau, T. ''Database Modeling & Design: Logical Design'', 4th edition, Morgan Kaufmann Press, 2005. . *

CMU Database courses playlist

' *

MIT OCW 6.830 , Fall 2010 , Database Systems

' *

Berkeley CS W186

'

DB File extension

nbsp;– information about files with the DB extension {{Authority control

computing

Computing is any goal-oriented activity requiring, benefiting from, or creating computer, computing machinery. It includes the study and experimentation of algorithmic processes, and the development of both computer hardware, hardware and softw ...

, a database is an organized collection of data

Data ( , ) are a collection of discrete or continuous values that convey information, describing the quantity, quality, fact, statistics, other basic units of meaning, or simply sequences of symbols that may be further interpreted for ...

or a type of data store

A data store is a repository for persistently storing and managing collections of data which include not just repositories like databases, but also simpler store types such as simple files, emails, etc.

A ''database'' is a collection of data that ...

based on the use of a database management system (DBMS), the software

Software consists of computer programs that instruct the Execution (computing), execution of a computer. Software also includes design documents and specifications.

The history of software is closely tied to the development of digital comput ...

that interacts with end user

In product development, an end user (sometimes end-user) is a person who ultimately uses or is intended to ultimately use a product. The end user stands in contrast to users who support or maintain the product, such as sysops, system administrato ...

s, applications

Application may refer to:

Mathematics and computing

* Application software, computer software designed to help the user to perform specific tasks

** Application layer, an abstraction layer that specifies protocols and interface methods used in a ...

, and the database itself to capture and analyze the data. The DBMS additionally encompasses the core facilities provided to administer the database. The sum total of the database, the DBMS and the associated applications can be referred to as a database system. Often the term "database" is also used loosely to refer to any of the DBMS, the database system or an application associated with the database.

Before digital storage and retrieval of data became widespread, index cards were used for data storage in a wide range of applications and environments: in the home to record and store recipes, shopping lists, contact information and other organizational data; in business to record presentation notes, project research and notes, and contact information; in schools as flash cards or other visual aids; and in academic research to hold data such as bibliographical citations or notes in a card file. Professional book indexers used index cards in the creation of book indexes until they were replaced by indexing software in the 1980s and 1990s.

Small databases can be stored on a file system, while large databases are hosted on computer clusters or cloud storage. The design of databases spans formal techniques and practical considerations, including data modeling, efficient data representation and storage, query languages, security and privacy of sensitive data, and distributed computing issues, including supporting concurrent access and fault tolerance.

Computer scientists may classify database management systems according to the database models that they support. Relational databases became dominant in the 1980s. These model data as rows and columns in a series of tables, and the vast majority use SQL for writing and querying data. In the 2000s, non-relational databases became popular, collectively referred to as NoSQL, because they use different query languages.

Terminology and overview

Formally, a "database" refers to a set of related data accessed through the use of a "database management system" (DBMS), which is an integrated set of computer software that allows users to interact with one or more databases and provides access to all of the data contained in the database (although restrictions may exist that limit access to particular data). The DBMS provides various functions that allow entry, storage and retrieval of large quantities of information and provides ways to manage how that information is organized.

Because of the close relationship between them, the term "database" is often used casually to refer to both a database and the DBMS used to manipulate it.

Outside the world of professional information technology, the term ''database'' is often used to refer to any collection of related data (such as a spreadsheet or a card index) as size and usage requirements typically necessitate use of a database management system.

Existing DBMSs provide various functions that allow management of a database and its data which can be classified into four main functional groups:

* Data definition – Creation, modification and removal of definitions that detail how the data is to be organized.

* Update – Insertion, modification, and deletion of the data itself.

* Retrieval – Selecting data according to specified criteria (e.g., a query, a position in a hierarchy, or a position in relation to other data) and providing that data either directly to the user, or making it available for further processing by the database itself or by other applications. The retrieved data may be made available in a more or less direct form without modification, as it is stored in the database, or in a new form obtained by altering it or combining it with existing data from the database.

* Administration – Registering and monitoring users, enforcing data security, monitoring performance, maintaining data integrity, dealing with concurrency control, and recovering information that has been corrupted by some event such as an unexpected system failure.
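The four groups above can be illustrated with a minimal sketch using Python's built-in `sqlite3` module. The `recipe` table, its columns, and the sample rows are hypothetical and chosen only for illustration. SQLite is used here simply because it ships with Python, and its `PRAGMA integrity_check` stands in for the much broader administration facilities of a full DBMS.

```python
import sqlite3


def demo_functional_groups():
    """Exercise the four DBMS function groups on an in-memory SQLite database."""
    conn = sqlite3.connect(":memory:")
    cur = conn.cursor()

    # Data definition: create the structure that organizes the data.
    # (Hypothetical table and columns, for illustration only.)
    cur.execute(
        "CREATE TABLE recipe ("
        "id INTEGER PRIMARY KEY, name TEXT NOT NULL, minutes INTEGER)"
    )

    # Update: insert, modify, and delete the data itself.
    cur.executemany(
        "INSERT INTO recipe (name, minutes) VALUES (?, ?)",
        [("Pierogi", 45), ("Borscht", 90), ("Test entry", 0)],
    )
    cur.execute("UPDATE recipe SET minutes = 60 WHERE name = ?", ("Borscht",))
    cur.execute("DELETE FROM recipe WHERE name = ?", ("Test entry",))
    conn.commit()

    # Retrieval: select data according to specified criteria.
    rows = cur.execute(
        "SELECT name, minutes FROM recipe WHERE minutes <= ? ORDER BY name",
        (60,),
    ).fetchall()

    # Administration: ask the engine to verify the integrity of the database.
    integrity = cur.execute("PRAGMA integrity_check").fetchone()[0]

    conn.close()
    return rows, integrity


rows, integrity = demo_functional_groups()
print(rows)       # the recipes that take at most 60 minutes
print(integrity)  # SQLite reports "ok" when no problems are found
```

A server-based DBMS would add user registration, access control, and performance monitoring to the administration group; an embedded engine such as SQLite covers only part of it.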

Both a database and its DBMS conform to the principles of a particular database model. "Database system" refers collectively to the database model, database management system, and database.

Physically, database servers are dedicated computers that hold the actual databases and run only the DBMS and related software. Database servers are usually multiprocessor computers, with generous memory and RAID disk arrays used for stable storage. Hardware database accelerators, connected to one or more servers via a high-speed channel, are also used in large-volume transaction processing environments. DBMSs are found at the heart of most database applications. DBMSs may be built around a custom multitasking kernel with built-in networking support, but modern DBMSs typically rely on a standard operating system to provide these functions.

Since DBMSs comprise a significant market, computer and storage vendors often take into account DBMS requirements in their own development plans.

Databases and DBMSs can be categorized according to the database model(s) that they support (such as relational or XML), the type(s) of computer they run on (from a server cluster to a mobile phone), the query language(s) used to access the database (such as SQL or XQuery), and their internal engineering, which affects performance, scalability, resilience, and security.

History

The sizes, capabilities, and performance of databases and their respective DBMSs have grown by orders of magnitude. These performance increases were enabled by technological progress in the areas of processors, computer memory, computer storage, and computer networks. The concept of a database was made possible by the emergence of direct access storage media such as magnetic disks, which became widely available in the mid-1960s; earlier systems relied on sequential storage of data on magnetic tape. The subsequent development of database technology can be divided into three eras based on data model or structure: navigational, SQL/relational, and post-relational.

The two main early navigational data models were the hierarchical model and the CODASYL model (network model). These were characterized by the use of pointers (often physical disk addresses) to follow relationships from one record to another.

The relational model, first proposed in 1970 by Edgar F. Codd, departed from this tradition by insisting that applications should search for data by content, rather than by following links. The relational model employs sets of ledger-style tables, each used for a different type of entity. Only in the mid-1980s did computing hardware become powerful enough to allow the wide deployment of relational systems (DBMSs plus applications). By the early 1990s, however, relational systems dominated in all large-scale data processing applications, and they remain dominant: IBM Db2, Oracle, MySQL, and Microsoft SQL Server are the most searched DBMSs. The dominant database language, standardized SQL for the relational model, has influenced database languages for other data models.
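As a rough illustration of searching by content rather than by following links, the sketch below uses Python's built-in `sqlite3` module. The `customer` and `purchase` tables and their rows are hypothetical. The two tables are related only through matching `customer_id` values, and the query states which rows are wanted without naming any storage location.

```python
import sqlite3


def purchases_for_city(city):
    """Return (customer name, item) pairs for customers in the given city."""
    conn = sqlite3.connect(":memory:")
    # Hypothetical schema and data: one table per type of entity.
    conn.executescript(
        """
        CREATE TABLE customer (
            customer_id INTEGER PRIMARY KEY,
            name TEXT,
            city TEXT
        );
        CREATE TABLE purchase (
            purchase_id INTEGER PRIMARY KEY,
            customer_id INTEGER REFERENCES customer(customer_id),
            item TEXT
        );
        INSERT INTO customer VALUES (1, 'Ada', 'Warsaw'), (2, 'Ben', 'Krakow');
        INSERT INTO purchase VALUES (10, 1, 'disk'), (11, 2, 'tape'), (12, 1, 'drum');
        """
    )
    # The relationship is expressed by comparing key values in the join
    # condition; no record holds a pointer or disk address to another.
    rows = conn.execute(
        "SELECT c.name, p.item "
        "FROM customer AS c "
        "JOIN purchase AS p ON p.customer_id = c.customer_id "
        "WHERE c.city = ? "
        "ORDER BY p.item",
        (city,),
    ).fetchall()
    conn.close()
    return rows


print(purchases_for_city("Warsaw"))
```

Because the query refers only to values, the engine is free to move or reorganize the underlying rows without the application changing.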

Object databases were developed in the 1980s to overcome the inconvenience of object–relational impedance mismatch, which led to the coining of the term "post-relational" and also the development of hybrid object–relational databases.

The next generation of post-relational databases in the late 2000s became known as NoSQL databases, introducing fast key–value stores and document-oriented databases. A competing "next generation" known as NewSQL databases attempted new implementations that retained the relational/SQL model while aiming to match the high performance of NoSQL compared to commercially available relational DBMSs.

1960s, navigational DBMS

The introduction of the term ''database'' coincided with the availability of direct-access storage (disks and drums) from the mid-1960s onwards. The term represented a contrast with the tape-based systems of the past, allowing shared interactive use rather than daily batch processing. The Oxford English Dictionary cites a 1962 report by the System Development Corporation of California as the first to use the term "data-base" in a specific technical sense.

As computers grew in speed and capability, a number of general-purpose database systems emerged; by the mid-1960s a number of such systems had come into commercial use. Interest in a standard began to grow, and Charles Bachman, author of one such product, the Integrated Data Store (IDS), founded the Database Task Group within CODASYL, the group responsible for the creation and standardization of COBOL. In 1971, the Database Task Group delivered their standard, which generally became known as the ''CODASYL approach'', and soon a number of commercial products based on this approach entered the market.

The CODASYL approach offered applications the ability to navigate around a linked data set which was formed into a large network. Applications could find records by one of three methods:

#Use of a primary key (known as a CALC key, typically implemented by hashing)

#Navigating relationships (called ''sets'') from one record to another

#Scanning all the records in a sequential order

Later systems added B-trees to provide alternate access paths. Many CODASYL databases also added a declarative query language for end users (as distinct from the navigational API). However, CODASYL databases were complex and required significant training and effort to produce useful applications.
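The three access methods listed above can be illustrated with a small in-memory sketch. This is not CODASYL syntax or any vendor's API: the `Record` and `NavigationalStore` classes and all their method names are hypothetical, a Python dictionary stands in for the hashed CALC-key lookup, and a chain of `next_in_set` pointers stands in for a set.

```python
from dataclasses import dataclass
from typing import Dict, Iterator, List, Optional


@dataclass
class Record:
    """A stored record; the pointer fields model membership in a set."""
    calc_key: str
    data: dict
    first_member: Optional["Record"] = None  # head of the set this record owns
    next_in_set: Optional["Record"] = None   # next member of the owner's set


class NavigationalStore:
    """Hypothetical store offering the three navigational access methods."""

    def __init__(self) -> None:
        self._by_calc_key: Dict[str, Record] = {}  # hashed access path
        self._all_records: List[Record] = []       # records in storage order

    def store(self, record: Record, owner: Optional[Record] = None) -> Record:
        self._by_calc_key[record.calc_key] = record
        self._all_records.append(record)
        if owner is not None:
            # Append the record to the end of the owner's set.
            if owner.first_member is None:
                owner.first_member = record
            else:
                last = owner.first_member
                while last.next_in_set is not None:
                    last = last.next_in_set
                last.next_in_set = record
        return record

    def find_by_calc_key(self, key: str) -> Optional[Record]:
        """Method 1: direct lookup by primary (CALC) key."""
        return self._by_calc_key.get(key)

    def members_of(self, owner: Record) -> Iterator[Record]:
        """Method 2: navigate a set from its owner, one member at a time."""
        current = owner.first_member
        while current is not None:
            yield current
            current = current.next_in_set

    def scan(self) -> Iterator[Record]:
        """Method 3: visit every record in sequential order."""
        return iter(self._all_records)


store = NavigationalStore()
dept = store.store(Record("D1", {"name": "Engineering"}))
store.store(Record("E1", {"name": "Ada"}), owner=dept)
store.store(Record("E2", {"name": "Grace"}), owner=dept)

member_names = [member.data["name"] for member in store.members_of(dept)]
```

In this sketch the application itself decides which path to follow, which is the defining trait of the navigational style.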

IBM also had its own DBMS in 1966, known as Information Management System (IMS). IMS was a development of software written for the Apollo program on the System/360. IMS was generally similar in concept to CODASYL, but used a strict hierarchy for its model of data navigation instead of CODASYL's network model. Both concepts later became known as navigational databases due to the way data was accessed: the term was popularized by Bachman's 1973 Turing Award presentation ''The Programmer as Navigator''. IMS is classified by IBM as a hierarchical database. IDMS and Cincom Systems' TOTAL databases are classified as network databases. IMS remains in use.

1970s, relational DBMS

Edgar F. Codd worked at IBM in San Jose, California, in an office primarily involved in the development of hard disk systems. He was unhappy with the navigational model of the CODASYL approach, notably the lack of a "search" facility. In 1970, he wrote a number of papers that outlined a new approach to database construction that eventually culminated in the groundbreaking ''A Relational Model of Data for Large Shared Data Banks''.

The paper described a new system for storing and working with large databases. Instead of records being stored in some sort of linked list of free-form records as in CODASYL, Codd's idea was to organize the data as a number of "tables", each table being used for a different type of entity. Each table would contain a fixed number of columns containing the attributes of the entity. One or more columns of each table were designated as a primary key by which the rows of the table could be uniquely identified; cross-references between tables always used these primary keys, rather than disk addresses, and queries would join tables based on these key relationships, using a set of operations based on the mathematical system of relational calculus (from which the model takes its name). Splitting the data into a set of normalized tables (or ''relations'') aimed to ensure that each "fact" was only stored once, thus simplifying update operations. Virtual tables called ''views'' could present the data in different ways for different users, but views could not be directly updated.
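As a rough illustration of tables, primary keys, key-based joins, and views, the following sketch uses SQL through Python's standard `sqlite3` module. SQL is only a convenient stand-in here, not the notation of Codd's paper, and the `department` and `employee` tables, their columns, and the `employee_directory` view are invented for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
    -- One table per entity type, each with a primary key.
    CREATE TABLE department (
        dept_id INTEGER PRIMARY KEY,
        name    TEXT NOT NULL
    );
    CREATE TABLE employee (
        emp_id  INTEGER PRIMARY KEY,
        name    TEXT NOT NULL,
        -- Cross-reference by key value, not by disk address.
        dept_id INTEGER NOT NULL REFERENCES department(dept_id)
    );
    INSERT INTO department VALUES (1, 'Research'), (2, 'Sales');
    INSERT INTO employee VALUES (10, 'Ada', 1), (11, 'Grace', 1), (12, 'Edgar', 2);

    -- A view presents the joined data as a virtual table.
    CREATE VIEW employee_directory AS
        SELECT e.name AS employee, d.name AS department
        FROM employee AS e
        JOIN department AS d ON d.dept_id = e.dept_id;
""")

rows = conn.execute(
    "SELECT employee, department FROM employee_directory ORDER BY employee"
).fetchall()
# rows == [('Ada', 'Research'), ('Edgar', 'Sales'), ('Grace', 'Research')]
```

Each department name is stored once, so renaming a department is a single-row update that every query through the view then reflects.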

Codd used mathematical terms to define the model: relations, tuples, and domains rather than tables, rows, and columns. The terminology that is now familiar came from early implementations. Codd would later criticize the tendency for practical implementations to depart from the mathematical foundations on which the model was based.

The use of explicit identifiers also enabled query operations to be defined in terms of the established discipline of first-order predicate calculus; because these operations have clean mathematical properties, it becomes possible to rewrite queries in provably correct ways, which is the basis of query optimization. There is no loss of expressiveness compared with the hierarchic or network models, though the connections between tables are no longer so explicit.

In the hierarchic and network models, records were allowed to have a complex internal structure. For example, the salary history of an employee might be represented as a "repeating group" within the employee record. In the relational model, the process of normalization led to such internal structures being replaced by data held in multiple tables, connected only by logical keys.

For instance, a common use of a database system is to track information about users: their names, login information, and various addresses and phone numbers. In the navigational approach, all of this data would be placed in a single variable-length record. In the relational approach, the data would be ''normalized'' into a user table, an address table and a phone number table (for instance). Records would be created in these optional tables only if the address or phone numbers were actually provided.
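The normalization step described above can be sketched in plain Python. The input record layout, the field names, and the `normalize` function are all assumptions made for illustration; lists of dictionaries stand in for the three tables.

```python
def normalize(user_records):
    """Split variable-length user records into three flat 'tables'."""
    users, addresses, phones = [], [], []
    for record in user_records:
        user_id = record["user_id"]
        users.append(
            {"user_id": user_id, "name": record["name"], "login": record["login"]}
        )
        # Rows in the optional tables exist only when data was provided;
        # user_id is the logical key linking them back to the user row.
        for address in record.get("addresses", []):
            addresses.append({"user_id": user_id, "address": address})
        for phone in record.get("phones", []):
            phones.append({"user_id": user_id, "phone": phone})
    return users, addresses, phones


records = [
    {
        "user_id": 1,
        "name": "Ada",
        "login": "ada",
        "addresses": ["12 Mill Lane"],
        "phones": ["555-0100", "555-0101"],
    },
    {"user_id": 2, "name": "Grace", "login": "grace"},  # no address or phone
]

users, addresses, phones = normalize(records)
```

The second user contributes one row to the user table and none to the address or phone tables.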

As well as identifying rows/records using logical identifiers rather than disk addresses, Codd changed the way in which applications assembled data from multiple records. Rather than requiring applications to gather data one record at a time by navigating the links, they would use a declarative query language that expressed what data was required, rather than the access path by which it should be found. Finding an efficient access path to the data became the responsibility of the database management system, rather than the application programmer. This process, called query optimization, depended on the fact that queries were expressed in terms of mathematical logic.
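One way to observe this division of labour is to ask a DBMS which access path it chose for an unchanged query. The sketch below uses SQLite's `EXPLAIN QUERY PLAN` through Python's `sqlite3` module; the `users` table, the index name `idx_users_login`, and the helper `access_path` are invented for the example, and the exact wording of the plan text differs between SQLite versions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, login TEXT, name TEXT)")
conn.executemany(
    "INSERT INTO users (login, name) VALUES (?, ?)",
    [(f"user{i}", f"Name {i}") for i in range(1000)],
)

# The query states what is wanted, not how to find it.
QUERY = "SELECT name FROM users WHERE login = ?"


def access_path(connection, sql, params):
    """Return the planner's description of how it will execute the query."""
    rows = connection.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return " | ".join(row[3] for row in rows)  # the fourth column holds the detail text


plan_without_index = access_path(conn, QUERY, ("user42",))

# Adding an index changes the access path; the query text stays the same.
conn.execute("CREATE INDEX idx_users_login ON users (login)")
plan_with_index = access_path(conn, QUERY, ("user42",))

result = conn.execute(QUERY, ("user42",)).fetchall()
```

The application code is identical before and after the index is created; only the plan reported by the DBMS changes.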

Codd's paper inspired teams at various universities to research the subject, including one at University of California, Berkeley led by Eugene Wong and Michael Stonebraker, who started INGRES using funding that had already been allocated for a geographical database project and student programmers to produce code. Beginning in 1973, INGRES delivered its first test products which were generally ready for widespread use in 1979. INGRES was similar to System R in a number of ways, including the use of a "language" for data access, known as QUEL. Over time, INGRES moved to the emerging SQL standard.

IBM itself did one test implementation of the relational model, PRTV, and a production one, Business System 12, both now discontinued. Honeywell wrote MRDS for Multics, and now there are two new implementations: Alphora Dataphor and Rel. Most other DBMS implementations usually called ''relational'' are actually SQL DBMSs.

In 1970, the University of Michigan began development of the MICRO Information Management System based on D.L. Childs' Set-Theoretic Data model. The university in 1974 hosted a debate between Codd and Bachman which Bruce Lindsay of IBM later described as "throwing lightning bolts at each other!". MICRO was used to manage very large data sets by the US Department of Labor, the U.S. Environmental Protection Agency, and researchers from the University of Alberta, the University of Michigan, and Wayne State University. It ran on IBM mainframe computers using the Michigan Terminal System. The system remained in production until 1998.

Integrated approach

In the 1970s and 1980s, attempts were made to build database systems with integrated hardware and software. The underlying philosophy was that such integration would provide higher performance at a lower cost. Examples were IBM System/38, the early offering of Teradata, and the Britton Lee, Inc. database machine.

Another approach to hardware support for database management was ICL's CAFS accelerator, a hardware disk controller with programmable search capabilities. In the long term, these efforts were generally unsuccessful because specialized database machines could not keep pace with the rapid development and progress of general-purpose computers. Thus most database systems nowadays are software systems running on general-purpose hardware, using general-purpose computer data storage. However, this idea is still pursued in certain applications by some companies like Netezza and Oracle (Exadata).

Late 1970s, SQL DBMS

IBM formed a team led by Codd that started working on a prototype system, ''System R'', despite opposition from others at the company. The first version was ready in 1974/5, and work then started on multi-table systems in which the data could be split so that all of the data for a record (some of which is optional) did not have to be stored in a single large "chunk". Subsequent multi-user versions were tested by customers in 1978 and 1979, by which time a standardized query language – SQL – had been added. Codd's ideas were establishing themselves as both workable and superior to CODASYL, pushing IBM to develop a true production version of System R, known as ''SQL/DS'', and, later, ''Database 2'' (IBM Db2).

Larry Ellison's Oracle Database (or more simply, Oracle) started from a different chain, based on IBM's papers on System R. Though Oracle V1 implementations were completed in 1978, it was not until Oracle Version 2 in 1979 that Ellison beat IBM to market.

Stonebraker went on to apply the lessons from INGRES to develop a new database, Postgres, which is now known as PostgreSQL. PostgreSQL is often used for global mission-critical applications (the .org and .info domain name registries use it as their primary data store, as do many large companies and financial institutions).

In Sweden, Codd's paper was also read, and Mimer SQL was developed in the mid-1970s at Uppsala University. In 1984, this project was consolidated into an independent enterprise.

Another data model, the entity–relationship model, emerged in 1976 and gained popularity for database design as it emphasized a more familiar description than the earlier relational model. Later on, entity–relationship constructs were retrofitted as a data modeling construct for the relational model, and the difference between the two has become irrelevant.

1980s, on the desktop

Besides IBM and various software companies such as Sybase and Informix Corporation, most large computer hardware vendors by the 1980s had their own database systems such as DEC's VAX Rdb/VMS. The decade ushered in the age of desktop computing. The new computers empowered their users with spreadsheets like Lotus 1-2-3 and database software like dBASE. The dBASE product was lightweight and easy for any computer user to understand out of the box. C. Wayne Ratliff, the creator of dBASE, stated: "dBASE was different from programs like BASIC, C, FORTRAN, and COBOL in that a lot of the dirty work had already been done. The data manipulation is done by dBASE instead of by the user, so the user can concentrate on what he is doing, rather than having to mess with the dirty details of opening, reading, and closing files, and managing space allocation." dBASE was one of the top selling software titles in the 1980s and early 1990s.

1990s, object-oriented

By the start of the decade, databases had become a billion-dollar industry in about ten years. The 1990s, along with a rise in object-oriented programming, saw a shift in how data in various databases was handled. Programmers and designers began to treat the data in their databases as objects. That is to say that if a person's data were in a database, that person's attributes, such as their address, phone number, and age, were now considered to belong to that person instead of being extraneous data. This allows for relations between data to be related to objects and their attributes and not to individual fields. The term "object–relational impedance mismatch" described the inconvenience of translating between programmed objects and database tables. Object databases and object–relational databases attempt to solve this problem by providing an object-oriented language (sometimes as extensions to SQL) that programmers can use as an alternative to purely relational SQL. On the programming side, libraries known as object–relational mappings (ORMs) attempt to solve the same problem.
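The translation work that an ORM automates can be shown with a deliberately tiny, hand-written mapper. This is a sketch of the idea only, not the API of any real ORM library: the `Person` class, the `PersonMapper` class, and the `person` table are hypothetical, and Python's standard `sqlite3` module supplies the relational side.

```python
import sqlite3
from dataclasses import astuple, dataclass
from typing import Optional


@dataclass
class Person:
    """The object as the application sees it."""
    id: int
    name: str
    age: int


class PersonMapper:
    """Hypothetical mapper translating Person objects to and from table rows."""

    def __init__(self, connection: sqlite3.Connection) -> None:
        self.connection = connection
        self.connection.execute(
            "CREATE TABLE IF NOT EXISTS person "
            "(id INTEGER PRIMARY KEY, name TEXT, age INTEGER)"
        )

    def save(self, person: Person) -> None:
        # Object -> row: the attributes become column values.
        self.connection.execute(
            "INSERT OR REPLACE INTO person (id, name, age) VALUES (?, ?, ?)",
            astuple(person),
        )

    def get(self, person_id: int) -> Optional[Person]:
        # Row -> object: the column values become attributes again.
        row = self.connection.execute(
            "SELECT id, name, age FROM person WHERE id = ?", (person_id,)
        ).fetchone()
        return Person(*row) if row is not None else None


conn = sqlite3.connect(":memory:")
mapper = PersonMapper(conn)
mapper.save(Person(id=1, name="Ada", age=36))
loaded = mapper.get(1)
```

Calling code works only with `Person` objects; the SQL stays inside the mapper.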

2000s, NoSQL and NewSQL

Database sales grew rapidly during the dotcom bubble and, after its end, the rise of ecommerce. The popularity of open source databases such as MySQL has grown since 2000, to the extent that Ken Jacobs of Oracle said in 2005 that perhaps "these guys are doing to us what we did to IBM".

XML databases are a type of structured document-oriented database that allows querying based on XML document attributes. XML databases are mostly used in applications where the data is conveniently viewed as a collection of documents, with a structure that can vary from the very flexible to the highly rigid: examples include scientific articles, patents, tax filings, and personnel records.
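Querying a collection of documents by attribute can be sketched with Python's standard `xml.etree.ElementTree` module. A real XML database would provide indexing and a query language; the in-memory list, the sample documents, and the `find_by_attribute` helper below are assumptions made for illustration.

```python
import xml.etree.ElementTree as ET

# Documents in one collection need not share a structure.
DOCUMENTS = [
    '<patent id="P-1" year="2001"><title>Valve</title></patent>',
    '<article id="A-7" year="2003"><title>Query rewriting</title>'
    "<author>Lee</author></article>",
    '<patent id="P-2" year="2003"><title>Hinge</title></patent>',
]

collection = [ET.fromstring(text) for text in DOCUMENTS]


def find_by_attribute(docs, name, value):
    """Return the documents whose root element has attribute name == value."""
    return [doc for doc in docs if doc.get(name) == value]


from_2003 = find_by_attribute(collection, "year", "2003")
titles = [doc.findtext("title") for doc in from_2003]
# titles == ['Query rewriting', 'Hinge']
```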

NoSQL databases are often very fast, do not require fixed table schemas, avoid join operations by storing denormalized data, and are designed to scale horizontally.
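A minimal sketch of denormalized storage follows, with a Python dictionary standing in for a key-value or document store. The `put_order` function and the order layout are hypothetical and do not correspond to any particular NoSQL product.

```python
document_store = {}  # key -> document; no fixed schema is enforced


def put_order(order_id, customer, items):
    """Store an order with a copy of the customer data embedded in it."""
    document_store[order_id] = {
        "order_id": order_id,
        "customer": dict(customer),  # denormalized: duplicated in every order
        "items": list(items),
    }


ada = {"name": "Ada", "city": "London"}
put_order("o-1", ada, [{"sku": "X1", "qty": 2}])
put_order("o-2", ada, [{"sku": "Y9", "qty": 1}])

# Reading an order is one key lookup; no join is needed to get the customer.
order = document_store["o-1"]
customer_city = order["customer"]["city"]
```

The trade-off is that the customer's data now exists in two places, so a change of city must be written to every order that embeds it.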

In recent years, there has been a strong demand for massively distributed databases with high partition tolerance, but according to the CAP theorem, it is impossible for a distributed system to simultaneously provide consistency, availability, and partition tolerance guarantees. A distributed system can satisfy any two of these guarantees at the same time, but not all three. For that reason, many NoSQL databases are using what is called eventual consistency to provide both availability and partition tolerance guarantees with a reduced level of data consistency.
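Eventual consistency can be illustrated with two replicas that accept writes independently and reconcile later. The sketch below assumes a last-write-wins rule based on caller-supplied timestamps that are never equal; that rule, the `Replica` class, and its method names are choices made for the example, not a description of any particular database.

```python
class Replica:
    """Hypothetical replica that stays available by accepting writes locally."""

    def __init__(self, name):
        self.name = name
        self.store = {}  # key -> (timestamp, value)

    def write(self, key, value, timestamp):
        # Last-write-wins: keep the value carrying the newest timestamp.
        current = self.store.get(key)
        if current is None or timestamp > current[0]:
            self.store[key] = (timestamp, value)

    def read(self, key):
        entry = self.store.get(key)
        return entry[1] if entry is not None else None

    def sync_from(self, other):
        """Apply the other replica's entries once the two can communicate."""
        for key, (timestamp, value) in other.store.items():
            self.write(key, value, timestamp)


a, b = Replica("a"), Replica("b")

# During a partition, each replica accepts writes on its own.
a.write("cart", ["book"], timestamp=1)
b.write("cart", ["book", "pen"], timestamp=2)
stale_read = a.read("cart")  # replica a has not seen the newer write yet

# After the partition heals, the replicas exchange state and converge.
a.sync_from(b)
b.sync_from(a)
converged = a.read("cart") == b.read("cart")
```

Between the second write and the synchronization, a reader of replica `a` sees an older value, which is the reduced consistency that the approach accepts.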

NewSQL is a class of modern relational databases that aims to provide the same scalable performance of NoSQL systems for online transaction processing (read-write) workloads while still using SQL and maintaining the ACID guarantees of a traditional database system.
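The atomicity part of the ACID guarantees can be demonstrated with a single-node sketch using Python's standard `sqlite3` module. SQLite is not a NewSQL system and is used here only because it is readily available; the `account` table and the `transfer` function are invented for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE account ("
    "id TEXT PRIMARY KEY, "
    "balance INTEGER NOT NULL CHECK (balance >= 0))"
)
conn.executemany("INSERT INTO account VALUES (?, ?)", [("alice", 100), ("bob", 50)])
conn.commit()


def transfer(connection, source, target, amount):
    """Move money between accounts; either both updates apply or neither does."""
    try:
        with connection:  # commits on success, rolls back if an exception is raised
            connection.execute(
                "UPDATE account SET balance = balance + ? WHERE id = ?",
                (amount, target),
            )
            connection.execute(
                "UPDATE account SET balance = balance - ? WHERE id = ?",
                (amount, source),
            )
        return True
    except sqlite3.IntegrityError:
        # The CHECK constraint rejected an overdraft; the credit was undone too.
        return False


ok = transfer(conn, "alice", "bob", 30)
failed = transfer(conn, "alice", "bob", 500)
balances = dict(conn.execute("SELECT id, balance FROM account"))
# balances == {'alice': 70, 'bob': 80}
```

The second transfer credits `bob` before the debit fails, yet the rollback leaves no trace of the partial update.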

Use cases

Databases are used to support internal operations of organizations and to underpin online interactions with customers and suppliers (see Enterprise software).

Databases are used to hold administrative information and more specialized data, such as engineering data or economic models. Examples include computerized library systems, flight reservation systems, computerized parts inventory systems, and many content management systems that store websites as collections of webpages in a database.

Classification

One way to classify databases involves the type of their contents, for example: bibliographic, document-text, statistical, or multimedia objects. Another way is by their application area, for example: accounting, music compositions, movies, banking, manufacturing, or insurance. A third way is by some technical aspect, such as the database structure or interface type. This section lists a few of the adjectives used to characterize different kinds of databases.

* An in-memory database is a database that primarily resides in main memory, but is typically backed up by non-volatile computer data storage. Main memory databases are faster than disk databases, and so are often used where response time is critical, such as in telecommunications network equipment.

* An active database includes an event-driven architecture which can respond to conditions both inside and outside the database. Possible uses include security monitoring, alerting, statistics gathering and authorization. Many databases provide active database features in the form of database triggers.

* A cloud database relies on cloud technology. Both the database and most of its DBMS reside remotely, "in the cloud", while its applications are both developed by programmers and later maintained and used by end-users through a web browser and Open APIs.

* Data warehouses archive data from operational databases and often from external sources such as market research firms. The warehouse becomes the central source of data for use by managers and other end-users who may not have access to operational data. For example, sales data might be aggregated to weekly totals and converted from internal product codes to use UPCs so that they can be compared with ACNielsen data. Some basic and essential components of data warehousing include extracting, analyzing, mining, transforming, loading, and managing data so as to make them available for further use.

* A deductive database combines logic programming with a relational database.

* A distributed database is one in which both the data and the DBMS span multiple computers.

* A document-oriented database is designed for storing, retrieving, and managing document-oriented, or semi-structured, information. Document-oriented databases are one of the main categories of NoSQL databases.

* An embedded database system is a DBMS which is tightly integrated with application software that requires access to stored data, in such a way that the DBMS is hidden from the application's end-users and requires little or no ongoing maintenance.

* End-user databases consist of data developed by individual end-users. Examples of these are collections of documents, spreadsheets, presentations, multimedia, and other files. Several products exist to support such databases.

* A federated database system comprises several distinct databases, each with its own DBMS. It is handled as a single database by a federated database management system (FDBMS), which transparently integrates multiple autonomous DBMSs, possibly of different types (in which case it would also be a heterogeneous database system), and provides them with an integrated conceptual view.

* Sometimes the term ''multi-database'' is used as a synonym for federated database, though it may refer to a less integrated (e.g., without an FDBMS and a managed integrated schema) group of databases that cooperate in a single application. In this case, typically middleware is used for distribution, which typically includes an atomic commit protocol (ACP), e.g., the two-phase commit protocol, to allow distributed (global) transactions across the participating databases.

* A graph database is a kind of NoSQL database that uses graph structures with nodes, edges, and properties to represent and store information. General graph databases that can store any graph are distinct from specialized graph databases such as triplestores and network databases.

* An array DBMS is a kind of NoSQL DBMS that allows modeling, storage, and retrieval of (usually large) multi-dimensional arrays such as satellite images and climate simulation output.

* In a hypertext or hypermedia database, any word or a piece of text representing an object, e.g., another piece of text, an article, a picture, or a film, can be hyperlinked to that object. Hypertext databases are particularly useful for organizing large amounts of disparate information. For example, they are useful for organizing online encyclopedia