CPU Fan Thermalright Le Grand Macho RT Functioning - 2018-05-20 on:

[Wikipedia]

[Google]

[Amazon]

A central processing unit (CPU), also called a central processor, main processor or just

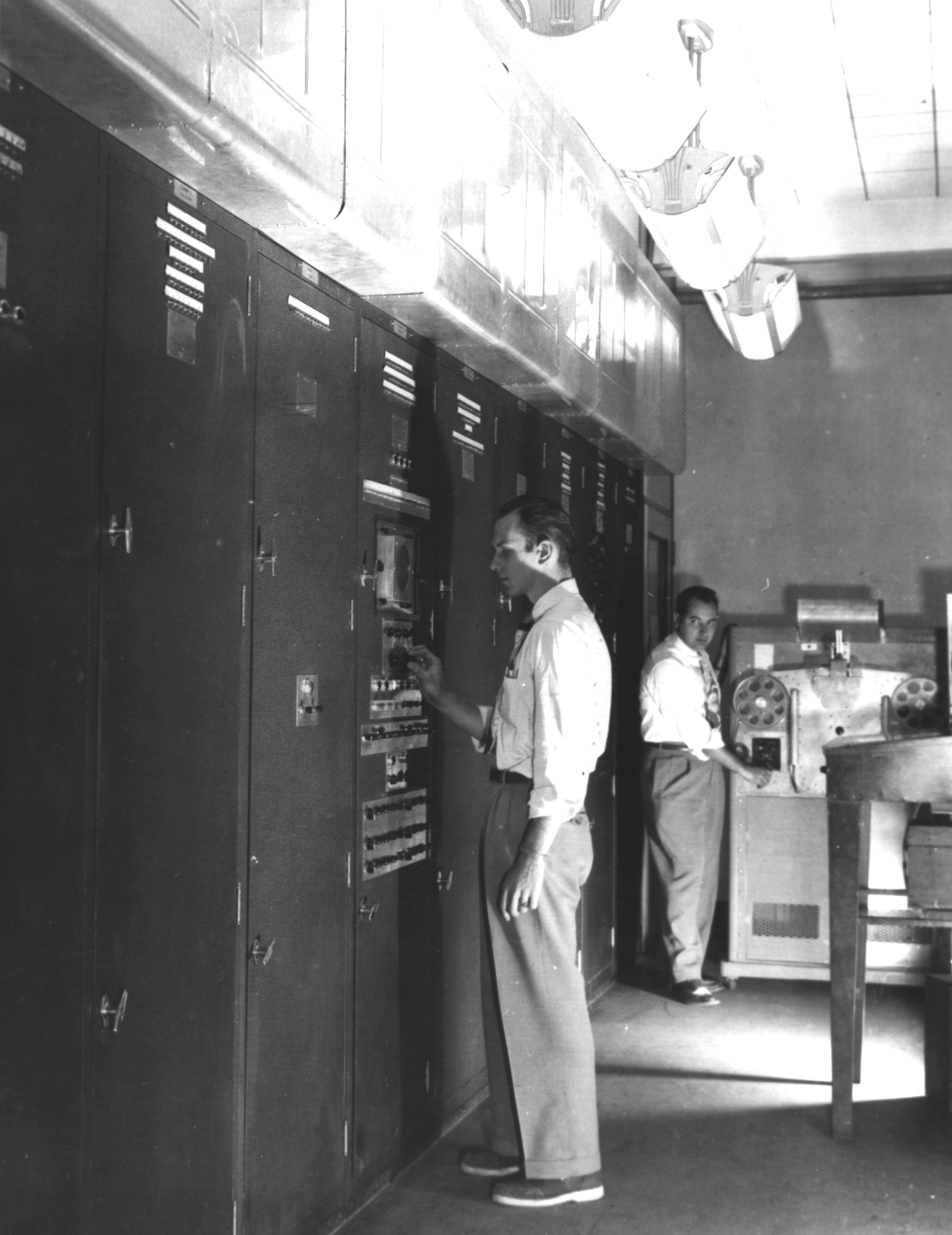

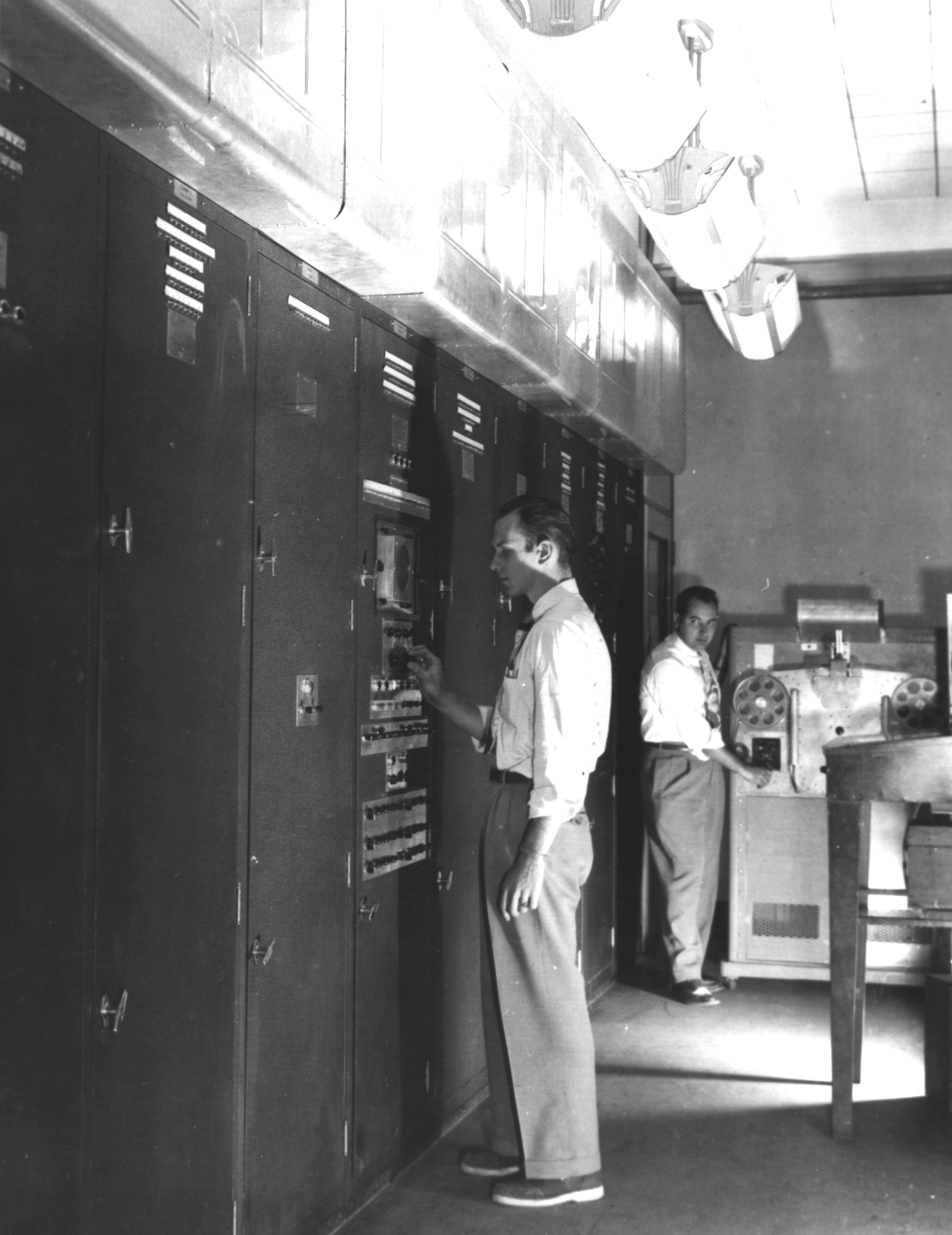

Early computers such as the

Early computers such as the

The design complexity of CPUs increased as various technologies facilitated building smaller and more reliable electronic devices. The first such improvement came with the advent of the

The design complexity of CPUs increased as various technologies facilitated building smaller and more reliable electronic devices. The first such improvement came with the advent of the  Transistor-based computers had several distinct advantages over their predecessors. Aside from facilitating increased reliability and lower power consumption, transistors also allowed CPUs to operate at much higher speeds because of the short switching time of a transistor in comparison to a tube or relay. The increased reliability and dramatically increased speed of the switching elements (which were almost exclusively transistors by this time); CPU clock rates in the tens of megahertz were easily obtained during this period. Additionally, while discrete transistor and IC CPUs were in heavy usage, new high-performance designs like

Transistor-based computers had several distinct advantages over their predecessors. Aside from facilitating increased reliability and lower power consumption, transistors also allowed CPUs to operate at much higher speeds because of the short switching time of a transistor in comparison to a tube or relay. The increased reliability and dramatically increased speed of the switching elements (which were almost exclusively transistors by this time); CPU clock rates in the tens of megahertz were easily obtained during this period. Additionally, while discrete transistor and IC CPUs were in heavy usage, new high-performance designs like

During this period, a method of manufacturing many interconnected transistors in a compact space was developed. The

During this period, a method of manufacturing many interconnected transistors in a compact space was developed. The

"The Texas Instruments TMX 1795: the first, forgotten microprocessor"

The only way to build LSI chips, which are chips with a hundred or more gates, was to build them using a

Since microprocessors were first introduced they have almost completely overtaken all other central processing unit implementation methods. The first commercially available microprocessor, made in 1971, was the

Since microprocessors were first introduced they have almost completely overtaken all other central processing unit implementation methods. The first commercially available microprocessor, made in 1971, was the

Hardwired into a CPU's circuitry is a set of basic operations it can perform, called an

Hardwired into a CPU's circuitry is a set of basic operations it can perform, called an

The arithmetic logic unit (ALU) is a digital circuit within the processor that performs integer arithmetic and bitwise logic operations. The inputs to the ALU are the data words to be operated on (called

The arithmetic logic unit (ALU) is a digital circuit within the processor that performs integer arithmetic and bitwise logic operations. The inputs to the ALU are the data words to be operated on (called

Related to numeric representation is the size and precision of integer numbers that a CPU can represent. In the case of a binary CPU, this is measured by the number of bits (significant digits of a binary encoded integer) that the CPU can process in one operation, which is commonly called Word (data type), ''word size'', ''bit width'', ''data path width'', ''integer precision'', or ''integer size''. A CPU's integer size determines the range of integer values it can directly operate on. For example, an 8-bit computing, 8-bit CPU can directly manipulate integers represented by eight bits, which have a range of 256 (28) discrete integer values.

Integer range can also affect the number of memory locations the CPU can directly address (an address is an integer value representing a specific memory location). For example, if a binary CPU uses 32 bits to represent a memory address then it can directly address 232 memory locations. To circumvent this limitation and for various other reasons, some CPUs use mechanisms (such as bank switching) that allow additional memory to be addressed.

CPUs with larger word sizes require more circuitry and consequently are physically larger, cost more and consume more power (and therefore generate more heat). As a result, smaller 4- or 8-bit

Related to numeric representation is the size and precision of integer numbers that a CPU can represent. In the case of a binary CPU, this is measured by the number of bits (significant digits of a binary encoded integer) that the CPU can process in one operation, which is commonly called Word (data type), ''word size'', ''bit width'', ''data path width'', ''integer precision'', or ''integer size''. A CPU's integer size determines the range of integer values it can directly operate on. For example, an 8-bit computing, 8-bit CPU can directly manipulate integers represented by eight bits, which have a range of 256 (28) discrete integer values.

Integer range can also affect the number of memory locations the CPU can directly address (an address is an integer value representing a specific memory location). For example, if a binary CPU uses 32 bits to represent a memory address then it can directly address 232 memory locations. To circumvent this limitation and for various other reasons, some CPUs use mechanisms (such as bank switching) that allow additional memory to be addressed.

CPUs with larger word sizes require more circuitry and consequently are physically larger, cost more and consume more power (and therefore generate more heat). As a result, smaller 4- or 8-bit

The description of the basic operation of a CPU offered in the previous section describes the simplest form that a CPU can take. This type of CPU, usually referred to as ''subscalar'', operates on and executes one instruction on one or two pieces of data at a time, that is less than one Instructions per cycle, instruction per clock cycle ().

This process gives rise to an inherent inefficiency in subscalar CPUs. Since only one instruction is executed at a time, the entire CPU must wait for that instruction to complete before proceeding to the next instruction. As a result, the subscalar CPU gets "hung up" on instructions which take more than one clock cycle to complete execution. Even adding a second

The description of the basic operation of a CPU offered in the previous section describes the simplest form that a CPU can take. This type of CPU, usually referred to as ''subscalar'', operates on and executes one instruction on one or two pieces of data at a time, that is less than one Instructions per cycle, instruction per clock cycle ().

This process gives rise to an inherent inefficiency in subscalar CPUs. Since only one instruction is executed at a time, the entire CPU must wait for that instruction to complete before proceeding to the next instruction. As a result, the subscalar CPU gets "hung up" on instructions which take more than one clock cycle to complete execution. Even adding a second

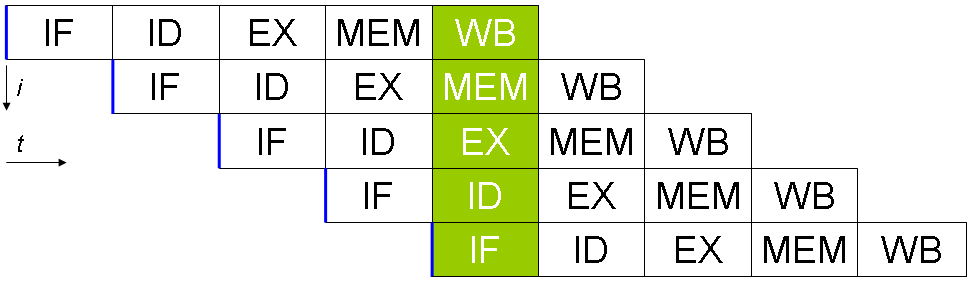

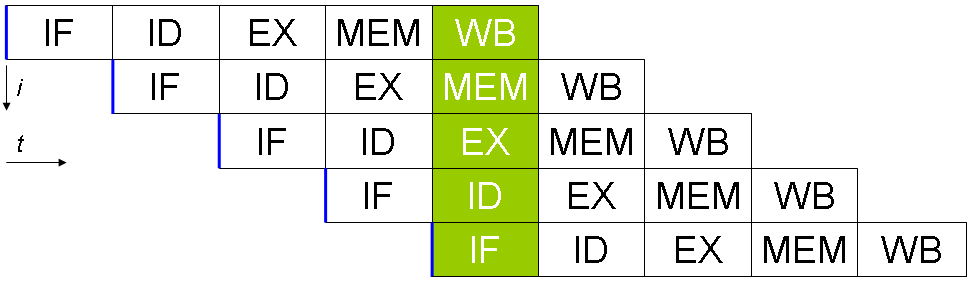

One of the simplest methods for increased parallelism is to begin the first steps of instruction fetching and decoding before the prior instruction finishes executing. This is a technique known as instruction pipelining, and is used in almost all modern general-purpose CPUs. Pipelining allows multiple instruction to be executed at a time by breaking the execution pathway into discrete stages. This separation can be compared to an assembly line, in which an instruction is made more complete at each stage until it exits the execution pipeline and is retired.

Pipelining does, however, introduce the possibility for a situation where the result of the previous operation is needed to complete the next operation; a condition often termed data dependency conflict. Therefore pipelined processors must check for these sorts of conditions and delay a portion of the pipeline if necessary. A pipelined processor can become very nearly scalar, inhibited only by pipeline stalls (an instruction spending more than one clock cycle in a stage).

One of the simplest methods for increased parallelism is to begin the first steps of instruction fetching and decoding before the prior instruction finishes executing. This is a technique known as instruction pipelining, and is used in almost all modern general-purpose CPUs. Pipelining allows multiple instruction to be executed at a time by breaking the execution pathway into discrete stages. This separation can be compared to an assembly line, in which an instruction is made more complete at each stage until it exits the execution pipeline and is retired.

Pipelining does, however, introduce the possibility for a situation where the result of the previous operation is needed to complete the next operation; a condition often termed data dependency conflict. Therefore pipelined processors must check for these sorts of conditions and delay a portion of the pipeline if necessary. A pipelined processor can become very nearly scalar, inhibited only by pipeline stalls (an instruction spending more than one clock cycle in a stage).

Improvements in instruction pipelining led to further decreases in the idle time of CPU components. Designs that are said to be superscalar include a long instruction pipeline and multiple identical

Improvements in instruction pipelining led to further decreases in the idle time of CPU components. Designs that are said to be superscalar include a long instruction pipeline and multiple identical

25 Microchips that shook the world

– an article by the Institute of Electrical and Electronics Engineers. {{Electronic components Central processing unit, Digital electronics Electronic design Electronic design automation

processor

Processor may refer to:

Computing Hardware

* Processor (computing)

**Central processing unit (CPU), the hardware within a computer that executes a program

*** Microprocessor, a central processing unit contained on a single integrated circuit (I ...

, is the electronic circuit

An electronic circuit is composed of individual electronic components, such as resistors, transistors, capacitors, inductors and diodes, connected by conductive wires or traces through which electric current can flow. It is a type of electric ...

ry that executes instructions comprising a computer program

A computer program is a sequence or set of instructions in a programming language for a computer to Execution (computing), execute. Computer programs are one component of software, which also includes software documentation, documentation and oth ...

. The CPU performs basic arithmetic

Arithmetic () is an elementary part of mathematics that consists of the study of the properties of the traditional operations on numbers— addition, subtraction, multiplication, division, exponentiation, and extraction of roots. In the 19th ...

, logic, controlling, and input/output

In computing, input/output (I/O, or informally io or IO) is the communication between an information processing system, such as a computer, and the outside world, possibly a human or another information processing system. Inputs are the signals ...

(I/O) operations specified by the instructions in the program. This contrasts with external components such as main memory

Computer data storage is a technology consisting of computer components and recording media that are used to retain digital data. It is a core function and fundamental component of computers.

The central processing unit (CPU) of a comput ...

and I/O circuitry, and specialized processors such as graphics processing unit

A graphics processing unit (GPU) is a specialized electronic circuit designed to manipulate and alter memory to accelerate the creation of images in a frame buffer intended for output to a display device. GPUs are used in embedded systems, m ...

s (GPUs).

The form, design

A design is a plan or specification for the construction of an object or system or for the implementation of an activity or process or the result of that plan or specification in the form of a prototype, product, or process. The verb ''to design' ...

, and implementation of CPUs have changed over time, but their fundamental operation remains almost unchanged. Principal components of a CPU include the arithmetic–logic unit

In computing, an arithmetic logic unit (ALU) is a combinational digital circuit that performs arithmetic and bitwise operations on integer binary numbers. This is in contrast to a floating-point unit (FPU), which operates on floating point n ...

(ALU) that performs arithmetic and logic operation

In mathematics and mathematical logic, Boolean algebra is a branch of algebra. It differs from elementary algebra in two ways. First, the values of the variables are the truth values ''true'' and ''false'', usually denoted 1 and 0, whereas in e ...

s, processor register

A processor register is a quickly accessible location available to a computer's processor. Registers usually consist of a small amount of fast storage, although some registers have specific hardware functions, and may be read-only or write-only. ...

s that supply operand

In mathematics, an operand is the object of a mathematical operation, i.e., it is the object or quantity that is operated on.

Example

The following arithmetic expression shows an example of operators and operands:

:3 + 6 = 9

In the above exam ...

s to the ALU and store the results of ALU operations, and a control unit

The control unit (CU) is a component of a computer's central processing unit (CPU) that directs the operation of the processor. A CU typically uses a binary decoder to convert coded instructions into timing and control signals that direct the op ...

that orchestrates the fetching (from memory), decoding and execution (of instructions) by directing the coordinated operations of the ALU, registers and other components.

Most modern CPUs are implemented on integrated circuit

An integrated circuit or monolithic integrated circuit (also referred to as an IC, a chip, or a microchip) is a set of electronic circuits on one small flat piece (or "chip") of semiconductor material, usually silicon. Large numbers of tiny ...

(IC) microprocessor

A microprocessor is a computer processor where the data processing logic and control is included on a single integrated circuit, or a small number of integrated circuits. The microprocessor contains the arithmetic, logic, and control circ ...

s, with one or more CPUs on a single IC chip. Microprocessor chips with multiple CPUs are multi-core processor

A multi-core processor is a microprocessor on a single integrated circuit with two or more separate processing units, called cores, each of which reads and executes program instructions. The instructions are ordinary CPU instructions (such ...

s. The individual physical CPUs, processor cores, can also be multithreaded to create additional virtual or logical CPUs.

An IC that contains a CPU may also contain memory

Memory is the faculty of the mind by which data or information is encoded, stored, and retrieved when needed. It is the retention of information over time for the purpose of influencing future action. If past events could not be remember ...

, peripheral

A peripheral or peripheral device is an auxiliary device used to put information into and get information out of a computer. The term ''peripheral device'' refers to all hardware components that are attached to a computer and are controlled by the ...

interfaces, and other components of a computer; such integrated devices are variously called microcontroller

A microcontroller (MCU for ''microcontroller unit'', often also MC, UC, or μC) is a small computer on a single VLSI integrated circuit (IC) chip. A microcontroller contains one or more CPUs ( processor cores) along with memory and programmabl ...

s or systems on a chip (SoC).

Array processors or vector processor

In computing, a vector processor or array processor is a central processing unit (CPU) that implements an instruction set where its instructions are designed to operate efficiently and effectively on large one-dimensional arrays of data calle ...

s have multiple processors that operate in parallel, with no unit considered central. Virtual CPUs

Virtual may refer to:

* Virtual (horse), a thoroughbred racehorse

* Virtual channel, a channel designation which differs from that of the actual radio channel (or range of frequencies) on which the signal travels

* Virtual function, a programming ...

are an abstraction of dynamical aggregated computational resources.

History

Early computers such as the

Early computers such as the ENIAC

ENIAC (; Electronic Numerical Integrator and Computer) was the first programmable, electronic, general-purpose digital computer, completed in 1945. There were other computers that had these features, but the ENIAC had all of them in one pac ...

had to be physically rewired to perform different tasks, which caused these machines to be called "fixed-program computers". The "central processing unit" term has been in use since as early as 1955. Since the term "CPU" is generally defined as a device for software

Software is a set of computer programs and associated documentation and data. This is in contrast to hardware, from which the system is built and which actually performs the work.

At the lowest programming level, executable code consist ...

(computer program) execution, the earliest devices that could rightly be called CPUs came with the advent of the stored-program computer

A stored-program computer is a computer that stores program instructions in electronically or optically accessible memory. This contrasts with systems that stored the program instructions with plugboards or similar mechanisms.

The definition ...

.

The idea of a stored-program computer had been already present in the design of J. Presper Eckert and John William Mauchly's ENIAC

ENIAC (; Electronic Numerical Integrator and Computer) was the first programmable, electronic, general-purpose digital computer, completed in 1945. There were other computers that had these features, but the ENIAC had all of them in one pac ...

, but was initially omitted so that it could be finished sooner. On June 30, 1945, before ENIAC was made, mathematician John von Neumann

John von Neumann (; hu, Neumann János Lajos, ; December 28, 1903 – February 8, 1957) was a Hungarian-American mathematician, physicist, computer scientist, engineer and polymath. He was regarded as having perhaps the widest c ...

distributed the paper entitled ''First Draft of a Report on the EDVAC

The ''First Draft of a Report on the EDVAC'' (commonly shortened to ''First Draft'') is an incomplete 101-page document written by John von Neumann and distributed on June 30, 1945 by Herman Goldstine, security officer on the classified ENIAC pro ...

''. It was the outline of a stored-program computer that would eventually be completed in August 1949. EDVAC was designed to perform a certain number of instructions (or operations) of various types. Significantly, the programs written for EDVAC were to be stored in high-speed computer memory

In computing, memory is a device or system that is used to store information for immediate use in a computer or related computer hardware and digital electronic devices. The term ''memory'' is often synonymous with the term '' primary storag ...

rather than specified by the physical wiring of the computer. This overcame a severe limitation of ENIAC, which was the considerable time and effort required to reconfigure the computer to perform a new task. With von Neumann's design, the program that EDVAC ran could be changed simply by changing the contents of the memory. EDVAC, was not the first stored-program computer, the Manchester Baby

The Manchester Baby, also called the Small-Scale Experimental Machine (SSEM), was the first electronic stored-program computer. It was built at the University of Manchester by Frederic C. Williams, Tom Kilburn, and Geoff Tootill, and ran its ...

which was a small-scale experimental stored-program computer, ran its first program on 21 June 1948 and the Manchester Mark 1

The Manchester Mark 1 was one of the earliest stored-program computers, developed at the Victoria University of Manchester, England from the Manchester Baby (operational in June 1948). Work began in August 1948, and the first version was oper ...

ran its first program during the night of 16–17 June 1949.

Early CPUs were custom designs used as part of a larger and sometimes distinctive computer. However, this method of designing custom CPUs for a particular application has largely given way to the development of multi-purpose processors produced in large quantities. This standardization began in the era of discrete transistor

upright=1.4, gate (G), body (B), source (S) and drain (D) terminals. The gate is separated from the body by an insulating layer (pink).

A transistor is a semiconductor device used to Electronic amplifier, amplify or electronic switch, switch ...

mainframes

A mainframe computer, informally called a mainframe or big iron, is a computer used primarily by large organizations for critical applications like bulk data processing for tasks such as censuses, industry and consumer statistics, enterprise ...

and minicomputer

A minicomputer, or colloquially mini, is a class of smaller general purpose computers that developed in the mid-1960s and sold at a much lower price than mainframe and mid-size computers from IBM and its direct competitors. In a 1970 survey, ' ...

s and has rapidly accelerated with the popularization of the integrated circuit

An integrated circuit or monolithic integrated circuit (also referred to as an IC, a chip, or a microchip) is a set of electronic circuits on one small flat piece (or "chip") of semiconductor material, usually silicon. Large numbers of tiny ...

(IC). The IC has allowed increasingly complex CPUs to be designed and manufactured to tolerances on the order of nanometers

330px, Different lengths as in respect to the molecular scale.

The nanometre (international spelling as used by the International Bureau of Weights and Measures; SI symbol: nm) or nanometer (American and British English spelling differences#-re ...

. Both the miniaturization and standardization of CPUs have increased the presence of digital devices in modern life far beyond the limited application of dedicated computing machines. Modern microprocessors appear in electronic devices ranging from automobiles to cellphones, and sometimes even in toys.

While von Neumann is most often credited with the design of the stored-program computer because of his design of EDVAC, and the design became known as the von Neumann architecture

The von Neumann architecture — also known as the von Neumann model or Princeton architecture — is a computer architecture based on a 1945 description by John von Neumann, and by others, in the '' First Draft of a Report on the EDVAC''. T ...

, others before him, such as Konrad Zuse

Konrad Ernst Otto Zuse (; 22 June 1910 – 18 December 1995) was a German civil engineer, pioneering computer scientist, inventor and businessman. His greatest achievement was the world's first programmable computer; the functional program- ...

, had suggested and implemented similar ideas. The so-called Harvard architecture

The Harvard architecture is a computer architecture with separate storage and signal pathways for instructions and data. It contrasts with the von Neumann architecture, where program instructions and data share the same memory and pathway ...

of the Harvard Mark I

The Harvard Mark I, or IBM Automatic Sequence Controlled Calculator (ASCC), was a general-purpose electromechanical computer used in the war effort during the last part of World War II.

One of the first programs to run on the Mark I was init ...

, which was completed before EDVAC, also used a stored-program design using punched paper tape

Five- and eight-hole punched paper tape

Paper tape reader on the Harwell computer with a small piece of five-hole tape connected in a circle – creating a physical program loop

Punched tape or perforated paper tape is a form of data storage ...

rather than electronic memory. The key difference between the von Neumann and Harvard architectures is that the latter separates the storage and treatment of CPU instructions and data, while the former uses the same memory space for both. Most modern CPUs are primarily von Neumann in design, but CPUs with the Harvard architecture are seen as well, especially in embedded applications; for instance, the Atmel AVR

AVR is a family of microcontrollers developed since 1996 by Atmel, acquired by Microchip Technology in 2016. These are modified Harvard architecture 8-bit RISC single-chip microcontrollers. AVR was one of the first microcontroller families ...

microcontrollers are Harvard architecture processors.

Relay

A relay

Electromechanical relay schematic showing a control coil, four pairs of normally open and one pair of normally closed contacts

An automotive-style miniature relay with the dust cover taken off

A relay is an electrically operated switch ...

s and vacuum tube

A vacuum tube, electron tube, valve (British usage), or tube (North America), is a device that controls electric current flow in a high vacuum between electrodes to which an electric potential difference has been applied.

The type known as ...

s (thermionic tubes) were commonly used as switching elements; a useful computer requires thousands or tens of thousands of switching devices. The overall speed of a system is dependent on the speed of the switches. Vacuum-tube computer

A vacuum-tube computer, now termed a first-generation computer, is a computer that uses vacuum tubes for logic circuitry. Although superseded by second-generation transistorized computers, vacuum-tube computers continued to be built into the 196 ...

s such as EDVAC tended to average eight hours between failures, whereas relay computers like the (slower, but earlier) Harvard Mark I

The Harvard Mark I, or IBM Automatic Sequence Controlled Calculator (ASCC), was a general-purpose electromechanical computer used in the war effort during the last part of World War II.

One of the first programs to run on the Mark I was init ...

failed very rarely. In the end, tube-based CPUs became dominant because the significant speed advantages afforded generally outweighed the reliability problems. Most of these early synchronous CPUs ran at low clock rate

In computing, the clock rate or clock speed typically refers to the frequency at which the clock generator of a processor can generate pulses, which are used to synchronize the operations of its components, and is used as an indicator of the pr ...

s compared to modern microelectronic designs. Clock signal frequencies ranging from 100 kHz to 4 MHz were very common at this time, limited largely by the speed of the switching devices they were built with.

Transistor CPUs

The design complexity of CPUs increased as various technologies facilitated building smaller and more reliable electronic devices. The first such improvement came with the advent of the

The design complexity of CPUs increased as various technologies facilitated building smaller and more reliable electronic devices. The first such improvement came with the advent of the transistor

upright=1.4, gate (G), body (B), source (S) and drain (D) terminals. The gate is separated from the body by an insulating layer (pink).

A transistor is a semiconductor device used to Electronic amplifier, amplify or electronic switch, switch ...

. Transistorized CPUs during the 1950s and 1960s no longer had to be built out of bulky, unreliable and fragile switching elements like vacuum tube

A vacuum tube, electron tube, valve (British usage), or tube (North America), is a device that controls electric current flow in a high vacuum between electrodes to which an electric potential difference has been applied.

The type known as ...

s and relay

A relay

Electromechanical relay schematic showing a control coil, four pairs of normally open and one pair of normally closed contacts

An automotive-style miniature relay with the dust cover taken off

A relay is an electrically operated switch ...

s. With this improvement, more complex and reliable CPUs were built onto one or several printed circuit board

A printed circuit board (PCB; also printed wiring board or PWB) is a medium used in electrical and electronic engineering to connect electronic components to one another in a controlled manner. It takes the form of a laminated sandwich str ...

s containing discrete (individual) components.

In 1964, IBM introduced its IBM System/360

The IBM System/360 (S/360) is a family of mainframe computer systems that was announced by IBM on April 7, 1964, and delivered between 1965 and 1978. It was the first family of computers designed to cover both commercial and scientific applic ...

computer architecture that was used in a series of computers capable of running the same programs with different speed and performance. This was significant at a time when most electronic computers were incompatible with one another, even those made by the same manufacturer. To facilitate this improvement, IBM used the concept of a microprogram (often called "microcode"), which still sees widespread usage in modern CPUs. The System/360 architecture was so popular that it dominated the mainframe computer

A mainframe computer, informally called a mainframe or big iron, is a computer used primarily by large organizations for critical applications like bulk data processing for tasks such as censuses, industry and consumer statistics, enterprise ...

market for decades and left a legacy that is still continued by similar modern computers like the IBM zSeries

IBM Z is a family name used by IBM for all of its z/Architecture mainframe computers.

In July 2017, with another generation of products, the official family was changed to IBM Z from IBM z Systems; the IBM Z family now includes the newest mod ...

. In 1965, Digital Equipment Corporation

Digital Equipment Corporation (DEC ), using the trademark Digital, was a major American company in the computer industry from the 1960s to the 1990s. The company was co-founded by Ken Olsen and Harlan Anderson in 1957. Olsen was president un ...

(DEC) introduced another influential computer aimed at the scientific and research markets, the PDP-8

The PDP-8 is a 12-bit minicomputer that was produced by Digital Equipment Corporation (DEC). It was the first commercially successful minicomputer, with over 50,000 units being sold over the model's lifetime. Its basic design follows the pioneer ...

.

single instruction, multiple data

Single instruction, multiple data (SIMD) is a type of parallel processing in Flynn's taxonomy. SIMD can be internal (part of the hardware design) and it can be directly accessible through an instruction set architecture (ISA), but it should ...

(SIMD) vector processor

In computing, a vector processor or array processor is a central processing unit (CPU) that implements an instruction set where its instructions are designed to operate efficiently and effectively on large one-dimensional arrays of data calle ...

s began to appear. These early experimental designs later gave rise to the era of specialized supercomputer

A supercomputer is a computer with a high level of performance as compared to a general-purpose computer. The performance of a supercomputer is commonly measured in floating-point operations per second ( FLOPS) instead of million instructio ...

s like those made by Cray Inc

Cray Inc., a subsidiary of Hewlett Packard Enterprise, is an American supercomputer manufacturer headquartered in Seattle, Washington. It also manufactures systems for data storage and analytics. Several Cray supercomputer systems are listed i ...

and Fujitsu Ltd.

Small-scale integration CPUs

During this period, a method of manufacturing many interconnected transistors in a compact space was developed. The

During this period, a method of manufacturing many interconnected transistors in a compact space was developed. The integrated circuit

An integrated circuit or monolithic integrated circuit (also referred to as an IC, a chip, or a microchip) is a set of electronic circuits on one small flat piece (or "chip") of semiconductor material, usually silicon. Large numbers of tiny ...

(IC) allowed a large number of transistors to be manufactured on a single semiconductor

A semiconductor is a material which has an electrical conductivity value falling between that of a conductor, such as copper, and an insulator, such as glass. Its resistivity falls as its temperature rises; metals behave in the opposite way ...

-based die

Die, as a verb, refers to death, the cessation of life.

Die may also refer to:

Games

* Die, singular of dice, small throwable objects used for producing random numbers

Manufacturing

* Die (integrated circuit), a rectangular piece of a semicondu ...

, or "chip". At first, only very basic non-specialized digital circuits such as NOR gate

The NOR gate is a digital logic gate that implements logical NOR - it behaves according to the truth table to the right. A HIGH output (1) results if both the inputs to the gate are LOW (0); if one or both input is HIGH (1), a LOW output (0 ...

s were miniaturized into ICs. CPUs based on these "building block" ICs are generally referred to as "small-scale integration" (SSI) devices. SSI ICs, such as the ones used in the Apollo Guidance Computer

The Apollo Guidance Computer (AGC) was a digital computer produced for the Apollo program that was installed on board each Apollo command module (CM) and Apollo Lunar Module (LM). The AGC provided computation and electronic interfaces for guidanc ...

, usually contained up to a few dozen transistors. To build an entire CPU out of SSI ICs required thousands of individual chips, but still consumed much less space and power than earlier discrete transistor designs.

IBM's System/370

The IBM System/370 (S/370) is a model range of IBM mainframe computers announced on June 30, 1970, as the successors to the System/360 family. The series mostly maintains backward compatibility with the S/360, allowing an easy migration path ...

, follow-on to the System/360, used SSI ICs rather than Solid Logic Technology

Solid Logic Technology (SLT) was IBM's method for hybrid packaging of electronic circuitry introduced in 1964 with the IBM System/360 series of computers and related machines. IBM chose to design custom hybrid circuits using discrete, flip chi ...

discrete-transistor modules. DEC's PDP-8

The PDP-8 is a 12-bit minicomputer that was produced by Digital Equipment Corporation (DEC). It was the first commercially successful minicomputer, with over 50,000 units being sold over the model's lifetime. Its basic design follows the pioneer ...

/I and KI10 PDP-10

Digital Equipment Corporation (DEC)'s PDP-10, later marketed as the DECsystem-10, is a mainframe computer family manufactured beginning in 1966 and discontinued in 1983. 1970s models and beyond were marketed under the DECsystem-10 name, espec ...

also switched from the individual transistors used by the PDP-8 and PDP-10 to SSI ICs, and their extremely popular PDP-11

The PDP-11 is a series of 16-bit minicomputers sold by Digital Equipment Corporation (DEC) from 1970 into the 1990s, one of a set of products in the Programmed Data Processor (PDP) series. In total, around 600,000 PDP-11s of all models were sol ...

line was originally built with SSI ICs but was eventually implemented with LSI components once these became practical.

Large-scale integration CPUs

Lee Boysel published influential articles, including a 1967 "manifesto", which described how to build the equivalent of a 32-bit mainframe computer from a relatively small number oflarge-scale integration

An integrated circuit or monolithic integrated circuit (also referred to as an IC, a chip, or a microchip) is a set of electronic circuits on one small flat piece (or "chip") of semiconductor material, usually silicon. Large numbers of tiny ...

circuits (LSI).

Ken Shirriff"The Texas Instruments TMX 1795: the first, forgotten microprocessor"

The only way to build LSI chips, which are chips with a hundred or more gates, was to build them using a

metal–oxide–semiconductor

The metal–oxide–semiconductor field-effect transistor (MOSFET, MOS-FET, or MOS FET) is a type of field-effect transistor (FET), most commonly fabricated by the controlled oxidation of silicon. It has an insulated gate, the voltage of which d ...

(MOS) semiconductor manufacturing process (either PMOS logic, NMOS logic

N-type metal-oxide-semiconductor logic uses n-type (-) MOSFETs (metal-oxide-semiconductor field-effect transistors) to implement logic gates and other digital circuits. These nMOS transistors operate by creating an inversion layer in a p-type t ...

, or CMOS

Complementary metal–oxide–semiconductor (CMOS, pronounced "sea-moss", ) is a type of metal–oxide–semiconductor field-effect transistor (MOSFET) fabrication process that uses complementary and symmetrical pairs of p-type and n-type MOSF ...

logic). However, some companies continued to build processors out of bipolar transistor–transistor logic Transistor–transistor logic (TTL) is a logic family built from bipolar junction transistors. Its name signifies that transistors perform both the logic function (the first "transistor") and the amplifying function (the second "transistor"), as o ...

(TTL) chips because bipolar junction transistors were faster than MOS chips up until the 1970s (a few companies such as Datapoint continued to build processors out of TTL chips until the early 1980s). In the 1960s, MOS ICs were slower and initially considered useful only in applications that required low power. Following the development of silicon-gate MOS technology by Federico Faggin

Federico Faggin (, ; born 1 December 1941) is an Italian physicist, engineer, inventor and entrepreneur. He is best known for designing the first commercial microprocessor, the Intel 4004. He led the 4004 (MCS-4) project and the design group d ...

at Fairchild Semiconductor in 1968, MOS ICs largely replaced bipolar TTL as the standard chip technology in the early 1970s.

As the microelectronic

Microelectronics is a subfield of electronics. As the name suggests, microelectronics relates to the study and manufacture (or microfabrication) of very small electronic designs and components. Usually, but not always, this means micrometre-sc ...

technology advanced, an increasing number of transistors were placed on ICs, decreasing the number of individual ICs needed for a complete CPU. MSI and LSI ICs increased transistor counts to hundreds, and then thousands. By 1968, the number of ICs required to build a complete CPU had been reduced to 24 ICs of eight different types, with each IC containing roughly 1000 MOSFETs. In stark contrast with its SSI and MSI predecessors, the first LSI implementation of the PDP-11 contained a CPU composed of only four LSI integrated circuits.

Microprocessors

Since microprocessors were first introduced they have almost completely overtaken all other central processing unit implementation methods. The first commercially available microprocessor, made in 1971, was the

Since microprocessors were first introduced they have almost completely overtaken all other central processing unit implementation methods. The first commercially available microprocessor, made in 1971, was the Intel 4004

The Intel 4004 is a 4-bit central processing unit (CPU) released by Intel Corporation in 1971. Sold for US$60, it was the first commercially produced microprocessor, and the first in a long line of Intel CPUs.

The 4004 was the first significa ...

, and the first widely used microprocessor, made in 1974, was the Intel 8080

The Intel 8080 (''"eighty-eighty"'') is the second 8-bit microprocessor designed and manufactured by Intel. It first appeared in April 1974 and is an extended and enhanced variant of the earlier 8008 design, although without binary compatibil ...

. Mainframe and minicomputer manufacturers of the time launched proprietary IC development programs to upgrade their older computer architecture

In computer engineering, computer architecture is a description of the structure of a computer system made from component parts. It can sometimes be a high-level description that ignores details of the implementation. At a more detailed level, the ...

s, and eventually produced instruction set

In computer science, an instruction set architecture (ISA), also called computer architecture, is an abstract model of a computer. A device that executes instructions described by that ISA, such as a central processing unit (CPU), is called an ...

compatible microprocessors that were backward-compatible with their older hardware and software. Combined with the advent and eventual success of the ubiquitous personal computer

A personal computer (PC) is a multi-purpose microcomputer whose size, capabilities, and price make it feasible for individual use. Personal computers are intended to be operated directly by an end user, rather than by a computer expert or te ...

, the term ''CPU'' is now applied almost exclusively to microprocessors. Several CPUs (denoted ''cores'') can be combined in a single processing chip.

Previous generations of CPUs were implemented as discrete components

An electronic component is any basic discrete device or physical entity in an electronic system used to affect electrons or their associated fields. Electronic components are mostly industrial products, available in a singular form and are no ...

and numerous small integrated circuit

An integrated circuit or monolithic integrated circuit (also referred to as an IC, a chip, or a microchip) is a set of electronic circuits on one small flat piece (or "chip") of semiconductor material, usually silicon. Large numbers of tiny ...

s (ICs) on one or more circuit boards. Microprocessors, on the other hand, are CPUs manufactured on a very small number of ICs; usually just one. The overall smaller CPU size, as a result of being implemented on a single die, means faster switching time because of physical factors like decreased gate parasitic capacitance

Parasitic capacitance is an unavoidable and usually unwanted capacitance that exists between the parts of an electronic component or circuit simply because of their proximity to each other. When two electrical conductors at different voltages ...

. This has allowed synchronous microprocessors to have clock rates ranging from tens of megahertz to several gigahertz. Additionally, the ability to construct exceedingly small transistors on an IC has increased the complexity and number of transistors in a single CPU many fold. This widely observed trend is described by Moore's law

Moore's law is the observation that the number of transistors in a dense integrated circuit (IC) doubles about every two years. Moore's law is an observation and projection of a historical trend. Rather than a law of physics, it is an empi ...

, which had proven to be a fairly accurate predictor of the growth of CPU (and other IC) complexity until 2016.

While the complexity, size, construction and general form of CPUs have changed enormously since 1950, the basic design and function has not changed much at all. Almost all common CPUs today can be very accurately described as von Neumann stored-program machines. As Moore's law no longer holds, concerns have arisen about the limits of integrated circuit transistor technology. Extreme miniaturization of electronic gates is causing the effects of phenomena like electromigration

Electromigration is the transport of material caused by the gradual movement of the ions in a conductor due to the momentum transfer between conducting electrons and diffusing metal atoms. The effect is important in applications where high dir ...

and subthreshold leakage

Subthreshold conduction or subthreshold leakage or subthreshold drain current is the current between the source and drain of a MOSFET when the transistor is in subthreshold region, or weak-inversion region, that is, for gate-to-source voltages ...

to become much more significant. These newer concerns are among the many factors causing researchers to investigate new methods of computing such as the quantum computer

Quantum computing is a type of computation whose operations can harness the phenomena of quantum mechanics, such as superposition, interference, and entanglement. Devices that perform quantum computations are known as quantum computers. Thoug ...

, as well as to expand the usage of parallelism and other methods that extend the usefulness of the classical von Neumann model.

Operation

The fundamental operation of most CPUs, regardless of the physical form they take, is to execute a sequence of stored instructions that is called a program. The instructions to be executed are kept in some kind ofcomputer memory

In computing, memory is a device or system that is used to store information for immediate use in a computer or related computer hardware and digital electronic devices. The term ''memory'' is often synonymous with the term '' primary storag ...

. Nearly all CPUs follow the fetch, decode and execute steps in their operation, which are collectively known as the instruction cycle

The instruction cycle (also known as the fetch–decode–execute cycle, or simply the fetch-execute cycle) is the cycle that the central processing unit (CPU) follows from boot-up until the computer has shut down in order to process instruction ...

.

After the execution of an instruction, the entire process repeats, with the next instruction cycle normally fetching the next-in-sequence instruction because of the incremented value in the program counter

The program counter (PC), commonly called the instruction pointer (IP) in Intel x86 and Itanium microprocessors, and sometimes called the instruction address register (IAR), the instruction counter, or just part of the instruction sequencer, i ...

. If a jump instruction was executed, the program counter will be modified to contain the address of the instruction that was jumped to and program execution continues normally. In more complex CPUs, multiple instructions can be fetched, decoded and executed simultaneously. This section describes what is generally referred to as the "classic RISC pipeline

In the history of computer hardware, some early reduced instruction set computer central processing units (RISC CPUs) used a very similar architectural solution, now called a classic RISC pipeline. Those CPUs were: MIPS, SPARC, Motorola 88000, ...

", which is quite common among the simple CPUs used in many electronic devices (often called microcontrollers). It largely ignores the important role of CPU cache, and therefore the access stage of the pipeline.

Some instructions manipulate the program counter rather than producing result data directly; such instructions are generally called "jumps" and facilitate program behavior like loops, conditional program execution (through the use of a conditional jump), and existence of functions. In some processors, some other instructions change the state of bits in a "flags" register. These flags can be used to influence how a program behaves, since they often indicate the outcome of various operations. For example, in such processors a "compare" instruction evaluates two values and sets or clears bits in the flags register to indicate which one is greater or whether they are equal; one of these flags could then be used by a later jump instruction to determine program flow.

Fetch

Fetch involves retrieving an instruction (which is represented by a number or sequence of numbers) from program memory. The instruction's location (address) in program memory is determined by theprogram counter

The program counter (PC), commonly called the instruction pointer (IP) in Intel x86 and Itanium microprocessors, and sometimes called the instruction address register (IAR), the instruction counter, or just part of the instruction sequencer, i ...

(PC; called the "instruction pointer" in Intel x86 microprocessors), which stores a number that identifies the address of the next instruction to be fetched. After an instruction is fetched, the PC is incremented by the length of the instruction so that it will contain the address of the next instruction in the sequence. Often, the instruction to be fetched must be retrieved from relatively slow memory, causing the CPU to stall while waiting for the instruction to be returned. This issue is largely addressed in modern processors by caches and pipeline architectures (see below).

Decode

The instruction that the CPU fetches from memory determines what the CPU will do. In the decode step, performed bybinary decoder

In digital electronics, a binary decoder is a combinational logic circuit that converts binary information from the n coded inputs to a maximum of 2n unique outputs. They are used in a wide variety of applications, including instruction decoding ...

circuitry known as the ''instruction decoder'', the instruction is converted into signals that control other parts of the CPU.

The way in which the instruction is interpreted is defined by the CPU's instruction set architecture (ISA). Often, one group of bits (that is, a "field") within the instruction, called the opcode, indicates which operation is to be performed, while the remaining fields usually provide supplemental information required for the operation, such as the operands. Those operands may be specified as a constant value (called an immediate value), or as the location of a value that may be a processor register

A processor register is a quickly accessible location available to a computer's processor. Registers usually consist of a small amount of fast storage, although some registers have specific hardware functions, and may be read-only or write-only. ...

or a memory address, as determined by some addressing mode

Addressing modes are an aspect of the instruction set architecture in most central processing unit (CPU) designs. The various addressing modes that are defined in a given instruction set architecture define how the machine language instructions i ...

.

In some CPU designs the instruction decoder is implemented as a hardwired, unchangeable binary decoder circuit. In others, a microprogram is used to translate instructions into sets of CPU configuration signals that are applied sequentially over multiple clock pulses. In some cases the memory that stores the microprogram is rewritable, making it possible to change the way in which the CPU decodes instructions.

Execute

After the fetch and decode steps, the execute step is performed. Depending on the CPU architecture, this may consist of a single action or a sequence of actions. During each action, control signals electrically enable or disable various parts of the CPU so they can perform all or part of the desired operation. The action is then completed, typically in response to a clock pulse. Very often the results are written to an internal CPU register for quick access by subsequent instructions. In other cases results may be written to slower, but less expensive and higher capacitymain memory

Computer data storage is a technology consisting of computer components and recording media that are used to retain digital data. It is a core function and fundamental component of computers.

The central processing unit (CPU) of a comput ...

.

For example, if an addition instruction is to be executed, registers containing operands (numbers to be summed) are activated, as are the parts of the arithmetic logic unit

In computing, an arithmetic logic unit (ALU) is a combinational digital circuit that performs arithmetic and bitwise operations on integer binary numbers. This is in contrast to a floating-point unit (FPU), which operates on floating point num ...

(ALU) that perform addition. When the clock pulse occurs, the operands flow from the source registers into the ALU, and the sum appears at its output. On subsequent clock pulses, other components are enabled (and disabled) to move the output (the sum of the operation) to storage (e.g., a register or memory). If the resulting sum is too large (i.e., it is larger than the ALU's output word size), an arithmetic overflow flag will be set, influencing the next operation.

Structure and implementation

instruction set

In computer science, an instruction set architecture (ISA), also called computer architecture, is an abstract model of a computer. A device that executes instructions described by that ISA, such as a central processing unit (CPU), is called an ...

. Such operations may involve, for example, adding or subtracting two numbers, comparing two numbers, or jumping to a different part of a program. Each instruction is represented by a unique combination of bit

The bit is the most basic unit of information in computing and digital communications. The name is a portmanteau of binary digit. The bit represents a logical state with one of two possible values. These values are most commonly represente ...

s, known as the machine language opcode

In computing, an opcode (abbreviated from operation code, also known as instruction machine code, instruction code, instruction syllable, instruction parcel or opstring) is the portion of a machine language instruction that specifies the operat ...

. While processing an instruction, the CPU decodes the opcode (via a binary decoder

In digital electronics, a binary decoder is a combinational logic circuit that converts binary information from the n coded inputs to a maximum of 2n unique outputs. They are used in a wide variety of applications, including instruction decoding ...

) into control signals, which orchestrate the behavior of the CPU. A complete machine language instruction consists of an opcode and, in many cases, additional bits that specify arguments for the operation (for example, the numbers to be summed in the case of an addition operation). Going up the complexity scale, a machine language program is a collection of machine language instructions that the CPU executes.

The actual mathematical operation for each instruction is performed by a combinational logic

In automata theory, combinational logic (also referred to as time-independent logic or combinatorial logic) is a type of digital logic which is implemented by Boolean circuits, where the output is a pure function of the present input only. This ...

circuit within the CPU's processor known as the arithmetic–logic unit

In computing, an arithmetic logic unit (ALU) is a combinational digital circuit that performs arithmetic and bitwise operations on integer binary numbers. This is in contrast to a floating-point unit (FPU), which operates on floating point n ...

or ALU. In general, a CPU executes an instruction by fetching it from memory, using its ALU to perform an operation, and then storing the result to memory. Beside the instructions for integer mathematics and logic operations, various other machine instructions exist, such as those for loading data from memory and storing it back, branching operations, and mathematical operations on floating-point numbers performed by the CPU's floating-point unit

In computing, floating-point arithmetic (FP) is arithmetic that represents real numbers approximately, using an integer with a fixed precision, called the significand, scaled by an integer exponent of a fixed base. For example, 12.345 can be ...

(FPU).

Control unit

The control unit (CU) is a component of the CPU that directs the operation of the processor. It tells the computer's memory, arithmetic and logic unit and input and output devices how to respond to the instructions that have been sent to the processor. It directs the operation of the other units by providing timing and control signals. Most computer resources are managed by the CU. It directs the flow of data between the CPU and the other devices.John von Neumann

John von Neumann (; hu, Neumann János Lajos, ; December 28, 1903 – February 8, 1957) was a Hungarian-American mathematician, physicist, computer scientist, engineer and polymath. He was regarded as having perhaps the widest c ...

included the control unit as part of the von Neumann architecture

The von Neumann architecture — also known as the von Neumann model or Princeton architecture — is a computer architecture based on a 1945 description by John von Neumann, and by others, in the '' First Draft of a Report on the EDVAC''. T ...

. In modern computer designs, the control unit is typically an internal part of the CPU with its overall role and operation unchanged since its introduction.

Arithmetic logic unit

The arithmetic logic unit (ALU) is a digital circuit within the processor that performs integer arithmetic and bitwise logic operations. The inputs to the ALU are the data words to be operated on (called

The arithmetic logic unit (ALU) is a digital circuit within the processor that performs integer arithmetic and bitwise logic operations. The inputs to the ALU are the data words to be operated on (called operands

In mathematics, an operand is the object of a mathematical operation, i.e., it is the object or quantity that is operated on.

Example

The following arithmetic expression shows an example of operators and operands:

:3 + 6 = 9

In the above exampl ...

), status information from previous operations, and a code from the control unit indicating which operation to perform. Depending on the instruction being executed, the operands may come from internal CPU registers, external memory, or constants generated by the ALU itself.

When all input signals have settled and propagated through the ALU circuitry, the result of the performed operation appears at the ALU's outputs. The result consists of both a data word, which may be stored in a register or memory, and status information that is typically stored in a special, internal CPU register reserved for this purpose.

Address generation unit

Address generation unit (AGU), sometimes also called address computation unit (ACU), is anexecution unit

In computer engineering, an execution unit (E-unit or EU) is a part of the central processing unit (CPU) that performs the operations and calculations as instructed by the computer program. It may have its own internal control sequence unit (not ...

inside the CPU that calculates addresses used by the CPU to access main memory

Computer data storage is a technology consisting of computer components and recording media that are used to retain digital data. It is a core function and fundamental component of computers.

The central processing unit (CPU) of a comput ...

. By having address calculations handled by separate circuitry that operates in parallel with the rest of the CPU, the number of CPU cycles required for executing various machine instruction

In computer programming, machine code is any low-level programming language, consisting of machine language instructions, which are used to control a computer's central processing unit (CPU). Each instruction causes the CPU to perform a very ...

s can be reduced, bringing performance improvements.

While performing various operations, CPUs need to calculate memory addresses required for fetching data from the memory; for example, in-memory positions of array elements must be calculated before the CPU can fetch the data from actual memory locations. Those address-generation calculations involve different integer arithmetic operations, such as addition, subtraction, modulo operation

In computing, the modulo operation returns the remainder or signed remainder of a division, after one number is divided by another (called the '' modulus'' of the operation).

Given two positive numbers and , modulo (often abbreviated as ) is ...

s, or bit shift

In computer programming, a bitwise operation operates on a bit string, a bit array or a binary numeral (considered as a bit string) at the level of its individual bits. It is a fast and simple action, basic to the higher-level arithmetic oper ...

s. Often, calculating a memory address involves more than one general-purpose machine instruction, which do not necessarily decode and execute quickly. By incorporating an AGU into a CPU design, together with introducing specialized instructions that use the AGU, various address-generation calculations can be offloaded from the rest of the CPU, and can often be executed quickly in a single CPU cycle.

Capabilities of an AGU depend on a particular CPU and its architecture

Architecture is the art and technique of designing and building, as distinguished from the skills associated with construction. It is both the process and the product of sketching, conceiving, planning, designing, and constructing buildings ...

. Thus, some AGUs implement and expose more address-calculation operations, while some also include more advanced specialized instructions that can operate on multiple operand

In mathematics, an operand is the object of a mathematical operation, i.e., it is the object or quantity that is operated on.

Example

The following arithmetic expression shows an example of operators and operands:

:3 + 6 = 9

In the above exam ...

s at a time. Some CPU architectures include multiple AGUs so more than one address-calculation operation can be executed simultaneously, which brings further performance improvements due to the superscalar

A superscalar processor is a CPU that implements a form of parallelism called instruction-level parallelism within a single processor. In contrast to a scalar processor, which can execute at most one single instruction per clock cycle, a sup ...

nature of advanced CPU designs. For example, Intel

Intel Corporation is an American multinational corporation and technology company headquartered in Santa Clara, California. It is the world's largest semiconductor chip manufacturer by revenue, and is one of the developers of the x86 seri ...

incorporates multiple AGUs into its Sandy Bridge

Sandy Bridge is the codename for Intel's 32 nm microarchitecture used in the second generation of the Intel Core processors (Core i7, i5, i3). The Sandy Bridge microarchitecture is the successor to Nehalem and Westmere microarchitecture ...

and Haswell microarchitecture

In computer engineering, microarchitecture, also called computer organization and sometimes abbreviated as µarch or uarch, is the way a given instruction set architecture (ISA) is implemented in a particular processor. A given ISA may be impl ...

s, which increase bandwidth of the CPU memory subsystem by allowing multiple memory-access instructions to be executed in parallel.

Memory management unit (MMU)

Many microprocessors (in smartphones and desktop, laptop, server computers) have a memory management unit, translating logical addresses into physical RAM addresses, providingmemory protection

Memory protection is a way to control memory access rights on a computer, and is a part of most modern instruction set architectures and operating systems. The main purpose of memory protection is to prevent a process from accessing memory that ha ...

and paging

In computer operating systems, memory paging is a memory management scheme by which a computer stores and retrieves data from secondary storage for use in main memory. In this scheme, the operating system retrieves data from secondary storage ...

abilities, useful for virtual memory

In computing, virtual memory, or virtual storage is a memory management technique that provides an "idealized abstraction of the storage resources that are actually available on a given machine" which "creates the illusion to users of a very l ...

. Simpler processors, especially microcontroller

A microcontroller (MCU for ''microcontroller unit'', often also MC, UC, or μC) is a small computer on a single VLSI integrated circuit (IC) chip. A microcontroller contains one or more CPUs ( processor cores) along with memory and programmabl ...

s, usually don't include an MMU.

Cache

A CPU cache is ahardware cache

In computing, a cache ( ) is a hardware or software component that stores data so that future requests for that data can be served faster; the data stored in a cache might be the result of an earlier computation or a copy of data stored elsewher ...

used by the central processing unit (CPU) of a computer

A computer is a machine that can be programmed to Execution (computing), carry out sequences of arithmetic or logical operations (computation) automatically. Modern digital electronic computers can perform generic sets of operations known as C ...

to reduce the average cost (time or energy) to access data

In the pursuit of knowledge, data (; ) is a collection of discrete values that convey information, describing quantity, quality, fact, statistics, other basic units of meaning, or simply sequences of symbols that may be further interpreted ...

from the main memory

Computer data storage is a technology consisting of computer components and recording media that are used to retain digital data. It is a core function and fundamental component of computers.

The central processing unit (CPU) of a comput ...

. A cache is a smaller, faster memory, closer to a processor core

A central processing unit (CPU), also called a central processor, main processor or just processor, is the electronic circuitry that executes instructions comprising a computer program. The CPU performs basic arithmetic, logic, controlling, and ...

, which stores copies of the data from frequently used main memory location

In computing, a memory address is a reference to a specific memory location used at various levels by software and hardware. Memory addresses are fixed-length sequences of digits conventionally displayed and manipulated as unsigned integers. Su ...

s. Most CPUs have different independent caches, including instruction and data cache

A CPU cache is a hardware cache used by the central processing unit (CPU) of a computer to reduce the average cost (time or energy) to access data from the main memory. A cache is a smaller, faster memory, located closer to a processor core, which ...

s, where the data cache is usually organized as a hierarchy of more cache levels (L1, L2, L3, L4, etc.).

All modern (fast) CPUs (with few specialized exceptions) have multiple levels of CPU caches. The first CPUs that used a cache had only one level of cache; unlike later level 1 caches, it was not split into L1d (for data) and L1i (for instructions). Almost all current CPUs with caches have a split L1 cache. They also have L2 caches and, for larger processors, L3 caches as well. The L2 cache is usually not split and acts as a common repository for the already split L1 cache. Every core of a multi-core processor

A multi-core processor is a microprocessor on a single integrated circuit with two or more separate processing units, called cores, each of which reads and executes program instructions. The instructions are ordinary CPU instructions (such ...

has a dedicated L2 cache and is usually not shared between the cores. The L3 cache, and higher-level caches, are shared between the cores and are not split. An L4 cache is currently uncommon, and is generally on dynamic random-access memory

Dynamic random-access memory (dynamic RAM or DRAM) is a type of random-access semiconductor memory that stores each bit of data in a memory cell, usually consisting of a tiny capacitor and a transistor, both typically based on metal-oxide ...

(DRAM), rather than on static random-access memory

Static random-access memory (static RAM or SRAM) is a type of random-access memory (RAM) that uses latching circuitry (flip-flop) to store each bit. SRAM is volatile memory; data is lost when power is removed.

The term ''static'' differen ...

(SRAM), on a separate die or chip. That was also the case historically with L1, while bigger chips have allowed integration of it and generally all cache levels, with the possible exception of the last level. Each extra level of cache tends to be bigger and be optimized differently.

Other types of caches exist (that are not counted towards the "cache size" of the most important caches mentioned above), such as the translation lookaside buffer

A translation lookaside buffer (TLB) is a memory cache that stores the recent translations of virtual memory to physical memory. It is used to reduce the time taken to access a user memory location. It can be called an address-translation cache. ...

(TLB) that is part of the memory management unit

A memory management unit (MMU), sometimes called paged memory management unit (PMMU), is a computer hardware unit having all memory references passed through itself, primarily performing the translation of virtual memory addresses to physical ad ...

(MMU) that most CPUs have.

Caches are generally sized in powers of two: 2, 8, 16 etc. KiB

The byte is a unit of digital information that most commonly consists of eight bits. Historically, the byte was the number of bits used to encode a single character of text in a computer and for this reason it is the smallest addressable unit ...

or Mebibyte, MiB (for larger non-L1) sizes, although the IBM z13 (microprocessor), IBM z13 has a 96 KiB L1 instruction cache.

Clock rate

Most CPUs are synchronous circuits, which means they employ a clock signal to pace their sequential operations. The clock signal is produced by an external Electronic oscillator, oscillator circuit that generates a consistent number of pulses each second in the form of a periodic square wave. The frequency of the clock pulses determines the rate at which a CPU executes instructions and, consequently, the faster the clock, the more instructions the CPU will execute each second. To ensure proper operation of the CPU, the clock period is longer than the maximum time needed for all signals to propagate (move) through the CPU. In setting the clock period to a value well above the worst-case propagation delay, it is possible to design the entire CPU and the way it moves data around the "edges" of the rising and falling clock signal. This has the advantage of simplifying the CPU significantly, both from a design perspective and a component-count perspective. However, it also carries the disadvantage that the entire CPU must wait on its slowest elements, even though some portions of it are much faster. This limitation has largely been compensated for by various methods of increasing CPU parallelism (see below). However, architectural improvements alone do not solve all of the drawbacks of globally synchronous CPUs. For example, a clock signal is subject to the delays of any other electrical signal. Higher clock rates in increasingly complex CPUs make it more difficult to keep the clock signal in phase (synchronized) throughout the entire unit. This has led many modern CPUs to require multiple identical clock signals to be provided to avoid delaying a single signal significantly enough to cause the CPU to malfunction. Another major issue, as clock rates increase dramatically, is the amount of heat that is CPU power dissipation, dissipated by the CPU. The constantly changing clock causes many components to switch regardless of whether they are being used at that time. In general, a component that is switching uses more energy than an element in a static state. Therefore, as clock rate increases, so does energy consumption, causing the CPU to require more heat dissipation in the form of CPU cooling solutions. One method of dealing with the switching of unneeded components is called clock gating, which involves turning off the clock signal to unneeded components (effectively disabling them). However, this is often regarded as difficult to implement and therefore does not see common usage outside of very low-power designs. One notable recent CPU design that uses extensive clock gating is the IBM PowerPC-based Xenon (processor), Xenon used in the Xbox 360; that way, power requirements of the Xbox 360 are greatly reduced.Clockless CPUs

Another method of addressing some of the problems with a global clock signal is the removal of the clock signal altogether. While removing the global clock signal makes the design process considerably more complex in many ways, asynchronous (or clockless) designs carry marked advantages in power consumption and heat dissipation in comparison with similar synchronous designs. While somewhat uncommon, entire Asynchronous circuit#Asynchronous CPU, asynchronous CPUs have been built without using a global clock signal. Two notable examples of this are the ARM architecture family, ARM compliant AMULET microprocessor, AMULET and the MIPS architecture, MIPS R3000 compatible MiniMIPS. Rather than totally removing the clock signal, some CPU designs allow certain portions of the device to be asynchronous, such as using asynchronous Arithmetic logic unit, ALUs in conjunction with superscalar pipelining to achieve some arithmetic performance gains. While it is not altogether clear whether totally asynchronous designs can perform at a comparable or better level than their synchronous counterparts, it is evident that they do at least excel in simpler math operations. This, combined with their excellent power consumption and heat dissipation properties, makes them very suitable for embedded computers.Voltage regulator module

Many modern CPUs have a die-integrated power managing module which regulates on-demand voltage supply to the CPU circuitry allowing it to keep balance between performance and power consumption.Integer range

Every CPU represents numerical values in a specific way. For example, some early digital computers represented numbers as familiar decimal (base 10) numeral system values, and others have employed more unusual representations such as Balanced ternary, ternary (base three). Nearly all modern CPUs represent numbers in Binary numeral system, binary form, with each digit being represented by some two-valued physical quantity such as a "high" or "low" voltage.microcontroller

A microcontroller (MCU for ''microcontroller unit'', often also MC, UC, or μC) is a small computer on a single VLSI integrated circuit (IC) chip. A microcontroller contains one or more CPUs ( processor cores) along with memory and programmabl ...

s are commonly used in modern applications even though CPUs with much larger word sizes (such as 16, 32, 64, even 128-bit) are available. When higher performance is required, however, the benefits of a larger word size (larger data ranges and address spaces) may outweigh the disadvantages. A CPU can have internal data paths shorter than the word size to reduce size and cost. For example, even though the IBM System/360

The IBM System/360 (S/360) is a family of mainframe computer systems that was announced by IBM on April 7, 1964, and delivered between 1965 and 1978. It was the first family of computers designed to cover both commercial and scientific applic ...

instruction set

In computer science, an instruction set architecture (ISA), also called computer architecture, is an abstract model of a computer. A device that executes instructions described by that ISA, such as a central processing unit (CPU), is called an ...

was a 32-bit instruction set, the System/360 IBM System/360 Model 30, Model 30 and IBM System/360 Model 40, Model 40 had 8-bit data paths in the arithmetic logical unit, so that a 32-bit add required four cycles, one for each 8 bits of the operands, and, even though the Motorola 68000 series instruction set was a 32-bit instruction set, the Motorola 68000 and Motorola 68010 had 16-bit data paths in the arithmetic logical unit, so that a 32-bit add required two cycles.

To gain some of the advantages afforded by both lower and higher bit lengths, many instruction set

In computer science, an instruction set architecture (ISA), also called computer architecture, is an abstract model of a computer. A device that executes instructions described by that ISA, such as a central processing unit (CPU), is called an ...

s have different bit widths for integer and floating-point data, allowing CPUs implementing that instruction set to have different bit widths for different portions of the device. For example, the IBM System/360 instruction set was primarily 32 bit, but supported 64-bit floating-point arithmetic, floating-point values to facilitate greater accuracy and range in floating-point numbers. The System/360 Model 65 had an 8-bit adder for decimal and fixed-point binary arithmetic and a 60-bit adder for floating-point arithmetic. Many later CPU designs use similar mixed bit width, especially when the processor is meant for general-purpose usage where a reasonable balance of integer and floating-point capability is required.

Parallelism