
Logistic Loss

In machine learning and mathematical optimization, loss functions for classification are computationally feasible loss functions representing the price paid for inaccuracy of predictions in classification problems (problems of identifying which category a particular observation belongs to). Given \mathcal{X} as the space of all possible inputs (usually \mathcal{X} \subset \mathbb{R}^d), and \mathcal{Y} = \{-1, 1\} as the set of labels (possible outputs), a typical goal of classification algorithms is to find a function f: \mathcal{X} \to \mathcal{Y} which best predicts a label y for a given input \vec{x}. However, because of incomplete information, noise in the measurement, or probabilistic components in the underlying process, it is possible for the same \vec{x} to generate different y. As a result, the goal of the learning problem is to minimize expected loss (also known as the risk), defined as

: I[f] = \int_{\mathcal{X} \times \mathcal{Y}} V(f(\vec{x}), y) \, p(\vec{x}, y) \, d\vec{x} \, dy

where V(f(\vec{x}), y) is a given loss function, an ...
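
Since the joint density p is rarely known, the expected loss is usually approximated by an average over observed samples. The sketch below illustrates this with the logistic loss, taken here in its natural-log form V(f(x), y) = log(1 + exp(-y f(x))) with labels in {-1, +1}. The excerpt above does not state this formula, and some texts scale it by 1/ln 2. The names `logistic_loss` and `empirical_risk` are hypothetical and used only for illustration.

```python
import math


def logistic_loss(score, label):
    """Logistic loss log(1 + exp(-label * score)) for a label in {-1, +1}.

    Assumption: natural-log form, without the 1/ln(2) scaling some texts use.
    """
    margin = label * score
    # Numerically stable rewrite: log(1 + e^{-m}) = max(-m, 0) + log1p(e^{-|m|})
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


def empirical_risk(predict, samples, loss=logistic_loss):
    """Average loss over (input, label) pairs, a sample estimate of the risk."""
    return sum(loss(predict(x), y) for x, y in samples) / len(samples)
```

For instance, `empirical_risk(lambda x: 2.0 * x, [(1.0, 1), (-0.5, -1)])` averages the loss of a hypothetical linear scorer over two labeled points.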

Tikhonov Regularization

Ridge regression is a method of estimating the coefficients of multiple-regression models in scenarios where the independent variables are highly correlated. It has been used in many fields including econometrics, chemistry, and engineering. Also known as Tikhonov regularization, named for Andrey Tikhonov, it is a method of regularization of ill-posed problems. It is particularly useful to mitigate the problem of multicollinearity in linear regression, which commonly occurs in models with large numbers of parameters. In general, the method provides improved efficiency in parameter estimation problems in exchange for a tolerable amount of bias (see bias–variance tradeoff). The theory was first introduced by Hoerl and Kennard in 1970 in their Technometrics papers "Ridge Regression: Biased Estimation for Nonorthogonal Problems" and "Ridge Regression: Applications to Nonorthogonal Problems". This was the result of ten years of research into the field of ridge analysis. ...
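
To make the multicollinearity point concrete, here is a minimal sketch that assumes the standard closed form w = (XᵀX + λI)⁻¹ Xᵀy, which the excerpt above does not spell out. The helper names `solve_linear_system` and `ridge_fit` are hypothetical, and the solver is plain Gaussian elimination meant for tiny examples only.

```python
def solve_linear_system(matrix, rhs):
    """Solve matrix @ x = rhs by Gaussian elimination with partial pivoting."""
    n = len(rhs)
    aug = [row[:] + [rhs[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        if abs(aug[pivot][col]) < 1e-12:
            raise ValueError("matrix is singular to working precision")
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    solution = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(aug[i][j] * solution[j] for j in range(i + 1, n))
        solution[i] = (aug[i][n] - tail) / aug[i][i]
    return solution


def ridge_fit(X, y, lam):
    """Return w solving (X^T X + lam * I) w = X^T y (assumed ridge closed form)."""
    d = len(X[0])
    gram = [
        [sum(row[i] * row[j] for row in X) + (lam if i == j else 0.0)
         for j in range(d)]
        for i in range(d)
    ]
    xty = [sum(row[i] * t for row, t in zip(X, y)) for i in range(d)]
    return solve_linear_system(gram, xty)
```

With perfectly collinear columns, for example `X = [[1.0, 2.0], [2.0, 4.0], [3.0, 6.0]]` and `y = [5.0, 10.0, 15.0]`, calling `ridge_fit(X, y, 0.0)` raises `ValueError` because XᵀX is singular, while `ridge_fit(X, y, 1.0)` returns a well-defined, slightly shrunken coefficient vector.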

Differentiable Programming

Differentiable programming is a programming paradigm in which a numeric computer program can be differentiated throughout via automatic differentiation. This allows for gradient-based optimization of parameters in the program, often via gradient descent, as well as other learning approaches that are based on higher-order derivative information. Differentiable programming has found use in a wide variety of areas, particularly scientific computing and artificial intelligence. One of the early proposals to adopt such a framework in a systematic fashion to improve upon learning algorithms was made by the Advanced Concepts Team at the European Space Agency in early 2016.

Approaches: Most differentiable programming frameworks work by constructing a graph containing the control flow and data structures in the program. Attempts generally fall into two groups:

* Static, compiled graph-based approaches such as TensorFlow. Note: TensorFlow 1 uses the static graph approach, whereas TensorFlow 2 ...

Quadratic Programming

Quadratic programming (QP) is the process of solving certain mathematical optimization problems involving quadratic functions. Specifically, one seeks to optimize (minimize or maximize) a multivariate quadratic function subject to linear constraints on the variables. Quadratic programming is a type of nonlinear programming. "Programming" in this context refers to a formal procedure for solving mathematical problems. This usage dates to the 1940s and is not specifically tied to the more recent notion of "computer programming." To avoid confusion, some practitioners prefer the term "optimization" (for example, "quadratic optimization").

Problem formulation: The quadratic programming problem with n variables and m constraints can be formulated as follows. Given:

* a real-valued, n-dimensional vector c,
* an n×n-dimensional real symmetric matrix Q,
* an m×n-dimensional real matrix A, and
* an m-dimensional real vector b,

the objective of quadratic programming is to find an n-dimensional vector x, that wi ...

Subgradient Method

Subgradient methods are iterative methods for solving convex minimization problems. Originally developed by Naum Z. Shor and others in the 1960s and 1970s, subgradient methods are convergent when applied even to a non-differentiable objective function. When the objective function is differentiable, subgradient methods for unconstrained problems use the same search direction as the method of steepest descent. Subgradient methods are slower than Newton's method when applied to minimize twice continuously differentiable convex functions. However, Newton's method fails to converge on problems that have non-differentiable kinks. In recent years, some interior-point methods have been suggested for convex minimization problems, but subgradient projection methods and related bundle methods of descent remain competitive. For convex minimization problems with a very large number of dimensions, subgradient-projection methods are suitable, because they require little storage. Subgradient proj ...
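
As a rough illustration of how such a method copes with a kink, the sketch below minimizes f(x) = |x - 3|, which is not differentiable at its minimizer. The diminishing step size 1/k and the best-iterate bookkeeping are assumptions chosen for this example (a subgradient step need not decrease the objective, so the best value seen is tracked separately). The function names are hypothetical.

```python
def abs_subgradient(x, target):
    """One valid subgradient of |x - target| at x (0 is chosen at the kink)."""
    if x > target:
        return 1.0
    if x < target:
        return -1.0
    return 0.0


def subgradient_method(objective, subgradient, x0, steps):
    """Run x <- x - (1/k) * g_k and return the best iterate and its value."""
    x = x0
    best_x, best_val = x, objective(x)
    for k in range(1, steps + 1):
        x = x - (1.0 / k) * subgradient(x)  # assumed step-size rule: 1/k
        val = objective(x)
        if val < best_val:
            best_x, best_val = x, val
    return best_x, best_val
```

Calling `subgradient_method(lambda x: abs(x - 3.0), lambda x: abs_subgradient(x, 3.0), 0.0, 200)` returns an iterate close to 3 even though the iterates overshoot and oscillate around the kink.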

Stochastic Gradient Descent

Stochastic gradient descent (often abbreviated SGD) is an iterative method for optimizing an objective function with suitable smoothness properties (e.g. differentiable or subdifferentiable). It can be regarded as a stochastic approximation of gradient descent optimization, since it replaces the actual gradient (calculated from the entire data set) by an estimate thereof (calculated from a randomly selected subset of the data). Especially in high-dimensional optimization problems this reduces the very high computational burden, achieving faster iterations in exchange for a lower convergence rate. While the basic idea behind stochastic approximation can be traced back to the Robbins–Monro algorithm of the 1950s, stochastic gradient descent has become an important optimization method in machine learning.

Background: Both statistical estimation and machine learning consider the problem of minimizing an objective function that has the form of a sum:

: Q(w) = \frac{1}{n}\sum_{i=1}^n Q_i(w), ...
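
A minimal sketch of the idea, under assumptions not taken from the excerpt: each term is Q_i(w) = (w - a_i)² / 2, so the full objective is minimized at the mean of the a_i; one term is sampled uniformly per step; and the step size decays as 1/k. The name `sgd` and its parameters are hypothetical.

```python
import random


def sgd(grad_i, n, w0, steps, step_size, seed=0):
    """Minimize (1/n) * sum_i Q_i(w) using one randomly chosen term per step.

    grad_i(w, i) must return the gradient of Q_i at w.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    w = w0
    for k in range(1, steps + 1):
        i = rng.randrange(n)  # estimate the gradient from a single sample
        w -= step_size(k) * grad_i(w, i)
    return w


data = [1.0, 2.0, 3.0, 4.0, 10.0]  # illustrative values; their mean is 4.0
estimate = sgd(
    grad_i=lambda w, i: w - data[i],  # gradient of (w - a_i)^2 / 2
    n=len(data),
    w0=0.0,
    steps=5000,
    step_size=lambda k: 1.0 / k,
)
```

Each step touches a single data point rather than all n, which is the cost saving the passage describes; the price is that `estimate` only approaches the mean of `data` up to sampling noise.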

Gradient Descent

In mathematics, gradient descent (also often called steepest descent) is a first-order iterative optimization algorithm for finding a local minimum of a differentiable function. The idea is to take repeated steps in the opposite direction of the gradient (or approximate gradient) of the function at the current point, because this is the direction of steepest descent. Conversely, stepping in the direction of the gradient will lead to a local maximum of that function; the procedure is then known as gradient ascent. Gradient descent is generally attributed to Augustin-Louis Cauchy, who first suggested it in 1847. Jacques Hadamard independently proposed a similar method in 1907. Its convergence properties for non-linear optimization problems were first studied by Haskell Curry in 1944, with the method becoming increasingly well-studied and used in the following decades.

Description: Gradient descent is based on the observation that if the multi-variable function F(\mathbf{x}) is def ...
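
The repeated-step idea can be sketched as follows. The test function F(x, y) = (x - 1)² + 2(y + 2)², the fixed step size 0.1, and the iteration count are all assumptions picked for illustration; `gradient_descent` is a hypothetical helper name.

```python
def gradient_descent(gradient, start, step_size, iterations):
    """Repeatedly step against the gradient from the given starting point."""
    point = list(start)
    for _ in range(iterations):
        grad = gradient(point)
        point = [p - step_size * g for p, g in zip(point, grad)]
    return point


def example_gradient(point):
    """Gradient of F(x, y) = (x - 1)^2 + 2 * (y + 2)^2."""
    x, y = point
    return [2.0 * (x - 1.0), 4.0 * (y + 2.0)]


minimum = gradient_descent(example_gradient, start=[5.0, 5.0],
                           step_size=0.1, iterations=200)
```

For this convex quadratic the iterates contract toward the unique minimizer (1, -2). With a step size that is too large the same loop diverges, which is why the step size is left as an explicit parameter.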

Support Vector Machines

In machine learning, support vector machines (SVMs, also support vector networks) are supervised learning models with associated learning algorithms that analyze data for classification and regression analysis. Developed at AT&T Bell Laboratories by Vladimir Vapnik with colleagues (Boser et al., 1992, Guyon et al., 1993, Cortes and Vapnik, 1995, Vapnik et al., 1997), SVMs are one of the most robust prediction methods, being based on statistical learning frameworks or VC theory proposed by Vapnik (1982, 1995) and Chervonenkis (1974). Given a set of training examples, each marked as belonging to one of two categories, an SVM training algorithm builds a model that assigns new examples to one category or the other, making it a non-probabilistic binary linear classifier (although methods such as Platt scaling exist to use SVM in a probabilistic classification setting). SVM maps training examples to points in space so as to maximise the width of the gap between the two categories. New ...

Positive Part

In mathematics, the positive part of a real or extended real-valued function is defined by the formula

: f^+(x) = \max(f(x),0) = \begin{cases} f(x) & \mbox{if } f(x) > 0 \\ 0 & \mbox{otherwise.} \end{cases}

Intuitively, the graph of f^+ is obtained by taking the graph of f, chopping off the part under the x-axis, and letting f^+ take the value zero there. Similarly, the negative part of f is defined as

: f^-(x) = \max(-f(x),0) = -\min(f(x),0) = \begin{cases} -f(x) & \mbox{if } f(x) < 0 \\ 0 & \mbox{otherwise.} \end{cases}

Using Iverson bracket notation, f^+ = [f > 0] f and f^- = -[f < 0] f. One may define the positive and negative part of any function with values in a linearly ordered group. The unit ramp function is the positive part of the identity function.
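
The two definitions translate directly into code. This sketch assumes real-valued functions of one argument and uses hypothetical helper names; it also lets one check the standard identities f = f⁺ - f⁻ and |f| = f⁺ + f⁻ pointwise.

```python
def positive_part(f):
    """Return the function x -> max(f(x), 0)."""
    return lambda x: max(f(x), 0.0)


def negative_part(f):
    """Return the function x -> max(-f(x), 0); note that it is non-negative."""
    return lambda x: max(-f(x), 0.0)


def parabola(x):
    """Illustrative function that is negative on (-2, 2) and positive outside."""
    return x * x - 4.0


parabola_pos = positive_part(parabola)
parabola_neg = negative_part(parabola)
ramp = positive_part(lambda x: x)  # positive part of the identity function
```

At x = 1 the parabola equals -3, so `parabola_pos(1.0)` is 0 and `parabola_neg(1.0)` is 3; at x = 3 the roles swap, with values 5 and 0.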

AdaBoost

AdaBoost, short for Adaptive Boosting, is a statistical classification meta-algorithm formulated by Yoav Freund and Robert Schapire in 1995, who won the 2003 Gödel Prize for their work. It can be used in conjunction with many other types of learning algorithms to improve performance. The output of the other learning algorithms ("weak learners") is combined into a weighted sum that represents the final output of the boosted classifier. Usually, AdaBoost is presented for binary classification, although it can be generalized to multiple classes or bounded intervals on the real line. AdaBoost is adaptive in the sense that subsequent weak learners are tweaked in favor of those instances misclassified by previous classifiers. In some problems it can be less susceptible to the overfitting problem than other learning algorithms. The individual learners can be weak, but as long as the performance of each one is slightly better than random guessing, the final model can be proven to con ...

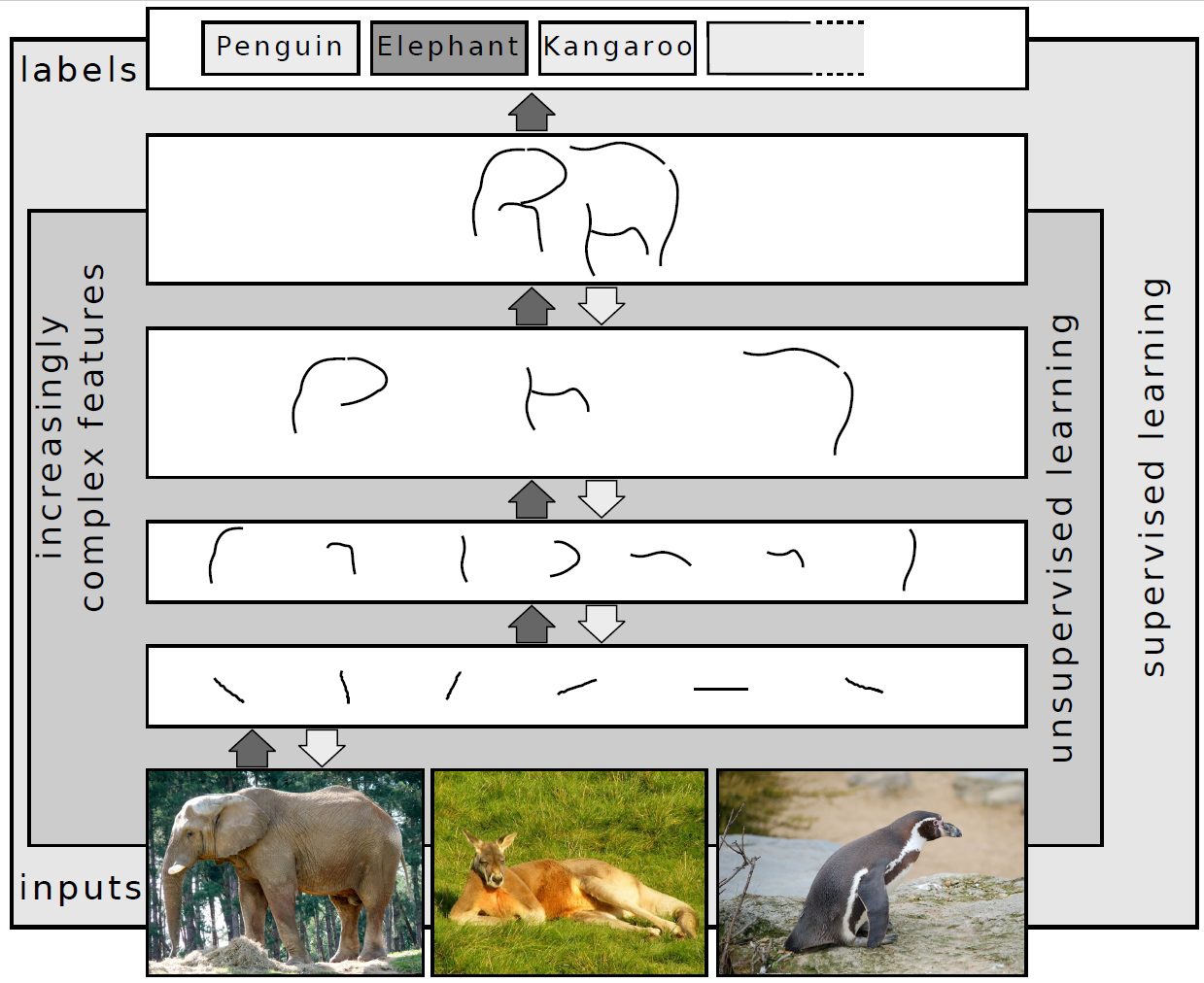

Deep Learning

Deep learning (also known as deep structured learning) is part of a broader family of machine learning methods based on artificial neural networks with representation learning. Learning can be supervised, semi-supervised or unsupervised. Deep-learning architectures such as deep neural networks, deep belief networks, deep reinforcement learning, recurrent neural networks, convolutional neural networks and transformers have been applied to fields including computer vision, speech recognition, natural language processing, machine translation, bioinformatics, drug design, medical image analysis, climate science, material inspection and board game programs, where they have produced results comparable to and in some cases surpassing human expert performance. Artificial neural networks (ANNs) were inspired by information processing and distributed communication nodes in biological systems. ANNs have various differences from biological brains. Specifically, artificial ...

Kullback–Leibler Divergence

In mathematical statistics, the Kullback–Leibler divergence (also called relative entropy and I-divergence), denoted D_\text{KL}(P \parallel Q), is a type of statistical distance: a measure of how one probability distribution P is different from a second, reference probability distribution Q. A simple interpretation of the KL divergence of P from Q is the expected excess surprise from using Q as a model when the actual distribution is P. While it is a distance, it is not a metric, the most familiar type of distance: it is not symmetric in the two distributions (in contrast to variation of information), and does not satisfy the triangle inequality. Instead, in terms of information geometry, it is a type of divergence, a generalization of squared distance, and for certain classes of distributions (notably an exponential family), it satisfies a generalized Pythagorean theorem (which applies to squared distances). In the simple case, a relative entropy of 0 ...
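
The asymmetry is easy to see numerically. The sketch below assumes discrete distributions given as equal-length lists of probabilities and uses the standard discrete formula D_KL(P ∥ Q) = Σ p_i log(p_i / q_i) in nats, which the excerpt above does not state. The function name `kl_divergence` is hypothetical.

```python
import math


def kl_divergence(p, q):
    """Discrete KL divergence of P from Q in nats.

    Terms with p_i == 0 contribute 0 by convention; if some p_i > 0 while
    q_i == 0 the divergence is infinite.
    """
    if len(p) != len(q):
        raise ValueError("distributions must have the same number of outcomes")
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0.0:
            continue
        if qi == 0.0:
            return math.inf
        total += pi * math.log(pi / qi)
    return total


fair = [0.5, 0.5]      # illustrative distributions over two outcomes
biased = [0.9, 0.1]
forward = kl_divergence(fair, biased)
reverse = kl_divergence(biased, fair)
```

Here `forward` and `reverse` are both positive but differ, while `kl_divergence(fair, fair)` is exactly 0, matching the description of a distance that is not symmetric.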