|

Semantic Analysis (machine Learning)

In machine learning, semantic analysis of a corpus is the task of building structures that approximate concepts from a large set of documents. It generally does not involve prior semantic understanding of the documents. A metalanguage based on predicate logic can analyze the speech of humans. Another strategy to understand the semantics of a text is symbol grounding. If language is grounded, it is equal to recognizing a machine readable meaning. For the restricted domain of spatial analysis, a computer based language understanding system was demonstrated. Latent semantic analysis (sometimes latent semantic indexing), is a class of techniques where documents are represented as vectors in term space. A prominent example is PLSI. Latent Dirichlet allocation involves attributing document terms to topics. n-grams and hidden Markov models work by representing the term stream as a Markov chain where each term is derived from the few terms before it. See also * Explicit semantic ana ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Machine Learning

Machine learning (ML) is a field of inquiry devoted to understanding and building methods that 'learn', that is, methods that leverage data to improve performance on some set of tasks. It is seen as a part of artificial intelligence. Machine learning algorithms build a model based on sample data, known as training data, in order to make predictions or decisions without being explicitly programmed to do so. Machine learning algorithms are used in a wide variety of applications, such as in medicine, email filtering, speech recognition, agriculture, and computer vision, where it is difficult or unfeasible to develop conventional algorithms to perform the needed tasks.Hu, J.; Niu, H.; Carrasco, J.; Lennox, B.; Arvin, F.,Voronoi-Based Multi-Robot Autonomous Exploration in Unknown Environments via Deep Reinforcement Learning IEEE Transactions on Vehicular Technology, 2020. A subset of machine learning is closely related to computational statistics, which focuses on making predicti ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Text Corpus

In linguistics, a corpus (plural ''corpora'') or text corpus is a language resource consisting of a large and structured set of texts (nowadays usually electronically stored and processed). In corpus linguistics, they are used to do statistical analysis and statistical hypothesis testing, hypothesis testing, checking occurrences or validating linguistic rules within a specific language territory. In Search engine (computing), search technology, a corpus is the collection of documents which is being searched. Overview A corpus may contain texts in a single language (''monolingual corpus'') or text data in multiple languages (''multilingual corpus''). In order to make the corpora more useful for doing linguistic research, they are often subjected to a process known as annotation. An example of annotating a corpus is part-of-speech tagging, or ''POS-tagging'', in which information about each word's part of speech (verb, noun, adjective, etc.) is added to the corpus in the form o ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Metalanguage

In logic and linguistics, a metalanguage is a language used to describe another language, often called the ''object language''. Expressions in a metalanguage are often distinguished from those in the object language by the use of italics, quotation marks, or writing on a separate line. The structure of sentences and phrases in a metalanguage can be described by a metasyntax. Types There are a variety of recognized metalanguages, including ''embedded'', ''ordered'', and ''nested'' (or ''hierarchical'') metalanguages. Embedded An embedded metalanguage is a language formally, naturally and firmly fixed in an object language. This idea is found in Douglas Hofstadter's book, '' Gödel, Escher, Bach'', in a discussion of the relationship between formal languages and number theory: "... it is in the nature of any formalization of number theory that its metalanguage is embedded within it." It occurs in natural, or informal, languages, as well—such as in English, where words ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Predicate Logic

First-order logic—also known as predicate logic, quantificational logic, and first-order predicate calculus—is a collection of formal systems used in mathematics, philosophy, linguistics, and computer science. First-order logic uses quantified variables over non-logical objects, and allows the use of sentences that contain variables, so that rather than propositions such as "Socrates is a man", one can have expressions in the form "there exists x such that x is Socrates and x is a man", where "there exists''"'' is a quantifier, while ''x'' is a variable. This distinguishes it from propositional logic, which does not use quantifiers or relations; in this sense, propositional logic is the foundation of first-order logic. A theory about a topic is usually a first-order logic together with a specified domain of discourse (over which the quantified variables range), finitely many functions from that domain to itself, finitely many predicates defined on that domain, and a set of ax ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Symbol Grounding

In cognitive science and semantics, the symbol grounding problem concerns how it is that words (symbols in general) get their meanings, and hence is closely related to the problem of what meaning itself really is. The problem of meaning is in turn related to the problem of how it is that mental states are meaningful, hence to the problem of consciousness: what is the connection between certain physical systems and the contents of subjective experiences. Background Referents Gottlob Frege distinguished a referent (the thing that a word refers to) and the word's meaning. This is most clearly illustrated using the proper names of concrete individuals, but it is also true of names of kinds of things and of abstract properties: (1) "Tony Blair", (2) "the prime minister of the UK during the year 2004", and (3) "Cherie Blair's husband" all have the same referent, but not the same meaning. Some have suggested that the meaning of a (referring) word is the rule or features that one must ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Latent Semantic Analysis

Latent semantic analysis (LSA) is a technique in natural language processing, in particular distributional semantics, of analyzing relationships between a set of documents and the terms they contain by producing a set of concepts related to the documents and terms. LSA assumes that words that are close in meaning will occur in similar pieces of text (the distributional hypothesis). A matrix containing word counts per document (rows represent unique words and columns represent each document) is constructed from a large piece of text and a mathematical technique called singular value decomposition (SVD) is used to reduce the number of rows while preserving the similarity structure among columns. Documents are then compared by cosine similarity between any two columns. Values close to 1 represent very similar documents while values close to 0 represent very dissimilar documents. An information retrieval technique using latent semantic structure was patented in 1988US Patent 4,83 ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Linear Algebra

Linear algebra is the branch of mathematics concerning linear equations such as: :a_1x_1+\cdots +a_nx_n=b, linear maps such as: :(x_1, \ldots, x_n) \mapsto a_1x_1+\cdots +a_nx_n, and their representations in vector spaces and through matrices. Linear algebra is central to almost all areas of mathematics. For instance, linear algebra is fundamental in modern presentations of geometry, including for defining basic objects such as lines, planes and rotations. Also, functional analysis, a branch of mathematical analysis, may be viewed as the application of linear algebra to spaces of functions. Linear algebra is also used in most sciences and fields of engineering, because it allows modeling many natural phenomena, and computing efficiently with such models. For nonlinear systems, which cannot be modeled with linear algebra, it is often used for dealing with first-order approximations, using the fact that the differential of a multivariate function at a point is the linear ma ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Probabilistic Latent Semantic Indexing

Probabilistic latent semantic analysis (PLSA), also known as probabilistic latent semantic indexing (PLSI, especially in information retrieval circles) is a statistical technique for the analysis of two-mode and co-occurrence data. In effect, one can derive a low-dimensional representation of the observed variables in terms of their affinity to certain hidden variables, just as in latent semantic analysis, from which PLSA evolved. Compared to standard latent semantic analysis which stems from linear algebra and downsizes the occurrence tables (usually via a singular value decomposition), probabilistic latent semantic analysis is based on a mixture decomposition derived from a latent class model. Model Considering observations in the form of co-occurrences (w,d) of words and documents, PLSA models the probability of each co-occurrence as a mixture of conditionally independent multinomial distributions: : P(w,d) = \sum_c P(c) P(d, c) P(w, c) = P(d) \sum_c P(c, d) P(w, c) with c b ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Latent Dirichlet Allocation

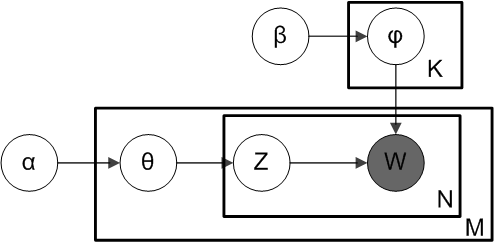

In natural language processing, Latent Dirichlet Allocation (LDA) is a generative statistical model that explains a set of observations through unobserved groups, and each group explains why some parts of the data are similar. The LDA is an example of a topic model. In this, observations (e.g., words) are collected into documents, and each word's presence is attributable to one of the document's topics. Each document will contain a small number of topics. History In the context of population genetics, LDA was proposed by J. K. Pritchard, M. Stephens and P. Donnelly in 2000. LDA was applied in machine learning by David Blei, Andrew Ng and Michael I. Jordan in 2003. Overview Evolutionary biology and bio-medicine In evolutionary biology and bio-medicine, the model is used to detect the presence of structured genetic variation in a group of individuals. The model assumes that alleles carried by individuals under study have origin in various extant or past populations. The ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

N-gram

In the fields of computational linguistics and probability, an ''n''-gram (sometimes also called Q-gram) is a contiguous sequence of ''n'' items from a given sample of text or speech. The items can be phonemes, syllables, letters, words or base pairs according to the application. The ''n''-grams typically are collected from a text or speech corpus. When the items are words, -grams may also be called ''shingles''. Using Latin numerical prefixes, an ''n''-gram of size 1 is referred to as a "unigram"; size 2 is a "bigram" (or, less commonly, a "digram"); size 3 is a "trigram". English cardinal numbers are sometimes used, e.g., "four-gram", "five-gram", and so on. In computational biology, a polymer or oligomer of a known size is called a ''k''-mer instead of an ''n''-gram, with specific names using Greek numerical prefixes such as "monomer", "dimer", "trimer", "tetramer", "pentamer", etc., or English cardinal numbers, "one-mer", "two-mer", "three-mer", etc. Applications ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Hidden Markov Models

A hidden Markov model (HMM) is a statistical Markov model in which the system being modeled is assumed to be a Markov process — call it X — with unobservable ("''hidden''") states. As part of the definition, HMM requires that there be an observable process Y whose outcomes are "influenced" by the outcomes of X in a known way. Since X cannot be observed directly, the goal is to learn about X by observing Y. HMM has an additional requirement that the outcome of Y at time t=t_0 must be "influenced" exclusively by the outcome of X at t=t_0 and that the outcomes of X and Y at t handwriting recognition, handwriting, gesture recognition, part-of-speech tagging, musical score following, partial discharges and bioinformatics. Definition Let X_n and Y_n be discrete-time stochastic processes and n\geq 1. The pair (X_n,Y_n) is a ''hidden Markov model'' if * X_n is a Markov process whose behavior is not directly observable ("hidden"); * \operatorname\bigl(Y_n \in A ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Markov Chain

A Markov chain or Markov process is a stochastic model describing a sequence of possible events in which the probability of each event depends only on the state attained in the previous event. Informally, this may be thought of as, "What happens next depends only on the state of affairs ''now''." A countably infinite sequence, in which the chain moves state at discrete time steps, gives a discrete-time Markov chain (DTMC). A continuous-time process is called a continuous-time Markov chain (CTMC). It is named after the Russian mathematician Andrey Markov. Markov chains have many applications as statistical models of real-world processes, such as studying cruise control systems in motor vehicles, queues or lines of customers arriving at an airport, currency exchange rates and animal population dynamics. Markov processes are the basis for general stochastic simulation methods known as Markov chain Monte Carlo, which are used for simulating sampling from complex probability dist ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |