|

Solomonoff

Ray Solomonoff (July 25, 1926 – December 7, 2009) was the inventor of algorithmic probability, his General Theory of Inductive Inference (also known as Universal Inductive Inference),Samuel Rathmanner and Marcus Hutter. A philosophical treatise of universal induction. Entropy, 13(6):1076–1136, 2011. and was a founder of algorithmic information theory. He was an originator of the branch of artificial intelligence based on machine learning, prediction and probability. He circulated the first report on non-semantic machine learning in 1956."An Inductive Inference Machine", Dartmouth College, N.H., version of Aug. 14, 1956(pdf scanned copy of the original)/ref> Solomonoff first described algorithmic probability in 1960, publishing the theorem that launched Kolmogorov complexity and algorithmic information theory. He first described these results at a conference at Caltech in 1960, and in a report, Feb. 1960, "A Preliminary Report on a General Theory of Inductive Inference." He clar ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Algorithmic Probability

In algorithmic information theory, algorithmic probability, also known as Solomonoff probability, is a mathematical method of assigning a prior probability to a given observation. It was invented by Ray Solomonoff in the 1960s. It is used in inductive inference theory and analyses of algorithms. In his general theory of inductive inference, Solomonoff uses the method together with Bayes' rule to obtain probabilities of prediction for an algorithm's future outputs. In the mathematical formalism used, the observations have the form of finite binary strings viewed as outputs of Turing machines, and the universal prior is a probability distribution over the set of finite binary strings calculated from a probability distribution over programs (that is, inputs to a universal Turing machine). The prior is universal in the Turing-computability sense, i.e. no string has zero probability. It is not computable, but it can be approximated. Overview Algorithmic probability is the main ingre ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Solomonoff Induction

Solomonoff's theory of inductive inference is a mathematical proof that if a universe is generated by an algorithm, then observations of that universe, encoded as a dataset, are best predicted by the smallest executable archive of that dataset. This formalization of Occam's razorJJ McCall. Induction: From Kolmogorov and Solomonoff to De Finetti and Back to Kolmogorov – Metroeconomica, 2004 – Wiley Online Library.D Stork. Foundations of Occam's razor and parsimony in learning from ricoh.com – NIPS 2001 Workshop, 2001A.N. Soklakov. Occam's razor as a formal basis for a physical theorfrom arxiv.org– Foundations of Physics Letters, 2002 – SpringerM Hutter. On the existence and convergence of computable universal priorarxiv.org– Algorithmic Learning Theory, 2003 – Springer for induction was introduced by Ray Solomonoff, based on probability theory and theoretical computer science. In essence, Solomonoff's induction derives the posterior probability of any computable theo ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Kolmogorov Complexity

In algorithmic information theory (a subfield of computer science and mathematics), the Kolmogorov complexity of an object, such as a piece of text, is the length of a shortest computer program (in a predetermined programming language) that produces the object as output. It is a measure of the computational resources needed to specify the object, and is also known as algorithmic complexity, Solomonoff–Kolmogorov–Chaitin complexity, program-size complexity, descriptive complexity, or algorithmic entropy. It is named after Andrey Kolmogorov, who first published on the subject in 1963 and is a generalization of classical information theory. The notion of Kolmogorov complexity can be used to state and prove impossibility results akin to Cantor's diagonal argument, Gödel's incompleteness theorem, and Turing's halting problem. In particular, no program ''P'' computing a lower bound for each text's Kolmogorov complexity can return a value essentially larger than ''P'''s own leng ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Dartmouth Workshop

The Dartmouth Summer Research Project on Artificial Intelligence was a 1956 summer workshop widely consideredKline, Ronald R., Cybernetics, Automata Studies and the Dartmouth Conference on Artificial Intelligence, IEEE Annals of the History of Computing, October–December, 2011, IEEE Computer Society to be the founding event of artificial intelligence as a field. The project lasted approximately six to eight weeks and was essentially an extended brainstorming session. Eleven mathematicians and scientists originally planned to attend; not all of them attended, but more than ten others came for short times. Background In the early 1950s, there were various names for the field of "thinking machines": cybernetics, automata theory, and complex information processing. The variety of names suggests the variety of conceptual orientations. In 1955, John McCarthy, then a young Assistant Professor of Mathematics at Dartmouth College, decided to organize a group to clarify and develo ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Dartmouth Workshop

The Dartmouth Summer Research Project on Artificial Intelligence was a 1956 summer workshop widely consideredKline, Ronald R., Cybernetics, Automata Studies and the Dartmouth Conference on Artificial Intelligence, IEEE Annals of the History of Computing, October–December, 2011, IEEE Computer Society to be the founding event of artificial intelligence as a field. The project lasted approximately six to eight weeks and was essentially an extended brainstorming session. Eleven mathematicians and scientists originally planned to attend; not all of them attended, but more than ten others came for short times. Background In the early 1950s, there were various names for the field of "thinking machines": cybernetics, automata theory, and complex information processing. The variety of names suggests the variety of conceptual orientations. In 1955, John McCarthy, then a young Assistant Professor of Mathematics at Dartmouth College, decided to organize a group to clarify and develo ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

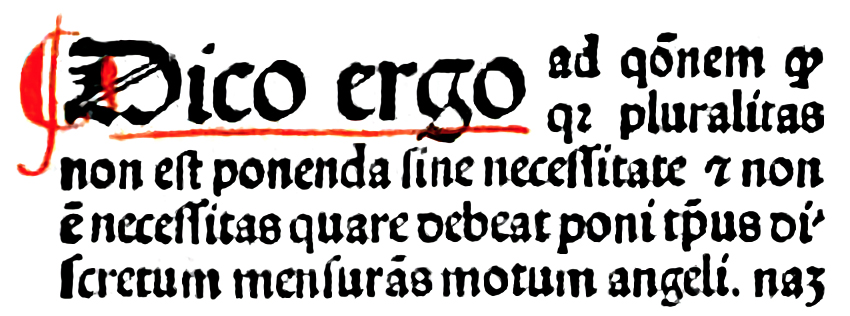

Occam's Razor

Occam's razor, Ockham's razor, or Ocham's razor ( la, novacula Occami), also known as the principle of parsimony or the law of parsimony ( la, lex parsimoniae), is the problem-solving principle that "entities should not be multiplied beyond necessity". It is generally understood in the sense that with competing theories or explanations, the simpler one, for example a model with fewer parameters, is to be preferred. The idea is frequently attributed to English Franciscan friar William of Ockham (), a scholastic philosopher and theologian, although he never used these exact words. This philosophical razor advocates that when presented with competing hypotheses about the same prediction, one should select the solution with the fewest assumptions, and that this is not meant to be a way of choosing between hypotheses that make different predictions. Similarly, in science, Occam's razor is used as an abductive heuristic in the development of theoretical models rather than as a rigoro ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Algorithmic Information Theory

Algorithmic information theory (AIT) is a branch of theoretical computer science that concerns itself with the relationship between computation and information of computably generated objects (as opposed to stochastically generated), such as strings or any other data structure. In other words, it is shown within algorithmic information theory that computational incompressibility "mimics" (except for a constant that only depends on the chosen universal programming language) the relations or inequalities found in information theory. According to Gregory Chaitin, it is "the result of putting Shannon's information theory and Turing's computability theory into a cocktail shaker and shaking vigorously." Besides the formalization of a universal measure for irreducible information content of computably generated objects, some main achievements of AIT were to show that: in fact algorithmic complexity follows (in the self-delimited case) the same inequalities (except for a constant) tha ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Artificial Intelligence

Artificial intelligence (AI) is intelligence—perceiving, synthesizing, and inferring information—demonstrated by machines, as opposed to intelligence displayed by animals and humans. Example tasks in which this is done include speech recognition, computer vision, translation between (natural) languages, as well as other mappings of inputs. The ''Oxford English Dictionary'' of Oxford University Press defines artificial intelligence as: the theory and development of computer systems able to perform tasks that normally require human intelligence, such as visual perception, speech recognition, decision-making, and translation between languages. AI applications include advanced web search engines (e.g., Google), recommendation systems (used by YouTube, Amazon and Netflix), understanding human speech (such as Siri and Alexa), self-driving cars (e.g., Tesla), automated decision-making and competing at the highest level in strategic game systems (such as chess and Go). ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Artificial Intelligence

Artificial intelligence (AI) is intelligence—perceiving, synthesizing, and inferring information—demonstrated by machines, as opposed to intelligence displayed by animals and humans. Example tasks in which this is done include speech recognition, computer vision, translation between (natural) languages, as well as other mappings of inputs. The ''Oxford English Dictionary'' of Oxford University Press defines artificial intelligence as: the theory and development of computer systems able to perform tasks that normally require human intelligence, such as visual perception, speech recognition, decision-making, and translation between languages. AI applications include advanced web search engines (e.g., Google), recommendation systems (used by YouTube, Amazon and Netflix), understanding human speech (such as Siri and Alexa), self-driving cars (e.g., Tesla), automated decision-making and competing at the highest level in strategic game systems (such as chess and Go). ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Technological Singularity

The technological singularity—or simply the singularity—is a hypothetical future point in time at which technological growth becomes uncontrollable and irreversible, resulting in unforeseeable changes to human civilization. According to the most popular version of the singularity hypothesis, I. J. Good, I.J. Good's #Intelligence explosion, intelligence explosion model, an upgradable intelligent agent will eventually enter a "runaway reaction" of self-improvement cycles, each new and more intelligent generation appearing more and more rapidly, causing an "explosion" in intelligence and resulting in a powerful superintelligence that qualitatively far surpasses all human intelligence.Vinge, Vernor"The Coming Technological Singularity: How to Survive in the Post-Human Era", in ''Vision-21: Interdisciplinary Science and Engineering in the Era of Cyberspace'', G. A. Landis, ed., NASA Publication CP-10129, pp. 11–22, 1993. The first person to use the concept of a "singularity" in t ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Machine Learning

Machine learning (ML) is a field of inquiry devoted to understanding and building methods that 'learn', that is, methods that leverage data to improve performance on some set of tasks. It is seen as a part of artificial intelligence. Machine learning algorithms build a model based on sample data, known as training data, in order to make predictions or decisions without being explicitly programmed to do so. Machine learning algorithms are used in a wide variety of applications, such as in medicine, email filtering, speech recognition, agriculture, and computer vision, where it is difficult or unfeasible to develop conventional algorithms to perform the needed tasks.Hu, J.; Niu, H.; Carrasco, J.; Lennox, B.; Arvin, F.,Voronoi-Based Multi-Robot Autonomous Exploration in Unknown Environments via Deep Reinforcement Learning IEEE Transactions on Vehicular Technology, 2020. A subset of machine learning is closely related to computational statistics, which focuses on making predicti ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Inductive Inference

Inductive reasoning is a method of reasoning in which a general principle is derived from a body of observations. It consists of making broad generalizations based on specific observations. Inductive reasoning is distinct from ''deductive'' reasoning. If the premises are correct, the conclusion of a deductive argument is ''certain''; in contrast, the truth of the conclusion of an inductive argument is ''probable'', based upon the evidence given. Types The types of inductive reasoning include generalization, prediction, statistical syllogism, argument from analogy, and causal inference. Inductive generalization A generalization (more accurately, an ''inductive generalization'') proceeds from a premise about a sample to a conclusion about the population. The observation obtained from this sample is projected onto the broader population. : The proportion Q of the sample has attribute A. : Therefore, the proportion Q of the population has attribute A. For example, say there ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |