
Autocorrelation

Autocorrelation, sometimes known as serial correlation in the discrete-time case, is the correlation of a signal with a delayed copy of itself as a function of delay. Informally, it is the similarity between observations of a random variable as a function of the time lag between them. The analysis of autocorrelation is a mathematical tool for finding repeating patterns, such as the presence of a periodic signal obscured by noise, or identifying the missing fundamental frequency in a signal implied by its harmonic frequencies. It is often used in signal processing for analyzing functions or series of values, such as time-domain signals. Different fields of study define autocorrelation differently, and not all of these definitions are equivalent. In some fields, the term is used interchangeably with autocovariance. Unit root processes, trend-stationary processes, autoregressive processes, and moving-average processes are specific forms of processes with autocorrelation. A ...
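The following is a minimal Python sketch of using the sample autocorrelation to recover the period of a periodic signal hidden in noise. Several choices here are illustrative assumptions rather than a standard algorithm: the estimator (mean removed, normalized so that r(0) = 1), the rule for skipping the lobe around lag 0 (start searching at the first negative value), the helper names `autocorr` and `dominant_period`, and the synthetic test signal.

```python
import math
import random


def autocorr(x, max_lag):
    """Sample autocorrelation r(k) for k = 0..max_lag (mean removed, normalized so r(0) = 1)."""
    n = len(x)
    mean = sum(x) / n
    d = [v - mean for v in x]
    denom = sum(v * v for v in d)
    return [sum(d[t] * d[t + k] for t in range(n - k)) / denom for k in range(max_lag + 1)]


def dominant_period(x, max_lag):
    """Lag of the highest autocorrelation peak after the lobe around lag 0 (hypothetical helper)."""
    r = autocorr(x, max_lag)
    # Skip the lobe around lag 0: begin the search at the first lag where r turns negative.
    start = next((k for k in range(1, max_lag + 1) if r[k] < 0), None)
    if start is None:
        return None  # no zero crossing within max_lag, so no period can be read off
    return max(range(start, max_lag + 1), key=lambda k: r[k])


# A sine with a period of 20 samples, buried in Gaussian noise (standard deviation 0.5).
random.seed(0)
signal = [math.sin(2 * math.pi * t / 20) + random.gauss(0, 0.5) for t in range(2000)]
print(dominant_period(signal, 30))  # a lag at or very near 20
```

Here `max_lag` is kept below twice the expected period. Otherwise the peak at lag 40 (two periods) has nearly the same height as the peak at lag 20 and could win by chance.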

Autoregressive Process

In statistics, econometrics, and signal processing, an autoregressive (AR) model is a representation of a type of random process; as such, it is used to describe certain time-varying processes in nature, economics, and other fields. The autoregressive model specifies that the output variable depends linearly on its own previous values and on a stochastic term (an imperfectly predictable term); thus the model takes the form of a stochastic difference equation (or recurrence relation, which should not be confused with a differential equation). Together with the moving-average (MA) model, it is a special case and key component of the more general autoregressive–moving-average (ARMA) and autoregressive integrated moving average (ARIMA) models of time series, which have a more complicated stochastic structure; it is also a special case of the vector autoregressive (VAR) model, which consists of a system of more than one interlocking stochastic difference equation in more than one evolving random vari ...
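To make the "stochastic difference equation" concrete, here is a minimal sketch of the AR(p) recurrence $X_t = c + \sum_{i=1}^{p} \varphi_i X_{t-i} + \varepsilon_t$. It assumes the noise terms are passed in explicitly and that values before $t = 0$ are zero. The function name `simulate_ar` is hypothetical.

```python
def simulate_ar(phi, c, eps):
    """Run the AR(p) recurrence X_t = c + sum_i phi_i * X_{t-i} + eps_t.

    phi: coefficients phi_1..phi_p; eps: noise terms eps_0, eps_1, ...
    Values before t = 0 are taken to be 0 (an assumption made to start the recursion).
    """
    x = []
    for t, e in enumerate(eps):
        # Weighted sum of the previous p outputs that exist so far.
        ar_part = sum(p * x[t - i] for i, p in enumerate(phi, start=1) if t - i >= 0)
        x.append(c + ar_part + e)
    return x


# With a single unit shock and phi_1 = 0.5, the shock decays geometrically.
print(simulate_ar([0.5], 0.0, [1.0, 0.0, 0.0, 0.0]))  # [1.0, 0.5, 0.25, 0.125]
```

Feeding `eps` from a random generator such as `random.gauss` produces one noisy realization of the process. Passing the noise in, rather than drawing it inside the function, keeps the recurrence itself deterministic and easy to check.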

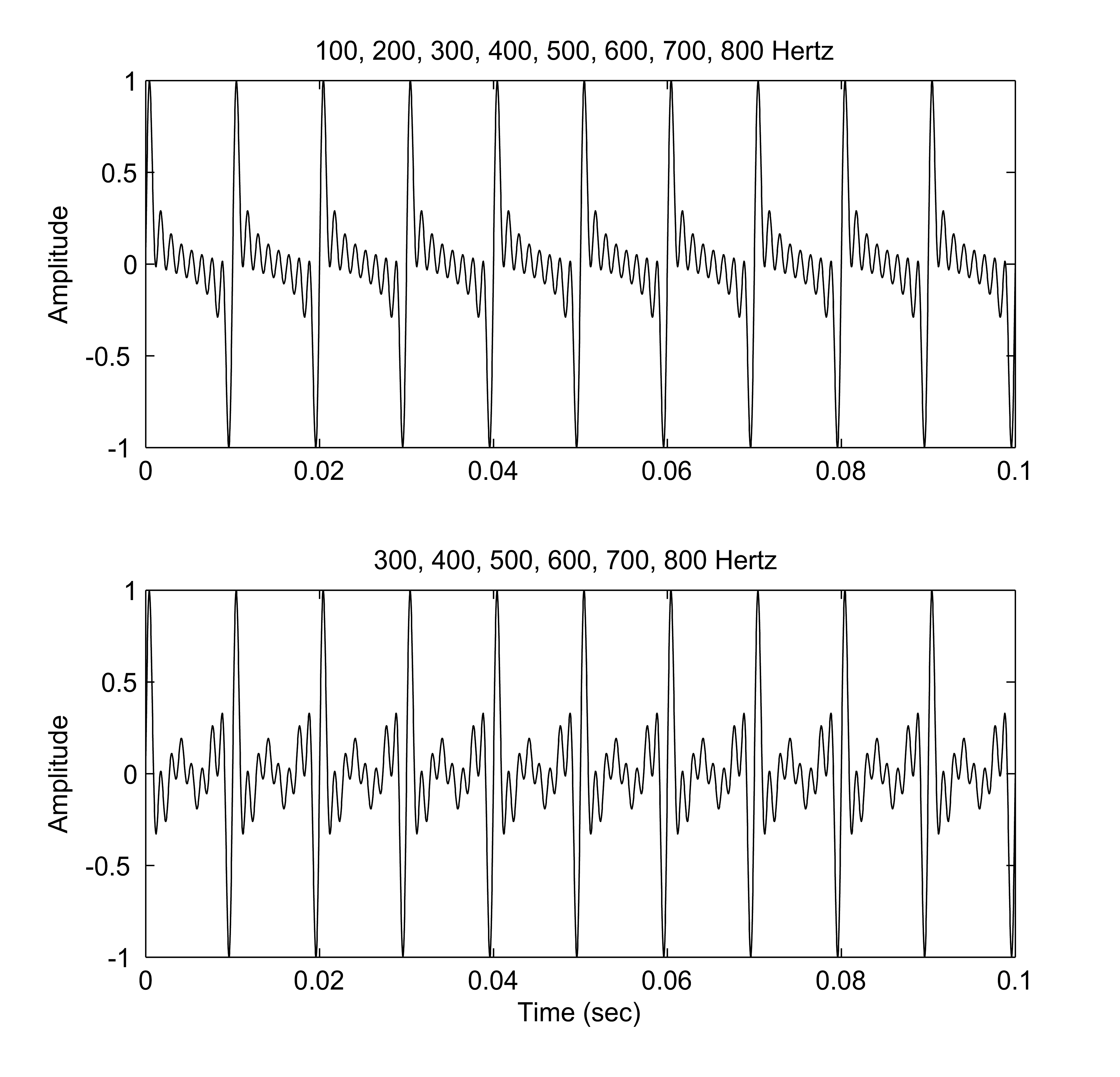

Missing Fundamental Frequency

A harmonic sound is said to have a missing fundamental, suppressed fundamental, or phantom fundamental when its overtones suggest a fundamental frequency but the sound lacks a component at the fundamental frequency itself. The brain perceives the pitch of a tone not only by its fundamental frequency, but also by the periodicity implied by the relationship between the higher harmonics; we may perceive the same pitch (perhaps with a different timbre) even if the fundamental frequency is missing from a tone. For example, when a note (that is not a pure tone) has a pitch of 100 Hz, it will consist of frequency components that are integer multiples of that value (e.g. 100, 200, 300, 400, 500, ... Hz). However, smaller loudspeakers may not reproduce low frequencies, so in this example the 100 Hz component may be missing. Nevertheless, a pitch corresponding to the fundamental may still be heard.

Explanation: A low pitch (also known as the pitch of the missing fundamental ...
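The 100 Hz example can be checked numerically with autocorrelation. The sketch below synthesizes only the 200, 300, 400, and 500 Hz harmonics, so there is no 100 Hz component. It then finds the strongest autocorrelation peak after lag 0. The sampling rate, the helper names `autocorr` and `estimate_pitch`, and the peak-search rule are illustrative assumptions. This is a bare-bones estimate, not a production pitch tracker.

```python
import math


def autocorr(x, max_lag):
    """Sample autocorrelation r(k), k = 0..max_lag, normalized so r(0) = 1."""
    n = len(x)
    mean = sum(x) / n
    d = [v - mean for v in x]
    denom = sum(v * v for v in d)
    return [sum(d[t] * d[t + k] for t in range(n - k)) / denom for k in range(max_lag + 1)]


def estimate_pitch(x, fs, max_lag):
    """Pitch estimate fs / lag, where lag is the strongest autocorrelation peak after lag 0."""
    r = autocorr(x, max_lag)
    # Skip the lobe around lag 0 by starting at the first negative autocorrelation value.
    start = next((k for k in range(1, max_lag + 1) if r[k] < 0), None)
    if start is None:
        return None
    lag = max(range(start, max_lag + 1), key=lambda k: r[k])
    return fs / lag


fs = 8000  # sampling rate in Hz (illustrative)
# Harmonics 2..5 of 100 Hz (200, 300, 400, 500 Hz); no 100 Hz component at all.
tone = [sum(math.sin(2 * math.pi * 100 * h * n / fs) for h in range(2, 6)) for n in range(4000)]
print(estimate_pitch(tone, fs, 120))  # 100.0
```

The peak falls at a lag of 80 samples (10 ms). That is the period shared by all four harmonics, so the estimate recovers the absent 100 Hz fundamental.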

Moving Average Process

In time series analysis, the moving-average model (MA model), also known as moving-average process, is a common approach for modeling univariate time series. The moving-average model specifies that the output variable depends linearly on the current and various past values of a stochastic (imperfectly predictable) term. Together with the autoregressive (AR) model, the moving-average model is a special case and key component of the more general ARMA and ARIMA models of time series, which have a more complicated stochastic structure. The moving-average model should not be confused with the moving average, a distinct concept despite some similarities. Unlike the AR model, a finite MA model is always stationary.

Definition: The notation MA(q) refers to the moving-average model of order q:

$$X_t = \mu + \varepsilon_t + \theta_1 \varepsilon_{t-1} + \cdots + \theta_q \varepsilon_{t-q} = \mu + \sum_{i=1}^{q} \theta_i \varepsilon_{t-i} + \varepsilon_t,$$

where $\mu$ is the mean of the series, the $\theta_1, \ldots, \theta_q$ are t ...
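One consequence of the MA(q) definition is that the autocorrelation is exactly zero beyond lag q. The sketch below computes the theoretical autocorrelation using $\gamma(k) = \sigma^2 \sum_{j} \theta_j \theta_{j+k}$ with $\theta_0 = 1$. It assumes white noise with variance $\sigma^2$, which cancels in $\rho(k) = \gamma(k)/\gamma(0)$, and the mean $\mu$ plays no role. The function name `ma_acf` is hypothetical.

```python
def ma_acf(theta, max_lag):
    """Theoretical autocorrelation rho(k) of an MA(q) process with coefficients theta_1..theta_q.

    Uses gamma(k) = sigma^2 * sum_j theta_j * theta_{j+k} with theta_0 = 1;
    sigma^2 cancels in rho(k) = gamma(k) / gamma(0), so it is not needed.
    """
    coeffs = [1.0] + list(theta)  # theta_0 = 1 multiplies eps_t
    q = len(theta)
    gamma = [
        sum(coeffs[j] * coeffs[j + k] for j in range(q + 1 - k)) if k <= q else 0.0
        for k in range(max_lag + 1)
    ]
    return [g / gamma[0] for g in gamma]


print(ma_acf([0.5], 3))  # [1.0, 0.4, 0.0, 0.0]
```

For MA(1) with $\theta_1 = 0.5$, this gives $\rho(1) = 0.5 / (1 + 0.5^2) = 0.4$, and every later lag is zero.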

Autocovariance

In probability theory and statistics, given a stochastic process, the autocovariance is a function that gives the covariance of the process with itself at pairs of time points. Autocovariance is closely related to the autocorrelation of the process in question.

Definition: With the usual notation $\operatorname{E}$ for the expectation operator, if the stochastic process $\{X_t\}$ has the mean function $\mu_t = \operatorname{E}[X_t]$, then the autocovariance is given by

$$K_{XX}(t_1, t_2) = \operatorname{cov}\left[X_{t_1}, X_{t_2}\right] = \operatorname{E}\left[(X_{t_1} - \mu_{t_1})(X_{t_2} - \mu_{t_2})\right] = \operatorname{E}\left[X_{t_1} X_{t_2}\right] - \mu_{t_1} \mu_{t_2},$$

where $t_1$ and $t_2$ are two moments in time.

Definition for a weakly stationary process: If $\{X_t\}$ is a weakly stationary (WSS) process, then the following are true: $\mu_{t_1} = \mu_{t_2} \triangleq \mu$ for all $t_1, t_2$ and ...
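For a single observed series, the autocovariance is usually estimated at each lag. The sketch below assumes the process is weakly stationary, so that the autocovariance depends only on the lag $k = t_2 - t_1$. It uses the common $1/n$ normalization; dividing by $n - k$ is another convention. The function name `sample_autocovariance` is hypothetical.

```python
def sample_autocovariance(x, max_lag):
    """Estimate gamma(k) = E[(X_t - mu)(X_{t+k} - mu)] for k = 0..max_lag from one series.

    Assumes weak stationarity (so gamma depends only on the lag k) and uses the
    1/n normalization; dividing by n - k instead is another convention.
    """
    n = len(x)
    mu = sum(x) / n  # the sample mean stands in for the unknown constant mean
    return [sum((x[t] - mu) * (x[t + k] - mu) for t in range(n - k)) / n for k in range(max_lag + 1)]


print(sample_autocovariance([1, 2, 3, 4], 3))  # [1.25, 0.3125, -0.375, -0.5625]
```

Dividing each entry by the lag-0 value (the sample variance) turns these autocovariances into autocorrelations.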

Complex Conjugation

In mathematics, the complex conjugate of a complex number is the number with an equal real part and an imaginary part equal in magnitude but opposite in sign. That is, if $a$ and $b$ are real, the complex conjugate of $a + bi$ is $a - bi$. The complex conjugate of $z$ is often denoted as $\overline{z}$ or $z^*$. In polar form, the conjugate of $re^{i\varphi}$ is $re^{-i\varphi}$; this can be shown using Euler's formula. The product of a complex number and its conjugate is a real number: $a^2 + b^2$ (or $r^2$ in polar coordinates). If a root of a univariate polynomial with real coefficients is complex, then its complex conjugate is also a root.

Notation: The complex conjugate of a complex number $z$ is written as $\overline{z}$ or $z^*$. The first notation, a vinculum, avoids confusion with the notation for the conjugate transpose of a matrix, which can be thought of as a generalization of the complex conjugate. The second is preferred in physics, where the dagger (†) is used for the conjugate tra ...
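The properties listed above can be checked directly with Python's built-in `complex` type and the standard `cmath` module. The polynomial $z^2 - 2z + 5$ is an arbitrary illustrative choice with real coefficients.

```python
import cmath
import math

z = 3 + 4j
zc = z.conjugate()  # built-in method on Python's complex type
print(zc)  # (3-4j)

# z * conj(z) = a^2 + b^2, a real number (the imaginary part is 0).
print(z * zc)  # (25+0j)

# Polar form: same modulus r, negated argument phi.
r, phi = cmath.polar(z)
rc, phic = cmath.polar(zc)
print(math.isclose(r, rc), math.isclose(phi, -phic))  # True True


# Real-coefficient polynomial p(w) = w^2 - 2w + 5: roots 1 + 2i and its conjugate 1 - 2i.
def p(w):
    return w * w - 2 * w + 5


print(p(1 + 2j), p(1 - 2j))  # both evaluate to zero
```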

Expected Value

In probability theory, the expected value (also called expectation, expectancy, mathematical expectation, mean, average, or first moment) is a generalization of the weighted average. Informally, the expected value is the arithmetic mean of a large number of independently selected outcomes of a random variable. The expected value of a random variable with a finite number of outcomes is a weighted average of all possible outcomes. In the case of a continuum of possible outcomes, the expectation is defined by integration. In the axiomatic foundation for probability provided by measure theory, the expectation is given by Lebesgue integration. The expected value of a random variable $X$ is often denoted by $\operatorname{E}(X)$, $\operatorname{E}[X]$, or $\operatorname{E}X$, with $\operatorname{E}$ also often stylized as $E$ or $\mathbb{E}$.

History: The idea of the expected value originated in the middle of the 17th century from the study of the so-called problem of points, which seeks to divide the stakes *in a fair way* between two players, who have to end th ...
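For a finite number of outcomes, the expected value is the probability-weighted average described above. The sketch below uses exact fractions to avoid rounding. The function name `expected_value` and the two example distributions are illustrative.

```python
from fractions import Fraction


def expected_value(dist):
    """Weighted average of outcomes; dist maps each outcome to its probability."""
    if sum(dist.values()) != 1:
        raise ValueError("probabilities must sum to 1")
    return sum(x * p for x, p in dist.items())


fair_die = {face: Fraction(1, 6) for face in range(1, 7)}
print(expected_value(fair_die))  # 7/2

loaded_coin = {0: Fraction(1, 4), 1: Fraction(3, 4)}
print(expected_value(loaded_coin))  # 3/4
```

The fair die's expected value, 7/2, is not itself a possible outcome. This is typical of a weighted average.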

Variance

In probability theory and statistics, variance is the expectation of the squared deviation of a random variable from its population mean or sample mean. Variance is a measure of dispersion, meaning it is a measure of how far a set of numbers is spread out from their average value. Variance has a central role in statistics, where some ideas that use it include descriptive statistics, statistical inference, hypothesis testing, goodness of fit, and Monte Carlo sampling. Variance is an important tool in the sciences, where statistical analysis of data is common. The variance is the square of the standard deviation, the second central moment of a distribution, and the covariance of the random variable with itself, and it is often represented by $\sigma^2$, $s^2$, $\operatorname{Var}(X)$, $V(X)$, or $\mathbb{V}(X)$. An advantage of variance as a measure of dispersion is that it is more amenable to algebraic manipulation than other measures of dispersion such as the expected absolute deviation; for e ...
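As a small numerical check of the definition, the sketch below computes the population variance of an illustrative data set as the mean squared deviation. It compares the result with the algebraically equivalent form $\operatorname{E}[X^2] - (\operatorname{E}[X])^2$ and with Python's `statistics` module. The function name `population_variance` is hypothetical.

```python
import statistics


def population_variance(xs):
    """Var(X) = E[(X - mu)^2] for a finite population of equally likely values."""
    mu = sum(xs) / len(xs)
    return sum((x - mu) ** 2 for x in xs) / len(xs)


data = [2, 4, 4, 4, 5, 5, 7, 9]
print(population_variance(data))  # 4.0

# Equivalent form Var(X) = E[X^2] - (E[X])^2 gives the same value for this data.
mean_sq = sum(x * x for x in data) / len(data)
mean = sum(data) / len(data)
print(mean_sq - mean ** 2)  # 4.0

# statistics.pvariance divides by n; statistics.variance (sample variance) divides by n - 1.
print(statistics.pvariance(data), statistics.variance(data))
```

The standard deviation here is $\sqrt{4} = 2$. In floating point, the $\operatorname{E}[X^2] - (\operatorname{E}[X])^2$ form can lose precision through cancellation when the mean is large relative to the spread. That is one reason the squared-deviation form is usually preferred in code.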

Mean

There are several kinds of mean in mathematics, especially in statistics. Each mean serves to summarize a given group of data, often to better understand the overall value (magnitude and sign) of a given data set. For a data set, the *arithmetic mean*, also known as "arithmetic average", is a measure of central tendency of a finite set of numbers: specifically, the sum of the values divided by the number of values. The arithmetic mean of a set of numbers $x_1, x_2, \ldots, x_n$ is typically denoted using an overhead bar, $\bar{x}$. If the data set is based on a series of observations obtained by sampling from a statistical population, the arithmetic mean is called the *sample mean* ($\bar{x}$) to distinguish it from the mean, or expected value, of the underlying distribution, the *population mean* (denoted $\mu$ or $\mu_x$) (Underhill, L.G.; Bradfield, D. (1998). *Introstat*. Juta and Company Ltd. p. 181). Outside probability and statistics, a wide range of other notions of mean are o ...
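As a worked instance of the arithmetic-mean definition, take the illustrative data set $2, 4, 4, 4, 5, 5, 7, 9$ (chosen only to make the arithmetic easy):

$$\bar{x} = \frac{1}{n}\sum_{i=1}^{n} x_i = \frac{2 + 4 + 4 + 4 + 5 + 5 + 7 + 9}{8} = \frac{40}{8} = 5.$$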

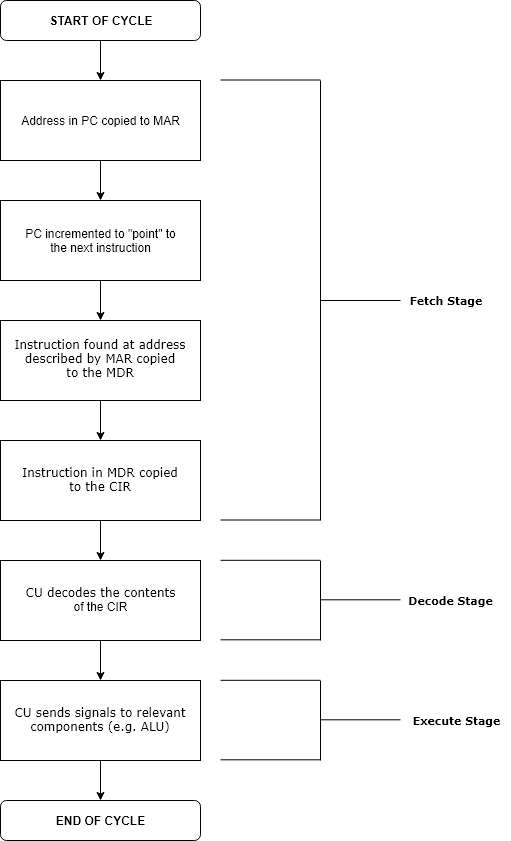

Execution (computing)

Execution in computer and software engineering is the process by which a computer or virtual machine reads and acts on the instructions of a computer program. Each instruction of a program is a description of a particular action which must be carried out in order for a specific problem to be solved. Execution involves repeatedly following a fetch–decode–execute cycle for each instruction, carried out by the control unit. As the executing machine follows the instructions, specific effects are produced in accordance with the semantics of those instructions. Programs for a computer may be executed in a batch process without human interaction, or a user may type commands in an interactive session of an interpreter. In this case, the "commands" are simply program instructions, whose execution is chained together. The term *run* is used almost synonymously. A related meaning of both "to run" and "to execute" refers to the specific action of a user starting (or *launching* or invoki ...
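The fetch–decode–execute cycle can be illustrated with a toy interpreter. The stack machine below and its opcodes `PUSH`, `ADD`, `MUL`, and `HALT` are hypothetical and invented for this sketch. They do not correspond to any real instruction set. The point is the loop structure: fetch the instruction at the program counter, decode it into an opcode and operands, then execute its effect.

```python
def run(program):
    """Toy fetch-decode-execute loop for a hypothetical stack machine.

    Each instruction is a tuple (opcode, *operands); the opcodes PUSH, ADD, MUL and HALT
    are invented for illustration and do not correspond to any real instruction set.
    """
    stack = []
    pc = 0  # program counter: index of the next instruction to fetch
    while True:
        if pc >= len(program):
            raise RuntimeError("ran past the end of the program without HALT")
        instr = program[pc]  # fetch
        pc += 1
        op, *args = instr  # decode
        if op == "PUSH":  # execute: each opcode has its own effect on the machine state
            stack.append(args[0])
        elif op == "ADD":
            b, a = stack.pop(), stack.pop()
            stack.append(a + b)
        elif op == "MUL":
            b, a = stack.pop(), stack.pop()
            stack.append(a * b)
        elif op == "HALT":
            return stack[-1] if stack else None
        else:
            raise ValueError(f"unknown opcode {op!r}")


# (2 + 3) * 4
program = [("PUSH", 2), ("PUSH", 3), ("ADD",), ("PUSH", 4), ("MUL",), ("HALT",)]
print(run(program))  # 20
```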

Random Process

In probability theory and related fields, a stochastic or random process is a mathematical object usually defined as a family of random variables. Stochastic processes are widely used as mathematical models of systems and phenomena that appear to vary in a random manner. Examples include the growth of a bacterial population, an electrical current fluctuating due to thermal noise, or the movement of a gas molecule. Stochastic processes have applications in many disciplines such as biology, chemistry, ecology, neuroscience, physics, image processing, signal processing, control theory, information theory, computer science, cryptography and telecommunications. Furthermore, seemingly random changes in financial markets have motivated the extensive use of stochastic processes in finance. Applications and the study of phenomena have in turn inspired the proposal of new stochastic processes. Examples of such stochastic processes include the Wiener process or Brownian motion proc ...
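A simple discrete-time example of a "family of random variables" is the symmetric random walk $\{S_t\}$, with $S_0 = 0$ and $S_t = S_{t-1} + Z_t$, where each step $Z_t$ is $+1$ or $-1$ with equal probability. A suitably rescaled random walk approximates the Wiener process mentioned above. In the sketch below, the function name `random_walk` and the seeding scheme are illustrative assumptions. Each call returns one sample path of the process.

```python
import random


def random_walk(n_steps, seed=None):
    """One sample path S_0..S_n of the simple symmetric random walk (steps of +1 or -1)."""
    rng = random.Random(seed)  # separate seeded generator so a path can be reproduced
    path = [0]
    for _ in range(n_steps):
        path.append(path[-1] + rng.choice((-1, 1)))
    return path


print(random_walk(10, seed=1))
print(random_walk(10, seed=2))  # a different seed usually gives a different path
```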

Realization (probability)

In probability and statistics, a realization, observation, or observed value of a random variable is the value that is actually observed (what actually happened). The random variable itself is the process dictating how the observation comes about. Statistical quantities computed from realizations without deploying a statistical model are often called "empirical", as in empirical distribution function or empirical probability. Conventionally, to avoid confusion, upper-case letters denote random variables; the corresponding lower-case letters denote their realizations.

Formal definition: In more formal probability theory, a random variable is a function *X* defined from a sample space Ω to a measurable space called the state space. If an element in Ω is mapped to an element in the state space by *X*, then that element in the state space is a realization. Elements of the sample space can be thought of as all the different possibilities that *could* happen; while a reali ...
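The formal definition can be mirrored in a few lines of code. Take two coin tosses as an illustrative experiment. The sample space Ω holds all four outcomes, and the random variable `X` is a function mapping each outcome to its number of heads (state space {0, 1, 2}). A realization `x` is the value `X` takes on the one outcome that actually occurs. Following the convention above, upper case is used for the random variable and lower case for the realization. The names here are chosen for this sketch.

```python
import random
from itertools import product

# Sample space for two coin tosses: every possible outcome omega.
omega_space = list(product("HT", repeat=2))  # [('H','H'), ('H','T'), ('T','H'), ('T','T')]


def X(omega):
    """Random variable X: maps an outcome to its number of heads (state space {0, 1, 2})."""
    return omega.count("H")


state_space = sorted({X(w) for w in omega_space})
print(state_space)  # [0, 1, 2]

# "Running the experiment": one outcome occurs, and X(omega) is the realization x.
rng = random.Random(0)
omega = rng.choice(omega_space)
x = X(omega)
print(omega, x)
```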

Continuous-time

In mathematical dynamics, discrete time and continuous time are two alternative frameworks within which variables that evolve over time are modeled.

Discrete time: Discrete time views values of variables as occurring at distinct, separate "points in time", or equivalently as being unchanged throughout each non-zero region of time ("time period"); that is, time is viewed as a discrete variable. Thus a non-time variable jumps from one value to another as time moves from one time period to the next. This view of time corresponds to a digital clock that gives a fixed reading of 10:37 for a while, and then jumps to a new fixed reading of 10:38, and so on. In this framework, each variable of interest is measured once at each time period. The number of measurements between any two time periods is finite. Measurements are typically made at sequential integer values of the variable "time". A discrete signal or discrete-time signal is a time series consisting of a sequence of quantities. ...
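The discrete-time view can be sketched as sampling a continuous-time signal once per period, at $t = kT$. Between samples, the value is held constant, like the digital clock described above. The helper names `sample` and `held_value` and the example signal $t^2$ are hypothetical choices for this illustration.

```python
import math


def sample(signal, period, n):
    """Discrete-time view: measure a continuous-time signal once per period, at t = k * period."""
    return [signal(k * period) for k in range(n)]


def held_value(samples, period, t):
    """Reading reported at time t: constant over each period [k * period, (k + 1) * period)."""
    k = math.floor(t / period)
    return samples[k]


samples = sample(lambda t: t * t, 0.5, 4)
print(samples)  # [0.0, 0.25, 1.0, 2.25]
print(held_value(samples, 0.5, 1.2))  # 1.0, since 1.2 lies in the period [1.0, 1.5)
```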