In statistical model

A statistical model is a mathematical model that embodies a set of statistical assumptions concerning the generation of Sample (statistics), sample data (and similar data from a larger Statistical population, population). A statistical model repres ...

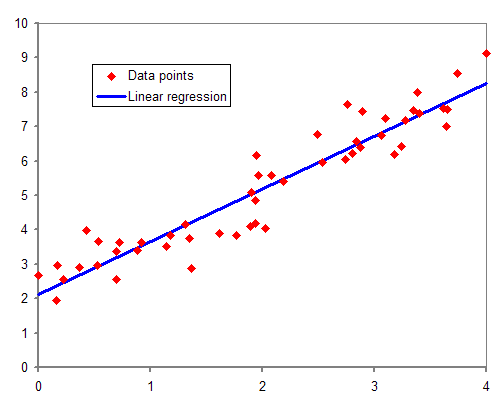

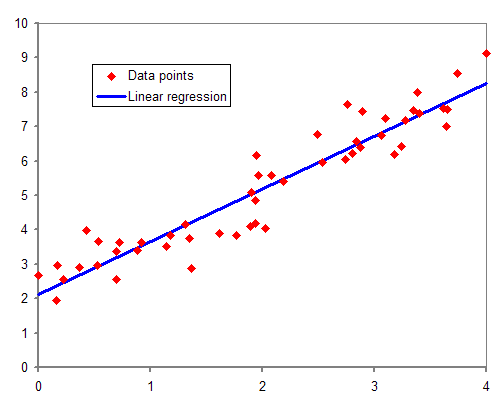

ing, regression analysis is a set of statistical processes for estimating the relationships between a dependent variable

Dependent and independent variables are variables in mathematical modeling, statistical modeling and experimental sciences. Dependent variables receive this name because, in an experiment, their values are studied under the supposition or demand ...

(often called the 'outcome' or 'response' variable, or a 'label' in machine learning parlance) and one or more independent variable

Dependent and independent variables are variables in mathematical modeling, statistical modeling and experimental sciences. Dependent variables receive this name because, in an experiment, their values are studied under the supposition or demand ...

s (often called 'predictors', 'covariates', 'explanatory variables' or 'features'). The most common form of regression analysis is linear regression

In statistics, linear regression is a linear approach for modelling the relationship between a scalar response and one or more explanatory variables (also known as dependent and independent variables). The case of one explanatory variable is call ...

, in which one finds the line (or a more complex linear combination) that most closely fits the data according to a specific mathematical criterion. For example, the method of ordinary least squares

In statistics, ordinary least squares (OLS) is a type of linear least squares method for choosing the unknown parameters in a linear regression model (with fixed level-one effects of a linear function of a set of explanatory variables) by the prin ...

computes the unique line (or hyperplane

In geometry, a hyperplane is a subspace whose dimension is one less than that of its ''ambient space''. For example, if a space is 3-dimensional then its hyperplanes are the 2-dimensional planes, while if the space is 2-dimensional, its hyper ...

) that minimizes the sum of squared differences between the true data and that line (or hyperplane). For specific mathematical reasons (see linear regression

In statistics, linear regression is a linear approach for modelling the relationship between a scalar response and one or more explanatory variables (also known as dependent and independent variables). The case of one explanatory variable is call ...

), this allows the researcher to estimate the conditional expectation

In probability theory, the conditional expectation, conditional expected value, or conditional mean of a random variable is its expected value – the value it would take “on average” over an arbitrarily large number of occurrences – give ...

(or population average value

In colloquial, ordinary language, an average is a single number taken as representative of a list of numbers, usually the sum of the numbers divided by how many numbers are in the list (the arithmetic mean). For example, the average of the numbers ...

) of the dependent variable when the independent variables take on a given set of values. Less common forms of regression use slightly different procedures to estimate alternative location parameters

In geography, location or place are used to denote a region (point, line, or area) on Earth's surface or elsewhere. The term ''location'' generally implies a higher degree of certainty than ''place'', the latter often indicating an entity with an ...

(e.g., quantile regression or Necessary Condition Analysis) or estimate the conditional expectation across a broader collection of non-linear models (e.g., nonparametric regression).

Regression analysis is primarily used for two conceptually distinct purposes.

First, regression analysis is widely used for prediction and forecasting, where its use has substantial overlap with the field of machine learning.

Second, in some situations regression analysis can be used to infer causal relationships between the independent and dependent variables. Importantly, regressions by themselves only reveal relationships between a dependent variable and a collection of independent variables in a fixed dataset. To use regressions for prediction or to infer causal relationships, respectively, a researcher must carefully justify why existing relationships have predictive power for a new context or why a relationship between two variables has a causal interpretation. The latter is especially important when researchers hope to estimate causal relationships using observational data.

History

The earliest form of regression was the method of least squares, which was published by Legendre in 1805 (A.M. Legendre, ''Nouvelles méthodes pour la détermination des orbites des comètes'', Firmin Didot, Paris, 1805; “Sur la Méthode des moindres quarrés” appears as an appendix) and by Gauss in 1809 (see Chapter 1 of: Angrist, J. D., & Pischke, J. S. (2008). ''Mostly Harmless Econometrics: An Empiricist's Companion''. Princeton University Press). Legendre and Gauss both applied the method to the problem of determining, from astronomical observations, the orbits of bodies about the Sun (mostly comets, but also later the then newly discovered minor planets). Gauss published a further development of the theory of least squares in 1821 (C.F. Gauss, ''Theoria combinationis observationum erroribus minimis obnoxiae'', 1821/1823), including a version of the Gauss–Markov theorem.

The term "regression" was coined by Francis Galton in the 19th century to describe a biological phenomenon. The phenomenon was that the heights of descendants of tall ancestors tend to regress down towards a normal average (a phenomenon also known as regression toward the mean).

For Galton, regression had only this biological meaning, but his work was later extended by Udny Yule and Karl Pearson to a more general statistical context. In the work of Yule and Pearson, the joint distribution of the response and explanatory variables is assumed to be Gaussian. This assumption was weakened by R.A. Fisher in his works of 1922 and 1925. Fisher assumed that the conditional distribution of the response variable is Gaussian, but the joint distribution need not be. In this respect, Fisher's assumption is closer to Gauss's formulation of 1821.

In the 1950s and 1960s, economists used electromechanical desk "calculators" to calculate regressions. Before 1970, it sometimes took up to 24 hours to receive the result from one regression.

Regression methods continue to be an area of active research. In recent decades, new methods have been developed for robust regression, regression involving correlated responses such as time series and growth curves, regression in which the predictor (independent variable) or response variables are curves, images, graphs, or other complex data objects, regression methods accommodating various types of missing data, nonparametric regression, Bayesian methods for regression, regression in which the predictor variables are measured with error, regression with more predictor variables than observations, and causal inference with regression.

Regression model

In practice, researchers first select a model they would like to estimate and then use their chosen method (e.g., ordinary least squares) to estimate the parameters of that model. Regression models involve the following components:

* The unknown parameters, often denoted as a scalar or vector $\beta$.

* The independent variables, which are observed in data and are often denoted as a vector $X_i$ (where $i$ denotes a row of data).

* The dependent variable, which is observed in data and often denoted using the scalar $Y_i$.

* The error terms, which are ''not'' directly observed in data and are often denoted using the scalar $e_i$.

In various fields of application, different terminologies are used in place of dependent and independent variables.

Most regression models propose that $Y_i$ is a function of $X_i$ and $\beta$, with $e_i$ representing an additive error term that may stand in for un-modeled determinants of $Y_i$ or random statistical noise:

: $Y_i = f(X_i, \beta) + e_i$

The researchers' goal is to estimate the function $f(X_i, \beta)$ that most closely fits the data. To carry out regression analysis, the form of the function $f$ must be specified. Sometimes the form of this function is based on knowledge about the relationship between $Y_i$ and $X_i$ that does not rely on the data. If no such knowledge is available, a flexible or convenient form for $f$ is chosen. For example, a simple univariate regression may propose $f(X_i, \beta) = \beta_0 + \beta_1 X_i$, suggesting that the researcher believes $Y_i = \beta_0 + \beta_1 X_i + e_i$ to be a reasonable approximation for the statistical process generating the data.

Once researchers determine their preferred statistical model, different forms of regression analysis provide tools to estimate the parameters $\beta$. For example, least squares (including its most common variant, ordinary least squares) finds the value of $\beta$ that minimizes the sum of squared errors $\sum_i (Y_i - f(X_i, \beta))^2$. A given regression method will ultimately provide an estimate of $\beta$, usually denoted $\hat\beta$ to distinguish the estimate from the true (unknown) parameter value that generated the data. Using this estimate, the researcher can then use the ''fitted value'' $\hat Y_i = f(X_i, \hat\beta)$ for prediction or to assess the accuracy of the model in explaining the data. Whether the researcher is intrinsically interested in the estimate $\hat\beta$ or the predicted value $\hat Y_i$ will depend on context and their goals. As described in ordinary least squares, least squares is widely used because the estimated function $f(X_i, \hat\beta)$ approximates the conditional expectation $E(Y_i \mid X_i)$. However, alternative variants (e.g., least absolute deviations or quantile regression) are useful when researchers want to model other functions $f(X_i, \beta)$.
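The estimation step described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the data are simulated from a hypothetical linear process, the helper name `fit_least_squares` is invented for this example, and NumPy's `numpy.linalg.lstsq` stands in for whatever least squares routine a researcher would actually use.

```python
import numpy as np

def fit_least_squares(X, y):
    """Return the parameter vector that minimizes sum((y - X @ beta) ** 2)."""
    beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta_hat

# Hypothetical data: Y_i = 1 + 2 * X_i + e_i with standard normal noise.
rng = np.random.default_rng(0)
x = rng.uniform(0.0, 10.0, size=200)
y = 1.0 + 2.0 * x + rng.normal(0.0, 1.0, size=200)

# Design matrix with a column of ones so that beta[0] acts as the intercept.
X = np.column_stack([np.ones_like(x), x])

beta_hat = fit_least_squares(X, y)   # estimate of (beta_0, beta_1)
y_hat = X @ beta_hat                 # fitted values
sse = np.sum((y - y_hat) ** 2)       # minimized sum of squared errors
```

Any other parameter vector, such as `beta_hat + 0.01`, gives a strictly larger sum of squared errors on the same data, which is what "minimizes" means here.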

There must be sufficient data to estimate a regression model. For example, suppose that a researcher has access to $N$ rows of data with one dependent and two independent variables: $(Y_i, X_{1i}, X_{2i})$. Suppose further that the researcher wants to estimate a bivariate linear model via least squares: $Y_i = \beta_0 + \beta_1 X_{1i} + \beta_2 X_{2i} + e_i$. If the researcher only has access to $N = 2$ data points, then they could find infinitely many combinations $(\hat\beta_0, \hat\beta_1, \hat\beta_2)$ that explain the data equally well: any combination can be chosen that satisfies $Y_i = \hat\beta_0 + \hat\beta_1 X_{1i} + \hat\beta_2 X_{2i}$ for both data points, all of which lead to $\sum_i \hat e_i^2 = \sum_i (Y_i - (\hat\beta_0 + \hat\beta_1 X_{1i} + \hat\beta_2 X_{2i}))^2 = 0$ and are therefore valid solutions that minimize the sum of squared residuals. To understand why there are infinitely many options, note that the system of $N = 2$ equations is to be solved for 3 unknowns, which makes the system underdetermined. Alternatively, one can visualize infinitely many planes in 3-dimensional space that go through $N = 2$ fixed points.
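The underdetermined case can be made concrete with a small numerical sketch. The two data points below are made up for illustration; the code finds one exact solution with `numpy.linalg.lstsq` (which returns the minimum-norm solution) and then builds a second, different solution by moving along the null space of the design matrix.

```python
import numpy as np

# Hypothetical data: N = 2 rows, 3 unknowns (intercept, beta_1, beta_2).
X = np.array([[1.0, 2.0, 3.0],
              [1.0, 4.0, 1.0]])   # columns: constant, X1, X2
y = np.array([5.0, 6.0])

# One exact solution (the minimum-norm one) and the rank of X.
beta_a, _, rank, _ = np.linalg.lstsq(X, y, rcond=None)

# The last right-singular vector spans the null space of X when rank is 2,
# so adding any multiple of it leaves the fitted values unchanged.
_, _, vt = np.linalg.svd(X)
null_dir = vt[-1]
beta_b = beta_a + 2.5 * null_dir

resid_a = y - X @ beta_a
resid_b = y - X @ beta_b
```

Both `beta_a` and `beta_b` reproduce the two observations exactly, so the sum of squared residuals is zero for each and least squares cannot choose between them.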

More generally, to estimate a least squares model with $k$ distinct parameters, one must have $N \geq k$ distinct data points. If $N > k$, then there does not generally exist a set of parameters that will perfectly fit the data. The quantity $N - k$ appears often in regression analysis, and is referred to as the degrees of freedom in the model. Moreover, to estimate a least squares model, the independent variables must be linearly independent: one must ''not'' be able to reconstruct any of the independent variables as a weighted sum of the remaining independent variables. As discussed in ordinary least squares, this condition ensures that $X^\top X$ is an invertible matrix and therefore that a unique solution $\hat\beta$ exists.
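A quick way to see the role of linear independence is to compare the rank of a well-behaved design matrix with one whose columns are linearly dependent. The sketch below uses simulated data and hypothetical variable names; it also solves the system $(X^\top X)\hat\beta = X^\top Y$ directly for the full-rank case and reports the degrees of freedom $N - k$.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 50
x1 = rng.normal(size=n)
x2 = rng.normal(size=n)
y = 1.0 + 2.0 * x1 - 1.0 * x2 + rng.normal(scale=0.5, size=n)

# Full-rank design: constant, x1, x2.
X_ok = np.column_stack([np.ones(n), x1, x2])

# Rank-deficient design: the third column equals 3 * x1 - 2 * (constant),
# so it can be rebuilt from the other two columns.
X_bad = np.column_stack([np.ones(n), x1, 3.0 * x1 - 2.0])

rank_ok = np.linalg.matrix_rank(X_ok)
rank_bad = np.linalg.matrix_rank(X_bad)

# With full column rank, X'X is invertible and the solution is unique.
beta_hat = np.linalg.solve(X_ok.T @ X_ok, X_ok.T @ y)

# Degrees of freedom: observations minus estimated parameters.
dof = n - X_ok.shape[1]
```

For `X_bad` the rank is lower than the number of columns, so $X^\top X$ is singular and the same `solve` call would not identify a unique parameter vector.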

Underlying assumptions

By itself, a regression is simply a calculation using the data. In order to interpret the output of regression as a meaningful statistical quantity that measures real-world relationships, researchers often rely on a number of classical assumptions. These assumptions often include:

* The sample is representative of the population at large.

* The independent variables are measured with no error.

* Deviations from the model have an expected value of zero, conditional on covariates: $E(e_i \mid X_i) = 0$.

* The variance of the residuals $e_i$ is constant across observations (homoscedasticity).

* The residuals $e_i$ are uncorrelated with one another. Mathematically, the variance–covariance matrix of the errors is diagonal.

A handful of conditions are sufficient for the least-squares estimator to possess desirable properties: in particular, the Gauss–Markov assumptions imply that the parameter estimates will be unbiased, consistent, and efficient in the class of linear unbiased estimators. Practitioners have developed a variety of methods to maintain some or all of these desirable properties in real-world settings, because these classical assumptions are unlikely to hold exactly. For example, modeling errors-in-variables can lead to reasonable estimates when independent variables are measured with errors. Heteroscedasticity-consistent standard errors allow the variance of $e_i$ to change across values of $X_i$. Correlated errors that exist within subsets of the data or follow specific patterns can be handled using clustered standard errors, geographic weighted regression, or Newey–West standard errors, among other techniques. When rows of data correspond to locations in space, the choice of how to model $e_i$ within geographic units can have important consequences. The subfield of econometrics is largely focused on developing techniques that allow researchers to make reasonable real-world conclusions in real-world settings, where classical assumptions do not hold exactly.
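As an illustration of heteroscedasticity-consistent standard errors, the following sketch computes both the classical standard errors and the simplest robust variant, usually called HC0 or White standard errors. The function name `ols_standard_errors` and the simulated data (error spread growing with the square of the regressor) are assumptions made for this example, not part of any particular library.

```python
import numpy as np

def ols_standard_errors(X, y):
    """Return OLS estimates with classical and HC0 (White) standard errors."""
    n, k = X.shape
    xtx_inv = np.linalg.inv(X.T @ X)
    beta_hat = xtx_inv @ X.T @ y
    resid = y - X @ beta_hat

    # Classical: a single error variance shared by all observations.
    sigma2 = resid @ resid / (n - k)
    se_classical = np.sqrt(np.diag(sigma2 * xtx_inv))

    # HC0: each observation contributes its own squared residual,
    # giving the "sandwich" (X'X)^-1 X' diag(resid^2) X (X'X)^-1.
    meat = X.T @ (X * resid[:, None] ** 2)
    se_hc0 = np.sqrt(np.diag(xtx_inv @ meat @ xtx_inv))
    return beta_hat, se_classical, se_hc0

# Hypothetical heteroscedastic data: noise scale grows with x squared.
rng = np.random.default_rng(42)
n = 5000
x = rng.uniform(0.0, 10.0, size=n)
y = 1.0 + 2.0 * x + rng.normal(size=n) * 0.2 * x ** 2
X = np.column_stack([np.ones(n), x])

beta_hat, se_classical, se_hc0 = ols_standard_errors(X, y)
```

In a design like this one, where the error variance rises with the regressor, the classical formula tends to understate the uncertainty of the slope, and the HC0 value for the slope is expected to come out larger. Small-sample refinements (HC1 to HC3) exist but are not shown.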

Linear regression

In linear regression, the model specification is that the dependent variable, $y_i$, is a linear combination of the ''parameters'' (but need not be linear in the ''independent variables''). For example, in simple linear regression for modeling $n$ data points there is one independent variable: $x_i$, and two parameters, $\beta_0$ and $\beta_1$:

: straight line: $y_i = \beta_0 + \beta_1 x_i + \varepsilon_i, \quad i = 1, \dots, n.$

In multiple linear regression, there are several independent variables or functions of independent variables.

Adding a term in $x_i^2$ to the preceding regression gives:

: parabola: $y_i = \beta_0 + \beta_1 x_i + \beta_2 x_i^2 + \varepsilon_i, \quad i = 1, \dots, n.$

This is still linear regression; although the expression on the right hand side is quadratic in the independent variable $x_i$, it is linear in the parameters $\beta_0$, $\beta_1$ and $\beta_2$.

In both cases, $\varepsilon_i$ is an error term and the subscript $i$ indexes a particular observation.
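That the parabola is still a linear regression can be checked directly: the squared regressor is simply treated as one more column of the design matrix, and the same linear least squares routine applies. The sketch below uses made-up, noise-free data so that the fitted parameters can be compared with the values used to generate them.

```python
import numpy as np

# Hypothetical noise-free data from y = 2 - x + 0.5 * x^2.
x = np.linspace(-3.0, 3.0, 25)
y = 2.0 - 1.0 * x + 0.5 * x ** 2

# The model is linear in (beta_0, beta_1, beta_2): columns are 1, x, x^2.
X = np.column_stack([np.ones_like(x), x, x ** 2])

beta_hat, *_ = np.linalg.lstsq(X, y, rcond=None)
```

Because the data contain no noise, `beta_hat` recovers the generating values 2, -1 and 0.5 up to floating-point rounding.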

Returning our attention to the straight line case: Given a random sample from the population, we estimate the population parameters and obtain the sample linear regression model:

: $\hat y_i = \hat\beta_0 + \hat\beta_1 x_i.$

The residual, $\hat\varepsilon_i = y_i - \hat y_i$, is the difference between the true value of the dependent variable, $y_i$, and the value of the dependent variable predicted by the model, $\hat y_i$. One method of estimation is ordinary least squares. This method obtains parameter estimates that minimize the sum of squared residuals, SSR:

: $SSR = \sum_{i=1}^n \hat\varepsilon_i^2.$

Minimization of this function results in a set of normal equations, a set of simultaneous linear equations in the parameters, which are solved to yield the parameter estimators, $\hat\beta_0, \hat\beta_1$.

In the case of simple regression, the formulas for the least squares estimates are

: $\hat\beta_1 = \frac{\sum_i (x_i - \bar x)(y_i - \bar y)}{\sum_i (x_i - \bar x)^2}$

: $\hat\beta_0 = \bar y - \hat\beta_1 \bar x$

where $\bar x$ is the mean (average) of the $x$ values and $\bar y$ is the mean of the $y$ values.
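The closed-form estimates for simple regression translate almost line by line into code. The helper `simple_ols` and the five data points are hypothetical and chosen only so the arithmetic is easy to follow by hand.

```python
import numpy as np

def simple_ols(x, y):
    """Least squares intercept and slope for one regressor."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    x_bar, y_bar = x.mean(), y.mean()
    # Slope: co-variation of x and y divided by the variation of x.
    beta1 = np.sum((x - x_bar) * (y - y_bar)) / np.sum((x - x_bar) ** 2)
    # Intercept: forces the fitted line through the point of means.
    beta0 = y_bar - beta1 * x_bar
    return beta0, beta1

x = [1, 2, 3, 4, 5]
y = [2.2, 4.1, 6.2, 7.9, 10.1]
beta0, beta1 = simple_ols(x, y)
```

For these numbers the means are 3 and 6.1, the numerator of the slope is 19.6 and the denominator is 10, so the slope is 1.96 and the intercept is 6.1 - 1.96 × 3 = 0.22.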

Under the assumption that the population error term has a constant variance, the estimate of that variance is given by:

: $\hat\sigma_\varepsilon^2 = \frac{SSR}{n - 2}$

This is called the mean square error (MSE) of the regression. The denominator is the sample size reduced by the number of model parameters estimated from the same data, $(n - p)$ for $p$ regressors or $(n - p - 1)$ if an intercept is used. In this case, $p = 1$ so the denominator is $n - 2$.

The standard errors of the parameter estimates are given by

: $\hat\sigma_{\beta_1} = \hat\sigma_\varepsilon \sqrt{\frac{1}{\sum_i (x_i - \bar x)^2}}$

: $\hat\sigma_{\beta_0} = \hat\sigma_\varepsilon \sqrt{\frac{1}{n} + \frac{\bar x^2}{\sum_i (x_i - \bar x)^2}} = \hat\sigma_{\beta_1} \sqrt{\frac{\sum_i x_i^2}{n}}$

where $\hat\sigma_\varepsilon$ is the square root of the estimated error variance.

Under the further assumption that the population error term is normally distributed, the researcher can use these estimated standard errors to create confidence intervals and conduct hypothesis tests about the population parameters.
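The variance estimate and the two standard errors can be computed together, as in the sketch below. The function `simple_ols_inference` and the data are hypothetical; the code follows the simple-regression case with an intercept, so the residual sum of squares is divided by $n - 2$.

```python
import numpy as np

def simple_ols_inference(x, y):
    """Estimates, mean square error and standard errors for y = b0 + b1 * x."""
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    n = x.size
    x_bar = x.mean()
    sxx = np.sum((x - x_bar) ** 2)

    beta1 = np.sum((x - x_bar) * (y - y.mean())) / sxx
    beta0 = y.mean() - beta1 * x_bar

    resid = y - (beta0 + beta1 * x)
    mse = np.sum(resid ** 2) / (n - 2)      # SSR / (n - 2)
    sigma = np.sqrt(mse)

    se_beta1 = sigma * np.sqrt(1.0 / sxx)
    se_beta0 = sigma * np.sqrt(1.0 / n + x_bar ** 2 / sxx)
    return beta0, beta1, mse, se_beta0, se_beta1

x = [1, 2, 3, 4, 5]
y = [2.2, 4.1, 6.2, 7.9, 10.1]
beta0, beta1, mse, se_beta0, se_beta1 = simple_ols_inference(x, y)
```

Confidence intervals would then take the form of an estimate plus or minus a Student's ''t'' quantile (with $n - 2$ degrees of freedom) times the corresponding standard error; the quantile lookup is left out here because it needs a statistics library beyond NumPy.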

General linear model

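In the more general multiple regression model, there are $p$ independent variables:

: $y_i = \beta_1 x_{i1} + \beta_2 x_{i2} + \cdots + \beta_p x_{ip} + \varepsilon_i,$

where $x_{ij}$ is the $i$-th observation on the $j$-th independent variable. If the first independent variable takes the value 1 for all $i$, $x_{i1} = 1$, then $\beta_1$ is called the regression intercept.

The least squares parameter estimates are obtained from $p$ normal equations. The residual can be written as

: $\hat\varepsilon_i = y_i - \hat\beta_1 x_{i1} - \cdots - \hat\beta_p x_{ip}.$

The normal equations are

: $\sum_{i=1}^n \sum_{k=1}^p x_{ij} x_{ik} \hat\beta_k = \sum_{i=1}^n x_{ij} y_i, \quad j = 1, \dots, p.$

In matrix notation, the normal equations are written as

: $(X^\top X) \hat\beta = X^\top Y,$

where the $ij$ element of $X$ is $x_{ij}$, the $i$ element of the column vector $Y$ is $y_i$, and the $j$ element of $\hat\beta$ is $\hat\beta_j$. Thus $X$ is $n \times p$, $Y$ is $n \times 1$, and $\hat\beta$ is $p \times 1$. The solution is

: $\hat\beta = (X^\top X)^{-1} X^\top Y.$

Diagnostics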

Once a regression model has been constructed, it may be important to confirm the goodness of fit of the model and the statistical significance of the estimated parameters. Commonly used checks of goodness of fit include the R-squared, analyses of the pattern of residuals and hypothesis testing. Statistical significance can be checked by an F-test of the overall fit, followed by t-tests of individual parameters.
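The quantities behind these checks can be computed from the residuals of a fitted model. The following sketch assumes a design matrix whose first column is a constant; the function name `fit_diagnostics` and the data are hypothetical, and p-values are omitted because they require the ''t'' and ''F'' distribution functions from a statistics library.

```python
import numpy as np

def fit_diagnostics(X, y):
    """R-squared, t-statistics and overall F-statistic for an OLS fit.

    Assumes the first column of X is a constant (intercept) column.
    """
    n, k = X.shape
    xtx_inv = np.linalg.inv(X.T @ X)
    beta_hat = xtx_inv @ X.T @ y
    resid = y - X @ beta_hat

    ssr = resid @ resid                     # sum of squared residuals
    sst = np.sum((y - y.mean()) ** 2)       # total sum of squares
    r_squared = 1.0 - ssr / sst

    sigma2 = ssr / (n - k)
    se = np.sqrt(np.diag(sigma2 * xtx_inv))
    t_stats = beta_hat / se                 # tests of beta_j = 0

    # Overall F: explained variation per slope over residual variance.
    f_stat = ((sst - ssr) / (k - 1)) / sigma2
    return r_squared, t_stats, f_stat

x = np.arange(1.0, 9.0)
y = np.array([1.1, 1.9, 3.2, 3.8, 5.1, 6.2, 6.8, 8.1])
X = np.column_stack([np.ones_like(x), x])
r_squared, t_stats, f_stat = fit_diagnostics(X, y)
```

With a single regressor plus an intercept, the overall F-statistic equals the square of the slope's ''t''-statistic, which gives a convenient sanity check on the implementation.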

Interpretations of these diagnostic tests rest heavily on the model's assumptions. Although examination of the residuals can be used to invalidate a model, the results of a t-test or F-test are sometimes more difficult to interpret if the model's assumptions are violated. For example, if the error term does not have a normal distribution, in small samples the estimated parameters will not follow normal distributions, which complicates inference. With relatively large samples, however, a central limit theorem can be invoked such that hypothesis testing may proceed using asymptotic approximations.

Limited dependent variables

Limited dependent variables, which are response variables that are categorical variables or are variables constrained to fall only in a certain range, often arise in econometrics.

The response variable may be non-continuous ("limited" to lie on some subset of the real line). For binary (zero or one) variables, if analysis proceeds with least-squares linear regression, the model is called the linear probability model. Nonlinear models for binary dependent variables include the probit and logit models. The multivariate probit model is a standard method of estimating a joint relationship between several binary dependent variables and some independent variables. For categorical variables with more than two values there is the multinomial logit. For ordinal variables with more than two values, there are the ordered logit and ordered probit models. Censored regression models may be used when the dependent variable is only sometimes observed, and Heckman correction type models may be used when the sample is not randomly selected from the population of interest. An alternative to such procedures is linear regression based on polychoric correlation (or polyserial correlations) between the categorical variables. Such procedures differ in the assumptions made about the distribution of the variables in the population. If the variable is positive with low values and represents the repetition of the occurrence of an event, then count models like the Poisson regression or the negative binomial model may be used.
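The contrast between the linear probability model and the logit model for a binary response can be sketched in a few lines. This is an illustrative sketch only, not a reference implementation: the data are simulated, the function names `fit_linear_probability` and `fit_logit` are hypothetical, and the logit fit uses a plain Newton–Raphson iteration with no safeguards against perfectly separated data.

```python
import numpy as np

def fit_linear_probability(x, y):
    """Least-squares fit of a 0/1 response (the linear probability model)."""
    A = np.column_stack([np.ones(len(x)), x])
    beta, *_ = np.linalg.lstsq(A, y, rcond=None)
    return beta

def fit_logit(x, y, n_iter=25):
    """Logit model fitted by Newton-Raphson on the log-likelihood."""
    A = np.column_stack([np.ones(len(x)), x])
    beta = np.zeros(A.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-(A @ beta)))   # fitted probabilities
        w = p * (1.0 - p)                        # Bernoulli variances
        grad = A.T @ (y - p)                     # score vector
        hess = A.T @ (A * w[:, None])            # observed information
        beta = beta + np.linalg.solve(hess, grad)
    return beta

# Simulated data (assumption): true log-odds are 0.5 + 1.5 * x.
rng = np.random.default_rng(0)
x = rng.uniform(-4.0, 4.0, size=500)
p_true = 1.0 / (1.0 + np.exp(-(0.5 + 1.5 * x)))
y = (rng.uniform(size=500) < p_true).astype(float)

beta_lpm = fit_linear_probability(x, y)
beta_logit = fit_logit(x, y)

# Compare predicted probabilities at the edges and centre of the data.
x_new = np.array([-4.0, 0.0, 4.0])
p_lpm = beta_lpm[0] + beta_lpm[1] * x_new
p_logit = 1.0 / (1.0 + np.exp(-(beta_logit[0] + beta_logit[1] * x_new)))
print("LPM predictions:  ", p_lpm)
print("Logit predictions:", p_logit)
```

In this setup the linear probability model can return fitted "probabilities" below 0 or above 1 near the edges of the data, whereas the logit predictions stay inside the unit interval by construction.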

Nonlinear regression

When the model function is not linear in the parameters, the sum of squares must be minimized by an iterative procedure. This introduces many complications, which are summarized in Differences between linear and non-linear least squares.

Interpolation and extrapolation

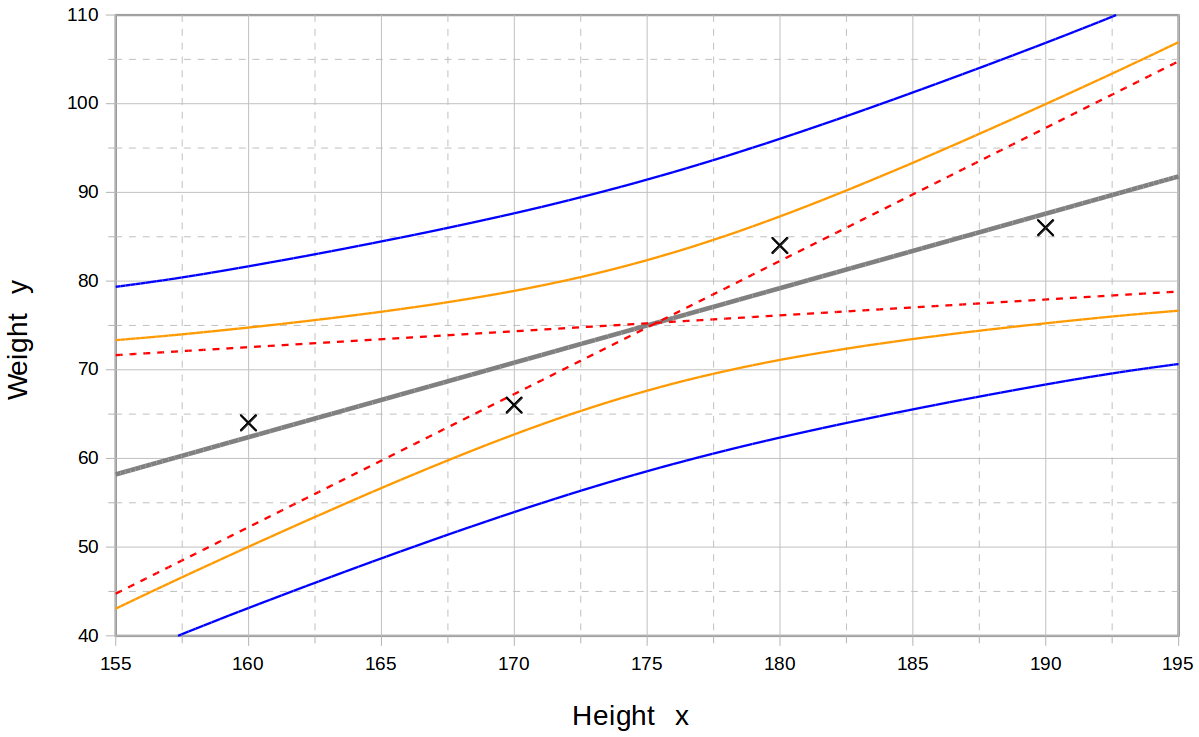

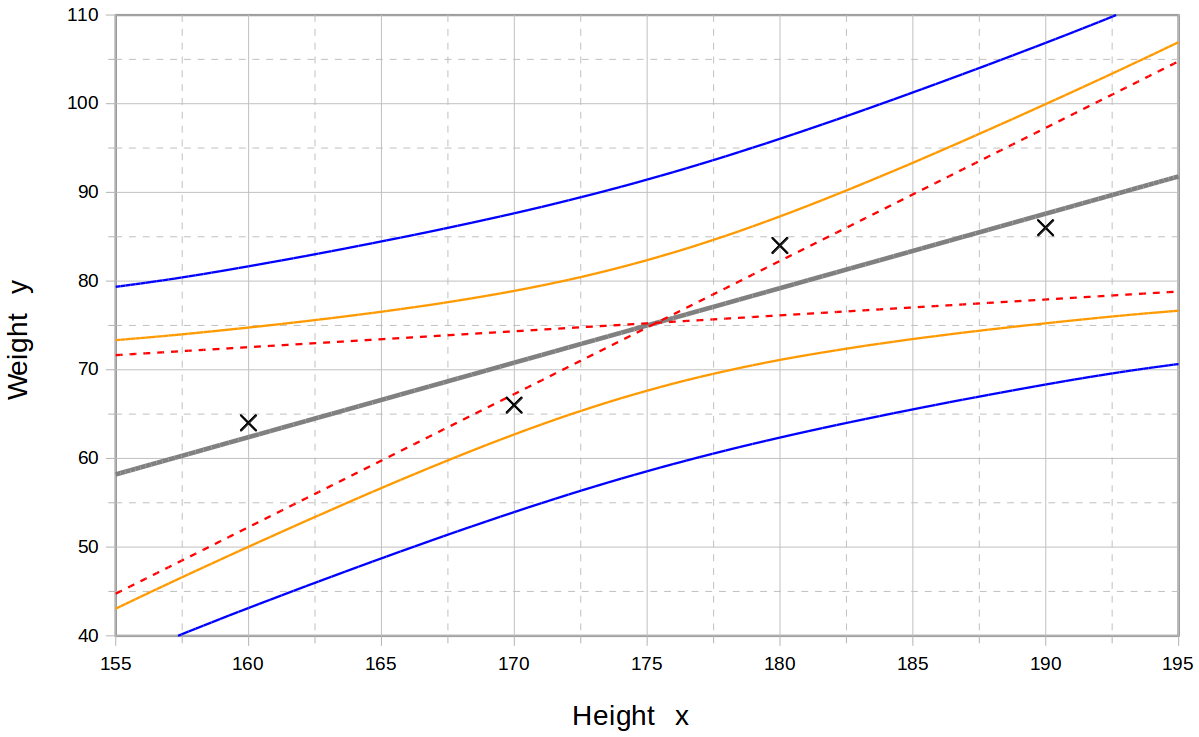

Regression models predict a value of the ''Y'' variable given known values of the ''X'' variables. Prediction ''within'' the range of values in the dataset used for model-fitting is known informally as interpolation. Prediction ''outside'' this range of the data is known as extrapolation. Performing extrapolation relies strongly on the regression assumptions. The further the extrapolation goes outside the data, the more room there is for the model to fail due to differences between the assumptions and the sample data or the true values.

It is generally advised that when performing extrapolation, one should accompany the estimated value of the dependent variable with a prediction interval that represents the uncertainty. Such intervals tend to expand rapidly as the values of the independent variable(s) move outside the range covered by the observed data.

For these and other reasons, some argue that extrapolation may be unwise.

However, this does not cover the full set of modeling errors that may be made: in particular, the assumption of a particular form for the relation between ''Y'' and ''X''. A properly conducted regression analysis will include an assessment of how well the assumed form is matched by the observed data, but it can only do so within the range of values of the independent variables actually available. This means that any extrapolation is particularly reliant on the assumptions being made about the structural form of the regression relationship. Best-practice advice here is that a linear-in-variables and linear-in-parameters relationship should not be chosen simply for computational convenience, but that all available knowledge should be deployed in constructing a regression model. If this knowledge includes the fact that the dependent variable cannot go outside a certain range of values, this can be made use of in selecting the model – even if the observed dataset has no values particularly near such bounds. The implications of this step of choosing an appropriate functional form for the regression can be great when extrapolation is considered. At a minimum, it can ensure that any extrapolation arising from a fitted model is "realistic" (or in accord with what is known).
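The widening of prediction intervals outside the observed range can be illustrated for simple linear regression. The sketch below uses simulated data and a hypothetical helper, `prediction_se`. It computes only the standard error of a new observation at a point x0, using s·sqrt(1 + 1/n + (x0 − x̄)²/Sxx); the half-width of a prediction interval is this value multiplied by a Student's ''t'' quantile, which is omitted here to avoid a further dependency. As the text notes, this captures sampling uncertainty only, not the risk that the assumed functional form is wrong.

```python
import numpy as np

def prediction_se(x, y, x0):
    """Standard error of a new observation at x0 under simple linear regression."""
    n = len(x)
    x_bar = x.mean()
    sxx = np.sum((x - x_bar) ** 2)
    slope = np.sum((x - x_bar) * (y - y.mean())) / sxx
    intercept = y.mean() - slope * x_bar
    resid = y - (intercept + slope * x)
    s2 = np.sum(resid ** 2) / (n - 2)            # residual variance estimate
    return np.sqrt(s2 * (1.0 + 1.0 / n + (x0 - x_bar) ** 2 / sxx))

# Simulated data (assumption): observations cover x in [0, 10] only.
rng = np.random.default_rng(1)
x = np.linspace(0.0, 10.0, 30)
y = 2.0 + 0.5 * x + rng.normal(scale=1.0, size=x.size)

# One point at the centre of the data, one at its edge, two well outside it.
x0 = np.array([5.0, 10.0, 20.0, 40.0])
se = prediction_se(x, y, x0)
for point, value in zip(x0, se):
    print(f"x0 = {point:5.1f}  prediction standard error = {value:.3f}")
```

The standard error is smallest at the mean of the observed ''X'' values and grows with the distance of x0 from that mean.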

Power and sample size calculations

There are no generally agreed methods for relating the number of observations to the number of independent variables in the model. One method conjectured by Good and Hardin is $N = m^n$, where $N$ is the sample size, $n$ is the number of independent variables and $m$ is the number of observations needed to reach the desired precision if the model had only one independent variable. For example, a researcher is building a linear regression model using a dataset that contains 1000 patients ($N = 1000$). If the researcher decides that five observations are needed to precisely define a straight line ($m = 5$), then the maximum number of independent variables the model can support is 4, because

$$\frac{\log 1000}{\log 5} \approx 4.29.$$

Other methods

Although the parameters of a regression model are usually estimated using the method of least squares, other methods which have been used include:

* Bayesian methods, e.g. Bayesian linear regression

* Percentage regression, for situations where reducing ''percentage'' errors is deemed more appropriate

* Least absolute deviations, which is more robust in the presence of outliers, leading to quantile regression

* Nonparametric regression, which requires a large number of observations and is computationally intensive

* Scenario optimization, leading to interval predictor models

* Distance metric learning, in which a meaningful distance metric is learned for a given input space
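The robustness of least absolute deviations to outliers, mentioned in the list above, can be sketched as follows. This is one possible approximation rather than a standard implementation: the LAD fit is obtained by iteratively reweighted least squares with a small floor `eps` on the residuals, the data are simulated, and the names `fit_ols` and `fit_lad` are hypothetical.

```python
import numpy as np

def fit_ols(A, y):
    """Ordinary least-squares coefficients for design matrix A."""
    beta, *_ = np.linalg.lstsq(A, y, rcond=None)
    return beta

def fit_lad(A, y, n_iter=100, eps=1e-8):
    """Approximate least-absolute-deviations fit via iteratively reweighted least squares."""
    beta = fit_ols(A, y)                          # start from the OLS solution
    for _ in range(n_iter):
        r = np.abs(y - A @ beta)
        w = 1.0 / np.maximum(r, eps)              # weight 1/|r| turns r^2 into |r|
        sw = np.sqrt(w)
        beta, *_ = np.linalg.lstsq(A * sw[:, None], y * sw, rcond=None)
    return beta

# Simulated data (assumption): true line y = 1 + 2x, with the last five
# observations shifted upward by 40 to act as outliers.
rng = np.random.default_rng(2)
x = np.linspace(0.0, 10.0, 50)
y = 1.0 + 2.0 * x + rng.normal(scale=0.5, size=x.size)
y[-5:] += 40.0

A = np.column_stack([np.ones_like(x), x])
beta_ols = fit_ols(A, y)
beta_lad = fit_lad(A, y)
print("OLS intercept, slope:", beta_ols)
print("LAD intercept, slope:", beta_lad)
```

With these simulated outliers the least-squares slope is pulled well away from 2, while the LAD slope stays close to it.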

Software

All major statistical software packages perform least squares regression analysis and inference. Simple linear regression and multiple regression using least squares can be done in some spreadsheet applications and on some calculators. While many statistical software packages can perform various types of nonparametric and robust regression, these methods are less standardized. Different software packages implement different methods, and a method with a given name may be implemented differently in different packages. Specialized regression software has been developed for use in fields such as survey analysis and neuroimaging.

See also

* Anscombe's quartet

* Curve fitting

* Estimation theory

* Forecasting

* Fraction of variance unexplained

* Function approximation

* Generalized linear model

* Kriging (a linear least squares estimation algorithm)

* Local regression

* Modifiable areal unit problem

* Multivariate adaptive regression splines

* Multivariate normal distribution

* Pearson correlation coefficient

* Quasi-variance

* Prediction interval

* Regression validation

* Robust regression

* Segmented regression

* Signal processing

* Stepwise regression

* Taxicab geometry

* Trend estimation

References

Further reading

* William H. Kruskal and Judith M. Tanur, ed. (1978), "Linear Hypotheses," ''International Encyclopedia of Statistics''. Free Press, v. 1,
:Evan J. Williams, "I. Regression," pp. 523–41.
:Julian C. Stanley, "II. Analysis of Variance," pp. 541–554.

* Lindley, D.V. (1987). "Regression and correlation analysis," ''New Palgrave: A Dictionary of Economics'', v. 4, pp. 120–23.

* Birkes, David and Dodge, Y., ''Alternative Methods of Regression''.

* Chatfield, C. (1993). "Calculating Interval Forecasts," ''Journal of Business and Economic Statistics,'' 11. pp. 121–135.

* Fox, J. (1997). ''Applied Regression Analysis, Linear Models and Related Methods.'' Sage.

* Hardle, W., ''Applied Nonparametric Regression'' (1990).

* A. Sen, M. Srivastava, ''Regression Analysis: Theory, Methods, and Applications'', Springer-Verlag, Berlin, 2011 (4th printing).

* T. Strutz: ''Data Fitting and Uncertainty (A practical introduction to weighted least squares and beyond)''. Vieweg+Teubner.

* Stulp, Freek, and Olivier Sigaud. ''Many Regression Algorithms, One Unified Model: A Review.'' Neural Networks, vol. 69, Sept. 2015, pp. 60–79. https://doi.org/10.1016/j.neunet.2015.05.005.

* Malakooti, B. (2013). ''Operations and Production Systems with Multiple Objectives''. John Wiley & Sons.

External links

* – basic history and references

* What is multiple regression used for? – Multiple regression

* – how linear regression mistakes can appear when Y-range is much smaller than X-range