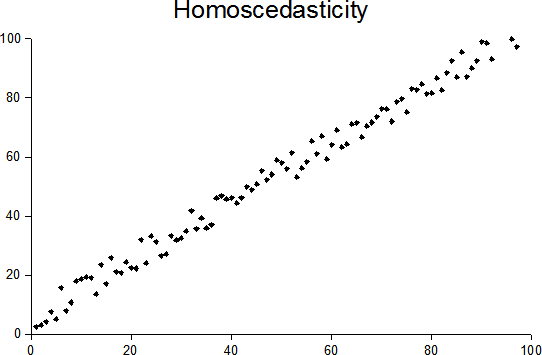

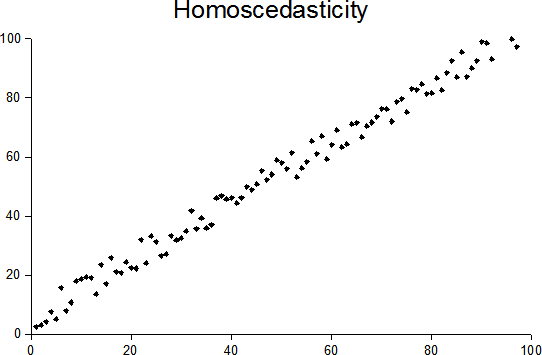

In statistics, a sequence (or a vector) of random variables is homoscedastic if all its random variables have the same finite variance. This is also known as homogeneity of variance. The complementary notion is called heteroscedasticity. The spellings ''homoskedasticity'' and ''heteroskedasticity'' are also frequently used.

Assuming a variable is homoscedastic when in reality it is heteroscedastic results in unbiased but inefficient point estimates and in biased estimates of standard errors, and may result in overestimating the goodness of fit as measured by the Pearson coefficient.

The existence of heteroscedasticity is a major concern in regression analysis and the analysis of variance, as it invalidates statistical tests of significance that assume that the modelling errors all have the same variance. While the ordinary least squares estimator is still unbiased in the presence of heteroscedasticity, it is inefficient and generalized least squares should be used instead.

Because heteroscedasticity concerns expectations of the second moment of the errors, its presence is referred to as misspecification of the second order.

The econometrician Robert Engle was awarded the 2003 Nobel Memorial Prize for Economics for his studies on regression analysis in the presence of heteroscedasticity, which led to his formulation of the autoregressive conditional heteroscedasticity (ARCH) modeling technique.

Definition

Consider the linear regression equation $y_i = x_i \beta + \varepsilon_i$, $i = 1, \ldots, N$, where the dependent random variable $y_i$ equals the deterministic variable $x_i$ times coefficient $\beta$ plus a random disturbance term $\varepsilon_i$ that has mean zero. The disturbances are homoscedastic if the variance of $\varepsilon_i$ is a constant $\sigma^2$; otherwise, they are heteroscedastic. In particular, the disturbances are heteroscedastic if the variance of $\varepsilon_i$ depends on $i$ or on the value of $x_i$. One way they might be heteroscedastic is if $\sigma_i^2 = x_i \sigma^2$ (an example of a scedastic function), so the variance is proportional to the value of $x$.
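As a rough illustration of this definition, the sketch below draws mean-zero disturbances once with constant variance and once with variance proportional to x. The sample size, the range of x, the value of sigma and the helper names are assumptions chosen for the example; they do not come from the text.

```python
import random
import statistics

def simulate_disturbances(xs, sigma, heteroscedastic, rng):
    """Draw one mean-zero disturbance per x value.

    Homoscedastic case:   Var(eps_i) = sigma**2 for every i.
    Heteroscedastic case: Var(eps_i) = x_i * sigma**2 (illustrative choice).
    """
    eps = []
    for x in xs:
        sd = sigma * x ** 0.5 if heteroscedastic else sigma
        eps.append(rng.gauss(0.0, sd))
    return eps

def spread_ratio(eps):
    """Variance of the disturbances at large x divided by that at small x."""
    half = len(eps) // 2
    return statistics.pvariance(eps[half:]) / statistics.pvariance(eps[:half])

rng = random.Random(0)                          # fixed seed for repeatability
xs = [1 + 9 * i / 1999 for i in range(2000)]    # x runs from 1 to 10

homo = simulate_disturbances(xs, 1.0, False, rng)
hetero = simulate_disturbances(xs, 1.0, True, rng)

print("homoscedastic spread ratio:  ", round(spread_ratio(homo), 2))
print("heteroscedastic spread ratio:", round(spread_ratio(hetero), 2))
```

A ratio near 1 is what constant variance looks like; a ratio well above 1 indicates that the spread grows with x.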

More generally, if the variance-covariance matrix of the disturbances across observations has a nonconstant diagonal, the disturbance is heteroscedastic. Consider four covariance matrices, A, B, C and D, for the case of just three observations across time. The disturbance in matrix A is homoscedastic; this is the simple case where OLS is the best linear unbiased estimator. The disturbances in matrices B and C are heteroscedastic. In matrix B, the variance is time-varying, increasing steadily across time; in matrix C, the variance depends on the value of the explanatory variable. The disturbance in matrix D is homoscedastic because the diagonal variances are constant, even though the off-diagonal covariances are non-zero and ordinary least squares is inefficient for a different reason: serial correlation.
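One set of matrices consistent with this description is sketched below. It is an illustrative assumption: the symbols $\sigma^2$ (a common scale), $x_1, x_2, x_3$ (values of the explanatory variable) and $\rho$ (a correlation parameter) are placeholders chosen for the example.

```latex
A = \sigma^2 \begin{bmatrix} 1 & 0 & 0 \\ 0 & 1 & 0 \\ 0 & 0 & 1 \end{bmatrix}, \quad
B = \sigma^2 \begin{bmatrix} 1 & 0 & 0 \\ 0 & 2 & 0 \\ 0 & 0 & 3 \end{bmatrix}, \quad
C = \sigma^2 \begin{bmatrix} x_1 & 0 & 0 \\ 0 & x_2 & 0 \\ 0 & 0 & x_3 \end{bmatrix}, \quad
D = \sigma^2 \begin{bmatrix} 1 & \rho & \rho^2 \\ \rho & 1 & \rho \\ \rho^2 & \rho & 1 \end{bmatrix}
```

In A and D the diagonal is constant, so the disturbances are homoscedastic; in B and C the diagonal entries differ, so they are heteroscedastic.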

Examples

Heteroscedasticity often occurs when there is a large difference among the sizes of the observations.

* A classic example of heteroscedasticity is that of income versus expenditure on meals. As one's income increases, the variability of food consumption will increase. A poorer person will spend a rather constant amount by always eating inexpensive food; a wealthier person may occasionally buy inexpensive food and at other times eat expensive meals. Those with higher incomes display a greater variability of food consumption.
* Imagine you are watching a rocket take off nearby and measuring the distance it has travelled once each second. In the first couple of seconds your measurements may be accurate to the nearest centimeter, say. However, 5 minutes later as the rocket recedes into space, the accuracy of your measurements may only be good to 100 m, because of the increased distance, atmospheric distortion and a variety of other factors. The data you collect would exhibit heteroscedasticity.

Consequences of heteroscedasticity

One of the assumptions of the classical linear regression model is that there is no heteroscedasticity. Breaking this assumption means that the Gauss–Markov theorem does not apply, meaning that OLS estimators are not the Best Linear Unbiased Estimators (BLUE) and their variance is not the lowest among all linear unbiased estimators.

Heteroscedasticity does ''not'' cause ordinary least squares coefficient estimates to be biased, although it can cause ordinary least squares estimates of the variance (and, thus, standard errors) of the coefficients to be biased, possibly above or below the true population variance. Thus, regression analysis using heteroscedastic data will still provide an unbiased estimate for the relationship between the predictor variable and the outcome, but standard errors and therefore inferences obtained from data analysis are suspect. Biased standard errors lead to biased inference, so results of hypothesis tests are possibly wrong. For example, if OLS is performed on a heteroscedastic data set, yielding biased standard error estimation, a researcher might fail to reject a null hypothesis at a given significance level, when that null hypothesis was actually uncharacteristic of the actual population (making a type II error).
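A small Monte Carlo experiment can make this concrete. The sketch below repeatedly simulates a simple regression whose error standard deviation grows with x, then compares the average OLS slope with the true slope, and the average conventional standard error with the actual spread of the slope estimates. The design points, coefficients, error scale and number of replications are all assumptions chosen for illustration.

```python
import random
import statistics

def ols_slope_and_se(xs, ys):
    """Simple-regression OLS slope and its conventional standard error."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
    intercept = y_bar - slope * x_bar
    rss = sum((y - intercept - slope * x) ** 2 for x, y in zip(xs, ys))
    se = (rss / (n - 2) / sxx) ** 0.5   # formula assumes constant error variance
    return slope, se

rng = random.Random(1)                      # fixed seed for repeatability
xs = [0.25 * i for i in range(1, 41)]       # 40 fixed design points
true_intercept, true_slope = 1.0, 2.0       # hypothetical coefficients

slopes, conventional_ses = [], []
for _ in range(2000):
    # Error standard deviation 0.5 * x: the disturbances are heteroscedastic.
    ys = [true_intercept + true_slope * x + rng.gauss(0.0, 0.5 * x) for x in xs]
    slope, se = ols_slope_and_se(xs, ys)
    slopes.append(slope)
    conventional_ses.append(se)

mean_slope = statistics.fmean(slopes)
empirical_sd = statistics.stdev(slopes)
mean_conventional_se = statistics.fmean(conventional_ses)

print("average slope estimate:      ", round(mean_slope, 3))
print("spread of slope estimates:   ", round(empirical_sd, 3))
print("average conventional std err:", round(mean_conventional_se, 3))
```

In this particular design the conventional standard error tends to understate the true sampling variability of the slope, while the slope itself stays centred on its true value. Other designs can produce bias in the opposite direction.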

Under certain assumptions, the OLS estimator has a normal asymptotic distribution when properly normalized and centered (even when the data does not come from a normal distribution). This result is used to justify using a normal distribution, or a chi-squared distribution (depending on how the test statistic is calculated), when conducting a hypothesis test. This holds even under heteroscedasticity. More precisely, the OLS estimator in the presence of heteroscedasticity is asymptotically normal, when properly normalized and centered, with a variance-covariance matrix that differs from the case of homoscedasticity. In 1980, White proposed a consistent estimator for the variance-covariance matrix of the asymptotic distribution of the OLS estimator. This validates the use of hypothesis testing using OLS estimators and White's variance-covariance estimator under heteroscedasticity.
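For a regression with one explanatory variable and an intercept, the sandwich form of White's estimator reduces to a short formula for the slope. The sketch below computes it in its simplest variant (often labelled HC0) next to the conventional standard error. The function name and the simulated data are assumptions made for the example, and the code is a minimal illustration rather than a full matrix implementation.

```python
import math
import random

def ols_with_white_se(xs, ys):
    """Fit y = a + b*x by OLS.

    Returns the slope b, its conventional standard error, and its
    White (HC0) heteroscedasticity-consistent standard error.
    """
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
    intercept = y_bar - slope * x_bar
    residuals = [y - intercept - slope * x for x, y in zip(xs, ys)]

    # Conventional formula: one common error variance for all observations.
    conventional_var = sum(e * e for e in residuals) / (n - 2) / sxx
    # White (HC0) formula: each squared residual stands in for the error
    # variance of its own observation.
    white_var = sum((x - x_bar) ** 2 * e * e
                    for x, e in zip(xs, residuals)) / sxx ** 2
    return slope, math.sqrt(conventional_var), math.sqrt(white_var)

rng = random.Random(4)                              # fixed seed
xs = [10 * (i + 1) / 400 for i in range(400)]       # hypothetical design
ys = [1.0 + 2.0 * x + rng.gauss(0.0, 0.5 * x) for x in xs]

slope, conventional_se, white_se = ols_with_white_se(xs, ys)
print("slope:", round(slope, 3))
print("conventional std err:", round(conventional_se, 4))
print("White (HC0) std err: ", round(white_se, 4))
```

The coefficient estimate is the same in both cases; only the standard error attached to it changes.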

Heteroscedasticity is also a major practical issue encountered in ANOVA problems. The F test can still be used in some circumstances.

However, it has been said that students in econometrics should not overreact to heteroscedasticity. One author wrote, "unequal error variance is worth correcting only when the problem is severe." In addition, another word of caution was in the form, "heteroscedasticity has never been a reason to throw out an otherwise good model." With the advent of heteroscedasticity-consistent standard errors allowing for inference without specifying the conditional second moment of error term, testing conditional homoscedasticity is not as important as in the past.

For any non-linear model (for instance Logit and Probit models), however, heteroscedasticity has more severe consequences: the maximum likelihood estimates (MLE) of the parameters will be biased, as well as inconsistent (unless the likelihood function is modified to correctly take into account the precise form of heteroscedasticity). Yet, in the context of binary choice models (Logit or Probit), heteroscedasticity will only result in a positive scaling effect on the asymptotic mean of the misspecified MLE (i.e. the model that ignores heteroscedasticity). As a result, the predictions which are based on the misspecified MLE will remain correct. In addition, the misspecified Probit and Logit MLE will be asymptotically normally distributed which allows performing the usual significance tests (with the appropriate variance-covariance matrix). However, regarding the general hypothesis testing, as pointed out by Greene, “simply computing a robust covariance matrix for an otherwise inconsistent estimator does not give it redemption. Consequently, the virtue of a robust covariance matrix in this setting is unclear.”

Correcting for heteroscedasticity

There are five common corrections for heteroscedasticity. They are:

* View logarithmized data. Non-logarithmized series that are growing exponentially often appear to have increasing variability as the series rises over time. The variability in percentage terms may, however, be rather stable.
* Use a different specification for the model (different ''X'' variables, or perhaps non-linear transformations of the ''X'' variables).
* Apply a weighted least squares estimation method, in which OLS is applied to transformed or weighted values of ''X'' and ''Y''. The weights vary over observations, usually depending on the changing error variances. In one variation the weights are directly related to the magnitude of the dependent variable, and this corresponds to least squares percentage regression.
* Heteroscedasticity-consistent standard errors (HCSE), while still biased, improve upon OLS estimates. HCSE is a consistent estimator of standard errors in regression models with heteroscedasticity. This method corrects for heteroscedasticity without altering the values of the coefficients. This method may be superior to regular OLS because if heteroscedasticity is present it corrects for it; if the data is homoscedastic, however, the standard errors are equivalent to conventional standard errors estimated by OLS. Several modifications of the White method of computing heteroscedasticity-consistent standard errors have been proposed as corrections with superior finite sample properties.
* Use MINQUE or even the customary estimators (for independent samples with several observations each), whose efficiency losses are not substantial when the number of observations per sample is large, especially for a small number of independent samples.
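The weighted least squares correction in the list above can be sketched for a regression with one explanatory variable. The example assumes, purely for illustration, that the error variance is proportional to x, so each observation is weighted by 1/x; the function name and the simulated data are hypothetical.

```python
import random

def weighted_least_squares(xs, ys, weights):
    """Minimise sum of w_i * (y_i - a - b * x_i)**2; return (a, b)."""
    sw = sum(weights)
    x_bar = sum(w * x for w, x in zip(weights, xs)) / sw   # weighted means
    y_bar = sum(w * y for w, y in zip(weights, ys)) / sw
    sxx = sum(w * (x - x_bar) ** 2 for w, x in zip(weights, xs))
    sxy = sum(w * (x - x_bar) * (y - y_bar)
              for w, x, y in zip(weights, xs, ys))
    slope = sxy / sxx
    return y_bar - slope * x_bar, slope

rng = random.Random(3)                           # fixed seed
xs = [1 + 9 * i / 199 for i in range(200)]       # x runs from 1 to 10
# Assumed data-generating process: Var(error_i) = x_i.
ys = [1.0 + 2.0 * x + rng.gauss(0.0, x ** 0.5) for x in xs]

ols = weighted_least_squares(xs, ys, [1.0] * len(xs))       # equal weights = OLS
wls = weighted_least_squares(xs, ys, [1.0 / x for x in xs]) # weights = 1 / variance

print("OLS (intercept, slope):", tuple(round(v, 3) for v in ols))
print("WLS (intercept, slope):", tuple(round(v, 3) for v in wls))
```

Weighting by the inverse of the error variance gives noisy observations less influence. In practice the variances are usually unknown and the weights have to be estimated or assumed.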

Testing for heteroscedasticity

Residuals can be tested for homoscedasticity using the Breusch–Pagan test, which performs an auxiliary regression of the squared residuals on the independent variables. From this auxiliary regression, the explained sum of squares is retained, divided by two, and then becomes the test statistic for a chi-squared distribution with the degrees of freedom equal to the number of independent variables. The null hypothesis of this chi-squared test is homoscedasticity, and the alternative hypothesis would indicate heteroscedasticity. Since the Breusch–Pagan test is sensitive to departures from normality or small sample sizes, the Koenker–Bassett or 'generalized Breusch–Pagan' test is commonly used instead. From the auxiliary regression, it retains the R-squared value which is then multiplied by the sample size, and then becomes the test statistic for a chi-squared distribution (and uses the same degrees of freedom). Although it is not necessary for the Koenker–Bassett test, the Breusch–Pagan test requires that the squared residuals also be divided by the residual sum of squares divided by the sample size. Testing for groupwise heteroscedasticity can be done with the Goldfeld–Quandt test.
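The two statistics described in this section can be sketched for a regression with a single independent variable, so that both are compared with a chi-squared distribution with one degree of freedom. The function names and the simulated data are assumptions made for the example; the p-value uses the identity P(chi-squared with 1 df > t) = erfc(sqrt(t / 2)), which holds only for one degree of freedom.

```python
import math
import random

def fit_line(xs, ys):
    """OLS fit of y = a + b*x; returns (a, b)."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys)) / sxx
    return y_bar - slope * x_bar, slope

def heteroscedasticity_tests(xs, ys):
    """Return (Breusch-Pagan statistic, Koenker statistic, Koenker p-value)."""
    n = len(xs)
    a, b = fit_line(xs, ys)
    sq_resid = [(y - a - b * x) ** 2 for x, y in zip(xs, ys)]
    mean_sq = sum(sq_resid) / n                  # residual sum of squares / n

    # Auxiliary regression of the squared residuals on x.
    c, d = fit_line(xs, sq_resid)
    ess = sum((c + d * x - mean_sq) ** 2 for x in xs)   # explained sum of squares
    tss = sum((u - mean_sq) ** 2 for u in sq_resid)     # total sum of squares
    if tss == 0.0:                               # no variation in squared residuals
        return 0.0, 0.0, 1.0

    koenker = n * ess / tss                      # n times R-squared
    # Breusch-Pagan: squared residuals scaled by RSS/n first, then ESS / 2.
    breusch_pagan = ess / mean_sq ** 2 / 2
    p_value = math.erfc(math.sqrt(koenker / 2))  # chi-squared, 1 df
    return breusch_pagan, koenker, p_value

rng = random.Random(2)                               # fixed seed
xs = [10 * (i + 1) / 500 for i in range(500)]        # hypothetical design
ys_homo = [1 + 2 * x + rng.gauss(0.0, 2.0) for x in xs]
ys_hetero = [1 + 2 * x + rng.gauss(0.0, 0.5 * x) for x in xs]

result_homo = heteroscedasticity_tests(xs, ys_homo)
result_hetero = heteroscedasticity_tests(xs, ys_hetero)
print("constant variance: ", result_homo)
print("variance grows in x:", result_hetero)
```

A large statistic with a small p-value counts as evidence against the null hypothesis of homoscedasticity.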

List of heteroscedasticity tests

Although tests for heteroscedasticity between groups can formally be considered as a special case of testing within regression models, some tests have structures specific to this case.

Tests in regression

* Levene's test
* Goldfeld–Quandt test
* Park test
* Glejser test
* Brown–Forsythe test
* Harrison–McCabe test
* Breusch–Pagan test
* White test
* Cook–Weisberg test

Tests for grouped data

* F-test of equality of variances
* Cochran's C test
* Hartley's test
* Bartlett's test
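As one example from the grouped-data list, the statistic of Bartlett's test can be computed directly from the group sample variances. The sketch below uses the textbook form of the statistic, which is compared with a chi-squared distribution with k - 1 degrees of freedom for k groups; the function name and the toy data are assumptions, and no p-value is computed.

```python
import math
import statistics

def bartlett_statistic(groups):
    """Bartlett's statistic for equality of variances across groups."""
    k = len(groups)
    sizes = [len(g) for g in groups]
    n_total = sum(sizes)
    variances = [statistics.variance(g) for g in groups]   # unbiased sample variances

    # Pooled variance: group variances weighted by their degrees of freedom.
    pooled = sum((n - 1) * v for n, v in zip(sizes, variances)) / (n_total - k)

    numerator = (n_total - k) * math.log(pooled) - sum(
        (n - 1) * math.log(v) for n, v in zip(sizes, variances))
    correction = 1 + (sum(1 / (n - 1) for n in sizes)
                      - 1 / (n_total - k)) / (3 * (k - 1))
    return numerator / correction

equal_spread = [[1, 2, 3, 4], [11, 12, 13, 14]]     # same variance, shifted mean
unequal_spread = [[1, 2, 3, 4], [10, 20, 30, 40]]   # variance differs by 100x

print("equal spread:  ", round(bartlett_statistic(equal_spread), 3))
print("unequal spread:", round(bartlett_statistic(unequal_spread), 3))
```

The statistic is zero when all group variances coincide and grows as they diverge. Every group needs at least two observations and a non-zero variance.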

Generalisations

Homoscedastic distributions

Two or more normal distributions are both homoscedastic and lack serial correlation if they share the same diagonals in their covariance matrices and their non-diagonal entries are zero. Homoscedastic distributions are especially useful to derive statistical pattern recognition and machine learning algorithms. One popular example of an algorithm that assumes homoscedasticity is Fisher's linear discriminant analysis.

The concept of homoscedasticity can be applied to distributions on spheres.

Multivariate data

The study of homoscedasticity and heteroscedasticity has been generalized to the multivariate case, which deals with the covariances of vector observations instead of the variance of scalar observations. One version of this is to use covariance matrices as the multivariate measure of dispersion. Several authors have considered tests in this context, for both regression and grouped-data situations. Bartlett's test for heteroscedasticity between grouped data, used most commonly in the univariate case, has also been extended for the multivariate case, but a tractable solution only exists for 2 groups. Approximations exist for more than two groups, and they are called Box's M test.

See also

* Heterogeneity
* Spherical error

Further reading

Most statistics textbooks will include at least some material on homoscedasticity and heteroscedasticity.