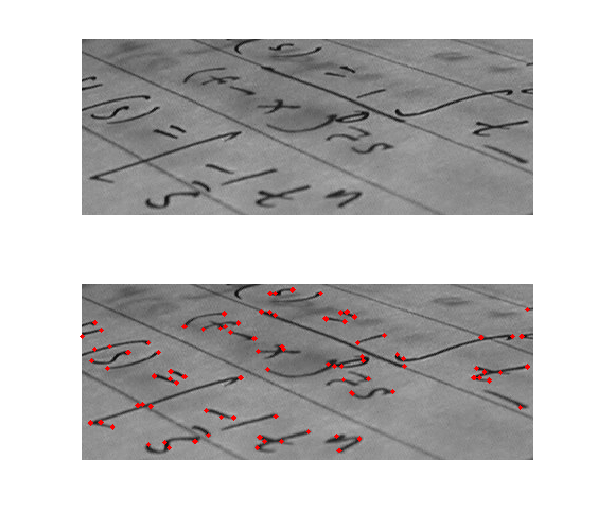

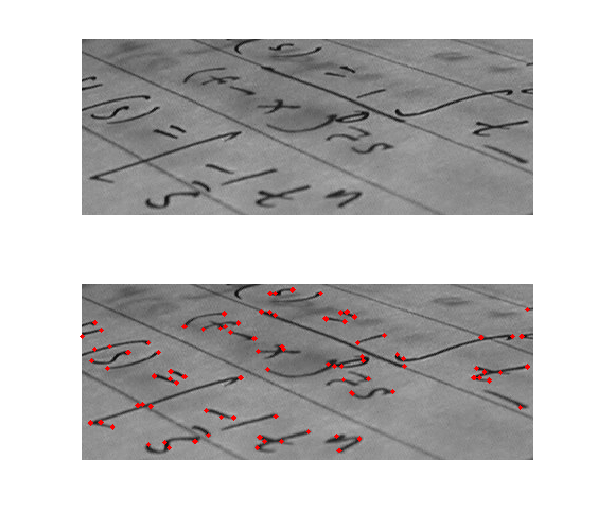

Corner detection is an approach used within

computer vision systems to extract certain kinds of

features and infer the contents of an image. Corner detection is frequently used in

motion detection,

image registration

Image registration is the process of transforming different sets of data into one coordinate system. Data may be multiple photographs, data from different sensors, times, depths, or viewpoints. It is used in computer vision, medical imaging, milit ...

,

video tracking

Video tracking is the process of locating a moving object (or multiple objects) over time using a camera. It has a variety of uses, some of which are: human-computer interaction, security and surveillance, video communication and compression, au ...

,

image mosaicing,

panorama stitching,

3D reconstruction and

object recognition

Object recognition – technology in the field of computer vision for finding and identifying objects in an image or video sequence. Humans recognize a multitude of objects in images with little effort, despite the fact that the image of the ...

. Corner detection overlaps with the topic of

interest point detection.

Formalization

A corner can be defined as the intersection of two edges. A corner can also be defined as a point for which there are two dominant and different edge directions in a local neighbourhood of the point.

An interest point is a point in an image which has a well-defined position and can be robustly detected. This means that an interest point can be a corner but it can also be, for example, an isolated point of local intensity maximum or minimum, line endings, or a point on a curve where the curvature is locally maximal.

In practice, most so-called corner detection methods detect interest points in general, and in fact, the term "corner" and "interest point" are used more or less interchangeably through the literature.

As a consequence, if only corners are to be detected it is necessary to do a local analysis of detected interest points to determine which of these are real corners. Examples of edge detection that can be used with post-processing to detect corners are the

Kirsch operator

The Kirsch operator or Kirsch compass kernel is a non-linear edge detector that finds the maximum edge strength in a few predetermined directions. It is named after the computer scientist Russell Kirsch

Russell A. Kirsch (June 20, 1929August 11 ...

and the Frei-Chen masking set.

"Corner", "interest point" and "feature" are used interchangeably in literature, confusing the issue. Specifically, there are several

blob detectors that can be referred to as "interest point operators", but which are sometimes erroneously referred to as "corner detectors". Moreover, there exists a notion of

ridge detection to capture the presence of elongated objects.

Corner detectors are not usually very robust and often require large redundancies introduced to prevent the effect of individual errors from dominating the recognition task.

One determination of the quality of a corner detector is its ability to detect the same corner in multiple similar images, under conditions of different lighting, translation, rotation and other transforms.

A simple approach to corner detection in images is using

correlation, but this gets very computationally expensive and suboptimal. An alternative approach used frequently is based on a method proposed by Harris and Stephens (below), which in turn is an improvement of a method by Moravec.

Moravec corner detection algorithm

This is one of the earliest corner detection algorithms and defines a ''corner'' to be a point with low self-similarity.

The algorithm tests each pixel in the image to see if a corner is present, by considering how similar a patch centered on the pixel is to nearby, largely overlapping patches. The similarity is measured by taking the sum of squared differences (SSD) between the corresponding pixels of two patches. A lower number indicates more similarity.

If the pixel is in a region of uniform intensity, then the nearby patches will look similar. If the pixel is on an edge, then nearby patches in a direction perpendicular to the edge will look quite different, but nearby patches in a direction parallel to the edge will result in only a small change. If the pixel is on a feature with variation in all directions, then none of the nearby patches will look similar.

The corner strength is defined as the smallest SSD between the patch and its neighbours (horizontal, vertical and on the two diagonals). The reason is that if this number is high, then the variation along all shifts is either equal to it or larger than it, so capturing that all nearby patches look different.

If the corner strength number is computed for all locations, that it is locally maximal for one location indicates that a feature of interest is present in it.

As pointed out by Moravec, one of the main problems with this operator is that it is not

isotropic: if an edge is present that is not in the direction of the neighbours (horizontal, vertical, or diagonal), then the smallest SSD will be large and the edge will be incorrectly chosen as an interest point.

The Harris & Stephens / Shi–Tomasi corner detection algorithms

Harris and Stephens

improved upon Moravec's corner detector by considering the differential of the corner score with respect to direction directly, instead of using shifted patches. (This corner score is often referred to as

autocorrelation, since the term is used in the paper in which this detector is described. However, the mathematics in the paper clearly indicate that the sum of squared differences is used.)

Without loss of generality, we will assume a grayscale 2-dimensional image is used. Let this image be given by

. Consider taking an image patch over the area

and shifting it by

. The weighted ''sum of squared differences'' (SSD) between these two patches, denoted

, is given by:

:

can be approximated by a

Taylor expansion

In mathematics, the Taylor series or Taylor expansion of a function is an infinite sum of terms that are expressed in terms of the function's derivatives at a single point. For most common functions, the function and the sum of its Taylor seri ...

. Let

and

be the partial

derivatives of

, such that

:

This produces the approximation

:

which can be written in matrix form:

:

where ''A'' is the

structure tensor

In mathematics, the structure tensor, also referred to as the second-moment matrix, is a matrix derived from the gradient of a function. It describes the distribution of the gradient in a specified neighborhood around a point and makes the inf ...

,

:

In words, we find the

covariance

In probability theory and statistics, covariance is a measure of the joint variability of two random variables. If the greater values of one variable mainly correspond with the greater values of the other variable, and the same holds for the ...

of the partial derivative of the image intensity

with respect to the

and

axes.

Angle brackets denote averaging (i.e. summation over

).

denotes the type of window that slides over the image. If a

Box filter is used the response will be

anisotropic, but if a

Gaussian

Carl Friedrich Gauss (1777–1855) is the eponym of all of the topics listed below.

There are over 100 topics all named after this German mathematician and scientist, all in the fields of mathematics, physics, and astronomy. The English eponymo ...

is used, then the response will be

isotropic.

A corner (or in general an interest point) is characterized by a large variation of

in all directions of the vector

. By analyzing the eigenvalues of

, this characterization can be expressed in the following way:

should have two "large" eigenvalues for an interest point.

Based on the magnitudes of the eigenvalues, the following inferences can be made based on this argument:

#If

and

then this pixel

has no features of interest.

#If

and

has some large positive value, then an edge is found.

#If

and

have large positive values, then a corner is found.

Harris and Stephens note that exact computation of the eigenvalues is computationally expensive, since it requires the computation of a

square root

In mathematics, a square root of a number is a number such that ; in other words, a number whose ''square'' (the result of multiplying the number by itself, or ⋅ ) is . For example, 4 and −4 are square roots of 16, because .

...

, and instead suggest the

following function

, where

is a tunable sensitivity parameter:

:

Therefore, the algorithm

does not have to actually compute the

eigenvalue decomposition

In linear algebra, eigendecomposition is the factorization of a matrix into a canonical form, whereby the matrix is represented in terms of its eigenvalues and eigenvectors. Only diagonalizable matrices can be factorized in this way. When the matr ...

of the matrix

and

instead it is sufficient to evaluate the

determinant

In mathematics, the determinant is a scalar value that is a function of the entries of a square matrix. It characterizes some properties of the matrix and the linear map represented by the matrix. In particular, the determinant is nonzero if a ...

and

trace

Trace may refer to:

Arts and entertainment Music

* ''Trace'' (Son Volt album), 1995

* ''Trace'' (Died Pretty album), 1993

* Trace (band), a Dutch progressive rock band

* ''The Trace'' (album)

Other uses in arts and entertainment

* ''Trace'' ...

of

to find

corners, or rather interest points in general.

The Shi–Tomasi

corner detector directly computes

because under certain assumptions, the corners are more stable for tracking. Note that this method is also sometimes referred to as the Kanade–Tomasi corner detector.

The value of

has to be determined empirically, and in the literature values in the range 0.04–0.15 have been reported as feasible.

One can avoid setting the parameter

by using Noble's

corner measure

which amounts to the

harmonic mean of the eigenvalues:

:

being a small positive constant.

''If''

can be interpreted as the

precision matrix

In statistics, the precision matrix or concentration matrix is the matrix inverse of the covariance matrix or dispersion matrix, P = \Sigma^.

For univariate distributions, the precision matrix degenerates into a scalar precision, defined as the ...

for the corner position, the

covariance matrix for the corner position is

, i.e.

:

The sum of the eigenvalues of

, which in that case can be interpreted as a

generalized variance The generalized variance is a scalar value which generalizes variance for multivariate random variables. It was introduced by Samuel S. Wilks.

The generalized variance is defined as the determinant of the covariance matrix

In probabilit ...

(or a "total uncertainty") of the corner position, is related to Noble's corner measure

by the following equation:

:

The Förstner corner detector

In some cases, one may wish to compute the location of a corner with subpixel accuracy. To achieve an approximate solution, the Förstner algorithm solves for the point closest to all the tangent lines of the corner in a given window and is a least-square solution. The algorithm relies on the fact that for an ideal corner, tangent lines cross at a single point.

The equation of a tangent line

at pixel

is given by:

:

where

is the gradient vector of the image

at

.

The point

closest to all the tangent lines in the window

is:

:

The distance from

to the tangent lines

is weighted by the gradient magnitude, thus giving more importance to tangents passing through pixels with strong gradients.

Solving for

:

:

are defined as:

:

Minimizing this equation can be done by differentiating with respect to

and setting it equal to 0:

:

Note that

is the

structure tensor

In mathematics, the structure tensor, also referred to as the second-moment matrix, is a matrix derived from the gradient of a function. It describes the distribution of the gradient in a specified neighborhood around a point and makes the inf ...

. For the equation to have a solution,

must be invertible, which implies that

must be full rank (rank 2). Thus, the solution

:

only exists where an actual corner exists in the window

.

A methodology for performing ''automatic scale selection'' for this corner localization method has been presented by Lindeberg

by minimizing the normalized residual

:

over scales. Thereby, the method has the ability to automatically adapt the scale levels for computing the image gradients to the noise level in the image data, by choosing coarser scale levels for noisy image data and finer scale levels for near ideal corner-like structures.

Notes:

*

can be viewed as a residual in the least-square solution computation: if

, then there was no error.

*this algorithm can be modified to compute centers of circular features by changing tangent lines to normal lines.

The multi-scale Harris operator

The computation of the second moment matrix (sometimes also referred to as the

structure tensor

In mathematics, the structure tensor, also referred to as the second-moment matrix, is a matrix derived from the gradient of a function. It describes the distribution of the gradient in a specified neighborhood around a point and makes the inf ...

)

in the Harris operator, requires the computation of

image derivative Image derivatives can be computed by using small convolution filters of size 2 × 2 or 3 × 3, such as the Discrete Laplace operator, Laplacian, Sobel operator, Sobel, Roberts cross, Roberts and Prewitt operator, Prewitt operato ...

s

in the image domain as well as the summation of non-linear combinations of these derivatives over local neighbourhoods. Since the computation of derivatives usually involves a stage of scale-space smoothing, an operational definition of the Harris operator requires two scale parameters: (i) a ''local scale'' for smoothing prior to the computation of

image derivative Image derivatives can be computed by using small convolution filters of size 2 × 2 or 3 × 3, such as the Discrete Laplace operator, Laplacian, Sobel operator, Sobel, Roberts cross, Roberts and Prewitt operator, Prewitt operato ...

s, and (ii) an ''integration scale'' for accumulating the non-linear operations on derivative operators into an integrated image descriptor.

With

denoting the original image intensity, let

denote the

scale space representation of

obtained by convolution with a Gaussian kernel

:

with local scale parameter

:

:

and let

and

denote the partial derivatives of

.

Moreover, introduce a Gaussian window function

with integration scale parameter

. Then, the

''multi-scale second-moment matrix''

Corner detection is an approach used within computer vision systems to extract certain kinds of features and infer the contents of an image. Corner detection is frequently used in motion detection,

Corner detection is an approach used within computer vision systems to extract certain kinds of features and infer the contents of an image. Corner detection is frequently used in motion detection,  In some cases, one may wish to compute the location of a corner with subpixel accuracy. To achieve an approximate solution, the Förstner algorithm solves for the point closest to all the tangent lines of the corner in a given window and is a least-square solution. The algorithm relies on the fact that for an ideal corner, tangent lines cross at a single point.

The equation of a tangent line at pixel is given by:

:

where is the gradient vector of the image at .

The point closest to all the tangent lines in the window is:

:

The distance from to the tangent lines is weighted by the gradient magnitude, thus giving more importance to tangents passing through pixels with strong gradients.

Solving for :

:

are defined as:

:

Minimizing this equation can be done by differentiating with respect to and setting it equal to 0:

:

Note that is the

In some cases, one may wish to compute the location of a corner with subpixel accuracy. To achieve an approximate solution, the Förstner algorithm solves for the point closest to all the tangent lines of the corner in a given window and is a least-square solution. The algorithm relies on the fact that for an ideal corner, tangent lines cross at a single point.

The equation of a tangent line at pixel is given by:

:

where is the gradient vector of the image at .

The point closest to all the tangent lines in the window is:

:

The distance from to the tangent lines is weighted by the gradient magnitude, thus giving more importance to tangents passing through pixels with strong gradients.

Solving for :

:

are defined as:

:

Minimizing this equation can be done by differentiating with respect to and setting it equal to 0:

:

Note that is the  Corner detection is an approach used within computer vision systems to extract certain kinds of features and infer the contents of an image. Corner detection is frequently used in motion detection,

Corner detection is an approach used within computer vision systems to extract certain kinds of features and infer the contents of an image. Corner detection is frequently used in motion detection,  In some cases, one may wish to compute the location of a corner with subpixel accuracy. To achieve an approximate solution, the Förstner algorithm solves for the point closest to all the tangent lines of the corner in a given window and is a least-square solution. The algorithm relies on the fact that for an ideal corner, tangent lines cross at a single point.

The equation of a tangent line at pixel is given by:

:

where is the gradient vector of the image at .

The point closest to all the tangent lines in the window is:

:

The distance from to the tangent lines is weighted by the gradient magnitude, thus giving more importance to tangents passing through pixels with strong gradients.

Solving for :

:

are defined as:

:

Minimizing this equation can be done by differentiating with respect to and setting it equal to 0:

:

Note that is the

In some cases, one may wish to compute the location of a corner with subpixel accuracy. To achieve an approximate solution, the Förstner algorithm solves for the point closest to all the tangent lines of the corner in a given window and is a least-square solution. The algorithm relies on the fact that for an ideal corner, tangent lines cross at a single point.

The equation of a tangent line at pixel is given by:

:

where is the gradient vector of the image at .

The point closest to all the tangent lines in the window is:

:

The distance from to the tangent lines is weighted by the gradient magnitude, thus giving more importance to tangents passing through pixels with strong gradients.

Solving for :

:

are defined as:

:

Minimizing this equation can be done by differentiating with respect to and setting it equal to 0:

:

Note that is the