Scaled Correlation

In statistics, scaled correlation is a form of correlation coefficient applicable to data that have a temporal component, such as time series. It is the average short-term correlation. If the signals have multiple components (slow and fast), the scaled coefficient of correlation can be computed only for the fast components of the signals, ignoring the contributions of the slow components (Nikolić D, Muresan RC, Feng W, Singer W (2012) Scaled correlation analysis: a better way to compute a cross-correlogram. ''European Journal of Neuroscience'', pp. 1–21, doi:10.1111/j.1460-9568.2011.07987.x, http://www.danko-nikolic.com/wp-content/uploads/2012/03/Scaled-correlation-analysis.pdf). This filtering-like operation has the advantage of not requiring assumptions about the sinusoidal nature of the signals. For example, in studies of brain signals, researchers are often interested in the high-frequency components (beta and gamma range; 25–80 Hz), and may not be interested in ...
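As a rough illustration of "average short-term correlation", the sketch below splits two equally long signals into consecutive non-overlapping segments, computes Pearson's coefficient in each, and averages the results. The function names, the `segment_length` parameter, and the choice to skip constant segments are assumptions made for this example, not details taken from the cited paper.

```python
from math import sqrt
from statistics import mean


def pearson(x, y):
    """Pearson correlation of two equal-length sequences, or None if one is constant."""
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return None  # correlation is undefined for a constant segment
    return sxy / sqrt(sxx * syy)


def scaled_correlation(x, y, segment_length):
    """Average the per-segment Pearson coefficients over non-overlapping segments."""
    if len(x) != len(y):
        raise ValueError("signals must have the same length")
    if segment_length < 2:
        raise ValueError("segment_length must be at least 2")
    coefficients = []
    for start in range(0, len(x) - segment_length + 1, segment_length):
        r = pearson(x[start:start + segment_length], y[start:start + segment_length])
        if r is not None:  # assumption: undefined segments are skipped
            coefficients.append(r)
    if not coefficients:
        raise ValueError("no segment with a defined correlation")
    return mean(coefficients)


# Fast components move together; slow components drift in opposite directions.
x = [0, 1, 0, 1, 10, 11, 10, 11]
y = [5, 6, 5, 6, 0, 1, 0, 1]
print(scaled_correlation(x, y, 4))  # short-term agreement
print(pearson(x, y))                # dominated by the slow drift
```

In this toy input the ordinary coefficient over the whole record is negative because of the opposing slow drifts, while the segment-wise average reflects only the fast co-variation.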

Statistics

Statistics (from German: ''Statistik'', "description of a state, a country") is the discipline that concerns the collection, organization, analysis, interpretation, and presentation of data. In applying statistics to a scientific, industrial, or social problem, it is conventional to begin with a statistical population or a statistical model to be studied. Populations can be diverse groups of people or objects such as "all people living in a country" or "every atom composing a crystal". Statistics deals with every aspect of data, including the planning of data collection in terms of the design of surveys and experiments (Dodge, Y. (2006) ''The Oxford Dictionary of Statistical Terms'', Oxford University Press). When census data cannot be collected, statisticians collect data by developing specific experiment designs and survey samples. Representative sampling assures that inferences and conclusions can reasonably extend from the sample to the population as a whole. An ex ...

Autocorrelation

Autocorrelation, sometimes known as serial correlation in the discrete time case, is the correlation of a signal with a delayed copy of itself as a function of delay. Informally, it is the similarity between observations of a random variable as a function of the time lag between them. The analysis of autocorrelation is a mathematical tool for finding repeating patterns, such as the presence of a periodic signal obscured by noise, or identifying the missing fundamental frequency in a signal implied by its harmonic frequencies. It is often used in signal processing for analyzing functions or series of values, such as time domain signals. Different fields of study define autocorrelation differently, and not all of these definitions are equivalent. In some fields, the term is used interchangeably with autocovariance. Unit root processes, trend-stationary processes, autoregressive processes, and moving average processes are specific forms of processes with autocorrelatio ...
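Because definitions differ between fields, the following is only one possible convention: a minimal sketch of the mean-removed sample autocorrelation, normalized by the lag-zero sum of squares. The function name `autocorrelation` and this normalization are assumptions chosen for the example.

```python
def autocorrelation(x, lag):
    """Sample autocorrelation of x at a non-negative lag (one common convention)."""
    n = len(x)
    if not 0 <= lag < n:
        raise ValueError("lag must satisfy 0 <= lag < len(x)")
    m = sum(x) / n
    total = sum((v - m) ** 2 for v in x)
    if total == 0:
        raise ValueError("autocorrelation is undefined for a constant signal")
    # Products of mean-removed samples that are `lag` steps apart.
    shifted = sum((x[t] - m) * (x[t + lag] - m) for t in range(n - lag))
    return shifted / total


# A signal with period 2 correlates positively with itself at even lags
# and negatively at odd lags.
signal = [1, -1] * 8
print([autocorrelation(signal, k) for k in range(4)])
```

The slow decay in magnitude with increasing lag comes from summing fewer terms while dividing by the full-length sum of squares, a property of this particular estimator.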

Spectral Density

The power spectrum $S_{xx}(f)$ of a time series $x(t)$ describes the distribution of power into frequency components composing that signal. According to Fourier analysis, any physical signal can be decomposed into a number of discrete frequencies, or a spectrum of frequencies over a continuous range. The statistical average of a certain signal or sort of signal (including noise) as analyzed in terms of its frequency content, is called its spectrum. When the energy of the signal is concentrated around a finite time interval, especially if its total energy is finite, one may compute the energy spectral density. More commonly used is the power spectral density (or simply power spectrum), which applies to signals existing over ''all'' time, or over a time period large enough (especially in relation to the duration of a measurement) that it could as well have been over an infinite time interval. The power spectral density (PSD) then refers to the spectral energy distribution that would ...

Phase Correlation

Phase correlation is an approach to estimate the relative translative offset between two similar images (digital image correlation) or other data sets. It is commonly used in image registration and relies on a frequency-domain representation of the data, usually calculated by fast Fourier transforms. The term is applied particularly to a subset of cross-correlation techniques that isolate the phase information from the Fourier-space representation of the cross-correlogram.

Example: The following image demonstrates the usage of phase correlation to determine relative translative movement between two images corrupted by independent Gaussian noise. The image was translated by (30,33) pixels. Accordingly, one can clearly see a peak in the phase-correlation representation at approximately (30,33).

Method: Given two input images $g_a$ and $g_b$: Apply a window function (e.g., a Hamming window) on both images to reduce edge effects (this may be optional depending on the image charac ...
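The idea can be sketched in one dimension, which is a simplification of the image case described above. The example below uses a naive discrete Fourier transform in place of an FFT, omits the optional window, and assumes a circular shift; `dft`, `phase_correlation_shift`, and the `eps` guard against division by zero are hypothetical names introduced for illustration.

```python
import cmath


def dft(x, inverse=False):
    """Naive O(n^2) discrete Fourier transform (the inverse divides by n)."""
    n = len(x)
    sign = 1 if inverse else -1
    out = [
        sum(x[t] * cmath.exp(sign * 2j * cmath.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]
    return [v / n for v in out] if inverse else out


def phase_correlation_shift(a, b, eps=1e-12):
    """Estimate the circular shift d such that b[n] == a[(n - d) % len(a)]."""
    if len(a) != len(b) or not a:
        raise ValueError("inputs must be non-empty and of equal length")
    ga, gb = dft(a), dft(b)
    # Cross-power spectrum, normalized so that only phase information remains.
    cross = [gb_k * ga_k.conjugate() for ga_k, gb_k in zip(ga, gb)]
    normalized = [c / max(abs(c), eps) for c in cross]
    # Back in the signal domain, the peak position marks the shift.
    r = [v.real for v in dft(normalized, inverse=True)]
    return max(range(len(r)), key=r.__getitem__)


a = [3, 1, 4, 1, 5, 9, 2, 6]
b = a[-3:] + a[:-3]  # a shifted circularly by 3 samples
print(phase_correlation_shift(a, b))
```

The sign convention (conjugating the transform of `a` rather than `b`) is chosen here so that the peak index equals the shift of `b` relative to `a`; the opposite convention places the peak at the negated shift.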

Cross-correlation

In signal processing, cross-correlation is a measure of similarity of two series as a function of the displacement of one relative to the other. This is also known as a ''sliding dot product'' or ''sliding inner-product''. It is commonly used for searching a long signal for a shorter, known feature. It has applications in pattern recognition, single particle analysis, electron tomography, averaging, cryptanalysis, and neurophysiology. The cross-correlation is similar in nature to the convolution of two functions. In an autocorrelation, which is the cross-correlation of a signal with itself, there will always be a peak at a lag of zero, and its size will be the signal energy. In probability and statistics, the term ''cross-correlations'' refers to the correlations between the entries of two random vectors $\mathbf{X}$ and $\mathbf{Y}$, while the ''correlations'' of a random vector $\mathbf{X}$ are the correlations between the entries of $\mathbf{X}$ itself, those forming the correlation mat ...
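The "sliding dot product" reading can be sketched directly: slide a short template along a longer signal and take the dot product at each offset. The function name `sliding_dot_product` is an assumption for this example, and the raw (unnormalized) score is used for simplicity, so large-amplitude regions can outscore a true match in less tidy data.

```python
def sliding_dot_product(signal, template):
    """Dot product of the template with each equally long window of the signal."""
    n, m = len(signal), len(template)
    if m == 0 or m > n:
        raise ValueError("template must be non-empty and no longer than the signal")
    return [
        sum(signal[offset + i] * template[i] for i in range(m))
        for offset in range(n - m + 1)
    ]


signal = [0, 0, 1, 2, 1, 0, 0, 0]
template = [1, 2, 1]
scores = sliding_dot_product(signal, template)
best_offset = max(range(len(scores)), key=scores.__getitem__)
print(scores, best_offset)
```

The offset with the highest score is where the known feature best lines up with the signal.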

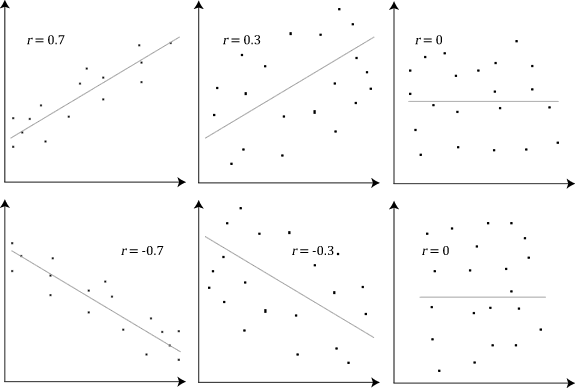

Correlation

In statistics, correlation or dependence is any statistical relationship, whether causal or not, between two random variables or bivariate data. Although in the broadest sense, "correlation" may indicate any type of association, in statistics it usually refers to the degree to which a pair of variables are ''linearly'' related. Familiar examples of dependent phenomena include the correlation between the height of parents and their offspring, and the correlation between the price of a good and the quantity the consumers are willing to purchase, as it is depicted in the so-called demand curve. Correlations are useful because they can indicate a predictive relationship that can be exploited in practice. For example, an electrical utility may produce less power on a mild day based on the correlation between electricity demand and weather. In this example, there is a causal relationship, because extreme weather causes people to use more electricity for heating or cooling. H ...

Convolution

In mathematics (in particular, functional analysis), convolution is a mathematical operation on two functions ($f$ and $g$) that produces a third function ($f*g$) that expresses how the shape of one is modified by the other. The term ''convolution'' refers to both the result function and to the process of computing it. It is defined as the integral of the product of the two functions after one is reflected about the y-axis and shifted. The choice of which function is reflected and shifted before the integral does not change the integral result (see commutativity). The integral is evaluated for all values of shift, producing the convolution function. Some features of convolution are similar to cross-correlation: for real-valued functions, of a continuous or discrete variable, convolution ($f*g$) differs from cross-correlation ($f \star g$) only in that either $f(x)$ or $g(x)$ is reflected about the y-axis in convolution; thus it is a cross-correlation of $g(-x)$ and $f(x)$, or $f(-x)$ and $g(x)$. For complex-valued fu ...
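For real-valued discrete sequences, the relationship between convolution and cross-correlation can be checked numerically. The sketch below is a minimal illustration with hypothetical helper names `convolve` and `cross_correlate`, both returning the "full" result over every lag at which the sequences overlap.

```python
def convolve(f, g):
    """Full discrete convolution: out[n] = sum over i + j == n of f[i] * g[j]."""
    out = [0] * (len(f) + len(g) - 1)
    for i, a in enumerate(f):
        for j, b in enumerate(g):
            out[i + j] += a * b
    return out


def cross_correlate(f, g):
    """Full discrete cross-correlation of real sequences.

    out[k] holds the sum of f[i] * g[j] over j - i == k - (len(f) - 1),
    so lags run from -(len(f) - 1) up to len(g) - 1.
    """
    out = [0] * (len(f) + len(g) - 1)
    for i, a in enumerate(f):
        for j, b in enumerate(g):
            out[j - i + len(f) - 1] += a * b
    return out


f = [1, 2, 3]
g = [0, 1, 0.5]
print(convolve(f, g))
# Reflecting f before cross-correlating reproduces the convolution.
print(cross_correlate(f[::-1], g))
```

Reversing the list plays the role of the reflection about the y-axis; for complex-valued sequences a conjugate would also be involved, which this sketch does not cover.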

Coherence (Signal Processing)

In signal processing, the coherence is a statistic that can be used to examine the relation between two signals or data sets. It is commonly used to estimate the power transfer between input and output of a linear system. If the signals are ergodic, and the system function is linear, it can be used to estimate the causality between the input and output.

Definition and formulation: The coherence (sometimes called magnitude-squared coherence) between two signals $x(t)$ and $y(t)$ is a real-valued function that is defined as:

$$C_{xy}(f) = \frac{|G_{xy}(f)|^2}{G_{xx}(f)\,G_{yy}(f)}$$

where $G_{xy}(f)$ is the cross-spectral density between $x$ and $y$, and $G_{xx}(f)$ and $G_{yy}(f)$ are the auto spectral densities of $x$ and $y$ respectively. The magnitude of the spectral density is denoted as $|G|$. Given the restrictions noted above (ergodicity, linearity), the coherence function estimates the extent to which $y(t)$ may be predicted from $x(t)$ by an optimum linear least squares function. Values of coherence will always satisfy $0 \le C_{xy}(f) \le 1$. For an ''ideal ...

Wiener–Khinchin Theorem

In applied mathematics, the Wiener–Khinchin theorem or Wiener–Khintchine theorem, also known as the Wiener–Khinchin–Einstein theorem or the Khinchin–Kolmogorov theorem, states that the autocorrelation function of a wide-sense-stationary random process has a spectral decomposition given by the power spectrum of that process.

History: Norbert Wiener proved this theorem for the case of a deterministic function in 1930; Aleksandr Khinchin later formulated an analogous result for stationary stochastic processes and published that probabilistic analogue in 1934. Albert Einstein explained, without proofs, the idea in a brief two-page memo in 1914.

The case of a continuous-time process: For continuous time, the Wiener–Khinchin theorem says that if $x$ is a wide-sense-stationary stochastic process whose autocorrelation function (sometimes called autocovariance), defined in terms of statistical expected value, $r_{xx}(\tau) = \mathbb{E}\big[x(t)^* x(t - \tau)\big]$ (the asterisk denotes comp ...
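A finite, deterministic analogue of this statement can be checked numerically: for a real sequence treated as periodic, the circular autocorrelation equals the inverse discrete Fourier transform of the squared magnitude of its transform. This sketch illustrates that discrete identity only, not the theorem for random processes; the helper names and the naive `dft` are assumptions made for the example.

```python
import cmath


def dft(x, inverse=False):
    """Naive O(n^2) discrete Fourier transform (the inverse divides by n)."""
    n = len(x)
    sign = 1 if inverse else -1
    out = [
        sum(x[t] * cmath.exp(sign * 2j * cmath.pi * k * t / n) for t in range(n))
        for k in range(n)
    ]
    return [v / n for v in out] if inverse else out


def circular_autocorrelation(x):
    """r[lag] = sum over t of x[t] * x[(t - lag) mod n], for a real sequence x."""
    n = len(x)
    return [sum(x[t] * x[(t - lag) % n] for t in range(n)) for lag in range(n)]


def autocorrelation_from_power_spectrum(x):
    """Inverse transform of |X[k]|^2, which should reproduce the sums above."""
    power = [abs(v) ** 2 for v in dft(x)]
    return [v.real for v in dft(power, inverse=True)]


x = [1.0, 2.0, 0.0, -1.0, 3.0]
direct = circular_autocorrelation(x)
spectral = autocorrelation_from_power_spectrum(x)
print(direct)
print([round(v, 9) for v in spectral])
```

The two lists agree up to floating-point rounding, and the lag-zero entry equals the sum of squared samples.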
