
Total Operating Characteristic

The total operating characteristic (TOC) is a statistical method to compare a Boolean variable versus a rank variable. TOC can measure the ability of an index variable to diagnose either presence or absence of a characteristic. The diagnosis of presence or absence depends on whether the value of the index is above a threshold. TOC considers multiple possible thresholds. Each threshold generates a two-by-two contingency table, which contains four entries: hits, misses, false alarms, and correct rejections. The receiver operating characteristic (ROC) also characterizes diagnostic ability, although ROC reveals less information than the TOC. For each threshold, ROC reveals two ratios, hits/(hits + misses) and false alarms/(false alarms + correct rejections), while TOC shows the total information in the contingency table for each threshold. The TOC method reveals all of the information that the ROC method provides, plus additional important information that ROC does not reveal, i.e. the ...
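The per-threshold contingency table described above can be sketched in a few lines of Python. This is only an illustrative sketch: the names `ContingencyTable`, `contingency_table`, and `roc_ratios` are hypothetical, the sample data are made up, and "above a threshold" is assumed to mean strictly greater than.

```python
from typing import NamedTuple, Sequence, Tuple


class ContingencyTable(NamedTuple):
    hits: int
    misses: int
    false_alarms: int
    correct_rejections: int


def contingency_table(index: Sequence[float],
                      presence: Sequence[bool],
                      threshold: float) -> ContingencyTable:
    """Count the four table entries for one threshold.

    Assumption: an observation is diagnosed as "presence" when its
    index value is strictly above the threshold.
    """
    hits = misses = false_alarms = correct_rejections = 0
    for value, present in zip(index, presence):
        diagnosed = value > threshold
        if diagnosed and present:
            hits += 1
        elif present:
            misses += 1
        elif diagnosed:
            false_alarms += 1
        else:
            correct_rejections += 1
    return ContingencyTable(hits, misses, false_alarms, correct_rejections)


def roc_ratios(table: ContingencyTable) -> Tuple[float, float]:
    """Return the two ratios that ROC reports for a threshold.

    Raises ZeroDivisionError if the data contain no presence
    or no absence observations.
    """
    hit_rate = table.hits / (table.hits + table.misses)
    false_alarm_rate = table.false_alarms / (
        table.false_alarms + table.correct_rejections)
    return hit_rate, false_alarm_rate


# Made-up example data: four observations, two thresholds.
index_values = [0.9, 0.8, 0.4, 0.2]
observed_presence = [True, False, True, False]
for threshold in (0.1, 0.5):
    table = contingency_table(index_values, observed_presence, threshold)
    print(threshold, table, roc_ratios(table))
```

The table keeps the four raw counts, whereas the two ratios alone do not let a reader recover them, which is the sense in which ROC reveals less than TOC.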

Statistical Method

Statistics (from German: ''Statistik'', "description of a state, a country") is the discipline that concerns the collection, organization, analysis, interpretation, and presentation of data. In applying statistics to a scientific, industrial, or social problem, it is conventional to begin with a statistical population or a statistical model to be studied. Populations can be diverse groups of people or objects such as "all people living in a country" or "every atom composing a crystal". Statistics deals with every aspect of data, including the planning of data collection in terms of the design of surveys and experiments (Dodge, Y. (2006) ''The Oxford Dictionary of Statistical Terms'', Oxford University Press). When census data cannot be collected, statisticians collect data by developing specific experiment designs and survey samples. Representative sampling as ...

ROCCET

Receiver Operating Characteristic Curve Explorer and Tester (ROCCET) is an open-access web server for performing biomarker analysis using ROC (Receiver Operating Characteristic) curve analyses on metabolomic data sets. ROCCET is designed specifically for performing and assessing a standard binary classification test (disease vs. control). ROCCET accepts metabolite data tables, with or without clinical/observational variables, as input and performs extensive biomarker analysis and biomarker identification using these input data. It operates through a menu-based navigation system that allows users to identify or assess those clinical variables and/or metabolites that contain the maximal diagnostic or class-predictive information. ROCCET supports both manual and semi-automated feature selection and is able to automatically generate a variety of mathematical models that maximize the sensitivity and specificity of the biomarker(s) while minimizing the number of biomarkers used in the bi ...

Precision And Recall

In pattern recognition, information retrieval, object detection and classification (machine learning), precision and recall are performance metrics that apply to data retrieved from a collection, corpus or sample space. Precision (also called positive predictive value) is the fraction of relevant instances among the retrieved instances, while recall (also known as sensitivity) is the fraction of relevant instances that were retrieved. Both precision and recall are therefore based on relevance. Consider a computer program for recognizing dogs (the relevant element) in a digital photograph. Upon processing a picture which contains ten cats and twelve dogs, the program identifies eight dogs. Of the eight elements identified as dogs, only five actually are dogs (true positives), while the other three are cats (false positives). Seven dogs were missed (false negatives), and seven cats were correctly excluded (true negatives). The program's precision is then 5/8 (true positives / se ...
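The dog-recognition counts can be checked with a short Python sketch. The function names `precision` and `recall` are hypothetical helpers written for this illustration; the counts are the ones given in the example (five true positives, three false positives, seven false negatives).

```python
def precision(true_positives: int, false_positives: int) -> float:
    """Fraction of retrieved instances that are relevant."""
    return true_positives / (true_positives + false_positives)


def recall(true_positives: int, false_negatives: int) -> float:
    """Fraction of relevant instances that were retrieved."""
    return true_positives / (true_positives + false_negatives)


# Counts from the dog-recognition example.
tp, fp, fn = 5, 3, 7
print(precision(tp, fp))  # 5 of the 8 elements identified as dogs are dogs
print(recall(tp, fn))     # 5 of the 12 dogs in the picture were found
```

Note that the seven true negatives do not enter either formula.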

False Alarm

A false alarm, also called a nuisance alarm, is the deceptive or erroneous report of an emergency, causing unnecessary panic and/or bringing resources (such as emergency services) to a place where they are not needed. False alarms may occur with residential burglary alarms, smoke detectors, industrial alarms, and in signal detection theory. False alarms have the potential to divert emergency responders away from legitimate emergencies, which could ultimately lead to loss of life. In some cases, repeated false alarms in a certain area may cause occupants to develop alarm fatigue and to start ignoring most alarms, knowing that each time it will probably be false. Intentionally falsely activating alarms in businesses and schools can lead to serious disciplinary actions, and criminal penalties such as fines and jail time. Overview: The term “false alarm” refers to alarm systems in many different applications being triggered by something other than the expected trigger-event. Exa ...

F1 Score

In statistical analysis of binary classification, the F-score or F-measure is a measure of a test's accuracy. It is calculated from the precision and recall of the test, where the precision is the number of true positive results divided by the number of all positive results, including those not identified correctly, and the recall is the number of true positive results divided by the number of all samples that should have been identified as positive. Precision is also known as positive predictive value, and recall is also known as sensitivity in diagnostic binary classification. The F1 score is the harmonic mean of the precision and recall. The more generic F_β score applies additional weights, valuing one of precision or recall more than the other. The highest possible value of an F-score is 1.0, indicating perfect precision and recall, and the lowest possible value is 0, if either precision or recall is zero. Etymology: The name F-measure is believed to be named after ...
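As a rough sketch, the harmonic-mean definition and its weighted generalization can be written as a single Python function. The name `f_beta` is hypothetical, and returning 0.0 when both inputs are zero is a convention assumed here to avoid dividing by zero.

```python
def f_beta(precision: float, recall: float, beta: float = 1.0) -> float:
    """Weighted harmonic mean of precision and recall.

    beta = 1 gives the F1 score; beta > 1 weights recall more heavily,
    beta < 1 weights precision more heavily.
    """
    denominator = beta ** 2 * precision + recall
    if denominator == 0:
        # Assumed convention: the score is 0 when both inputs are 0.
        return 0.0
    return (1 + beta ** 2) * precision * recall / denominator


# Illustrative values: precision 5/8 and recall 5/12.
print(f_beta(5 / 8, 5 / 12))          # F1
print(f_beta(5 / 8, 5 / 12, beta=2))  # recall-weighted variant
```

With precision 5/8 and recall 5/12 the F1 score works out to 2·(5/8)·(5/12) / (5/8 + 5/12) = 1/2.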

Detection Theory

Detection theory or signal detection theory is a means to measure the ability to differentiate between information-bearing patterns (called stimulus in living organisms, signal in machines) and random patterns that distract from the information (called noise, consisting of background stimuli and random activity of the detection machine and of the nervous system of the operator). In the field of electronics, signal recovery is the separation of such patterns from a disguising background. According to the theory, there are a number of determiners of how a detecting system will detect a signal, and where its threshold levels will be. The theory can explain how changing the threshold will affect the ability to discern, often exposing how adapted the system is to the task, purpose or goal at which it is aimed. When the detecting system is a human being, characteristics such as experience, expectations, physiological state (e.g., fatigue) and other factors can affect the threshold app ...

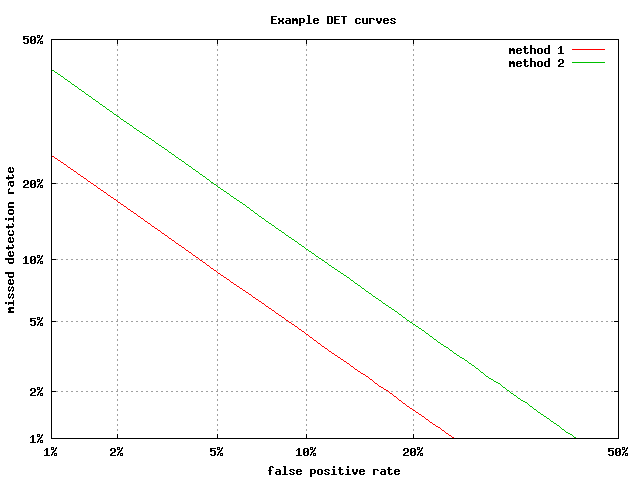

Detection Error Tradeoff

A detection error tradeoff (DET) graph is a graphical plot of error rates for binary classification systems, plotting the false rejection rate vs. false acceptance rate (A. Martin, G. Doddington, T. Kamm, M. Ordowski, and M. Przybocki, "The DET Curve in Assessment of Detection Task Performance", Proc. Eurospeech '97, Rhodes, Greece, September 1997, Vol. 4, pp. 1895-1898). The x- and y-axes are scaled non-linearly by their standard normal deviates (or just by logarithmic transformation), yielding tradeoff curves that are more linear than ROC curves, and use most of the image area to highlight the differences of importance in the critical operating region. Axis warping: The normal deviate mapping (or normal quantile function, or inverse normal cumulative distribution) is given by the probit function, so that the horizontal axis is ''x'' = probit(''Pfa'') and the vertical is ''y'' = probit(''Pfr''), where ''Pfa'' and ''Pfr'' are the false-accept and false-reject rates. The probit ...
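The axis warping can be illustrated with Python's standard library, where `statistics.NormalDist().inv_cdf` is the inverse normal cumulative distribution, i.e. the probit function. The helper name `det_point` and the sample error rates below are assumptions made for this sketch.

```python
from statistics import NormalDist
from typing import Tuple

# Probit: inverse CDF of the standard normal distribution.
probit = NormalDist().inv_cdf


def det_point(p_false_accept: float, p_false_reject: float) -> Tuple[float, float]:
    """Map a (Pfa, Pfr) pair to DET plot coordinates.

    Both rates must lie strictly between 0 and 1; inv_cdf raises
    statistics.StatisticsError otherwise.
    """
    return probit(p_false_accept), probit(p_false_reject)


# Made-up operating points of a hypothetical detector.
for pfa, pfr in [(0.01, 0.40), (0.05, 0.20), (0.20, 0.05)]:
    x, y = det_point(pfa, pfr)
    print(f"Pfa={pfa:.2f} Pfr={pfr:.2f} -> x={x:.3f}, y={y:.3f}")
```

An error rate of 0.5 maps to 0 on either axis, and small rates map to increasingly negative values, which is what spreads out the low-error region of the plot.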

Constant False Alarm Rate

Constant false alarm rate (CFAR) detection refers to a common form of adaptive algorithm used in radar systems to detect target returns against a background of noise, clutter and interference. Principle: In the radar receiver, the returning echoes are typically received by the antenna, amplified, down-converted to an intermediate frequency, and then passed through detector circuitry that extracts the envelope of the signal, known as the ''video signal''. This video signal is proportional to the power of the received echo. It comprises the desired echo signal as well as the unwanted signals from internal receiver noise and external clutter and interference. The term ''video'' refers to the resulting signal being appropriate for display on a cathode ray tube, or "video screen". The role of the constant false alarm rate circuitry is to determine the power threshold above which any return can be considered to probably originate from a target as opposed to one of the spurious sources ...

Coefficient Of Determination

In statistics, the coefficient of determination, denoted ''R''² or ''r''² and pronounced "R squared", is the proportion of the variation in the dependent variable that is predictable from the independent variable(s). It is a statistic used in the context of statistical models whose main purpose is either the prediction of future outcomes or the testing of hypotheses, on the basis of other related information. It provides a measure of how well observed outcomes are replicated by the model, based on the proportion of total variation of outcomes explained by the model. There are several definitions of ''R''² that are only sometimes equivalent. One class of such cases includes that of simple linear regression where ''r''² is used instead of ''R''². When only an intercept is included, then ''r''² is simply the square of the sample correlation coefficient (i.e., ''r'') between the observed outcomes and the observed predictor values. If additional regressors are included, ''R''² ...
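Because several definitions exist, the following Python sketch picks just one common one, 1 minus the ratio of the residual sum of squares to the total sum of squares, and should be read as an illustration under that assumption. The function name `r_squared` and the sample numbers are hypothetical.

```python
from typing import Sequence


def r_squared(observed: Sequence[float], predicted: Sequence[float]) -> float:
    """Coefficient of determination as 1 - SS_res / SS_tot.

    Assumes the observed values are not all identical
    (otherwise SS_tot is zero and the ratio is undefined).
    """
    mean_observed = sum(observed) / len(observed)
    ss_tot = sum((y - mean_observed) ** 2 for y in observed)
    ss_res = sum((y - f) ** 2 for y, f in zip(observed, predicted))
    return 1 - ss_res / ss_tot


observed = [1.0, 2.0, 3.0]
print(r_squared(observed, [1.0, 2.0, 3.0]))  # model reproduces every outcome
print(r_squared(observed, [2.0, 2.0, 2.0]))  # model always predicts the mean
```

Under this definition a model that reproduces the outcomes exactly scores 1, and a model that always predicts the mean of the observations scores 0.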

Brier Score

The Brier score is a ''strictly proper score function'' or ''strictly proper scoring rule'' that measures the accuracy of probabilistic predictions. For unidimensional predictions, it is strictly equivalent to the mean squared error as applied to predicted probabilities. The Brier score is applicable to tasks in which predictions must assign probabilities to a set of mutually exclusive discrete outcomes or classes. The set of possible outcomes can be either binary or categorical in nature, and the probabilities assigned to this set of outcomes must sum to one (where each individual probability is in the range of 0 to 1). It was proposed by Glenn W. Brier in 1950. The Brier score can be thought of as a cost function. More precisely, across all items ''i'' in a set of ''N'' predictions, the Brier score measures the mean squared difference between: * The predicted probability assigned to the possible ...
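For the binary case, the mean-squared-error reading of the Brier score can be sketched as follows. The function name `brier_score` and the forecast data are assumptions made for this illustration; outcomes are coded as 1 if the event occurred and 0 otherwise.

```python
from typing import Sequence


def brier_score(forecast_probabilities: Sequence[float],
                outcomes: Sequence[int]) -> float:
    """Mean squared difference between forecast probability and outcome.

    Binary case only: each outcome is 1 (event occurred) or 0 (it did not).
    Lower is better; 0 means every forecast was certain and correct.
    """
    if len(forecast_probabilities) != len(outcomes):
        raise ValueError("forecasts and outcomes must have the same length")
    squared_errors = [(f - o) ** 2
                      for f, o in zip(forecast_probabilities, outcomes)]
    return sum(squared_errors) / len(squared_errors)


# Made-up forecasts for four events.
print(brier_score([0.9, 0.1, 0.8, 0.3], [1, 0, 1, 0]))
```

A forecaster who always says 0.5 scores 0.25 regardless of what happens, which gives a convenient reference point when reading scores.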

Fleiss' Kappa

Fleiss' kappa (named after Joseph L. Fleiss) is a statistical measure for assessing the reliability of agreement between a fixed number of raters when assigning categorical ratings to a number of items or classifying items. This contrasts with other kappas such as Cohen's kappa, which only work when assessing the agreement between not more than two raters or the intra-rater reliability (for one appraiser versus themself). The measure calculates the degree of agreement in classification over that which would be expected by chance. Fleiss' kappa can be used with binary or nominal-scale ratings. It can also be applied to ordinal data (ranked data): the MiniTab online documentation gives an example. However, this document notes: "When you have ordinal ratings, such as defect severity ratings on a scale of 1–5, Kendall's coefficients, which account for ordering, are usually more appropriate statistics to determine association than kappa alone." Keep in mind however, that Kendall rank coef ...
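The "agreement over that expected by chance" idea can be sketched in Python using the usual formulation, kappa = (P̄ − P̄e) / (1 − P̄e), where P̄ is the mean observed agreement per item and P̄e is the agreement expected from the overall category proportions. The function name `fleiss_kappa` and the input layout (one row per item, one column per category, each cell holding the number of raters who chose that category) are assumptions of this sketch.

```python
from typing import Sequence


def fleiss_kappa(counts: Sequence[Sequence[int]]) -> float:
    """Fleiss' kappa for a table of rating counts.

    counts[i][j] is the number of raters who assigned item i to category j.
    Every item must be rated by the same number of raters (at least two).
    Undefined (division by zero) if all ratings fall in a single category.
    """
    n_items = len(counts)
    n_raters = sum(counts[0])
    n_categories = len(counts[0])
    if any(sum(row) != n_raters for row in counts):
        raise ValueError("every item needs the same number of ratings")

    # Observed agreement: share of agreeing rater pairs, averaged over items.
    per_item = [
        (sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ]
    p_observed = sum(per_item) / n_items

    # Chance agreement from the overall proportion of each category.
    proportions = [
        sum(row[j] for row in counts) / (n_items * n_raters)
        for j in range(n_categories)
    ]
    p_expected = sum(p * p for p in proportions)

    return (p_observed - p_expected) / (1 - p_expected)


# Made-up data: two items, three raters, two categories.
print(fleiss_kappa([[3, 0], [0, 3]]))  # raters agree on every item
print(fleiss_kappa([[2, 1], [1, 2]]))  # raters split on every item
```

A value of 1 indicates complete agreement, while values at or below 0 indicate agreement no better than the chance level implied by the category proportions.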