
Stein Discrepancy

A Stein discrepancy is a statistical divergence between two probability measures that is rooted in Stein's method. It was first formulated as a tool to assess the quality of Markov chain Monte Carlo samplers (J. Gorham and L. Mackey. Measuring Sample Quality with Stein's Method. Advances in Neural Information Processing Systems, 2015), but has since been used in diverse settings in statistics, machine learning and computer science. Definition: Let \mathcal{X} be a measurable space and let \mathcal{M} be a set of measurable functions of the form m : \mathcal{X} \rightarrow \mathbb{R}. A natural notion of distance between two probability distributions P, Q, defined on \mathcal{X}, is provided by an integral probability metric : (1.1) \quad d_{\mathcal{M}}(P, Q) := \sup_{m \in \mathcal{M}} | \mathbb{E}_{X \sim P}[m(X)] - \mathbb{E}_{Y \sim Q}[m(Y)] | , where for the purposes of exposition we assume that the expectations exist, and that the set \mathcal{M} is sufficiently rich that (1.1) is indeed a metric on the set of probability distributions on \mathcal{X}, ...
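As a rough illustration of the integral probability metric (1.1), the sketch below replaces the two distributions by finite samples and the function class by a small finite list of test functions. The name `empirical_ipm` and the choice of test functions are assumptions made for this example only; a class this small is not rich enough to give a true metric.

```python
import math
from statistics import fmean


def empirical_ipm(xs, ys, test_functions):
    """Largest absolute gap between the sample means of m(X) and m(Y).

    xs, ys: samples standing in for the distributions P and Q.
    test_functions: a finite, hypothetical stand-in for the function class.
    """
    gaps = []
    for m in test_functions:
        mean_p = fmean([m(x) for x in xs])
        mean_q = fmean([m(y) for y in ys])
        gaps.append(abs(mean_p - mean_q))
    return max(gaps)


xs = [0.0, 1.0, 2.0]
ys = [1.0, 2.0, 3.0]
test_functions = [lambda t: t, math.tanh]  # illustrative choice only
ipm_value = empirical_ipm(xs, ys, test_functions)
```

Here the identity function separates the two samples most strongly, since their means differ by one; comparing a sample with itself gives zero.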

Divergence (statistics)

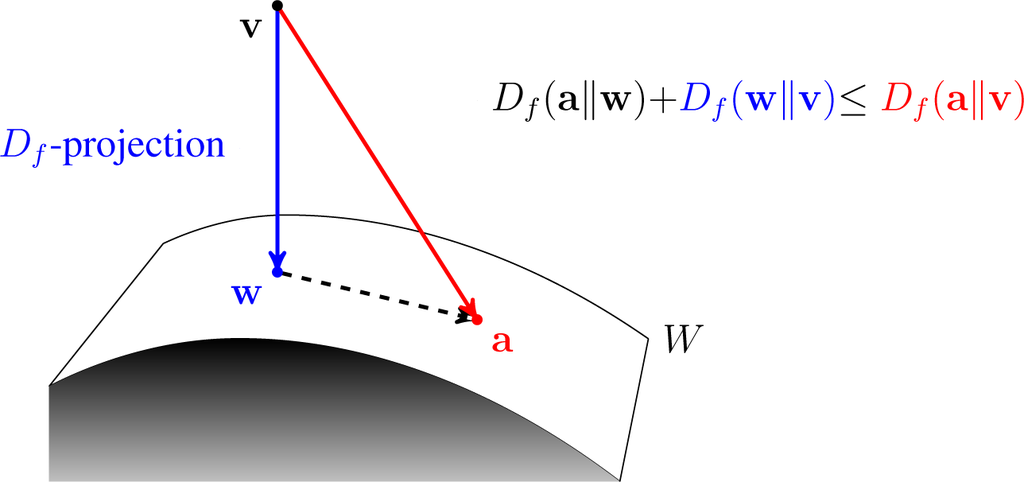

In information geometry, a divergence is a kind of statistical distance: a binary function which establishes the separation from one probability distribution to another on a statistical manifold. The simplest divergence is squared Euclidean distance (SED), and divergences can be viewed as generalizations of SED. The other most important divergence is relative entropy (Kullback–Leibler divergence, KL divergence), which is central to information theory. There are numerous other specific divergences and classes of divergences, notably *f*-divergences and Bregman divergences. Definition: Given a differentiable manifold M of dimension n, a divergence on M is a C^2-function D: M\times M\to [0, \infty) satisfying:

1. D(p, q) \geq 0 for all p, q \in M (non-negativity),
2. D(p, q) = 0 if and only if p=q (positivity),
3. At every point p\in M, D(p, p+dp) is a positive-definite quadratic form for infinitesimal displacements dp from p.

In applications to statistics, the manifo ...

Linear Programming

Linear programming (LP), also called linear optimization, is a method to achieve the best outcome (such as maximum profit or lowest cost) in a mathematical model whose requirements are represented by linear relationships. Linear programming is a special case of mathematical programming (also known as mathematical optimization). More formally, linear programming is a technique for the optimization of a linear objective function, subject to linear equality and linear inequality constraints. Its feasible region is a convex polytope, which is a set defined as the intersection of finitely many half spaces, each of which is defined by a linear inequality. Its objective function is a real-valued affine (linear) function defined on this polyhedron. A linear programming algorithm finds a point in the polytope where this function has the smallest (or largest) value if such a point exists. Linear programs are problems that can be expressed in canonical form as ...

Stein's Method

Stein's method is a general method in probability theory to obtain bounds on the distance between two probability distributions with respect to a probability metric. It was introduced by Charles Stein, who first published it in 1972, to obtain a bound between the distribution of a sum of an m-dependent sequence of random variables and a standard normal distribution in the Kolmogorov (uniform) metric and hence to prove not only a central limit theorem, but also bounds on the rates of convergence for the given metric. History: At the end of the 1960s, unsatisfied with the by-then known proofs of a specific central limit theorem, Charles Stein developed a new way of proving the theorem for his statistics lecture. Interview given ...

Goodness Of Fit

The goodness of fit of a statistical model describes how well it fits a set of observations. Measures of goodness of fit typically summarize the discrepancy between observed values and the values expected under the model in question. Such measures can be used in statistical hypothesis testing, e.g. to test for normality of residuals, to test whether two samples are drawn from identical distributions (see Kolmogorov–Smirnov test), or whether outcome frequencies follow a specified distribution (see Pearson's chi-square test). In the analysis of variance, one of the components into which the variance is partitioned may be a lack-of-fit sum of squares. Fit of distributions: In assessing whether a given distribution is suited to a data-set, the following tests and their underlying measures of fit can be used:

* Bayesian information criterion
* Kolmogorov–Smirnov test
* Cramér–von Mises criterion
* And ...

Minimum-distance Estimation

Minimum-distance estimation (MDE) is a conceptual method for fitting a statistical model to data, usually the empirical distribution. Often-used estimators such as ordinary least squares can be thought of as special cases of minimum-distance estimation. While consistent and asymptotically normal, minimum-distance estimators are generally not statistically efficient when compared to maximum likelihood estimators, because they omit the Jacobian usually present in the likelihood function. This, however, substantially reduces the computational complexity of the optimization problem. Definition: Let X_1,\ldots,X_n be an independent and identically distributed (iid) random sample from a population with distribution F(x;\theta)\colon \theta\in\Theta and \Theta\subseteq\mathbb{R}^k (k\geq 1). Let F_n(x) be the empirical distribution function based on the sample. Let \hat{\theta} be an estimator for \theta. Then F(x;\hat{\theta}) is an estimator for \displaystyl ...

Flow-based Generative Model

A flow-based generative model is a generative model used in machine learning that explicitly models a probability distribution by leveraging normalizing flow, which is a statistical method using the change-of-variable law of probabilities to transform a simple distribution into a complex one. The direct modeling of likelihood provides many advantages. For example, the negative log-likelihood can be directly computed and minimized as the loss function. Additionally, novel samples can be generated by sampling from the initial distribution, and applying the flow transformation. In contrast, many alternative generative modeling methods such as variational autoencoder (VAE) and generative adversarial network do not explicitly represent the likelihood function. Method: Let z_0 be a (possibly multivariate) random variable with distribution p_0(z_0). For i = 1, ..., K, let z_i = f_i(z_{i-1}) be a sequence of random variables transformed from z_0. The functions f_1, ..., f_K should be i ...
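The change-of-variable idea can be sketched for the simplest possible case: a chain of one-dimensional affine maps applied to a standard normal base variable. The function names and the `(scale, shift)` layer format below are assumptions made for this illustration, not part of any particular library.

```python
import math


def std_normal_logpdf(z):
    """Log-density of the standard normal base distribution p_0."""
    return -0.5 * (z * z + math.log(2.0 * math.pi))


def affine_flow_logpdf(x, layers):
    """Log-density of x = f_K(...f_1(z_0)) with f_i(z) = scale_i * z + shift_i.

    layers: list of (scale, shift) pairs, applied in order to z_0.
    The flow is inverted layer by layer while the log|det Jacobian| terms
    (here simply log|scale_i|) are accumulated.
    """
    z = x
    log_det = 0.0
    for scale, shift in reversed(layers):
        z = (z - shift) / scale
        log_det += math.log(abs(scale))
    return std_normal_logpdf(z) - log_det


# Two layers whose composition is x = z_0 - 2.5, so x follows N(-2.5, 1).
layers = [(2.0, 1.0), (0.5, -3.0)]
log_density_at_mode = affine_flow_logpdf(-2.5, layers)
```

Real flows use richer invertible maps, but the bookkeeping is the same: invert each layer and subtract the accumulated log-determinant from the base log-density.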

Kullback–Leibler Divergence

In mathematical statistics, the Kullback–Leibler divergence (also called relative entropy and I-divergence), denoted D_\text{KL}(P \parallel Q), is a type of statistical distance: a measure of how one probability distribution *P* is different from a second, reference probability distribution *Q*. A simple interpretation of the KL divergence of *P* from *Q* is the expected excess surprise from using *Q* as a model when the actual distribution is *P*. While it is a distance, it is not a metric, the most familiar type of distance: it is not symmetric in the two distributions (in contrast to variation of information), and does not satisfy the triangle inequality. Instead, in terms of information geometry, it is a type of divergence, a generalization of squared distance, and for certain classes of distributions (notably an exponential family), it satisfies a generalized Pythagorean theorem (which applies to squared distances). In the simple case, a relative entropy ...
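For discrete distributions given as probability vectors, the divergence can be computed directly, which also makes the lack of symmetry easy to see. This is a minimal sketch using natural logarithms; the function name `kl_divergence` and the example vectors are chosen for illustration.

```python
import math


def kl_divergence(p, q):
    """D_KL(P || Q) in nats for two discrete distributions on the same support."""
    total = 0.0
    for p_i, q_i in zip(p, q):
        if p_i > 0.0:
            if q_i == 0.0:
                # P puts mass where Q has none: the divergence is infinite.
                return math.inf
            total += p_i * math.log(p_i / q_i)
    return total


p = [0.5, 0.5]
q = [0.9, 0.1]
forward = kl_divergence(p, q)  # P measured against model Q
reverse = kl_divergence(q, p)  # Q measured against model P
```

The two directions give different values, while the divergence of a distribution from itself is zero.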

Variational Bayesian Methods

Variational Bayesian methods are a family of techniques for approximating intractable integrals arising in Bayesian inference and machine learning. They are typically used in complex statistical models consisting of observed variables (usually termed "data") as well as unknown parameters and latent variables, with various sorts of relationships among the three types of random variables, as might be described by a graphical model. As typical in Bayesian inference, the parameters and latent variables are grouped together as "unobserved variables". Variational Bayesian methods are primarily used for two purposes:

1. To provide an analytical approximation to the posterior probability of the unobserved variables, in order to do statistical inference over these variables.
2. To derive a lower bound for the marginal likelihood (sometimes called the *evidence*) of the observed data (i.e. the marginal probability of the data given the model, with marginalization performed over unobserve ...

Quantization (image Processing)

Quantization, involved in image processing, is a lossy compression technique achieved by compressing a range of values to a single quantum (discrete) value. When the number of discrete symbols in a given stream is reduced, the stream becomes more compressible. For example, reducing the number of colors required to represent a digital image makes it possible to reduce its file size. Specific applications include DCT data quantization in JPEG and DWT data quantization in JPEG 2000. Color quantization: Color quantization reduces the number of colors used in an image; this is important for displaying images on devices that support a limited number of colors and for efficiently compressing certain kinds of images. Most bitmap editors and many operating systems have built-in support for color quantization. Popular modern color quantization algorithms include the nearest color algorithm (for fixed palettes), the median cut algorithm, and an algorithm based on octrees. It is common ...
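The idea of collapsing a range of values onto a single quantum value can be sketched with plain uniform quantization of one 8-bit channel. This is an illustrative assumption and is simpler than the palette-based algorithms named above (nearest color, median cut, octrees); the function name `quantize_channel` is hypothetical.

```python
def quantize_channel(value, levels):
    """Map an 8-bit value (0..255) to the nearest of `levels` evenly spaced values."""
    if levels < 2:
        raise ValueError("need at least two quantization levels")
    if not 0 <= value <= 255:
        raise ValueError("value must be in 0..255")
    step = 255 / (levels - 1)
    # Round to the index of the nearest level, then map back to 0..255.
    return round(round(value / step) * step)


channel = [0, 100, 130, 200, 255]
quantized = [quantize_channel(v, 4) for v in channel]
distinct_before = len(set(channel))
distinct_after = len(set(quantized))
```

With four levels the only possible outputs are 0, 85, 170 and 255, so the stream contains fewer distinct symbols and becomes easier to compress, at the cost of the rounding error.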

Stein Thinning Of MCMC Output

Stein is a German, Yiddish and Norwegian word meaning "stone" and "pip" or "kernel". It stems from the same Germanic root as the English word stone. It may refer to:

Places in Austria:

* Stein, a neighbourhood of Krems an der Donau, Lower Austria
* Stein, Styria, a municipality in the district of Fürstenfeld, Styria
* Stein (Lassing), a village in the district of Liezen, Styria
* Stein an der Enns, a village in the district of Liezen, Styria

Places in Canada:

* Stein River, a tributary of the Fraser River, from the Nlaka'pamux language *Stagyn*, meaning "hidden place"
  * Stein Valley Nlaka'pamux Heritage Park, a British Columbia provincial park comprising the basin of that river
  * Stein Mountain, a mountain in the Lillooet Ranges named for the river
  * Stein Lake, a lake in the upper reaches of the Stein River basin

Places in Germany:

* Stein, Bavaria, a town in the district of Fürth, Bavaria
* Stein, Schleswig-Holstein, a municipality in the district of Plön, Schleswig-Holstein
* Stei ...

Wasserstein Metric

In mathematics, the Wasserstein distance or Kantorovich–Rubinstein metric is a distance function defined between probability distributions on a given metric space M. It is named after Leonid Vaseršteĭn. Intuitively, if each distribution is viewed as a unit amount of earth (soil) piled on *M*, the metric is the minimum "cost" of turning one pile into the other, which is assumed to be the amount of earth that needs to be moved times the mean distance it has to be moved. This problem was first formalised by Gaspard Monge in 1781. Because of this analogy, the metric is known in computer science as the earth mover's distance. The name "Wasserstein distance" was coined by R. L. Dobrushin in 1970, after learning of it in the work of Leonid Vaseršteĭn on Markov processes describing large systems of automata (Russian, 1969). However the metric was first defined by Leonid Kantorovich in *The Mathematical Method of Production Planning and Organization* (Russian original 1939 ...
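The earth-moving picture becomes concrete in one special case: two samples of equal size on the real line, each point carrying the same mass. There the cheapest plan pairs the sorted points in order, so the order-1 distance is the mean absolute difference of the sorted values. The sketch below covers only that case; the function name `wasserstein_1d` is an assumption for this example.

```python
def wasserstein_1d(xs, ys):
    """Order-1 Wasserstein distance between two equal-size samples on the line.

    Each sample is treated as a uniform distribution over its points.
    """
    if not xs or len(xs) != len(ys):
        raise ValueError("samples must be non-empty and of equal size")
    paired = zip(sorted(xs), sorted(ys))
    return sum(abs(a - b) for a, b in paired) / len(xs)


pile_a = [3.0, 0.0, 1.0]
pile_b = [5.0, 8.0, 6.0]
cost = wasserstein_1d(pile_a, pile_b)
```

Here every unit of mass moves a distance of five, so the cost is five; shifting a pile by a constant always changes the distance to the original pile by exactly that constant.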

Infinitesimal Generator (stochastic Processes)

In mathematics, specifically in stochastic analysis, the infinitesimal generator of a Feller process (i.e. a continuous-time Markov process satisfying certain regularity conditions) is a Fourier multiplier operator that encodes a great deal of information about the process. The generator is used in evolution equations such as the Kolmogorov backward equation (which describes the evolution of statistics of the process); its *L*^2 Hermitian adjoint is used in evolution equations such as the Fokker–Planck equation (which describes the evolution of the probability density functions of the process). Definition (general case): For a Feller process (X_t)_{t \geq 0} with Feller semigroup T=(T_t)_{t \geq 0} and state space E we define the generator (A,D(A)) by :D(A)=\left\{ f \in C_0(E) : \lim_{t \downarrow 0} \frac{T_t f - f}{t} \text{ exists as a uniform limit} \right\}, :A f=\lim_{t \downarrow 0} \frac{T_t f - f}{t}, for any f\in D(A). Here C_0(E) denotes the Banach space of continuous functions on E vanishing at infinity, equipped with the supremum norm, and T_t f(x)= \mathbb{E}^x f(X_t)=\mathbb{E}(f(X_t) \mid X_0=x). In ...