|

Shrinkage Estimator

In statistics, shrinkage is the reduction in the effects of sampling variation. In regression analysis, a fitted relationship appears to perform less well on a new data set than on the data set used for fitting. In particular the value of the coefficient of determination 'shrinks'. This idea is complementary to overfitting and, separately, to the standard adjustment made in the coefficient of determination to compensate for the subjunctive effects of further sampling, like controlling for the potential of new explanatory terms improving the model by chance: that is, the adjustment formula itself provides "shrinkage." But the adjustment formula yields an artificial shrinkage. A shrinkage estimator is an estimator that, either explicitly or implicitly, incorporates the effects of shrinkage. In loose terms this means that a naive or raw estimate is improved by combining it with other information. The term relates to the notion that the improved estimate is made closer to the value supp ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Statistics

Statistics (from German language, German: ''wikt:Statistik#German, Statistik'', "description of a State (polity), state, a country") is the discipline that concerns the collection, organization, analysis, interpretation, and presentation of data. In applying statistics to a scientific, industrial, or social problem, it is conventional to begin with a statistical population or a statistical model to be studied. Populations can be diverse groups of people or objects such as "all people living in a country" or "every atom composing a crystal". Statistics deals with every aspect of data, including the planning of data collection in terms of the design of statistical survey, surveys and experimental design, experiments.Dodge, Y. (2006) ''The Oxford Dictionary of Statistical Terms'', Oxford University Press. When census data cannot be collected, statisticians collect data by developing specific experiment designs and survey sample (statistics), samples. Representative sampling as ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Sample Variance

In probability theory and statistics, variance is the expectation of the squared deviation of a random variable from its population mean or sample mean. Variance is a measure of dispersion, meaning it is a measure of how far a set of numbers is spread out from their average value. Variance has a central role in statistics, where some ideas that use it include descriptive statistics, statistical inference, hypothesis testing, goodness of fit, and Monte Carlo sampling. Variance is an important tool in the sciences, where statistical analysis of data is common. The variance is the square of the standard deviation, the second central moment of a distribution, and the covariance of the random variable with itself, and it is often represented by \sigma^2, s^2, \operatorname(X), V(X), or \mathbb(X). An advantage of variance as a measure of dispersion is that it is more amenable to algebraic manipulation than other measures of dispersion such as the expected absolute deviation; for e ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Principal Component Regression

In statistics, principal component regression (PCR) is a regression analysis technique that is based on principal component analysis (PCA). More specifically, PCR is used for estimating the unknown regression coefficients in a standard linear regression model. In PCR, instead of regressing the dependent variable on the explanatory variables directly, the principal components of the explanatory variables are used as regressors. One typically uses only a subset of all the principal components for regression, making PCR a kind of regularized procedure and also a type of shrinkage estimator. Often the principal components with higher variances (the ones based on eigenvectors corresponding to the higher eigenvalues of the sample variance-covariance matrix of the explanatory variables) are selected as regressors. However, for the purpose of predicting the outcome, the principal components with low variances may also be important, in some cases even more important. One major use o ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Mark And Recapture

Mark and recapture is a method commonly used in ecology to estimate an animal population's size where it is impractical to count every individual. A portion of the population is captured, marked, and released. Later, another portion will be captured and the number of marked individuals within the sample is counted. Since the number of marked individuals within the second sample should be proportional to the number of marked individuals in the whole population, an estimate of the total population size can be obtained by dividing the number of marked individuals by the proportion of marked individuals in the second sample. Other names for this method, or closely related methods, include capture-recapture, capture-mark-recapture, mark-recapture, sight-resight, mark-release-recapture, multiple systems estimation, band recovery, the Petersen method, and the Lincoln method. Another major application for these methods is in epidemiology, where they are used to estimate the completeness of ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Decision Stump

A decision stump is a machine learning model consisting of a one-level decision tree. That is, it is a decision tree with one internal node (the root) which is immediately connected to the terminal nodes (its leaves). A decision stump makes a prediction based on the value of just a single input feature. Sometimes they are also called 1-rules. Depending on the type of the input feature, several variations are possible. For nominal features, one may build a stump which contains a leaf for each possible feature value or a stump with the two leaves, one of which corresponds to some chosen category, and the other leaf to all the other categories.This is what has been implemented in Weka's DecisionStump classifier. For binary features these two schemes are identical. A missing value may be treated as a yet another category. For continuous features, usually, some threshold feature value is selected, and the stump contains two leaves — for values below and above the threshold. However, r ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Boosting (machine Learning)

In machine learning, boosting is an ensemble meta-algorithm for primarily reducing bias, and also variance in supervised learning, and a family of machine learning algorithms that convert weak learners to strong ones. Boosting is based on the question posed by Kearns and Valiant (1988, 1989):Michael Kearns(1988)''Thoughts on Hypothesis Boosting'' Unpublished manuscript (Machine Learning class project, December 1988) "Can a set of weak learners create a single strong learner?" A weak learner is defined to be a classifier that is only slightly correlated with the true classification (it can label examples better than random guessing). In contrast, a strong learner is a classifier that is arbitrarily well-correlated with the true classification. Robert Schapire's affirmative answer in a 1990 paper to the question of Kearns and Valiant has had significant ramifications in machine learning and statistics, most notably leading to the development of boosting. When first introduced, ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Additive Smoothing

In statistics, additive smoothing, also called Laplace smoothing or Lidstone smoothing, is a technique used to smooth categorical data. Given a set of observation counts \textstyle from a \textstyle -dimensional multinomial distribution with \textstyle trials, a "smoothed" version of the counts gives the estimator: :\hat\theta_i= \frac \qquad (i=1,\ldots,d), where the smoothed count \textstyle and the "pseudocount" ''α'' > 0 is a smoothing parameter. ''α'' = 0 corresponds to no smoothing. (This parameter is explained in below.) Additive smoothing is a type of shrinkage estimator, as the resulting estimate will be between the empirical probability ( relative frequency) \textstyle , and the uniform probability \textstyle . Invoking Laplace's rule of succession, some authors have argued that ''α'' should be 1 (in which case the term add-one smoothing is also used), though in practice a smaller value is typically chosen. From a Bayesian point of ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Journal Of The Royal Statistical Society, Series C

The ''Journal of the Royal Statistical Society'' is a peer-reviewed scientific journal of statistics. It comprises three series and is published by Wiley for the Royal Statistical Society. History The Statistical Society of London was founded in 1834, but would not begin producing a journal for four years. From 1834 to 1837, members of the society would read the results of their studies to the other members, and some details were recorded in the proceedings. The first study reported to the society in 1834 was a simple survey of the occupations of people in Manchester, England. Conducted by going door-to-door and inquiring, the study revealed that the most common profession was mill-hands, followed closely by weavers. When founded, the membership of the Statistical Society of London overlapped almost completely with the statistical section of the British Association for the Advancement of Science. In 1837 a volume of ''Transactions of the Statistical Society of London'' were ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Journal Of The Royal Statistical Society, Series B

The ''Journal of the Royal Statistical Society'' is a peer-reviewed scientific journal of statistics. It comprises three series and is published by Wiley for the Royal Statistical Society. History The Statistical Society of London was founded in 1834, but would not begin producing a journal for four years. From 1834 to 1837, members of the society would read the results of their studies to the other members, and some details were recorded in the proceedings. The first study reported to the society in 1834 was a simple survey of the occupations of people in Manchester, England. Conducted by going door-to-door and inquiring, the study revealed that the most common profession was mill-hands, followed closely by weavers. When founded, the membership of the Statistical Society of London overlapped almost completely with the statistical section of the British Association for the Advancement of Science. In 1837 a volume of ''Transactions of the Statistical Society of London'' were wri ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Lasso Regression

In statistics and machine learning, lasso (least absolute shrinkage and selection operator; also Lasso or LASSO) is a regression analysis method that performs both variable selection and regularization in order to enhance the prediction accuracy and interpretability of the resulting statistical model. It was originally introduced in geophysics, and later by Robert Tibshirani, who coined the term. Lasso was originally formulated for linear regression models. This simple case reveals a substantial amount about the estimator. These include its relationship to ridge regression and best subset selection and the connections between lasso coefficient estimates and so-called soft thresholding. It also reveals that (like standard linear regression) the coefficient estimates do not need to be unique if covariates are collinear. Though originally defined for linear regression, lasso regularization is easily extended to other statistical models including generalized linear models, generaliz ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Ridge Regression

Ridge regression is a method of estimating the coefficients of multiple-regression models in scenarios where the independent variables are highly correlated. It has been used in many fields including econometrics, chemistry, and engineering. Also known as Tikhonov regularization, named for Andrey Tikhonov, it is a method of regularization of ill-posed problems. It is particularly useful to mitigate the problem of multicollinearity in linear regression, which commonly occurs in models with large numbers of parameters. In general, the method provides improved efficiency in parameter estimation problems in exchange for a tolerable amount of bias (see bias–variance tradeoff). The theory was first introduced by Hoerl and Kennard in 1970 in their ''Technometrics'' papers “RIDGE regressions: biased estimation of nonorthogonal problems” and “RIDGE regressions: applications in nonorthogonal problems”. This was the result of ten years of research into the field of ridge analysis. ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

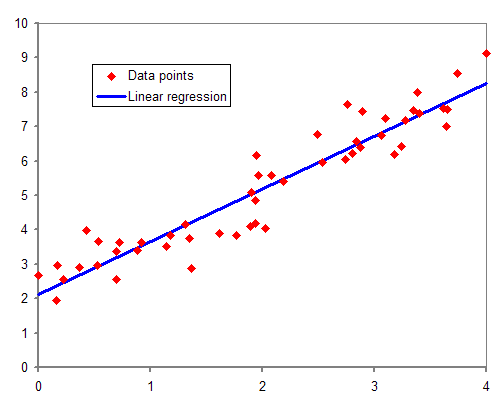

Regression Analysis

In statistical modeling, regression analysis is a set of statistical processes for estimating the relationships between a dependent variable (often called the 'outcome' or 'response' variable, or a 'label' in machine learning parlance) and one or more independent variables (often called 'predictors', 'covariates', 'explanatory variables' or 'features'). The most common form of regression analysis is linear regression, in which one finds the line (or a more complex linear combination) that most closely fits the data according to a specific mathematical criterion. For example, the method of ordinary least squares computes the unique line (or hyperplane) that minimizes the sum of squared differences between the true data and that line (or hyperplane). For specific mathematical reasons (see linear regression), this allows the researcher to estimate the conditional expectation (or population average value) of the dependent variable when the independent variables take on a given ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |