
Boosting (Machine Learning)

In machine learning (ML), boosting is an ensemble metaheuristic primarily for reducing bias (as opposed to variance). It can also improve the stability and accuracy of ML classification and regression algorithms. It is therefore widely used in supervised learning to convert weak learners into strong learners. The concept of boosting is based on the question posed by Kearns and Valiant (1988, 1989): "Can a set of weak learners create a single strong learner?" (Michael Kearns (1988), "Thoughts on Hypothesis Boosting", unpublished manuscript, Machine Learning class project, December 1988.) A weak learner is defined as a classifier that is only slightly correlated with the true classification. A strong learner is a classifier that is arbitrarily well-correlated with the true classification. ...
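
The question can be made concrete with AdaBoost (Freund and Schapire), one well-known boosting algorithm; the text above does not name a specific algorithm, so the following is only a minimal sketch under stated assumptions: one-dimensional inputs, labels in {-1, 1}, and threshold "decision stumps" as the weak learners. The function names (`stump_predict`, `train_stump`, `adaboost`, `predict`) are hypothetical.

```python
import math


def stump_predict(x, threshold, polarity):
    # A decision stump: predicts `polarity` when x >= threshold, else -polarity.
    return polarity if x >= threshold else -polarity


def train_stump(xs, ys, weights):
    # Weak learner: pick the stump with the lowest weighted training error.
    best = None
    for threshold in sorted(set(xs)):
        for polarity in (1, -1):
            err = sum(w for x, y, w in zip(xs, ys, weights)
                      if stump_predict(x, threshold, polarity) != y)
            if best is None or err < best[0]:
                best = (err, threshold, polarity)
    return best


def adaboost(xs, ys, rounds=10):
    n = len(xs)
    weights = [1.0 / n] * n          # start with uniform sample weights
    ensemble = []                    # list of (alpha, threshold, polarity)
    for _ in range(rounds):
        err, threshold, polarity = train_stump(xs, ys, weights)
        if err >= 0.5:
            break                    # weak learner no better than chance
        err = max(err, 1e-12)        # guard against log(0) for a perfect stump
        alpha = 0.5 * math.log((1 - err) / err)
        ensemble.append((alpha, threshold, polarity))
        # Up-weight misclassified samples, down-weight correct ones.
        weights = [w * math.exp(-alpha * y * stump_predict(x, threshold, polarity))
                   for x, y, w in zip(xs, ys, weights)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble


def predict(ensemble, x):
    # Strong learner: sign of the alpha-weighted vote of the weak learners.
    score = sum(a * stump_predict(x, t, p) for a, t, p in ensemble)
    return 1 if score >= 0 else -1


xs = [1, 2, 3, 4, 5, 6]
ys = [1, 1, -1, -1, 1, 1]               # no single stump fits this pattern
one = adaboost(xs, ys, rounds=1)
three = adaboost(xs, ys, rounds=3)
print([predict(one, x) for x in xs])    # → [1, 1, 1, 1, 1, 1] (wrong on x = 3 and 4)
print([predict(three, x) for x in xs])  # → [1, 1, -1, -1, 1, 1]
```

A single stump misclassifies two points, while the weighted vote of three stumps fits all six: each round re-weights the data so the next weak learner concentrates on the points the ensemble still gets wrong.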

Machine Learning

Machine learning (ML) is a field of study in artificial intelligence concerned with the development and study of statistical algorithms that can learn from data and generalise to unseen data, and thus perform tasks without explicit instructions. Within deep learning, a subdiscipline of machine learning, advances have allowed neural networks, a class of statistical algorithms, to surpass many previous machine learning approaches in performance. ML finds application in many fields, including natural language processing, computer vision, speech recognition, email filtering, agriculture, and medicine. The application of ML to business problems is known as predictive analytics. Statistics and mathematical optimisation (mathematical programming) methods comprise the foundations of machine learning. Data mining is a related field of study, focusing on exploratory data analysis ...

Sara Solla

Sara A. Solla is an Argentine-American physicist and neuroscientist whose research applies ideas from statistical mechanics to problems involving neural networks, machine learning, and neuroscience. She is a professor of physics and of physiology at Northwestern University.

Solla is originally from Buenos Aires and earned a licenciatura in physics in 1974 from the University of Buenos Aires. She completed a Ph.D. in physics in 1982 at the University of Washington. She then became a postdoctoral researcher at Cornell University and at the Thomas J. Watson Research Center of IBM Research. Influenced to work on neural networks by a talk from John Hopfield at Cornell, she became a researcher in the neural networks group at Bell Labs. She took her present position at Northwestern University in 1997.

Solla is a member of the American Academy of Arts and Sciences ...

Loss Functions For Classification

In machine learning and mathematical optimization, loss functions for classification are computationally feasible loss functions representing the price paid for inaccuracy of predictions in classification problems (problems of identifying which category a particular observation belongs to). Given $\mathcal{X}$ as the space of all possible inputs (usually $\mathcal{X} \subset \mathbb{R}^d$), and $\mathcal{Y} = \{-1, 1\}$ as the set of labels (possible outputs), a typical goal of classification algorithms is to find a function $f: \mathcal{X} \to \mathcal{Y}$ which best predicts a label $y$ for a given input $\vec{x}$. However, because of incomplete information, noise in the measurement, or probabilistic components in the underlying process, it is possible for the same $\vec{x}$ to generate different $y$. As a result, the goal of the learning problem is to minimize the expected loss (also known as the risk), defined as

$$I[f] = \int_{\mathcal{X} \times \mathcal{Y}} V(f(\vec{x}), y)\, p(\vec{x}, y)\, d\vec{x}\, dy$$

where $V(f(\vec{x}), y)$ is a given loss function ...
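
Because $p(\vec{x}, y)$ is unknown in practice, the expected loss is usually estimated by averaging $V$ over a finite sample (the empirical risk). A minimal sketch, assuming labels in {-1, 1} and a real-valued score function whose sign is the predicted label (a common relaxation of $f: \mathcal{X} \to \mathcal{Y}$); the three losses shown (0-1, hinge, logistic) are standard examples chosen for illustration, and the helper names are hypothetical.

```python
import math


def zero_one_loss(score, y):
    # 1 if the sign of the score disagrees with the label (a zero score counts as an error).
    return 0.0 if y * score > 0 else 1.0


def hinge_loss(score, y):
    return max(0.0, 1.0 - y * score)


def logistic_loss(score, y):
    # log(1 + exp(-y * score)), written to avoid overflow for large negative margins.
    margin = y * score
    if margin > 0:
        return math.log1p(math.exp(-margin))
    return -margin + math.log1p(math.exp(margin))


def empirical_risk(f, loss, samples):
    # Sample average of V(f(x), y): an estimate of the expected loss I[f].
    return sum(loss(f(x), y) for x, y in samples) / len(samples)


def f(x):
    # Hypothetical score function: the input itself.
    return x


samples = [(2.0, 1), (-1.0, -1), (0.5, -1)]       # toy (x, y) pairs
print(empirical_risk(f, zero_one_loss, samples))  # one mistake out of three
print(empirical_risk(f, hinge_loss, samples))     # → 0.5
```

The 0-1 loss counts mistakes directly, while the hinge and logistic losses are convex surrogates that also penalize correct predictions made with a small margin.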

Convex Function

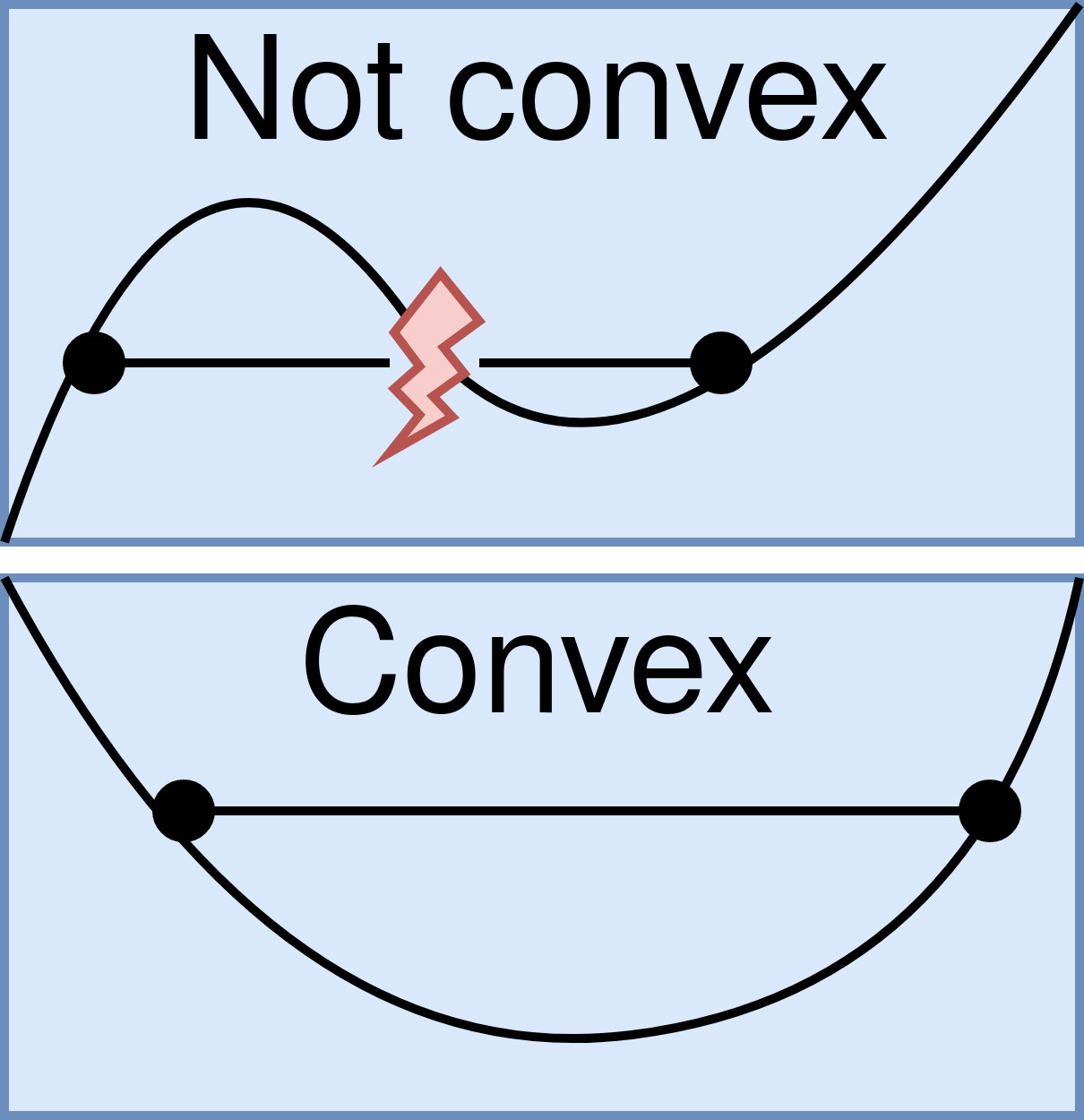

In mathematics, a real-valued function is called convex if the line segment between any two distinct points on the graph of the function lies above or on the graph between the two points. Equivalently, a function is convex if its epigraph (the set of points on or above the graph of the function) is a convex set. In simple terms, the graph of a convex function is shaped like a cup $\cup$ (or is a straight line, as for a linear function), while the graph of a concave function is shaped like a cap $\cap$. A twice-differentiable function of a single variable is convex if and only if its second derivative is nonnegative on its entire domain. Well-known examples of convex functions of a single variable include a linear function $f(x) = cx$ (where $c$ is a real number), a quadratic function $cx^2$ ($c$ a nonnegative real number) and an exponential function $ce^x$ ($c$ a nonnegative real number). Convex functions ...
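
The chord definition can be probed numerically. The following is a minimal sketch (the helper `looks_convex` and its parameters are hypothetical): it samples pairs of points and values of $t \in [0, 1]$ and looks for a violation of $f(ta + (1-t)b) \le t f(a) + (1-t) f(b)$. A sampled check like this can refute convexity but can never prove it.

```python
import itertools
import math


def looks_convex(f, points, steps=11, tol=1e-9):
    """Return False if some sampled chord lies below the graph of f.

    Checks f(t*a + (1-t)*b) <= t*f(a) + (1-t)*f(b) for every pair (a, b)
    of sample points and `steps` evenly spaced t in [0, 1]. Passing this
    test is only evidence of convexity, not a proof.
    """
    ts = [i / (steps - 1) for i in range(steps)]
    for a, b in itertools.combinations(points, 2):
        for t in ts:
            lhs = f(t * a + (1 - t) * b)
            rhs = t * f(a) + (1 - t) * f(b)
            if lhs > rhs + tol:  # tolerance absorbs floating-point rounding
                return False
    return True


grid = [x / 2 for x in range(-6, 7)]   # -3.0, -2.5, ..., 3.0
c = 2.0
print(looks_convex(lambda x: c * x, grid))            # linear: True
print(looks_convex(lambda x: c * x * x, grid))        # quadratic, c >= 0: True
print(looks_convex(lambda x: c * math.exp(x), grid))  # exponential, c >= 0: True
print(looks_convex(lambda x: -x * x, grid))           # concave: False
```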

Function Space

In mathematics, a function space is a set of functions between two fixed sets. Often, the domain and/or codomain will have additional structure which is inherited by the function space. For example, the set of functions from any set into a vector space has a natural vector space structure given by pointwise addition and scalar multiplication. In other scenarios, the function space might inherit a topological or metric structure, hence the name function "space".

In linear algebra, let $F$ be a field and let $X$ be any set. The functions $X \to F$ can be given the structure of a vector space over $F$ where the operations are defined pointwise, that is, for any $f, g : X \to F$, any $x$ in $X$, and any $c$ in $F$, define

$$\begin{aligned} (f+g)(x) &= f(x)+g(x) \\ (c\cdot f)(x) &= c\cdot f(x) \end{aligned}$$

When the domain $X$ has additional structure, one might consider instead the subset (or subspace) of all such functions which respect that structure. For example, if $V$ and also $X$ itself are vector spaces over $F$, the ...
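
The pointwise operations can be mirrored directly in code by treating functions as values. A minimal sketch, assuming the field is the real numbers (approximated by Python floats) and representing an element of the function space as a Python callable; `add`, `scale`, `square`, and `triple` are hypothetical helper names.

```python
from typing import Callable

RealFunction = Callable[[float], float]


def add(f: RealFunction, g: RealFunction) -> RealFunction:
    # (f + g)(x) = f(x) + g(x)
    return lambda x: f(x) + g(x)


def scale(c: float, f: RealFunction) -> RealFunction:
    # (c * f)(x) = c * f(x)
    return lambda x: c * f(x)


def square(x: float) -> float:
    return x * x


def triple(x: float) -> float:
    return 3 * x


h = add(square, triple)                  # h(x) = x^2 + 3x
print(h(2.0))                            # → 10.0
print(scale(2.0, square)(3.0))           # → 18.0
zero = add(square, scale(-1.0, square))  # f + (-1)f is the zero function
print(zero(5.0))                         # → 0.0
```

The results of `add` and `scale` are again functions of the same type, which is exactly the closure property that makes the set of functions a vector space.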

Gradient Descent

Gradient descent is a method for unconstrained mathematical optimization. It is a first-order iterative algorithm for minimizing a differentiable multivariate function. The idea is to take repeated steps in the opposite direction of the gradient (or approximate gradient) of the function at the current point, because this is the direction of steepest descent. Conversely, stepping in the direction of the gradient will lead to a trajectory that maximizes that function; the procedure is then known as "gradient ascent". It is particularly useful in machine learning for minimizing the cost or loss function. Gradient descent should not be confused with local search algorithms, although both are iterative methods for optimization. Gradient descent is generally attributed to Augustin-Louis Cauchy, who first suggested it in 1847. Jacques Hadamard independently proposed a similar method in 1907. Its convergence properties for non-linear optimization problems were first studied by ...
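
A minimal sketch of the update $x_{k+1} = x_k - \eta \nabla F(x_k)$, assuming a fixed learning rate $\eta$ and a fixed number of steps (both illustrative choices; practical implementations often use line searches, adaptive step sizes, or a convergence test). The objective $F(x, y) = (x - 3)^2 + 2(y + 1)^2$ and the names `gradient_descent` and `grad_F` are hypothetical; the minimum is at $(3, -1)$.

```python
def gradient_descent(grad, x0, learning_rate=0.1, steps=100):
    """Repeatedly step against the gradient, starting from x0."""
    x = list(x0)
    for _ in range(steps):
        g = grad(x)
        x = [xi - learning_rate * gi for xi, gi in zip(x, g)]
    return x


def grad_F(p):
    # Gradient of F(x, y) = (x - 3)^2 + 2 * (y + 1)^2.
    x, y = p
    return [2 * (x - 3), 4 * (y + 1)]


x_min = gradient_descent(grad_F, [0.0, 0.0])
print(x_min)  # very close to [3.0, -1.0]
```

For this objective each step multiplies the distance to the minimum by 0.8 in $x$ and 0.6 in $y$; a learning rate above 0.5 would make the $y$ iterates diverge, which is why the step size has to be chosen with the curvature of the function in mind.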

LogitBoost

In machine learning and computational learning theory, LogitBoost is a boosting algorithm formulated by Jerome Friedman, Trevor Hastie, and Robert Tibshirani. The original paper casts the AdaBoost algorithm into a statistical framework. Specifically, if one considers AdaBoost as a generalized additive model and then applies the cost function of logistic regression, one can derive the LogitBoost algorithm.

Minimizing the LogitBoost cost function can be seen as a convex optimization problem. Specifically, given that we seek an additive model of the form

$$f = \sum_t \alpha_t h_t$$

the LogitBoost algorithm minimizes the logistic loss:

$$\sum_i \log\left(1 + e^{-y_i f(x_i)}\right)$$

See also
* Gradient boosting
* Logistic model tree
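
A minimal sketch of the LogitBoost objective only, the logistic loss $\sum_i \log(1 + e^{-y_i f(x_i)})$ of an additive model $f = \sum_t \alpha_t h_t$, assuming labels $y_i \in \{-1, 1\}$ and weak learners given as Python callables; the helper names are hypothetical. The full LogitBoost procedure, which fits each new weak learner by a weighted least-squares (Newton) step, is not shown.

```python
import math


def additive_model(alphas, learners):
    # f(x) = sum_t alpha_t * h_t(x)
    return lambda x: sum(a * h(x) for a, h in zip(alphas, learners))


def logistic_loss(f, samples):
    # sum_i log(1 + exp(-y_i * f(x_i))); may overflow for huge negative margins.
    return sum(math.log1p(math.exp(-y * f(x))) for x, y in samples)


def sign_stump(x):
    # Hypothetical weak learner: +1 for positive inputs, -1 otherwise.
    return 1 if x > 0 else -1


samples = [(-2.0, -1), (-1.0, -1), (1.0, 1), (2.0, 1)]
for alpha in (0.0, 0.5, 1.0, 2.0):
    f = additive_model([alpha], [sign_stump])
    print(alpha, logistic_loss(f, samples))  # loss shrinks as alpha grows
```

Here the stump classifies every sample correctly, so increasing its weight keeps lowering the loss. If the stump were wrong on some samples, the loss as a function of $\alpha$ would still be convex but would attain a finite minimum.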

LPBoost

Linear Programming Boosting (LPBoost) is a supervised classifier from the boosting family of classifiers. LPBoost maximizes a margin between training samples of different classes, and thus also belongs to the class of margin classifier algorithms. Consider a classification function $f: \mathcal{X} \to \{-1, 1\}$, which classifies samples from a space $\mathcal{X}$ into one of two classes, labelled 1 and -1, respectively. LPBoost is an algorithm for learning such a classification function, given a set of training examples with known class labels. LPBoost is a machine learning technique especially suited for joint classification and feature selection in structured domains.

As in all boosting classifiers, the final classification function is of the form

$$f(\boldsymbol{x}) = \sum_{j=1}^{J} \alpha_j h_j(\boldsymbol{x}),$$

where $\alpha_j$ are non-negative weightings for "weak" classifiers $h_j: \mathcal{X} \to \{-1, 1\}$. Each individual weak classifier $h_j$ may be just a little bit better than random, but ...
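
A minimal sketch of evaluating the final classifier $f(\boldsymbol{x}) = \sum_j \alpha_j h_j(\boldsymbol{x})$ and its margin, assuming weak classifiers are Python callables returning ±1 and that the weights are normalized to sum to one when computing the minimum margin $\min_i y_i f(\boldsymbol{x}_i)$, a quantity LPBoost's linear program seeks to maximize (the actual formulation also allows slack for misclassified samples). The linear program itself, which LPBoost solves by column generation, is not shown, and the weights below are picked by hand rather than learned; helper names are hypothetical.

```python
def ensemble_score(alphas, learners, x):
    # f(x) = sum_j alpha_j * h_j(x)
    return sum(a * h(x) for a, h in zip(alphas, learners))


def classify(alphas, learners, x):
    return 1 if ensemble_score(alphas, learners, x) >= 0 else -1


def min_margin(alphas, learners, samples):
    # Smallest normalized margin y * f(x) / sum(alphas) over the samples.
    total = sum(alphas)
    return min(y * ensemble_score(alphas, learners, x) / total for x, y in samples)


# Three hypothetical weak classifiers on 1-D inputs.
learners = [
    lambda x: 1,                      # always predicts +1
    lambda x: 1 if x < 3 else -1,     # +1 for small x
    lambda x: 1 if x >= 5 else -1,    # +1 for large x
]
alphas = [1.0, 1.0, 1.0]              # hand-picked, not learned
samples = list(zip([1, 2, 3, 4, 5, 6], [1, 1, -1, -1, 1, 1]))

print([classify(alphas, learners, x) for x, _ in samples])  # → [1, 1, -1, -1, 1, 1]
print(min_margin(alphas, learners, samples))               # → 0.3333333333333333
```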

Hypothesis

A hypothesis (plural: hypotheses) is a proposed explanation for a phenomenon. A scientific hypothesis must be based on observations and make a testable and reproducible prediction about reality, in a process beginning with an educated guess or thought. If a hypothesis is repeatedly independently demonstrated by experiment to be true, it becomes a scientific theory. In colloquial usage, the words "hypothesis" and "theory" are often used interchangeably, but this is incorrect in the context of science. A working hypothesis is a provisionally accepted hypothesis used for the purpose of pursuing further progress in research. Working hypotheses are frequently discarded, and are often proposed with the knowledge (and warning) that they are incomplete and thus false, with the intent of moving research in at least somewhat the right direction, especially when scientists are stuck on an issue and brainstorming ideas. A different meaning of the term "hypothesis" is used in formal ...

Training Data

In machine learning, a common task is the study and construction of algorithms that can learn from and make predictions on data. Such algorithms make data-driven predictions or decisions by building a mathematical model from input data. The input data used to build the model are usually divided into multiple data sets. In particular, three data sets are commonly used in different stages of the creation of the model: training, validation, and test sets. The model is initially fit on a training data set, which is a set of examples used to fit the parameters (e.g. weights of connections between neurons in artificial neural networks) of the model. The model (e.g. a naive Bayes classifier) is trained on the training data set using a supervised learning method, for example using optimization methods such as gradient descent or stochastic gradient descent. In practice, the training data set often consists of pairs of an input vector (or scalar) and the corresponding ...
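
A minimal sketch of dividing (input, target) pairs into training, validation, and test sets, assuming a random shuffle with a fixed seed and 70/15/15 proportions (common but arbitrary choices; the function name `train_val_test_split` is hypothetical). Libraries such as scikit-learn offer comparable utilities, for example `sklearn.model_selection.train_test_split`.

```python
import random


def train_val_test_split(pairs, train_frac=0.7, val_frac=0.15, seed=0):
    """Shuffle (input, target) pairs and cut them into three disjoint sets."""
    items = list(pairs)
    random.Random(seed).shuffle(items)       # seeded for reproducibility
    n_train = round(len(items) * train_frac)
    n_val = round(len(items) * val_frac)
    train = items[:n_train]
    val = items[n_train:n_train + n_val]
    test = items[n_train + n_val:]           # whatever remains
    return train, val, test


pairs = [(x, 2 * x + 1) for x in range(100)]  # toy inputs and targets
train, val, test = train_val_test_split(pairs)
print(len(train), len(val), len(test))        # → 70 15 15
```

The model's parameters are fit on `train`, `val` is used to tune choices such as hyperparameters, and `test` is held back for a final estimate of performance.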

Probably Approximately Correct Learning

In computational learning theory, probably approximately correct (PAC) learning is a framework for mathematical analysis of machine learning. It was proposed in 1984 by Leslie Valiant (L. Valiant, "A theory of the learnable", Communications of the ACM, 27, 1984). In this framework, the learner receives samples and must select a generalization function (called the "hypothesis") from a certain class of possible functions. The goal is that, with high probability (the "probably" part), the selected function will have low generalization error (the "approximately correct" part). The learner must be able to learn the concept given any arbitrary approximation ratio, probability of success, or distribution of the samples. The model was later extended to treat noise (misclassified samples). An important innovation of the PAC framework is the introduction of computational complexity theory concepts to machine learning. In particular, the learner is expected to find efficient functions ...
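
One standard textbook consequence of this framework, not stated in the text above, is a sample-size bound for a finite hypothesis class $H$ in the realizable case: a learner that outputs any hypothesis consistent with $m \ge \frac{1}{\epsilon}\left(\ln |H| + \ln \frac{1}{\delta}\right)$ samples has error at most $\epsilon$ with probability at least $1 - \delta$. A minimal sketch of evaluating that bound (the function name `pac_sample_size` is hypothetical):

```python
import math


def pac_sample_size(hypothesis_count, epsilon, delta):
    """Samples sufficient for a consistent learner over a finite class.

    Uses m >= (ln|H| + ln(1/delta)) / epsilon (realizable case).
    """
    if hypothesis_count < 1:
        raise ValueError("hypothesis_count must be at least 1")
    if not (0 < epsilon < 1 and 0 < delta < 1):
        raise ValueError("epsilon and delta must lie in (0, 1)")
    return math.ceil((math.log(hypothesis_count) + math.log(1 / delta)) / epsilon)


print(pac_sample_size(1000, epsilon=0.1, delta=0.05))   # → 100
print(pac_sample_size(1000, epsilon=0.01, delta=0.05))  # → 991
```

The bound grows only logarithmically in $|H|$ and $1/\delta$ but linearly in $1/\epsilon$, so demanding a tenfold smaller error costs roughly ten times as many samples.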