
LogitBoost

In machine learning and computational learning theory, LogitBoost is a boosting algorithm formulated by Jerome Friedman, Trevor Hastie, and Robert Tibshirani. The original paper casts the AdaBoost algorithm into a statistical framework. Specifically, if one considers AdaBoost as a generalized additive model and then applies the cost function of logistic regression, one can derive the LogitBoost algorithm.

Minimizing the LogitBoost cost function: LogitBoost can be seen as a convex optimization. Specifically, given that we seek an additive model of the form

:f = \sum_t \alpha_t h_t

the LogitBoost algorithm minimizes the logistic loss

:\sum_i \log\left( 1 + e^{-y_i f(x_i)} \right)

where y_i \in \{-1, +1\} is the label of training example x_i.

See also: Gradient boosting (a machine learning technique used in regression and classification tasks, among others, which gives a prediction model in the form of an ensemble of weak prediction models, typically decision trees) and Logistic model tree.
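The logistic loss of an additive model can be evaluated directly, which may help make the objective concrete. The following is a minimal sketch, not the LogitBoost fitting procedure itself: the weak learners `stumps`, the weights `alphas`, and the toy data are hypothetical, and labels are assumed to be coded as -1 and +1.

```python
import math

def additive_model(x, alphas, weak_learners):
    """Evaluate f(x) = sum_t alpha_t * h_t(x)."""
    return sum(a * h(x) for a, h in zip(alphas, weak_learners))

def logistic_loss(xs, ys, alphas, weak_learners):
    """Sum over i of log(1 + exp(-y_i * f(x_i))), with y_i in {-1, +1}."""
    total = 0.0
    for x, y in zip(xs, ys):
        margin = y * additive_model(x, alphas, weak_learners)
        # Numerically stable form of log(1 + exp(-margin)).
        total += max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))
    return total

# Hypothetical weak learners: threshold stumps on a scalar input.
stumps = [
    lambda x: 1.0 if x > 0.0 else -1.0,
    lambda x: 1.0 if x > 1.0 else -1.0,
]
alphas = [0.8, 0.3]   # hypothetical weights alpha_t
xs = [-1.0, 0.5, 2.0]  # toy inputs
ys = [-1, 1, 1]        # toy labels in {-1, +1}

loss = logistic_loss(xs, ys, alphas, stumps)
baseline = logistic_loss(xs, ys, [0.0, 0.0], stumps)  # f = 0 everywhere
print(loss, baseline)
```

With all weights at zero the model outputs f = 0 and each example contributes log 2, so any useful choice of weights should bring the loss below that baseline.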

AdaBoost

AdaBoost, short for Adaptive Boosting, is a statistical classification meta-algorithm formulated by Yoav Freund and Robert Schapire in 1995, who won the 2003 Gödel Prize for their work. It can be used in conjunction with many other types of learning algorithms to improve performance. The output of the other learning algorithms ("weak learners") is combined into a weighted sum that represents the final output of the boosted classifier. Usually, AdaBoost is presented for binary classification, although it can be generalized to multiple classes or bounded intervals on the real line. AdaBoost is adaptive in the sense that subsequent weak learners are tweaked in favor of those instances misclassified by previous classifiers. In some problems it can be less susceptible to the overfitting problem than other learning algorithms. The individual learners can be weak, but as long as the performance of each one is slightly better than random guessing, the final model can be proven t ...
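The weighted-sum construction and the reweighting of misclassified instances can be sketched in a few lines. This is a minimal illustration of discrete AdaBoost under stated assumptions: the weak learners are one-dimensional threshold stumps, labels are coded as -1 and +1, and the function names (`fit_adaboost`, `stump_predict`, `predict`) and the toy data are hypothetical rather than taken from any library.

```python
import math

def stump_predict(x, threshold, polarity):
    """Weak learner: predict `polarity` if x > threshold, else -polarity."""
    return polarity if x > threshold else -polarity

def fit_adaboost(xs, ys, rounds):
    """Return a list of (alpha, threshold, polarity) triples."""
    n = len(xs)
    weights = [1.0 / n] * n
    candidates = [min(xs) - 1.0] + sorted(set(xs))
    ensemble = []
    for _ in range(rounds):
        # Pick the stump with the lowest weighted error.
        best = None
        for thr in candidates:
            for pol in (1, -1):
                err = sum(w for w, x, y in zip(weights, xs, ys)
                          if stump_predict(x, thr, pol) != y)
                if best is None or err < best[0]:
                    best = (err, thr, pol)
        err, thr, pol = best
        err = min(max(err, 1e-10), 1.0 - 1e-10)  # guard against log(0)
        alpha = 0.5 * math.log((1.0 - err) / err)
        ensemble.append((alpha, thr, pol))
        # Increase weights of misclassified points, decrease the rest.
        weights = [w * math.exp(-alpha * y * stump_predict(x, thr, pol))
                   for w, x, y in zip(weights, xs, ys)]
        total = sum(weights)
        weights = [w / total for w in weights]
    return ensemble

def predict(ensemble, x):
    """Sign of the weighted sum of weak-learner outputs."""
    score = sum(a * stump_predict(x, t, p) for a, t, p in ensemble)
    return 1 if score >= 0 else -1

# Toy data that no single stump can separate.
xs = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0]
ys = [1, 1, -1, -1, 1, 1]
one_round = fit_adaboost(xs, ys, rounds=1)
three_rounds = fit_adaboost(xs, ys, rounds=3)
print([predict(three_rounds, x) for x in xs])
```

On this toy set a single stump necessarily misclassifies some points, while the weighted combination of three stumps fits all six.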

Boosting (meta-algorithm)

In machine learning, boosting is an ensemble meta-algorithm used primarily for reducing bias, and also variance, in supervised learning, and a family of machine learning algorithms that convert weak learners to strong ones. Boosting is based on the question posed by Kearns and Valiant (1988, 1989): "Can a set of weak learners create a single strong learner?" (Michael Kearns, 1988, "Thoughts on Hypothesis Boosting", unpublished manuscript, Machine Learning class project, December 1988). A weak learner is defined to be a classifier that is only slightly correlated with the true classification (it can label examples better than random guessing). In contrast, a strong learner is a classifier that is arbitrarily well-correlated with the true classification. Robert Schapire's affirmative answer in a 1990 paper to the question of Kearns and Valiant has had significant ramifications in machine learning and statistics, most notably leading to the development of boosting. When first introduce ...

Logistic Model Tree

In computer science, a logistic model tree (LMT) is a classification model with an associated supervised training algorithm that combines logistic regression (LR) and decision tree learning. Logistic model trees are based on the earlier idea of a model tree: a decision tree that has linear regression models at its leaves to provide a piecewise linear regression model (where ordinary decision trees with constants at their leaves would produce a piecewise constant model). In the logistic variant, the LogitBoost algorithm is used to produce an LR model at every node in the tree; the node is then split using the C4.5 criterion. Each LogitBoost invocation is warm-started from its results in the parent node. Finally, the tree is pruned. The basic LMT induction algorithm uses cross-validation to find a number of LogitBoost iterations that does not overfit the training data. A faster version has been proposed that uses the Akaike information criterion to control LogitBoost stopping. R ...

Loss Functions For Classification

In machine learning and mathematical optimization, loss functions for classification are computationally feasible loss functions representing the price paid for inaccuracy of predictions in classification problems (problems of identifying which category a particular observation belongs to). Given \mathcal{X} as the space of all possible inputs (usually \mathcal{X} \subset \mathbb{R}^d), and \mathcal{Y} = \{-1, 1\} as the set of labels (possible outputs), a typical goal of classification algorithms is to find a function f: \mathcal{X} \to \mathcal{Y} which best predicts a label y for a given input \vec{x}. However, because of incomplete information, noise in the measurement, or probabilistic components in the underlying process, it is possible for the same \vec{x} to generate different y. As a result, the goal of the learning problem is to minimize expected loss (also known as the risk), defined as

:I[f] = \int_{\mathcal{X} \times \mathcal{Y}} V(f(\vec{x}), y) \, p(\vec{x}, y) \, d\vec{x} \, dy

where V(f(\vec{x}), y) is a given loss function, an ...
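Because the joint density p is rarely known, the expected loss is commonly approximated by an average over a finite sample. The sketch below illustrates that approximation for three familiar choices of V; it is an assumption-laden example rather than part of the definition above. The scoring function `f`, the sample values, and the helper names are hypothetical, and `f` is assumed to return a real-valued score whose sign gives the predicted label.

```python
import math

def zero_one_loss(score, y):
    """1 if the sign of the score disagrees with the label y in {-1, +1}."""
    return 0.0 if score * y > 0 else 1.0

def hinge_loss(score, y):
    """max(0, 1 - y * score)."""
    return max(0.0, 1.0 - y * score)

def logistic_loss(score, y):
    """log(1 + exp(-y * score))."""
    return math.log1p(math.exp(-y * score))

def empirical_risk(f, samples, loss):
    """Average loss over (x, y) pairs, a sample estimate of the expected loss."""
    return sum(loss(f(x), y) for x, y in samples) / len(samples)

# Hypothetical scoring function and toy labeled sample.
f = lambda x: 2.0 * x - 1.0
samples = [(0.0, -1), (1.0, 1), (0.4, 1), (0.9, -1)]

risk_01 = empirical_risk(f, samples, zero_one_loss)
risk_hinge = empirical_risk(f, samples, hinge_loss)
risk_logistic = empirical_risk(f, samples, logistic_loss)
print(risk_01, risk_hinge, risk_logistic)
```

The hinge and logistic losses are convex in the score, which is one reason they are used as computationally feasible stand-ins for the zero-one loss.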

Machine Learning

Machine learning (ML) is a field of inquiry devoted to understanding and building methods that "learn", that is, methods that leverage data to improve performance on some set of tasks. It is seen as a part of artificial intelligence. Machine learning algorithms build a model based on sample data, known as training data, in order to make predictions or decisions without being explicitly programmed to do so. Machine learning algorithms are used in a wide variety of applications, such as in medicine, email filtering, speech recognition, agriculture, and computer vision, where it is difficult or unfeasible to develop conventional algorithms to perform the needed tasks (Hu, J.; Niu, H.; Carrasco, J.; Lennox, B.; Arvin, F., "Voronoi-Based Multi-Robot Autonomous Exploration in Unknown Environments via Deep Reinforcement Learning", IEEE Transactions on Vehicular Technology, 2020). A subset of machine learning is closely related to computational statistics, which focuses on making pred ...

Computational Learning Theory

In computer science, computational learning theory (or just learning theory) is a subfield of artificial intelligence devoted to studying the design and analysis of machine learning algorithms.

Overview: Theoretical results in machine learning mainly deal with a type of inductive learning called supervised learning. In supervised learning, an algorithm is given samples that are labeled in some useful way. For example, the samples might be descriptions of mushrooms, and the labels could be whether or not the mushrooms are edible. The algorithm takes these previously labeled samples and uses them to induce a classifier. This classifier is a function that assigns labels to samples, including samples that have not been seen previously by the algorithm. The goal of the supervised learning algorithm is to optimize some measure of performance such as minimizing the number of mistakes made on new samples. In addition to performance bounds, computational learning theory studies the t ...

Jerome H

Jerome (Latin: Eusebius Sophronius Hieronymus; Greek: Εὐσέβιος Σωφρόνιος Ἱερώνυμος; died 30 September 420), also known as Jerome of Stridon, was a Christian priest, confessor, theologian, and historian; he is commonly known as Saint Jerome. Jerome was born at Stridon, a village near Emona on the border of Dalmatia and Pannonia. He is best known for his translation of the Bible into Latin (the translation that became known as the Vulgate) and his commentaries on the whole Bible. Jerome attempted to create a translation of the Old Testament based on a Hebrew version, rather than the Septuagint, on which earlier Latin Bible translations had been based. His list of writings is extensive, and besides his biblical works, he wrote polemical and historical essays, always from a theologian's perspective. Jerome was known for his teachings on Christian moral life, especially to those living in cosmopolitan centers such as Rome. In many cases, he ...

Trevor Hastie

Trevor John Hastie (born 27 June 1953) is an American statistician and computer scientist. He is currently serving as the John A. Overdeck Professor of Mathematical Sciences and Professor of Statistics at Stanford University. Hastie is known for his contributions to applied statistics, especially in the fields of machine learning, data mining, and bioinformatics. He has authored several popular books in statistical learning, including The Elements of Statistical Learning: Data Mining, Inference, and Prediction. Hastie has been listed as an ISI Highly Cited Author in Mathematics by the ISI Web of Knowledge.

Education and career: Hastie was born on 27 June 1953 in South Africa. He received his B.S. in statistics from Rhodes University in 1976 and a master's degree from the University of Cape Town in 1979. Hastie joined the doctoral program at Stanford University in 1980 and received his Ph.D. in 1984 under the supervision of Werner Stuetzle. His dissertation was "Principal Curves ...

Robert Tibshirani

Robert Tibshirani (born July 10, 1956) is a professor in the Departments of Statistics and Biomedical Data Science at Stanford University. He was a professor at the University of Toronto from 1985 to 1998. In his work, he develops statistical tools for the analysis of complex datasets, most recently in genomics and proteomics. His most well-known contributions are the Lasso method, which proposed the use of L1 penalization in regression and related problems, and Significance Analysis of Microarrays.

Education and early life: Tibshirani was born on 10 July 1956 in Niagara Falls, Ontario, Canada. He received his B. Math. in statistics and computer science from the University of Waterloo in 1979 and a Master's degree in Statistics from the University of Toronto in 1980. Tibshirani joined the doctoral program at Stanford University in 1981 and received his Ph.D. in 1984 under the supervision of Bradley Efron. His dissertation was entitled "Local likelihood estimation". Honors and ...

Generalized Additive Model

In statistics, a generalized additive model (GAM) is a generalized linear model in which the linear response variable depends linearly on unknown smooth functions of some predictor variables, and interest focuses on inference about these smooth functions. GAMs were originally developed by Trevor Hastie and Robert Tibshirani to blend properties of generalized linear models with additive models. They can be interpreted as the discriminative generalization of the naive Bayes generative model. The model relates a univariate response variable, Y, to some predictor variables, x_i. An exponential family distribution is specified for Y (for example normal, binomial or Poisson distributions) along with a link function g (for example the identity or log functions) relating the expected value of Y to the predictor variables via a structure such as

:g(\operatorname{E}(Y)) = \beta_0 + f_1(x_1) + f_2(x_2) + \cdots + f_m(x_m).

The functions f_i may be functions w ...
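The additive structure can be read as a recipe for computing the expected value of Y: sum the intercept and the smooth terms, then apply the inverse of the link function. The sketch below illustrates only that evaluation step, not how the smooth functions are estimated. The function `gam_mean`, the two example smooth terms, and the input values are hypothetical.

```python
import math

def gam_mean(x, beta0, smooth_terms, inverse_link):
    """Return E(Y) = g^{-1}(beta_0 + f_1(x_1) + ... + f_m(x_m))."""
    eta = beta0 + sum(f(xj) for f, xj in zip(smooth_terms, x))
    return inverse_link(eta)

# Hypothetical smooth terms f_1 and f_2, standing in for fitted smoothers.
terms = [math.sin, lambda v: v ** 2]
x = [0.0, 2.0]

# Identity link: E(Y) equals the additive predictor itself.
mu_identity = gam_mean(x, 1.0, terms, lambda eta: eta)

# Log link: the inverse link is exp, so E(Y) = exp(additive predictor).
mu_log = gam_mean(x, 1.0, terms, math.exp)
print(mu_identity, mu_log)
```

Swapping the inverse link changes the scale of the prediction while leaving the additive predictor untouched, which is the separation the link function provides.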

Logistic Regression

In statistics, the logistic model (or logit model) is a statistical model that models the probability of an event taking place by having the log-odds for the event be a linear combination of one or more independent variables. In regression analysis, logistic regression (or logit regression) is estimating the parameters of a logistic model (the coefficients in the linear combination). Formally, in binary logistic regression there is a single binary dependent variable, coded by an indicator variable, where the two values are labeled "0" and "1", while the independent variables can each be a binary variable (two classes, coded by an indicator variable) or a continuous variable (any real value). The corresponding probability of the value labeled "1" can vary between 0 (certainly the value "0") and 1 (certainly the value "1"), hence the labeling; the function that converts log-odds to probability is the logistic function, h ...
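The relationship between the linear combination, the log-odds, and the probability can be shown in a few lines. This is a minimal sketch assuming the coefficients are already known; the helper names (`log_odds`, `predict_proba`) and the coefficient values are hypothetical, and no parameter estimation is performed.

```python
import math

def logistic(t):
    """Logistic function 1 / (1 + exp(-t)), written to avoid overflow."""
    if t >= 0:
        return 1.0 / (1.0 + math.exp(-t))
    e = math.exp(t)
    return e / (1.0 + e)

def log_odds(x, intercept, coefs):
    """Linear combination of the independent variables."""
    return intercept + sum(c * xi for c, xi in zip(coefs, x))

def predict_proba(x, intercept, coefs):
    """Probability of the value labeled "1"."""
    return logistic(log_odds(x, intercept, coefs))

# Hypothetical coefficients and a single observation.
p = predict_proba([1.0, 2.0], intercept=-1.0, coefs=[0.5, 0.25])

# Converting a probability back to log-odds recovers the linear predictor.
t = 1.3
recovered = math.log(logistic(t) / (1.0 - logistic(t)))
print(p, recovered)
```

In this example the log-odds are exactly zero, so the two outcomes are equally likely.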

Convex Optimization

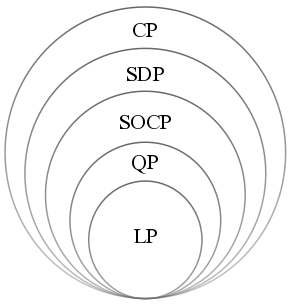

Convex optimization is a subfield of mathematical optimization that studies the problem of minimizing convex functions over convex sets (or, equivalently, maximizing concave functions over convex sets). Many classes of convex optimization problems admit polynomial-time algorithms, whereas mathematical optimization is in general NP-hard. Convex optimization has applications in a wide range of disciplines, such as automatic control systems, estimation and signal processing, communications and networks, electronic circuit design, data analysis and modeling, finance, statistics (optimal experimental design), and structural optimization, where the approximation concept has proven to be efficient. With recent advancements in computing and optimization algorithms, convex programming is nearly as straightforward as linear programming.

Definition: A convex optimization problem is an optimization problem in which the objective function is a convex function and the feasible set ...
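A small example of minimizing a convex function over a convex set is projected gradient descent on an interval. The sketch below is one simple method chosen for illustration, not a general-purpose solver; the objective (x - 3)^2, the step size, the iteration count, and the function names are assumptions.

```python
def project(x, lo, hi):
    """Project x onto the convex set [lo, hi]."""
    return min(max(x, lo), hi)

def projected_gradient_descent(grad, x0, lo, hi, step=0.1, iters=200):
    """Minimize a differentiable convex function over [lo, hi]."""
    x = project(x0, lo, hi)
    for _ in range(iters):
        # Gradient step, then projection back onto the feasible set.
        x = project(x - step * grad(x), lo, hi)
    return x

# Gradient of the convex objective (x - 3)^2.
grad = lambda x: 2.0 * (x - 3.0)

# Feasible set [0, 2]: the constrained minimizer sits on the boundary.
x_constrained = projected_gradient_descent(grad, 0.0, 0.0, 2.0)

# Feasible set [-10, 10]: the unconstrained minimizer x = 3 is feasible.
x_interior = projected_gradient_descent(grad, 0.0, -10.0, 10.0)
print(x_constrained, x_interior)
```

Because both the objective and the feasible set are convex, the point the iteration settles on is a global minimizer rather than merely a local one.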