Rate–distortion Theory

Rate–distortion theory is a major branch of information theory which provides the theoretical foundations for lossy data compression; it addresses the problem of determining the minimal number of bits per symbol, as measured by the rate ''R'', that should be communicated over a channel, so that the source (input signal) can be approximately reconstructed at the receiver (output signal) without exceeding an expected distortion ''D''.

Introduction: Rate–distortion theory gives an analytical expression for how much compression can be achieved using lossy compression methods. Many of the existing audio, speech, image, and video compression techniques have transforms, quantization, and bit-rate allocation procedures that capitalize on the general shape of rate–distortion functions. Rate–distortion theory was created by Claude Shannon in his foundational work on information theory. In rate–distortion theory, the ''rate'' is usually understood as the number of bits per ...

Information Theory

Information theory is the scientific study of the quantification, storage, and communication of information. The field was originally established by the works of Harry Nyquist and Ralph Hartley, in the 1920s, and Claude Shannon in the 1940s. The field is at the intersection of probability theory, statistics, computer science, statistical mechanics, information engineering, and electrical engineering. A key measure in information theory is entropy. Entropy quantifies the amount of uncertainty involved in the value of a random variable or the outcome of a random process. For example, identifying the outcome of a fair coin flip (with two equally likely outcomes) provides less information (lower entropy) than specifying the outcome from a roll of a die (with six equally likely outcomes). Some other important measures in information theory are mutual information, channel capacity, error exponents, and relative entropy. Important sub-fields of information theory include sourc ...
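The coin-versus-die comparison can be checked numerically. The following is a minimal sketch, assuming the standard Shannon entropy of a discrete distribution measured in bits; the function name `shannon_entropy` and its `base` parameter are illustrative choices, not taken from the text above.

```python
import math

def shannon_entropy(probabilities, base=2.0):
    """Entropy of a discrete distribution; base 2 gives bits (shannons)."""
    if abs(sum(probabilities) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    # Outcomes with zero probability are skipped (0 log 0 is treated as 0).
    return -sum(p * math.log(p, base) for p in probabilities if p > 0)

coin_bits = shannon_entropy([0.5, 0.5])   # two equally likely outcomes
die_bits = shannon_entropy([1 / 6] * 6)   # six equally likely outcomes
print(f"fair coin: {coin_bits:.3f} bits")
print(f"fair die:  {die_bits:.3f} bits")
```

The die has the larger entropy because its outcome is spread over more equally likely values.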

Quantization (signal Processing)

Quantization, in mathematics and digital signal processing, is the process of mapping input values from a large set (often a continuous set) to output values in a (countable) smaller set, often with a finite number of elements. Rounding and truncation are typical examples of quantization processes. Quantization is involved to some degree in nearly all digital signal processing, as the process of representing a signal in digital form ordinarily involves rounding. Quantization also forms the core of essentially all lossy compression algorithms. The difference between an input value and its quantized value (such as round-off error) is referred to as quantization error. A device or algorithmic function that performs quantization is called a quantizer. An analog-to-digital converter is an example of a quantizer.

Example: Rounding a real number x to the nearest integer value forms a very basic type of quantizer, a ''uniform'' one. A typical (''mid-tread'') u ...
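As an illustration of rounding as quantization, here is a minimal sketch of a uniform quantizer that rounds to the nearest multiple of a step size. The names `quantize` and `step` are hypothetical, and rounding half-way values upward is an assumption of this sketch rather than something the text specifies.

```python
import math

def quantize(x, step=1.0):
    """Map x to the nearest multiple of `step` (half-way values round up)."""
    if step <= 0:
        raise ValueError("step must be positive")
    # math.floor(x / step + 0.5) avoids the round-half-to-even rule of round().
    return step * math.floor(x / step + 0.5)

samples = [2.7, -1.2, 0.74, 3.5]
for x in samples:
    q = quantize(x)
    # The quantization error is the difference between input and output.
    print(f"x={x:+.2f}  quantized={q:+.1f}  error={x - q:+.2f}")
```

With `step=1.0` this is ordinary rounding to the nearest integer, and the magnitude of the quantization error never exceeds half a step.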

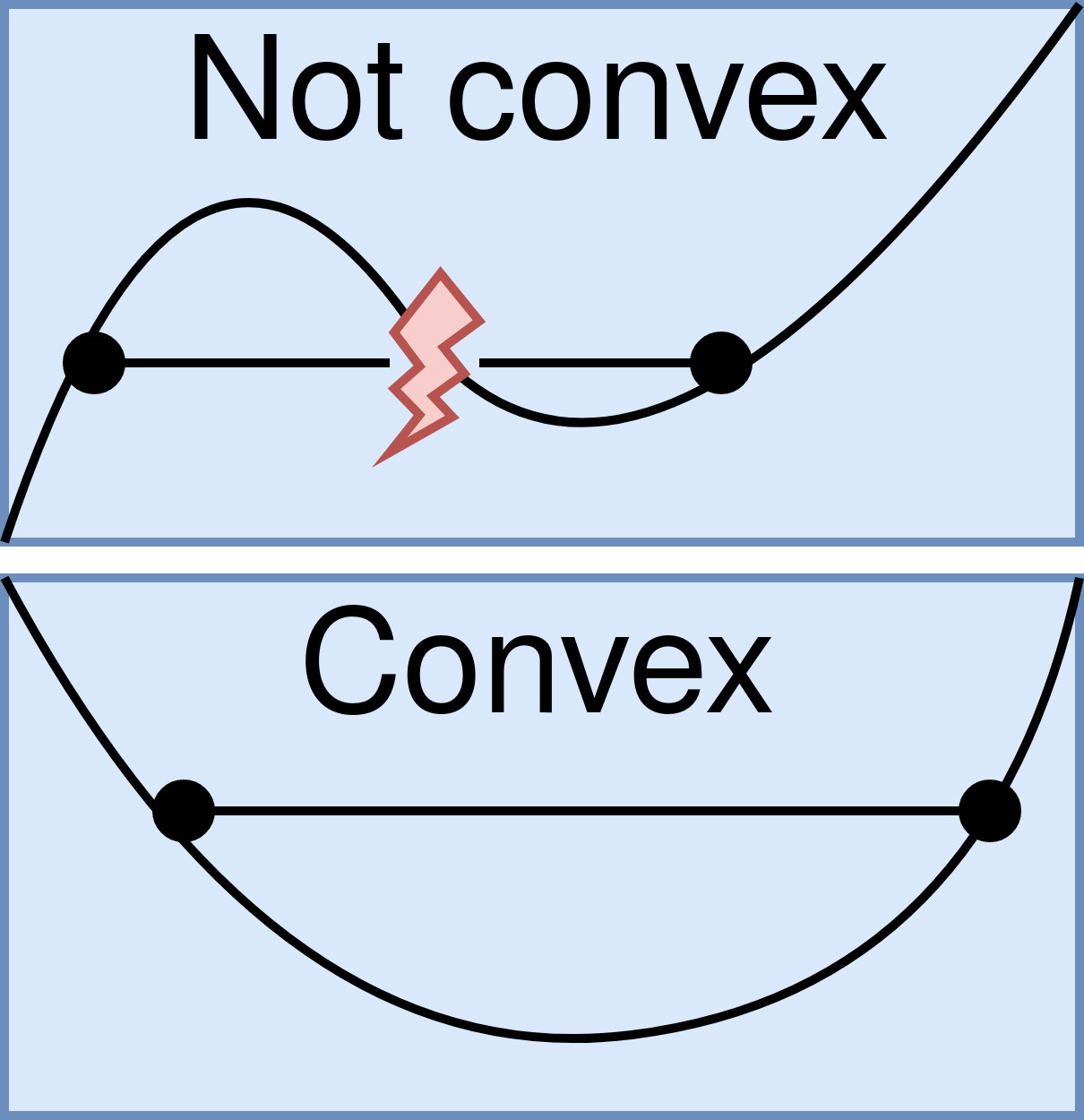

Convex Function

In mathematics, a real-valued function is called convex if the line segment between any two points on the graph of the function lies above the graph between the two points. Equivalently, a function is convex if its epigraph (the set of points on or above the graph of the function) is a convex set. A twice-differentiable function of a single variable is convex if and only if its second derivative is nonnegative on its entire domain. Well-known examples of convex functions of a single variable include the quadratic function x^2 and the exponential function e^x. In simple terms, a convex function refers to a function whose graph is shaped like a cup \cup, while a concave function's graph is shaped like a cap \cap. Convex functions play an important role in many areas of mathematics. They are especially important in the study of optimization problems where they are distinguished by a number of convenient properties. For instance, a strictly convex function on an open set has ...

Monotonically Decreasing

In mathematics, a monotonic function (or monotone function) is a function between ordered sets that preserves or reverses the given order. This concept first arose in calculus, and was later generalized to the more abstract setting of order theory.

In calculus and analysis: In calculus, a function f defined on a subset of the real numbers with real values is called ''monotonic'' if and only if it is either entirely non-increasing, or entirely non-decreasing. That is, as per Fig. 1, a function that increases monotonically does not exclusively have to increase, it simply must not decrease. A function is called ''monotonically increasing'' (also ''increasing'' or ''non-decreasing'') if for all x and y such that x \leq y one has f(x) \leq f(y), so f preserves the order (see Figure 1). Likewise, a function is called ''monotonically decreasing'' (also ''decreasing'' or ''non-increasing'') if, whenever x \leq y, then f\!\left(x\right) \geq f\!\left(y\ri ...
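The order-preserving and order-reversing definitions can be tested on a finite set of sample points. This is only a sketch: passing the check on samples is a necessary condition, not a proof of monotonicity on the whole domain, and the function names below are hypothetical.

```python
def is_monotonically_increasing(f, points):
    """True if f(x) <= f(y) for all sampled x <= y (non-decreasing)."""
    values = [f(x) for x in sorted(points)]
    # Checking neighbours suffices because <= is transitive.
    return all(a <= b for a, b in zip(values, values[1:]))

def is_monotonically_decreasing(f, points):
    """True if f(x) >= f(y) for all sampled x <= y (non-increasing)."""
    values = [f(x) for x in sorted(points)]
    return all(a >= b for a, b in zip(values, values[1:]))

grid = range(-3, 4)
print(is_monotonically_increasing(lambda x: x ** 3, grid))  # cube preserves order
print(is_monotonically_increasing(lambda x: x ** 2, grid))  # square does not
print(is_monotonically_decreasing(lambda x: -x, grid))      # negation reverses order
```

A constant function passes both checks, which matches the definitions: it neither increases nor decreases.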

Continuous Function

In mathematics, a continuous function is a function such that a continuous variation (that is, a change without jump) of the argument induces a continuous variation of the value of the function. This means that there are no abrupt changes in value, known as ''discontinuities''. More precisely, a function is continuous if arbitrarily small changes in its value can be assured by restricting to sufficiently small changes of its argument. A discontinuous function is a function that is not continuous. Up until the 19th century, mathematicians largely relied on intuitive notions of continuity, and considered only continuous functions. The epsilon–delta definition of a limit was introduced to formalize the definition of continuity. Continuity is one of the core concepts of calculus and mathematical analysis, where arguments and values of functions are real and complex numbers. The concept has been generalized to functions between metric spaces and between topological spaces. The latter are t ...

Optimization Problem

In mathematics, computer science and economics, an optimization problem is the problem of finding the ''best'' solution from all feasible solutions. Optimization problems can be divided into two categories, depending on whether the variables are continuous or discrete:
* An optimization problem with discrete variables is known as a ''discrete optimization'', in which an object such as an integer, permutation or graph must be found from a countable set.
* A problem with continuous variables is known as a ''continuous optimization'', in which an optimal value from a continuous function must be found. They can include constrained problems and multimodal problems.

Continuous optimization problem: The ''standard form'' of a continuous optimization problem is
\begin{align} &\underset{x}{\operatorname{minimize}} & & f(x) \\ &\operatorname{subject\;to} & & g_i(x) \leq 0, \quad i = 1,\dots,m \\ &&& h_j(x) = 0, \quad j = 1, \dots,p \end{align}
where
* f : \mathbb{R}^n \to \mathbb{R} is the objective function to be minimized over the n-variable vector x,
* g_i(x) \leq 0 are called ...

Analytical Expression

In mathematics, a closed-form expression is a mathematical expression that uses a finite number of standard operations. It may contain constants, variables, certain well-known operations (e.g., + − × ÷), and functions (e.g., ''n''th root, exponent, logarithm, trigonometric functions, and inverse hyperbolic functions), but usually no limit, differentiation, or integration. The set of operations and functions may vary with author and context.

Example: roots of polynomials. The solutions of any quadratic equation with complex coefficients can be expressed in closed form in terms of addition, subtraction, multiplication, division, and square root extraction, each of which is an elementary function. For example, the quadratic equation ax^2+bx+c=0 is tractable since its solutions can be expressed as a closed-form expression, i.e. in terms of elementary functions: x=\frac{-b\pm\sqrt{b^2-4ac}}{2a}. Similarly, solutions of cubic and quartic (third and fourth degree) equations can be ...
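The closed-form solution of the quadratic equation translates directly into code. The sketch below uses Python's `cmath` module so that complex coefficients and negative discriminants are handled; the function name `quadratic_roots` is an illustrative choice.

```python
import cmath

def quadratic_roots(a, b, c):
    """Both roots of a*x**2 + b*x + c = 0 via the closed-form formula."""
    if a == 0:
        raise ValueError("a must be nonzero for a quadratic equation")
    # cmath.sqrt returns a complex result, so a negative discriminant is fine.
    root_of_discriminant = cmath.sqrt(b * b - 4 * a * c)
    return ((-b + root_of_discriminant) / (2 * a),
            (-b - root_of_discriminant) / (2 * a))

real_roots = quadratic_roots(1, -3, 2)     # x^2 - 3x + 2 = (x - 1)(x - 2)
complex_roots = quadratic_roots(1, 0, 1)   # x^2 + 1 has roots +i and -i
print(real_roots)
print(complex_roots)
```

Only addition, subtraction, multiplication, division, and a square root are used, which is what makes the expression closed-form.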

Continuous Signal

In mathematical dynamics, discrete time and continuous time are two alternative frameworks within which variables that evolve over time are modeled.

Discrete time: Discrete time views values of variables as occurring at distinct, separate "points in time", or equivalently as being unchanged throughout each non-zero region of time ("time period"); that is, time is viewed as a discrete variable. Thus a non-time variable jumps from one value to another as time moves from one time period to the next. This view of time corresponds to a digital clock that gives a fixed reading of 10:37 for a while, and then jumps to a new fixed reading of 10:38, etc. In this framework, each variable of interest is measured once at each time period. The number of measurements between any two time periods is finite. Measurements are typically made at sequential integer values of the variable "time". A discrete signal or discrete-time signal is a time series consisting of a sequence of quantit ...

Amplitude

The amplitude of a periodic variable is a measure of its change in a single period (such as time or spatial period). The amplitude of a non-periodic signal is its magnitude compared with a reference value. There are various definitions of amplitude (see below), which are all functions of the magnitude of the differences between the variable's extreme values. In older texts, the phase of a periodic function is sometimes called the amplitude.

Definitions. Peak amplitude and semi-amplitude: For symmetric periodic waves, like sine waves, square waves or triangle waves, ''peak amplitude'' and ''semi-amplitude'' are the same.

Peak amplitude: In audio system measurements, telecommunications and others where the measurand is a signal that swings above and below a reference value but is not sinusoidal, peak amplitude is often used. If the reference is zero, this is the maximum absolute value of the signal; if the reference is a mean value (DC component), the peak amplitude is th ...

Infimum And Supremum

In mathematics, the infimum (abbreviated inf; plural infima) of a subset S of a partially ordered set P is the greatest element in P that is less than or equal to each element of S, if such an element exists. Consequently, the term ''greatest lower bound'' (abbreviated as GLB) is also commonly used. The supremum (abbreviated sup; plural suprema) of a subset S of a partially ordered set P is the least element in P that is greater than or equal to each element of S, if such an element exists. Consequently, the supremum is also referred to as the ''least upper bound'' (or LUB). The infimum is in a precise sense dual to the concept of a supremum. Infima and suprema of real numbers are common special cases that are important in analysis, and especially in Lebesgue integration. However, the general definitions remain valid in the more abstract setting of order theory where arbitrary partially ordered sets are considered. The concepts of infimum and supremum are close to minimum and max ...

Conditional Entropy

In information theory, the conditional entropy quantifies the amount of information needed to describe the outcome of a random variable Y given that the value of another random variable X is known. Here, information is measured in shannons, nats, or hartleys. The ''entropy of Y conditioned on X'' is written as H(Y|X).

Definition: The conditional entropy of Y given X is defined as H(Y|X) = -\sum_{x \in \mathcal X,\, y \in \mathcal Y} p(x,y) \log \frac{p(x,y)}{p(x)}, where \mathcal X and \mathcal Y denote the support sets of X and Y. ''Note:'' Here, the convention is that the expression 0 \log 0 should be treated as being equal to zero. This is because \lim_{\theta \to 0^+} \theta \log \theta = 0. Intuitively, notice that by definition of expected value and of conditional probability, H(Y|X) can be written as H(Y|X) = \mathbb{E}[f(X,Y)], where f is defined as f(x,y) := -\log\Big(\frac{p(x,y)}{p(x)}\Big) = -\log(p(y|x)). One can think of f as associating each pair (x, y) with a quantity measuring the information conten ...
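The conditional entropy of Y given X can be computed from a joint probability table as the expected value of -log p(y|x). The sketch below assumes the joint distribution is supplied as a dictionary mapping (x, y) pairs to probabilities; that representation and the name `conditional_entropy` are illustrative choices.

```python
import math
from collections import defaultdict

def conditional_entropy(joint, base=2.0):
    """H(Y|X) for a joint distribution given as {(x, y): probability}."""
    # Marginal p(x), obtained by summing the joint over y.
    p_x = defaultdict(float)
    for (x, _y), p in joint.items():
        p_x[x] += p
    # Expected value of -log p(y|x); zero-probability pairs are skipped
    # (0 log 0 is treated as 0).
    return -sum(p * math.log(p / p_x[x], base)
                for (x, _y), p in joint.items() if p > 0)

# Y is a copy of a fair bit X: knowing X leaves nothing to describe.
copy = {(0, 0): 0.5, (1, 1): 0.5}
# X and Y are independent fair bits: knowing X does not help.
independent = {(x, y): 0.25 for x in (0, 1) for y in (0, 1)}
print(conditional_entropy(copy), conditional_entropy(independent))
```

With base 2 the result is in shannons (bits); passing `base=math.e` would give nats.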

Mutual Information

In probability theory and information theory, the mutual information (MI) of two random variables is a measure of the mutual dependence between the two variables. More specifically, it quantifies the "amount of information" (in units such as shannons (bits), nats or hartleys) obtained about one random variable by observing the other random variable. The concept of mutual information is intimately linked to that of entropy of a random variable, a fundamental notion in information theory that quantifies the expected "amount of information" held in a random variable. Not limited to real-valued random variables and linear dependence like the correlation coefficient, MI is more general and determines how different the joint distribution of the pair (X,Y) is from the product of the marginal distributions of X and Y. MI is the expected value of the pointwise mutual information (PMI). The quantity was defined and analyzed by Claude Shannon in his landmark paper "A Mathemati ...
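The comparison between the joint distribution and the product of the marginals can be made concrete for discrete variables. The following sketch assumes the usual discrete formula, the expected value of log(p(x,y) / (p(x) p(y))), and a joint table given as a dictionary of (x, y) pairs; the name `mutual_information` is hypothetical.

```python
import math
from collections import defaultdict

def mutual_information(joint, base=2.0):
    """I(X;Y) for a joint distribution given as {(x, y): probability}."""
    p_x = defaultdict(float)
    p_y = defaultdict(float)
    for (x, y), p in joint.items():
        p_x[x] += p
        p_y[y] += p
    # Expected pointwise mutual information; zero-probability pairs add nothing.
    return sum(p * math.log(p / (p_x[x] * p_y[y]), base)
               for (x, y), p in joint.items() if p > 0)

# Independent fair bits: the joint equals the product of the marginals.
independent = {(x, y): 0.25 for x in (0, 1) for y in (0, 1)}
# Y is a copy of a fair bit X: observing one reveals the other completely.
copy = {(0, 0): 0.5, (1, 1): 0.5}
print(mutual_information(independent), mutual_information(copy))
```

Independent variables give zero, and a perfect copy of a fair bit gives one bit, the full entropy of that bit.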