Rate–distortion theory

Rate–distortion theory is a major branch of information theory which provides the theoretical foundations for lossy data compression; it addresses the problem of determining the minimal number of bits per symbol, as measured by the rate ''R'', that should be communicated over a channel, so that the source (input signal) can be approximately reconstructed at the receiver (output signal) without exceeding an expected distortion ''D''.

Introduction

Rate–distortion theory gives an analytical expression for how much compression can be achieved using lossy compression methods. Many of the existing audio, speech, image, and video compression techniques have transforms, quantization, and bit-rate allocation procedures that capitalize on the general shape of rate–distortion functions.

Rate–distortion theory was created by Claude Shannon in his foundational work on information theory.

In rate–distortion theory, the ''rate'' is usually understood as the number of bits per data sample to be stored or transmitted. The notion of ''distortion'' is a subject of ongoing discussion. In the simplest case (which is also the one used most often), the distortion is defined as the expected value of the square of the difference between input and output signal (i.e., the mean squared error). However, since most lossy compression techniques operate on data that will be perceived by human consumers (listening to music, watching pictures and video), the distortion measure should preferably be modeled on human perception and perhaps aesthetics: much like the use of probability in lossless compression, distortion measures can ultimately be identified with loss functions as used in Bayesian estimation and decision theory. In audio compression, perceptual models (and therefore perceptual distortion measures) are relatively well developed and routinely used in compression techniques such as MP3 or Vorbis, but are often not easy to include in rate–distortion theory. In image and video compression, the human perception models are less well developed and inclusion is mostly limited to the JPEG and MPEG weighting (quantization, normalization) matrix.

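Distortion functions

Distortion functions measure the cost of representing a symbol ''x'' by an approximated symbol <math>\hat{x}</math>. Typical distortion functions are the Hamming distortion and the squared-error distortion.

Hamming distortion

:<math>d(x,\hat{x}) = \begin{cases} 0 & \text{if } x = \hat{x} \\ 1 & \text{if } x \neq \hat{x} \end{cases}</math>

Squared-error distortion

:<math>d(x,\hat{x}) = (x-\hat{x})^2</math>

Rate–distortion functions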

The functions that relate the rate and distortion are found as the solution of the following minimization problem:

:<math>\inf_{Q_{Y\mid X}(y\mid x)} I_Q(Y;X) \quad \text{subject to} \quad D_Q \le D^*.</math>

Here <math>Q_{Y\mid X}(y\mid x)</math>, sometimes called a test channel, is the conditional probability density function (PDF) of the communication channel output (compressed signal) ''Y'' for a given input (original signal) ''X'', and <math>I_Q(Y;X)</math> is the mutual information between ''Y'' and ''X'' defined as

:<math>I(Y;X) = H(Y) - H(Y\mid X)</math>

where ''H''(''Y'') and ''H''(''Y'' | ''X'') are the entropy of the output signal ''Y'' and the conditional entropy of the output signal given the input signal, respectively:

:<math>H(Y) = -\int_{-\infty}^{\infty} P_Y(y) \log_2 P_Y(y)\,dy</math>

:<math>H(Y\mid X) = -\int_{-\infty}^{\infty}\int_{-\infty}^{\infty} Q_{Y\mid X}(y\mid x)\,P_X(x) \log_2 Q_{Y\mid X}(y\mid x)\,dx\,dy.</math>

The problem can also be formulated as a distortion–rate function, where we find the infimum over achievable distortions for a given rate constraint. The relevant expression is:

:<math>\inf_{Q_{Y\mid X}(y\mid x)} D_Q \quad \text{subject to} \quad I_Q(Y;X) \le R.</math>

The two formulations lead to functions which are inverses of each other.

The mutual information can be understood as a measure of the 'prior' uncertainty the receiver has about the sender's signal (''H''(''Y'')), diminished by the uncertainty that is left after receiving information about the sender's signal (''H''(''Y'' | ''X'')). The decrease in uncertainty is due to the communicated amount of information, which is ''I''(''Y''; ''X'').

As an example, if there is ''no'' communication at all, then ''H''(''Y'' | ''X'') = ''H''(''Y'') and ''I''(''Y''; ''X'') = 0. Alternatively, if the communication channel is perfect and the received signal ''Y'' is identical to the signal ''X'' at the sender, then ''H''(''Y'' | ''X'') = 0 and ''I''(''Y''; ''X'') = ''H''(''X'') = ''H''(''Y'').
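The two extreme cases can be checked numerically. The following is a minimal sketch, assuming a discrete source so that the integrals become sums; the helper names `entropy` and `mutual_information` are illustrative and not part of any library.

```python
import numpy as np

def entropy(p):
    """Shannon entropy in bits of a probability vector (0 log 0 is taken as 0)."""
    p = np.asarray(p, dtype=float)
    nz = p > 0
    return float(-np.sum(p[nz] * np.log2(p[nz])))

def mutual_information(p_x, q_y_given_x):
    """I(Y;X) = H(Y) - H(Y|X) in bits.

    p_x          : source distribution, shape (n_x,)
    q_y_given_x  : test channel, shape (n_x, n_y); row x holds Q(y|x)
    """
    p_x = np.asarray(p_x, dtype=float)
    q = np.asarray(q_y_given_x, dtype=float)
    p_y = p_x @ q                                   # output marginal P_Y(y)
    h_y = entropy(p_y)
    h_y_given_x = sum(p_x[i] * entropy(q[i]) for i in range(len(p_x)))
    return h_y - float(h_y_given_x)

p_x = [0.5, 0.5]
no_communication = [[0.5, 0.5], [0.5, 0.5]]   # output independent of input
perfect_channel = [[1.0, 0.0], [0.0, 1.0]]    # output equals input

i_none = mutual_information(p_x, no_communication)
i_perfect = mutual_information(p_x, perfect_channel)
print(i_none, i_perfect)
```

For the fair binary source used here, the first channel conveys no information and the second conveys the full source entropy of one bit.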

In the definition of the rate–distortion function, <math>D_Q</math> and <math>D^*</math> are the distortion between ''X'' and ''Y'' for a given <math>Q_{Y\mid X}(y\mid x)</math> and the prescribed maximum distortion, respectively. When we use the mean squared error as distortion measure, we have (for amplitude-continuous signals):

:<math>D_Q = \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} P_{X,Y}(x,y)\,(x-y)^2\,dx\,dy = \int_{-\infty}^{\infty}\int_{-\infty}^{\infty} Q_{Y\mid X}(y\mid x)\,P_X(x)\,(x-y)^2\,dx\,dy.</math>

As the above equations show, calculating a rate–distortion function requires the stochastic description of the input ''X'' in terms of the PDF <math>P_X(x)</math>, and then aims at finding the conditional PDF <math>Q_{Y\mid X}(y\mid x)</math> that minimizes the rate for a given distortion <math>D^*</math>. These definitions can be formulated measure-theoretically to account for discrete and mixed random variables as well.

An analytical solution to this minimization problem is often difficult to obtain, except in some instances, of which the two best-known examples are given below. The rate–distortion function of any source is known to obey several fundamental properties, the most important being that it is a continuous, monotonically decreasing, convex (U) function, and thus the shape of the function in the examples is typical (even rate–distortion functions measured in real life tend to have very similar forms).

Although analytical solutions to this problem are scarce, there are upper and lower bounds to these functions, including the famous Shannon lower bound (SLB), which, in the case of squared error and memoryless sources, states that for arbitrary sources with finite differential entropy,

:<math>R(D) \ge h(X) - h(D)</math>

where ''h''(''X'') is the differential entropy of the source and ''h''(''D'') is the differential entropy of a Gaussian random variable with variance ''D''. This lower bound is extensible to sources with memory and other distortion measures. One important feature of the SLB is that it is asymptotically tight in the low-distortion regime for a wide class of sources, and on some occasions it actually coincides with the rate–distortion function. Shannon lower bounds can generally be found if the distortion between any two numbers can be expressed as a function of the difference between the values of these two numbers.
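As a worked check of the bound, consider a memoryless Gaussian source with variance σ² under squared-error distortion (the symbol σ² is introduced here for illustration). Its differential entropy is ½ log₂(2πeσ²), so the Shannon lower bound evaluates as follows, and for this source it coincides with the known rate–distortion function:

```latex
\begin{aligned}
R(D) &\ge h(X) - h(D) \\
     &= \tfrac{1}{2}\log_2\!\left(2\pi e\,\sigma^2\right)
        - \tfrac{1}{2}\log_2\!\left(2\pi e\,D\right) \\
     &= \tfrac{1}{2}\log_2\frac{\sigma^2}{D},
        \qquad 0 < D \le \sigma^2 .
\end{aligned}
```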

The Blahut–Arimoto algorithm, co-invented by Richard Blahut, is an elegant iterative technique for numerically obtaining rate–distortion functions of arbitrary finite input/output alphabet sources and much work has been done to extend it to more general problem instances.
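The iteration can be sketched in a few lines. The following is a minimal illustration, not a reference implementation: it assumes a finite source and reproduction alphabet, uses a trade-off parameter `beta` (a name chosen here) that fixes the slope of the curve at the returned point, and alternates between updating the test channel and the output marginal until the marginal stops changing.

```python
import numpy as np

def blahut_arimoto(p_x, dist, beta, n_iter=500, tol=1e-12):
    """Return one (rate, distortion) point on R(D), with the rate in bits.

    p_x  : source probabilities, shape (n_x,)
    dist : distortion matrix d(x, y), shape (n_x, n_y)
    beta : positive trade-off parameter; larger values favour low distortion
    """
    p_x = np.asarray(p_x, dtype=float)
    dist = np.asarray(dist, dtype=float)
    kernel = np.exp(-beta * dist)
    q_y = np.full(dist.shape[1], 1.0 / dist.shape[1])   # uniform start

    for _ in range(n_iter):
        # Test channel update: Q(y|x) proportional to q(y) * exp(-beta * d(x, y))
        cond = q_y * kernel
        cond /= cond.sum(axis=1, keepdims=True)
        # Output marginal update: q(y) = sum_x p(x) Q(y|x)
        new_q_y = p_x @ cond
        converged = np.max(np.abs(new_q_y - q_y)) < tol
        q_y = new_q_y
        if converged:
            break

    # Operating point of the final test channel
    cond = q_y * kernel
    cond /= cond.sum(axis=1, keepdims=True)
    p_y = p_x @ cond
    joint = p_x[:, None] * cond
    distortion = float(np.sum(joint * dist))
    mask = joint > 0
    ratio = cond[mask] / np.broadcast_to(p_y, cond.shape)[mask]
    rate = float(np.sum(joint[mask] * np.log2(ratio)))
    return rate, distortion

# Fair binary source with Hamming distortion
hamming = np.array([[0.0, 1.0], [1.0, 0.0]])
rate, distortion = blahut_arimoto([0.5, 0.5], hamming, beta=np.log(9.0))
print(rate, distortion)   # about 0.531 bits at distortion 0.1
```

Sweeping `beta` over a range of positive values traces out the whole curve; each call yields a single point.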

The computation of the rate–distortion function requires knowledge of the underlying distribution, which is often unavailable in contemporary applications in data science and machine learning. However, this challenge can be addressed using deep-learning-based estimators of the rate–distortion function. These estimators, typically referred to as 'neural estimators', optimize a parametrized variational form of the rate–distortion objective.

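When working with stationary sources with memory, it is necessary to modify the definition of the rate–distortion function, which must then be understood in the sense of a limit taken over sequences of increasing length:

:<math>R(D) = \lim_{n \to \infty} R_n(D)</math>

where

:<math>R_n(D) = \frac{1}{n} \inf_{Q_{Y^n\mid X^n} \in \mathcal{Q}} I(Y^n; X^n)</math>

and

:<math>\mathcal{Q} = \left\{ Q_{Y^n\mid X^n}(Y^n\mid X^n, X_0) : E[d(X^n, Y^n)] \le D \right\}</math>

where superscripts denote a complete sequence up to that time and the subscript 0 indicates the initial state.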

Memoryless (independent) Gaussian source with squared-error distortion

If we assume that ''X'' is a Gaussian random variable with variance <math>\sigma^2</math>, and if we assume that successive samples of the signal ''X'' are stochastically independent (or equivalently, the source is ''memoryless'', or the signal is ''uncorrelated''), we find the following analytical expression for the rate–distortion function:

:<math>R(D) = \begin{cases} \frac{1}{2}\log_2\left(\sigma^2/D\right), & \text{if } 0 \le D \le \sigma^2 \\ 0, & \text{if } D > \sigma^2. \end{cases}</math>
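The standard closed form for this source, R(D) = ½ log₂(σ²/D) for 0 < D ≤ σ² and zero otherwise, is easy to evaluate, together with its inverse, the distortion–rate function D(R) = σ² 2^(−2R). The function names below are illustrative choices, and this is only a small sketch.

```python
import math

def gaussian_rate(distortion, variance):
    """R(D) in bits per sample for a memoryless Gaussian source under MSE."""
    if distortion <= 0 or variance <= 0:
        raise ValueError("distortion and variance must be positive")
    if distortion >= variance:
        return 0.0          # sending nothing already meets the target
    return 0.5 * math.log2(variance / distortion)

def gaussian_distortion(rate, variance):
    """Inverse function D(R) = variance * 2**(-2R)."""
    if rate < 0 or variance <= 0:
        raise ValueError("rate must be non-negative and variance positive")
    return variance * 2.0 ** (-2.0 * rate)

print(gaussian_rate(0.25, 1.0))        # 1.0 bit per sample
print(gaussian_distortion(1.0, 1.0))   # 0.25
```

Each additional bit per sample divides the achievable mean squared error by four, which is about 6 dB.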

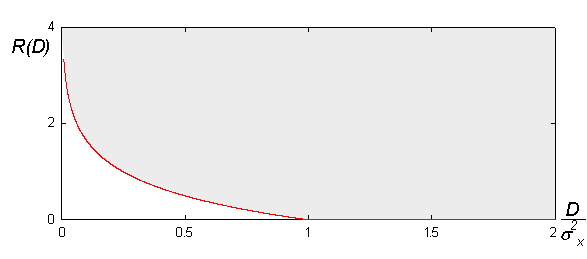

The following figure shows what this function looks like:

Rate–distortion theory tell us that 'no compression system exists that performs outside the gray area'. The closer a practical compression system is to the red (lower) bound, the better it performs. As a general rule, this bound can only be attained by increasing the coding block length parameter. Nevertheless, even at unit blocklengths one can often find good (scalar) quantizers that operate at distances from the rate–distortion function that are practically relevant.

This rate–distortion function holds only for Gaussian memoryless sources. It is known that the Gaussian source is the most "difficult" source to encode: for a given mean square error, it requires the greatest number of bits. The performance of a practical compression system working on—say—images, may well be below the lower bound shown.

Rate–distortion theory tell us that 'no compression system exists that performs outside the gray area'. The closer a practical compression system is to the red (lower) bound, the better it performs. As a general rule, this bound can only be attained by increasing the coding block length parameter. Nevertheless, even at unit blocklengths one can often find good (scalar) quantizers that operate at distances from the rate–distortion function that are practically relevant.

This rate–distortion function holds only for Gaussian memoryless sources. It is known that the Gaussian source is the most "difficult" source to encode: for a given mean square error, it requires the greatest number of bits. The performance of a practical compression system working on—say—images, may well be below the lower bound shown.

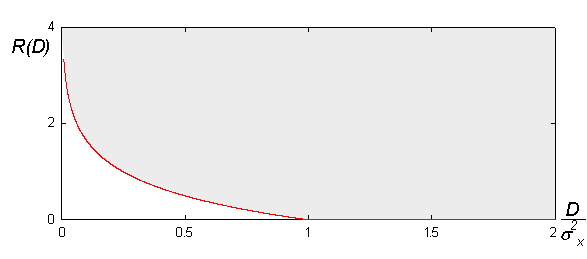

Memoryless (independent) Bernoulli source with Hamming distortion

The rate–distortion function of a Bernoulli random variable with parameter ''p'' and Hamming distortion is given by:

:<math>R(D) = \begin{cases} H_b(p) - H_b(D), & 0 \le D \le \min(p, 1-p) \\ 0, & D > \min(p, 1-p) \end{cases}</math>

where <math>H_b</math> denotes the binary entropy function.

[Figure: plot of the rate–distortion function of the Bernoulli source]
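For the Bernoulli source, the standard closed form R(D) = H_b(p) − H_b(D) for 0 ≤ D ≤ min(p, 1 − p), and zero beyond that, can be evaluated directly. The sketch below uses illustrative function names and measures the rate in bits.

```python
import math

def binary_entropy(p):
    """Binary entropy H_b(p) in bits, with H_b(0) = H_b(1) = 0."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)

def bernoulli_rate(distortion, p):
    """R(D) for a Bernoulli(p) source under Hamming distortion."""
    if not 0.0 <= p <= 1.0 or distortion < 0.0:
        raise ValueError("need 0 <= p <= 1 and distortion >= 0")
    if distortion >= min(p, 1.0 - p):
        return 0.0          # always guessing the likelier symbol suffices
    return binary_entropy(p) - binary_entropy(distortion)

print(bernoulli_rate(0.0, 0.5))   # 1.0: lossless coding of a fair bit
print(bernoulli_rate(0.1, 0.5))   # about 0.531
print(bernoulli_rate(0.5, 0.5))   # 0.0
```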

Connecting rate-distortion theory to channel capacity

Suppose we want to transmit information about a source to the user with a distortion not exceeding ''D''. Rate–distortion theory tells us that at least ''R''(''D'') bits/symbol of information from the source must reach the user. We also know from Shannon's channel coding theorem that if the source entropy is ''H'' bits/symbol, and the channel capacity is ''C'' (where ''C'' < ''H''), then ''H'' − ''C'' bits/symbol will be lost when transmitting this information over the given channel. For the user to have any hope of reconstructing with a maximum distortion ''D'', we must impose the requirement that the information lost in transmission does not exceed the maximum tolerable loss of ''H'' − ''R''(''D'') bits/symbol. This means that the channel capacity must be at least as large as ''R''(''D'').
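A small worked instance makes the condition concrete. The setup is an assumption chosen for illustration: a fair Bernoulli source with Hamming distortion, sent over a binary symmetric channel with crossover probability ε, using one channel use per source symbol. The channel capacity is then 1 − H_b(ε) and the required rate is 1 − H_b(D), so the requirement that the capacity be at least R(D) reduces to a direct comparison of D and ε, because the binary entropy function is increasing on [0, ½]:

```latex
\begin{aligned}
C = 1 - H_b(\varepsilon) \;\ge\; R(D) = 1 - H_b(D)
  \;&\Longleftrightarrow\; H_b(D) \ge H_b(\varepsilon) \\
  \;&\Longleftrightarrow\; D \ge \varepsilon,
  \qquad 0 \le \varepsilon,\, D \le \tfrac{1}{2}.
\end{aligned}
```

In this setting no scheme can deliver an average bit-error distortion below the crossover probability of the channel.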


External links

*VcDemo Image and Video Compression Learning Tool
