
Predictive Model Markup Language

The Predictive Model Markup Language (PMML) is an XML-based predictive model interchange format conceived by Dr. Robert Lee Grossman, then the director of the National Center for Data Mining at the University of Illinois at Chicago. PMML provides a way for analytic applications to describe and exchange predictive models produced by data mining and machine learning algorithms. It supports common models such as logistic regression and other feedforward neural networks. Version 0.9 was published in 1998. Subsequent versions have been developed by the Data Mining Group. Since PMML is an XML-based standard, the specification comes in the form of an XML schema. PMML itself is a mature standard with over 30 organizations having announced products supporting PMML.

PMML Components

A PMML file can be described by the following components:

* Header: contains general information about the PMML document, su ...
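As a rough illustration of the Header component described above, the following sketch reads the header attributes of a PMML-like document with Python's standard XML parser. The document text is a hypothetical, heavily simplified example: the attribute values are invented, and the XML namespace and model element that a real PMML file would carry are left out.

```python
import xml.etree.ElementTree as ET

# Hypothetical, simplified PMML-like document. Real files declare an XML
# namespace and contain a model element; both are omitted in this sketch.
PMML_TEXT = """
<PMML version="4.4">
  <Header copyright="Example Corp" description="Toy document"/>
  <DataDictionary numberOfFields="1">
    <DataField name="age" optype="continuous" dataType="double"/>
  </DataDictionary>
</PMML>
"""

def read_header(pmml_text):
    """Return the attributes of the Header element as a plain dict."""
    root = ET.fromstring(pmml_text.strip())
    header = root.find("Header")
    return dict(header.attrib) if header is not None else {}

header_info = read_header(PMML_TEXT)
```

With a namespaced file, the `find` call would need the namespace-qualified tag name instead of the bare `"Header"` used here.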

Text Mining

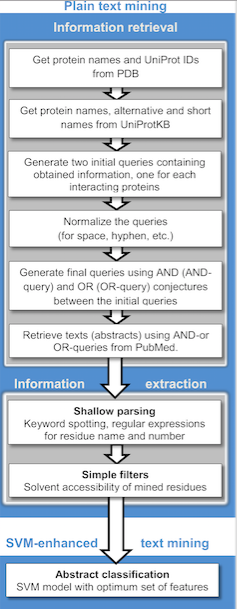

Text mining, also referred to as text data mining, similar to text analytics, is the process of deriving high-quality information from text. It involves "the discovery by computer of new, previously unknown information, by automatically extracting information from different written resources." Written resources may include websites, books, emails, reviews, and articles. High-quality information is typically obtained by devising patterns and trends by means such as statistical pattern learning. According to Hotho et al. (2005) we can distinguish between three different perspectives of text mining: information extraction, data mining, and a KDD (Knowledge Discovery in Databases) process. Text mining usually involves the process of structuring the input text (usually parsing, along with the addition of some derived linguistic features and the removal of others, and subsequent insertion into a database), deriving patterns within the structured data, and finally evaluation and int ...

Proportional Hazards Models

Proportional hazards models are a class of survival models in statistics. Survival models relate the time that passes, before some event occurs, to one or more covariates that may be associated with that quantity of time. In a proportional hazards model, the unique effect of a unit increase in a covariate is multiplicative with respect to the hazard rate. For example, taking a drug may halve one's hazard rate for a stroke occurring, or changing the material from which a manufactured component is constructed may double its hazard rate for failure. Other types of survival models, such as accelerated failure time models, do not exhibit proportional hazards. The accelerated failure time model describes a situation where the biological or mechanical life history of an event is accelerated (or decelerated).

Background

Survival models can be viewed as consisting of two parts: the underlying baseline hazard function, often denoted $\lambda_0(t)$, describing how the risk of event per ...
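The multiplicative effect described above can be sketched numerically. This example assumes the common exponential form, in which the hazard is the baseline hazard times exp(beta * x); the excerpt does not spell out that form, and the baseline value 0.2 and the function name `hazard` are made up for illustration.

```python
import math

def hazard(baseline_hazard, beta, x):
    """Hazard under an assumed proportional hazards form: baseline * exp(beta * x)."""
    return baseline_hazard * math.exp(beta * x)

# A coefficient of ln(0.5) means each unit increase in the covariate
# (here: 0 = no drug, 1 = drug) multiplies the hazard by 0.5.
beta_drug = math.log(0.5)

untreated = hazard(0.2, beta_drug, 0)  # illustrative baseline hazard of 0.2
treated = hazard(0.2, beta_drug, 1)
ratio = treated / untreated            # hazard ratio for taking the drug
```

The ratio does not depend on the baseline value chosen, which is what makes the hazards proportional.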

Association Rule

Association rule learning is a rule-based machine learning method for discovering interesting relations between variables in large databases. It is intended to identify strong rules discovered in databases using some measures of interestingness (Piatetsky-Shapiro, Gregory (1991), "Discovery, analysis, and presentation of strong rules", in Piatetsky-Shapiro, Gregory; Frawley, William J., eds., Knowledge Discovery in Databases, AAAI/MIT Press, Cambridge, MA). In any given transaction with a variety of items, association rules are meant to discover the rules that determine how or why certain items are connected. Based on the concept of strong rules, Rakesh Agrawal, Tomasz Imieliński and Arun Swami introduced association rules for discovering regularities between products in large-scale transaction data recorded by point-of-sale (POS) systems in supermarkets. For example, the rule $\{\mathrm{onions, potatoes}\} \Rightarrow \{\mathrm{burger}\}$ found in the sales data of a supermarket would indicate that if a customer buy ...
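Two widely used measures of interestingness are support and confidence; the excerpt above does not name them, so treat the following as an illustrative sketch. The transactions and item names are hypothetical toy data.

```python
# Hypothetical toy transactions; each set is one customer's basket.
transactions = [
    {"bread", "milk"},
    {"bread", "butter"},
    {"bread", "milk", "butter"},
    {"milk"},
]

def support(itemset, transactions):
    """Fraction of transactions that contain every item in itemset."""
    hits = sum(1 for t in transactions if itemset <= t)
    return hits / len(transactions)

def confidence(antecedent, consequent, transactions):
    """Support of the whole rule divided by support of its antecedent."""
    return (support(antecedent | consequent, transactions)
            / support(antecedent, transactions))

# Rule {bread} => {milk}
rule_support = support({"bread", "milk"}, transactions)
rule_confidence = confidence({"bread"}, {"milk"}, transactions)
```

Here two of the four baskets contain both bread and milk, and two of the three bread baskets also contain milk.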

Support Vector Machines

In machine learning, support vector machines (SVMs, also support vector networks) are supervised learning models with associated learning algorithms that analyze data for classification and regression analysis. Developed at AT&T Bell Laboratories by Vladimir Vapnik with colleagues (Boser et al., 1992, Guyon et al., 1993, Cortes and Vapnik, 1995, Vapnik et al., 1997), SVMs are one of the most robust prediction methods, being based on statistical learning frameworks or VC theory proposed by Vapnik (1982, 1995) and Chervonenkis (1974). Given a set of training examples, each marked as belonging to one of two categories, an SVM training algorithm builds a model that assigns new examples to one category or the other, making it a non-probabilistic binary linear classifier (although methods such as Platt scaling exist to use SVM in a probabilistic classification setting). SVM maps training examples to points in space so as to maximise the width of the gap between the two categories. New ...

Multi-class Classification

In machine learning and statistical classification, multiclass classification or multinomial classification is the problem of classifying instances into one of three or more classes (classifying instances into one of two classes is called binary classification). While many classification algorithms (notably multinomial logistic regression) naturally permit the use of more than two classes, some are by nature binary algorithms; these can, however, be turned into multinomial classifiers by a variety of strategies. Multiclass classification should not be confused with multi-label classification, where multiple labels are to be predicted for each instance.

General strategies

The existing multi-class classification techniques can be categorized into (i) transformation to binary, (ii) extension from binary, and (iii) hierarchical classification.

Transformation to binary

This section discusses strategies for reducing the problem of multiclass classification to multiple binary classif ...

Spectral Density Estimation

In statistical signal processing, the goal of spectral density estimation (SDE) or simply spectral estimation is to estimate the spectral density (also known as the power spectral density) of a signal from a sequence of time samples of the signal. Intuitively speaking, the spectral density characterizes the frequency content of the signal. One purpose of estimating the spectral density is to detect any periodicities in the data, by observing peaks at the frequencies corresponding to these periodicities. Some SDE techniques assume that a signal is composed of a limited (usually small) number of generating frequencies plus noise and seek to find the location and intensity of the generated frequencies. Others make no assumption on the number of components and seek to estimate the whole generating spectrum.

Overview

Spectrum analysis, also referred to as frequency domain analysis or spectral density estimation, is the technical process of decomposing a complex signal in ...

Seasonal Adjustment

Seasonal adjustment or deseasonalization is a statistical method for removing the seasonal component of a time series. It is usually done to analyse the trend, and cyclical deviations from trend, of a time series independently of the seasonal components. Many economic phenomena have seasonal cycles, such as agricultural production (crop yields fluctuate with the seasons) and consumer consumption (increased personal spending leading up to Christmas). It is necessary to adjust for this component in order to understand underlying trends in the economy, so official statistics are often adjusted to remove seasonal components. Typically, seasonally adjusted data is reported for unemployment rates to reveal the underlying trends and cycles in labor markets.

Time series components

The investigation of many economic time series becomes problematic due to seasonal fluctuations. Time series are made up of four components:

* $S_t$: The seasonal component
* $T_t$: The trend component ...

ARIMA

Arima, officially The Royal Chartered Borough of Arima, is the easternmost and second largest in area of the three boroughs of Trinidad and Tobago. It is geographically adjacent to Sangre Grande and Arouca at the south central foothills of the Northern Range. To the south is the Caroni–Arena Dam. Coterminous with the Town of Arima since 1888, the borough of Arima is the fourth-largest municipality in population in the country (after Port of Spain, Chaguanas and San Fernando). The census estimated it had 33,606 residents in 2011. In 1887, the town petitioned Queen Victoria for municipal status as part of her Golden Jubilee celebration. This was granted in the following year, and Arima became a Royal Borough on 1 August 1888. Historically the third-largest town of Trinidad and Tobago, Arima is fourth since Chaguanas became the largest town in the country.

Geography

Climate

The borough has a tropical rainforest climate (Köppen Af), bordering on a tropical monsoon ...

Smoothing

In statistics and image processing, to smooth a data set is to create an approximating function that attempts to capture important patterns in the data, while leaving out noise or other fine-scale structures/rapid phenomena. In smoothing, the data points of a signal are modified so that individual points higher than the adjacent points (presumably because of noise) are reduced, and points that are lower than the adjacent points are increased, leading to a smoother signal. Smoothing may be used in two important ways that can aid in data analysis: (1) by being able to extract more information from the data as long as the assumption of smoothing is reasonable, and (2) by being able to provide analyses that are both flexible and robust. Many different algorithms are used in smoothing. Smoothing may be distinguished from the related and partially overlapping concept of curve fitting in the following ways:

* curve fitting often involves the use of an explicit function form for the result, wherea ...
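One of the simplest smoothing algorithms is a centred moving average, sketched below as an illustration of how a point higher than its neighbours is pulled down while the neighbours are pulled up. The function name, window size, and sample signal are assumptions made for this example, not taken from the text above.

```python
def moving_average(values, window=3):
    """Centred moving average; the window shrinks at the edges of the data."""
    half = window // 2
    smoothed = []
    for i in range(len(values)):
        lo = max(0, i - half)
        hi = min(len(values), i + half + 1)
        neighbourhood = values[lo:hi]
        smoothed.append(sum(neighbourhood) / len(neighbourhood))
    return smoothed

# Illustrative signal with a single spike in the middle.
signal = [1.0, 1.0, 4.0, 1.0, 1.0]
smoothed = moving_average(signal)
```

The spike of 4.0 is reduced and its immediate neighbours are raised, so the result varies less sharply from point to point than the input.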

Time Series

In mathematics, a time series is a series of data points indexed (or listed or graphed) in time order. Most commonly, a time series is a sequence taken at successive equally spaced points in time. Thus it is a sequence of discrete-time data. Examples of time series are heights of ocean tides, counts of sunspots, and the daily closing value of the Dow Jones Industrial Average. A time series is very frequently plotted via a run chart (which is a temporal line chart). Time series are used in statistics, signal processing, pattern recognition, econometrics, mathematical finance, weather forecasting, earthquake prediction, electroencephalography, control engineering, astronomy, communications engineering, and largely in any domain of applied science and engineering which involves temporal measurements. Time series analysis comprises methods for analyzing time series data in order to extract meaningful statistics and other characteristics of the data. Time series for ...

Conditional (programming)

In computer science, conditionals (that is, conditional statements, conditional expressions and conditional constructs) are programming language commands for handling decisions. Specifically, conditionals perform different computations or actions depending on whether a programmer-defined boolean condition evaluates to true or false. In terms of control flow, the decision is always achieved by selectively altering the control flow based on some condition (apart from the case of branch predication). Although dynamic dispatch is not usually classified as a conditional construct, it is another way to select between alternatives at runtime.

Terminology

In imperative programming languages, the term "conditional statement" is usually used, whereas in functional programming, the terms "conditional expression" or "conditional construct" are preferred, because these terms all have distinct meanings.

If–then(–else)

The if–then construct (sometimes called if–the ...
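The distinction between a conditional statement and a conditional expression can be seen in a short Python sketch. The function name and thresholds are arbitrary choices for illustration.

```python
def classify_temperature(celsius):
    """Conditional statement: each branch selects a different action."""
    if celsius < 0:
        return "freezing"
    elif celsius < 25:
        return "mild"
    else:
        return "hot"

# Conditional expression: the whole construct evaluates to a value.
label = "even" if 4 % 2 == 0 else "odd"
```

In the statement form only the first branch whose condition is true runs; in the expression form the chosen operand becomes the value assigned to `label`.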