
Online Machine Learning

In computer science, online machine learning is a method of machine learning in which data becomes available in sequential order and is used to update the best predictor for future data at each step. This contrasts with batch learning techniques, which generate the best predictor by learning on the entire training data set at once. Online learning is common in areas of machine learning where it is computationally infeasible to train over the entire dataset, which calls for out-of-core algorithms. It is also used when the algorithm must dynamically adapt to new patterns in the data, or when the data itself is generated as a function of time, e.g., stock price prediction. Online learning algorithms may be prone to catastrophic interference, a problem that can be addressed by incremental learning approaches. Introduction. In the setting of supervised learning, a function $f : X \to Y$ is to be learned, where $X$ is thought of as ...
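
As a minimal sketch of the "update at each step" idea, assume the predictor is a single constant and the loss is squared error, so the best predictor after n observations is their mean. The class name `RunningMean` and the sample stream are illustrative assumptions, not part of the source. The online update below reaches the same value a batch computation would, without storing the data.

```python
class RunningMean:
    """Constant predictor updated one observation at a time (illustrative)."""

    def __init__(self):
        self.n = 0        # number of observations seen so far
        self.mean = 0.0   # current best constant predictor under squared loss

    def update(self, x):
        # Incremental mean: move the estimate toward x by 1/n of the gap.
        self.n += 1
        self.mean += (x - self.mean) / self.n
        return self.mean


stream = [2.0, 4.0, 6.0, 8.0]   # hypothetical data arriving sequentially
model = RunningMean()
for x in stream:
    model.update(x)

batch_mean = sum(stream) / len(stream)   # what a batch learner would compute
```

Each update costs constant time and memory, which is the property that makes this style of learning usable when the full dataset cannot be held at once.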

Computer Science

Computer science is the study of computation, automation, and information. Computer science spans theoretical disciplines (such as algorithms, theory of computation, information theory, and automation) to practical disciplines (including the design and implementation of hardware and software). Computer science is generally considered an area of academic research and distinct from computer programming. Algorithms and data structures are central to computer science. The theory of computation concerns abstract models of computation and general classes of problems that can be solved using them. The fields of cryptography and computer security involve studying the means for secure communication and for preventing security vulnerabilities. Computer graphics and computational geometry address the generation of images. Progr ...

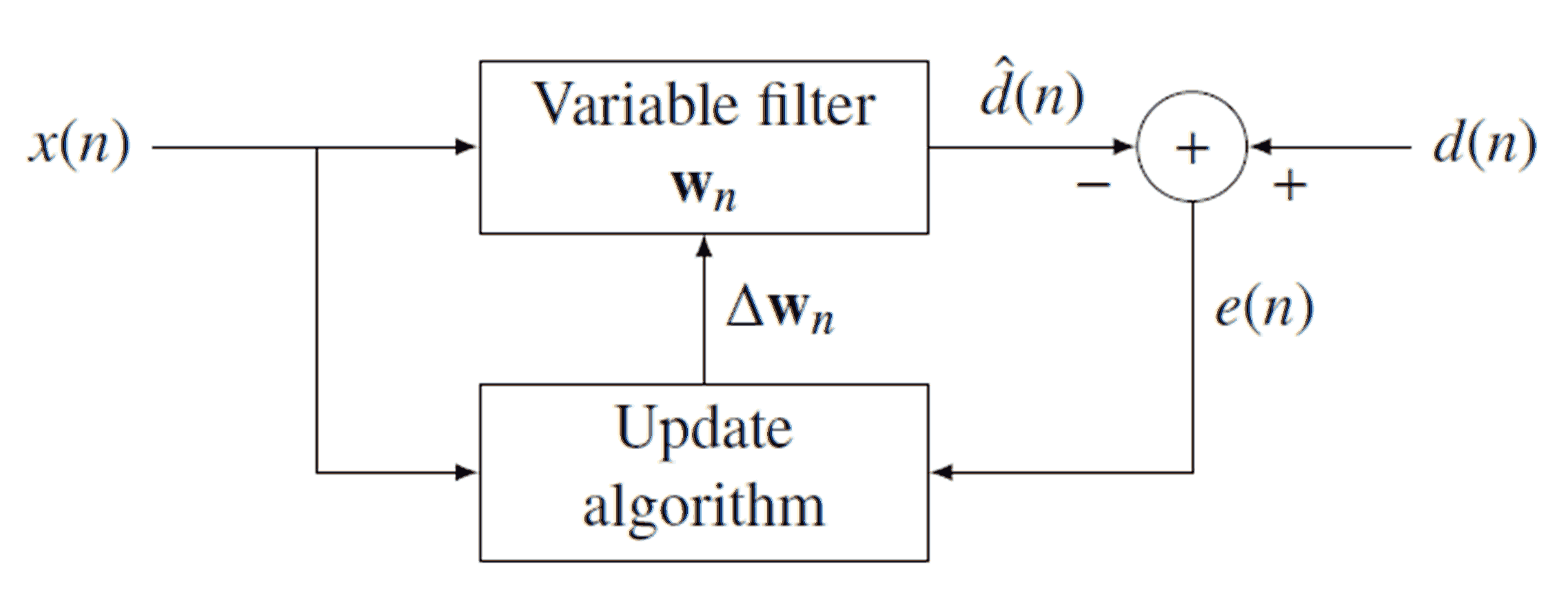

Recursive Least Squares

Recursive least squares (RLS) is an adaptive filter algorithm that recursively finds the coefficients that minimize a weighted linear least squares cost function relating to the input signals. This approach contrasts with algorithms such as least mean squares (LMS), which aim to reduce the mean square error. In the derivation of RLS, the input signals are considered deterministic, while for LMS and similar algorithms they are considered stochastic. Compared to most of its competitors, RLS exhibits extremely fast convergence. However, this benefit comes at the cost of high computational complexity. Motivation. RLS was discovered by Gauss but lay unused or ignored until 1950, when Plackett rediscovered the original work of Gauss from 1821. In general, RLS can be used to solve any problem that can be solved by adaptive filters. For example, suppose that a signal d(n) is transmitted over an echoey, noisy channel that causes it to be received as x(n)=\sum ...
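
The recursion itself is not shown in the excerpt above. The following is a sketch of the commonly used exponentially weighted form of RLS, written in plain Python. The function name `rls_fit`, the forgetting factor `lam`, the initialization scale `delta`, and the sample data are all assumptions made for illustration.

```python
def rls_fit(samples, n_features, lam=1.0, delta=1e6):
    """Exponentially weighted RLS sketch.

    lam (forgetting factor) and delta (initial scale of P) are
    illustrative choices, not values taken from the text.
    """
    w = [0.0] * n_features
    # P tracks the inverse of the weighted input correlation matrix.
    P = [[delta if i == j else 0.0 for j in range(n_features)]
         for i in range(n_features)]
    for x, d in samples:
        Px = [sum(P[i][j] * x[j] for j in range(n_features))
              for i in range(n_features)]
        denom = lam + sum(x[i] * Px[i] for i in range(n_features))
        k = [v / denom for v in Px]                      # gain vector
        err = d - sum(w[i] * x[i] for i in range(n_features))  # a priori error
        w = [w[i] + k[i] * err for i in range(n_features)]
        # P <- (P - k x^T P) / lam; x^T P equals (P x)^T because P is symmetric.
        P = [[(P[i][j] - k[i] * Px[j]) / lam for j in range(n_features)]
             for i in range(n_features)]
    return w


# Hypothetical noise-free data generated by d = 2*x1 + 3*x2.
samples = [((1.0, 0.0), 2.0), ((0.0, 1.0), 3.0), ((1.0, 1.0), 5.0),
           ((2.0, 1.0), 7.0), ((1.0, 2.0), 8.0)]
weights = rls_fit(samples, n_features=2)
```

Each step updates a full matrix, so the per-sample cost grows quadratically with the number of coefficients. This is the computational price of the fast convergence mentioned above.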

Continual Learning

In computer science, incremental learning is a method of machine learning in which input data is continuously used to extend the existing model's knowledge, i.e., to further train the model. It represents a dynamic technique of supervised learning and unsupervised learning that can be applied when training data becomes available gradually over time or when its size exceeds system memory limits. Algorithms that can facilitate incremental learning are known as incremental machine learning algorithms. Many traditional machine learning algorithms inherently support incremental learning. Other algorithms can be adapted to facilitate incremental learning. Examples of incremental algorithms include decision trees (IDE4, ID5R and gaenari), decision rules, artificial neural networks (RBF networks, Learn++, Fuzzy ARTMAP, TopoART; see Marko Tscherepanow, Marco Kortkamp, and Marc Kammer, "A Hierarchical ART Network for the Stable Incremental Learning of Topological Structures and Associations from Noisy Data" ...

AdaGrad

Stochastic gradient descent (often abbreviated SGD) is an iterative method for optimizing an objective function with suitable smoothness properties (e.g. differentiable or subdifferentiable). It can be regarded as a stochastic approximation of gradient descent optimization, since it replaces the actual gradient (calculated from the entire data set) by an estimate thereof (calculated from a randomly selected subset of the data). Especially in high-dimensional optimization problems, this reduces the very high computational burden, achieving faster iterations in exchange for a lower convergence rate. While the basic idea behind stochastic approximation can be traced back to the Robbins–Monro algorithm of the 1950s, stochastic gradient descent has become an important optimization method in machine learning. Background. Both statistical estimation and machine learning consider the problem of minimizing an objective function that has the form of a sum: $Q(w) = \frac{1}{n}\sum_{i=1}^n Q_i(w)$, wh ...
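
A minimal sketch of the idea, assuming a one-parameter least-squares objective where each term is Q_i(w) = (w * x_i - y_i)^2 / 2. The data, learning rate, step count, and function name `sgd` are illustrative assumptions. At each step, one randomly chosen term stands in for the full sum when computing the gradient.

```python
import random


def sgd(data, lr=0.05, steps=500, seed=0):
    """Minimize the average of Q_i(w) = (w*x_i - y_i)^2 / 2 by SGD (sketch)."""
    rng = random.Random(seed)
    w = 0.0
    for _ in range(steps):
        x, y = data[rng.randrange(len(data))]  # sample one term of the sum
        grad_i = (w * x - y) * x               # gradient of that single Q_i
        w -= lr * grad_i                       # step along the estimated gradient
    return w


# Hypothetical noise-free data with y = 3 * x.
data = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0), (4.0, 12.0)]
w_hat = sgd(data)
```

Each iteration touches a single data point instead of all n, which is where the cheaper iterations come from. On noisy data the estimate would fluctuate around the minimizer unless the learning rate is decreased over time.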

Online Mirror Descent

In mathematics, mirror descent is an iterative optimization algorithm for finding a local minimum of a differentiable function. It generalizes algorithms such as gradient descent and multiplicative weights. History. Mirror descent was originally proposed by Nemirovski and Yudin in 1983. Motivation. In gradient descent with the sequence of learning rates $(\eta_n)_{n \geq 0}$ applied to a differentiable function $F$, one starts with a guess $\mathbf{x}_0$ for a local minimum of $F$, and considers the sequence $\mathbf{x}_0, \mathbf{x}_1, \mathbf{x}_2, \ldots$ such that

$$\mathbf{x}_{n+1}=\mathbf{x}_n-\eta_n \nabla F(\mathbf{x}_n),\ n \ge 0.$$

This can be reformulated by noting that

$$\mathbf{x}_{n+1}=\arg \min_{\mathbf{x}} \left(F(\mathbf{x}_n) + \nabla F(\mathbf{x}_n)^T (\mathbf{x} - \mathbf{x}_n) + \frac{1}{2\eta_n}\|\mathbf{x} - \mathbf{x}_n\|^2\right)$$

In other words, $\mathbf{x}_{n+1}$ minimizes the first-order approximation to $F$ at $\mathbf{x}_n$ with added proximity term $\|\mathbf{x} - \mathbf{x}_n\|^2$. This Euclidean distance term is a particular example of a Bregman distance. Us ...
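
The equivalence between the gradient step and the minimizer of the first-order model with a Euclidean proximity term can be checked numerically. The sketch below assumes the one-dimensional test function F(x) = (x - 3)^2 and a constant learning rate. These choices and all function names are illustrative. It covers only the Euclidean case, not a general Bregman distance.

```python
def F(x):
    return (x - 3.0) ** 2          # illustrative objective, minimum at x = 3


def grad_F(x):
    return 2.0 * (x - 3.0)


def surrogate(z, x_n, eta):
    # First-order approximation of F at x_n plus the proximity term.
    return F(x_n) + grad_F(x_n) * (z - x_n) + (z - x_n) ** 2 / (2.0 * eta)


def gd_step(x_n, eta):
    # Closed-form minimizer of surrogate(., x_n, eta).
    return x_n - eta * grad_F(x_n)


eta = 0.1
x0 = 0.0
x1 = gd_step(x0, eta)

# Run the iteration to see it approach the minimizer of F.
x = x0
for _ in range(100):
    x = gd_step(x, eta)
```

Evaluating `surrogate` slightly to either side of `x1` gives larger values, which is the sense in which the gradient step "minimizes the first-order approximation with added proximity term".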

Hinge Loss

In machine learning, the hinge loss is a loss function used for training classifiers. The hinge loss is used for "maximum-margin" classification, most notably for support vector machines (SVMs). For an intended output $t = \pm 1$ and a classifier score $y$, the hinge loss of the prediction $y$ is defined as

$$\ell(y) = \max(0, 1-t \cdot y)$$

Note that $y$ should be the "raw" output of the classifier's decision function, not the predicted class label. For instance, in linear SVMs, $y = \mathbf{w} \cdot \mathbf{x} + b$, where $(\mathbf{w},b)$ are the parameters of the hyperplane and $\mathbf{x}$ is the input variable(s). When $t$ and $y$ have the same sign (meaning $y$ predicts the right class) and $|y| \ge 1$, the hinge loss $\ell(y) = 0$. When they have opposite signs, $\ell(y)$ increases linearly with $y$, and similarly if $|y| < 1$, even if it has the same sign (correct prediction, but not by enough margin). Extensions. While binary SVMs are commonly extended to ...
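
The three cases described above can be sketched directly from the definition max(0, 1 - t * y), where t is the intended output (+1 or -1) and y is the raw classifier score. The function name `hinge_loss` and the sample scores are illustrative.

```python
def hinge_loss(t, y):
    """Hinge loss for intended output t (+1 or -1) and raw score y."""
    return max(0.0, 1.0 - t * y)


confident_correct = hinge_loss(+1, 2.0)   # same sign, |y| >= 1: no loss
inside_margin = hinge_loss(+1, 0.5)       # correct sign, but not enough margin
wrong_side = hinge_loss(-1, 0.5)          # opposite signs: loss grows with |y|
```

Passing a predicted class label instead of the raw score would collapse the margin information, which is why the definition insists on the raw output.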

Support Vector Machine

In machine learning, support vector machines (SVMs, also support vector networks) are supervised learning models with associated learning algorithms that analyze data for classification and regression analysis. Developed at AT&T Bell Laboratories by Vladimir Vapnik with colleagues (Boser et al., 1992, Guyon et al., 1993, Cortes and Vapnik, 1995, Vapnik et al., 1997), SVMs are one of the most robust prediction methods, being based on statistical learning frameworks or VC theory proposed by Vapnik (1982, 1995) and Chervonenkis (1974). Given a set of training examples, each marked as belonging to one of two categories, an SVM training algorithm builds a model that assigns new examples to one category or the other, making it a non-probabilistic binary linear classifier (although methods such as Platt scaling exist to use SVM in a probabilistic classification setting). SVM maps training examples to points in space so as to maximise the width of the gap between the two categorie ...

Subgradient

In mathematics, the subderivative, subgradient, and subdifferential generalize the derivative to convex functions which are not necessarily differentiable. Subderivatives arise in convex analysis, the study of convex functions, often in connection to convex optimization. Let $f:I \to \mathbb{R}$ be a real-valued convex function defined on an open interval of the real line. Such a function need not be differentiable at all points: for example, the absolute value function $f(x)=|x|$ is nondifferentiable when $x=0$. However, for any $x_0$ in the domain of the function one can draw a line which goes through the point $(x_0, f(x_0))$ and which is everywhere either touching or below the graph of $f$. The slope of such a line is called a subderivative (because the line is under the graph of $f$). Definition. Rigorously, a subderiv ...
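
For the absolute value example, the set of valid slopes can be written down explicitly. The sketch below returns that set as a closed interval and checks the "line stays on or below the graph" condition at a handful of test points. The function names and the test points are illustrative assumptions. The check is a numerical spot check, not a proof.

```python
def abs_subdifferential(x0):
    """Interval (low, high) of subderivatives of f(x) = |x| at x0."""
    if x0 > 0:
        return (1.0, 1.0)     # differentiable here: only the slope +1
    if x0 < 0:
        return (-1.0, -1.0)   # differentiable here: only the slope -1
    return (-1.0, 1.0)        # at the kink every slope in [-1, 1] works


def is_subderivative(c, x0, test_points):
    # The line through (x0, |x0|) with slope c must not rise above |z|.
    return all(abs(z) >= abs(x0) + c * (z - x0) for z in test_points)


points = [-2.0, -0.5, 0.0, 0.5, 2.0]
slope_ok = is_subderivative(0.5, 0.0, points)       # 0.5 lies in [-1, 1]
slope_too_steep = is_subderivative(1.5, 0.0, points)
```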

Greedy Algorithm

A greedy algorithm is any algorithm that follows the problem-solving heuristic of making the locally optimal choice at each stage. In many problems, a greedy strategy does not produce an optimal solution, but a greedy heuristic can yield locally optimal solutions that approximate a globally optimal solution in a reasonable amount of time. For example, a greedy strategy for the travelling salesman problem (which is of high computational complexity) is the following heuristic: "At each step of the journey, visit the nearest unvisited city." This heuristic does not intend to find the best solution, but it terminates in a reasonable number of steps; finding an optimal solution to such a complex problem typically requires unreasonably many steps. In mathematical optimization, greedy algorithms optimally solve combinatorial problems having the properties of matroids and give constant-factor approximations to optimization problems with the submodular structure. Specifics. Greedy algori ...
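
The nearest-unvisited-city heuristic quoted above can be sketched as follows, assuming cities are points in the plane and distance is Euclidean. The function name `nearest_neighbor_tour` and the coordinates are illustrative.

```python
import math


def nearest_neighbor_tour(cities, start=0):
    """Greedy tour: from the current city, always go to the nearest unvisited one."""
    unvisited = set(range(len(cities)))
    unvisited.remove(start)
    tour = [start]
    while unvisited:
        here = cities[tour[-1]]
        # Locally optimal choice: the closest city not yet visited.
        nxt = min(unvisited, key=lambda i: math.dist(here, cities[i]))
        unvisited.remove(nxt)
        tour.append(nxt)
    return tour


# Hypothetical cities placed on a line, listed out of order.
cities = [(0.0, 0.0), (3.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
tour = nearest_neighbor_tour(cities)
```

The loop makes one pass per city and never revisits a decision, so it finishes quickly, but nothing in it guarantees that the resulting tour is the shortest one.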

Randomization

Randomization is the process of making something random. Randomization is not haphazard; instead, a random process is a sequence of random variables describing a process whose outcomes do not follow a deterministic pattern, but follow an evolution described by probability distributions. For example, a random sample of individuals from a population refers to a sample where every individual has a known probability of being sampled. This would be contrasted with nonprobability sampling where arbitrary individuals are selected. In various contexts, randomization may involve:

* generating a random permutation of a sequence (such as when shuffling cards);
* selecting a random sample of a population (important in statistical sampling);
* allocating experimental units via random assignment to a treatment or control condition;
* generating random numbers (random number generation); or
* transforming a data stream (such as when using a scrambler in telecommunications).

Applications. Rando ...
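
As one concrete instance of generating a random permutation, the sketch below uses the Fisher-Yates shuffle, a standard technique that is not named in the text above. The function name, the seed, and the ten-element "deck" are illustrative assumptions.

```python
import random


def fisher_yates_shuffle(items, rng):
    """Return a uniformly random permutation of items (Fisher-Yates sketch)."""
    result = list(items)                 # copy so the input is left unchanged
    for i in range(len(result) - 1, 0, -1):
        j = rng.randint(0, i)            # pick a position in 0..i, inclusive
        result[i], result[j] = result[j], result[i]
    return result


deck = list(range(10))                   # hypothetical deck of ten cards
shuffled = fisher_yates_shuffle(deck, random.Random(42))
```

Passing in a seeded generator makes the shuffle reproducible, which is useful in testing. For real use, Python's built-in `random.shuffle` performs the same job in place.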

Concavification

In mathematics, concavification is the process of converting a non-concave function to a concave function. A related concept is convexification, which converts a non-convex function to a convex function. It is especially important in economics and mathematical optimization. Concavification of a quasiconcave function by monotone transformation. An important special case of concavification is where the original function is a quasiconcave function. It is known that:

* Every concave function is quasiconcave, but the opposite is not true.
* Every monotone transformation of a quasiconcave function is also quasiconcave. For example, if $f(x)$ is quasiconcave and $g(\cdot)$ is a monotonically increasing function, then $g(f(x))$ is also quasiconcave.

Therefore, a natural question is: given a quasiconcave function $f(x)$, does there exist a monotonically increasing $g(\cdot)$ such that $g(f(x))$ is concave? Positive and negative examples. As a positive example, consider the function f(x ...

Regret

Regret is the emotion of wishing one had made a different decision in the past, because the consequences of the decision were unfavorable. Regret is related to perceived opportunity. Its intensity varies over time after the decision, in regard to action versus inaction, and in regard to self-control at a particular age. The self-recrimination which comes with regret is thought to spur corrective action and adaptation. In Western societies, adults' strongest regrets concern their educational choices. Definition. Regret was defined by psychologists in the late 1990s as a "negative emotion predicated on an upward, self-focused, counterfactual inference". Another definition is "an aversive emotional state elicited by a discrepancy in the outcome values of chosen vs. unchosen actions". Regret differs from remorse in that people can regret things beyond their control, but remorse indicates a sense of responsibility for the situation. For example, a person can fee ...