
Markov Chains

A Markov chain or Markov process is a stochastic model describing a sequence of possible events in which the probability of each event depends only on the state attained in the previous event. Informally, this may be thought of as, "What happens next depends only on the state of affairs now." A countably infinite sequence, in which the chain moves state at discrete time steps, gives a discrete-time Markov chain (DTMC). A continuous-time process is called a continuous-time Markov chain (CTMC). Markov chains are named after the Russian mathematician Andrey Markov. Markov chains have many applications as statistical models of real-world processes, such as studying cruise control systems in motor vehicles, queues or lines of customers arriving at an airport, currency exchange rates and animal population dynamics. Markov processes are the basis for general stochastic simulation methods known as Markov chain Monte Carlo, which are used for simulating sampling from complex probability distr ...
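As a minimal sketch of a discrete-time Markov chain, the following simulates a two-state chain in which the next state is drawn using only the current state. The weather states, the transition probabilities, and the names `TRANSITIONS`, `step`, and `simulate` are illustrative assumptions, not taken from the text above.

```python
import random

# Hypothetical two-state chain; states and probabilities are assumptions
# chosen for illustration only. Each row sums to 1.
TRANSITIONS = {
    "sunny": {"sunny": 0.9, "rainy": 0.1},
    "rainy": {"sunny": 0.5, "rainy": 0.5},
}


def step(state, rng):
    """Draw the next state from the row of the current state only."""
    next_states = list(TRANSITIONS[state])
    weights = [TRANSITIONS[state][s] for s in next_states]
    return rng.choices(next_states, weights=weights, k=1)[0]


def simulate(start, n_steps, seed=0):
    """Return a path of n_steps + 1 states beginning at `start`."""
    rng = random.Random(seed)  # seeded so repeated runs give the same path
    path = [start]
    for _ in range(n_steps):
        path.append(step(path[-1], rng))
    return path


path = simulate("sunny", 10)
print(path)
```

Note that `step` never looks at earlier entries of `path`; that restriction is what makes the simulated sequence a Markov chain.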

Physics

Physics is the natural science that studies matter, its fundamental constituents, its motion and behavior through space and time, and the related entities of energy and force. "Physical science is that department of knowledge which relates to the order of nature, or, in other words, to the regular succession of events." Physics is one of the most fundamental scientific disciplines, with its main goal being to understand how the universe behaves. "Physics is one of the most fundamental of the sciences. Scientists of all disciplines use the ideas of physics, including chemists who study the structure of molecules, paleontologists who try to reconstruct how dinosaurs walked, and climatologists who study how human activities affect the atmosphere and oceans. Physics is also the foundation of all engineering and technology. No engineer could design a flat-screen TV, an interplanetary spacecraft, or even a better mousetrap without first understanding the basic laws of physics ...

Continuous Or Discrete Variable

In mathematics and statistics, a quantitative variable may be continuous or discrete, depending on whether its values are typically obtained by measuring or counting, respectively. If it can take on two particular real values such that it can also take on all real values between them (even values that are arbitrarily close together), the variable is continuous in that interval. If it can take on a value such that there is a non-infinitesimal gap on each side of it containing no values that the variable can take on, then it is discrete around that value. In some contexts a variable can be discrete in some ranges of the number line and continuous in others.

Continuous variable

A continuous variable is a variable whose value is obtained by measuring, i.e., one which can take on an uncountable set of values. For example, a variable over a non-empty range of the real numbers is continuous if it can take on any value in that range. The reason is that any range of real numbers between a and ...

State Space

A state space is the set of all possible configurations of a system. It is a useful abstraction for reasoning about the behavior of a given system and is widely used in the fields of artificial intelligence and game theory. For instance, the toy problem Vacuum World has a discrete finite state space in which there is a limited set of configurations that the vacuum and dirt can be in. A "counter" system, where states are the natural numbers starting at 1 and are incremented over time, has an infinite discrete state space. The angular position of an undamped pendulum is a continuous (and therefore infinite) state space.

Definition

In the theory of dynamical systems, the state space of a discrete system defined by a function ƒ can be modeled as a directed graph where each possible state of the dynamical system is represented by a vertex with a directed edge from a to b if and only if ƒ(a) = b. This is known as a state diagram. For a co ...
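The directed-graph view of a discrete state space can be sketched in a few lines. The example below assumes a finite state set and a hypothetical update function (a counter modulo 4); the helper names `state_diagram` and `trajectory` are introduced here for illustration only.

```python
def state_diagram(states, f):
    """Build the state diagram: one edge a -> f(a) for every state a."""
    states = list(states)
    edges = {a: f(a) for a in states}
    # f must map the state set into itself for the graph to be well defined.
    missing = set(edges.values()) - set(states)
    if missing:
        raise ValueError(f"f leaves the state space: {sorted(missing)}")
    return edges


def trajectory(edges, start, n_steps):
    """Follow the single outgoing edge of each state for n_steps steps."""
    path = [start]
    for _ in range(n_steps):
        path.append(edges[path[-1]])
    return path


# Hypothetical system: a counter that wraps around after 3.
edges = state_diagram(range(4), lambda a: (a + 1) % 4)
print(edges)                    # {0: 1, 1: 2, 2: 3, 3: 0}
print(trajectory(edges, 0, 5))  # [0, 1, 2, 3, 0, 1]
```

Because the system is defined by a function, every vertex has exactly one outgoing edge, so a dictionary is enough to represent the graph.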

Independence (Probability Theory)

Independence is a fundamental notion in probability theory, as in statistics and the theory of stochastic processes. Two events are independent, statistically independent, or stochastically independent if, informally speaking, the occurrence of one does not affect the probability of occurrence of the other or, equivalently, does not affect the odds. Similarly, two random variables are independent if the realization of one does not affect the probability distribution of the other. When dealing with collections of more than two events, two notions of independence need to be distinguished. The events are called pairwise independent if any two events in the collection are independent of each other, while mutual independence (or collective independence) of events means, informally speaking, that each event is independent of any combination of other events in the collection. A similar notion exists for collections of random variables. Mutual independence implies pairwise independe ...
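The difference between pairwise and mutual independence can be checked on a small example. The sketch below uses a standard textbook construction that is not taken from the text above: two fair coin tosses, with events A (first toss is heads), B (second toss is heads), and C (the two tosses differ). All names are illustrative.

```python
from fractions import Fraction
from itertools import product

# Sample space: two fair coin tosses, four equally likely outcomes.
outcomes = list(product("HT", repeat=2))


def prob(event):
    """Exact probability of an event given as a predicate on outcomes."""
    return Fraction(sum(1 for o in outcomes if event(o)), len(outcomes))


def A(o):
    return o[0] == "H"    # first toss is heads


def B(o):
    return o[1] == "H"    # second toss is heads


def C(o):
    return o[0] != o[1]   # the two tosses differ


# Pairwise independence: P(X and Y) == P(X) * P(Y) for every pair.
pairwise = all(
    prob(lambda o, x=x, y=y: x(o) and y(o)) == prob(x) * prob(y)
    for x, y in [(A, B), (A, C), (B, C)]
)

# Mutual independence additionally requires the triple product rule.
p_all = prob(lambda o: A(o) and B(o) and C(o))
mutual = pairwise and p_all == prob(A) * prob(B) * prob(C)

print(pairwise, mutual)  # True False
```

Here every pair of events is independent, yet A and B together determine C, so the three events are not mutually independent.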

Conditional Probability

In probability theory, conditional probability is a measure of the probability of an event occurring, given that another event (by assumption, presumption, assertion or evidence) has already occurred. This particular method relies on event B occurring with some sort of relationship with another event A. In this event, the event B can be analyzed by a conditional probability with respect to A. If the event of interest is A and the event B is known or assumed to have occurred, "the conditional probability of A given B", or "the probability of A under the condition B", is usually written as $P(A \mid B)$ or occasionally $P_B(A)$. This can also be understood as the fraction of the probability of B that intersects with A: $P(A \mid B) = \frac{P(A \cap B)}{P(B)}$. For example, the probability that any given person has a cough on any given day may be only 5%. But if we know or assume that the person is sick, then they are much more likely to be coughing. For example, the conditional probability that someone unwell (sick) is coughing might b ...
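As a worked illustration of the ratio definition, P(A | B) = P(A and B) / P(B), the sketch below computes a conditional probability from counts. Only the 5% overall cough rate comes from the text; the population size and the other counts are hypothetical values chosen for the example, and `conditional` is an illustrative helper name.

```python
from fractions import Fraction


def conditional(p_a_and_b, p_b):
    """Return P(A | B) = P(A and B) / P(B); undefined when P(B) is zero."""
    if p_b == 0:
        raise ValueError("P(B) must be positive")
    return p_a_and_b / p_b


# Hypothetical counts for one day in a population of 1000 people.
total = 1000
coughing = 50            # 5% cough overall, as in the text
sick = 40                # assumption
sick_and_coughing = 30   # assumption

p_cough = Fraction(coughing, total)
p_cough_given_sick = conditional(
    Fraction(sick_and_coughing, total), Fraction(sick, total)
)

print(p_cough)             # 1/20
print(p_cough_given_sick)  # 3/4
```

With these made-up counts, conditioning on sickness raises the probability of coughing from 1/20 to 3/4.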

Memorylessness

In probability and statistics, memorylessness is a property of certain probability distributions. It usually refers to the cases when the distribution of a "waiting time" until a certain event does not depend on how much time has elapsed already. To model memoryless situations accurately, we must constantly 'forget' which state the system is in: the probabilities would not be influenced by the history of the process. Only two kinds of distributions are memoryless: geometric distributions of non-negative integers and the exponential distributions of non-negative real numbers. In the context of Markov processes, memorylessness refers to the Markov property, an even stronger assumption which implies that the properties of random variables related to the future depend only on relevant information about the current time, not on information from further in the past. The present article describes the use outside the Markov property.

Waiting time examples

With memory

Most phenomen ...
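The memoryless property of the geometric distribution can be verified exactly. The sketch below assumes X counts the failures before the first success, so X takes values 0, 1, 2, ... and P(X >= k) = (1 - p)^k; the success probability `p` and the function names are illustrative choices.

```python
from fractions import Fraction


def survival(p, k):
    """P(X >= k) for a geometric variable X counting failures before the first success."""
    return (1 - p) ** k


def conditional_survival(p, m, n):
    """P(X >= m + n | X >= m), computed from the ratio definition."""
    return survival(p, m + n) / survival(p, m)


p = Fraction(1, 4)  # hypothetical success probability per trial

# Memorylessness: having already waited m steps does not change the
# distribution of the remaining wait.
for m in range(5):
    for n in range(5):
        assert conditional_survival(p, m, n) == survival(p, n)

print(conditional_survival(p, 3, 2), survival(p, 2))  # 9/16 9/16
```

Using `Fraction` keeps the comparison exact, so the equality check does not depend on floating-point rounding.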

Markov Property

In probability theory and statistics, the term Markov property refers to the memoryless property of a stochastic process. It is named after the Russian mathematician Andrey Markov. The term strong Markov property is similar to the Markov property, except that the meaning of "present" is defined in terms of a random variable known as a stopping time. The term Markov assumption is used to describe a model where the Markov property is assumed to hold, such as a hidden Markov model. A Markov random field extends this property to two or more dimensions or to random variables defined for an interconnected network of items. An example of a model for such a field is the Ising model. A discrete-time stochastic process satisfying the Markov property is known as a Markov chain.

Introduction

A stochastic process has the Markov property if the conditional probability distribution of future states of the process (conditional on both past and present values) depends only upon the pres ...
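For the discrete-time case, the Markov property is commonly written as the following identity. The notation is an assumption introduced here: $X_0, X_1, \ldots$ is the process, the state space is countable, and the identity is required whenever the conditioning event has positive probability.

```latex
\Pr(X_{n+1} = x \mid X_0 = x_0, X_1 = x_1, \ldots, X_n = x_n)
  = \Pr(X_{n+1} = x \mid X_n = x_n)
```

The left-hand side conditions on the whole history, the right-hand side only on the present state, and the property says the two agree.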

Speech Processing

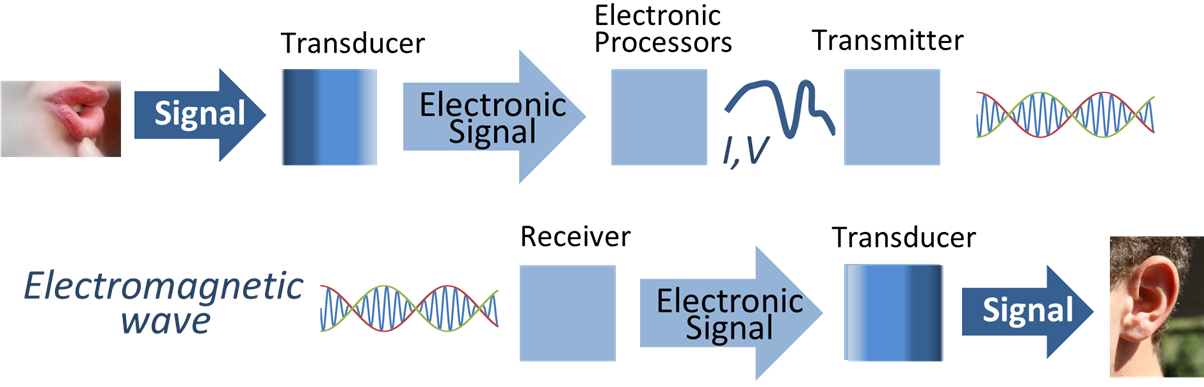

Speech processing is the study of speech signals and the processing methods of signals. The signals are usually processed in a digital representation, so speech processing can be regarded as a special case of digital signal processing, applied to speech signals. Aspects of speech processing include the acquisition, manipulation, storage, transfer and output of speech signals. The input is called speech recognition and the output is called speech synthesis.

History

Early attempts at speech processing and recognition were primarily focused on understanding a handful of simple phonetic elements such as vowels. In 1952, three researchers at Bell Labs, Stephen Balashek, R. Biddulph, and K. H. Davis, developed a system that could recognize digits spoken by a single speaker. Pioneering works in the field of speech recognition using analysis of its spectrum were reported in the 1940s. Linear predictive coding (LPC), a speech processing algorithm, was first proposed by Fumitada Itakura of ...

Information Theory

Information theory is the scientific study of the quantification, storage, and communication of information. The field was originally established by the works of Harry Nyquist and Ralph Hartley, in the 1920s, and Claude Shannon in the 1940s. The field is at the intersection of probability theory, statistics, computer science, statistical mechanics, information engineering, and electrical engineering. A key measure in information theory is entropy. Entropy quantifies the amount of uncertainty involved in the value of a random variable or the outcome of a random process. For example, identifying the outcome of a fair coin flip (with two equally likely outcomes) provides less information (lower entropy) than specifying the outcome from a roll of a die (with six equally likely outcomes). Some other important measures in information theory are mutual information, channel capacity, error exponents, and relative entropy. Important sub-fields of information theory include s ...
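The coin-versus-die comparison can be checked numerically with the Shannon entropy formula H = -sum(p * log2(p)), measured in bits. This is a minimal sketch; the function name `entropy` is an illustrative choice.

```python
import math


def entropy(probs):
    """Shannon entropy in bits of a discrete distribution given as probabilities."""
    # Terms with p == 0 contribute nothing and are skipped to avoid log2(0).
    return -sum(p * math.log2(p) for p in probs if p > 0)


coin = entropy([0.5, 0.5])   # fair coin: two equally likely outcomes
die = entropy([1 / 6] * 6)   # fair die: six equally likely outcomes

print(coin)        # 1.0
print(coin < die)  # True
```

For n equally likely outcomes the entropy equals log2(n), so the die's entropy is log2(6), roughly 2.585 bits, against 1 bit for the coin.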

Signal Processing

Signal processing is an electrical engineering subfield that focuses on analyzing, modifying and synthesizing signals, such as sound, images, and scientific measurements. Signal processing techniques are used to optimize transmissions, improve digital storage efficiency, correct distorted signals, enhance subjective video quality, and detect or pinpoint components of interest in a measured signal.

History

According to Alan V. Oppenheim and Ronald W. Schafer, the principles of signal processing can be found in the classical numerical analysis techniques of the 17th century. They further state that the digital re ...