
Longest Processing Time

Longest-processing-time-first (LPT) is a greedy algorithm for job scheduling. The input to the algorithm is a set of ''jobs'', each of which has a specific processing time. There is also a number ''m'' specifying the number of ''machines'' that can process the jobs. The LPT algorithm works as follows: (1) Order the jobs by descending processing time, so that the job with the longest processing time is first. (2) Schedule each job in this sequence on a machine whose current load (the total processing time of the jobs already scheduled on it) is smallest. Step 2 of the algorithm is essentially the list-scheduling (LS) algorithm. The difference is that LS loops over the jobs in an arbitrary order, while LPT pre-orders them by descending processing time. LPT was first analyzed by Ronald Graham in the 1960s in the context of the identical-machines scheduling problem. Later, it was applied to many other variants of the problem. LPT can also be described in a more abstract way, a ...
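
The two steps can be sketched in Python as follows. This is a minimal illustration and not a reference implementation: the function name `lpt_schedule`, the use of a heap to find the least-loaded machine, and the tie-breaking by lowest machine index are all assumptions made for the example.

```python
import heapq

def lpt_schedule(times, m):
    """Schedule jobs on m identical machines with LPT.

    Returns (makespan, assignment), where assignment[i] lists the
    processing times placed on machine i.
    """
    # Min-heap of (current load, machine index); ties go to the lower index.
    heap = [(0, i) for i in range(m)]
    assignment = [[] for _ in range(m)]
    # Step 1: visit jobs in descending order of processing time.
    for t in sorted(times, reverse=True):
        # Step 2: put the job on a machine with the smallest current load.
        load, i = heapq.heappop(heap)
        assignment[i].append(t)
        heapq.heappush(heap, (load + t, i))
    makespan = max(load for load, _ in heap)
    return makespan, assignment

makespan, assignment = lpt_schedule([4, 5, 6, 7, 8], m=2)
print(makespan, assignment)
```

On this input LPT reaches a makespan of 17, while splitting the jobs as 8+7 and 6+5+4 gives 15, so the greedy result is close to the optimum but not guaranteed to match it.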

Greedy Algorithm

A greedy algorithm is any algorithm that follows the problem-solving heuristic of making the locally optimal choice at each stage. In many problems, a greedy strategy does not produce an optimal solution, but a greedy heuristic can yield locally optimal solutions that approximate a globally optimal solution in a reasonable amount of time. For example, a greedy strategy for the travelling salesman problem (which is of high computational complexity) is the following heuristic: "At each step of the journey, visit the nearest unvisited city." This heuristic does not intend to find the best solution, but it terminates in a reasonable number of steps; finding an optimal solution to such a complex problem typically requires unreasonably many steps. In mathematical optimization, greedy algorithms optimally solve combinatorial problems having the properties of matroids and give constant-factor approximations to optimization problems with the submodular structure. Specifics Greedy algo ...
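
The nearest-unvisited-city heuristic quoted above might look like this in Python. It is a sketch under assumptions: cities are taken to be points in the plane with Euclidean distance, and the name `nearest_neighbour_tour` and the tie-breaking by index are chosen for the example only.

```python
import math

def nearest_neighbour_tour(points, start=0):
    """Greedy tour: from the current city, always go to the nearest unvisited one."""
    unvisited = set(range(len(points))) - {start}
    tour = [start]
    while unvisited:
        here = points[tour[-1]]
        # Locally optimal choice: closest remaining city (ties broken by index).
        nxt = min(unvisited, key=lambda i: (math.dist(here, points[i]), i))
        unvisited.remove(nxt)
        tour.append(nxt)
    return tour

print(nearest_neighbour_tour([(0, 0), (10, 0), (1, 0), (5, 0)]))
```

The loop makes one greedy choice per city and never revisits a decision, which is why it terminates quickly but carries no guarantee of finding the shortest tour.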

Uniform-machines Scheduling

Uniform machine scheduling (also called uniformly-related machine scheduling or related machine scheduling) is an optimization problem in computer science and operations research. It is a variant of optimal job scheduling. We are given ''n'' jobs J_1, J_2, ..., J_n of varying processing times, which need to be scheduled on ''m'' different machines. The goal is to minimize the makespan, the total time required to execute the schedule. The time that machine ''i'' needs in order to process job ''j'' is denoted by p_{i,j}. In the general case, the times p_{i,j} are unrelated, and any matrix of positive processing times is possible. In the specific variant called ''uniform machine scheduling'', some machines are ''uniformly'' faster than others. This means that, for each machine ''i'', there is a speed factor s_i, and the run-time of job ''j'' on machine ''i'' is p_{i,j} = p_j / s_i. In the standard three-field notation for optimal job scheduling problems, the uniform-m ...
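
To make the speed-factor model concrete, the following Python sketch computes the makespan of a given assignment when job ''j'' takes p_j / s_i on machine ''i''. The function name `uniform_makespan` and the representation of an assignment as a list of machine indices are assumptions for illustration; the sketch only evaluates a schedule and does not search for a good one.

```python
def uniform_makespan(assignment, p, s):
    """Makespan of a schedule on uniform machines.

    assignment[j] is the machine index chosen for job j,
    p[j] is the job's base processing time, s[i] the machine's speed factor.
    """
    loads = [0.0] * len(s)
    for j, i in enumerate(assignment):
        # Run-time of job j on machine i is p_j / s_i.
        loads[i] += p[j] / s[i]
    return max(loads)

# Machine 0 is twice as fast as machine 1.
print(uniform_makespan([0, 0, 1], p=[6, 4, 2], s=[2, 1]))
```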

Greedy Number Partitioning

In computer science, greedy number partitioning is a class of greedy algorithms for multiway number partitioning. The input to the algorithm is a set ''S'' of numbers, and a parameter ''k''. The required output is a partition of ''S'' into ''k'' subsets, such that the sums in the subsets are as nearly equal as possible. Greedy algorithms process the numbers sequentially, and insert the next number into a bin in which the sum of numbers is currently smallest. Approximate algorithms The simplest greedy partitioning algorithm is called list scheduling. It just processes the inputs in any order they arrive. It always returns a partition in which the largest sum is at most 2 - \frac{1}{k} times the optimal (minimum) largest sum. This heuristic can be used as an online algorithm, when the order in which the items arrive cannot be controlled. An improved greedy algorithm is called LPT scheduling. It processes the inputs by descending order of value, from large to small. Since it needs to p ...
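
A minimal Python sketch of list scheduling as described here, processing the numbers in arrival order. The name `greedy_partition` and the choice of the lowest-indexed bin on ties are assumptions for the example.

```python
def greedy_partition(numbers, k):
    """List scheduling: put each number, in arrival order, into the bin
    whose current sum is smallest (lowest index wins ties)."""
    bins = [[] for _ in range(k)]
    sums = [0] * k
    for x in numbers:
        i = min(range(k), key=lambda b: sums[b])
        bins[i].append(x)
        sums[i] += x
    return bins, sums

bins, sums = greedy_partition([4, 5, 6, 7, 8], k=2)
print(bins, sums)
```

Passing `sorted(numbers, reverse=True)` instead of the raw arrival order turns the same routine into the LPT variant, at the cost of needing all inputs up front.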

Online Algorithm

In computer science, an online algorithm is one that can process its input piece-by-piece in a serial fashion, i.e., in the order that the input is fed to the algorithm, without having the entire input available from the start. In contrast, an offline algorithm is given the whole problem data from the beginning and is required to output an answer which solves the problem at hand. In operations research, the area in which online algorithms are developed is called online optimization. As an example, consider the sorting algorithms selection sort and insertion sort: selection sort repeatedly selects the minimum element from the unsorted remainder and places it at the front, which requires access to the entire input; it is thus an offline algorithm. On the other hand, insertion sort considers one input element per iteration and produces a partial solution without considering future elements. Thus insertion sort is an online algorithm. Note that the final result of an insertion sort ...
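
The online character of insertion sort can be illustrated with a small Python sketch that accepts one element at a time and always holds a sorted partial result. The class name `OnlineSorter` and its `feed` method are hypothetical, and the standard-library `bisect.insort` stands in for the hand-written insertion step.

```python
import bisect

class OnlineSorter:
    """Keeps everything seen so far in sorted order, one element at a time."""

    def __init__(self):
        self.items = []

    def feed(self, x):
        # Insert x into its place among the elements seen so far;
        # no knowledge of future inputs is needed.
        bisect.insort(self.items, x)
        return list(self.items)

sorter = OnlineSorter()
for value in [3, 1, 2]:
    print(sorter.feed(value))
```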

3-partition Problem

The 3-partition problem is a strongly NP-complete problem in computer science. The problem is to decide whether a given multiset of integers can be partitioned into triplets that all have the same sum. More precisely, the input to the problem is a multiset ''S'' of ''n'' = 3''m'' positive integers whose total sum is ''mT'', and the output is whether or not there exists a partition of ''S'' into ''m'' triplets S_1, S_2, …, S_m such that the sum of the numbers in each one is equal to ''T''. The S_1, S_2, …, S_m must form a partition of ''S'' in the sense that they are disjoint and they cover ''S''. The 3-partition problem remains strongly NP-complete under the restriction that every integer in ''S'' is strictly between ''T''/4 and ''T''/2. Example (1) The set S = {…} can be partitioned into the four sets {…}, {…}, {…}, {…}, each of which sums to ''T'' = 90. (2) The set S = {…} can be partitioned into the two sets {…}, {…}, each of which sums to ''T'' = 15. (3) (ever ...
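
For small inputs the decision problem can be explored with a brute-force search. The Python sketch below is an illustration only: the function name `three_partition` and the sample multiset are assumptions, and the exhaustive search takes exponential time, which is consistent with the problem being strongly NP-complete.

```python
from itertools import combinations

def three_partition(nums):
    """Return a list of triplets with equal sums, or None if none exists."""
    nums = sorted(nums, reverse=True)
    if len(nums) % 3:
        return None
    m = len(nums) // 3
    if m == 0:
        return []
    total = sum(nums)
    if total % m:
        return None
    target = total // m  # the common sum T

    def solve(remaining):
        if not remaining:
            return []
        first, rest = remaining[0], remaining[1:]
        # The largest remaining number must go somewhere: try every pair with it.
        for i, j in combinations(range(len(rest)), 2):
            if first + rest[i] + rest[j] == target:
                leftover = [x for k, x in enumerate(rest) if k not in (i, j)]
                sub = solve(leftover)
                if sub is not None:
                    return [(first, rest[i], rest[j])] + sub
        return None

    return solve(nums)

# Illustrative multiset (chosen for this sketch) with m = 2 and T = 15.
print(three_partition([1, 2, 5, 6, 7, 9]))
```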

Balanced Partition Problem

Balanced number partitioning is a variant of multiway number partitioning in which there are constraints on the number of items allocated to each set. The input to the problem is a set of ''n'' items of different sizes, and two integers ''m'', ''k''. The output is a partition of the items into ''m'' subsets, such that the number of items in each subset is at most ''k''. Subject to this, it is required that the sums of sizes in the ''m'' subsets are as similar as possible. An example application is identical-machines scheduling where each machine has a job-queue that can hold at most ''k'' jobs. The problem has applications also in manufacturing of VLSI chips, and in assigning tools to machines in flexible manufacturing systems. In the standard three-field notation for optimal job scheduling problems, the problem of minimizing the largest sum is sometimes denoted by "P , # ≤ k , ''C''max". The middle field "# ≤ k" denote ...

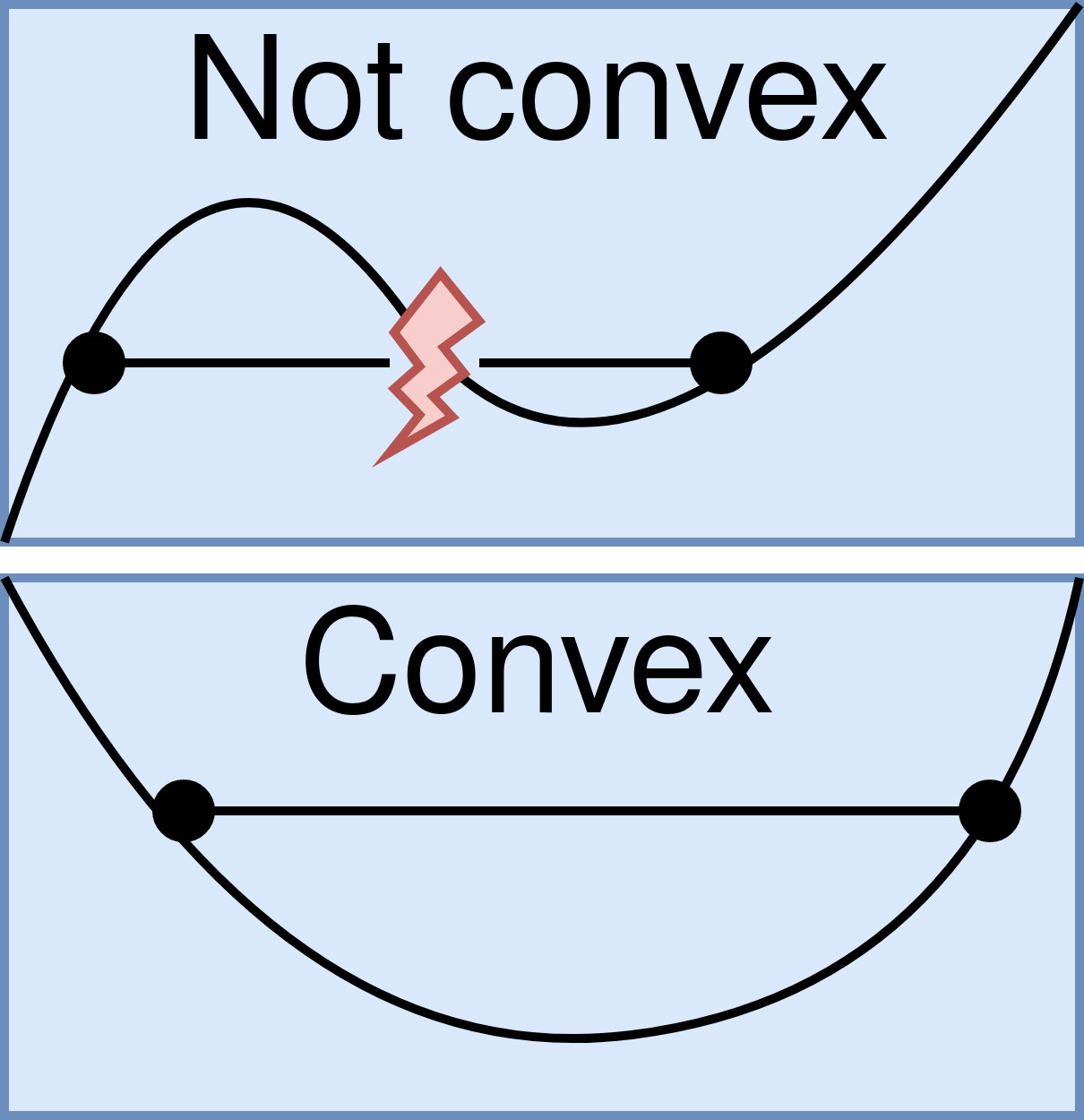

Convex Function

In mathematics, a real-valued function is called convex if the line segment between any two points on the graph of the function lies above the graph between the two points. Equivalently, a function is convex if its epigraph (the set of points on or above the graph of the function) is a convex set. A twice-differentiable function of a single variable is convex if and only if its second derivative is nonnegative on its entire domain. Well-known examples of convex functions of a single variable include the quadratic function x^2 and the exponential function e^x. In simple terms, a convex function refers to a function whose graph is shaped like a cup (∪), while a concave function's graph is shaped like a cap (∩). Convex functions play an important role in many areas of mathematics. They are especially important in the study of optimization problems where they are distinguished by a number of convenient properties. For instance, a strictly convex function on an open set has n ...
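
As a brief worked check of the second-derivative criterion on the two examples named above (the labels f and g are introduced here for illustration):

```latex
\begin{aligned}
f(x) &= x^2: & f''(x) &= 2 \ge 0 \quad \text{for all } x \in \mathbb{R},\\
g(x) &= e^x: & g''(x) &= e^x > 0 \quad \text{for all } x \in \mathbb{R}.
\end{aligned}
```

Both second derivatives are nonnegative on the whole real line, so both functions are convex there.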

Negative Exponential Distribution

In probability theory and statistics, the exponential distribution is the probability distribution of the time between events in a Poisson point process, i.e., a process in which events occur continuously and independently at a constant average rate. It is a particular case of the gamma distribution. It is the continuous analogue of the geometric distribution, and it has the key property of being memoryless. In addition to being used for the analysis of Poisson point processes it is found in various other contexts. The exponential distribution is not the same as the class of exponential families of distributions. This is a large class of probability distributions that includes the exponential distribution as one of its members, but also includes many other distributions, like the normal, binomial, gamma, and Poisson distributions. Definitions Probability density function The probability density function (pdf) of an exponential distribution is : f(x;\lambda) = \begin \lamb ...
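
The memoryless property mentioned above can be checked numerically. The Python sketch below assumes the standard rate parameterization, with density λ·exp(−λx) for x ≥ 0 and zero otherwise; the function names `exp_pdf` and `exp_survival` are chosen for the example.

```python
import math

def exp_pdf(x, lam):
    """Density of the exponential distribution with rate lam (assumed form)."""
    return lam * math.exp(-lam * x) if x >= 0 else 0.0

def exp_survival(x, lam):
    """P(X > x) for an exponential random variable with rate lam."""
    return math.exp(-lam * x) if x >= 0 else 1.0

lam, s, t = 0.5, 1.0, 2.0
# Memorylessness: P(X > s + t | X > s) equals P(X > t).
conditional = exp_survival(s + t, lam) / exp_survival(s, lam)
print(math.isclose(conditional, exp_survival(t, lam)))
```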

Optimal Job Scheduling

Optimal job scheduling is a class of optimization problems related to scheduling. The inputs to such problems are a list of ''jobs'' (also called ''processes'' or ''tasks'') and a list of ''machines'' (also called ''processors'' or ''workers''). The required output is a ''schedule'': an assignment of jobs to machines. The schedule should optimize a certain ''objective function''. In the literature, problems of optimal job scheduling are often called machine scheduling, processor scheduling, multiprocessor scheduling, or just scheduling. There are many different problems of optimal job scheduling, differing in the nature of jobs, the nature of machines, the restrictions on the schedule, and the objective function. A convenient notation for optimal scheduling problems was introduced by Ronald Graham, Eugene Lawler, Jan Karel Lenstra and Alexander Rinnooy Kan. It consists of three fields: α, β and γ. Each field may be a comma-separated list of words. The α field describes t ...

Almost Surely

In probability theory, an event is said to happen almost surely (sometimes abbreviated as a.s.) if it happens with probability 1 (or Lebesgue measure 1). In other words, the set of possible exceptions may be non-empty, but it has probability 0. The concept is analogous to the concept of "almost everywhere" in measure theory. In probability experiments on a finite sample space, there is no difference between ''almost surely'' and ''surely'' (since having a probability of 1 often entails including all the sample points). However, this distinction becomes important when the sample space is an infinite set, because an infinite set can have non-empty subsets of probability 0. Some examples of the use of this concept include the strong and uniform versions of the law of large numbers, and the continuity of the paths of Brownian motion. The terms almost certainly (a.c.) and almost always (a.a.) are also used. Almost never describes the opposite of ''almost surely'': an event ...