
Linear Probing

Linear probing is a scheme in computer programming for resolving collisions in hash tables, data structures for maintaining a collection of key–value pairs and looking up the value associated with a given key. It was invented in 1954 by Gene Amdahl, Elaine M. McGraw, and Arthur Samuel and first analyzed in 1963 by Donald Knuth. Along with quadratic probing and double hashing, linear probing is a form of open addressing. In these schemes, each cell of a hash table stores a single key–value pair. When the hash function causes a collision by mapping a new key to a cell of the hash table that is already occupied by another key, linear probing searches the table for the closest following free location and inserts the new key there. Lookups are performed in the same way, by searching the table sequentially starting at the position given by the hash function, until finding a cell with a matching key or an empty cell. As one source writes, "Hash tables are the most commonly used nontrivial ...
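
The insertion and lookup procedure described in this entry can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the class name `LinearProbingTable`, the fixed capacity, and the use of Python's built-in `hash` are all assumptions made for the example, and deletion and resizing are left out.

```python
class LinearProbingTable:
    """Toy open-addressing table: each cell holds one (key, value) pair or None."""

    def __init__(self, capacity=8):
        self.slots = [None] * capacity

    def _probe(self, key):
        # Visit cells sequentially from the hashed position, wrapping around once.
        start = hash(key) % len(self.slots)
        for step in range(len(self.slots)):
            yield (start + step) % len(self.slots)

    def insert(self, key, value):
        # Take the closest following free cell (or overwrite the same key).
        for i in self._probe(key):
            if self.slots[i] is None or self.slots[i][0] == key:
                self.slots[i] = (key, value)
                return i
        raise RuntimeError("table is full")

    def lookup(self, key):
        # Stop at a matching key or at the first empty cell.
        for i in self._probe(key):
            if self.slots[i] is None:
                return None
            if self.slots[i][0] == key:
                return self.slots[i][1]
        return None


table = LinearProbingTable(capacity=8)
first = table.insert(1, "a")   # small ints hash to themselves in CPython
second = table.insert(9, "b")  # 9 % 8 == 1 collides with key 1
third = table.insert(2, "c")   # its home cell is now taken by key 9
```

Here keys 1 and 9 share a home cell, so key 9 is placed one cell later, which in turn pushes key 2 one cell past its own home position.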

MurmurHash

MurmurHash is a non-cryptographic hash function suitable for general hash-based lookup. It was created by Austin Appleby in 2008 and is currently hosted on GitHub along with its test suite, SMHasher. It also exists in a number of variants, all of which have been released into the public domain. The name comes from two basic operations, multiply (MU) and rotate (R), used in its inner loop. Unlike cryptographic hash functions, it is not specifically designed to be difficult to reverse by an adversary, making it unsuitable for cryptographic purposes. Variants: The current version is MurmurHash3, which yields a 32-bit or 128-bit hash value. When using 128 bits, the x86 and x64 versions do not produce the same values, as the algorithms are optimized for their respective platforms. MurmurHash3 was released alongside SMHasher, a hash function test suite. MurmurHash2 yields a 32-bit or 64-bit value. It came in multiple variants, including some that allo ...
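
To make the multiply-and-rotate inner loop concrete, the following is a sketch of the 32-bit MurmurHash3 variant in pure Python. The function name `murmur3_32` is chosen for this example, and the constants and mixing steps follow the commonly published form of the algorithm rather than anything stated in the entry above, so treat it as illustrative and check it against the reference code before relying on it.

```python
def murmur3_32(data: bytes, seed: int = 0) -> int:
    """Sketch of 32-bit MurmurHash3 over a bytes object (little-endian blocks)."""
    c1, c2 = 0xCC9E2D51, 0x1B873593
    mask = 0xFFFFFFFF

    def rotl(x: int, r: int) -> int:
        # 32-bit left rotation
        return ((x << r) | (x >> (32 - r))) & mask

    h = seed & mask
    n_blocks = len(data) // 4

    # Inner loop: multiply, rotate, multiply each 4-byte block, then mix into h.
    for i in range(n_blocks):
        k = int.from_bytes(data[4 * i:4 * i + 4], "little")
        k = (k * c1) & mask
        k = rotl(k, 15)
        k = (k * c2) & mask
        h ^= k
        h = rotl(h, 13)
        h = (h * 5 + 0xE6546B64) & mask

    # Tail: the remaining 1 to 3 bytes get the same multiply-rotate-multiply step.
    tail = data[4 * n_blocks:]
    if tail:
        k = int.from_bytes(tail, "little")
        k = (k * c1) & mask
        k = rotl(k, 15)
        k = (k * c2) & mask
        h ^= k

    # Finalization: fold in the length and scramble the bits.
    h ^= len(data)
    h ^= h >> 16
    h = (h * 0x85EBCA6B) & mask
    h ^= h >> 13
    h = (h * 0xC2B2AE35) & mask
    h ^= h >> 16
    return h


digest = murmur3_32(b"hello")
```

A hash table would typically reduce the result modulo its capacity, for example `murmur3_32(key) % capacity`.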

Stirling's Approximation

In mathematics, Stirling's approximation (or Stirling's formula) is an approximation for factorials. It is a good approximation, leading to accurate results even for small values of n. It is named after James Stirling, though a related but less precise result was first stated by Abraham de Moivre. One way of stating the approximation involves the logarithm of the factorial: \ln(n!) = n\ln n - n + O(\ln n), where the big O notation means that, for all sufficiently large values of n, the difference between \ln(n!) and n\ln n - n will be at most proportional to the logarithm. In computer science applications such as the worst-case lower bound for comparison sorting, it is convenient to use instead the binary logarithm, giving the equivalent form \log_2(n!) = n\log_2 n - n\log_2 e + O(\log_2 n). The error term in either base can be expressed more precisely as \tfrac12\log(2\pi n) + O(\tfrac1n), corresponding to an approximate formula for the factorial itself, n! \sim ...
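
A quick numerical check shows how the logarithmic form and its error term behave. The sketch below assumes the standard closed form \sqrt{2\pi n}\,(n/e)^n for the factorial approximation, which is the formula corresponding to the \tfrac12\log(2\pi n) error term mentioned above; the function names are made up for this example.

```python
import math


def stirling(n: int) -> float:
    """Standard closed form sqrt(2*pi*n) * (n/e)**n (assumed, see lead-in)."""
    return math.sqrt(2 * math.pi * n) * (n / math.e) ** n


def log_remainder(n: int) -> float:
    """ln(n!) minus the leading terms n*ln(n) - n."""
    return math.lgamma(n + 1) - (n * math.log(n) - n)


for n in (1, 5, 10, 100):
    ratio = math.factorial(n) / stirling(n)
    gap = log_remainder(n) - 0.5 * math.log(2 * math.pi * n)
    print(n, ratio, gap)
```

The ratio n!/stirling(n) approaches 1 as n grows, and the gap between the remainder and \tfrac12\ln(2\pi n) shrinks roughly like 1/n, consistent with the O(\tfrac1n) term.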

Chernoff Bound

In probability theory, the Chernoff bound gives exponentially decreasing bounds on tail distributions of sums of independent random variables. Despite being named after Herman Chernoff, the author of the paper it first appeared in, the result is due to Herman Rubin. It is a sharper bound than the first- or second-moment-based tail bounds such as Markov's inequality or Chebyshev's inequality, which only yield power-law bounds on tail decay. However, the Chernoff bound requires the variates to be independent, a condition that is not required by either Markov's inequality or Chebyshev's inequality (although Chebyshev's inequality does require the variates to be pairwise independent). The Chernoff bound is related to the Bernstein inequalities, which were developed earlier, and to Hoeffding's inequality. The generic Chernoff bound for a random variable X is attained by applying Markov's inequality to e^{tX}. This gives a bound in terms of the moment-generating functio ...
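
As a small illustration of the generic bound, the sketch below applies Markov's inequality to e^{tX} for a binomial X, whose moment-generating function is (1 - p + p e^t)^n, and compares the result with the exact upper tail. The choice of a binomial variable, the grid search over t, and all function names are assumptions made for this example.

```python
import math


def binomial_upper_tail(n: int, p: float, a: int) -> float:
    """Exact P(X >= a) for X ~ Binomial(n, p)."""
    return sum(math.comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(a, n + 1))


def chernoff_bound(n: int, p: float, a: int, t: float) -> float:
    """Markov's inequality on exp(t*X): P(X >= a) <= E[exp(t*X)] * exp(-t*a), t > 0."""
    mgf = (1 - p + p * math.exp(t)) ** n
    return mgf * math.exp(-t * a)


def best_chernoff_bound(n: int, p: float, a: int, grid: int = 1000, t_max: float = 5.0) -> float:
    """Tightest bound over a coarse grid of positive t values."""
    return min(chernoff_bound(n, p, a, t_max * i / grid) for i in range(1, grid + 1))


n, p, a = 100, 0.5, 70
exact = binomial_upper_tail(n, p, a)
chernoff = best_chernoff_bound(n, p, a)
markov = n * p / a  # first-moment bound E[X] / a, for comparison
```

For these parameters the Chernoff value is several orders of magnitude below the plain Markov bound, while still lying above the exact tail probability, as any valid upper bound must.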

Load Factor (Computer Science)

In computing, a hash table, also known as a hash map, is a data structure that implements an associative array or dictionary. It is an abstract data type that maps keys to values. A hash table uses a hash function to compute an index, also called a hash code, into an array of buckets or slots, from which the desired value can be found. During lookup, the key is hashed and the resulting hash indicates where the corresponding value is stored. Ideally, the hash function will assign each key to a unique bucket, but most hash table designs employ an imperfect hash function, which might cause hash collisions where the hash function generates the same index for more than one key. Such collisions are typically accommodated in some way. In a well-dimensioned hash table, the average time complexity for each lookup is independent of the number of elements stored in the table. Many hash table designs also allow arbitrary insertions and deletions of key–value pairs, ...

Big O Notation

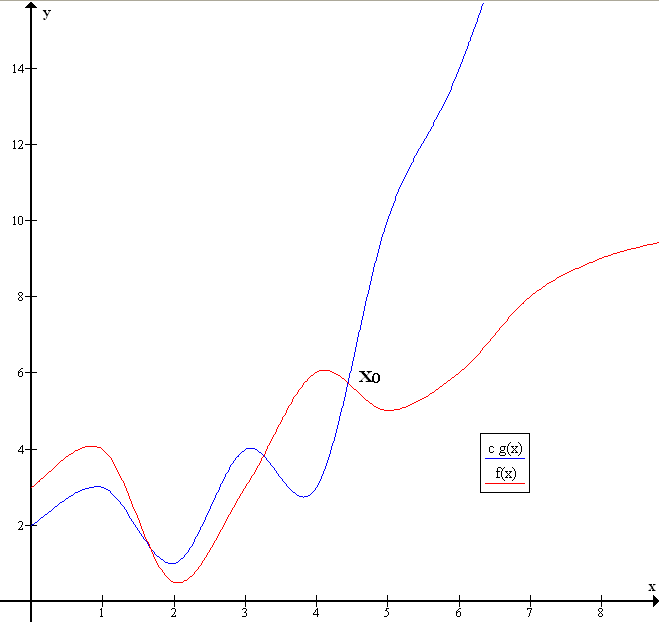

Big O notation is a mathematical notation that describes the limiting behavior of a function when the argument tends towards a particular value or infinity. Big O is a member of a family of notations invented by Paul Bachmann, Edmund Landau, and others, collectively called Bachmann–Landau notation or asymptotic notation. The letter O was chosen by Bachmann to stand for Ordnung, meaning the order of approximation. In computer science, big O notation is used to classify algorithms according to how their run time or space requirements grow as the input size grows. In analytic number theory, big O notation is often used to express a bound on the difference between an arithmetical function and a better understood approximation; a famous example of such a difference is the remainder term in the prime number theorem. Big O notation is also used in many other fields to provide similar estimates. Big O notation characterizes functions according to their growth rates: d ...

Expected Time

In computational complexity theory, the average-case complexity of an algorithm is the amount of some computational resource (typically time) used by the algorithm, averaged over all possible inputs. It is frequently contrasted with worst-case complexity, which considers the maximal complexity of the algorithm over all possible inputs. There are three primary motivations for studying average-case complexity (O. Goldreich and S. Vadhan, Special issue on worst-case versus average-case complexity, Comput. Complex. 16, 325–330, 2007). First, although some problems may be intractable in the worst case, the inputs which elicit this behavior may rarely occur in practice, so the average-case complexity may be a more accurate measure of an algorithm's performance. Second, average-case complexity analysis provides tools and techniques to generate hard instances of problems which can be utilized in areas such as cryptography and derandomization. Third, average-case complexity allows discrimin ...

Primary Clustering

In computer programming, primary clustering is one of two major failure modes of open-addressing-based hash tables, especially those using linear probing. It occurs after a hash collision causes two of the records in the hash table to hash to the same position, and causes one of the records to be moved to the next location in its probe sequence. Once this happens, the cluster formed by this pair of records is more likely to grow by the addition of even more colliding records, regardless of whether the new records hash to the same location as the first two. This phenomenon causes searches for keys within the cluster to be longer. For instance, in linear probing, a record involved in a collision is always moved to the next available hash table cell subsequent to the position given by its hash function, creating a contiguous cluster of occupied hash table cells. Whenever another record is hashed to anywhere within the cluster, it grows in size by one cell. Because of this phenomenon, i ...
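
The growth effect can be seen by asking where a new record would land for every possible home position. The sketch below is a toy model under stated assumptions: a table of 10 cells, an existing cluster in cells 3 to 5, and a hypothetical helper `landing_cell` that follows the linear probe sequence.

```python
def landing_cell(occupied, home, table_size):
    """Return the first free cell at or after `home`, wrapping around the table."""
    for step in range(table_size):
        cell = (home + step) % table_size
        if cell not in occupied:
            return cell
    raise RuntimeError("table is full")


table_size = 10
cluster = {3, 4, 5}  # contiguous run of occupied cells

# Where would a new record end up for each possible hash position?
landings = {home: landing_cell(cluster, home, table_size) for home in range(table_size)}
homes_hitting_6 = [home for home, cell in landings.items() if cell == 6]
```

In this model, four of the ten home positions (3, 4, 5, and 6) send the new record to cell 6, directly extending the cluster, whereas an isolated free cell such as 8 is reached from only one home position.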

Sentinel Value

In computer programming, a sentinel value (also referred to as a flag value, trip value, rogue value, signal value, or dummy data) is a special value in the context of an algorithm which uses its presence as a condition of termination, typically in a loop or recursive algorithm. The sentinel value is a form of in-band data that makes it possible to detect the end of the data when no out-of-band data (such as an explicit size indication) is provided. The value should be selected in such a way that it is guaranteed to be distinct from all legal data values, since otherwise the presence of such values would prematurely signal the end of the data (the semipredicate problem). A sentinel value is sometimes known as an "Elephant in Cairo," due to a joke where this is used as a physical sentinel. In safe languages, most sentinel values could be replaced with option types, which enforce explicit handling of the exceptional case. Some examples of common sentinel values and thei ...
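
A minimal sketch of a sentinel-terminated loop follows. The function name `sum_until_sentinel` and the choice of -1 as the sentinel are assumptions for illustration; the example only works if -1 can never be a legal data value.

```python
def sum_until_sentinel(values, sentinel=-1):
    """Add up values until the sentinel appears; no explicit length is needed."""
    total = 0
    for value in values:
        if value == sentinel:
            break  # in-band end-of-data marker
        total += value
    return total


readings = [3, 4, -1, 10]  # the 10 after the sentinel is never read
total = sum_until_sentinel(readings)
```

If -1 were also a valid reading, the loop would stop too early, which is the semipredicate problem mentioned above.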

Lazy Deletion

In computer science, lazy deletion refers to a method of deleting elements from a hash table that uses open addressing. In this method, deletions are done by marking an element as deleted, rather than erasing it entirely. Deleted locations are treated as empty when inserting and as occupied during a search. The problem with this scheme is that as the number of delete/insert operations increases, the cost of a successful search increases. To improve this, when an element is searched for and found in the table, the element is relocated to the first location marked for deletion that was probed during the search. Instead of finding an element to relocate when the deletion occurs, the relocation occurs lazily during the next search.
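
The scheme can be sketched on top of a linear-probing table. Everything named here (`LazyTable`, the `EMPTY` and `DELETED` markers, the fixed capacity) is hypothetical and chosen for the example; the relocation step in `lookup` follows the description above.

```python
EMPTY = object()    # cell never used
DELETED = object()  # marker left behind by a deletion


class LazyTable:
    def __init__(self, capacity=8):
        self.slots = [EMPTY] * capacity

    def _probe(self, key):
        start = hash(key) % len(self.slots)
        for step in range(len(self.slots)):
            yield (start + step) % len(self.slots)

    def insert(self, key, value):
        first_free = None
        for i in self._probe(key):
            cell = self.slots[i]
            if cell is EMPTY:
                if first_free is None:
                    first_free = i
                break
            if cell is DELETED:
                if first_free is None:
                    first_free = i  # deleted cells count as empty for insertion
            elif cell[0] == key:
                self.slots[i] = (key, value)
                return i
        if first_free is None:
            raise RuntimeError("table is full")
        self.slots[first_free] = (key, value)
        return first_free

    def delete(self, key):
        for i in self._probe(key):
            cell = self.slots[i]
            if cell is EMPTY:
                return False
            if cell is not DELETED and cell[0] == key:
                self.slots[i] = DELETED  # mark instead of erasing
                return True
        return False

    def lookup(self, key):
        first_deleted = None
        for i in self._probe(key):
            cell = self.slots[i]
            if cell is EMPTY:
                return None
            if cell is DELETED:
                if first_deleted is None:
                    first_deleted = i  # deleted cells count as occupied for search
            elif cell[0] == key:
                if first_deleted is not None:
                    # Lazily move the found element into the earlier deleted cell.
                    self.slots[first_deleted] = cell
                    self.slots[i] = DELETED
                return cell[1]
        return None
```

After a deletion in the middle of a probe sequence, the next successful search for a later key moves that key forward, so repeated searches for it become shorter.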

Linear Probing Deletion

Linearity is the property of a mathematical relationship (function) that can be graphically represented as a straight line. Linearity is closely related to proportionality. Examples in physics include rectilinear motion, the linear relationship of voltage and current in an electrical conductor (Ohm's law), and the relationship of mass and weight. By contrast, more complicated relationships are nonlinear. Generalized for functions in more than one dimension, linearity means the property of a function of being compatible with addition and scaling, also known as the superposition principle. The word linear comes from Latin linearis, "pertaining to or resembling a line". In mathematics, a linear map or linear function f(x) is a function that satisfies two properties: additivity, f(x + y) = f(x) + f(y), and homogeneity of degree 1, f(αx) = αf(x) for all α. These properties are known as the superposition principle. In this definition, x is not necessarily a real ...

Dynamic Array

In computer science, a dynamic array, growable array, resizable array, dynamic table, mutable array, or array list is a random access, variable-size list data structure that allows elements to be added or removed. It is supplied with standard libraries in many modern mainstream programming languages. Dynamic arrays overcome a limit of static arrays, which have a fixed capacity that needs to be specified at allocation. A dynamic array is not the same thing as a dynamically allocated array or variable-length array, either of which is an array whose size is fixed when the array is allocated, although a dynamic array may use such a fixed-size array as a back end (see, for example, the source code of the java.util.ArrayList class from OpenJDK 6). A simple dynamic array can be constructed by allocating an array of fixed size, typically larger than the number of elements immediately required. The elements of the dynamic array are stored contiguously a ...
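
The idea of a fixed-size backing array that is larger than the current element count can be sketched as follows. The class name `DynamicArray` and the capacity-doubling growth policy are assumptions for this example; the entry above is truncated before it specifies a growth strategy, and a Python list stands in for the fixed-size array.

```python
class DynamicArray:
    def __init__(self):
        self._capacity = 1
        self._size = 0
        self._data = [None] * self._capacity  # stand-in for a fixed-size backing array

    def append(self, item):
        if self._size == self._capacity:
            # Backing array is full: allocate a larger one (doubling is assumed here).
            self._grow(2 * self._capacity)
        self._data[self._size] = item
        self._size += 1

    def _grow(self, new_capacity):
        new_data = [None] * new_capacity
        new_data[:self._size] = self._data[:self._size]  # copy existing elements
        self._data = new_data
        self._capacity = new_capacity

    def __getitem__(self, index):
        if not 0 <= index < self._size:
            raise IndexError("index out of range")
        return self._data[index]

    def __len__(self):
        return self._size


arr = DynamicArray()
for value in range(10):
    arr.append(value)
```

After ten appends the logical size is 10 while the backing capacity has grown through 1, 2, 4, 8 to 16, so most appends write into spare space without copying.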