L-moments

In statistics, L-moments are a sequence of statistics used to summarize the shape of a probability distribution. They are linear combinations of order statistics (L-statistics) analogous to conventional moments, and can be used to calculate quantities analogous to standard deviation, skewness and kurtosis, termed the L-scale, L-skewness and L-kurtosis respectively (the L-mean is identical to the conventional mean). Standardised L-moments are called L-moment ratios and are analogous to standardized moments. Just as for conventional moments, a theoretical distribution has a set of population L-moments. Sample L-moments can be defined for a sample from the population, and can be used as estimators of the population L-moments.

Population L-moments

For a random variable $X$, the $r$th population L-moment is

$$\lambda_r = r^{-1} \sum_{k=0}^{r-1} (-1)^k \binom{r-1}{k} \operatorname{E}[X_{r-k:r}],$$

where $X_{k:n}$ denotes the $k$th order statistic ($k$th smallest value) in an independent sample of size $n$ from the distribution of ...
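The population definition can be made concrete with a small sketch. It assumes the standard form of the $r$th population L-moment, namely $r^{-1}$ times an alternating, binomially weighted sum of the expected order statistics $\operatorname{E}[X_{r-k:r}]$ for $k = 0, \dots, r-1$, and it uses the Uniform(0, 1) distribution only because its expected order statistics have the simple closed form $k/(n+1)$. The function names are hypothetical and chosen for illustration.

```python
from fractions import Fraction
from math import comb


def uniform_expected_order_stat(k, n):
    """E[X_{k:n}] for a Uniform(0, 1) sample of size n (illustrative choice)."""
    return Fraction(k, n + 1)


def population_l_moment(r, expected_order_stat):
    """r-th population L-moment from a function giving E[X_{k:n}].

    Implements lambda_r = (1/r) * sum_{k=0}^{r-1} (-1)^k C(r-1, k) E[X_{r-k:r}].
    """
    total = sum(
        (-1) ** k * comb(r - 1, k) * expected_order_stat(r - k, r)
        for k in range(r)
    )
    return total / r


# First four L-moments of Uniform(0, 1), computed exactly with fractions.
l_moments = [population_l_moment(r, uniform_expected_order_stat) for r in range(1, 5)]
print(l_moments)
```

For the uniform distribution the first L-moment equals the mean, 1/2, and the third and fourth vanish, which is consistent with the L-mean being identical to the conventional mean and with the distribution being symmetric.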

Mean Absolute Difference

The mean absolute difference (univariate) is a measure of statistical dispersion equal to the average absolute difference of two independent values drawn from a probability distribution. A related statistic is the relative mean absolute difference, which is the mean absolute difference divided by the arithmetic mean, and equal to twice the Gini coefficient. The mean absolute difference is also known as the absolute mean difference (not to be confused with the absolute value of the mean signed difference) and the Gini mean difference (GMD). The mean absolute difference is sometimes denoted by Δ or as MD.

Definition

The mean absolute difference is defined as the "average" or "mean", formally the expected value, of the absolute difference of two random variables X and Y independently and identically distribute ...
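A minimal sketch of the definition, under the assumption that the distribution is the empirical distribution of a small data set, so that the two independent draws are made with replacement and the expectation becomes an average over all ordered pairs. The function name and the data values are illustrative only.

```python
def mean_absolute_difference(values):
    """Average of |x - y| over all ordered pairs (x, y) drawn with replacement."""
    n = len(values)
    total = sum(abs(x - y) for x in values for y in values)
    return total / (n * n)


data = [1.0, 2.0, 3.0, 4.0]  # illustrative data
md = mean_absolute_difference(data)

# Relative mean absolute difference: MD divided by the arithmetic mean.
arithmetic_mean = sum(data) / len(data)
rmd = md / arithmetic_mean

# The relative mean absolute difference equals twice the Gini coefficient.
gini = rmd / 2
print(md, rmd, gini)
```

Other conventions average over distinct pairs only, dividing by n(n - 1) instead of n squared; the choice matters for small samples.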

Skewness

In probability theory and statistics, skewness is a measure of the asymmetry of the probability distribution of a real-valued random variable about its mean. The skewness value can be positive, zero, negative, or undefined. For a unimodal distribution, negative skew commonly indicates that the tail is on the left side of the distribution, and positive skew indicates that the tail is on the right. In cases where one tail is long but the other tail is fat, skewness does not obey a simple rule. For example, a zero value means that the tails on both sides of the mean balance out overall; this is the case for a symmetric distribution, but can also be true for an asymmetric distribution where one tail is long and thin, and the other is short but fat.

Introduction

Consider the two distributions in the figure just below. Within each graph, the values on the right side of the distribution taper differently from the values on the left side. These tapering sides are called tail ...

Binomial Coefficient

In mathematics, the binomial coefficients are the positive integers that occur as coefficients in the binomial theorem. Commonly, a binomial coefficient is indexed by a pair of integers $n \ge k \ge 0$ and is written $\tbinom{n}{k}$. It is the coefficient of the $x^k$ term in the polynomial expansion of the binomial power $(1 + x)^n$; this coefficient can be computed by the multiplicative formula

$$\binom{n}{k} = \frac{n \times (n-1) \times \cdots \times (n-k+1)}{k \times (k-1) \times \cdots \times 1},$$

which using factorial notation can be compactly expressed as

$$\binom{n}{k} = \frac{n!}{k!\,(n-k)!}.$$

For example, the fourth power of $1 + x$ is

$$\begin{aligned} (1 + x)^4 &= \tbinom{4}{0} x^0 + \tbinom{4}{1} x^1 + \tbinom{4}{2} x^2 + \tbinom{4}{3} x^3 + \tbinom{4}{4} x^4 \\ &= 1 + 4x + 6x^2 + 4x^3 + x^4, \end{aligned}$$

and the binomial coefficient $\tbinom{4}{2} = \tfrac{4 \times 3}{2 \times 1} = \tfrac{4!}{2!\,2!} = 6$ is the coefficient of the $x^2$ term. Arranging the numbers $\tbinom{n}{0}, \tbinom{n}{1}, \ldots, \tbinom{n}{n}$ in successive rows for $n = 0, 1, 2, \ldots$ gives a triangular array called Pascal's triangle, satisfying the recurrence relation

$$\binom{n}{k} = \binom{n-1}{k-1} + \binom{n-1}{k}.$$

The binomial coefficients occur in many areas of mathematics, a ...
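The multiplicative formula and the Pascal's triangle recurrence can be checked against each other in a short sketch. The function name `binomial` is a hypothetical helper written for this illustration; Python's standard `math.comb` is used only as a cross-check.

```python
from math import comb


def binomial(n, k):
    """Binomial coefficient via the multiplicative formula, in exact integers."""
    if k < 0 or k > n:
        return 0
    k = min(k, n - k)  # use symmetry C(n, k) == C(n, n - k) to shorten the loop
    result = 1
    for i in range(1, k + 1):
        # After step i, result equals C(n - k + i, i), so the division is exact.
        result = result * (n - k + i) // i
    return result


# Coefficients of (1 + x)^4.
row_4 = [binomial(4, k) for k in range(5)]
print(row_4)

# Pascal's recurrence: C(n, k) == C(n - 1, k - 1) + C(n - 1, k).
recurrence_holds = all(
    binomial(n, k) == binomial(n - 1, k - 1) + binomial(n - 1, k)
    for n in range(1, 12)
    for k in range(1, n)
)
print(recurrence_holds, binomial(10, 3) == comb(10, 3))
```

Multiplying before dividing keeps every intermediate value an integer, which avoids the rounding that a floating-point evaluation of the factorial formula could introduce for large arguments.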

Higher-order Statistics

In statistics, the term higher-order statistics (HOS) refers to functions which use the third or higher power of a sample, as opposed to more conventional techniques of lower-order statistics, which use constant, linear, and quadratic terms (zeroth, first, and second powers). The third and higher moments, as used in the skewness and kurtosis, are examples of HOS, whereas the first and second moments, as used in the arithmetic mean (first) and variance (second), are examples of low-order statistics. HOS are particularly used in the estimation of shape parameters, such as skewness and kurtosis, as when measuring the deviation of a distribution from the normal distribution. In statistical theory, one long-established approach to higher-order statistics, for univariate and multivariate distributions, is through the use of cumulants and joint cumulants. Kendall, M.G., Stuart, A. (1969) The Advanced Theory of Statistics, Volume 1: Distribution Theory, 3rd Edition, Griffin. (Chapte ...

Resistant Statistic

Robust statistics are statistics with good performance for data drawn from a wide range of probability distributions, especially for distributions that are not normal. Robust statistical methods have been developed for many common problems, such as estimating location, scale, and regression parameters. One motivation is to produce statistical methods that are not unduly affected by outliers. Another motivation is to provide methods with good performance when there are small departures from a parametric distribution. For example, robust methods work well for mixtures of two normal distributions with different standard deviations; under this model, non-robust methods like a t-test work poorly.

Introduction

Robust statistics seek to provide methods that emulate popular statistical methods, but which are not unduly affected by outliers or other small departures from model assumptions. In statistics, classical estimation methods rely heavily on assumptions which are often not me ...

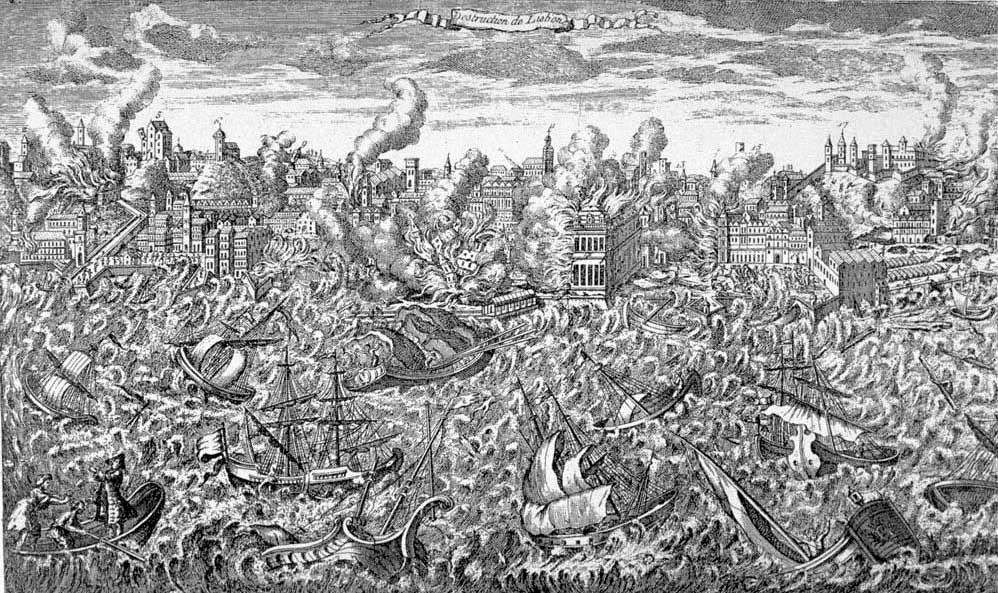

Extreme Value Theory

Extreme value theory or extreme value analysis (EVA) is a branch of statistics dealing with the extreme deviations from the median of probability distributions. It seeks to assess, from a given ordered sample of a given random variable, the probability of events that are more extreme than any previously observed. Extreme value analysis is widely used in many disciplines, such as structural engineering, finance, earth sciences, traffic prediction, and geological engineering. For example, EVA might be used in the field of hydrology to estimate the probability of an unusually large flooding event, such as the 100-year flood. Similarly, for the design of a breakwater, a coastal engineer would seek to estimate the 50-year wave and design the structure accordingly.

Data analysis

Two main approaches exist for practical extreme value analysis. The first method relies on deriving block maxima (minima) series as a preliminary step. In many situations it is customary and convenient to e ...

Robust Statistics

Robust statistics are statistics with good performance for data drawn from a wide range of probability distributions, especially for distributions that are not normal. Robust statistical methods have been developed for many common problems, such as estimating location, scale, and regression parameters. One motivation is to produce statistical methods that are not unduly affected by outliers. Another motivation is to provide methods with good performance when there are small departures from a parametric distribution. For example, robust methods work well for mixtures of two normal distributions with different standard deviations; under this model, non-robust methods like a t-test work poorly.

Introduction

Robust statistics seek to provide methods that emulate popular statistical methods, but which are not unduly affected by outliers or other small departures from model assumptions. In statistics, classical estimation methods rely heavily on assumpti ...

Maximum Likelihood

In statistics, maximum likelihood estimation (MLE) is a method of estimating the parameters of an assumed probability distribution, given some observed data. This is achieved by maximizing a likelihood function so that, under the assumed statistical model, the observed data is most probable. The point in the parameter space that maximizes the likelihood function is called the maximum likelihood estimate. The logic of maximum likelihood is both intuitive and flexible, and as such the method has become a dominant means of statistical inference. If the likelihood function is differentiable, the derivative test for finding maxima can be applied. In some cases, the first-order conditions of the likelihood function can be solved analytically; for instance, the ordinary least squares estimator for a linear regression model maximizes the likelihood when ...

Method Of Moments (statistics)

In statistics, the method of moments is a method of estimation of population parameters. The same principle is used to derive higher moments like skewness and kurtosis. It starts by expressing the population moments (i.e., the expected values of powers of the random variable under consideration) as functions of the parameters of interest. Those expressions are then set equal to the sample moments. The number of such equations is the same as the number of parameters to be estimated. Those equations are then solved for the parameters of interest. The solutions are estimates of those parameters. The method of moments was introduced by Pafnuty Chebyshev in 1887 in the proof of the central limit theorem. The idea of matching empirical moments of a distribution to the population moments dates back at least to Pearson.

Method

Suppose that the problem is to estimate $k$ unknown parameters $\theta_1, \theta_2, \dots, \theta_k$ characterizing the distribution $f_W(w; \theta)$ of the random va ...
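The procedure of expressing population moments in terms of the parameters, equating them to sample moments, and solving can be sketched for one concrete case. The example assumes a gamma distribution with shape $k$ and scale $\theta$, for which the mean is $k\theta$ and the variance is $k\theta^2$; the choice of distribution, the function name, and the data are illustrative assumptions and do not come from the text above.

```python
def gamma_method_of_moments(data):
    """Method-of-moments estimates (shape, scale) for an assumed gamma model.

    Population moments: mean = shape * scale, variance = shape * scale**2.
    Setting these equal to the sample mean and the sample second central
    moment and solving the two equations gives the estimates below.
    """
    n = len(data)
    sample_mean = sum(data) / n
    sample_var = sum((x - sample_mean) ** 2 for x in data) / n  # divides by n
    shape = sample_mean ** 2 / sample_var
    scale = sample_var / sample_mean
    return shape, scale


# Illustrative data: sample mean 3.0, second central moment 2.0.
shape, scale = gamma_method_of_moments([1.0, 2.0, 3.0, 4.0, 5.0])
print(shape, scale)
```

Two parameters require two moment equations, matching the statement that the number of equations equals the number of parameters to be estimated.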

Probability Distributions

In probability theory and statistics, a probability distribution is the mathematical function that gives the probabilities of occurrence of different possible outcomes for an experiment. It is a mathematical description of a random phenomenon in terms of its sample space and the probabilities of events (subsets of the sample space). For instance, if $X$ is used to denote the outcome of a coin toss ("the experiment"), then the probability distribution of $X$ would take the value 0.5 (1 in 2 or 1/2) for $X = \text{heads}$, and 0.5 for $X = \text{tails}$ (assuming that the coin is fair). Examples of random phenomena include the weather conditions at some future date, the height of a randomly selected person, the fraction of male students in a school, the results of a survey to be conducted, etc.

Introduction

A probability distribution is a mathematical description of the probabilities of events, subsets of the sample space. The sample space, often denoted by $\Omega$, is the set of all possible outcomes of a random phe ...

Summary Statistics

In descriptive statistics, summary statistics are used to summarize a set of observations, in order to communicate the largest amount of information as simply as possible. Statisticians commonly try to describe the observations in

* a measure of location, or central tendency, such as the arithmetic mean
* a measure of statistical dispersion like the standard mean absolute deviation
* a measure of the shape of the distribution like skewness or kurtosis
* if more than one variable is measured, a measure of statistical dependence such as a correlation coefficient

A common collection of order statistics used as summary statistics is the five-number summary, sometimes extended to a seven-number summary, and the associated box plot. Entries in an analysis of variance table can also be regarded as summary statistics.

Examples

Location

Common measures of location, or central tendency, are ...

Gumbel Distribution

In probability theory and statistics, the Gumbel distribution (also known as the type-I generalized extreme value distribution) is used to model the distribution of the maximum (or the minimum) of a number of samples of various distributions. This distribution might be used to represent the distribution of the maximum level of a river in a particular year if there was a list of maximum values for the past ten years. It is useful in predicting the chance that an extreme earthquake, flood or other natural disaster will occur. The potential applicability of the Gumbel distribution to represent the distribution of maxima relates to extreme value theory, which indicates that it is likely to be useful if the distribution of the underlying sample data is of the normal or exponential type. This article uses the Gumbel distribution to model the distribution of the maximum value. To model the minimum value, use the negative of the original values. The Gumbel distribution is a parti ...