
Information Source (mathematics)

In mathematics, an information source is a sequence of random variables ranging over a finite alphabet Γ, having a stationary distribution.

The uncertainty, or entropy rate, of an information source is defined as

$$H\{\mathbf{X}\} = \lim_{n\to\infty} H(X_n \mid X_0, X_1, \dots, X_{n-1}),$$

where $X_0, X_1, \dots, X_n$ is the sequence of random variables defining the information source, and $H(X_n \mid X_0, X_1, \dots, X_{n-1})$ is the conditional information entropy of $X_n$ given the preceding random variables. Equivalently, one has

$$H\{\mathbf{X}\} = \lim_{n\to\infty} \frac{H(X_0, X_1, \dots, X_{n-1}, X_n)}{n+1}.$$

See also
* Markov information source
* Asymptotic equipartition property

References
* Robert B. Ash, ''Information Theory'', (1965) Dover Publications.
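As a worked illustration of why the two limits agree, consider the special case of a stationary first-order Markov chain (an assumption made here for simplicity; the definition above covers any stationary source). The Markov property and stationarity collapse the conditional entropy to a constant, and the chain rule for entropy then gives the averaged form:

```latex
\begin{align*}
H(X_n \mid X_0, \dots, X_{n-1}) &= H(X_n \mid X_{n-1}) = H(X_1 \mid X_0), \qquad n \ge 1, \\
H(X_0, \dots, X_n) &= H(X_0) + \sum_{k=1}^{n} H(X_k \mid X_0, \dots, X_{k-1})
                    = H(X_0) + n\,H(X_1 \mid X_0), \\
\lim_{n\to\infty} \frac{H(X_0, \dots, X_n)}{n+1}
  &= \lim_{n\to\infty} \frac{H(X_0) + n\,H(X_1 \mid X_0)}{n+1} = H(X_1 \mid X_0).
\end{align*}
```

In this special case both expressions equal $H(X_1 \mid X_0)$, since the contribution of the initial term $H(X_0)$ vanishes after division by $n+1$.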

Mathematics

Mathematics is a field of study that discovers and organizes methods, theories and theorems that are developed and proved for the needs of empirical sciences and mathematics itself. There are many areas of mathematics, which include number theory (the study of numbers), algebra (the study of formulas and related structures), geometry (the study of shapes and spaces that contain them), analysis (the study of continuous changes), and set theory (presently used as a foundation for all mathematics). Mathematics involves the description and manipulation of abstract objects that consist of either abstractions from nature or (in modern mathematics) purely abstract entities that are stipulated to have certain properties, called axioms. Mathematics uses pure reason to prove properties of objects, a ''proof'' consisting of a succession of applications of in ...

Random Variable

A random variable (also called random quantity, aleatory variable, or stochastic variable) is a mathematical formalization of a quantity or object which depends on random events. The term 'random variable' in its mathematical definition refers to neither randomness nor variability but instead is a mathematical function in which
* the domain is the set of possible outcomes in a sample space (e.g. the set $\{H, T\}$ of possible upper sides of a flipped coin, heads $H$ or tails $T$, as the result of tossing a coin); and
* the range is a measurable space (e.g. corresponding to the domain above, the range might be the set $\{-1, 1\}$ if, say, heads $H$ is mapped to -1 and tails $T$ is mapped to 1).

Typically, the range of a random variable is a subset of the real numbers. Informally, randomness typically represents some fundamental element of chance, such as in the roll of a d ...
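The coin example above can be sketched in a few lines of Python. This is only an illustration: the names `SAMPLE_SPACE`, `X`, and `draw` are hypothetical, and a fair coin is assumed because the text does not specify the probabilities.

```python
import random

# Sample space of the coin toss: heads "H" or tails "T".
SAMPLE_SPACE = ("H", "T")


def X(outcome: str) -> int:
    """The random variable itself: a plain function from outcomes to numbers."""
    return {"H": -1, "T": 1}[outcome]


def draw(rng: random.Random) -> int:
    """Pick an outcome at random (fair coin assumed) and apply X to it."""
    return X(rng.choice(SAMPLE_SPACE))
```

The randomness lives entirely in the choice of outcome; `X` is deterministic, which is the point made by the definition.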

Alphabet (computer Science)

In formal language theory, an alphabet, sometimes called a vocabulary, is a non-empty set of indivisible symbols/characters/glyphs, typically thought of as representing letters, characters, digits, phonemes, or even words. The definition is used in a diverse range of fields including logic, mathematics, computer science, and linguistics. An alphabet may have any cardinality ("size") and, depending on its purpose, may be finite (e.g., the alphabet of letters "a" through "z"), countable, or even uncountable. Strings, also known as "words" or "sentences", over an alphabet are defined as sequences of symbols from the alphabet set. For example, the alphabet of lowercase letters "a" through "z" can be used to form English words like "iceberg", while the alphabet of both upper and lower case letters can also be used to form proper names like "Wikipedia". A common alphabet is $\{0, 1\}$, the binary alphabet, and "00101111" is an example of a binary string. Infinite se ...
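A minimal sketch of the "string over an alphabet" idea, using the examples from the text. The helper name `is_string_over` is hypothetical, and alphabets are modelled as Python sets of single characters, which covers only the finite case.

```python
import string

BINARY = {"0", "1"}
LOWERCASE = set(string.ascii_lowercase)
LETTERS = set(string.ascii_letters)  # upper and lower case together


def is_string_over(word: str, alphabet: set) -> bool:
    """Return True if every symbol of `word` belongs to `alphabet`."""
    return all(symbol in alphabet for symbol in word)
```

For instance, `is_string_over("Wikipedia", LOWERCASE)` is false because of the capital "W", while the same word is a string over `LETTERS`.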

Stationary Distribution

Stationary distribution may refer to:
* A special distribution for a Markov chain such that, if the chain starts with its stationary distribution, the marginal distribution of all states at any time will always be the stationary distribution. Assuming irreducibility, the stationary distribution is always unique if it exists, and its existence is implied by positive recurrence of all states. The stationary distribution has the interpretation of the limiting distribution when the chain is irreducible and aperiodic.
* The marginal distribution of a stationary process or stationary time series
* The set of joint probability distributions of a stationary process or stationary time series

In some fields of application, the term stable distribution is used for the equivalent of a stationary (marginal) distribution, although in probability and statistics the term has a rather different meaning: see stable distribution. Crudely stated, all of the above are specific cases of a c ...
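For the Markov chain sense, the limiting-distribution interpretation suggests a simple way to approximate a stationary distribution: repeatedly push a starting distribution through the transition matrix. The sketch below assumes an irreducible, aperiodic finite chain; the matrix `P` and the function names are illustrative and not taken from the source.

```python
# Row-stochastic transition matrix of a hypothetical two-state chain:
# P[i][j] is the probability of moving from state i to state j.
P = [
    [0.9, 0.1],
    [0.5, 0.5],
]


def step(dist, matrix):
    """One step of the chain applied to a distribution (row vector times matrix)."""
    n = len(matrix)
    return [sum(dist[i] * matrix[i][j] for i in range(n)) for j in range(n)]


def stationary_distribution(matrix, iterations=1000):
    """Approximate the stationary distribution by iterating from the uniform one."""
    n = len(matrix)
    dist = [1.0 / n] * n
    for _ in range(iterations):
        dist = step(dist, matrix)
    return dist
```

For this particular matrix the result is close to (5/6, 1/6), and applying `step` once more leaves it essentially unchanged, which is the defining property of stationarity.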

Entropy Rate

In the mathematical theory of probability, the entropy rate or source information rate is a function assigning an entropy to a stochastic process. For a strongly stationary process, the conditional entropy of the latest random variable eventually tends towards this rate value.

Definition

A process $X$ with a countable index gives rise to the sequence of its joint entropies $H_n(X_1, X_2, \dots, X_n)$. If the limit exists, the entropy rate is defined as

$$H(X) := \lim_{n\to\infty} \tfrac{1}{n} H_n.$$

Note that given any sequence $(a_n)_n$ with $a_0 = 0$ and letting $\Delta a_k := a_k - a_{k-1}$, by telescoping one has $a_n = \sum_{k=1}^{n} \Delta a_k$. The entropy rate thus computes the mean of the first $n$ such entropy changes, with $n$ going to infinity. The behaviour of joint entropies from one index to the next is also explicitly the subject of some characterizations of entropy.

Discussion

While $X$ may be understood as a sequence of random variables, the entropy rate $H(X)$ represents the average entropy change per one random variable, in ...
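The telescoping view can be checked numerically on a small example. The sketch below computes joint entropies $H_n$ by brute-force enumeration for a hypothetical stationary two-state Markov chain; the matrix `P`, its stationary distribution `PI`, and the function names are assumptions made for illustration, and the enumeration is only practical for small `n`.

```python
from itertools import product
from math import log2

# Hypothetical two-state chain, started from its stationary distribution PI.
P = [[0.9, 0.1], [0.5, 0.5]]
PI = [5 / 6, 1 / 6]


def sequence_probability(seq):
    """Probability of observing the state sequence `seq` under the chain."""
    prob = PI[seq[0]]
    for a, b in zip(seq, seq[1:]):
        prob *= P[a][b]
    return prob


def joint_entropy(n):
    """H_n in bits, summing over all 2**n sequences of length n."""
    total = 0.0
    for seq in product(range(2), repeat=n):
        p = sequence_probability(seq)
        if p > 0:
            total -= p * log2(p)
    return total


def entropy_changes(n):
    """The increments Delta H_k = H_k - H_{k-1} for k = 1..n, with H_0 = 0."""
    values = [0.0] + [joint_entropy(k) for k in range(1, n + 1)]
    return [values[k] - values[k - 1] for k in range(1, n + 1)]
```

Summing `entropy_changes(n)` recovers `joint_entropy(n)`, so `joint_entropy(n) / n` is exactly the mean of the first `n` increments. For this chain every increment after the first is the same constant, which is the value the mean approaches as `n` grows.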

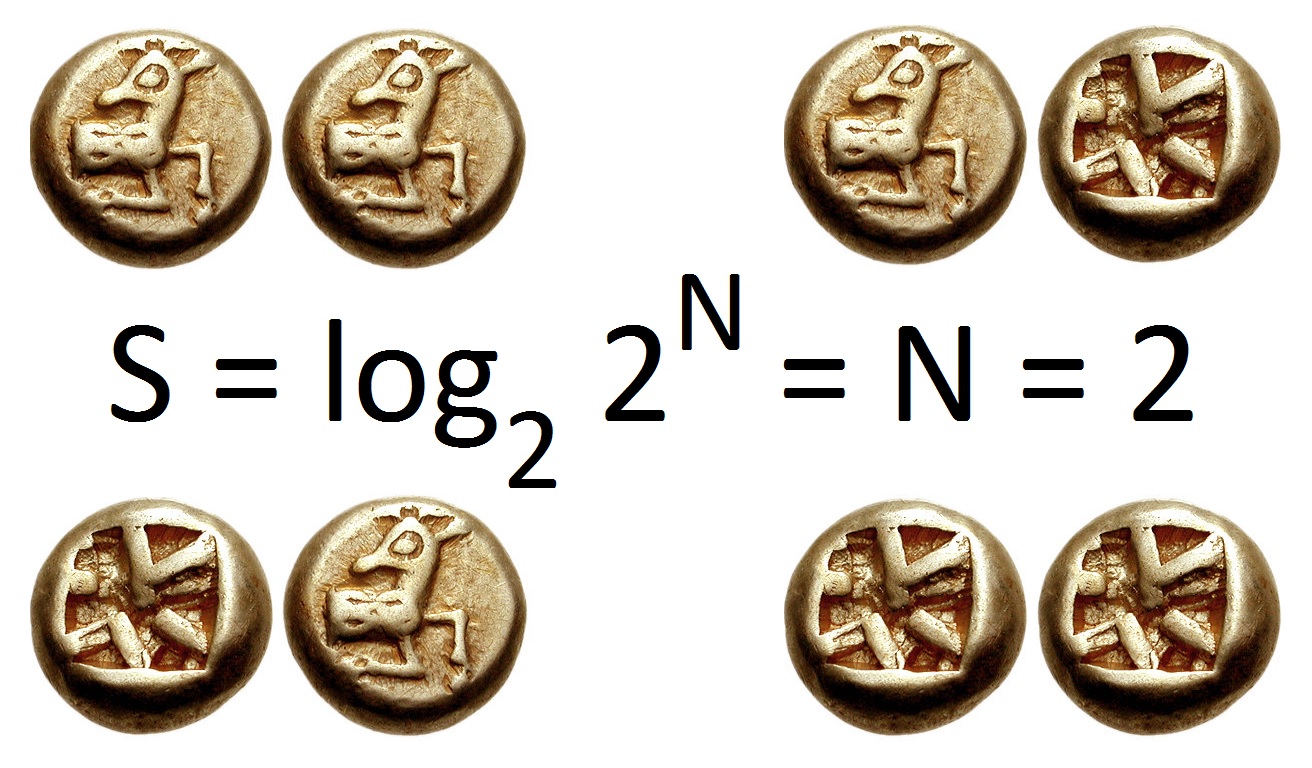

Information Entropy

In information theory, the entropy of a random variable quantifies the average level of uncertainty or information associated with the variable's potential states or possible outcomes. This measures the expected amount of information needed to describe the state of the variable, considering the distribution of probabilities across all potential states. Given a discrete random variable $X$, which takes values $x$ in the set $\mathcal{X}$ and is distributed according to $p\colon \mathcal{X} \to [0, 1]$, the entropy is

$$\mathrm{H}(X) := -\sum_{x \in \mathcal{X}} p(x) \log p(x),$$

where $\Sigma$ denotes the sum over the variable's possible values. The choice of base for $\log$, the logarithm, varies for different applications. Base 2 gives the unit of bits (or "shannons"), while base ''e'' gives "natural units" (nats), and base 10 gives units of "dits", "bans", or "hartleys". An equivalent definition of entropy is the expected value of the self-information of a variable. The concept of information entropy was ...
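The entropy formula translates directly into code. The following is a minimal sketch; the function name `entropy` and its `base` parameter are illustrative choices, and terms with zero probability are skipped, following the usual convention that they contribute nothing to the sum.

```python
from math import e, log


def entropy(probabilities, base=2.0):
    """Entropy of a discrete distribution given as a list of probabilities.

    base=2 gives bits, base=e gives nats, base=10 gives hartleys.
    """
    return -sum(p * log(p, base) for p in probabilities if p > 0)


coin_bits = entropy([0.5, 0.5])    # fair coin
die_bits = entropy([1 / 6] * 6)    # fair six-sided die
coin_nats = entropy([0.5, 0.5], base=e)
```

With base 2, a fair coin has an entropy of one bit, and a fair die has a higher entropy because its six outcomes are harder to predict than the coin's two.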

Markov Information Source

In mathematics, a Markov information source, or simply, a Markov source, is an information source whose underlying dynamics are given by a stationary finite Markov chain.

Formal definition

An information source is a sequence of random variables ranging over a finite alphabet $\Gamma$, having a stationary distribution. A Markov information source is then a (stationary) Markov chain $M$, together with a function

$$f : S \to \Gamma$$

that maps states $S$ in the Markov chain to letters in the alphabet $\Gamma$. A unifilar Markov source is a Markov source for which the values $f(s_k)$ are distinct whenever the states $s_k$ are each reachable, in one step, from a common prior state. Unifilar sources are notable in that many of their properties are far more easily analyzed, as compared to the general case.

Applications

Markov sources are commonly used in communication theory, as a model of a transmitter. Markov sources also occur in natural language processing, where they are used to represent h ...
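The formal definition can be made concrete with a small simulation. Everything named below (`TRANSITIONS`, `LETTER`, `emit`, `is_unifilar`) is hypothetical and chosen for illustration. For simplicity the chain is started from a fixed state rather than from its stationary distribution, so this is a sketch of the mechanism rather than a strictly stationary source.

```python
import random

# Markov chain M: TRANSITIONS[s][t] is the probability of moving from s to t.
TRANSITIONS = {
    "s0": {"s0": 0.9, "s1": 0.1},
    "s1": {"s0": 0.5, "s1": 0.5},
}

# The function f: S -> Gamma, mapping each state to a letter.
LETTER = {"s0": "a", "s1": "b"}


def emit(n, start, rng):
    """Run the chain for n steps from `start` and return the emitted letters."""
    state = start
    letters = []
    for _ in range(n):
        letters.append(LETTER[state])
        successors = list(TRANSITIONS[state])
        weights = [TRANSITIONS[state][t] for t in successors]
        state = rng.choices(successors, weights=weights)[0]
    return "".join(letters)


def is_unifilar(transitions, letter):
    """Check that states reachable in one step from a common state get distinct letters."""
    for row in transitions.values():
        reachable = [t for t, prob in row.items() if prob > 0]
        if len({letter[t] for t in reachable}) != len(reachable):
            return False
    return True
```

Calling `emit(20, "s0", random.Random(0))` yields a string over the alphabet {"a", "b"}. The source above is unifilar, whereas mapping both states to the same letter would violate the distinctness condition.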

Asymptotic Equipartition Property

In information theory, the asymptotic equipartition property (AEP) is a general property of the output samples of a stochastic source. It is fundamental to the concept of typical set used in theories of data compression. Roughly speaking, the theorem states that although there are many series of results that may be produced by a random process, the one actually produced is most probably from a loosely defined set of outcomes that all have approximately the same chance of being the one actually realized. (This is a consequence of the law of large numbers and ergodic theory.) Although there are individual outcomes which have a higher probability than any outcome in this set, the vast number of outcomes in the set almost guarantees that the outcome will come from the set. One way of intuitively understanding the property is through Cramér's large deviation theorem, which states that the probability of a large deviation from the mean decays exponentially with the number of samples. Suc ...
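The property can be observed numerically in the simplest setting, an independent and identically distributed binary source, where it follows from the law of large numbers. In the sketch below the function names and the parameter values are illustrative assumptions. It draws one long sequence and compares its per-symbol log-probability with the entropy of the source.

```python
import random
from math import log2


def source_entropy(p):
    """Entropy in bits of a binary source that emits 1 with probability p."""
    return -p * log2(p) - (1 - p) * log2(1 - p)


def empirical_rate(p, n, rng):
    """Draw n i.i.d. symbols and return -(1/n) * log2 of the sequence's probability."""
    log_prob = 0.0
    for _ in range(n):
        symbol_is_one = rng.random() < p
        log_prob += log2(p if symbol_is_one else 1 - p)
    return -log_prob / n
```

For a long sequence, `empirical_rate(0.3, 20000, random.Random(1))` lands close to `source_entropy(0.3)`. In other words, the sequence actually produced has probability roughly 2 raised to the power of minus `n` times the entropy, as the equipartition statement describes.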

Information Theory

Information theory is the mathematical study of the quantification, storage, and communication of information. The field was established and formalized by Claude Shannon in the 1940s, though early contributions were made in the 1920s through the works of Harry Nyquist and Ralph Hartley. It is at the intersection of electronic engineering, mathematics, statistics, computer science, neurobiology, physics, and electrical engineering. A key measure in information theory is entropy. Entropy quantifies the amount of uncertainty involved in the value of a random variable or the outcome of a random process. For example, identifying the outcome of a fair coin flip (which has two equally likely outcomes) provides less information (lower entropy, less uncertainty) than identifying the outcome from a roll of a die (which has six equally likely outcomes). Some other important measu ...