|

EWMA

In statistics, a moving average (rolling average or running average) is a calculation to analyze data points by creating a series of averages of different subsets of the full data set. It is also called a moving mean (MM) or rolling mean and is a type of finite impulse response filter. Variations include: simple, cumulative, or weighted forms (described below). Given a series of numbers and a fixed subset size, the first element of the moving average is obtained by taking the average of the initial fixed subset of the number series. Then the subset is modified by "shifting forward"; that is, excluding the first number of the series and including the next value in the subset. A moving average is commonly used with time series data to smooth out short-term fluctuations and highlight longer-term trends or cycles. The threshold between short-term and long-term depends on the application, and the parameters of the moving average will be set accordingly. It is also used in economic ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Exponential Smoothing

Exponential smoothing is a rule of thumb technique for smoothing time series data using the exponential window function. Whereas in the simple moving average the past observations are weighted equally, exponential functions are used to assign exponentially decreasing weights over time. It is an easily learned and easily applied procedure for making some determination based on prior assumptions by the user, such as seasonality. Exponential smoothing is often used for analysis of time-series data. Exponential smoothing is one of many window functions commonly applied to smooth data in signal processing, acting as low-pass filters to remove high-frequency noise. This method is preceded by Poisson's use of recursive exponential window functions in convolutions from the 19th century, as well as Kolmogorov and Zurbenko's use of recursive moving averages from their studies of turbulence in the 1940s. The raw data sequence is often represented by \ beginning at time t = 0, and the o ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Simple Moving Average

In statistics, a moving average (rolling average or running average) is a calculation to analyze data points by creating a series of averages of different subsets of the full data set. It is also called a moving mean (MM) or rolling mean and is a type of finite impulse response filter. Variations include: simple, cumulative, or weighted forms (described below). Given a series of numbers and a fixed subset size, the first element of the moving average is obtained by taking the average of the initial fixed subset of the number series. Then the subset is modified by "shifting forward"; that is, excluding the first number of the series and including the next value in the subset. A moving average is commonly used with time series data to smooth out short-term fluctuations and highlight longer-term trends or cycles. The threshold between short-term and long-term depends on the application, and the parameters of the moving average will be set accordingly. It is also used in economi ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Time Series

In mathematics, a time series is a series of data points indexed (or listed or graphed) in time order. Most commonly, a time series is a sequence taken at successive equally spaced points in time. Thus it is a sequence of discrete-time data. Examples of time series are heights of ocean tides, counts of sunspots, and the daily closing value of the Dow Jones Industrial Average. A time series is very frequently plotted via a run chart (which is a temporal line chart). Time series are used in statistics, signal processing, pattern recognition, econometrics, mathematical finance, weather forecasting, earthquake prediction, electroencephalography, control engineering, astronomy, communications engineering, and largely in any domain of applied science and engineering which involves temporal measurements. Time series ''analysis'' comprises methods for analyzing time series data in order to extract meaningful statistics and other characteristics of the data. Time series ''for ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Median

In statistics and probability theory, the median is the value separating the higher half from the lower half of a data sample, a population, or a probability distribution. For a data set, it may be thought of as "the middle" value. The basic feature of the median in describing data compared to the mean (often simply described as the "average") is that it is not skewed by a small proportion of extremely large or small values, and therefore provides a better representation of a "typical" value. Median income, for example, may be a better way to suggest what a "typical" income is, because income distribution can be very skewed. The median is of central importance in robust statistics, as it is the most resistant statistic, having a breakdown point of 50%: so long as no more than half the data are contaminated, the median is not an arbitrarily large or small result. Finite data set of numbers The median of a finite list of numbers is the "middle" number, when those numbers are list ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Infinite Impulse Response

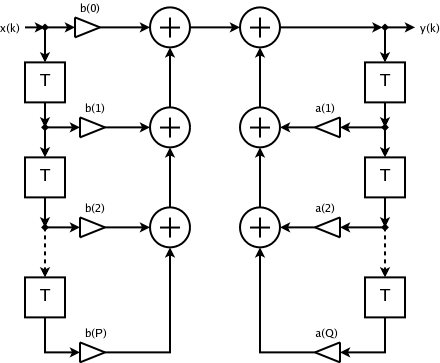

Infinite impulse response (IIR) is a property applying to many linear time-invariant systems that are distinguished by having an impulse response h(t) which does not become exactly zero past a certain point, but continues indefinitely. This is in contrast to a finite impulse response (FIR) system in which the impulse response ''does'' become exactly zero at times t>T for some finite T, thus being of finite duration. Common examples of linear time-invariant systems are most electronic and digital filters. Systems with this property are known as ''IIR systems'' or ''IIR filters''. In practice, the impulse response, even of IIR systems, usually approaches zero and can be neglected past a certain point. However the physical systems which give rise to IIR or FIR responses are dissimilar, and therein lies the importance of the distinction. For instance, analog electronic filters composed of resistors, capacitors, and/or inductors (and perhaps linear amplifiers) are generally IIR filte ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Exponential Decay

A quantity is subject to exponential decay if it decreases at a rate proportional to its current value. Symbolically, this process can be expressed by the following differential equation, where is the quantity and (lambda) is a positive rate called the exponential decay constant, disintegration constant, rate constant, or transformation constant: :\frac = -\lambda N. The solution to this equation (see derivation below) is: :N(t) = N_0 e^, where is the quantity at time , is the initial quantity, that is, the quantity at time . Measuring rates of decay Mean lifetime If the decaying quantity, ''N''(''t''), is the number of discrete elements in a certain set, it is possible to compute the average length of time that an element remains in the set. This is called the mean lifetime (or simply the lifetime), where the exponential time constant, \tau, relates to the decay rate constant, λ, in the following way: :\tau = \frac. The mean lifetime can be looked at a ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Data

In the pursuit of knowledge, data (; ) is a collection of discrete values that convey information, describing quantity, quality, fact, statistics, other basic units of meaning, or simply sequences of symbols that may be further interpreted. A datum is an individual value in a collection of data. Data is usually organized into structures such as tables that provide additional context and meaning, and which may themselves be used as data in larger structures. Data may be used as variables in a computational process. Data may represent abstract ideas or concrete measurements. Data is commonly used in scientific research, economics, and in virtually every other form of human organizational activity. Examples of data sets include price indices (such as consumer price index), unemployment rates, literacy rates, and census data. In this context, data represents the raw facts and figures which can be used in such a manner in order to capture the useful information out of i ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

National Institute Of Standards And Technology

The National Institute of Standards and Technology (NIST) is an agency of the United States Department of Commerce whose mission is to promote American innovation and industrial competitiveness. NIST's activities are organized into Outline of physical science, physical science laboratory programs that include Nanotechnology, nanoscale science and technology, engineering, information technology, neutron research, material measurement, and physical measurement. From 1901 to 1988, the agency was named the National Bureau of Standards. History Background The Articles of Confederation, ratified by the colonies in 1781, provided: The United States in Congress assembled shall also have the sole and exclusive right and power of regulating the alloy and value of coin struck by their own authority, or by that of the respective states—fixing the standards of weights and measures throughout the United States. Article 1, section 8, of the Constitution of the United States, ratified in ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Laplace Distribution

In probability theory and statistics, the Laplace distribution is a continuous probability distribution named after Pierre-Simon Laplace. It is also sometimes called the double exponential distribution, because it can be thought of as two exponential distributions (with an additional location parameter) spliced together along the abscissa, although the term is also sometimes used to refer to the Gumbel distribution. The difference between two independent identically distributed exponential random variables is governed by a Laplace distribution, as is a Brownian motion evaluated at an exponentially distributed random time. Increments of Laplace motion or a variance gamma process evaluated over the time scale also have a Laplace distribution. Definitions Probability density function A random variable has a \textrm(\mu, b) distribution if its probability density function is :f(x\mid\mu,b) = \frac \exp \left( -\frac \right) \,\! Here, \mu is a location parameter and b > ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Skip List

In computer science, a skip list (or skiplist) is a probabilistic data structure that allows \mathcal(\log n) average complexity for search as well as \mathcal(\log n) average complexity for insertion within an ordered sequence of n elements. Thus it can get the best features of a sorted array (for searching) while maintaining a linked list-like structure that allows insertion, which is not possible with a static array. Fast search is made possible by maintaining a linked hierarchy of subsequences, with each successive subsequence skipping over fewer elements than the previous one (see the picture below on the right). Searching starts in the sparsest subsequence until two consecutive elements have been found, one smaller and one larger than or equal to the element searched for. Via the linked hierarchy, these two elements link to elements of the next sparsest subsequence, where searching is continued until finally searching in the full sequence. The elements that are skipped ov ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |