
Durbin Test

The Durbin test is a non-parametric statistical test for balanced incomplete block designs that reduces to the Friedman test in the case of a complete block design. In the analysis of designed experiments, the Friedman test is the most common non-parametric test for complete block designs.

Background

In a randomized block design, ''t'' treatments are applied to ''b'' blocks. In a complete block design, every treatment is run in every block. For some experiments, it may not be realistic to run all treatments in all blocks, so one may need to run an incomplete block design. In this case, it is strongly recommended to run a balanced incomplete design. A balanced incomplete block design has the following properties:

# Every block contains ''k'' experimental units, with ''k'' smaller than the number of treatments ''t''.
# Every treatment appears in ''r'' blocks.
# Every treatment appears with every other treatment an equal number of times.

Test assumptions

The Durbin test is based on the following assumptions: ...
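
The three balance properties listed under Background can be checked mechanically. The following is a minimal sketch, not part of the original article: the function name `check_bibd` and the returned keys are hypothetical, ''t'' denotes the number of distinct treatments, and `lambda` is the usual name for the common number of times any two treatments share a block.

```python
from collections import Counter
from itertools import combinations


def check_bibd(blocks):
    """Return the design parameters if `blocks` is balanced, else raise ValueError.

    `blocks` is a list of blocks, each an iterable of treatment labels.
    """
    blocks = [tuple(blk) for blk in blocks]
    treatments = sorted({x for blk in blocks for x in blk})

    # Property 1: every block contains the same number k of distinct units.
    sizes = {len(blk) for blk in blocks}
    if len(sizes) != 1 or any(len(set(blk)) != len(blk) for blk in blocks):
        raise ValueError("blocks must share one size and not repeat a treatment")
    k = sizes.pop()

    # Property 2: every treatment appears in the same number r of blocks.
    appearances = Counter(x for blk in blocks for x in blk)
    replications = set(appearances.values())
    if len(replications) != 1:
        raise ValueError("treatments are not equally replicated")
    r = replications.pop()

    # Property 3: every pair of treatments shares the same number of blocks.
    pair_counts = Counter(
        frozenset(pair) for blk in blocks for pair in combinations(blk, 2)
    )
    lambdas = {pair_counts[frozenset(p)] for p in combinations(treatments, 2)}
    if len(lambdas) != 1:
        raise ValueError("treatment pairs do not co-occur equally often")

    return {"t": len(treatments), "b": len(blocks), "k": k, "r": r,
            "lambda": lambdas.pop()}


# Three treatments in three blocks of size two.
design = [("A", "B"), ("A", "C"), ("B", "C")]
print(check_bibd(design))
```

A design that passes satisfies the standard counting identity ''bk'' = ''rt'', which is a quick sanity check on the returned values.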

Non-parametric

Nonparametric statistics is a type of statistical analysis that makes minimal assumptions about the underlying distribution of the data being studied. Often these models are infinite-dimensional, rather than finite-dimensional, as in parametric statistics. Nonparametric statistics can be used for descriptive statistics or statistical inference. Nonparametric tests are often used when the assumptions of parametric tests are evidently violated.

Definitions

The term "nonparametric statistics" has been defined imprecisely in the following two ways, among others. The first meaning of ''nonparametric'' involves techniques that do not rely on data belonging to any particular parametric family of probability distributions. These include, among others:

* Methods which are ''distribution-free'', which do not rely on assumptions that the data are drawn from a given parametric family of probability distributions.
* Statistics defined to be a function on a sample, without dependency on a pa ...

Multiple Comparisons

The multiple comparisons, multiplicity, or multiple testing problem occurs in statistics when one considers a set of statistical inferences simultaneously or estimates a subset of parameters selected based on the observed values. The larger the number of inferences made, the more likely erroneous inferences become. Several statistical techniques have been developed to address this problem, for example, by requiring a stricter significance threshold for individual comparisons, so as to compensate for the number of inferences being made. The family-wise error rate is the probability of at least one false positive across the whole set of comparisons, and methods that control it address the multiple comparisons problem directly.

History

The problem of multiple comparisons received increased attention in the 1950s with the work of statisticians such as Tukey and Scheffé. Over the ensuing decades, many procedures were developed to address the problem. In 1996, the first international conference on multiple comparison procedures took place in Tel Aviv. ...
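
To make the growth in erroneous inferences concrete, the sketch below computes the family-wise error rate for a number of tests and the stricter per-comparison threshold given by the Bonferroni correction. It assumes independent tests with all null hypotheses true, which the text above does not require. The function names are illustrative, and Bonferroni is only one of the "stricter threshold" techniques alluded to.

```python
def familywise_error_rate(alpha, m):
    """Probability of at least one false positive among m independent tests,
    each run at level alpha, when every null hypothesis is true."""
    return 1.0 - (1.0 - alpha) ** m


def bonferroni_threshold(alpha, m):
    """Per-comparison level that keeps the family-wise rate at or below alpha."""
    return alpha / m


for m in (1, 5, 20):
    uncorrected = familywise_error_rate(0.05, m)
    corrected = familywise_error_rate(bonferroni_threshold(0.05, m), m)
    print(f"m={m:2d}  uncorrected={uncorrected:.3f}  bonferroni={corrected:.3f}")
```

With 20 comparisons at the 0.05 level, the uncorrected family-wise rate is roughly 0.64, while the Bonferroni-corrected rate stays just under 0.05.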

Van Der Waerden Test

Named after the Dutch mathematician Bartel Leendert van der Waerden, the Van der Waerden test is a statistical test of whether ''k'' population distribution functions are equal. The Van der Waerden test converts the ranks from a standard Kruskal–Wallis test to quantiles of the standard normal distribution (details given below). These are called normal scores, and the test is computed from these normal scores. The ''k''-population version of the test is an extension of the test for two populations published by Van der Waerden (1952, 1953).

Background

Analysis of variance (ANOVA) is a data analysis technique for examining the significance of the factors (independent variables) in a multi-factor model. The one-factor model can be thought of as a generalization of the two-sample t-test. That is, the two-sample t-test is a test of the hypothesis that two population means are equal. The one-factor ANOVA tests the hypothesis that ''k'' population means are equal. The standard ANOVA assumes that ...
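
The rank-to-quantile conversion can be sketched as follows. The details promised by the summary are cut off, so this sketch assumes the commonly used convention of evaluating the standard normal quantile function at R/(N + 1), where R is the rank of an observation in the pooled sample of size N. It also assumes no ties. The function name `normal_scores` is illustrative.

```python
from statistics import NormalDist


def normal_scores(values):
    """Map each observation to the standard normal quantile of rank / (N + 1).

    Assumes all values are distinct; tie handling is left out of this sketch.
    """
    n = len(values)
    order = sorted(range(n), key=lambda i: values[i])
    ranks = [0] * n
    for rank, index in enumerate(order, start=1):
        ranks[index] = rank  # rank 1 goes to the smallest observation
    standard_normal = NormalDist()
    return [standard_normal.inv_cdf(rank / (n + 1)) for rank in ranks]


pooled = [3.1, 1.2, 2.5]          # ranks 3, 1, 2
print(normal_scores(pooled))      # quantiles at 0.75, 0.25 and 0.5
```

Dividing by N + 1 rather than N keeps every argument strictly inside (0, 1), so the quantile function is always finite.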

Analysis Of Variance

Analysis of variance (ANOVA) is a family of statistical methods used to compare the means of two or more groups by analyzing variance. Specifically, ANOVA compares the amount of variation ''between'' the group means to the amount of variation ''within'' each group. If the between-group variation is substantially larger than the within-group variation, it suggests that the group means are likely different. This comparison is done using an F-test. The underlying principle of ANOVA is based on the law of total variance, which states that the total variance in a dataset can be broken down into components attributable to different sources. In the case of ANOVA, these sources are the variation between groups and the variation within groups. ANOVA was developed by the statistician Ronald Fisher. In its simplest form, it provides a statistical test of whether two or more population means are equal, and therefore generalizes the ''t''- ...
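
The between-group versus within-group comparison can be written out for the one-way case. This is a minimal sketch with an illustrative function name and made-up data. It computes only the F ratio and its degrees of freedom, not a p-value.

```python
def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of numeric groups."""
    k = len(groups)
    n = sum(len(g) for g in groups)
    grand_mean = sum(sum(g) for g in groups) / n
    means = [sum(g) / len(g) for g in groups]

    # Variation of the group means around the grand mean, weighted by size.
    ss_between = sum(len(g) * (m - grand_mean) ** 2 for g, m in zip(groups, means))
    # Variation of each observation around its own group mean.
    ss_within = sum((x - m) ** 2 for g, m in zip(groups, means) for x in g)

    ms_between = ss_between / (k - 1)
    ms_within = ss_within / (n - k)
    return ms_between / ms_within, k - 1, n - k


f_ratio, df1, df2 = one_way_anova_f([[1, 2, 3], [2, 3, 4], [5, 6, 7]])
print(f_ratio, df1, df2)
```

For this toy data the between-group sum of squares is 26 and the within-group sum of squares is 6, giving mean squares of 13 and 1 and an F ratio of 13 on (2, 6) degrees of freedom.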

Chi-squared Distribution

In probability theory and statistics, the $\chi^2$-distribution with $k$ degrees of freedom is the distribution of a sum of the squares of $k$ independent standard normal random variables. The chi-squared distribution $\chi^2_k$ is a special case of the gamma distribution and the univariate Wishart distribution. Specifically, if $X \sim \chi^2_k$ then $X \sim \text{Gamma}(\alpha=\frac{k}{2}, \theta=2)$ (where $\alpha$ is the shape parameter and $\theta$ the scale parameter of the gamma distribution) and $X \sim \text{W}_1(1,k)$. The scaled chi-squared distribution $s^2 \chi^2_k$ is a reparametrization of the gamma distribution and the univariate Wishart distribution. Specifically, if $X \sim s^2 \chi^2_k$ then $X \sim \text{Gamma}(\alpha=\frac{k}{2}, \theta=2 s^2)$ and $X \sim \text{W}_1(s^2,k)$. The chi-squared distribution is one of the most widely used probability distributions in inferential statistics, notably in hypothesis testing and in constru ...
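
The defining construction can be illustrated by simulation. The sketch below draws sums of squared standard normals and compares the sample mean and variance with the values implied by the gamma representation with shape k/2 and scale 2, namely mean k and variance 2k. The function name, seed, and sample size are arbitrary choices for illustration.

```python
import random
from statistics import fmean, variance


def simulate_chi_squared(k, n_samples, seed=0):
    """Draw n_samples values, each the sum of k squared standard normal draws."""
    rng = random.Random(seed)  # fixed seed so the run is reproducible
    return [
        sum(rng.gauss(0.0, 1.0) ** 2 for _ in range(k))
        for _ in range(n_samples)
    ]


k = 3
draws = simulate_chi_squared(k, n_samples=20_000)
print("sample mean:", fmean(draws), "expected:", k)
print("sample variance:", variance(draws), "expected:", 2 * k)
```

The sample moments only approximate k and 2k. The agreement tightens as the number of draws grows.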

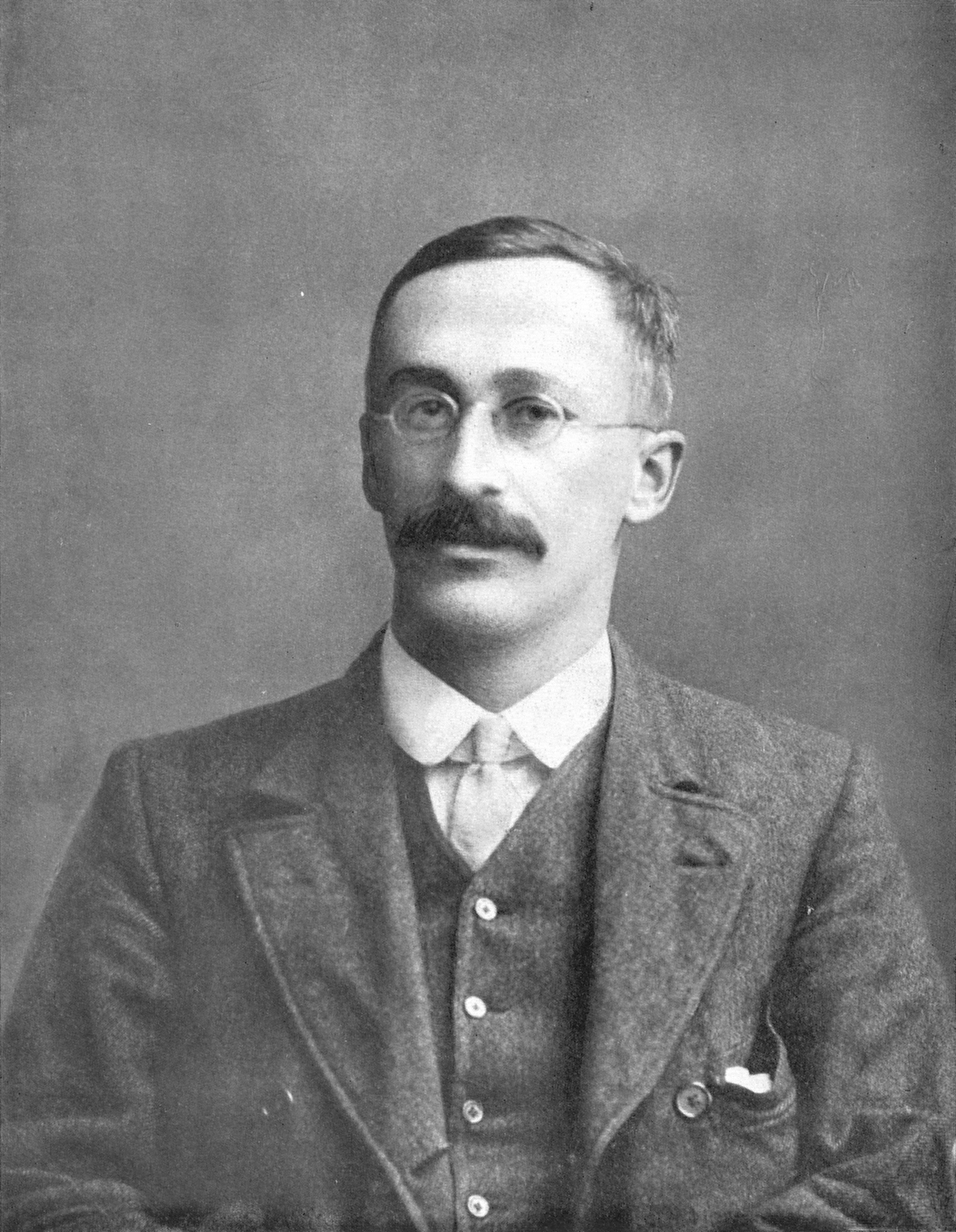

James Durbin

James Durbin FBA (30 June 1923 – 23 June 2012) was a British statistician and econometrician, known particularly for his work on time series analysis and serial correlation.

Education

The son of a greengrocer, Durbin was born in Widnes, where he attended the Wade Deacon Grammar School. He studied mathematics at St John's College, Cambridge, where his contemporaries included David Cox and Denis Sargan. After wartime service in the Army Operational Research Group, he worked as a statistician for two years with the British Boot, Shoe and Allied Trades Research Association and took a postgraduate diploma in mathematical statistics at Cambridge, supervised by Henry Daniels.

Career

After two years at the department of applied economics in Cambridge, Durbin joined the London School of Economics in 1950 and was appointed professor of statistics in 1961, a post he held until his retirement in 1988.

Awards and honours

He served as president of the International Stati ...

Student's T-distribution

In probability theory and statistics, Student's ''t'' distribution (or simply the ''t'' distribution) $t_\nu$ is a continuous probability distribution that generalizes the standard normal distribution. Like the latter, it is symmetric around zero and bell-shaped. However, $t_\nu$ has heavier tails, and the amount of probability mass in the tails is controlled by the parameter $\nu$. For $\nu = 1$ the Student's ''t'' distribution $t_\nu$ becomes the standard Cauchy distribution, which has very "fat" tails; whereas for $\nu \to \infty$ it becomes the standard normal distribution $\mathcal{N}(0, 1)$, which has very "thin" tails. The name "Student" is a pseudonym used by William Sealy Gosset in his scientific publications during his work at the Guinness Brewery in Dublin, Ireland. The Student's ''t'' distribution plays a role in a number of widely used statistical analyses, including Student's t- ...
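
The two limiting cases can be checked numerically from the density. The sketch below uses the standard closed form of the Student density, which the summary above does not state, and works in log space so that large values of the degrees-of-freedom parameter do not overflow. All function names are illustrative.

```python
import math


def student_t_pdf(x, nu):
    """Density of Student's t distribution with nu degrees of freedom."""
    log_norm = (
        math.lgamma((nu + 1) / 2)
        - math.lgamma(nu / 2)
        - 0.5 * math.log(nu * math.pi)
    )
    return math.exp(log_norm - (nu + 1) / 2 * math.log1p(x * x / nu))


def cauchy_pdf(x):
    """Density of the standard Cauchy distribution."""
    return 1.0 / (math.pi * (1.0 + x * x))


def normal_pdf(x):
    """Density of the standard normal distribution."""
    return math.exp(-x * x / 2) / math.sqrt(2 * math.pi)


x = 4.0  # a point well out in the tail
print("nu=1    :", student_t_pdf(x, 1), "Cauchy:", cauchy_pdf(x))
print("nu=30   :", student_t_pdf(x, 30))
print("nu=1e6  :", student_t_pdf(x, 1e6), "normal:", normal_pdf(x))
```

At the same tail point the density shrinks as the parameter grows, which is the "heavier tails" statement in numerical form.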

F Distribution

In probability theory and statistics, the ''F''-distribution or ''F''-ratio, also known as Snedecor's ''F'' distribution or the Fisher–Snedecor distribution (after Ronald Fisher and George W. Snedecor), is a continuous probability distribution that arises frequently as the null distribution of a test statistic, most notably in the analysis of variance (ANOVA) and other ''F''-tests.

Definitions

The ''F''-distribution with $d_1$ and $d_2$ degrees of freedom is the distribution of

$$X = \frac{U_1/d_1}{U_2/d_2}$$

where $U_1$ and $U_2$ are independent random variables with chi-square distributions with respective degrees of freedom $d_1$ and $d_2$. It can be shown that the probability density function (pdf) for $X$ is given by

$$\begin{aligned} f(x; d_1,d_2) &= \frac{\sqrt{\dfrac{(d_1 x)^{d_1}\, d_2^{d_2}}{(d_1 x+d_2)^{d_1+d_2}}}}{x\, \mathrm{B}\!\left(\frac{d_1}{2},\frac{d_2}{2}\right)} \\ &= \frac{1}{\mathrm{B}\!\left(\frac{d_1}{2},\frac{d_2}{2}\right)} \left(\frac{d_1}{d_2}\right)^{\frac{d_1}{2}} x^{\frac{d_1}{2}-1} \left(1+\frac{d_1}{d_2}\, x \right)^{-\frac{d_1+d_2}{2}} \end{aligned}$$

for real $x > 0$. Here $\mathrm{B}$ is the beta function. In many applications, the parameters $d_1$ and $d_2$ are positive integers, but the distribution is well-d ...
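
As a numerical check, the sketch below evaluates the density of the ''F''-distribution in two algebraically equivalent ways: a square-root form and a power form, both normalized by the beta function. The function names are illustrative, and the beta function is computed through log-gamma values for numerical stability.

```python
import math


def log_beta(a, b):
    """Natural log of the beta function B(a, b)."""
    return math.lgamma(a) + math.lgamma(b) - math.lgamma(a + b)


def f_pdf(x, d1, d2):
    """Power form: (d1/d2)^(d1/2) x^(d1/2 - 1) (1 + d1 x / d2)^(-(d1+d2)/2) / B."""
    if x <= 0:
        return 0.0
    log_pdf = (
        0.5 * d1 * math.log(d1 / d2)
        + (0.5 * d1 - 1) * math.log(x)
        - 0.5 * (d1 + d2) * math.log1p(d1 * x / d2)
        - log_beta(d1 / 2, d2 / 2)
    )
    return math.exp(log_pdf)


def f_pdf_sqrt_form(x, d1, d2):
    """Square-root form: sqrt((d1 x)^d1 d2^d2 / (d1 x + d2)^(d1+d2)) / (x B)."""
    if x <= 0:
        return 0.0
    ratio = (d1 * x) ** d1 * d2 ** d2 / (d1 * x + d2) ** (d1 + d2)
    return math.sqrt(ratio) / (x * math.exp(log_beta(d1 / 2, d2 / 2)))


print(f_pdf(1.7, 5, 8), f_pdf_sqrt_form(1.7, 5, 8))  # the two forms should agree
print(f_pdf(1.0, 2, 2))  # with d1 = d2 = 2 the density reduces to 1 / (1 + x)^2
```

The log-space version is the safer one for large degrees of freedom, where the raw powers in the square-root form can overflow.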

Statistical Test

A statistical hypothesis test is a method of statistical inference used to decide whether the data provide sufficient evidence to reject a particular hypothesis. A statistical hypothesis test typically involves a calculation of a test statistic. Then a decision is made, either by comparing the test statistic to a critical value or equivalently by evaluating a ''p''-value computed from the test statistic. Roughly 100 specialized statistical tests are in use and noteworthy.

History

While hypothesis testing was popularized early in the 20th century, early forms were used in the 1700s. The first use is credited to John Arbuthnot (1710), followed by Pierre-Simon Laplace (1770s), in analyzing the human sex ratio at birth.

Choice of null hypothesis

Paul Meehl has argued that the epistemological importance of the choice of null hypothesis has gone largely unacknowledged. When the null hypothesis is predicted by theory, a more precise experiment will be a more severe tes ...
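
The equivalence of the two decision rules mentioned in the opening paragraph (critical value versus ''p''-value) can be seen in a small example. The sketch below uses a two-sided one-sample z-test with known standard deviation, chosen here only because it is simple. The function name and the numbers are illustrative.

```python
from statistics import NormalDist


def z_test(sample_mean, mu0, sigma, n, alpha=0.05):
    """Two-sided z-test of H0: mean == mu0, with known standard deviation sigma."""
    standard_normal = NormalDist()
    z = (sample_mean - mu0) / (sigma / n ** 0.5)          # test statistic
    critical = standard_normal.inv_cdf(1 - alpha / 2)     # critical value
    p_value = 2 * (1 - standard_normal.cdf(abs(z)))       # two-sided p-value
    return {
        "z": z,
        "critical": critical,
        "p_value": p_value,
        "reject_by_critical_value": abs(z) > critical,
        "reject_by_p_value": p_value < alpha,
    }


print(z_test(sample_mean=10.5, mu0=10.0, sigma=2.0, n=64))  # z = 2.0
print(z_test(sample_mean=10.2, mu0=10.0, sigma=2.0, n=64))  # z = 0.8
```

In both calls the two rules reach the same decision, because the ''p''-value falls below the significance level exactly when the statistic exceeds the critical value.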

Quantile Function

In probability and statistics, the quantile function is a function $Q: [0,1] \to \mathbb{R}$ which maps some probability $x \in [0,1]$ of a random variable $v$ to the value of the variable $y$ such that $P(v\leq y) = x$ according to its probability distribution. In other words, the function returns the value of the variable below which the specified cumulative probability is contained. For example, if the distribution is a standard normal distribution then $Q(0.5)$ will return 0, as 0.5 of the probability mass is contained below 0. The quantile function is also called the percentile function (after the percentile), percent-point function, inverse cumulative distribution function (after the cumulative distribution function or c.d.f.) or inverse distribution function.

Definition

Strictly increasing distribution function

With reference to a continuous and strictly increasing cumulative distribution function (c.d.f.) $F_X\colon \mathbb{R} \to [0,1]$ of a random variable $X$, the quantile function ...
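
For a continuous, strictly increasing c.d.f., the quantile function is simply the inverse of the c.d.f., so it can be recovered numerically by root finding. The sketch below does this by bisection and compares the result with the standard library's built-in normal quantile. The helper `quantile_by_bisection` and its search bounds are illustrative assumptions.

```python
from statistics import NormalDist


def quantile_by_bisection(cdf, p, lo=-50.0, hi=50.0, tol=1e-10):
    """Invert a continuous, strictly increasing cdf at probability p.

    Assumes the answer lies inside [lo, hi].
    """
    while hi - lo > tol:
        mid = (lo + hi) / 2
        if cdf(mid) < p:
            lo = mid   # the quantile lies to the right of mid
        else:
            hi = mid   # the quantile lies at or to the left of mid
    return (lo + hi) / 2


standard_normal = NormalDist()
print(standard_normal.inv_cdf(0.5))                        # the median
print(quantile_by_bisection(standard_normal.cdf, 0.975))   # numerical inverse
print(standard_normal.inv_cdf(0.975))                      # library quantile
```

The first line returns 0 for the standard normal, matching the example in the text, and the two values for probability 0.975 agree to within the bisection tolerance.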