
Bernoulli Sequence

In probability and statistics, a Bernoulli process (named after Jacob Bernoulli) is a finite or infinite sequence of binary random variables, so it is a discrete-time stochastic process that takes only two values, canonically 0 and 1. The component Bernoulli variables $X_i$ are identically distributed and independent. Prosaically, a Bernoulli process is repeated coin flipping, possibly with an unfair coin (but with consistent unfairness). Every variable $X_i$ in the sequence is associated with a Bernoulli trial or experiment. They all have the same Bernoulli distribution. Much of what can be said about the Bernoulli process can also be generalized to more than two outcomes (such as the process for a six-sided die); this generalization is known as the Bernoulli scheme. The problem of determining the process, given only a limited sample of Bernoulli trials, may be called the problem of checking whether a coin is fair. Definition A Bernoulli process is a fini ...
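
As a rough illustration of the coin-flipping description above, the following sketch simulates a finite Bernoulli process and estimates the success probability from the sample. The function name `bernoulli_process`, the value `p = 0.3`, and the fixed seed are assumptions chosen for the example, not part of the source.

```python
import random

def bernoulli_process(p, n, seed=None):
    """Return n independent draws, each 1 with probability p and 0 otherwise."""
    rng = random.Random(seed)  # private generator so the run is reproducible
    return [1 if rng.random() < p else 0 for _ in range(n)]

# Hypothetical unfair coin with success probability 0.3.
sample = bernoulli_process(p=0.3, n=10_000, seed=0)

# The sample mean is the natural estimate of p from a limited sample.
estimate = sum(sample) / len(sample)
print(f"estimated p = {estimate:.3f}")
```

Comparing `estimate` with 0.5 is the simplest form of the "checking whether a coin is fair" problem mentioned above; a real test would also quantify the sampling error.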

Probability

Probability is the branch of mathematics concerning numerical descriptions of how likely an event is to occur, or how likely it is that a proposition is true. The probability of an event is a number between 0 and 1, where, roughly speaking, 0 indicates impossibility of the event and 1 indicates certainty (Alan Stuart and Keith Ord, *Kendall's Advanced Theory of Statistics, Volume 1: Distribution Theory*, 6th ed., 2009; William Feller, *An Introduction to Probability Theory and Its Applications*, Vol. 1, 3rd ed., Wiley, 1968). The higher the probability of an event, the more likely it is that the event will occur. A simple example is the tossing of a fair (unbiased) coin. Since the coin is fair, the two outcomes ("heads" and "tails") are both equally probable; the probability of "heads" equals the probability of "tails"; and since no other outcomes are possible, the probability of either "heads" or "tails" is 1/2 (which could also be written ...

Negative Binomial Distribution

In probability theory and statistics, the negative binomial distribution is a discrete probability distribution that models the number of failures in a sequence of independent and identically distributed Bernoulli trials before a specified (non-random) number of successes (denoted $r$) occurs. For example, we can define rolling a 6 on a die as a success, and rolling any other number as a failure, and ask how many failure rolls will occur before we see the third success ($r = 3$). In such a case, the probability distribution of the number of failures that appear will be a negative binomial distribution. An alternative formulation is to model the number of total trials (instead of the number of failures). In fact, for a specified (non-ra ...
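
The die example above can be made concrete with a short sketch. It assumes the "number of failures before the $r$-th success" formulation, for which the standard probability mass function is $P(K = k) = \binom{k + r - 1}{k} (1 - p)^k p^r$; the function name `neg_binomial_pmf` is illustrative.

```python
from math import comb

def neg_binomial_pmf(k, r, p):
    """P(exactly k failures occur before the r-th success), success probability p."""
    return comb(k + r - 1, k) * (1 - p) ** k * p ** r

# Die example: success = rolling a 6 (p = 1/6), stop at the third success (r = 3).
r, p = 3, 1 / 6
probs = [neg_binomial_pmf(k, r, p) for k in range(2000)]

total = sum(probs)                               # should be very close to 1
mean = sum(k * q for k, q in enumerate(probs))   # theory: r * (1 - p) / p
print(f"total = {total:.6f}, mean failures = {mean:.3f}")
```

The truncation at 2000 terms is an arbitrary cutoff for the illustration; the tail beyond it is negligible for these parameters.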

Iverson Bracket

In mathematics, the Iverson bracket, named after Kenneth E. Iverson, is a notation that generalises the Kronecker delta, which is the Iverson bracket of the statement $x = y$. It maps any statement to a function of the free variables in that statement. This function is defined to take the value 1 for the values of the variables for which the statement is true, and takes the value 0 otherwise. It is generally denoted by putting the statement inside square brackets: $[P] = \begin{cases} 1 & \text{if } P \text{ is true;} \\ 0 & \text{otherwise.} \end{cases}$ In other words, the Iverson bracket of a statement is the indicator function of the set of values for which the statement is true. The Iverson bracket allows using capital-sigma notation without summation index. That is, for any property $P(k)$ of the integer $k$, $\sum_k f(k)\,[P(k)] = \sum_{P(k)} f(k)$. By convention, $f(k)$ does not need to be defined for the values of $k$ for which the Iverson bracket equals 0; that is, a summand $f(k)[\textbf{false}]$ must evaluate to 0 regardless of whether $f(k)$ is ...
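
A minimal sketch of the bracket as an indicator function, and of the summation identity above, might look like this. The names `iverson` and `is_odd`, and the choice $f(k) = k^2$ over $k = 0, \dots, 9$, are assumptions for the example.

```python
def iverson(statement):
    """Iverson bracket: 1 if the statement is true, 0 otherwise."""
    return 1 if statement else 0

def is_odd(k):
    return k % 2 == 1

ks = range(10)

# Left-hand side: sum over all k, with the bracket switching terms on and off.
lhs = sum(k * k * iverson(is_odd(k)) for k in ks)

# Right-hand side: sum restricted to the k for which the property holds.
rhs = sum(k * k for k in ks if is_odd(k))

# The Kronecker delta is the bracket of an equality statement.
def kronecker_delta(i, j):
    return iverson(i == j)

print(lhs, rhs)  # both sums equal 165
```

One caveat: Python evaluates `f(k)` before multiplying, so the first form requires `f(k)` to be defined everywhere, whereas the filtered form matches the convention that the summand is 0 even where `f(k)` is undefined.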

Probability Mass Function

In probability and statistics, a probability mass function is a function that gives the probability that a discrete random variable is exactly equal to some value. Sometimes it is also known as the discrete density function. The probability mass function is often the primary means of defining a discrete probability distribution, and such functions exist for either scalar or multivariate random variables whose domain is discrete. A probability mass function differs from a probability density function (PDF) in that the latter is associated with continuous rather than discrete random variables. A PDF must be integrated over an interval to yield a probability. The value of the random variable having the largest probability mass is called the mode. Formal definition Probability mass function is the probability distribution of a discrete random variable, and provides the possible values and their associated probabilities. It is the function $p: \mathbb{R} \to [0,1]$ defined by $p_X(x) = P(X = x)$ for ...
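
To make the definition tangible, the sketch below builds the probability mass function of a simple discrete random variable and reads off its mode. The choice of random variable (the sum of two fair six-sided dice) and the names `pmf` and `p` are assumptions for the example.

```python
from collections import Counter
from fractions import Fraction
from itertools import product

# Count how many of the 36 equally likely outcomes give each sum.
counts = Counter(a + b for a, b in product(range(1, 7), repeat=2))

# Exact probabilities for every value the variable can take.
pmf = {value: Fraction(count, 36) for value, count in counts.items()}

def p(x):
    """Probability that the sum equals x exactly; 0 for any other real x."""
    return pmf.get(x, Fraction(0))

# The mode is the value with the largest probability mass.
mode = max(pmf, key=pmf.get)
print(mode, p(mode), sum(pmf.values()))
```

Using `Fraction` keeps the probabilities exact, so the total mass is exactly 1 rather than a floating-point approximation.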

Discrete Random Variable

A random variable (also called random quantity, aleatory variable, or stochastic variable) is a mathematical formalization of a quantity or object which depends on random events. It is a mapping or a function from possible outcomes (e.g., the possible upper sides of a flipped coin such as heads $H$ and tails $T$) in a sample space (e.g., the set $\{H, T\}$) to a measurable space, often the real numbers (e.g., $\{-1, 1\}$, in which 1 corresponds to $H$ and $-1$ corresponds to $T$). Informally, randomness typically represents some fundamental element of chance, such as in the roll of a die; it may also represent uncertainty, such as measurement error. However, the interpretation of probability is philosophically complicated, and even in specific cases is not always straightforward. The purely mathematical analysis of random variables is independent of such interpretational difficulties, and can be based upon a rigorous axiomatic setup. In the formal mathematical language of measure theory, a random ...

Measure (mathematics)

In mathematics, the concept of a measure is a generalization and formalization of geometrical measures (length, area, volume) and other common notions, such as mass and probability of events. These seemingly distinct concepts have many similarities and can often be treated together in a single mathematical context. Measures are foundational in probability theory, integration theory, and can be generalized to assume negative values, as with electrical charge. Far-reaching generalizations (such as spectral measures and projection-valued measures) of measure are widely used in quantum physics and physics in general. The intuition behind this concept dates back to ancient Greece, when Archimedes tried to calculate the area of a circle. But it was not until the late 19th and early 20th centuries that measure theory became a branch of mathematics. The foundations of modern measure theory were laid in the works of Émile Borel, Henri Lebesgue, Nikolai Luzin, Johann Radon, Co ...

Borel Algebra

In mathematics, a Borel set is any set in a topological space that can be formed from open sets (or, equivalently, from closed sets) through the operations of countable union, countable intersection, and relative complement. Borel sets are named after Émile Borel. For a topological space $X$, the collection of all Borel sets on $X$ forms a σ-algebra, known as the Borel algebra or Borel σ-algebra. The Borel algebra on $X$ is the smallest σ-algebra containing all open sets (or, equivalently, all closed sets). Borel sets are important in measure theory, since any measure defined on the open sets of a space, or on the closed sets of a space, must also be defined on all Borel sets of that space. Any measure defined on the Borel sets is called a Borel measure. Borel sets and the associated Borel hierarchy also play a fundamental role in descriptive set theory. In some contexts, Borel sets are defined to be generated by the compact sets of the topological s ...

Sigma Algebra

Sigma (uppercase Σ, lowercase σ, lowercase in word-final position ς; Ancient Greek: σίγμα) is the eighteenth letter of the Greek alphabet. In the system of Greek numerals, it has a value of 200. In general mathematics, uppercase Σ is used as an operator for summation. When used at the end of a letter-case word (one that does not use all caps), the final form (ς) is used. In Ὀδυσσεύς (Odysseus), for example, the two lowercase sigmas (σ) in the center of the name are distinct from the word-final sigma (ς) at the end. The Latin letter S derives from sigma while the Cyrillic letter Es derives from a lunate form of this letter. History The shape (Σς) and alphabetic position of sigma is derived from the Phoenician letter *shin*. Sigma's original name may have been *san*, but due to the complicated early history of the Greek epichoric alphabets, *san* came to be identified as a separate letter in the Greek alphabet, represented as Ϻ. Herodotus reports that "san" wa ...

Cylinder Set

In mathematics, the cylinder sets form a basis of the product topology on a product of sets; they are also a generating family of the cylinder σ-algebra. General definition Given a collection $S$ of sets, consider the Cartesian product $X = \prod_{Y \in S} Y$ of all sets in the collection. The canonical projection corresponding to some $Y \in S$ is the function $p_Y : X \to Y$ that maps every element of the product to its $Y$ component. A cylinder set is a preimage of a canonical projection or finite intersection of such preimages. Explicitly, it is a set of the form $\bigcap_{i=1}^n p_{Y_i}^{-1}\left(A_i\right) = \left\{ x \in X \mid x_{Y_1} \in A_1, \dots, x_{Y_n} \in A_n \right\}$ for any choice of $n$, finite sequence of sets $Y_1, \dots, Y_n \in S$ and subsets $A_i \subseteq Y_i$ for $1 \leq i \leq n$. Here $x_Y \in Y$ denotes the $Y$ component of $x \in X$. Then, when all sets in $S$ are topological spaces, the product topology is generated by cylinder sets corresponding to the components' open sets. That is, cylinders of the form $\bigcap_{i=1}^n p_{Y_i}^{-1}\left(U_i\right)$ where for each $i$, $U_i$ is ope ...

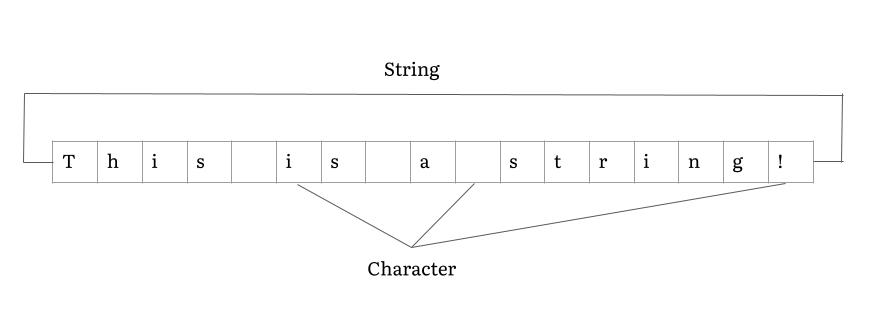

String (Computer Science)

In computer programming, a string is traditionally a sequence of characters, either as a literal constant or as some kind of variable. The latter may allow its elements to be mutated and the length changed, or it may be fixed (after creation). A string is generally considered as a data type and is often implemented as an array data structure of bytes (or words) that stores a sequence of elements, typically characters, using some character encoding. *String* may also denote more general arrays or other sequence (or list) data types and structures. Depending on the programming language and precise data type used, a variable declared to be a string may either cause storage in memory to be statically allocated for a predetermined maximum length or employ dynamic allocation to allow it to hold a variable number of elements. When a string appears literally in source code, it is known as a string literal or an anonymous string. In formal languages, which are used in mathemati ...

Product Topology

In topology and related areas of mathematics, a product space is the Cartesian product of a family of topological spaces equipped with a natural topology called the product topology. This topology differs from another, perhaps more natural-seeming, topology called the box topology, which can also be given to a product space and which agrees with the product topology when the product is over only finitely many spaces. However, the product topology is "correct" in that it makes the product space a categorical product of its factors, whereas the box topology is too fine; in that sense the product topology is the natural topology on the Cartesian product. Definition Throughout, $I$ will be some non-empty index set and for every index $i \in I$, let $X_i$ be a topological space. Denote the Cartesian product of the sets $X_i$ by $X := \prod X_{\bullet} := \prod_{i \in I} X_i$ and for every index $i \in I$, denote the $i$-th canonical projection by $p_i : \prod_{j \in I} X_j \to X_i$ ...

Topology

In mathematics, topology (from the Greek words τόπος, 'place, location', and λόγος, 'study') is concerned with the properties of a geometric object that are preserved under continuous deformations, such as stretching, twisting, crumpling, and bending; that is, without closing holes, opening holes, tearing, gluing, or passing through itself. A topological space is a set endowed with a structure, called a *topology*, which allows defining continuous deformation of subspaces, and, more generally, all kinds of continuity. Euclidean spaces, and, more generally, metric spaces are examples of a topological space, as any distance or metric defines a topology. The deformations that are considered in topology are homeomorphisms and homotopies. A property that is invariant under such deformations is a topological property. Basic examples of topological properties are: the dimension, which allows distinguishing between a line and a surface; compactness, which allows distinguishing between a line and a circle; connectedne ...