Viterbi algorithm

The Viterbi algorithm is a dynamic programming algorithm for obtaining the maximum a posteriori probability estimate of the most likely sequence of hidden states, called the Viterbi path, that results in a sequence of observed events. This is done especially in the context of Markov information sources and hidden Markov models (HMM).

The algorithm has found universal application in decoding the convolutional codes used in both CDMA and GSM digital cellular, dial-up modems, satellite, deep-space communications, and 802.11 wireless LANs. It is now also commonly used in speech recognition, speech synthesis, diarization, keyword spotting, computational linguistics, and bioinformatics. For example, in speech-to-text (speech recognition), the acoustic signal is treated as the observed sequence of events, and a string of text is considered to be the "hidden cause" of the acoustic signal. The Viterbi algorithm finds the most likely string of text given the acoustic signal.

History

The Viterbi algorithm is named after Andrew Viterbi, who proposed it in 1967 as a decoding algorithm for convolutional codes over noisy digital communication links. It has, however, a history of multiple invention, with at least seven independent discoveries, including those by Viterbi, Needleman and Wunsch, and Wagner and Fischer. It was introduced to natural language processing as a method of part-of-speech tagging as early as 1987.

''Viterbi path'' and ''Viterbi algorithm'' have become standard terms for the application of dynamic programming algorithms to maximization problems involving probabilities.

For example, in statistical parsing a dynamic programming algorithm can be used to discover the single most likely context-free derivation (parse) of a string, which is commonly called the "Viterbi parse". Another application is in target tracking, where the track is computed that assigns a maximum likelihood to a sequence of observations.

Algorithm

Given a hidden Markov model with a set of hidden states $S$ and a sequence of $T$ observations $o_0, o_1, \dots, o_{T-1}$, the Viterbi algorithm finds the most likely sequence of states that could have produced those observations. At each time step $t$, the algorithm solves the subproblem where only the observations up to $o_t$ are considered.

Two matrices of size $T \times |S|$ are constructed:

* $P_{t,s}$ contains the maximum probability of ending up at state $s$ at observation $t$, out of all possible sequences of states leading up to it.
* $Q_{t,s}$ tracks the previous state that was used before $s$ in this maximum probability state sequence.

Let $\pi_s$ and $a_{r,s}$ be the initial and transition probabilities respectively, and let $b_{s,o}$ be the probability of observing $o$ at state $s$. Then the values of $P$ are given by the recurrence relation

$$P_{t,s} = \begin{cases} \pi_s \cdot b_{s,o_t} & \text{if } t = 0, \\ \max_{r \in S} \left( P_{t-1,r} \cdot a_{r,s} \cdot b_{s,o_t} \right) & \text{if } t > 0. \end{cases}$$

The formula for $Q_{t,s}$ is identical for $t > 0$, except that $\max$ is replaced with $\arg\max$, and $Q_{0,s} = 0$. The Viterbi path can be found by selecting the maximum of $P$ at the final timestep, and following $Q$ in reverse.

Pseudocode

    function Viterbi(states, init, trans, emit, obs) is
        input states: S hidden states
        input init: initial probabilities of each state
        input trans: S × S transition matrix
        input emit: S × O emission matrix
        input obs: sequence of T observations

        prob ← T × S matrix of zeroes
        prev ← empty T × S matrix
        for each state s in states do
            prob[0][s] = init[s] * emit[s][obs[0]]

        for t = 1 to T - 1 inclusive do // t = 0 has been dealt with already
            for each state s in states do
                for each state r in states do
                    new_prob ← prob[t - 1][r] * trans[r][s] * emit[s][obs[t]]
                    if new_prob > prob[t][s] then
                        prob[t][s] ← new_prob
                        prev[t][s] ← r

        path ← empty array of length T
        path[T - 1] ← the state s with maximum prob[T - 1][s]
        for t = T - 2 to 0 inclusive do
            path[t] ← prev[t + 1][path[t + 1]]

        return path
    end

The time complexity of the algorithm is $O(T \times |S|^2)$. If it is known which state transitions have non-zero probability, an improved bound can be found by iterating over only those $r$ which link to $s$ in the inner loop. Then using amortized analysis one can show that the complexity is $O(T \times (|S| + |E|))$, where $E$ is the number of edges in the graph, i.e. the number of non-zero entries in the transition matrix.
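The pseudocode translates almost line for line into Python. The following is a minimal sketch, not a reference implementation: it assumes `init`, `trans` and `emit` are nested dictionaries keyed by state and observation, and it returns the joint probability of the best path alongside the path itself (an addition for convenience). The two-state model used to exercise it, with states `A` and `B` and observations `x` and `y`, is purely hypothetical.

```python
def viterbi(states, init, trans, emit, obs):
    """Return (path, probability) of the most likely state sequence for obs."""
    T = len(obs)
    if T == 0:
        return [], 1.0  # nothing observed: empty path (a convention chosen here)

    # prob[t][s]: highest probability of any state sequence ending in s at step t
    prob = [{s: 0.0 for s in states} for _ in range(T)]
    # prev[t][s]: predecessor of s on that best sequence (None if unreachable)
    prev = [{s: None for s in states} for _ in range(T)]

    for s in states:
        prob[0][s] = init[s] * emit[s][obs[0]]

    for t in range(1, T):  # t = 0 has been dealt with already
        for s in states:
            for r in states:
                new_prob = prob[t - 1][r] * trans[r][s] * emit[s][obs[t]]
                if new_prob > prob[t][s]:
                    prob[t][s] = new_prob
                    prev[t][s] = r

    # Pick the best final state, then follow the back-pointers in reverse.
    last = max(states, key=lambda s: prob[T - 1][s])
    path = [last]
    for t in range(T - 1, 0, -1):
        path.append(prev[t][path[-1]])
    path.reverse()
    return path, prob[T - 1][last]


# Hypothetical toy model, used only to exercise the function.
toy_states = ("A", "B")
toy_init = {"A": 0.5, "B": 0.5}
toy_trans = {"A": {"A": 0.9, "B": 0.1}, "B": {"A": 0.1, "B": 0.9}}
toy_emit = {"A": {"x": 0.8, "y": 0.2}, "B": {"x": 0.2, "y": 0.8}}
toy_path, toy_prob = viterbi(toy_states, toy_init, toy_trans, toy_emit, ["x", "x", "y"])
print(toy_path, toy_prob)
```

If every candidate probability at some step is zero, the back-pointers stay `None` and the returned path is not meaningful; a production version would need to detect that case. Long observation sequences also underflow quickly when raw probabilities are multiplied, which is why practical implementations usually work with logarithms.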

Example

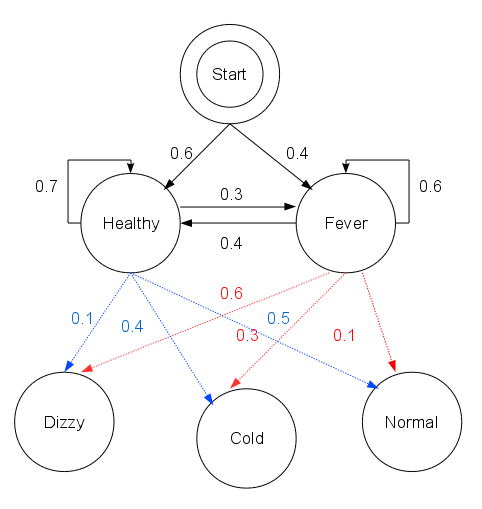

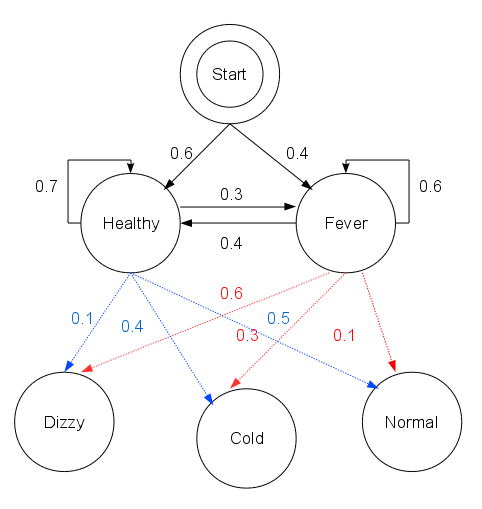

A doctor wishes to determine whether patients are healthy or have a fever. The only information the doctor can obtain is by asking patients how they feel. The patients may report that they either feel normal, dizzy, or cold. It is believed that the health condition of the patients operates as a discrete Markov chain. There are two states, "healthy" and "fever", but the doctor cannot observe them directly; they are ''hidden'' from the doctor. On each day, the chance that a patient tells the doctor "I feel normal", "I feel cold", or "I feel dizzy", depends only on the patient's health condition on that day.

The ''observations'' (normal, cold, dizzy) along with the ''hidden'' states (healthy, fever) form a hidden Markov model (HMM). From past experience, the probabilities of this model have been estimated and are held in three tables: init, trans and emit.

Here init represents the doctor's belief about how likely the patient is to be healthy initially. Note that the particular probability distribution used here is not the equilibrium one implied by the transition probabilities. The transition probabilities trans represent the change of health condition in the underlying Markov chain. In this example, a patient who is healthy today has only a 30% chance of having a fever tomorrow. The emission probabilities emit represent how likely each possible observation (normal, cold, or dizzy) is, given the underlying condition (healthy or fever). A patient who is healthy has a 50% chance of feeling normal; one who has a fever has a 60% chance of feeling dizzy.
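A minimal sketch of the three tables as Python dictionaries follows. The text confirms only three of the entries (healthy to fever 30%, healthy and normal 50%, fever and dizzy 60%); the remaining numbers are the ones usually quoted for this textbook example and should be read as assumptions here, although they do reproduce the probability 0.01512 quoted later for the best path. The helper functions `is_distribution` and `stationary_two_state` are hypothetical names introduced only for this illustration.

```python
states = ("Healthy", "Fever")
observations = ("normal", "cold", "dizzy")

# Assumed values, except where the article states them explicitly (see comments).
init = {"Healthy": 0.6, "Fever": 0.4}
trans = {
    "Healthy": {"Healthy": 0.7, "Fever": 0.3},   # 30% healthy -> fever is stated
    "Fever": {"Healthy": 0.4, "Fever": 0.6},
}
emit = {
    "Healthy": {"normal": 0.5, "cold": 0.4, "dizzy": 0.1},  # 50% normal is stated
    "Fever": {"normal": 0.1, "cold": 0.3, "dizzy": 0.6},    # 60% dizzy is stated
}


def is_distribution(table, tol=1e-9):
    """True if the values are non-negative and sum to 1 (within tol)."""
    return all(v >= 0 for v in table.values()) and abs(sum(table.values()) - 1.0) < tol


def stationary_two_state(trans, a, b):
    """Equilibrium distribution of a two-state chain with states a and b."""
    p_ab, p_ba = trans[a][b], trans[b][a]
    return {a: p_ba / (p_ab + p_ba), b: p_ab / (p_ab + p_ba)}


equilibrium = stationary_two_state(trans, "Healthy", "Fever")
print(equilibrium)
```

Under these assumed transition probabilities the equilibrium distribution is 4/7 healthy and 3/7 fever, which differs from the initial distribution used above, in line with the remark in the text.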

A particular patient visits three days in a row, and reports feeling normal on the first day, cold on the second day, and dizzy on the third day.

Firstly, the probabilities of being healthy or having a fever on the first day are calculated. The probability that a patient will be healthy on the first day and report feeling normal is init[healthy] × emit[healthy][normal]. Similarly, the probability that a patient will have a fever on the first day and report feeling normal is init[fever] × emit[fever][normal].

The probabilities for each of the following days can be calculated from the previous day directly. For example, the highest chance of being healthy on the second day and reporting to be cold, following reporting being normal on the first day, is the maximum of two products: the first-day probability for healthy × trans[healthy][healthy] × emit[healthy][cold], and the first-day probability for fever × trans[fever][healthy] × emit[healthy][cold]. The first product is the larger one, which suggests it is more likely that the patient was healthy on both of those days, rather than having a fever and recovering.
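The first two steps can be checked numerically with a few lines of Python. This is a sketch under the assumption that the model tables hold the values commonly used for this example; only the 30%, 50% and 60% figures are confirmed by the text.

```python
# Assumed model values (only 0.3, 0.5 and 0.6 below are stated in the article).
init = {"Healthy": 0.6, "Fever": 0.4}
trans = {
    "Healthy": {"Healthy": 0.7, "Fever": 0.3},
    "Fever": {"Healthy": 0.4, "Fever": 0.6},
}
emit = {
    "Healthy": {"normal": 0.5, "cold": 0.4, "dizzy": 0.1},
    "Fever": {"normal": 0.1, "cold": 0.3, "dizzy": 0.6},
}

# Day 1: the patient reports "normal".
day1 = {s: init[s] * emit[s]["normal"] for s in init}

# Day 2, state Healthy, report "cold": one candidate per possible day-1 state.
candidates = {
    r: day1[r] * trans[r]["Healthy"] * emit["Healthy"]["cold"] for r in day1
}
best_prev = max(candidates, key=candidates.get)

print(day1)
print(candidates, "-> best previous state:", best_prev)
```

With these values the day-one probabilities are 0.3 (healthy) and 0.04 (fever), and the two day-two candidates are 0.084 (healthy then healthy) and 0.0064 (fever then healthy), so staying healthy is the better explanation.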

The rest of the probabilities are summarised in the following table:

From the table, it can be seen that the patient most likely had a fever on the third day. Furthermore, there exists a sequence of states ending on "fever", of which the probability of producing the given observations is 0.01512. This sequence is precisely (healthy, healthy, fever), which can be found by tracing back which states were used when calculating the maxima (here these happen to coincide with the best guess for each day taken on its own, but that will not always be the case). In other words, given the observations, the patient was most likely to have been healthy on the first day and also on the second day (despite feeling cold that day), and only to have contracted a fever on the third day.
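The table and the trace-back described above can be recomputed with a short script. It is a sketch that assumes the usual values for this example (the text itself confirms only the 30%, 50% and 60% figures and the final probability 0.01512); the variable names `prob`, `prev` and `path` follow the pseudocode.

```python
states = ("Healthy", "Fever")
obs = ("normal", "cold", "dizzy")

# Assumed model values; see the lead-in.
init = {"Healthy": 0.6, "Fever": 0.4}
trans = {
    "Healthy": {"Healthy": 0.7, "Fever": 0.3},
    "Fever": {"Healthy": 0.4, "Fever": 0.6},
}
emit = {
    "Healthy": {"normal": 0.5, "cold": 0.4, "dizzy": 0.1},
    "Fever": {"normal": 0.1, "cold": 0.3, "dizzy": 0.6},
}

# Forward pass: one table row per day, plus back-pointers.
prob = [{s: init[s] * emit[s][obs[0]] for s in states}]
prev = [{}]
for t in range(1, len(obs)):
    row, back = {}, {}
    for s in states:
        best_r = max(states, key=lambda r: prob[t - 1][r] * trans[r][s])
        row[s] = prob[t - 1][best_r] * trans[best_r][s] * emit[s][obs[t]]
        back[s] = best_r
    prob.append(row)
    prev.append(back)

# Trace back from the most probable final state.
path = [max(states, key=lambda s: prob[-1][s])]
for t in range(len(obs) - 1, 0, -1):
    path.insert(0, prev[t][path[0]])

# Print the table: one line per hidden state, one column per day.
print("day     " + "".join(f"{d:>10}" for d in range(1, len(obs) + 1)))
print("report  " + "".join(f"{o:>10}" for o in obs))
for s in states:
    print(f"{s:<8}" + "".join(f"{prob[t][s]:>10.5f}" for t in range(len(obs))))
print("most likely path:", path)
```

Under these assumptions the rows come out as 0.3, 0.084, 0.00588 for healthy and 0.04, 0.027, 0.01512 for fever, and the trace-back yields (healthy, healthy, fever), matching the figures quoted in the text.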

The operation of Viterbi's algorithm can be visualized by means of a trellis diagram. The Viterbi path is essentially the shortest path through this trellis.
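The shortest-path reading becomes literal once every probability is replaced by its negative logarithm: products turn into sums, and maximising a probability becomes minimising a path length. The sketch below illustrates this on the doctor example; the function name `viterbi_shortest_path` is hypothetical and the model values are the assumed ones usually quoted for this example.

```python
import math


def viterbi_shortest_path(states, init, trans, emit, obs):
    """Viterbi as a minimum-cost path, with edge cost = -log(probability)."""

    def cost(p):
        return math.inf if p == 0 else -math.log(p)

    # dist[t][s]: length of the shortest trellis path ending at node (t, s)
    dist = [{s: cost(init[s]) + cost(emit[s][obs[0]]) for s in states}]
    back = [{}]
    for t in range(1, len(obs)):
        row, ptr = {}, {}
        for s in states:
            q = min(states, key=lambda r: dist[t - 1][r] + cost(trans[r][s]))
            row[s] = dist[t - 1][q] + cost(trans[q][s]) + cost(emit[s][obs[t]])
            ptr[s] = q
        dist.append(row)
        back.append(ptr)

    end = min(states, key=lambda s: dist[-1][s])
    path = [end]
    for t in range(len(obs) - 1, 0, -1):
        path.insert(0, back[t][path[0]])
    return path, dist[-1][end]


# Assumed values for the doctor example.
states = ("Healthy", "Fever")
init = {"Healthy": 0.6, "Fever": 0.4}
trans = {
    "Healthy": {"Healthy": 0.7, "Fever": 0.3},
    "Fever": {"Healthy": 0.4, "Fever": 0.6},
}
emit = {
    "Healthy": {"normal": 0.5, "cold": 0.4, "dizzy": 0.1},
    "Fever": {"normal": 0.1, "cold": 0.3, "dizzy": 0.6},
}

sp_path, sp_length = viterbi_shortest_path(
    states, init, trans, emit, ("normal", "cold", "dizzy")
)
print(sp_path, sp_length, math.exp(-sp_length))
```

Exponentiating the negated path length recovers the probability of the Viterbi path. Working with sums of logarithms also avoids the numerical underflow that repeated multiplication of small probabilities causes on long sequences.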

Extensions

A generalization of the Viterbi algorithm, termed the ''max-sum algorithm'' (or ''max-product algorithm''), can be used to find the most likely assignment of all or some subset of latent variables in a large number of graphical models, e.g. Bayesian networks, Markov random fields and conditional random fields. The latent variables need, in general, to be connected in a way somewhat similar to a hidden Markov model (HMM), with a limited number of connections between variables and some type of linear structure among the variables. The general algorithm involves ''message passing'' and is substantially similar to the belief propagation algorithm (which is the generalization of the forward-backward algorithm).

With an algorithm called iterative Viterbi decoding, one can find the subsequence of an observation that matches best (on average) to a given hidden Markov model. This algorithm was proposed by Qi Wang et al. to deal with turbo codes. Iterative Viterbi decoding works by iteratively invoking a modified Viterbi algorithm, reestimating the score for a filler until convergence.

An alternative algorithm, the Lazy Viterbi algorithm, has been proposed. For many applications of practical interest, under reasonable noise conditions, the lazy decoder (using Lazy Viterbi algorithm) is much faster than the original Viterbi decoder (using Viterbi algorithm). While the original Viterbi algorithm calculates every node in the trellis of possible outcomes, the Lazy Viterbi algorithm maintains a prioritized list of nodes to evaluate in order, and the number of calculations required is typically fewer (and never more) than the ordinary Viterbi algorithm for the same result. However, it is not so easy to parallelize in hardware.
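The idea of evaluating trellis nodes in priority order can be illustrated with a plain best-first (Dijkstra-style) search over negative log-probabilities. This is only a sketch in the spirit of the description above, not a reproduction of the published Lazy Viterbi decoder; the function name `best_first_viterbi` and the model values (the ones usually quoted for the doctor example) are assumptions.

```python
import heapq
import itertools
import math


def best_first_viterbi(states, init, trans, emit, obs):
    """Expand trellis nodes cheapest-first; stop at the first final-layer node."""

    def cost(p):
        return math.inf if p == 0 else -math.log(p)

    T = len(obs)
    tie = itertools.count()  # tie-breaker so the heap never compares node data
    heap = []
    for s in states:
        c = cost(init[s] * emit[s][obs[0]])
        if c < math.inf:
            heapq.heappush(heap, (c, next(tie), 0, s, None))

    parent = {}  # finalized node (t, state) -> predecessor node
    while heap:
        c, _, t, s, par = heapq.heappop(heap)
        if (t, s) in parent:
            continue  # already finalized via a cheaper route
        parent[(t, s)] = par
        if t == T - 1:
            # All costs are non-negative, so this node closes the best path.
            path, node = [], (t, s)
            while node is not None:
                path.append(node[1])
                node = parent[node]
            path.reverse()
            return path, math.exp(-c), len(parent)
        for s2 in states:
            step = cost(trans[s][s2] * emit[s2][obs[t + 1]])
            if step < math.inf and (t + 1, s2) not in parent:
                heapq.heappush(heap, (c + step, next(tie), t + 1, s2, (t, s)))
    return None, 0.0, len(parent)  # no path with non-zero probability


# Assumed values for the doctor example.
states = ("Healthy", "Fever")
init = {"Healthy": 0.6, "Fever": 0.4}
trans = {
    "Healthy": {"Healthy": 0.7, "Fever": 0.3},
    "Fever": {"Healthy": 0.4, "Fever": 0.6},
}
emit = {
    "Healthy": {"normal": 0.5, "cold": 0.4, "dizzy": 0.1},
    "Fever": {"normal": 0.1, "cold": 0.3, "dizzy": 0.6},
}

bf_path, bf_prob, bf_expanded = best_first_viterbi(
    states, init, trans, emit, ("normal", "cold", "dizzy")
)
print(bf_path, bf_prob, "nodes finalized:", bf_expanded)
```

Because each node is finalized at most once, the search never touches more nodes than the full trellis contains, and it can stop early when one path is clearly cheaper than the rest. The heap makes the control flow data-dependent, which is consistent with the remark that such a decoder is harder to parallelize in hardware.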

Soft output Viterbi algorithm

The soft output Viterbi algorithm (SOVA) is a variant of the classical Viterbi algorithm. SOVA differs from the classical Viterbi algorithm in that it uses a modified path metric which takes into account the ''a priori probabilities'' of the input symbols, and produces a ''soft'' output indicating the ''reliability'' of the decision.

The first step in the SOVA is the selection of the survivor path, passing through one unique node at each time instant, ''t''. Since each node has 2 branches converging at it (with one branch being chosen to form the ''survivor path'', and the other being discarded), the difference in the branch metrics (or ''cost'') between the chosen and discarded branches indicates the ''amount of error'' in the choice. This ''cost'' is accumulated over the entire sliding window (usually ''at least'' five constraint lengths long) to indicate the ''soft output'' measure of reliability of the ''hard bit decision'' of the Viterbi algorithm.

See also

* Expectation–maximization algorithm
* Baum–Welch algorithm
* Forward-backward algorithm
* Forward algorithm
* Error-correcting code
* Viterbi decoder
* Hidden Markov model
* Part-of-speech tagging
* A* search algorithm

External links

* Implementations in Java, F#, Clojure, C# on Wikibooks
* Tutorial on convolutional coding with Viterbi decoding, by Chip Fleming
* A tutorial for a Hidden Markov Model toolkit (implemented in C) that contains a description of the Viterbi algorithm
* Viterbi algorithm by Dr. Andrew J. Viterbi (scholarpedia.org).

Implementations

* Mathematica has an implementation as part of its support for stochastic processes
* Susa signal processing framework provides the C++ implementation for forward error correction codes and channel equalization
* C++
* C#
* Java
* Java 8
* Julia (HMMBase.jl)
* Perl
* Prolog (archived 2012-05-02 at https://web.archive.org/web/20120502010115/http://www.cs.stonybrook.edu/~pfodor/viterbi/viterbi.P)
* Go
* SFIHMM includes code for Viterbi decoding.