
Gamma Distribution

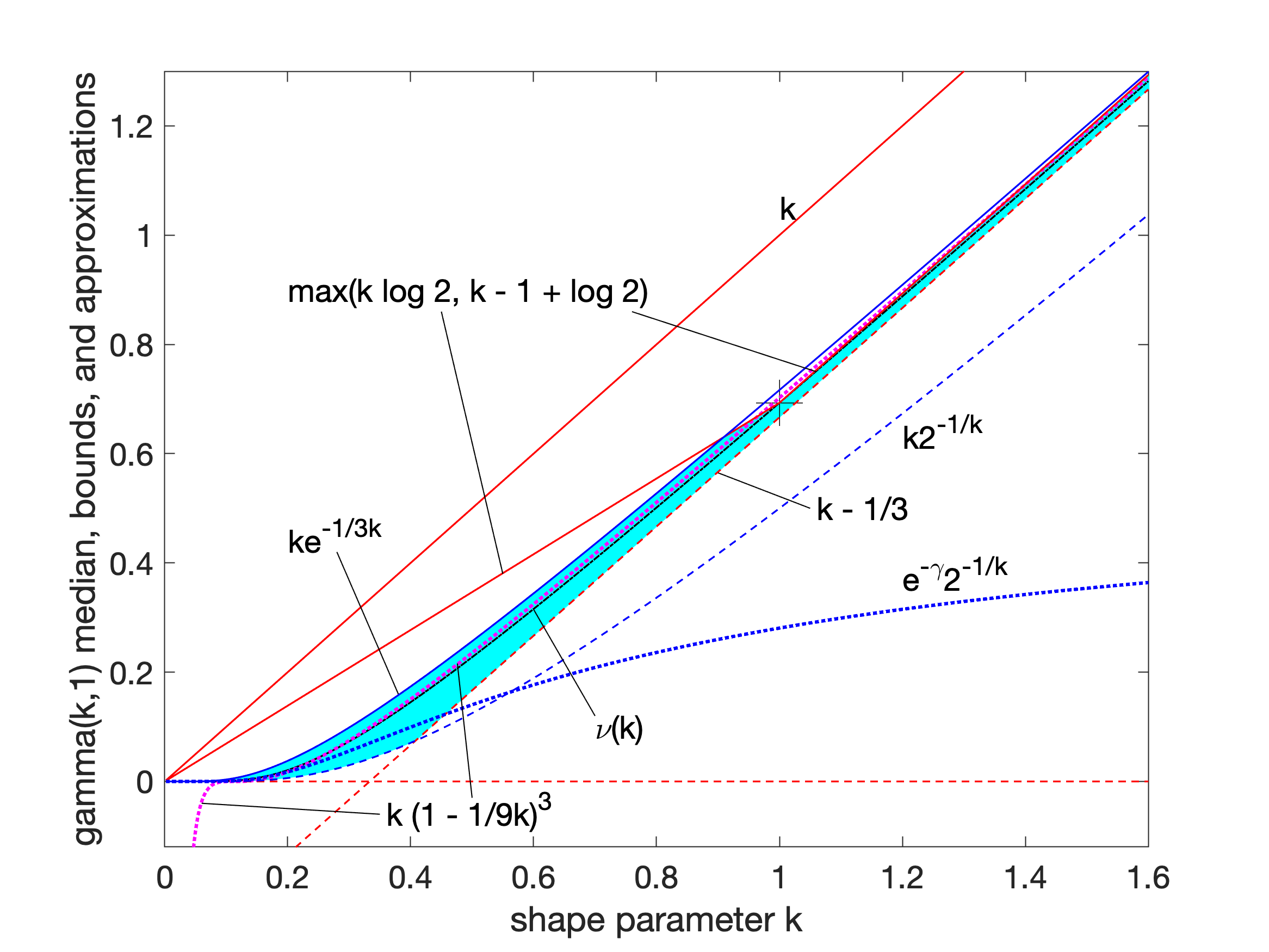

In probability theory and statistics, the gamma distribution is a versatile two-parameter family of continuous probability distributions. The exponential distribution, Erlang distribution, and chi-squared distribution are special cases of the gamma distribution. There are two equivalent parameterizations in common use:

1. With a shape parameter α and a scale parameter θ
2. With a shape parameter α and a rate parameter λ = 1/θ

In each of these forms, both parameters are positive real numbers. The distribution has important applications in various fields, including econometrics, Bayesian statistics, and life testing. In econometrics, the (α, θ) parameterization is common for modeling waiting times, such as the time until death, where it often takes the form of an Erlang distribution for integer α values. Bayesian statisticians prefer the (α, λ) parameterization, utilizing the gamma distribution as a conjugate prior for several inverse scale parameters, facilit ...
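The equivalence of the shape/scale and shape/rate parameterizations can be checked numerically. The sketch below assumes the standard gamma density, which the excerpt does not state, and the relation λ = 1/θ; the function names and the sample values are illustrative only.

```python
import math


def gamma_pdf_scale(x, alpha, theta):
    """Gamma density at x > 0 with shape alpha and scale theta."""
    return x ** (alpha - 1) * math.exp(-x / theta) / (math.gamma(alpha) * theta ** alpha)


def gamma_pdf_rate(x, alpha, lam):
    """Gamma density at x > 0 with shape alpha and rate lam."""
    return lam ** alpha * x ** (alpha - 1) * math.exp(-lam * x) / math.gamma(alpha)


# Hypothetical parameter values chosen only for illustration.
alpha, theta = 2.5, 4.0
lam = 1.0 / theta  # rate is the reciprocal of scale

# Both forms describe the same distribution, so the densities agree.
same_density = math.isclose(
    gamma_pdf_scale(3.0, alpha, theta),
    gamma_pdf_rate(3.0, alpha, lam),
)
```

With shape α = 1 the rate form reduces to the exponential density λ·exp(−λx), which is one of the special cases mentioned above.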

Accelerated Life Testing

Accelerated life testing is the process of testing a product by subjecting it to conditions (stress, strain, temperatures, voltage, vibration rate, pressure, etc.) in excess of its normal service parameters in an effort to uncover faults and potential modes of failure in a short amount of time. By analyzing the product's response to such tests, engineers can make predictions about the service life and maintenance intervals of a product. In polymers, testing may be done at elevated temperatures to produce a result in a shorter amount of time than it could be produced at ambient temperatures. Many mechanical properties of polymers have an Arrhenius-type relationship with respect to time and temperature (for example, creep, stress relaxation, and tensile properties). If one conducts short tests at elevated temperatures, that data can be used to extrapolate the behavior of the polymer at room temperature, avoiding the need to do lengthy, and hence expensive, tests. Purpose ALT is p ...
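The Arrhenius-type extrapolation mentioned above is often summarized as an acceleration factor between a stress temperature and a use temperature. The following is a minimal sketch under that assumption; the activation energy and temperatures are hypothetical values, not data from any test, and the function name is illustrative.

```python
import math

BOLTZMANN_EV_PER_K = 8.617333262e-5  # Boltzmann constant in eV/K


def arrhenius_acceleration_factor(activation_energy_ev, use_temp_c, stress_temp_c):
    """Ratio of the reaction rate at the stress temperature to the rate at the use temperature."""
    use_k = use_temp_c + 273.15        # convert Celsius to kelvin
    stress_k = stress_temp_c + 273.15
    exponent = activation_energy_ev / BOLTZMANN_EV_PER_K * (1.0 / use_k - 1.0 / stress_k)
    return math.exp(exponent)


# Hypothetical example: 0.7 eV activation energy, 25 °C use, 85 °C stress.
factor = arrhenius_acceleration_factor(0.7, 25.0, 85.0)
```

A factor greater than 1 means one hour at the stress temperature stands in for `factor` hours at the use temperature, provided the same failure mechanism applies at both temperatures.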

Coefficient Of Variation

In probability theory and statistics, the coefficient of variation (CV), also known as normalized root-mean-square deviation (NRMSD), percent RMS, and relative standard deviation (RSD), is a standardized measure of dispersion of a probability distribution or frequency distribution. It is defined as the ratio of the standard deviation \sigma to the mean \mu (or its absolute value, |\mu|), and is often expressed as a percentage ("%RSD"). The CV or RSD is widely used in analytical chemistry to express the precision and repeatability of an assay. It is also commonly used in fields such as engineering or physics when doing quality assurance studies and ANOVA gauge R&R, by economists and investors in economic models, in epidemiology, and in psychology/neuroscience. Definition The coefficient of variation (CV) is defined as the ratio of the standard deviation \sigma to the mean \mu, CV = \frac{\sigma}{\mu}. It shows the extent of variability in relation to the mean of the population. The coefficien ...
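As a small worked example of the ratio, the sketch below computes the CV of a data set. It assumes the population standard deviation and divides by the absolute value of the mean; the function name and the data are illustrative.

```python
import statistics


def coefficient_of_variation(values):
    """Population standard deviation divided by the absolute value of the mean."""
    mean = statistics.fmean(values)
    if mean == 0:
        raise ValueError("CV is undefined when the mean is zero")
    return statistics.pstdev(values) / abs(mean)


# Illustrative data: mean 5, population standard deviation 2.
data = [2, 4, 4, 4, 5, 5, 7, 9]
cv = coefficient_of_variation(data)
percent_rsd = 100 * cv  # the same quantity expressed as a percentage
```

For this data set the ratio is 2/5, so the CV is 0.4, or 40% when expressed as %RSD.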

Probability Density Function

In probability theory, a probability density function (PDF), density function, or density of an absolutely continuous random variable, is a function whose value at any given sample (or point) in the sample space (the set of possible values taken by the random variable) can be interpreted as providing a relative likelihood that the value of the random variable would be equal to that sample. Probability density is the probability per unit length. In other words, while the absolute likelihood for a continuous random variable to take on any particular value is 0 (since there is an infinite set of possible values to begin with), the value of the PDF at two different samples can be used to infer, in any particular draw of the random variable, how much more likely it is that the random variable would be close to one sample compared to the other sample. More precisely, the PDF is used to specify the probability of the random variable falling within ...

Incomplete Gamma Function

In mathematics, the upper and lower incomplete gamma functions are types of special functions which arise as solutions to various mathematical problems such as certain integrals. Their respective names stem from their integral definitions, which are defined similarly to the gamma function but with different or "incomplete" integral limits. The gamma function is defined as an integral from zero to infinity. This contrasts with the lower incomplete gamma function, which is defined as an integral from zero to a variable upper limit. Similarly, the upper incomplete gamma function is defined as an integral from a variable lower limit to infinity. Definition The upper incomplete gamma function is defined as: \Gamma(s,x) = \int_x^{\infty} t^{s-1}\,e^{-t}\, dt , whereas the lower incomplete gamma function is defined as: \gamma(s,x) = \int_0^x t^{s-1}\,e^{-t}\, dt . In both cases s is a complex parameter, such that the real part of s is positive. Properties By integration by parts we find the recurrence relati ...
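The integral definitions can be illustrated with a crude numerical sketch. It assumes a real parameter s ≥ 1 (so the integrand stays bounded near zero) and uses a plain midpoint rule; the upper function is obtained from the fact that the two integrals together cover the whole range of the gamma function integral. The function names and step count are illustrative.

```python
import math


def lower_incomplete_gamma(s, x, steps=20000):
    """Midpoint-rule estimate of the integral of t**(s-1) * exp(-t) from 0 to x (real s >= 1)."""
    h = x / steps
    total = 0.0
    for i in range(steps):
        t = (i + 0.5) * h  # midpoint of the i-th subinterval
        total += t ** (s - 1) * math.exp(-t)
    return total * h


def upper_incomplete_gamma(s, x, steps=20000):
    """Integral from x to infinity, computed as Gamma(s) minus the lower part."""
    return math.gamma(s) - lower_incomplete_gamma(s, x, steps)


# For s = 2 the lower function has the closed form 1 - (1 + x) * exp(-x).
estimate = lower_incomplete_gamma(2.0, 1.5)
closed_form = 1.0 - 2.5 * math.exp(-1.5)
```

The midpoint rule is used here only for transparency; it is not an accurate or efficient method for general arguments.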

Cumulative Distribution Function

In probability theory and statistics, the cumulative distribution function (CDF) of a real-valued random variable X, or just distribution function of X, evaluated at x, is the probability that X will take a value less than or equal to x. Every probability distribution supported on the real numbers, discrete or "mixed" as well as continuous, is uniquely identified by a right-continuous monotone increasing function (a càdlàg function) F \colon \mathbb{R} \rightarrow [0,1] satisfying \lim_{x \to -\infty} F(x) = 0 and \lim_{x \to \infty} F(x) = 1. In the case of a scalar continuous distribution, it gives the area under the probability density function from negative infinity to x. Cumulative distribution functions are also used to specify the distribution of multivariate random variables. Definition The cumulative distribution function of a real-valued random variable X is the function given by F_X(x) = \operatorname{P}(X \leq x), where the right-hand side represents the probability ...
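The "less than or equal to" convention, and the right-continuity it implies, can be seen in an empirical CDF built from a finite sample. This is a minimal sketch; the helper name and the sample are illustrative.

```python
from bisect import bisect_right


def empirical_cdf(sample):
    """Return F where F(x) is the fraction of sample values less than or equal to x."""
    ordered = sorted(sample)
    n = len(ordered)

    def cdf(x):
        # bisect_right counts the entries <= x, which matches P(X <= x).
        return bisect_right(ordered, x) / n

    return cdf


F = empirical_cdf([1, 2, 2, 3])
values = [F(0), F(1), F(2), F(2.5), F(3)]
```

The step at x = 2 is already included in F(2), so the function jumps at the data points and stays flat between them, rising from 0 to 1 overall.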

Gamma Function

In mathematics, the gamma function (represented by Γ, the capital Greek letter gamma) is the most common extension of the factorial function to complex numbers. Derived by Daniel Bernoulli, the gamma function \Gamma(z) is defined for all complex numbers z except non-positive integers, and for every positive integer z=n, \Gamma(n) = (n-1)!\,. The gamma function can be defined via a convergent improper integral for complex numbers with positive real part: \Gamma(z) = \int_0^\infty t^{z-1} e^{-t}\, dt, \qquad \Re(z) > 0\,. The gamma function then is defined in the complex plane as the analytic continuation of this integral function: it is a meromorphic function which is holomorphic except at zero and the negative integers, where it has simple poles. The gamma function has no zeros, so the reciprocal gamma function is an entire function. In fact, the gamma function corresponds to the Mellin transform of the negative exponential functi ...
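The factorial relationship can be checked directly with Python's standard library, which provides the gamma function for real arguments as `math.gamma`. The helper name below is illustrative.

```python
import math


def gamma_matches_factorial(n):
    """Check that Gamma(n) equals (n - 1)! for a positive integer n."""
    return math.isclose(math.gamma(n), math.factorial(n - 1))


# Positive integers 1 through 10 all satisfy the identity.
all_match = all(gamma_matches_factorial(n) for n in range(1, 11))

# A non-integer argument: Gamma(1/2) equals the square root of pi.
gamma_half = math.gamma(0.5)
```

`math.gamma` raises `ValueError` at zero and at the negative integers, which are the poles described above.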

Exponentially Distributed

In probability theory and statistics, the exponential distribution or negative exponential distribution is the probability distribution of the distance between events in a Poisson point process, i.e., a process in which events occur continuously and independently at a constant average rate; the distance parameter could be any meaningful mono-dimensional measure of the process, such as time between production errors, or length along a roll of fabric in the weaving manufacturing process. It is a particular case of the gamma distribution. It is the continuous analogue of the geometric distribution, and it has the key property of being memoryless. In addition to being used for the analysis of Poisson point processes it is found in various other contexts. The exponential distribution is not the same as the class of exponential families of distributions. This is a large class of probability distributions that includes the exponential distribution as one of its members, but also inc ...
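The memoryless property says that, having already waited s units, the chance of waiting at least t more is the same as the chance of waiting at least t from the start. The sketch below assumes the standard survival function exp(−λt) for rate λ; the function names and numbers are illustrative.

```python
import math


def survival(t, rate):
    """P(X > t) for an exponential random variable with the given rate."""
    return math.exp(-rate * t)


def conditional_survival(s, t, rate):
    """P(X > s + t | X > s), computed from the definition of conditional probability."""
    return survival(s + t, rate) / survival(s, rate)


# Hypothetical values: rate 0.5 per unit time, 2 units already elapsed, 3 more to wait.
lhs = conditional_survival(2.0, 3.0, 0.5)
rhs = survival(3.0, 0.5)
memoryless = math.isclose(lhs, rhs)
```

The elapsed time s cancels out of the ratio, which is why the two quantities agree for any choice of s.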

Integer

An integer is the number zero (0), a positive natural number (1, 2, 3, ...), or the negation of a positive natural number (−1, −2, −3, ...). The negations or additive inverses of the positive natural numbers are referred to as negative integers. The set of all integers is often denoted by the boldface Z or blackboard bold \mathbb{Z}. The set of natural numbers \mathbb{N} is a subset of \mathbb{Z}, which in turn is a subset of the set of all rational numbers \mathbb{Q}, itself a subset of the real numbers \mathbb{R}. Like the set of natural numbers, the set of integers \mathbb{Z} is countably infinite. An integer may be regarded as a real number that can be written without a fractional component. For example, 21, 4, 0, and −2048 are integers, while 9.75, 5/4, and \sqrt{2} are not. The integers form the smallest group and the smallest ring containing the natural numbers. In algebraic number theory, the ...

Normal Distribution

In probability theory and statistics, a normal distribution or Gaussian distribution is a type of continuous probability distribution for a real-valued random variable. The general form of its probability density function is f(x) = \frac{1}{\sqrt{2\pi\sigma^2}} e^{-\frac{(x-\mu)^2}{2\sigma^2}}\,. The parameter \mu is the mean or expectation of the distribution (and also its median and mode), while the parameter \sigma^2 is the variance. The standard deviation of the distribution is \sigma (sigma). A random variable with a Gaussian distribution is said to be normally distributed, and is called a normal deviate. Normal distributions are important in statistics and are often used in the natural and social sciences to represent real-valued random variables whose distributions are not known. Their importance is partly due to the central limit theorem. It states that, under some conditions, the average of many samples (observations) of a random variable with finite mean and variance is itself a random variable, whose distribution c ...
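The density can be written out directly in terms of the mean μ and variance σ² and compared against Python's `statistics.NormalDist`. This is a minimal sketch; the function name and the test point are illustrative.

```python
import math
from statistics import NormalDist


def normal_pdf(x, mu, sigma):
    """Normal density with mean mu and standard deviation sigma, evaluated at x."""
    variance = sigma ** 2
    return math.exp(-((x - mu) ** 2) / (2 * variance)) / math.sqrt(2 * math.pi * variance)


# Compare the hand-written formula with the standard library at an arbitrary point.
by_hand = normal_pdf(1.3, 0.5, 2.0)
by_library = NormalDist(mu=0.5, sigma=2.0).pdf(1.3)
agree = math.isclose(by_hand, by_library)
```

At x = μ the exponential term equals 1, so the peak height of the density is 1/√(2πσ²).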

Variance

In probability theory and statistics, variance is the expected value of the squared deviation from the mean of a random variable. The standard deviation (SD) is obtained as the square root of the variance. Variance is a measure of dispersion, meaning it is a measure of how far a set of numbers is spread out from their average value. It is the second central moment of a distribution, and the covariance of the random variable with itself, and it is often represented by \sigma^2, s^2, \operatorname{Var}(X), V(X), or \mathbb{V}(X). An advantage of variance as a measure of dispersion is that it is more amenable to algebraic manipulation than other measures of dispersion such as the expected absolute deviation; for example, the variance of a sum of uncorrelated random variables is equal to the sum of their variances. A disadvantage of the variance for practical applications is that, unlike the standard deviation, its units differ from the random variable, which is why the standard devi ...
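The additivity of variance for uncorrelated variables can be verified exactly on a small example. The sketch below enumerates two independent fair dice, an illustrative choice, and uses exact fractions so no rounding is involved.

```python
from fractions import Fraction
from itertools import product


def variance(outcomes):
    """Variance of a random variable that takes each listed outcome with equal probability."""
    n = len(outcomes)
    mean = Fraction(sum(outcomes), n)
    return sum((Fraction(v) - mean) ** 2 for v in outcomes) / n


die = [1, 2, 3, 4, 5, 6]
# All 36 equally likely sums of two independent dice.
two_dice = [a + b for a, b in product(die, repeat=2)]

var_one = variance(die)        # variance of a single die
var_sum = variance(two_dice)   # variance of the sum of two dice
```

Here `var_sum` equals `var_one + var_one`, as expected for independent (and therefore uncorrelated) variables.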