|

GPT-2

Generative Pre-trained Transformer 2 (GPT-2) is a large language model by OpenAI and the second in their foundational series of Generative pre-trained transformer, GPT models. GPT-2 was pre-trained on a dataset of 8 million web pages. It was partially released in February 2019, followed by full release of the 1.5-billion-parameter model on November 5, 2019. GPT-2 was created as a "direct scale-up" of GPT-1 with a ten-fold increase in both its parameter count and the size of its training dataset. It is a general-purpose learner and its ability to perform the various tasks was a consequence of its general ability to accurately predict the next item in a sequence, which enabled it to machine translation, translate texts, question answering, answer questions about a topic from a text, automatic summarization, summarize passages from a larger text, and natural language generation, generate text output on a level sometimes Turing test, indistinguishable from that of humans; however, it ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

GPT-3

Generative Pre-trained Transformer 3 (GPT-3) is a large language model released by OpenAI in 2020. Like its predecessor, GPT-2, it is a decoder-only transformer model of deep neural network, which supersedes recurrence and convolution-based architectures with a technique known as "attention". This attention mechanism allows the model to focus selectively on segments of input text it predicts to be most relevant. GPT-3 has 175 billion parameters, each with 16-bit precision, requiring 350GB of storage since each parameter occupies 2 bytes. It has a context window size of 2048 tokens, and has demonstrated strong " zero-shot" and " few-shot" learning abilities on many tasks. On September 22, 2020, Microsoft announced that it had licensed GPT-3 exclusively. Others can still receive output from its public API, but only Microsoft has access to the underlying model. Background According to ''The Economist'', improved algorithms, more powerful computers, and a recent increase i ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

GPT-1

Generative Pre-trained Transformer 1 (GPT-1) was the first of OpenAI's large language models following Google's invention of the transformer architecture in 2017. In June 2018, OpenAI released a paper entitled "Improving Language Understanding by Generative Pre-Training", in which they introduced that initial model along with the general concept of a generative pre-trained transformer. Up to that point, the best-performing neural NLP models primarily employed supervised learning from large amounts of manually labeled data. This reliance on supervised learning limited their use of datasets that were not well-annotated, in addition to making it prohibitively expensive and time-consuming to train extremely large models; many languages (such as Swahili or Haitian Creole) are difficult to translate and interpret using such models due to a lack of available text for corpus-building. In contrast, a GPT's "semi-supervised" approach involved two stages: an unsupervised generative "pre ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Large Language Model

A large language model (LLM) is a language model trained with self-supervised machine learning on a vast amount of text, designed for natural language processing tasks, especially language generation. The largest and most capable LLMs are generative pretrained transformers (GPTs), which are largely used in generative chatbots such as ChatGPT or Gemini. LLMs can be fine-tuned for specific tasks or guided by prompt engineering. These models acquire predictive power regarding syntax, semantics, and ontologies inherent in human language corpora, but they also inherit inaccuracies and biases present in the data they are trained in. History Before the emergence of transformer-based models in 2017, some language models were considered large relative to the computational and data constraints of their time. In the early 1990s, IBM's statistical models pioneered word alignment techniques for machine translation, laying the groundwork for corpus-based language modeling. A sm ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Generative Pre-trained Transformer

A generative pre-trained transformer (GPT) is a type of large language model (LLM) and a prominent framework for generative artificial intelligence. It is an Neural network (machine learning), artificial neural network that is used in natural language processing by machines. It is based on the Transformer (deep learning architecture), transformer deep learning architecture, pre-trained on large data sets of unlabeled text, and able to generate novel human-like content. As of 2023, most LLMs had these characteristics and are sometimes referred to broadly as GPTs. The first GPT was introduced in 2018 by OpenAI. OpenAI has released significant #Foundation models, GPT foundation models that have been sequentially numbered, to comprise its "GPT-''n''" series. Each of these was significantly more capable than the previous, due to increased size (number of trainable parameters) and training. The most recent of these, GPT-4o, was released in May 2024. Such models have been the basis fo ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

OpenAI

OpenAI, Inc. is an American artificial intelligence (AI) organization founded in December 2015 and headquartered in San Francisco, California. It aims to develop "safe and beneficial" artificial general intelligence (AGI), which it defines as "highly autonomous systems that outperform humans at most economically valuable work". As a leading organization in the ongoing AI boom, OpenAI is known for the GPT family of large language models, the DALL-E series of text-to-image models, and a text-to-video model named Sora (text-to-video model), Sora. Its release of ChatGPT in November 2022 has been credited with catalyzing widespread interest in generative AI. The organization has a complex corporate structure. As of April 2025, it is led by the Nonprofit organization, non-profit OpenAI, Inc., Delaware General Corporation Law, registered in Delaware, and has multiple for-profit subsidiaries including OpenAI Holdings, LLC and OpenAI Global, LLC. Microsoft has invested US$13 billion ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

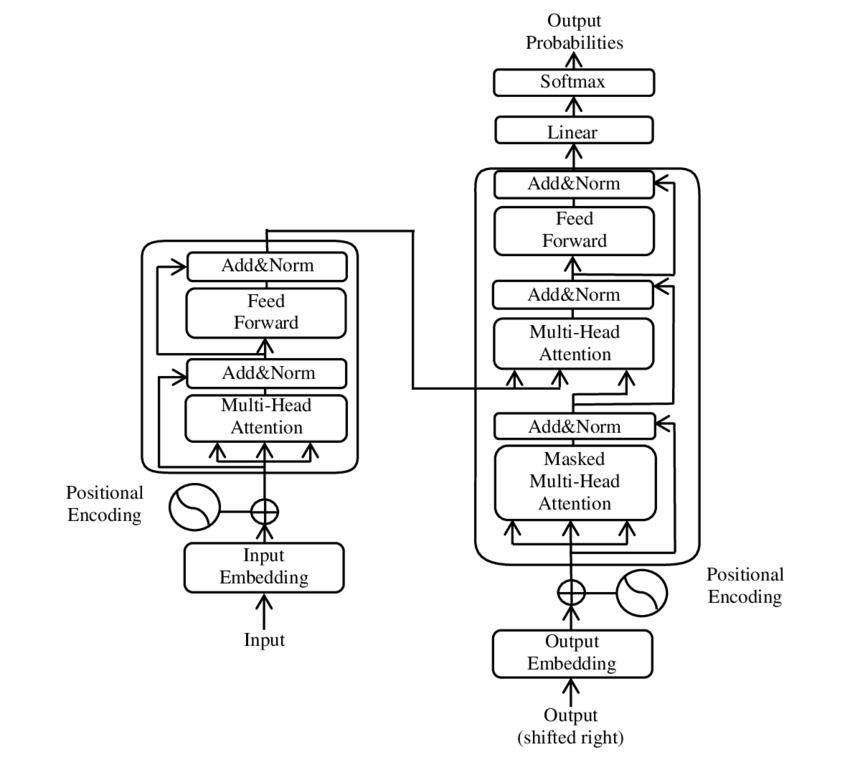

Transformer (machine Learning Model)

The transformer is a deep learning architecture based on the multi-head attention mechanism, in which text is converted to numerical representations called tokens, and each token is converted into a vector via lookup from a word embedding table. At each layer, each token is then contextualized within the scope of the context window with other (unmasked) tokens via a parallel multi-head attention mechanism, allowing the signal for key tokens to be amplified and less important tokens to be diminished. Transformers have the advantage of having no recurrent units, therefore requiring less training time than earlier recurrent neural architectures (RNNs) such as long short-term memory (LSTM). Later variations have been widely adopted for training large language models (LLM) on large (language) datasets. The modern version of the transformer was proposed in the 2017 paper " Attention Is All You Need" by researchers at Google. Transformers were first developed as an improvement ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

BERT (language Model)

Bidirectional encoder representations from transformers (BERT) is a language model introduced in October 2018 by researchers at Google. It learns to represent text as a sequence of vectors using self-supervised learning. It uses the Transformer (machine learning model), encoder-only transformer architecture. BERT dramatically improved the State of the art, state-of-the-art for large language model, large language models. , BERT is a ubiquitous baseline in natural language processing (NLP) experiments. BERT is trained by masked token prediction and next sentence prediction. As a result of this training process, BERT learns contextual, Latent space, latent representations of tokens in their context, similar to ELMo and GPT-2. It found applications for many natural language processing tasks, such as coreference resolution and polysemy resolution. It is an evolutionary step over ELMo, and spawned the study of "BERTology", which attempts to interpret what is learned by BERT. BERT wa ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Hugging Face

Hugging Face, Inc. is a French-American company based in List of tech companies in the New York metropolitan area, New York City that develops computation tools for building applications using machine learning. It is most notable for its Transformer (machine learning model), transformers Software libraries, library built for natural language processing applications and its platform that allows users to share machine learning models and Dataset (machine learning), datasets and showcase their work. History The company was founded in 2016 by French entrepreneurs Clément Delangue, Julien Chaumond, and Thomas Wolf in New York City, originally as a company that developed a chatbot app targeted at teenagers. The company was named after the emoji. After Open-source software, open sourcing the model behind the chatbot, the company Lean startup, pivoted to focus on being a platform for machine learning. In March 2021, Hugging Face raised US$40 million in a Series B funding round. On ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Natural Language Generation

Natural language generation (NLG) is a software process that produces natural language output. A widely cited survey of NLG methods describes NLG as "the subfield of artificial intelligence and computational linguistics that is concerned with the construction of computer systems that can produce understandable texts in English or other human languages from some underlying non-linguistic representation of information". While it is widely agreed that the output of any NLG process is text, there is some disagreement about whether the inputs of an NLG system need to be non-linguistic. Common applications of NLG methods include the production of various reports, for example weather and patient reports; image captions; and chatbots like ChatGPT. Automated NLG can be compared to the process humans use when they turn ideas into writing or speech. Psycholinguists prefer the term language production for this process, which can also be described in mathematical terms, or modeled in a com ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |

Reddit

Reddit ( ) is an American Proprietary software, proprietary social news news aggregator, aggregation and Internet forum, forum Social media, social media platform. Registered users (commonly referred to as "redditors") submit content to the site such as links, text posts, images, and videos, which are then voted up or down ("upvoted" or "downvoted") by other members. Posts are organized by subject into user-created boards called "subreddits". Submissions with more upvotes appear towards the top of their subreddit and, if they receive enough upvotes, ultimately on the site's front page. Reddit administrators moderate the communities. Moderation is also conducted by community-specific moderators, who are unpaid volunteers. It is operated by Reddit, Inc., based in San Francisco. As of February 2025, Reddit is the List of most-visited websites, ninth-most-visited website in the world. According to data provided by Similarweb, 51.75% of the website traffic comes from the United St ... [...More Info...] [...Related Items...] OR: [Wikipedia] [Google] [Baidu] |