
Demand Forecasting

Demand forecasting, also known as demand planning and sales forecasting (DP&SF), involves the prediction of the quantity of goods and services that will be demanded by consumers or business customers at a future point in time. More specifically, the methods of demand forecasting entail using predictive analytics to estimate customer demand in consideration of key economic conditions. This is an important tool in optimizing business profitability through efficient supply chain management. Demand forecasting methods are divided into two major categories, qualitative and quantitative methods:

* Qualitative methods are based on expert opinion and information gathered from the field. This method is mostly used in situations when there is minimal data available for analysis, such as when a business or product has recently been introduced to the market.
* Quantitative methods use available data and analytical tools in order to produce predictions.

Demand forecasting may be used in resou ...

Consumer

A consumer is a person or a group who intends to order or use purchased goods, products, or services primarily for personal, social, family, household and similar needs, and who is not directly related to entrepreneurial or business activities. The term most commonly refers to a person who purchases goods and services for personal use.

Rights: "Consumers, by definition, include us all", said President John F. Kennedy, offering his definition to the United States Congress on March 15, 1962. This speech became the basis for the creation of World Consumer Rights Day, now celebrated on March 15. In his speech, Kennedy outlined the integral responsibility of governments to help consumers exercise their rights, including:

* The right to safety: To be protected against the marketing of goods that are hazardous to health or life.
* The right to be informed: To be protected against fraudulent, deceitful, or grossly misleading information, adverti ...

Opportunity Cost

In microeconomic theory, the opportunity cost of a choice is the value of the best alternative forgone where, given limited resources, a choice needs to be made between several mutually exclusive alternatives. Assuming the best choice is made, it is the "cost" incurred by not enjoying the benefit that would have been had if the second best available choice had been taken instead. The New Oxford American Dictionary defines it as "the loss of potential gain from other alternatives when one alternative is chosen". As a representation of the relationship between scarcity and choice, the objective of opportunity cost is to ensure efficient use of scarce resources. It incorporates all associated costs of a decision, both explicit and implicit. Thus, opportunity costs are not restricted to monetary or financial costs: the real cost of output forgone, lost time, pleasure, or any other benefit that provides utility should also be considered an opportunity cost.

Types: Expl ...

Neural Network

A neural network is a group of interconnected units called neurons that send signals to one another. Neurons can be either biological cells or signal pathways. While individual neurons are simple, many of them together in a network can perform complex tasks. There are two main types of neural networks.

* In neuroscience, a biological neural network is a physical structure found in brains and complex nervous systems, a population of nerve cells connected by synapses.
* In machine learning, an artificial neural network is a mathematical model used to approximate nonlinear functions. Artificial neural networks are used to solve artificial intelligence problems.

In biology, a neural network is a population of biological neurons chemically connected to each other by synapses. A given neuron can be connected to hundreds of thousands of synapses. Each neuron sends and receives electrochemical signals called action potentials to its conne ...

Diffusion Of Innovation

Diffusion of innovations is a theory that seeks to explain how, why, and at what rate new ideas and technology spread. The theory was popularized by Everett Rogers in his book Diffusion of Innovations, first published in 1962. Rogers argues that diffusion is the process by which an innovation is communicated through certain channels over time among the participants in a social system. The origins of the diffusion of innovations theory are varied and span multiple disciplines. Rogers proposes that five main elements influence the spread of a new idea: the innovation itself, adopters, communication channels, time, and a social system. This process relies heavily on social capital. The innovation must be widely adopted in order to self-sustain. Within the rate of adoption, there is a point at which an innovation reaches critical mass. In 1989, management consultants working at the consulting firm Regis McKenna, Inc. theorized that this point lies at the boundary between the ea ...

Reference Class Forecasting

Reference class forecasting or comparison class forecasting is a method of predicting the future by looking at similar past situations and their outcomes. The theories behind reference class forecasting were developed by Daniel Kahneman and Amos Tversky. The theoretical work helped Kahneman win the Nobel Prize in Economics. Reference class forecasting is so named as it predicts the outcome of a planned action based on actual outcomes in a reference class of similar actions to that being forecast. Discussion of which reference class to use when forecasting a given situation is known as the reference class problem.

Overview: Kahneman and Tversky (Decision Research Technical Report PTR-1042-77-6) found that human judgment is generally optimistic due to overconfidence and insufficient consideration of distributional information about outcomes. People tend to underestimate the costs, completion times, and risks of planned actions, whereas they tend to overestimate the benefi ...

Group Method Of Data Handling

A group is a number of persons or things that are located, gathered, or classed together.

Groups of people:

* Cultural group, a group whose members share the same cultural identity
* Ethnic group, a group whose members share the same ethnic identity
* Religious group (other), a group whose members share the same religious identity
* Social group, a group whose members share the same social identity
* Tribal group, a group whose members share the same tribal identity
* Organization, an entity that has a collective goal and is linked to an external environment
* Peer group, an entity of three or more people with similar age, ability, experience, and interest
* Class (education), a group of people which attends a specific course or lesson at an educational institution

Social science:

* In-group and out-group
* Primary, secondary, and reference groups
* Social group
* Collectives

Philosophy and religion:

* Khandha, a Buddhist concept ...

Extrapolation

In mathematics, extrapolation is a type of estimation, beyond the original observation range, of the value of a variable on the basis of its relationship with another variable. It is similar to interpolation, which produces estimates between known observations, but extrapolation is subject to greater uncertainty and a higher risk of producing meaningless results. Extrapolation may also mean extension of a method, assuming similar methods will be applicable. Extrapolation may also apply to human experience to project, extend, or expand known experience into an area not known or previously experienced. By doing so, one makes an assumption of the unknown
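
As an illustration of estimating a value beyond the observed range, the following minimal sketch fits a straight line through two known observations and evaluates it outside them. The function name `linear_extrapolate` and the sample points are hypothetical, and a linear relationship is assumed purely for simplicity; the same formula performs interpolation when `x` lies between the two observations.

```python
def linear_extrapolate(x0, y0, x1, y1, x):
    """Estimate y at x from the line through (x0, y0) and (x1, y1).

    Assumes the relationship stays linear outside the observed range,
    which is exactly the assumption that makes extrapolation risky.
    """
    if x0 == x1:
        raise ValueError("the two observations must have distinct x values")
    slope = (y1 - y0) / (x1 - x0)
    return y0 + slope * (x - x0)


# Observations at x = 1 and x = 3; estimate at x = 5, outside that range.
estimate = linear_extrapolate(1, 2, 3, 6, 5)
print(estimate)
```

The further `x` lies from the observed range, the less the linearity assumption can be trusted.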

Discrete Event Simulation

A discrete-event simulation (DES) models the operation of a system as a (discrete) sequence of events in time. Each event occurs at a particular instant in time and marks a change of state in the system. Between consecutive events, no change in the system is assumed to occur; thus the simulation time can directly jump to the occurrence time of the next event, which is called next-event time progression. In addition to next-event time progression, there is also an alternative approach, called incremental time progression, where time is broken up into small time slices and the system state is updated according to the set of events/activities happening in the time slice. Because not every time slice has to be simulated, a next-event time simulation can typically run faster than a corresponding incremental time simulation. Both forms of DES contrast with continuous simulation in which the system state is changed continuously over time on the basis of a set of differential equations d ...
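
Next-event time progression can be sketched with a priority queue of timestamped events. The example below is a toy model, not a reference implementation: the function name `simulate`, the event kinds, and the fixed `service_time` are all hypothetical, and every arrival is assumed to be served immediately (no waiting line).

```python
import heapq


def simulate(arrival_times, service_time, until):
    """Run a toy discrete-event simulation with next-event time progression.

    Each arrival schedules its own departure `service_time` later.
    Returns a log of (time, kind, number_in_system) tuples.
    """
    # Pending events ordered by time; heapq pops the earliest one first.
    queue = [(t, "arrival") for t in arrival_times]
    heapq.heapify(queue)

    in_system = 0
    log = []
    while queue and queue[0][0] <= until:
        # Jump the clock straight to the next event; nothing changes in between.
        clock, kind = heapq.heappop(queue)
        if kind == "arrival":
            in_system += 1
            heapq.heappush(queue, (clock + service_time, "departure"))
        else:
            in_system -= 1
        log.append((clock, kind, in_system))
    return log


for entry in simulate([1.0, 2.0], service_time=1.5, until=10.0):
    print(entry)
```

The loop does work only at the four event times, whereas an incremental time progression would step through every time slice up to `until`.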

Game Theory

Game theory is the study of mathematical models of strategic interactions. It has applications in many fields of social science, and is used extensively in economics, logic, systems science and computer science. Initially, game theory addressed two-person zero-sum games, in which a participant's gains or losses are exactly balanced by the losses and gains of the other participant. In the 1950s, it was extended to the study of non-zero-sum games, and was eventually applied to a wide range of behavioral relations. It is now an umbrella term for the science of rational decision making in humans, animals, and computers. Modern game theory began with the idea of mixed-strategy equilibria in two-person zero-sum games and its proof by John von Neumann. Von Neumann's original proof used the Brouwer fixed-point theorem on continuous mappings into compact convex sets, which became a standard method in game theory and mathematical economics. His paper was f ...

Delphi Method

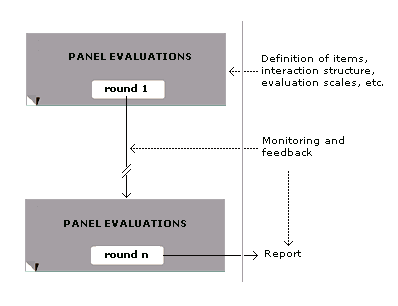

The Delphi method or Delphi technique (also known as Estimate-Talk-Estimate or ETE) is a structured communication technique or method, originally developed as a systematic, interactive forecasting method that relies on a panel of experts. Delphi has been widely used for business forecasting and has certain advantages over another structured forecasting approach, prediction markets. Delphi can also be used to help reach expert consensus and develop professional guidelines. It is used for such purposes in many health-related fields, including clinical medicine, public health, and research. Delphi is based on the principle that forecasts (or decisions) from a structured group of individuals are more accurate than those from unstructured groups. The experts answer questionnaires in two or more rounds. After each round, a facilitator or change agent provides an anonymised summary of the experts' forecasts from the previous round as well as the reasons they provided for their judgment ...

Marketing Research

Marketing research is the systematic gathering, recording, and analysis of qualitative and quantitative data about issues relating to marketing products and services. The goal is to identify and assess how changing elements of the marketing mix impacts customer behavior. This involves employing a data-driven marketing approach to specify the data required to address these issues, then designing the method for collecting information and implementing the data collection process. After analyzing the collected data, these results and findings, including their implications, are forwarded to those empowered to act on them. Market research, marketing research, and marketing are a sequence of business activities; sometimes these are handled informally. The field of marketing research is much older than that of market research. Although both involve consumers, marketing research is concerned specifically with marketing pro ...

Forecast Bias

A forecast bias occurs when there are consistent differences between actual outcomes and previously generated forecasts of those quantities; that is, forecasts may have a general tendency to be too high or too low. A normal property of a good forecast is that it is not biased (APICS Dictionary, 12th Edition, American Production and Inventory Control Society). As a quantitative measure, the "forecast bias" can be specified as a probabilistic or statistical property of the forecast error. A typical measure of the bias of a forecasting procedure is the arithmetic mean or expected value of the forecast errors, but other measures of bias are possible. For example, a median-unbiased forecast would be one where half of the forecasts are too low and half too high: see Bias of an estimator. In contexts where forecasts are being produced on a repetitive basis, the performance of the forecasting system may be monitored using a tracking signal, which provides an automatically ...
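
The mean-error measure of bias can be sketched in a few lines. Two assumptions in this sketch are not stated in the text above: the error is taken as forecast minus actual (so a positive bias means forecasts run high), and the tracking signal is computed with one commonly used formulation, the cumulative error divided by the mean absolute deviation. The function names and the sample figures are hypothetical.

```python
from statistics import mean


def forecast_errors(actuals, forecasts):
    """Per-period errors, assumed here to be forecast minus actual."""
    if len(actuals) != len(forecasts):
        raise ValueError("actuals and forecasts must have the same length")
    return [f - a for a, f in zip(actuals, forecasts)]


def mean_bias(actuals, forecasts):
    """Arithmetic mean of the forecast errors; 0 indicates no mean bias."""
    return mean(forecast_errors(actuals, forecasts))


def tracking_signal(actuals, forecasts):
    """Cumulative error over mean absolute deviation (assumed formulation)."""
    errors = forecast_errors(actuals, forecasts)
    mad = mean(abs(e) for e in errors)
    if mad == 0:
        return 0.0  # perfect forecasts: no deviation to normalise by
    return sum(errors) / mad


# Illustrative data in which every forecast overshoots the actual value.
actuals = [100, 110, 95, 105]
forecasts = [108, 115, 101, 109]
print(mean_bias(actuals, forecasts))
print(tracking_signal(actuals, forecasts))
```

Because every forecast in the sample overshoots, the mean error is positive and the tracking signal equals the number of periods, the largest value this formulation can take for a series of that length.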