
Garbage Collector (computing)

In computer science, garbage collection (GC) is a form of automatic memory management. The garbage collector attempts to reclaim memory that was allocated by the program but is no longer referenced; such memory is called garbage. Garbage collection was invented by American computer scientist John McCarthy around 1959 to simplify manual memory management in Lisp. Garbage collection relieves the programmer from doing manual memory management, where the programmer specifies what objects to de-allocate and return to the memory system and when to do so. Other, similar techniques include stack allocation, region inference, and memory ownership, and combinations thereof. Garbage collection may take a significant proportion of a program's total processing time, and affect performance as a result. Resources other than memory, such as network sockets, database handles, windows, file descriptors, and device descriptors, are not typically handled by garbage collection, but ...
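As a rough illustration of reclaiming memory that is no longer referenced, the Python sketch below builds two objects that reference each other, drops the program's references to them, and then asks the collector to run. The `Node` class and the variable names are hypothetical, and the behavior shown assumes CPython's `gc` module; other runtimes may reclaim memory at different times.

```python
import gc
import weakref


class Node:
    """Hypothetical object that may hold a reference to another Node."""

    def __init__(self):
        self.other = None


a, b = Node(), Node()
a.other, b.other = b, a        # the two nodes reference each other (a cycle)
probe = weakref.ref(a)         # a weak reference does not keep `a` alive

alive_before = probe() is not None   # still referenced by the program
del a, b                             # the program no longer references either node
gc.collect()                         # request a full collection pass
alive_after = probe() is not None    # assumption: CPython has reclaimed the cycle
```

The weak reference is used only to observe whether the object still exists; it plays no part in the collection itself.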

Structure And Interpretation Of Computer Programs P

A structure is an arrangement and organization of interrelated elements in a material object or system, or the object or system so organized. Material structures include man-made objects such as buildings and machines and natural objects such as biological organisms, minerals and chemicals. Abstract structures include data structures in computer science and musical form. Types of structure include a hierarchy (a cascade of one-to-many relationships), a network featuring many-to-many links, or a lattice featuring connections between components that are neighbors in space. Load-bearing: Buildings, aircraft, skeletons, anthills, beaver dams, bridges and salt domes are all examples of load-bearing structures. The results of construction are divided into buildings and non-building structures, and make up the infrastructure of a human society. Built str ...

Language Specification

In computer programming, a programming language specification (or standard or definition) is a documentation artifact that defines a programming language so that users and implementors can agree on what programs in that language mean. Specifications are typically detailed and formal, and primarily used by implementors, with users referring to them in case of ambiguity; the C++ specification is frequently cited by users, for instance, due to its complexity. Related documentation includes a programming language reference, which is intended expressly for users, and a programming language rationale, which explains why the specification is written as it is; these are typically more informal than a specification. Standardization: Not all major programming languages have specifications, and languages can exist and be popular for decades without a specification. A language may have one or more implementations, whose behavior acts as a de facto standard, without this behavior being d ...

Compiler

In computing, a compiler is a computer program that translates computer code written in one programming language (the source language) into another language (the target language). The name "compiler" is primarily used for programs that translate source code from a high-level programming language to a low-level programming language (e.g. assembly language, object code, or machine code) to create an executable program (Compilers: Principles, Techniques, and Tools by Alfred V. Aho, Ravi Sethi, Jeffrey D. Ullman, Second Edition, 2007). There are many different types of compilers which produce output in different useful forms. A cross-compiler produces code for a different CPU or operating system than the one on which the cross-compiler itself runs. A bootstrap compiler is often a temporary compiler, used for compiling a more permanent or better optimised compiler for a language. Related software ...

Heap (data Structure)

In computer science, a heap is a tree-based data structure that satisfies the heap property: In a max heap, for any given node C, if P is the parent node of C, then the key (the value) of P is greater than or equal to the key of C. In a min heap, the key of P is less than or equal to the key of C. The node at the "top" of the heap (with no parents) is called the root node. The heap is one maximally efficient implementation of an abstract data type called a priority queue, and in fact, priority queues are often referred to as "heaps", regardless of how they may be implemented. In a heap, the highest (or lowest) priority element is always stored at the root. However, a heap is not a sorted structure; it can be regarded as being partially ordered. A heap is a useful data structure when it is necessary to repeatedly remove the object with the highest (or lowest) priority, or when insertions need to be interspersed wit ...
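The heap property can be checked mechanically. The sketch below assumes the common array encoding of a binary heap, in which the parent of index `i` sits at index `(i - 1) // 2`; that encoding and the helper name `is_min_heap` are illustrative assumptions, not part of the definition above. Python's standard `heapq` module is used to build a min heap and to remove the lowest key repeatedly.

```python
import heapq


def is_min_heap(keys):
    """Return True if every parent key is <= its child key (array-encoded binary tree)."""
    for child in range(1, len(keys)):
        parent = (child - 1) // 2
        if keys[parent] > keys[child]:
            return False
    return True


keys = [7, 3, 9, 1, 4]
heapq.heapify(keys)              # rearrange in place so the min-heap property holds
heap_ok = is_min_heap(keys)      # the root keys[0] now holds the smallest key

# Repeatedly removing the root yields the keys in ascending order,
# even though the heap itself is only partially ordered.
smallest_first = [heapq.heappop(keys) for _ in range(len(keys))]
print(smallest_first)            # [1, 3, 4, 7, 9]
```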

C++/CLI

C++/CLI is a variant of the C++ programming language, modified for Common Language Infrastructure. It has been part of Visual Studio 2005 and later, and provides interoperability with other .NET languages such as C#. Microsoft created C++/CLI to supersede Managed Extensions for C++. In December 2005, Ecma International published C++/CLI specifications as the ECMA-372 standard. Syntax changes: C++/CLI should be thought of as a language of its own (with a new set of keywords, for example), instead of the C++ superset-oriented Managed C++ (MC++) (whose non-standard keywords were styled like __gc or __value). Because of this, there are some major syntactic changes, especially related to the elimination of ambiguous identifiers and the addition of .NET-specific features. Many conflicting syntaxes, such as the multiple versions of operator new() in MC++, have been split: in C++/CLI, .NET reference types are created with the new keyword gcnew (i.e. garbage collected new()). Also, C++/CLI has introduced ...

Modula-3

Modula-3 is a programming language conceived as a successor to an upgraded version of Modula-2 known as Modula-2+. It has been influential in research circles (influencing the designs of languages such as Java, C#, Python and Nim), but it has not been adopted widely in industry. It was designed by Luca Cardelli, James Donahue, Lucille Glassman, Mick Jordan (previously at the Olivetti Software Technology Laboratory), Bill Kalsow and Greg Nelson at the Digital Equipment Corporation (DEC) Systems Research Center (SRC) and the Olivetti Research Center (ORC) in the late 1980s. Modula-3's main features are modularity, simplicity and safety while preserving the power of a systems-programming language. Modula-3 aimed to continue the Pascal tradition of type safety, while introducing new constructs for practical real-world programming. In particular, Modula-3 added support for generic programming (similar to templates), multithreading, exception handling, garbage collection, o ...

Ada (programming Language)

Ada is a structured, statically typed, imperative, and object-oriented high-level programming language, inspired by Pascal and other languages. It has built-in language support for design by contract (DbC), extremely strong typing, explicit concurrency, tasks, synchronous message passing, protected objects, and non-determinism. Ada improves code safety and maintainability by using the compiler to find errors rather than leaving them to surface as runtime errors. Ada is an international technical standard, jointly defined by the International Organization for Standardization (ISO) and the International Electrotechnical Commission (IEC). The standard, ISO/IEC 8652:2023, is informally called Ada 2022. Ada was originally designed by a team led by French computer scientist Jean Ichbiah of Honeywell under contract to the United States Department of Defense (DoD) from 1977 to 1983 to supersede over 450 programming languages then used by the DoD. Ada was named after Ada Lovelace (1815–185 ...

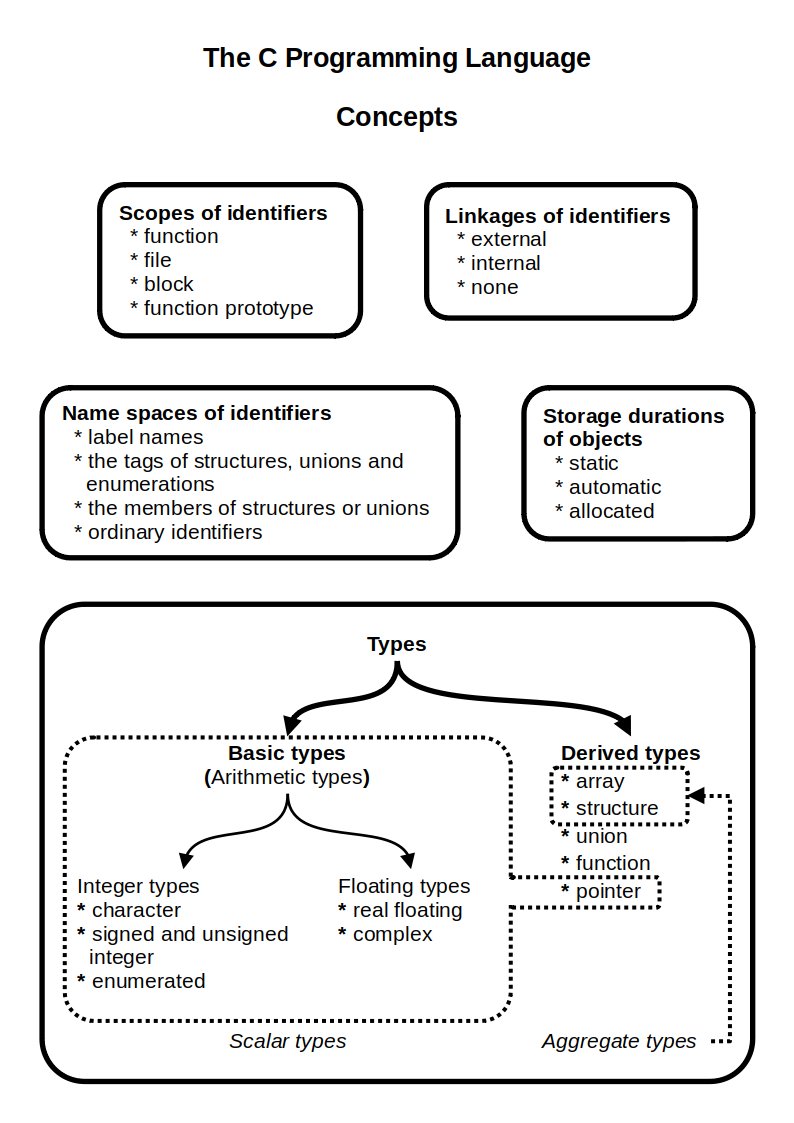

C (programming Language)

C (pronounced like the letter c) is a general-purpose programming language. It was created in the 1970s by Dennis Ritchie and remains very widely used and influential. By design, C's features cleanly reflect the capabilities of the targeted CPUs. It has found lasting use in operating systems code (especially in kernels), device drivers, and protocol stacks, but its use in application software has been decreasing. C is commonly used on computer architectures that range from the largest supercomputers to the smallest microcontrollers and embedded systems. A successor to the programming language B, C was originally developed at Bell Labs by Ritchie between 1972 and 1973 to construct utilities running on Unix. It was applied to re-implementing the kernel of the Unix operating system. During the 1980s, C gradually gained popularity. It has become one of the most widely used programming langu ...

Lambda Calculus

In mathematical logic, the lambda calculus (also written as λ-calculus) is a formal system for expressing computation based on function abstraction and application using variable binding and substitution. Untyped lambda calculus, the topic of this article, is a universal machine, a model of computation that can be used to simulate any Turing machine (and vice versa). It was introduced by the mathematician Alonzo Church in the 1930s as part of his research into the foundations of mathematics. In 1936, Church found a formulation which was logically consistent, and documented it in 1940. Lambda calculus consists of constructing lambda terms and performing reduction operations on them. A term is defined as any valid lambda calculus expression. In the simplest form of lambda calculus, terms are built using only the following rules: 1. x: A ...
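To give a feel for computing with nothing but function abstraction and application, the sketch below encodes natural numbers as Church numerals using Python lambdas. The encoding and the names `zero`, `succ`, `add`, and `to_int` are illustrative choices, not taken from the text above, and Python's eager evaluation only approximates lambda-calculus reduction.

```python
# Church numerals: the number n is the function that applies f to x n times.
zero = lambda f: lambda x: x
succ = lambda n: lambda f: lambda x: f(n(f)(x))               # one more application of f
add = lambda m: lambda n: lambda f: lambda x: m(f)(n(f)(x))   # m applications after n


def to_int(n):
    """Convert a Church numeral to a Python int by counting applications."""
    return n(lambda k: k + 1)(0)


two = succ(succ(zero))
three = succ(two)
print(to_int(add(two)(three)))   # 5
```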

Scripting Language

In computing, a script is a relatively short and simple set of instructions that typically automate an otherwise manual process. The act of writing a script is called scripting. A scripting language or script language is a programming language that is used for scripting. Originally, scripting was limited to automating an operating system shell and languages were relatively simple. Today, scripting is more pervasive and some languages include modern features that allow them to be used for application development as well as scripting. Overview: A scripting language can be a general purpose language or a domain-specific language for a particular environment. When embedded in an application, it may be called an extension language. A scripting language is sometimes referred to as a very high-level programming language if it operates at a high level of abstraction, or as a control language, particularly for job control languages on mainframes. The te ...

Go (programming Language)

Go is a high-level, general-purpose programming language that is statically typed and compiled. It is known for the simplicity of its syntax and the efficiency of development that it enables by the inclusion of a large standard library supplying many needs for common projects. It was designed at Google in 2007 by Robert Griesemer, Rob Pike, and Ken Thompson, and publicly announced in November 2009. It is syntactically similar to C, but also has memory safety, garbage collection, structural typing, and CSP-style concurrency. It is often referred to as Golang to avoid ambiguity and because of its former domain name, golang.org, but its proper name is Go. There are two major implementations: * The original, Self-hosting (compi ...

D (programming Language)

D, also known as dlang, is a multi-paradigm system programming language created by Walter Bright at Digital Mars and released in 2001. Andrei Alexandrescu joined the design and development effort in 2007. Though it originated as a re-engineering of C++, D is now a very different language. As it has developed, it has drawn inspiration from other high-level programming languages. Notably, it has been influenced by Java, Python, Ruby, C#, and Eiffel. The D language reference describes it as follows: Features: D is not source-compatible with C and C++ source code in general. However, any code that is legal in both C/C++ and D should behave in the same way. Like C++, D has closures, anonymous functions, compile-time function execution, design by contract, ranges, built-in container iteration concepts, and type inference. D's declaration, statement and expression syntaxes also closely match those of C++. Unlike C++, D also implements garbage collection, first cl ...