A Bellman equation, named after Richard E. Bellman, is a necessary condition for optimality associated with the mathematical optimization method known as dynamic programming. It writes the "value" of a decision problem at a certain point in time in terms of the payoff from some initial choices and the "value" of the remaining decision problem that results from those initial choices. This breaks a dynamic optimization problem into a sequence of simpler subproblems, as Bellman's "principle of optimality" prescribes. The equation applies to algebraic structures with a total ordering; for algebraic structures with a partial ordering, the generic Bellman's equation can be used.

The Bellman equation was first applied to engineering control theory and to other topics in applied mathematics, and subsequently became an important tool in economic theory, though the basic concepts of dynamic programming are prefigured in John von Neumann and Oskar Morgenstern's ''Theory of Games and Economic Behavior'' and Abraham Wald's ''sequential analysis''. The term "Bellman equation" usually refers to the dynamic programming equation (DPE) associated with discrete-time optimization problems. In continuous-time optimization problems, the analogous equation is a partial differential equation that is called the Hamilton–Jacobi–Bellman equation.

In discrete time, any multi-stage optimization problem can be solved by analyzing the appropriate Bellman equation. The appropriate Bellman equation can be found by introducing new state variables (state augmentation). However, the resulting augmented-state problem has a higher-dimensional state space than the original problem, which can render the augmented problem intractable due to the "curse of dimensionality". Alternatively, it has been shown that if the cost function of the multi-stage optimization problem satisfies a "backward separable" structure, then the appropriate Bellman equation can be found without state augmentation.

Analytical concepts in dynamic programming

To understand the Bellman equation, several underlying concepts must be understood. First, any optimization problem has some objective: minimizing travel time, minimizing cost, maximizing profits, maximizing utility, etc. The mathematical function that describes this objective is called the ''objective function''.

Dynamic programming breaks a multi-period planning problem into simpler steps at different points in time. Therefore, it requires keeping track of how the decision situation is evolving over time. The information about the current situation that is needed to make a correct decision is called the "state". For example, to decide how much to consume and spend at each point in time, people would need to know (among other things) their initial wealth. Therefore, wealth would be one of their ''state variables'', but there would probably be others.

The variables chosen at any given point in time are often called the ''control variables''. For instance, given their current wealth, people might decide how much to consume now. Choosing the control variables now may be equivalent to choosing the next state; more generally, the next state is affected by other factors in addition to the current control. For example, in the simplest case, today's wealth (the state) and consumption (the control) might exactly determine tomorrow's wealth (the new state), though typically other factors will affect tomorrow's wealth too.

The dynamic programming approach describes the optimal plan by finding a rule that tells what the controls should be, given any possible value of the state. For example, if consumption (''c'') depends ''only'' on wealth (''W''), we would seek a rule that gives consumption as a function of wealth. Such a rule, determining the controls as a function of the states, is called a ''policy function''.

Finally, by definition, the optimal decision rule is the one that achieves the best possible value of the objective. For example, if someone chooses consumption, given wealth, in order to maximize happiness (assuming happiness ''H'' can be represented by a mathematical function, such as a utility function, and is something defined by wealth), then each level of wealth will be associated with some highest possible level of happiness, ''H''(''W''). The best possible value of the objective, written as a function of the state, is called the ''value function''.

Bellman showed that a dynamic optimization

Mathematical optimization (alternatively spelled ''optimisation'') or mathematical programming is the selection of a best element, with regard to some criteria, from some set of available alternatives. It is generally divided into two subfiel ...

problem in discrete time

In mathematical dynamics, discrete time and continuous time are two alternative frameworks within which variables that evolve over time are modeled.

Discrete time

Discrete time views values of variables as occurring at distinct, separate "poi ...

can be stated in a recursive, step-by-step form known as backward induction

Backward induction is the process of determining a sequence of optimal choices by reasoning from the endpoint of a problem or situation back to its beginning using individual events or actions. Backward induction involves examining the final point ...

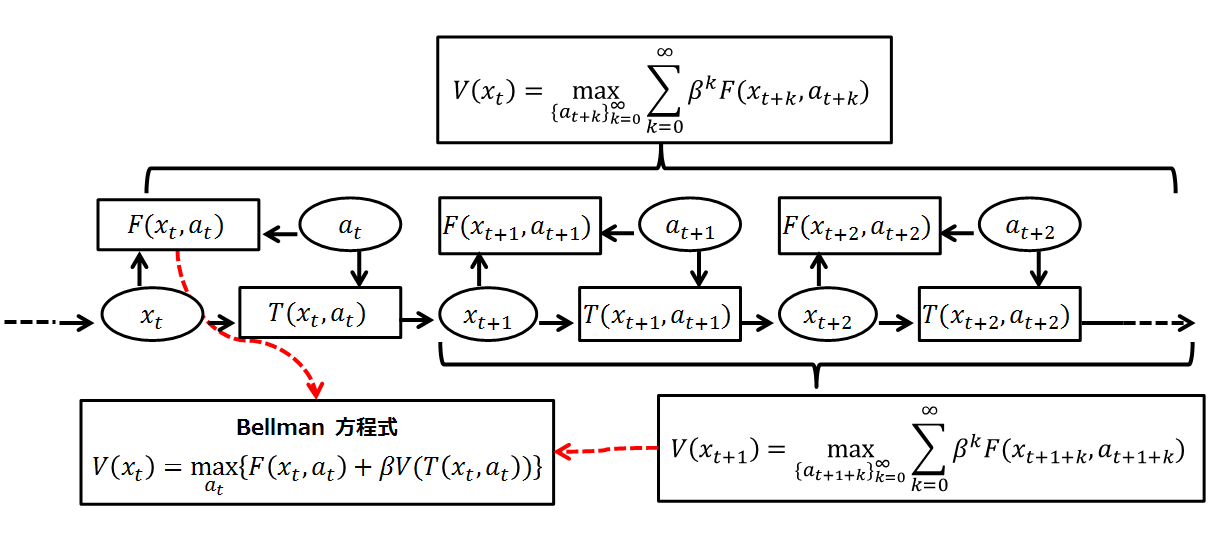

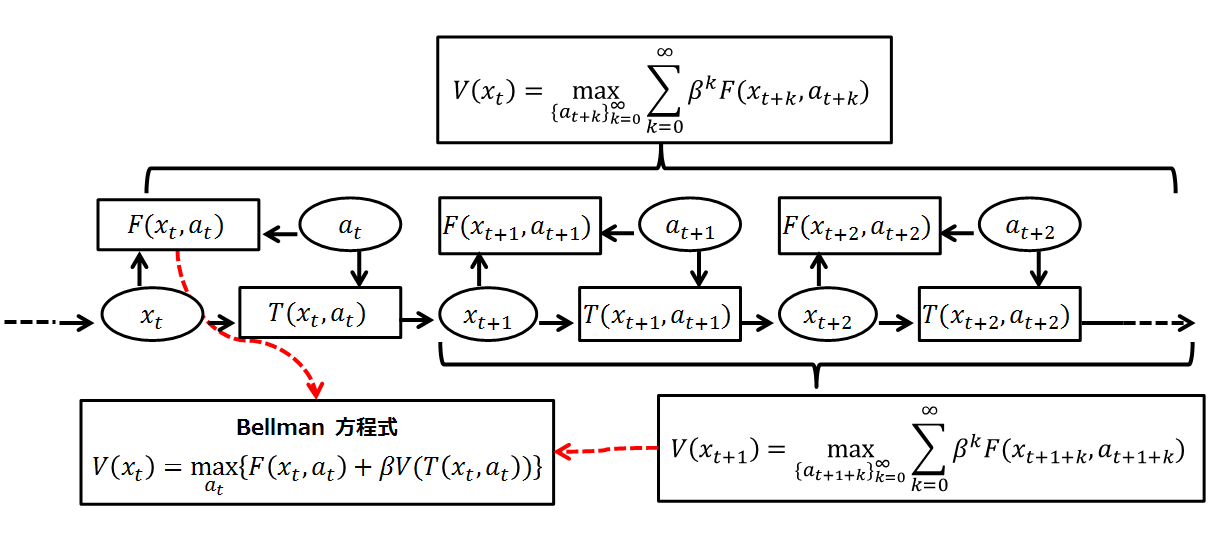

by writing down the relationship between the value function in one period and the value function in the next period. The relationship between these two value functions is called the "Bellman equation". In this approach, the optimal policy in the last time period is specified in advance as a function of the state variable's value at that time, and the resulting optimal value of the objective function is thus expressed in terms of that value of the state variable. Next, the next-to-last period's optimization involves maximizing the sum of that period's period-specific objective function and the optimal value of the future objective function, giving that period's optimal policy contingent upon the value of the state variable as of the next-to-last period decision. This logic continues recursively back in time, until the first period decision rule is derived, as a function of the initial state variable value, by optimizing the sum of the first-period-specific objective function and the value of the second period's value function, which gives the value for all the future periods. Thus, each period's decision is made by explicitly acknowledging that all future decisions will be optimally made.
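The backward recursion described above can be sketched in a few lines of Python. This is only an illustrative sketch under assumptions that are not in the text: wealth is restricted to an integer grid, there is no interest, the per-period payoff is the square root of consumption, and the function name `backward_induction` and its parameters are hypothetical.

```python
import math

def backward_induction(max_wealth, horizon, beta=0.95, utility=math.sqrt):
    """Solve a finite-horizon consumption problem on an integer wealth grid.

    Returns (values, policies), where values[t][w] is the best achievable
    discounted payoff from period t onward with wealth w, and
    policies[t][w] is the consumption that achieves it.
    """
    # After the last period nothing remains to be decided, so the value is 0.
    next_value = [0.0] * (max_wealth + 1)
    values = [None] * horizon
    policies = [None] * horizon

    # Work from the last period back to the first.
    for t in reversed(range(horizon)):
        value_t, policy_t = [], []
        for w in range(max_wealth + 1):
            # Try every feasible consumption c and keep the best one:
            # this period's payoff plus the discounted value of what is left.
            best_c = max(
                range(w + 1),
                key=lambda c: utility(c) + beta * next_value[w - c],
            )
            value_t.append(utility(best_c) + beta * next_value[w - best_c])
            policy_t.append(best_c)
        values[t], policies[t] = value_t, policy_t
        next_value = value_t  # becomes the "future" value for period t - 1
    return values, policies

values, policies = backward_induction(max_wealth=10, horizon=3)
```

In the last period the computed rule consumes all remaining wealth, while earlier periods trade current consumption against the value carried forward in `next_value`, which is the role the Bellman equation plays in the text.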

Derivation

A dynamic decision problem

Let $x_t$ be the state at time $t$. For a decision that begins at time 0, we take as given the initial state $x_0$. At any time, the set of possible actions depends on the current state; we express this as $a_t \in \Gamma(x_t)$, where a particular action $a_t$ represents particular values for one or more control variables, and $\Gamma(x_t)$ is the set of actions available to be taken at state $x_t$. It is also assumed that the state changes from $x$ to a new state $T(x, a)$ when action $a$ is taken, and that the current payoff from taking action $a$ in state $x$ is $F(x, a)$. Finally, we assume impatience, represented by a discount factor $0 < \beta < 1$.

Under these assumptions, an infinite-horizon decision problem takes the following form:

: $V(x_0) = \max_{\{a_t\}_{t=0}^{\infty}} \sum_{t=0}^{\infty} \beta^t F(x_t, a_t),$

subject to the constraints

: $a_t \in \Gamma(x_t), \quad x_{t+1} = T(x_t, a_t), \quad \forall t = 0, 1, 2, \dots$

Notice that we have defined notation $V(x_0)$ to denote the optimal value that can be obtained by maximizing this objective function subject to the assumed constraints. This function is the ''value function''. It is a function of the initial state variable $x_0$, since the best value obtainable depends on the initial situation.

Bellman's principle of optimality

The dynamic programming method breaks this decision problem into smaller subproblems. Bellman's ''principle of optimality'' describes how to do this:

Principle of Optimality: An optimal policy has the property that whatever the initial state and initial decision are, the remaining decisions must constitute an optimal policy with regard to the state resulting from the first decision. (See Bellman, 1957, Chap. III.3.)

In computer science, a problem that can be broken apart like this is said to have optimal substructure. In the context of dynamic game theory, this principle is analogous to the concept of subgame perfect equilibrium, although what constitutes an optimal policy in this case is conditioned on the decision-maker's opponents choosing similarly optimal policies from their points of view.

As suggested by the ''principle of optimality'', we will consider the first decision separately, setting aside all future decisions (we will start afresh from time 1 with the new state $x_1$). Collecting the future decisions in brackets on the right, the above infinite-horizon decision problem is equivalent to:

: $\max_{a_0} \left\{ F(x_0, a_0) + \beta \left[ \max_{\{a_t\}_{t=1}^{\infty}} \sum_{t=1}^{\infty} \beta^{t-1} F(x_t, a_t) : a_t \in \Gamma(x_t), \; x_{t+1} = T(x_t, a_t), \; \forall t \geq 1 \right] \right\}$

subject to the constraints

: $a_0 \in \Gamma(x_0), \quad x_1 = T(x_0, a_0).$

Here we are choosing $a_0$, knowing that our choice will cause the time 1 state to be $x_1 = T(x_0, a_0)$. That new state will then affect the decision problem from time 1 on. The whole future decision problem appears inside the square brackets on the right.

The Bellman equation

So far it seems we have only made the problem uglier by separating today's decision from future decisions. But we can simplify by noticing that what is inside the square brackets on the right is ''the value'' of the time 1 decision problem, starting from state $x_1 = T(x_0, a_0)$.

Therefore, the problem can be rewritten as a recursive definition of the value function:

: $V(x_0) = \max_{a_0} \left\{ F(x_0, a_0) + \beta V(x_1) \right\},$ subject to the constraints: $a_0 \in \Gamma(x_0), \; x_1 = T(x_0, a_0).$

This is the Bellman equation. It may be simplified even further if the time subscripts are dropped and the value of the next state is plugged in:

: $V(x) = \max_{a \in \Gamma(x)} \left\{ F(x, a) + \beta V(T(x, a)) \right\}.$

The Bellman equation is classified as a functional equation, because solving it means finding the unknown function $V$, which is the ''value function''. Recall that the value function describes the best possible value of the objective, as a function of the state $x$. By calculating the value function, we will also find the function $a(x)$ that describes the optimal action as a function of the state; this is called the ''policy function''.

In a stochastic problem

In the deterministic setting, other techniques besides dynamic programming can be used to tackle the above optimal control problem. However, the Bellman equation is often the most convenient method of solving ''stochastic'' optimal control problems.

For a specific example from economics, consider an infinitely-lived consumer with initial wealth endowment $a_0$ at period $0$. They have an instantaneous utility function $u(c)$, where $c$ denotes consumption, and discount the next period's utility at a rate of $0 < \beta < 1$. Assume that what is not consumed in period $t$ carries over to the next period with interest rate $r$. Then the consumer's utility maximization problem is to choose a consumption plan $\{c_t\}$ that solves

: $\max \sum_{t=0}^{\infty} \beta^t u(c_t)$

subject to

: $a_{t+1} = (1 + r)(a_t - c_t), \quad c_t \geq 0,$

and

: $\lim_{t \to \infty} a_t \geq 0.$

The first constraint is the capital accumulation/law of motion specified by the problem, while the second constraint is a transversality condition that the consumer does not carry debt at the end of their life. The Bellman equation is

: $V(a) = \max_{0 \leq c \leq a} \left\{ u(c) + \beta V((1 + r)(a - c)) \right\}.$

Alternatively, one can treat the sequence problem directly using, for example, the Hamiltonian equations.
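One common numerical way to solve a Bellman equation of this kind is value iteration: start from a guess for the value function and apply the right-hand side repeatedly until it stops changing. The sketch below is illustrative only. It assumes a square-root utility function, a discount factor of 0.9, an interest rate of 5%, and a coarse wealth grid on which next-period wealth must lie; none of these choices come from the text, and `solve_consumption_problem` is a hypothetical name.

```python
import math

BETA = 0.9   # assumed discount factor
RATE = 0.05  # assumed constant interest rate

def solve_consumption_problem(grid, beta=BETA, r=RATE, utility=math.sqrt,
                              tol=1e-8, max_iter=10_000):
    """Iterate V(a) = max_{a'} { u(a - a'/(1+r)) + beta * V(a') } on a grid.

    The control is written as next-period wealth a' (restricted to the grid),
    which is equivalent to choosing consumption c = a - a'/(1+r).
    Returns (value, policy), where policy[i] is the grid index of a'.
    """
    n = len(grid)
    value = [0.0] * n
    policy = [0] * n
    for _ in range(max_iter):
        new_value = [0.0] * n
        for i, a in enumerate(grid):
            best, best_j = -math.inf, 0
            for j, a_next in enumerate(grid):
                c = a - a_next / (1.0 + r)
                if c < 0:
                    # The grid is increasing, so larger a' is infeasible too.
                    break
                candidate = utility(c) + beta * value[j]
                if candidate > best:
                    best, best_j = candidate, j
            new_value[i], policy[i] = best, best_j
        gap = max(abs(x - y) for x, y in zip(new_value, value))
        value = new_value
        if gap < tol:
            break
    return value, policy

grid = [0.1 * k for k in range(51)]  # wealth levels 0.0, 0.1, ..., 5.0
value, policy = solve_consumption_problem(grid)
consumption = [a - grid[j] / (1.0 + RATE) for a, j in zip(grid, policy)]
```

Because the discount factor is below one, each pass shrinks the distance to the fixed point, so the loop terminates; the resulting `policy` is a grid approximation of the policy function, and its accuracy depends on how fine the grid is.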

Now, if the interest rate varies from period to period, the consumer is faced with a stochastic optimization problem. Let the interest rate ''r'' follow a Markov process with probability transition function $Q(r, d\mu_r)$, where $d\mu_r$ denotes the probability measure governing the distribution of the interest rate next period if the current interest rate is $r$. In this model the consumer decides their current period consumption after the current period interest rate is announced.

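Rather than simply choosing a single sequence $\{c_t\}$, the consumer now must choose a sequence $\{c_t\}$ for each possible realization of the sequence $\{r_t\}$ in such a way that their lifetime expected utility is maximized:

: $\max_{\{c_t\}_{t=0}^{\infty}} \mathbb{E}\left( \sum_{t=0}^{\infty} \beta^t u(c_t) \right).$

The expectation $\mathbb{E}$ is taken with respect to the appropriate probability measure given by ''Q'' on the sequences of ''r''s. Because ''r'' is governed by a Markov process, dynamic programming simplifies the problem significantly. Then the Bellman equation is simply:

: $V(a, r) = \max_{0 \leq c \leq a} \left\{ u(c) + \beta \int V((1 + r)(a - c), r') \, Q(r, d\mu_r) \right\}.$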

Under some reasonable assumptions, the resulting optimal policy function ''g''(''a'',''r'') is measurable.

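For a general stochastic sequential optimization problem with Markovian shocks and where the agent is faced with their decision ''ex-post'', the Bellman equation takes a very similar form

: $V(x, z) = \max_{c \in \Gamma(x, z)} \left\{ F(x, c, z) + \beta \int V(T(x, c), z') \, d\mu_z(z') \right\},$

where $x$ is the state, $c$ the control, $z$ the current shock, and $\mu_z$ the distribution of next period's shock $z'$ given $z$.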
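To make the stochastic case concrete, the following sketch applies value iteration to a consumer facing an interest rate that follows a two-state Markov chain, so the integral in the Bellman equation becomes a finite weighted sum. Everything specific here is an assumption for illustration: the two rates, the transition matrix, the square-root utility, the wealth grid, and the name `solve_stochastic_consumption_problem`.

```python
import math

def solve_stochastic_consumption_problem(grid, rates, transition, beta=0.9,
                                         utility=math.sqrt, tol=1e-8,
                                         max_iter=10_000):
    """Iterate V(a, r) = max_{a'} { u(a - a'/(1+r)) + beta * E[V(a', r') | r] }.

    transition[k][m] is the probability of moving from rates[k] to rates[m].
    Returns (value, policy) indexed as [wealth index][rate index].
    """
    n_a, n_r = len(grid), len(rates)
    value = [[0.0] * n_r for _ in range(n_a)]
    policy = [[0] * n_r for _ in range(n_a)]
    for _ in range(max_iter):
        # expected[j][k] = E[V(grid[j], r') | current rate is rates[k]]
        expected = [
            [sum(transition[k][m] * value[j][m] for m in range(n_r))
             for k in range(n_r)]
            for j in range(n_a)
        ]
        new_value = [[0.0] * n_r for _ in range(n_a)]
        for i, a in enumerate(grid):
            for k, r in enumerate(rates):
                best, best_j = -math.inf, 0
                for j, a_next in enumerate(grid):
                    c = a - a_next / (1.0 + r)
                    if c < 0:
                        break  # larger a' would need negative consumption
                    candidate = utility(c) + beta * expected[j][k]
                    if candidate > best:
                        best, best_j = candidate, j
                new_value[i][k], policy[i][k] = best, best_j
        gap = max(abs(new_value[i][k] - value[i][k])
                  for i in range(n_a) for k in range(n_r))
        value = new_value
        if gap < tol:
            break
    return value, policy

grid = [0.1 * k for k in range(31)]        # wealth levels 0.0, ..., 3.0
rates = [0.02, 0.08]                       # assumed low and high interest rates
transition = [[0.9, 0.1], [0.2, 0.8]]      # assumed persistent Markov chain
value, policy = solve_stochastic_consumption_problem(grid, rates, transition)
```

The only change relative to a deterministic solver is that the continuation value is averaged over next period's rate using the row of `transition` for the current rate, which is what the Markov assumption buys: the current pair (wealth, rate) is a sufficient state.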

Solution methods

* The method of undetermined coefficients, also known as 'guess and verify', can be used to solve some infinite-horizon, autonomous Bellman equations.

* The Bellman equation can be solved by backwards induction, either analytically in a few special cases, or numerically on a computer. Numerical backwards induction is applicable to a wide variety of problems, but may be infeasible when there are many state variables, due to the curse of dimensionality. Approximate dynamic programming has been introduced by D. P. Bertsekas and J. N. Tsitsiklis with the use of artificial neural networks (multilayer perceptrons) for approximating the Bellman function. This is an effective mitigation strategy for reducing the impact of dimensionality by replacing the memorization of the complete function mapping for the whole space domain with the memorization of the sole neural network parameters. In particular, for continuous-time systems, an approximate dynamic programming approach that combines both policy iterations with neural networks was introduced. In discrete-time, an approach to solve the HJB equation combining value iterations and neural networks was introduced.

* By calculating the first-order conditions associated with the Bellman equation, and then using the envelope theorem to eliminate the derivatives of the value function, it is possible to obtain a system of difference equations or differential equations called the 'Euler equations'. Standard techniques for the solution of difference or differential equations can then be used to calculate the dynamics of the state variables and the control variables of the optimization problem.
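As a worked illustration of the first and third methods, consider the consumption problem $V(a) = \max_{0 \leq c \leq a} \{ u(c) + \beta V((1+r)(a-c)) \}$ with the assumed utility $u(c) = \ln c$. The logarithmic utility and the guessed functional form $A + B \ln a$ are assumptions chosen because they make the algebra close; they are not claimed to apply to other utility functions.

```latex
\begin{align*}
\text{Guess:}\quad
  & V(a) = A + B \ln a \\
\text{First-order condition:}\quad
  & \frac{1}{c} = \frac{\beta B}{a - c}
    \;\Longrightarrow\; c = \frac{a}{1 + \beta B} \\
\text{Match coefficients on } \ln a \text{:}\quad
  & B = 1 + \beta B
    \;\Longrightarrow\; B = \frac{1}{1 - \beta} \\
\text{Policy function:}\quad
  & c(a) = (1 - \beta)\, a \\
\text{Envelope theorem:}\quad
  & V'(a) = u'(c(a)) \\
\text{Euler equation:}\quad
  & u'(c_t) = \beta (1 + r)\, u'(c_{t+1})
    \;\Longrightarrow\; c_{t+1} = \beta (1 + r)\, c_t
\end{align*}
```

The two routes agree: under the policy $c(a) = (1-\beta)a$, next-period wealth is $(1+r)\beta a$, so next-period consumption is $\beta(1+r)$ times current consumption, exactly as the Euler equation requires. The constant $A$ is pinned down by matching the remaining terms and does not affect the policy.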

Applications in economics

The first known application of a Bellman equation in economics is due to Martin Beckmann and Richard Muth. Martin Beckmann also wrote extensively on consumption theory using the Bellman equation in 1959. His work influenced Edmund S. Phelps, among others.

A celebrated economic application of a Bellman equation is Robert C. Merton's seminal 1973 article on the intertemporal capital asset pricing model. (See also Merton's portfolio problem.) The solution to Merton's theoretical model, one in which investors choose between income today and future income or capital gains, is a form of Bellman's equation. Because economic applications of dynamic programming usually result in a Bellman equation that is a difference equation, economists refer to dynamic programming as a "recursive method", and a subfield of recursive economics is now recognized within economics.

Nancy Stokey, Robert E. Lucas, and Edward Prescott describe stochastic and nonstochastic dynamic programming in considerable detail, and develop theorems for the existence of solutions to problems meeting certain conditions. They also describe many examples of modeling theoretical problems in economics using recursive methods. This book led to dynamic programming being employed to solve a wide range of theoretical problems in economics, including optimal economic growth, resource extraction, principal–agent problems, public finance, business investment, asset pricing, factor supply, and industrial organization. Lars Ljungqvist and Thomas Sargent apply dynamic programming to study a variety of theoretical questions in monetary policy, fiscal policy, taxation, economic growth, search theory, and labor economics. Avinash Dixit and Robert Pindyck showed the value of the method for thinking about capital budgeting. Anderson adapted the technique to business valuation, including privately held businesses.

Using dynamic programming to solve concrete problems is complicated by informational difficulties, such as choosing the unobservable discount rate. There are also computational issues, the main one being the curse of dimensionality arising from the vast number of possible actions and potential state variables that must be considered before an optimal strategy can be selected. For an extensive discussion of computational issues, see Miranda and Fackler, and Meyn 2007 (whose appendix contains an abridged Meyn & Tweedie).

Example

In Markov decision processes, a Bellman equation is a recursion for expected rewards. For example, the expected reward for being in a particular state ''s'' and following some fixed policy $\pi$ has the Bellman equation:

: $V^{\pi}(s) = R(s, \pi(s)) + \gamma \sum_{s'} P(s' \mid s, \pi(s)) \, V^{\pi}(s').$

Here $R$ is the reward function, $P$ the transition probability, and $\gamma$ the discount factor. This equation describes the expected reward for taking the action prescribed by some policy $\pi$.

The equation for the optimal policy is referred to as the ''Bellman optimality equation'':

: $V^{\pi*}(s) = \max_{a} \left\{ R(s, a) + \gamma \sum_{s'} P(s' \mid s, a) \, V^{\pi*}(s') \right\},$

where $\pi*$ is the optimal policy and $V^{\pi*}$ refers to the value function of the optimal policy. The equation above describes the reward for taking the action giving the highest expected return.
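Both equations can be turned into simple fixed-point iterations. The sketch below evaluates a fixed policy and then iterates the optimality equation on a tiny two-state, two-action Markov decision process. The transition probabilities, rewards, and discount factor are made up for illustration, and the function names `policy_evaluation` and `mdp_value_iteration` are hypothetical.

```python
def policy_evaluation(P, R, policy, gamma=0.9, tol=1e-10):
    """Iterate V(s) = R[s][pi(s)] + gamma * sum_t P[s][pi(s)][t] * V(t)."""
    n = len(P)
    V = [0.0] * n
    while True:
        new_V = [
            R[s][policy[s]]
            + gamma * sum(P[s][policy[s]][t] * V[t] for t in range(n))
            for s in range(n)
        ]
        if max(abs(x - y) for x, y in zip(new_V, V)) < tol:
            return new_V
        V = new_V

def mdp_value_iteration(P, R, gamma=0.9, tol=1e-10):
    """Iterate the Bellman optimality equation; return (V, greedy policy)."""
    n, n_actions = len(P), len(P[0])

    def q(s, a, V):
        # Expected return of taking action a in state s, then following V.
        return R[s][a] + gamma * sum(P[s][a][t] * V[t] for t in range(n))

    V = [0.0] * n
    while True:
        new_V = [max(q(s, a, V) for a in range(n_actions)) for s in range(n)]
        converged = max(abs(x - y) for x, y in zip(new_V, V)) < tol
        V = new_V
        if converged:
            break
    policy = [max(range(n_actions), key=lambda a: q(s, a, V)) for s in range(n)]
    return V, policy

# Assumed toy MDP: action 0 = stay, action 1 = try to move to the other state.
# P[s][a][t] is the probability of landing in state t.
P = [
    [[1.0, 0.0], [0.2, 0.8]],
    [[0.0, 1.0], [0.8, 0.2]],
]
# R[s][a]: staying in state 1 pays 2, moving out of state 0 costs 1.
R = [
    [0.0, -1.0],
    [2.0, 0.0],
]

V_star, pi_star = mdp_value_iteration(P, R)
V_stay = policy_evaluation(P, R, policy=[0, 0])
V_check = policy_evaluation(P, R, policy=pi_star)
```

In this toy problem the greedy policy moves out of state 0 and stays in state 1, and evaluating that policy with `policy_evaluation` reproduces the value found by `mdp_value_iteration`, which illustrates that the optimal value function satisfies the fixed-policy Bellman equation for the optimal policy.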
