Uncorrelated

In probability theory and statistics, two real-valued random variables X and Y are said to be uncorrelated if their covariance, \operatorname{cov}[X,Y] = \operatorname{E}[XY] - \operatorname{E}[X]\operatorname{E}[Y], is zero. If two variables are uncorrelated, there is no linear relationship between them. Uncorrelated random variables have a Pearson correlation coefficient of zero whenever it exists; the exception is the trivial case in which either variable has zero variance (is a constant), where the correlation is undefined. In general, uncorrelatedness is not the same as orthogonality, except in the special case where at least one of the two random variables has an expected value of 0. In that case, the covariance is the expectation of the product, and X and Y are uncorrelated if and only if \operatorname{E}[XY] = 0. If X and Y are independent, with finite second moments, then they are uncorrelated. However, not all uncorrelated variables are independent.
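The last point (uncorrelated does not imply independent) can be checked exactly on a small discrete example. The sketch below assumes X uniform on {-1, 0, 1} and Y = X^2, a standard textbook choice rather than one taken from the entry above, and uses Python's `fractions` module so that every probability is exact.

```python
from fractions import Fraction
from itertools import product

# Assumed example: X is uniform on {-1, 0, 1} and Y = X**2.
support = [-1, 0, 1]
p = Fraction(1, 3)  # probability of each value of X


def expect(f):
    """Expected value of f(X) under the uniform distribution on `support`."""
    return sum(p * f(x) for x in support)


e_x = expect(lambda x: x)
e_y = expect(lambda x: x * x)
e_xy = expect(lambda x: x * x * x)  # E[XY] = E[X^3]
cov_xy = e_xy - e_x * e_y           # cov[X, Y] = E[XY] - E[X]E[Y]


# Independence would require P(X=a, Y=b) = P(X=a) P(Y=b) for every pair (a, b).
def joint(a, b):
    return sum(p for x in support if x == a and x * x == b)


def p_x(a):
    return sum(p for x in support if x == a)


def p_y(b):
    return sum(p for x in support if x * x == b)


independent = all(joint(a, b) == p_x(a) * p_y(b) for a, b in product(support, [0, 1]))

print("cov[X, Y] =", cov_xy)        # 0
print("independent:", independent)  # False, e.g. P(X=0, Y=0) = 1/3 but P(X=0)P(Y=0) = 1/9
```

Y is a deterministic function of X, so the two are about as dependent as variables can be, yet the covariance is exactly zero because the relationship is symmetric rather than linear.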

Variance

In probability theory and statistics, variance is the expectation of the squared deviation of a random variable from its population mean or sample mean. Variance is a measure of dispersion, meaning it measures how far a set of numbers is spread out from its average value. Variance has a central role in statistics, where ideas that use it include descriptive statistics, statistical inference, hypothesis testing, goodness of fit, and Monte Carlo sampling. Variance is an important tool in the sciences, where statistical analysis of data is common. The variance is the square of the standard deviation, the second central moment of a distribution, and the covariance of the random variable with itself, and it is often represented by \sigma^2, s^2, \operatorname{Var}(X), V(X), or \mathbb{V}(X). An advantage of variance as a measure of dispersion is that it is more amenable to algebraic manipulation than other measures of dispersion such as the expected absolute deviation.
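As a small worked example, the sketch below computes the variance of a fair six-sided die (an assumed example) two ways: directly from the definition \operatorname{E}[(X-\operatorname{E}[X])^2] and from the equivalent form \operatorname{E}[X^2]-\operatorname{E}[X]^2, then takes the square root to get the standard deviation.

```python
from fractions import Fraction
import math

# Assumed example: X is the outcome of a fair six-sided die.
faces = range(1, 7)
p = Fraction(1, 6)

mean = sum(p * x for x in faces)                          # E[X]
var_def = sum(p * (x - mean) ** 2 for x in faces)         # E[(X - E[X])^2]
var_shortcut = sum(p * x * x for x in faces) - mean ** 2  # E[X^2] - E[X]^2
std = math.sqrt(float(var_def))                           # variance = std ** 2

print(mean, var_def, var_shortcut)  # 7/2 35/12 35/12
print(round(std, 4))                # 1.7078
```

Both formulas agree exactly; the second is often more convenient by hand, although in floating point it can lose precision when the mean is large relative to the spread.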

Normally Distributed And Uncorrelated Does Not Imply Independent

In probability theory, although simple examples illustrate that linear uncorrelatedness of two random variables does not in general imply their independence, it is sometimes mistakenly thought that it does imply independence when the two random variables are normally distributed. This article demonstrates that the assumption of normal distributions does not have that consequence, although a multivariate normal distribution, including the bivariate normal distribution, does. To say that the pair (X,Y) of random variables has a bivariate normal distribution means that every linear combination aX+bY of X and Y for constant (i.e. not random) coefficients a and b (not both equal to zero) has a univariate normal distribution. In that case, if X and Y are uncorrelated then they are independent. However, it is possible for two random variables X and Y to be jointly distributed so that each one alone is marginally normally distributed and they are uncorrelated, yet they are not independent; examples ...

Correlation And Dependence

In statistics, correlation or dependence is any statistical relationship, whether causal or not, between two random variables or bivariate data. Although in the broadest sense "correlation" may indicate any type of association, in statistics it usually refers to the degree to which a pair of variables are linearly related. Familiar examples of dependent phenomena include the correlation between the height of parents and their offspring, and the correlation between the price of a good and the quantity consumers are willing to purchase, as depicted in the so-called demand curve. Correlations are useful because they can indicate a predictive relationship that can be exploited in practice. For example, an electrical utility may produce less power on a mild day based on the correlation between electricity demand and weather. In this example, there is a causal relationship, because extreme weather causes people to use more electricity for heating or cooling. ...

Covariance

In probability theory and statistics, covariance is a measure of the joint variability of two random variables. If the greater values of one variable mainly correspond with the greater values of the other variable, and the same holds for the lesser values (that is, the variables tend to show similar behavior), the covariance is positive. In the opposite case, when the greater values of one variable mainly correspond to the lesser values of the other (that is, the variables tend to show opposite behavior), the covariance is negative. The sign of the covariance therefore shows the tendency in the linear relationship between the variables. The magnitude of the covariance is not easy to interpret because it is not normalized and hence depends on the magnitudes of the variables. The normalized version of the covariance, the correlation coefficient, however, shows by its magnitude the strength of the linear relation. A distinction must be made between (1) the covariance of two random ...
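The sign and scale behaviour described above can be seen with the usual unbiased sample covariance, \frac{1}{n-1}\sum_i (x_i-\bar{x})(y_i-\bar{y}). The helper `sample_cov` and the data below are hypothetical, chosen only to make the sign and scaling visible.

```python
def sample_cov(xs, ys):
    """Unbiased sample covariance: sum((x - mean_x) * (y - mean_y)) / (n - 1)."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length sequences with at least 2 points")
    mx = sum(xs) / n
    my = sum(ys) / n
    return sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / (n - 1)


# Hypothetical data chosen only to show sign and scale.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
up = [2.0, 4.0, 5.0, 4.0, 6.0]    # tends to rise with x -> positive covariance
down = [6.0, 4.0, 5.0, 3.0, 1.0]  # tends to fall with x -> negative covariance

print(sample_cov(x, up))    # positive (2.0)
print(sample_cov(x, down))  # negative (-2.75)

# Rescaling both variables by 10 multiplies the covariance by 100,
# which is why its raw magnitude is hard to interpret.
print(sample_cov([10 * v for v in x], [10 * v for v in up]))
```

Dividing by the two sample standard deviations removes this dependence on units, which is exactly the normalization that yields the correlation coefficient.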

Stochastic Process

In probability theory and related fields, a stochastic or random process is a mathematical object usually defined as a family of random variables. Stochastic processes are widely used as mathematical models of systems and phenomena that appear to vary in a random manner. Examples include the growth of a bacterial population, an electrical current fluctuating due to thermal noise, or the movement of a gas molecule. Stochastic processes have applications in many disciplines such as biology, chemistry, ecology, neuroscience, physics, image processing, signal processing, control theory, information theory, computer science, cryptography and telecommunications. Furthermore, seemingly random changes in financial markets have motivated the extensive use of stochastic processes in finance. Applications and the study of phenomena have in turn inspired the proposal of new stochastic processes. Examples of such stochastic processes include the Wiener process or Brownian motion ...
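To make "a family of random variables" concrete, the sketch below draws one approximate sample path of a standard Wiener process on a time grid, using independent Gaussian increments W(t+dt) - W(t) ~ N(0, dt). The function name `wiener_path` and its parameters are hypothetical, and a discretized random walk is only an approximation of the continuous-time process.

```python
import math
import random


def wiener_path(n_steps, t_end=1.0, seed=None):
    """Approximate one sample path of a standard Wiener process on [0, t_end].

    The process is represented by the family {W(t_k)} of random variables
    on a uniform grid t_k = k * dt, built from independent N(0, dt) increments.
    """
    rng = random.Random(seed)
    dt = t_end / n_steps
    times = [k * dt for k in range(n_steps + 1)]
    path = [0.0]  # W(0) = 0
    for _ in range(n_steps):
        path.append(path[-1] + rng.gauss(0.0, math.sqrt(dt)))
    return times, path


times, w = wiener_path(1_000, seed=7)
print(len(w), w[0])  # 1001 0.0
```

Each call with a different seed yields a different realization; the process itself is the joint law of all the W(t_k), not any single path.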

Pearson Correlation Coefficient

In statistics, the Pearson correlation coefficient (PCC), also known as Pearson's r, the Pearson product-moment correlation coefficient (PPMCC), the bivariate correlation, or colloquially simply the correlation coefficient, is a measure of linear correlation between two sets of data. It is the ratio between the covariance of two variables and the product of their standard deviations, \rho_{X,Y} = \operatorname{cov}(X,Y)/(\sigma_X \sigma_Y); thus, it is essentially a normalized measurement of the covariance, such that the result always has a value between −1 and 1. As with covariance itself, the measure can only reflect a linear correlation of variables, and ignores many other types of relationships or correlations. As a simple example, one would expect the age and height of a sample of teenagers from a high school to have a Pearson correlation coefficient significantly greater than 0, but less than 1 (as 1 would represent an unrealistically perfect correlation). ...

Statistical Independence

Independence is a fundamental notion in probability theory, as in statistics and the theory of stochastic processes. Two events are independent, statistically independent, or stochastically independent if, informally speaking, the occurrence of one does not affect the probability of occurrence of the other or, equivalently, does not affect the odds. Similarly, two random variables are independent if the realization of one does not affect the probability distribution of the other. When dealing with collections of more than two events, two notions of independence need to be distinguished. The events are called pairwise independent if any two events in the collection are independent of each other, while mutual independence (or collective independence) of events means, informally speaking, that each event is independent of any combination of other events in the collection. A similar notion exists for collections of random variables. Mutual independence implies pairwise independence, but not vice versa.
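The gap between pairwise and mutual independence shows up in a classic two-coin example, assumed here for illustration: A = "first toss is heads", B = "second toss is heads", C = "the two tosses agree". The sketch enumerates the four equally likely outcomes with exact fractions.

```python
from fractions import Fraction
from itertools import combinations, product

# Two fair coin tosses; each of the 4 outcomes has probability 1/4.
outcomes = list(product("HT", repeat=2))
p = Fraction(1, len(outcomes))

# Events as predicates on an outcome (o[0] = first toss, o[1] = second toss).
events = {
    "A": lambda o: o[0] == "H",   # first toss is heads
    "B": lambda o: o[1] == "H",   # second toss is heads
    "C": lambda o: o[0] == o[1],  # the two tosses agree
}


def prob(*names):
    """Probability that all the named events occur together."""
    return sum(p for o in outcomes if all(events[k](o) for k in names))


pairwise = all(prob(a, b) == prob(a) * prob(b) for a, b in combinations(events, 2))
mutual = prob("A", "B", "C") == prob("A") * prob("B") * prob("C")

print("pairwise independent:", pairwise)  # True
print("mutually independent:", mutual)    # False: 1/4 != 1/8
```

Any two of the events carry no information about each other, but knowing both A and B determines C, which is why the triple fails the product rule.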

Cross-covariance Matrix

In probability theory and statistics, a cross-covariance matrix is a matrix whose element in the i, j position is the covariance between the i-th element of a random vector and the j-th element of another random vector. A random vector is a random variable with multiple dimensions. Each element of the vector is a scalar random variable. Each element has either a finite number of observed empirical values or a finite or infinite number of potential values. The potential values are specified by a theoretical joint probability distribution. Intuitively, the cross-covariance matrix generalizes the notion of covariance to multiple dimensions. The cross-covariance matrix of two random vectors \mathbf{X} and \mathbf{Y} is typically denoted by \operatorname{K}_{\mathbf{X}\mathbf{Y}} or \Sigma_{\mathbf{X}\mathbf{Y}}. For random vectors \mathbf{X} and \mathbf{Y}, each containing random elements whose expected value and variance exist, the cross-covariance matrix of \mathbf{X} and \mathbf{Y} is defined by \operatorname{K}_{\mathbf{X}\mathbf{Y}} = \operatorname{cov}(\mathbf{X},\mathbf{Y}) = \operatorname{E}[(\mathbf{X}-\mu_{\mathbf{X}})(\mathbf{Y}-\mu_{\mathbf{Y}})^{\mathsf{T}}], where \mu_{\mathbf{X}} = \operatorname{E}[\mathbf{X}] and \mu_{\mathbf{Y}} = \operatorname{E}[\mathbf{Y}] are the vectors containing the expected values of \mathbf{X} and \mathbf{Y}. ...
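A sample estimate of \operatorname{K}_{\mathbf{X}\mathbf{Y}} replaces the expectations with averages over paired observations. The sketch below assumes X is 2-dimensional and Y is 3-dimensional with made-up data, uses the N - 1 divisor, and checks that \operatorname{K}_{\mathbf{Y}\mathbf{X}} is the transpose of \operatorname{K}_{\mathbf{X}\mathbf{Y}}. The helper `cross_cov` is hypothetical; in practice a numerical library would usually be used.

```python
def cross_cov(xs, ys):
    """Sample cross-covariance matrix K_XY (shape m x n, divisor N - 1).

    xs: N observations of an m-dimensional random vector X.
    ys: N observations of an n-dimensional random vector Y, paired with xs.
    Entry [i][j] estimates cov(X_i, Y_j).
    """
    N = len(xs)
    m, n = len(xs[0]), len(ys[0])
    mu = [sum(x[i] for x in xs) / N for i in range(m)]  # estimate of E[X]
    nu = [sum(y[j] for y in ys) / N for j in range(n)]  # estimate of E[Y]
    return [[sum((x[i] - mu[i]) * (y[j] - nu[j]) for x, y in zip(xs, ys)) / (N - 1)
             for j in range(n)]
            for i in range(m)]


# Hypothetical paired observations: X is 2-dimensional, Y is 3-dimensional.
xs = [(1.0, 2.0), (2.0, 1.0), (3.0, 4.0), (4.0, 3.0)]
ys = [(0.0, 1.0, 2.0), (1.0, 1.0, 0.0), (2.0, 3.0, 1.0), (3.0, 2.0, 5.0)]

K_xy = cross_cov(xs, ys)
K_yx = cross_cov(ys, xs)
print(len(K_xy), len(K_xy[0]))  # 2 3
# K_YX is the transpose of K_XY.
print(all(K_xy[i][j] == K_yx[j][i] for i in range(2) for j in range(3)))  # True
```

With Y = X, the same function returns the ordinary (auto-)covariance matrix of X, whose diagonal holds the variances.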

Orthogonality

In mathematics, orthogonality is the generalization of the geometric notion of perpendicularity. By extension, orthogonality is also used to refer to the separation of specific features of a system. The term also has specialized meanings in other fields including art and chemistry. The word comes from the Ancient Greek ὀρθός (orthós), meaning "upright", and γωνία (gōnía), meaning "angle". The Ancient Greek ὀρθογώνιον (orthogṓnion) and Classical Latin orthogonium originally denoted a rectangle. Later, they came to mean a right triangle. In the 12th century, the post-classical Latin word orthogonalis came to mean a right angle or something related to a right angle. In physics, the term appears in several settings. In optics, polarization states are said to be orthogonal when they propagate independently of each other, as in vertical and horizontal linear polarization or right- and left-handed circular polarization. In special relativity, a time axis determined by a rapidity of motion is hyperbolic-orthogonal to a space axis ...
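For random variables, "orthogonal" means \operatorname{E}[XY] = 0, which is why the Uncorrelated entry distinguishes it from zero covariance. The sketch below uses a made-up two-point joint distribution, (X, Y) = (1, 2) or (2, -1) with probability 1/2 each, to show a pair that is orthogonal but correlated, and that centering X makes the two notions coincide.

```python
from fractions import Fraction

# Hypothetical joint distribution: (X, Y) is (1, 2) or (2, -1), each with probability 1/2.
pairs = [(1, 2), (2, -1)]
p = Fraction(1, 2)

e_xy = sum(p * x * y for x, y in pairs)  # E[XY]
e_x = sum(p * x for x, _ in pairs)       # E[X] = 3/2
e_y = sum(p * y for _, y in pairs)       # E[Y] = 1/2
cov = e_xy - e_x * e_y                   # cov[X, Y] = E[XY] - E[X]E[Y]

print("orthogonal (E[XY] = 0):", e_xy == 0)  # True
print("cov[X, Y] =", cov)                    # -3/4, so X and Y are correlated

# Replacing X by X - E[X] (mean 0) turns E[XY] into the covariance itself.
centered = [(x - e_x, y) for x, y in pairs]
print(sum(p * x * y for x, y in centered) == cov)  # True
```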

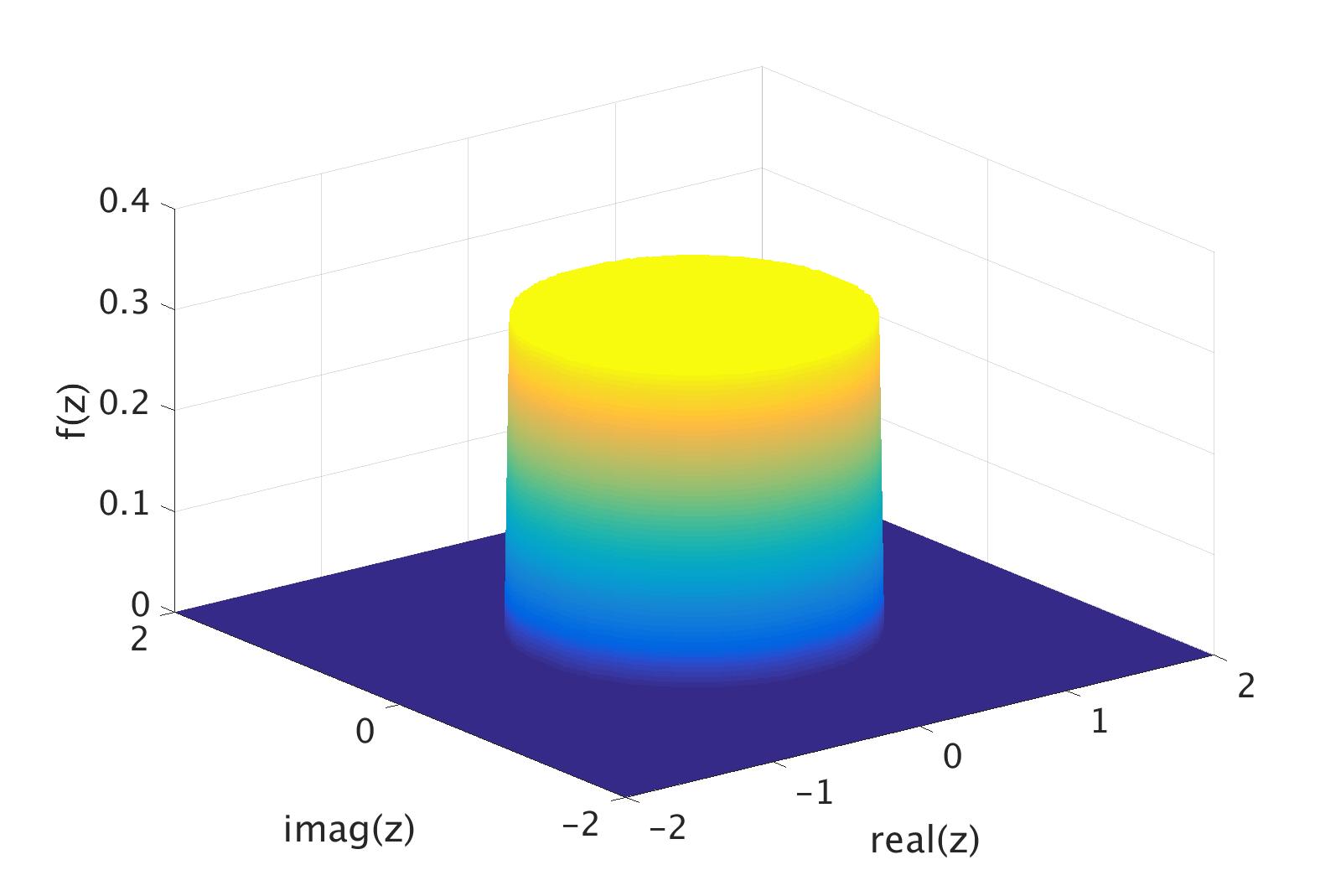

Complex Random Variable

In probability theory and statistics, complex random variables are a generalization of real-valued random variables to complex numbers, i.e. the possible values a complex random variable may take are complex numbers. Complex random variables can always be considered as pairs of real random variables: their real and imaginary parts. Therefore, the distribution of one complex random variable may be interpreted as the joint distribution of two real random variables. Some concepts of real random variables have a straightforward generalization to complex random variables, e.g. the definition of the mean of a complex random variable. Other concepts are unique to complex random variables. Applications of complex random variables are found in digital signal processing, quadrature amplitude modulation and information theory. A complex random variable Z on the probability space (\Omega,\mathcal{F},P) is a function Z \colon \Omega \rightarrow \mathbb{C} such that both its real part and its imaginary part are real random variables on (\Omega,\mathcal{F},P).
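As an illustration tied to the modulation application, the sketch below assumes Z is uniform on the four QPSK constellation points (±1 ± i). It computes the mean as E[Re Z] + iE[Im Z], the real-valued variance E[|Z - E[Z]|^2], and the pseudo-variance E[(Z - E[Z])^2], a quantity specific to complex random variables.

```python
# Assumption for illustration: Z is uniform on the four QPSK constellation points.
points = [1 + 1j, 1 - 1j, -1 + 1j, -1 - 1j]
p = 1 / len(points)

# The mean works componentwise: E[Z] = E[Re Z] + i E[Im Z].
mean = sum(p * z for z in points)
mean_re = sum(p * z.real for z in points)
mean_im = sum(p * z.imag for z in points)

# Variance E[|Z - E[Z]|^2] is real, using |w|^2 = Re(w)^2 + Im(w)^2.
variance = sum(p * ((z - mean).real ** 2 + (z - mean).imag ** 2) for z in points)

# Pseudo-variance E[(Z - E[Z])^2] is complex in general; it vanishes for this
# rotation-symmetric constellation.
pseudo_variance = sum(p * (z - mean) * (z - mean) for z in points)

print(mean, complex(mean_re, mean_im))  # both zero: the constellation is centred
print(variance)                         # average symbol energy
print(pseudo_variance)                  # zero for this symmetric constellation
```

Treating Z as the pair (Re Z, Im Z) gives the same mean; the variance and pseudo-variance together encode the 2x2 covariance matrix of that real pair.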

Random Vector

In probability and statistics, a multivariate random variable or random vector is a list of mathematical variables each of whose value is unknown, either because the value has not yet occurred or because there is imperfect knowledge of its value. The individual variables in a random vector are grouped together because they are all part of a single mathematical system; often they represent different properties of an individual statistical unit. For example, while a given person has a specific age, height and weight, the representation of these features of an unspecified person from within a group would be a random vector. Normally each element of a random vector is a real number. Random vectors are often used as the underlying implementation of various types of aggregate random variables, e.g. a random matrix, random tree, random sequence, stochastic process, etc. More formally, a multivariate random variable is a column vector \mathbf{X} = (X_1,\dots,X_n)^\mathsf{T} (or its transpose, which is a row vector) whose components are scalar-valued random variables on the same probability space. ...
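A random vector is summarized by its mean vector and covariance matrix. The sketch below assumes the illustrative vector \mathbf{X} = (D_1, D_2, D_1 + D_2) built from two fair dice, and computes both summaries exactly by enumerating the 36 equally likely outcomes.

```python
from fractions import Fraction
from itertools import product

# Illustrative random vector X = (D1, D2, D1 + D2) from two fair dice.
outcomes = [(d1, d2, d1 + d2) for d1, d2 in product(range(1, 7), repeat=2)]
p = Fraction(1, len(outcomes))  # each of the 36 outcomes has probability 1/36
dim = 3

mean_vec = [sum(p * o[i] for o in outcomes) for i in range(dim)]
cov_mat = [[sum(p * (o[i] - mean_vec[i]) * (o[j] - mean_vec[j]) for o in outcomes)
            for j in range(dim)]
           for i in range(dim)]

print([str(m) for m in mean_vec])  # ['7/2', '7/2', '7']
for row in cov_mat:
    print([str(c) for c in row])
# ['35/12', '0', '35/12']
# ['0', '35/12', '35/12']
# ['35/12', '35/12', '35/6']
```

The zero entries reflect the independence of the two dice, while the third component, being a sum of the first two, has variance 35/6 = 35/12 + 35/12.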