Study Heterogeneity

In statistics, (between-)study heterogeneity is a phenomenon that commonly occurs when attempting to undertake a meta-analysis. In a simplistic scenario, studies whose results are to be combined in the meta-analysis would all be undertaken in the same way and to the same experimental protocols. Differences between outcomes would only be due to measurement error (and studies would hence be homogeneous). Study heterogeneity denotes the variability in outcomes that goes beyond what would be expected (or could be explained) due to measurement error alone.

Introduction: Meta-analysis is a method used to combine the results of different trials in order to obtain a quantitative synthesis. The size of individual clinical trials is often too small to detect treatment effects reliably. Meta-analysis increases the power of statistical analyses by pooling the results of all available trials. As one tries to use meta-analysis to estimate ...
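One common way to check whether outcomes vary beyond what measurement error alone would explain is Cochran's Q statistic together with the derived I² proportion. The snippet below is a minimal sketch under stated assumptions: the effect sizes and within-study variances are hypothetical, and the function name `cochran_q` is illustrative rather than taken from any library.

```python
def cochran_q(effects, variances):
    """Return (pooled_effect, Q, I2) from per-study effects and within-study variances."""
    # Inverse-variance weights: more precise studies count for more.
    weights = [1.0 / v for v in variances]
    pooled = sum(w * y for w, y in zip(weights, effects)) / sum(weights)
    # Q sums the weighted squared deviations of each study from the pooled effect.
    q = sum(w * (y - pooled) ** 2 for w, y in zip(weights, effects))
    df = len(effects) - 1
    # I2 is the share of Q in excess of its degrees of freedom, floored at zero.
    i2 = max(0.0, (q - df) / q) if q > 0 else 0.0
    return pooled, q, i2


# Hypothetical effect estimates and variances for four studies.
effects = [0.10, 0.30, 0.35, 0.65]
variances = [0.01, 0.02, 0.015, 0.01]
pooled, q, i2 = cochran_q(effects, variances)
print(f"pooled={pooled:.3f}  Q={q:.2f}  I2={i2:.2f}")
```

If the studies were homogeneous, Q would be expected to be close to its degrees of freedom (number of studies minus one) and I² close to zero.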

Statistics

Statistics (from German: Statistik, "description of a state, a country") is the discipline that concerns the collection, organization, analysis, interpretation, and presentation of data. In applying statistics to a scientific, industrial, or social problem, it is conventional to begin with a statistical population or a statistical model to be studied. Populations can be diverse groups of people or objects such as "all people living in a country" or "every atom composing a crystal". Statistics deals with every aspect of data, including the planning of data collection in terms of the design of surveys and experiments. When census data (comprising every member of the target population) cannot be collected, statisticians collect data by developing specific experiment designs and survey samples. Representative sampling assures that inferences and conclusions can reasonably extend from the sample ...

Variance Component

In econometrics, a random effects model, also called a variance components model, is a statistical model where the model parameters are random variables. It is a kind of hierarchical linear model, which assumes that the data being analysed are drawn from a hierarchy of different populations whose differences relate to that hierarchy. A random effects model is a special case of a mixed model. Contrast this with the biostatistics definitions, as biostatisticians use "fixed" and "random" effects to respectively refer to the population-average and subject-specific effects (and where the latter are generally assumed to be unknown, latent variables).

Qualitative description: Random effect models assist in controlling for unobserved heterogeneity when the heterogeneity is constant over time and not correlated with independent variables. This constant can be removed from longitudinal data through differencing, since taking a first difference will remove any time invariant components of th ...
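The differencing argument can be illustrated with a small sketch. It assumes a hypothetical two-unit panel in which each observation is a unit-specific constant plus a common time trend; the names `first_difference`, `unit_effects`, and `panel` are illustrative only.

```python
def first_difference(series):
    """Return successive differences y[t] - y[t-1] of a time-ordered series."""
    return [later - earlier for earlier, later in zip(series, series[1:])]


# Hypothetical panel: y_it = alpha_i + 2 * t, where alpha_i is constant over time.
unit_effects = {"A": 5.0, "B": -3.0}
panel = {
    unit: [alpha + 2.0 * t for t in range(4)]
    for unit, alpha in unit_effects.items()
}

# After differencing, the unit-specific constants cancel and only the trend remains.
diffs = {unit: first_difference(ys) for unit, ys in panel.items()}
print(diffs)
```

Both units end up with the same differenced series even though their levels differ, which is the sense in which the time-invariant component has been removed.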

Variance

In probability theory and statistics, variance is the expected value of the squared deviation from the mean of a random variable. The standard deviation (SD) is obtained as the square root of the variance. Variance is a measure of dispersion, meaning it is a measure of how far a set of numbers is spread out from their average value. It is the second central moment of a distribution, and the covariance of the random variable with itself, and it is often represented by \sigma^2, s^2, \operatorname{Var}(X), V(X), or \mathbb{V}(X). An advantage of variance as a measure of dispersion is that it is more amenable to algebraic manipulation than other measures of dispersion such as the expected absolute deviation; for example, the variance of a sum of uncorrelated random variables is equal to the sum of their variances. A disadvantage of the variance for practical applications is that, unlike the standard deviation, its units differ from the random variable, which is why the standard devi ...
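The additivity property for uncorrelated variables can be checked numerically. The sketch below assumes two small hypothetical data vectors constructed so that their population covariance is exactly zero; it uses `statistics.pvariance` from the Python standard library.

```python
from statistics import pvariance

# Hypothetical data chosen so that x and y have zero population covariance.
x = [1.0, -1.0, 1.0, -1.0]
y = [1.0, 1.0, -1.0, -1.0]

mean_x = sum(x) / len(x)
mean_y = sum(y) / len(y)
covariance = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / len(x)

var_x = pvariance(x)
var_y = pvariance(y)
var_sum = pvariance([a + b for a, b in zip(x, y)])

# The standard deviation is the square root of the variance.
sd_x = var_x ** 0.5

print(covariance, var_x, var_y, var_sum, sd_x)
```

With correlated data the identity would pick up an extra term of twice the covariance, so the zero-covariance construction matters here.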

Prior Probability Distribution

A prior probability distribution of an uncertain quantity, simply called the prior, is its assumed probability distribution before some evidence is taken into account. For example, the prior could be the probability distribution representing the relative proportions of voters who will vote for a particular politician in a future election. The unknown quantity may be a parameter of the model or a latent variable rather than an observable variable. In Bayesian statistics, Bayes' rule prescribes how to update the prior with new information to obtain the posterior probability distribution, which is the conditional distribution of the uncertain quantity given new data. Historically, the choice of priors was often constrained to a conjugate family of a given likelihood function, so that it would result in a tractable posterior of the same family. The widespread availability of Markov chain Monte Carlo methods, however, has made this less of a concern. There are many ways to constru ...
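As an illustration of a conjugate family, the voter example can be sketched with a Beta prior on the proportion of supporters and a binomial likelihood for poll results, which yields a Beta posterior. The prior parameters and the poll counts below are hypothetical, and the function names are illustrative.

```python
def beta_binomial_update(alpha, beta, successes, trials):
    """Update a Beta(alpha, beta) prior with binomial data; returns posterior parameters."""
    return alpha + successes, beta + (trials - successes)


def beta_mean(alpha, beta):
    """Mean of a Beta(alpha, beta) distribution."""
    return alpha / (alpha + beta)


# Hypothetical weak prior centred on 50% support.
prior = (2.0, 2.0)
# Hypothetical poll: 60 of 100 respondents support the politician.
posterior = beta_binomial_update(*prior, successes=60, trials=100)

print("prior mean:", beta_mean(*prior))
print("posterior parameters:", posterior)
print("posterior mean:", beta_mean(*posterior))
```

Because the posterior stays in the Beta family, the update reduces to adding counts, which is what made conjugate priors attractive before simulation methods became widely available.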

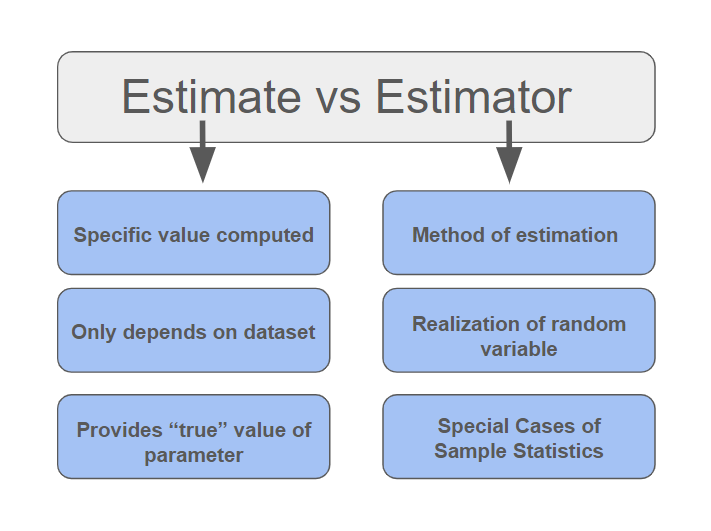

Estimator

In statistics, an estimator is a rule for calculating an estimate of a given quantity based on observed data: thus the rule (the estimator), the quantity of interest (the estimand) and its result (the estimate) are distinguished. For example, the sample mean is a commonly used estimator of the population mean. There are point and interval estimators. The point estimators yield single-valued results. This is in contrast to an interval estimator, where the result would be a range of plausible values. "Single value" does not necessarily mean "single number", but includes vector valued or function valued estimators. Estimation theory is concerned with the properties of estimators; that is, with defining properties that can be used to compare different estimators (different rules for creating estimates) for the same quantity, based on the same data. Such properties can be used to determine the best rules to use under given circumstances. Howeve ...
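The distinction between a point estimator and an interval estimator can be sketched as two functions applied to the same data. The sample values are hypothetical, and the interval uses a normal approximation, which is an assumption made here for simplicity rather than the only possible construction.

```python
from statistics import NormalDist, mean, stdev


def point_estimate(sample):
    """Point estimator of the population mean: the sample mean."""
    return mean(sample)


def interval_estimate(sample, confidence=0.95):
    """Interval estimator of the population mean using a normal approximation."""
    n = len(sample)
    center = mean(sample)
    standard_error = stdev(sample) / n ** 0.5
    z = NormalDist().inv_cdf(0.5 + confidence / 2.0)
    return center - z * standard_error, center + z * standard_error


# Hypothetical observed data.
sample = [4.1, 5.0, 5.6, 4.8, 5.3, 4.9, 5.2, 5.1]
low, high = interval_estimate(sample)
print("point estimate:", point_estimate(sample))
print("interval estimate:", (low, high))
```

Each function is a rule (an estimator); the numbers it returns for this particular sample are the estimates.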

Bayesian Inference

Bayesian inference is a method of statistical inference in which Bayes' theorem is used to calculate a probability of a hypothesis, given prior evidence, and update it as more information becomes available. Fundamentally, Bayesian inference uses a prior distribution to estimate posterior probabilities. Bayesian inference is an important technique in statistics, and especially in mathematical statistics. Bayesian updating is particularly important in the dynamic analysis of a sequence of data. Bayesian inference has found application in a wide range of activities, including science, engineering, philosophy, medicine, sport, and law. In the philosophy of decision theory, Bayesian inference is closely related to subjective probability, often called "Bayesian probability".

Introduction to Bayes' rule, formal explanation: Bayesian inference derives the posterior probability as a consequence of two antecedents: a prior probability and a "likelihood function" derive ...
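Bayesian updating over a sequence of data can be sketched for a discrete set of hypotheses. The example below assumes two hypothetical hypotheses about a coin ("fair" and "biased") with made-up likelihoods for observing heads; `bayes_update` is an illustrative name.

```python
def bayes_update(prior, likelihood):
    """Apply Bayes' theorem: posterior is proportional to prior times likelihood."""
    unnormalized = {h: prior[h] * likelihood[h] for h in prior}
    evidence = sum(unnormalized.values())
    return {h: p / evidence for h, p in unnormalized.items()}


# Hypothetical prior beliefs and per-hypothesis probabilities of observing heads.
prior = {"fair": 0.5, "biased": 0.5}
heads_likelihood = {"fair": 0.5, "biased": 0.8}

# Sequential updating: each posterior becomes the prior for the next observation.
posterior = prior
for _ in range(3):  # three heads observed in a row
    posterior = bayes_update(posterior, heads_likelihood)

print(posterior)
```

Updating one observation at a time gives the same result as a single update with the joint likelihood of all three observations, provided the observations are conditionally independent given the hypothesis.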

Frequentist

Frequentist inference is a type of statistical inference based in frequentist probability, which treats “probability” in equivalent terms to “frequency” and draws conclusions from sample data by means of emphasizing the frequency or proportion of findings in the data. Frequentist inference underlies frequentist statistics, on which the well-established methodologies of statistical hypothesis testing and confidence intervals are founded.

History of frequentist statistics: Frequentism is based on the presumption that statistics represent probabilistic frequencies. This view was primarily developed by Ronald Fisher and the team of Jerzy Neyman and Egon Pearson. Ronald Fisher contributed to frequentist statistics by developing the frequentist concept of "significance testing", which is the study of the significance of a measure of a statistic when compared to the hypothesis. Neyman and Pearson extended Fisher's ideas to apply to multiple hypotheses. They posed that the ratio ...

Null Hypothesis

The null hypothesis (often denoted H_0) is the claim in scientific research that the effect being studied does not exist. The null hypothesis can also be described as the hypothesis in which no relationship exists between two sets of data or variables being analyzed. If the null hypothesis is true, any experimentally observed effect is due to chance alone, hence the term "null". In contrast with the null hypothesis, an alternative hypothesis (often denoted H_A or H_1) is developed, which claims that a relationship does exist between two variables.

Basic definitions: The null hypothesis and the alternative hypothesis are types of conjectures used in statistical tests to make statistical inferences, which are formal methods of reaching conclusions and separating scientific claims from statistical noise. The statement being tested in a test of statistical significance is called the null hypothesis. The test of significance is designed to assess the strength of the e ...

Power Of A Test

In frequentist statistics, power is the probability of detecting a given effect (if that effect actually exists) using a given test in a given context. In typical use, it is a function of the specific test that is used (including the choice of test statistic and significance level), the sample size (more data tends to provide more power), and the effect size (effects or correlations that are large relative to the variability of the data tend to provide more power). More formally, in the case of a simple hypothesis test with two hypotheses, the power of the test is the probability that the test correctly rejects the null hypothesis (H_0) when the alternative hypothesis (H_1) is true. It is commonly denoted by 1-\beta, where \beta is the probability of making a type II error (a false negative) conditional on there being a true effect or association.

Background: Statistical testing uses data from samples to assess, or make inferences about, a statistical population. For ex ...
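How power depends on significance level, sample size, and effect size can be sketched for one simple case. The snippet assumes a one-sided z-test of a mean with known standard deviation, which is a simplification chosen for illustration; the function name `z_test_power` and the input values are hypothetical.

```python
from statistics import NormalDist


def z_test_power(effect, sigma, n, alpha=0.05):
    """Power 1 - beta of a one-sided z-test for a mean shift `effect` with known sigma."""
    std_normal = NormalDist()
    # Reject H_0 when the z statistic exceeds this critical value.
    critical = std_normal.inv_cdf(1.0 - alpha)
    # Under H_1 the z statistic is shifted by effect * sqrt(n) / sigma.
    shift = effect * n ** 0.5 / sigma
    return 1.0 - std_normal.cdf(critical - shift)


# Hypothetical scenario: true shift of 0.5 standard deviations.
for n in (10, 30, 100):
    print(n, round(z_test_power(effect=0.5, sigma=1.0, n=n), 4))
```

When the true effect is zero the function returns the significance level itself, since the only rejections are then false positives.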

William Gemmell Cochran

William Gemmell Cochran (15 July 1909 – 29 March 1980) was a prominent statistician. He was born in Scotland but spent most of his life in the United States. Cochran studied mathematics at the University of Glasgow and the University of Cambridge. He worked at Rothamsted Experimental Station from 1934 to 1939, when he moved to the United States. There he helped establish several departments of statistics. His longest spell in any one university was at Harvard, which he joined in 1957 and from which he retired in 1976.

Writings: Cochran wrote many articles and books. His books became standard texts:

* *Experimental Designs* (with Gertrude Mary Cox), 1950
* *Statistical Methods Applied to Experiments in Agriculture and Biology* by George W. Snedecor (Cochran contributed from the fifth (1956) edition)
* *Planning and Analysis of Observational Studies* (edited by Lincoln E. Moses and Frederick Mosteller ...

Pharmacokinetics

Pharmacokinetics (from Ancient Greek *pharmakon* "drug" and *kinetikos* "moving, putting in motion"; see chemical kinetics), sometimes abbreviated as PK, is a branch of pharmacology dedicated to describing how the body affects a specific substance after administration. The substances of interest include any chemical xenobiotic such as pharmaceutical drugs, pesticides, food additives, cosmetics, etc. It attempts to analyze chemical metabolism and to discover the fate of a chemical from the moment that it is administered up to the point at which it is completely eliminated from the body. Pharmacokinetics is based on mathematical modeling that places great emphasis on the relationship between drug plasma concentration and the time elapsed since the drug's administration. Pharmacokinetics is the study of how an organism affects the drug, whereas pharmacodynamics (PD) is the study of how the drug affects the organism. Both together influence dosing, benefit, and adverse effe ...

Estimand

An estimand is a quantity that is to be estimated in a statistical analysis. The term is used to distinguish the target of inference from the method used to obtain an approximation of this target (i.e., the estimator) and the specific value obtained from a given method and dataset (i.e., the estimate). For instance, a normally distributed random variable X has two defining parameters, its mean \mu and variance \sigma^2. A variance estimator, s^2 = \sum_{i=1}^{n} \left( x_i - \bar{x} \right)^2 / (n-1), yields an estimate of 7 for a particular data set x; then s^2 is called an estimator of \sigma^2, and \sigma^2 is called the estimand.

Definition: In relation to an estimator, an estimand is the outcome of different treatments of interest. It can formally be thought of as any quantity that is to be estimated in any type of experiment.

Overview: An estimand is closely linked to the purpose or objective of an analysis. It describes what is to be estimated based on the question of interes ...
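The three terms can be separated in a short sketch. The data set in the text is not recoverable, so the values below are a hypothetical data set chosen only because the variance estimator returns 7 for them, matching the estimate quoted above; `sample_variance` is an illustrative name.

```python
def sample_variance(values):
    """Estimator s^2: sum of squared deviations from the sample mean, divided by n - 1."""
    n = len(values)
    x_bar = sum(values) / n
    return sum((x - x_bar) ** 2 for x in values) / (n - 1)


# Estimand: the unknown population variance sigma^2 (not computable from the sample).
# Estimator: the rule implemented by sample_variance.
# Estimate: the number the rule returns for one concrete data set.
x = [2, 3, 7]  # hypothetical data, assumed for illustration
estimate = sample_variance(x)
print(estimate)  # 7.0
```

Applying the same estimator to a different sample would give a different estimate, while the estimand stays fixed.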