Reverse Causality

In econometrics, endogeneity broadly refers to situations in which an explanatory variable is correlated with the error term. The distinction between endogenous and exogenous variables originated in simultaneous equations models, where one separates variables whose values are determined by the model from variables that are predetermined; ignoring simultaneity in the estimation leads to biased estimates as it violates the exogeneity assumption of the Gauss–Markov theorem. The problem of endogeneity is often ignored by researchers conducting non-experimental research, and doing so precludes making policy recommendations. Instrumental variable techniques are commonly used to address this problem. Besides simultaneity, correlation between explanatory variables and the error term can arise when an unobserved or omitted variable is confounding both independent and dependent variables, or when independent variables are measured with error. Exogeneity versus endogeneity: In a stoch ...

Econometrics

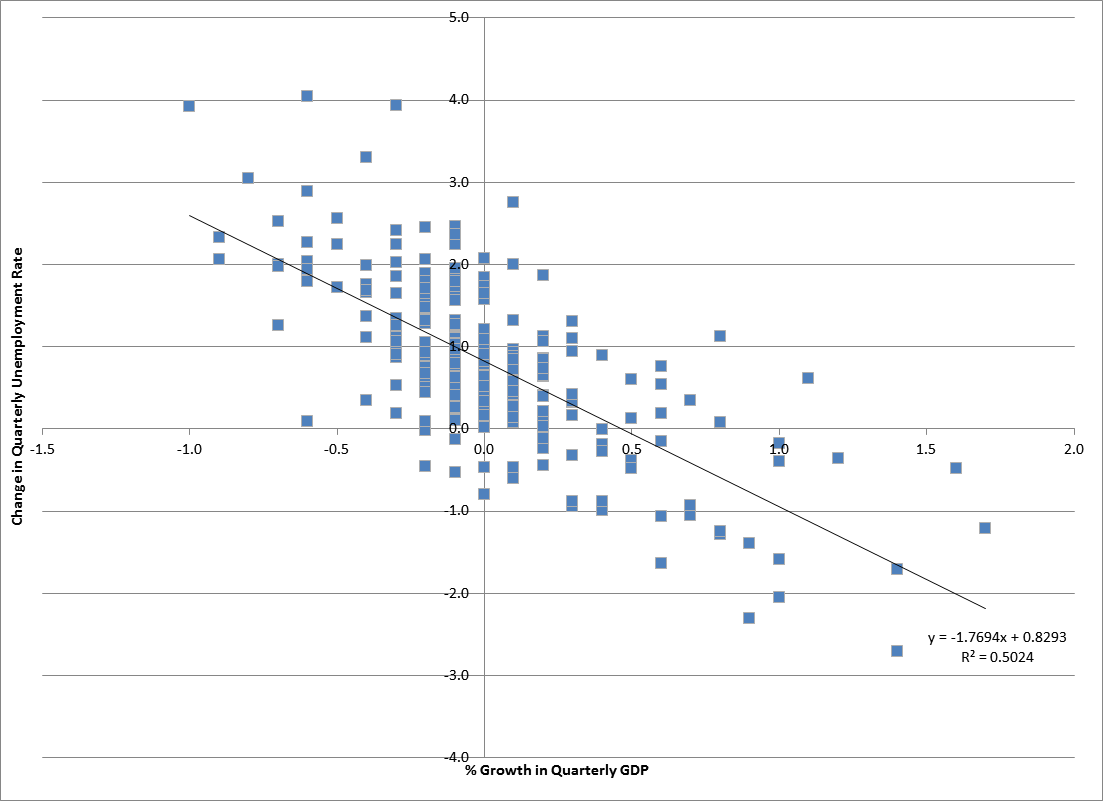

Econometrics is the application of statistical methods to economic data in order to give empirical content to economic relationships (M. Hashem Pesaran (1987), "Econometrics," ''The New Palgrave: A Dictionary of Economics'', v. 2, p. 8 [pp. 8–22]; reprinted in J. Eatwell ''et al.'', eds. (1990), ''Econometrics: The New Palgrave'', p. 1 [pp. 1–34]; abstract in The New Palgrave Dictionary of Economics, 2008 revision by J. Geweke, J. Horowitz, and H. P. Pesaran). More precisely, it is "the quantitative analysis of actual economic phenomena based on the concurrent development of theory and observation, related by appropriate methods of inference". An introductory economics textbook describes econometrics as allowing economists "to sift through mountains of data to extract simple relationships". Jan Tinbergen is one of the two founding fathers of econometrics. The other, Ragnar Frisch, also coined the term in the sense in which it is used toda ...

Confounding

In statistics, a confounder (also confounding variable, confounding factor, extraneous determinant or lurking variable) is a variable that influences both the dependent variable and independent variable, causing a spurious association. Confounding is a causal concept, and as such, cannot be described in terms of correlations or associations (Pearl, J. (2009), "Simpson's Paradox, Confounding, and Collapsibility," in ''Causality: Models, Reasoning and Inference'' (2nd ed.), New York: Cambridge University Press). The existence of confounders is an important quantitative explanation of why correlation does not imply causation. Confounds are threats to internal validity. Definition: Confounding is defined in terms of the data-generating model. Let ''X'' be some independent variable, and ''Y'' some dependent variable. To estimate the effect of ''X'' on ''Y'', the statistician must suppress the effects of extraneous variables that influence both ''X'' and ''Y''. We say that ''X'' ...
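As a rough illustration of suppressing an extraneous variable, the sketch below simulates a data-generating model in which a confounder ''Z'' drives both ''X'' and ''Y'' while ''X'' has no effect on ''Y''. The model, the variable names, and the helper functions `slope` and `residualize` are assumptions made for this example, not part of the excerpt. Adjustment here means removing the linear part of ''Z'' from both variables before regressing.

```python
import random

def slope(x, y):
    """Least-squares slope of y on x (regression with an intercept)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    return sxy / sxx

def residualize(v, z):
    """Remove from v the part that is linearly explained by z."""
    b = slope(z, v)
    n = len(v)
    mv, mz = sum(v) / n, sum(z) / n
    return [vi - mv - b * (zi - mz) for vi, zi in zip(v, z)]

rng = random.Random(0)  # fixed seed so the run is repeatable
n = 20_000

# Assumed data-generating model: Z influences X and Y; X does not influence Y.
z = [rng.gauss(0, 1) for _ in range(n)]
x = [zi + rng.gauss(0, 1) for zi in z]
y = [zi + rng.gauss(0, 1) for zi in z]

# Ignoring Z: the population slope is cov(X, Y) / var(X) = 1 / 2.
naive_slope = slope(x, y)

# Adjusting for Z: the population slope is 0, the true effect of X on Y.
adjusted_slope = slope(residualize(x, z), residualize(y, z))

print(f"naive slope:    {naive_slope:.3f}")
print(f"adjusted slope: {adjusted_slope:.3f}")
```

The adjustment only works here because the simulated model is known and ''Z'' is observed; with real data, deciding what to adjust for requires causal assumptions that the data alone cannot supply.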

Time Series

In mathematics, a time series is a series of data points indexed (or listed or graphed) in time order. Most commonly, a time series is a sequence taken at successive equally spaced points in time. Thus it is a sequence of discrete-time data. Examples of time series are heights of ocean tides, counts of sunspots, and the daily closing value of the Dow Jones Industrial Average. A time series is very frequently plotted via a run chart (which is a temporal line chart). Time series are used in statistics, signal processing, pattern recognition, econometrics, mathematical finance, weather forecasting, earthquake prediction, electroencephalography, control engineering, astronomy, communications engineering, and largely in any domain of applied science and engineering which involves temporal measurements. Time series ''analysis'' comprises methods for analyzing time series data in order to extract meaningful statistics and other characteristics of the data. Time series ''forecasting' ...

Structural Equation Modeling

Structural equation modeling (SEM) is a label for a diverse set of methods used by scientists in both experimental and observational research across the sciences, business, and other fields. It is used most in the social and behavioral sciences. A definition of SEM is difficult without reference to highly technical language, but a good starting place is the name itself. SEM involves the construction of a ''model'', to represent how various aspects of an observable or theoretical phenomenon are thought to be causally structurally related to one another. The ''structural'' aspect of the model implies theoretical associations between variables that represent the phenomenon under investigation. The postulated causal structuring is often depicted with arrows representing causal connections between variables (as in Figures 1 and 2) but these causal connections can be equivalently represented as equations. The causal structures imply that specific patterns of connections should appe ...

Heckman Correction

The Heckman correction is a statistical technique to correct bias from non-randomly selected samples or otherwise incidentally truncated dependent variables, a pervasive issue in quantitative social sciences when using observational data. Conceptually, this is achieved by explicitly modelling the individual sampling probability of each observation (the so-called selection equation) together with the conditional expectation of the dependent variable (the so-called outcome equation). The resulting likelihood function is mathematically similar to the tobit model for censored dependent variables, a connection first drawn by James Heckman in 1974. Heckman also developed a two-step control function approach to estimate this model, which avoids the computational burden of having to estimate both equations jointly, albeit at the cost of inefficiency. Heckman received the Nobel Memorial Prize in Economic Sciences in 2000 for his work in this field. Method: Statistical analyses based on ...

Instrumental Variable

In statistics, econometrics, epidemiology and related disciplines, the method of instrumental variables (IV) is used to estimate causal relationships when controlled experiments are not feasible or when a treatment is not successfully delivered to every unit in a randomized experiment. Intuitively, IVs are used when an explanatory variable of interest is correlated with the error term, in which case ordinary least squares and ANOVA give biased results. A valid instrument induces changes in the explanatory variable but has no independent effect on the dependent variable, allowing a researcher to uncover the causal effect of the explanatory variable on the dependent variable. Instrumental variable methods allow for consistent estimation when the explanatory variables (covariates) are correlated with the error terms in a regression model. Such correlation may occur when: (1) changes in the dependent variable change the value of at least one of the covariates ("reverse" causation), (2) ...
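A minimal simulation can make the contrast between ordinary least squares and an instrument concrete. The sketch below assumes a single regressor, a single instrument `z`, and an unobserved variable `u` that enters both the regressor and the error term; all names, coefficients, and the simple ratio-of-covariances estimator are choices made for this illustration rather than details taken from the excerpt.

```python
import random

def cov(a, b):
    """Sample covariance of two equal-length sequences (divides by n)."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    return sum((p - ma) * (q - mb) for p, q in zip(a, b)) / n

rng = random.Random(1)  # fixed seed so the run is repeatable
n = 20_000
beta = 2.0              # true causal effect of x on y (assumed)

z = [rng.gauss(0, 1) for _ in range(n)]  # instrument: moves x, unrelated to u
u = [rng.gauss(0, 1) for _ in range(n)]  # unobserved, part of the error term
x = [zi + ui + rng.gauss(0, 1) for zi, ui in zip(z, u)]
y = [beta * xi + 3.0 * ui + rng.gauss(0, 1) for xi, ui in zip(x, u)]

# OLS slope: x is correlated with the error (through u), so the estimate
# is pulled away from beta. Population value here: 2 + 3 * (1 / 3) = 3.
ols_slope = cov(x, y) / cov(x, x)

# Simple IV estimator for one regressor and one instrument.
# Population value here: cov(z, y) / cov(z, x) = 2 / 1 = 2.
iv_slope = cov(z, y) / cov(z, x)

print(f"OLS slope: {ols_slope:.3f}")
print(f"IV slope:  {iv_slope:.3f}")
```

The instrument works in this setup because it is constructed to be independent of `u`; in applied work that condition cannot be verified from the data and has to be argued.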

Consistent Estimator

In statistics, a consistent estimator or asymptotically consistent estimator is an estimator (a rule for computing estimates of a parameter ''θ''₀) having the property that as the number of data points used increases indefinitely, the resulting sequence of estimates converges in probability to ''θ''₀. This means that the distributions of the estimates become more and more concentrated near the true value of the parameter being estimated, so that the probability of the estimator being arbitrarily close to ''θ''₀ converges to one. In practice one constructs an estimator as a function of an available sample of size ''n'', and then imagines being able to keep collecting data and expanding the sample ''ad infinitum''. In this way one would obtain a sequence of estimates indexed by ''n'', and consistency is a property of what occurs as the sample size “grows to infinity”. If the sequence of estimates can be mathematically shown to converge in probability to the true value ...
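The idea of a sequence of estimates indexed by ''n'' can be sketched with the sample mean, which is a consistent estimator of a population mean. The true value `theta0`, the normal distribution, and the chosen sample sizes are assumptions for this example; a single simulated run only illustrates the behaviour and does not prove convergence in probability.

```python
import random

rng = random.Random(42)  # fixed seed so the run is repeatable
theta0 = 5.0             # true parameter value (assumed for the example)

# One long stream of data; each estimate uses the first n observations,
# mimicking a sample that keeps expanding.
draws = [rng.gauss(theta0, 1.0) for _ in range(100_000)]

sample_sizes = [10, 100, 1_000, 10_000, 100_000]
estimates = {n: sum(draws[:n]) / n for n in sample_sizes}
errors = {n: abs(est - theta0) for n, est in estimates.items()}

for n in sample_sizes:
    print(f"n={n:>7}  estimate={estimates[n]:.4f}  |error|={errors[n]:.4f}")
```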

Bias Of An Estimator

In statistics, the bias of an estimator (or bias function) is the difference between this estimator's expected value and the true value of the parameter being estimated. An estimator or decision rule with zero bias is called ''unbiased''. In statistics, "bias" is a property of an estimator. Bias is a distinct concept from consistency: consistent estimators converge in probability to the true value of the parameter, but may be biased or unbiased; see bias versus consistency for more. All else being equal, an unbiased estimator is preferable to a biased estimator, although in practice, biased estimators (with generally small bias) are frequently used. When a biased estimator is used, bounds of the bias are calculated. A biased estimator may be used for various reasons: because an unbiased estimator does not exist without further assumptions about a population; because an estimator is difficult to compute (as in unbiased estimation of standard deviation); because a biased estimato ...
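The definition can be checked exactly on a small case by enumerating every possible sample instead of simulating. The sketch below assumes a fair six-sided die as the population and samples of size 2 drawn with replacement, and compares two variance estimators: one dividing by ''n'' and one dividing by ''n'' − 1. The population and variable names are illustrative choices.

```python
from itertools import product
from statistics import pvariance, variance

population = [1, 2, 3, 4, 5, 6]         # faces of a fair die (assumed example)
true_variance = pvariance(population)   # 35/12

n = 2
# All 36 ordered samples of size n drawn with replacement, equally likely.
samples = list(product(population, repeat=n))

# Expected value of each estimator = average over all equally likely samples.
mean_divide_by_n = sum(pvariance(s) for s in samples) / len(samples)
mean_divide_by_n_minus_1 = sum(variance(s) for s in samples) / len(samples)

# Bias = expected value of the estimator minus the true parameter value.
bias_divide_by_n = mean_divide_by_n - true_variance
bias_divide_by_n_minus_1 = mean_divide_by_n_minus_1 - true_variance

print(f"bias when dividing by n:     {bias_divide_by_n:.4f}")
print(f"bias when dividing by n - 1: {bias_divide_by_n_minus_1:.4f}")
```

Dividing by ''n'' underestimates the variance on average by a factor of (''n'' − 1)/''n'', while dividing by ''n'' − 1 has zero bias in this setup.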

Ordinary Least Squares

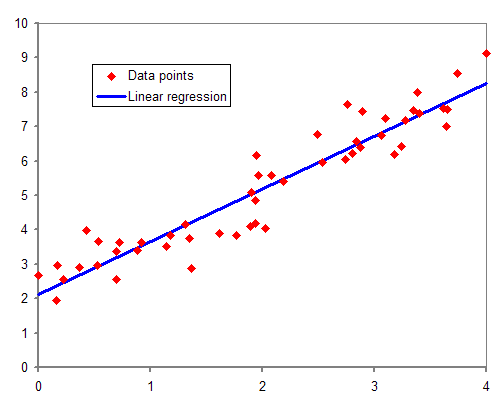

In statistics, ordinary least squares (OLS) is a type of linear least squares method for choosing the unknown parameters in a linear regression model (with fixed level-one effects of a linear function of a set of explanatory variables) by the principle of least squares: minimizing the sum of the squares of the differences between the observed dependent variable (values of the variable being observed) in the input dataset and the output of the (linear) function of the independent variable. Geometrically, this is seen as the sum of the squared distances, parallel to the axis of the dependent variable, between each data point in the set and the corresponding point on the regression surface; the smaller the differences, the better the model fits the data. The resulting estimator can be expressed by a simple formula, especially in the case of a simple linear regression, in which there is a single regressor on the right side of the regression equation. The OLS estimator is consiste ...
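For the simple linear regression case mentioned above, the closed-form estimator is short enough to write out directly. The function name `ols_simple` and the toy data are assumptions for this sketch; the slope is the sample covariance of ''x'' and ''y'' divided by the sample variance of ''x'', and the intercept makes the fitted line pass through the point of means.

```python
def ols_simple(x, y):
    """Return (intercept, slope) minimizing the sum of squared residuals
    for the model y = intercept + slope * x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope

# Toy data lying exactly on the line y = 1 + 2x, so the fit is exact.
x = [0.0, 1.0, 2.0, 3.0, 4.0]
y = [1.0, 3.0, 5.0, 7.0, 9.0]

intercept, slope = ols_simple(x, y)
residuals = [yi - (intercept + slope * xi) for xi, yi in zip(x, y)]
sum_squared_residuals = sum(r ** 2 for r in residuals)

print(intercept, slope, sum_squared_residuals)
```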

Regression Analysis

In statistical modeling, regression analysis is a set of statistical processes for estimating the relationships between a dependent variable (often called the 'outcome' or 'response' variable, or a 'label' in machine learning parlance) and one or more independent variables (often called 'predictors', 'covariates', 'explanatory variables' or 'features'). The most common form of regression analysis is linear regression, in which one finds the line (or a more complex linear combination) that most closely fits the data according to a specific mathematical criterion. For example, the method of ordinary least squares computes the unique line (or hyperplane) that minimizes the sum of squared differences between the true data and that line (or hyperplane). For specific mathematical reasons (see linear regression), this allows the researcher to estimate the conditional expectation (or population average value) of the dependent variable when the independent variables take on a given ...

Error Term

In mathematics and statistics, an error term is an additive type of error. Common examples include:
* errors and residuals in statistics, e.g. in linear regression
* the error term in numerical integration