P-value

In null-hypothesis significance testing, the ''p''-value is the probability of obtaining test results at least as extreme as the result actually observed, under the assumption that the null hypothesis is correct. A very small ''p''-value means that such an extreme observed outcome would be very unlikely ''under the null hypothesis''. Even though reporting ''p''-values of statistical tests is common practice in academic publications of many quantitative fields, misinterpretation and misuse of ''p''-values are widespread and have been a major topic in mathematics and metascience. In 2016, the American Statistical Association (ASA) made a formal statement that "''p''-values do not measure the probability that the studied hypothesis is true, or the probability that the data were produced by random chance alone" and that "a ''p''-value, or statistical significance, does not measure the size of an effect or the importance of a result" or "evidence regarding a model or hypothesis". That ...
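As a rough illustration of the definition above, the following sketch computes an exact one-sided ''p''-value for a coin-flip experiment. The scenario (20 flips, 15 heads, null hypothesis of a fair coin) and the function name `binomial_p_value_upper` are assumptions made for this example and do not come from the excerpt.

```python
from math import comb


def binomial_p_value_upper(successes: int, trials: int, null_prob: float = 0.5) -> float:
    """One-sided p-value: P(X >= successes) for X ~ Binomial(trials, null_prob).

    "At least as extreme" is taken here to mean "at least as many successes",
    which is an assumption about the direction of the alternative hypothesis.
    """
    return sum(
        comb(trials, k) * null_prob**k * (1 - null_prob) ** (trials - k)
        for k in range(successes, trials + 1)
    )


# Hypothetical data: 15 heads in 20 flips, null hypothesis "the coin is fair".
p = binomial_p_value_upper(15, 20)
print(f"p = {p:.4f}")  # p = 0.0207
```

The result says only that 15 or more heads would be unusual for a fair coin. It is not the probability that the coin is fair.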

P-hacking

Data dredging, also known as data snooping or ''p''-hacking, is the misuse of data analysis to find patterns in data that can be presented as statistically significant, thus dramatically increasing and understating the risk of false positives. This is done by performing many statistical tests on the data and reporting only those that come back with significant results. Thus data dredging is also often a misused or misapplied form of data mining. The process of data dredging involves testing multiple hypotheses using a single data set by exhaustively searching, perhaps for combinations of variables that might show a correlation, and perhaps for groups of cases or observations that show differences in their mean or in their breakdown by some other variable. Conventional tests of statistical significance are based on the probability that a particular result would arise if chance alone were at work, and necessarily accept some risk of Type I error, mistaken conclu ...
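The effect of running many tests and reporting only the significant ones can be sketched with a small simulation. It assumes that every null hypothesis is true, that the tests are independent, and that each ''p''-value is therefore uniform on [0, 1]. These simplifications, and the names `family_wise_error_rate` and `simulate_dredging`, are illustrative and not taken from the excerpt.

```python
import random


def family_wise_error_rate(num_tests: int, alpha: float = 0.05) -> float:
    """Chance of at least one false positive among independent tests of true nulls."""
    return 1 - (1 - alpha) ** num_tests


def simulate_dredging(num_tests: int, alpha: float = 0.05,
                      num_studies: int = 10_000, seed: int = 0) -> float:
    """Fraction of simulated studies that can report at least one 'significant' result.

    Each study draws `num_tests` p-values from Uniform(0, 1), which is the
    distribution of a p-value when the null hypothesis holds (assumption).
    """
    rng = random.Random(seed)
    hits = 0
    for _ in range(num_studies):
        p_values = [rng.random() for _ in range(num_tests)]
        if min(p_values) <= alpha:  # report only the best-looking test
            hits += 1
    return hits / num_studies


print(f"analytic:  {family_wise_error_rate(20):.4f}")
print(f"simulated: {simulate_dredging(20):.4f}")
```

With 20 tests at a 5% level, the analytic rate is 1 - 0.95^20, roughly 0.64, even though each individual test keeps its nominal 5% false-positive risk.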

Statistical Hypothesis Testing

A statistical hypothesis test is a method of statistical inference used to decide whether the data provide sufficient evidence to reject a particular hypothesis. A statistical hypothesis test typically involves a calculation of a test statistic. Then a decision is made, either by comparing the test statistic to a critical value or equivalently by evaluating a ''p''-value computed from the test statistic. Roughly 100 specialized statistical tests are in use and noteworthy. History: While hypothesis testing was popularized early in the 20th century, early forms were used in the 1700s. The first use is credited to John Arbuthnot (1710), followed by Pierre-Simon Laplace (1770s), in analyzing the human sex ratio at birth. Choice of null hypothesis: Paul Meehl has argued that the epistemological importance of the choice of null hypothesis has gone largely unacknowledged. When the null hypothesis is predicted by the ...
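The equivalence between the critical-value rule and the ''p''-value rule can be checked numerically. This sketch assumes a one-sided, upper-tailed test whose statistic is standard normal under the null hypothesis. That choice of test and the function name `z_test_decisions` are assumptions for illustration only.

```python
from statistics import NormalDist


def z_test_decisions(z: float, alpha: float = 0.05) -> tuple[bool, bool]:
    """Return (reject by critical value, reject by p-value) for an upper-tailed z-test."""
    std_normal = NormalDist()  # mean 0, standard deviation 1
    critical_value = std_normal.inv_cdf(1 - alpha)  # about 1.645 for alpha = 0.05
    p_value = 1 - std_normal.cdf(z)                 # P(Z >= z) under the null
    return z >= critical_value, p_value <= alpha


# Hypothetical observed statistics; both rules give the same decision for each.
for z in (-1.0, 0.5, 1.6, 1.7, 2.5):
    by_critical, by_p = z_test_decisions(z)
    print(f"z = {z:4.1f}  critical-value rule: {by_critical}  p-value rule: {by_p}")
```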

Statistical Significance

In statistical hypothesis testing, a result has statistical significance when a result at least as "extreme" would be very infrequent if the null hypothesis were true. More precisely, a study's defined significance level, denoted by \alpha, is the probability of the study rejecting the null hypothesis, given that the null hypothesis is true; and the ''p''-value of a result, ''p'', is the probability of obtaining a result at least as extreme, given that the null hypothesis is true. The result is said to be ''statistically significant'', by the standards of the study, when p \le \alpha. The significance level for a study is chosen before data collection, and is typically set to 5% or much lower, depending on the field of study. In any experiment or observation that involves drawing a sample from a population, there is always the possibility that an observed effect would have occurred due to sampling error al ...

Fisher's Combined Probability Test

In statistics, Fisher's method, also known as Fisher's combined probability test, is a technique for data fusion or "meta-analysis" (analysis of analyses). It was developed by and named for Ronald Fisher. In its basic form, it is used to combine the results from several independence tests bearing upon the same overall hypothesis (H_0). Application to independent test statistics: Fisher's method combines extreme value probabilities from each test, commonly known as "''p''-values", into one test statistic (X^2) using the formula X^2_{2k} = -2\sum_{i=1}^k \ln p_i, where p_i is the ''p''-value for the ''i''th hypothesis test. When the ''p''-values tend to be small, the test statistic X^2 will be large, which suggests that the null hypotheses are not true for every test. When all the null hypotheses are true, and the p_i (or their corresponding test statistics) are independent, X^2 has a chi-squared dis ...
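A minimal sketch of the combination step follows. It relies on the standard result that, under the stated independence and null assumptions, the statistic follows a chi-squared distribution with 2k degrees of freedom. The excerpt is cut off before it states the degrees of freedom, so that detail is supplied here rather than quoted. The function name `fisher_combined` and the input values are hypothetical.

```python
from math import exp, log


def fisher_combined(p_values: list[float]) -> tuple[float, float]:
    """Return (X^2, combined p-value) for independent p-values via Fisher's method."""
    if not p_values or any(not 0 < p <= 1 for p in p_values):
        raise ValueError("p-values must be non-empty and lie in (0, 1]")
    k = len(p_values)
    x2 = -2.0 * sum(log(p) for p in p_values)

    # Upper tail of a chi-squared distribution with 2k degrees of freedom.
    # For even degrees of freedom it has the closed form
    #   exp(-x/2) * sum_{j=0}^{k-1} (x/2)^j / j!
    half = x2 / 2.0
    term = 1.0   # (x/2)^j / j! for j = 0
    total = 1.0
    for j in range(1, k):
        term *= half / j
        total += term
    return x2, exp(-half) * total


# Hypothetical p-values from three independent tests of the same hypothesis.
x2, combined = fisher_combined([0.08, 0.04, 0.10])
print(f"X^2 = {x2:.3f}, combined p = {combined:.4f}")
```

A quick sanity check on the design: with a single input the combined value equals that input, since exp(ln p) = p.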

Misuse Of P-values

Misuse of ''p''-values is common in scientific research and scientific education. ''p''-values are often used or interpreted incorrectly; the American Statistical Association states that ''p''-values can indicate how incompatible the data are with a specified statistical model. From a Neyman–Pearson hypothesis testing approach to statistical inferences, the data obtained by comparing the ''p''-value to a significance level will yield one of two results: either the null hypothesis is rejected (which however does not prove that the null hypothesis is ''false''), or the null hypothesis ''cannot'' be rejected at that significance level (which however does not prove that the null hypothesis is ''true''). From a Fisherian statistical testing approach to statistical inferences, a low ''p''-value means ''either'' that the null hypothesis is true and a highly improbable event has occurred ''or'' that the null hypothesis is false. Clarifications about ''p''-values: The following list ...

Likelihood Principle

In statistics, the likelihood principle is the proposition that, given a statistical model, all the evidence in a sample relevant to model parameters is contained in the likelihood function. A likelihood function arises from a probability density function considered as a function of its distributional parameterization argument. For example, consider a model which gives the probability density function f_X(x \mid \theta) of observable random variable X as a function of a parameter \theta. Then for a specific value x of X, the function \mathcal{L}(\theta \mid x) = f_X(x \mid \theta) is a likelihood function of \theta: it gives a measure of how "likely" any particular value of \theta is, if we know that X has the value x. The density function may be a density with respect to counting measure, i.e. a probability mass function. Two likelihood functions are ''equivalent'' if one is a scalar multiple of the other. The likelihood princip ...
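The notion of equivalent likelihood functions can be made concrete with a small numerical check. The two sampling schemes below are assumptions chosen for illustration and are not taken from the excerpt: a fixed 12 trials with 3 successes observed, and sampling until the 3rd success, which happens to arrive on trial 12.

```python
from math import comb


def binomial_likelihood(theta: float, successes: int = 3, trials: int = 12) -> float:
    """L(theta | x) when the number of trials is fixed in advance."""
    return comb(trials, successes) * theta**successes * (1 - theta) ** (trials - successes)


def negative_binomial_likelihood(theta: float, successes: int = 3, trials: int = 12) -> float:
    """L(theta | x) when sampling stops at the `successes`-th success, seen on trial `trials`."""
    return comb(trials - 1, successes - 1) * theta**successes * (1 - theta) ** (trials - successes)


# The ratio does not depend on theta, so one likelihood function is a scalar
# multiple of the other and the two are equivalent in the sense defined above.
for theta in (0.1, 0.25, 0.5, 0.9):
    ratio = binomial_likelihood(theta) / negative_binomial_likelihood(theta)
    print(f"theta = {theta:<4}  ratio = {ratio:.6f}")
```

The constant ratio is comb(12, 3) / comb(11, 2) = 220 / 55 = 4.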

Ronald Fisher

Sir Ronald Aylmer Fisher (17 February 1890 – 29 July 1962) was a British polymath who was active as a mathematician, statistician, biologist, geneticist, and academic. For his work in statistics, he has been described as "a genius who almost single-handedly created the foundations for modern statistical science" and "the single most important figure in 20th century statistics". In genetics, Fisher was the one to most comprehensively combine the ideas of Gregor Mendel and Charles Darwin, as his work used mathematics to combine Mendelian genetics and natural selection; this contributed to the revival of Darwinism in the early 20th-century revision of the theory of evolution known as the modern synthesis. For his contributions to biology, Richard Dawkins declared Fisher to be the greatest of Darwin's successors. He is also considered one of the founding fathers of Neo-Darwinism. According to statistician Jeffrey T. Leek, Fisher is the most in ...

Metascience

Metascience (also known as meta-research) is the use of scientific methodology to study science itself. Metascience seeks to increase the quality of scientific research while reducing inefficiency. It is also known as "research on research" and "the science of science", as it uses research methods to study how research is done and find where improvements can be made. Metascience concerns itself with all fields of research and has been described as "a bird's eye view of science". In the words of John Ioannidis, "Science is the best thing that has happened to human beings... but we can do it better." In 1966, an early meta-research paper examined the statistical methods of 295 papers published in ten high-profile medical journals. It found that "in almost 73% of the reports read... conclusions were drawn when the justification for these conclusions was invalid." Meta-research in the following decades found many methodological flaws, inefficiencies, and poor practices in research ac ...

Test Statistic

A test statistic is a quantity derived from the sample for statistical hypothesis testing (Berger, R. L.; Casella, G. (2001). ''Statistical Inference'', Duxbury Press, Second Edition, p. 374). A hypothesis test is typically specified in terms of a test statistic, considered as a numerical summary of a data-set that reduces the data to one value that can be used to perform the hypothesis test. In general, a test statistic is selected or defined in such a way as to quantify, within observed data, behaviours that would distinguish the null from the alternative hypothesis, where such an alternative is prescribed, or that would characterize the null hypothesis if there is no explicitly stated alternative hypothesis. An important property of a test statistic is that its sampling distribution under the null hypothesis must be calculable, either exactly or approximately, which allows ''p''-values to be calculated. A ''test statistic'' shares some of the same qualities of a descriptive stat ...

T-statistic

In statistics, the ''t''-statistic is the ratio of the difference between a parameter's estimated value and its assumed value to its standard error. It is used in hypothesis testing via Student's ''t''-test. The ''t''-statistic is used in a ''t''-test to determine whether to support or reject the null hypothesis. It is very similar to the z-score, with the difference that the ''t''-statistic is used when the sample size is small or the population standard deviation is unknown. For example, the ''t''-statistic is used in estimating the population mean from a sampling distribution of sample means if the population standard deviation is unknown. It is also used along with the ''p''-value when running hypothesis tests, where the ''p''-value gives the probability of obtaining results at least as extreme if the null hypothesis is true. Definition and features: Let \hat\beta be an estimator of parameter ''β'' in some statistical model. Then a ''t''-statistic for this parameter is any quantity of the form t_{\hat\beta} = \frac{\hat\beta - \beta_0}{\operatorname{s.e.}(\hat\beta)}, where ...
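For the common special case of a population mean, the ratio described above can be sketched as follows. The estimator is taken to be the sample mean and its standard error is estimated as s / sqrt(n). Both are assumptions for this one-sample illustration, and the function name `t_statistic` and the data are hypothetical.

```python
from math import sqrt
from statistics import mean, stdev


def t_statistic(sample: list[float], assumed_mean: float) -> float:
    """One-sample t-statistic: (estimate - assumed value) / standard error."""
    n = len(sample)
    if n < 2:
        raise ValueError("need at least two observations to estimate the standard error")
    standard_error = stdev(sample) / sqrt(n)  # stdev uses the n - 1 denominator
    return (mean(sample) - assumed_mean) / standard_error


# Hypothetical measurements, tested against an assumed population mean of 2.
t = t_statistic([1.0, 2.0, 3.0, 4.0, 5.0], assumed_mean=2.0)
print(f"t = {t:.4f}")  # t = 1.4142
```

Turning this value into a ''p''-value requires the Student's ''t'' distribution with n - 1 degrees of freedom, which is left out of this sketch.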

P-factor

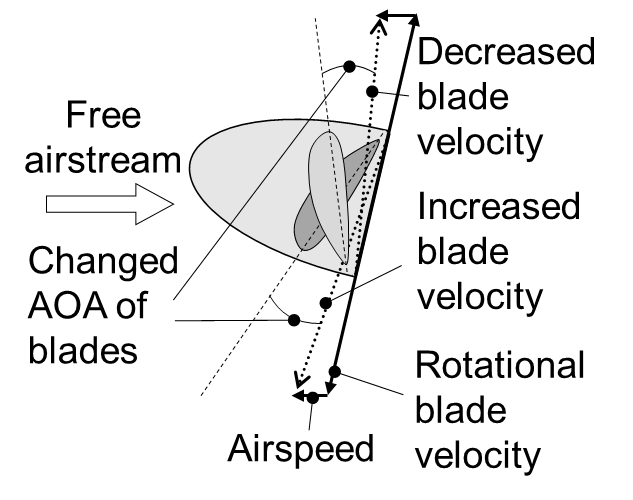

P-factor, also known as asymmetric blade effect and asymmetric disc effect, is an aerodynamic phenomenon experienced by a moving propeller, wherein the propeller's center of thrust moves off-center when the aircraft is at a high angle of attack. This shift in the location of the center of thrust will exert a yawing moment on the aircraft, causing it to yaw slightly to one side. A rudder input is required to counteract the yawing tendency. Causes: When a propeller aircraft is flying at cruise speed in level flight, the propeller disc is perpendicular to the relative airflow through the propeller. Each of the propeller blades contacts the air at the same angle and speed, and thus the thrust produced is evenly distributed across the propeller. However, at lower speeds, the aircraft will typically be in a nose-high attitude, with the propeller disc rotated slightly toward the horizontal. This has two effects. Firstly, propeller bla ...