
Orthonormal Vectors

In linear algebra, two vectors in an inner product space are orthonormal if they are orthogonal (perpendicular) unit vectors. A set of vectors forms an orthonormal set if all vectors in the set are mutually orthogonal and all have unit length. An orthonormal set which forms a basis is called an orthonormal basis.

Intuitive overview: The construction of orthogonality of vectors is motivated by a desire to extend the intuitive notion of perpendicular vectors to higher-dimensional spaces. In the Cartesian plane, two vectors are said to be perpendicular if the angle between them is 90° (i.e. if they form a right angle). This definition can be formalized in Cartesian space by defining the dot product and specifying that two vectors in the plane are orthogonal if their dot product is zero. Similarly, the construction of the norm of a vector is motivated by a desire to extend the intuitive notion of the length of a vector to higher-dimensional spaces. In Cartesian s ...
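The definition above can be checked numerically. The following is a minimal sketch, assuming the ordinary Euclidean dot product on real coordinate vectors; the helper names `dot` and `is_orthonormal` and the tolerance `tol` are illustrative choices, not part of the excerpt.

```python
import math

def dot(u, v):
    """Euclidean dot product of two equal-length sequences."""
    return sum(a * b for a, b in zip(u, v))

def is_orthonormal(vectors, tol=1e-9):
    """Return True if every vector has unit length and all distinct pairs are orthogonal.

    The tolerance absorbs floating-point rounding; its value is an assumption.
    """
    for i, u in enumerate(vectors):
        for j, v in enumerate(vectors):
            expected = 1.0 if i == j else 0.0  # <u, u> = 1 and <u, v> = 0 for u != v
            if abs(dot(u, v) - expected) > tol:
                return False
    return True

# The standard axes rotated by 45 degrees are still orthonormal.
s = 1 / math.sqrt(2)
rotated = [(s, s), (-s, s)]
print(is_orthonormal(rotated))

# (1, 0) and (1, 1) are neither orthogonal nor both of unit length.
print(is_orthonormal([(1, 0), (1, 1)]))
```

Checking every pair, including each vector with itself, covers both conditions at once: the diagonal entries test unit length and the off-diagonal entries test orthogonality.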

Linear Algebra

Linear algebra is the branch of mathematics concerning linear equations such as $a_1x_1+\cdots +a_nx_n=b$, linear maps such as $(x_1, \ldots, x_n) \mapsto a_1x_1+\cdots +a_nx_n$, and their representations in vector spaces and through matrices. Linear algebra is central to almost all areas of mathematics. For instance, linear algebra is fundamental in modern presentations of geometry, including for defining basic objects such as lines, planes and rotations. Also, functional analysis, a branch of mathematical analysis, may be viewed as the application of linear algebra to spaces of functions. Linear algebra is also used in most sciences and fields of engineering, because it allows modeling many natural phenomena, and computing efficiently with such models. For nonlinear systems, which cannot be modeled with linear algebra, it is often used for dealing with first-order approximations, using the fact that the differential of a multivariate function at a point is the linear ma ...

Cotangent

In mathematics, the trigonometric functions (also called circular functions, angle functions or goniometric functions) are real functions which relate an angle of a right-angled triangle to ratios of two side lengths. They are widely used in all sciences that are related to geometry, such as navigation, solid mechanics, celestial mechanics, geodesy, and many others. They are among the simplest periodic functions, and as such are also widely used for studying periodic phenomena through Fourier analysis. The trigonometric functions most widely used in modern mathematics are the sine, the cosine, and the tangent. Their reciprocals are respectively the cosecant, the secant, and the cotangent, which are less used. Each of these six trigonometric functions has a corresponding inverse function, and an analog among the hyperbolic functions. The oldest definitions of trigonometric functions, related to right-angle triangles, define them only for acute angles. To extend the sine and cosi ...

Standard Basis

In mathematics, the standard basis (also called natural basis or canonical basis) of a coordinate vector space (such as $\mathbb{R}^n$ or $\mathbb{C}^n$) is the set of vectors whose components are all zero, except one that equals 1. For example, in the case of the Euclidean plane $\mathbb{R}^2$ formed by the pairs of real numbers, the standard basis is formed by the vectors $\mathbf{e}_x = (1,0),\quad \mathbf{e}_y = (0,1)$. Similarly, the standard basis for the three-dimensional space $\mathbb{R}^3$ is formed by the vectors $\mathbf{e}_x = (1,0,0),\quad \mathbf{e}_y = (0,1,0),\quad \mathbf{e}_z=(0,0,1)$. Here the vector $\mathbf{e}_x$ points in the $x$ direction, the vector $\mathbf{e}_y$ points in the $y$ direction, and the vector $\mathbf{e}_z$ points in the $z$ direction. There are several common notations for standard-basis vectors. These vectors are sometimes written with a hat to emphasize their status as unit vectors (standard unit vectors). These vectors are a basis in the sense that any other vector can ...

Spectral Theorem

In mathematics, particularly linear algebra and functional analysis, a spectral theorem is a result about when a linear operator or matrix can be diagonalized (that is, represented as a diagonal matrix in some basis). This is extremely useful because computations involving a diagonalizable matrix can often be reduced to much simpler computations involving the corresponding diagonal matrix. The concept of diagonalization is relatively straightforward for operators on finite-dimensional vector spaces but requires some modification for operators on infinite-dimensional spaces. In general, the spectral theorem identifies a class of linear operators that can be modeled by multiplication operators, which are as simple as one can hope to find. In more abstract language, the spectral theorem is a statement about commutative C*-algebras. See also spectral theory for a historical perspective. Examples of operators to which the spectral theorem appl ...

Axiom Of Choice

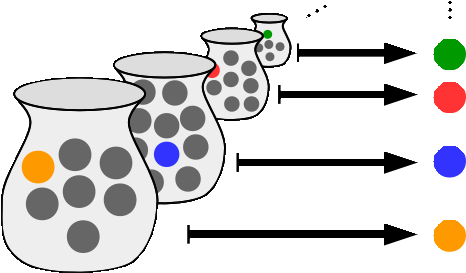

In mathematics, the axiom of choice, or AC, is an axiom of set theory equivalent to the statement that a Cartesian product of a collection of non-empty sets is non-empty. Informally put, the axiom of choice says that given any collection of sets, each containing at least one element, it is possible to construct a new set by arbitrarily choosing one element from each set, even if the collection is infinite. Formally, it states that for every indexed family $(S_i)_{i \in I}$ of nonempty sets, there exists an indexed set $(x_i)_{i \in I}$ such that $x_i \in S_i$ for every $i \in I$. The axiom of choice was formulated in 1904 by Ernst Zermelo in order to formalize his proof of the well-ordering theorem. In many cases, a set arising from choosing elements arbitrarily can be made without invoking the axiom of choice; this is, in particular, the case if the number of sets from which to choose the elements is finite, or if a canonical rule on how to choose the elements is available – some distinguishin ...

Constructive Proof

In mathematics, a constructive proof is a method of proof that demonstrates the existence of a mathematical object by creating or providing a method for creating the object. This is in contrast to a non-constructive proof (also known as an existence proof or pure existence theorem), which proves the existence of a particular kind of object without providing an example. To avoid confusion with the stronger concept that follows, such a constructive proof is sometimes called an effective proof. A constructive proof may also refer to the stronger concept of a proof that is valid in constructive mathematics. Constructivism is a mathematical philosophy that rejects all proof methods that involve the existence of objects that are not explicitly built. This excludes, in particular, the use of the law of the excluded middle, the axiom of infinity, and the axiom of choice, and induces a different meaning for some ter ...

Linearly Independent

In the theory of vector spaces, a set of vectors is said to be linearly dependent if there is a nontrivial linear combination of the vectors that equals the zero vector. If no such linear combination exists, then the vectors are said to be linearly independent. These concepts are central to the definition of dimension. A vector space can be of finite dimension or infinite dimension depending on the maximum number of linearly independent vectors. The definition of linear dependence and the ability to determine whether a subset of vectors in a vector space is linearly dependent are central to determining the dimension of a vector space.

Definition: A sequence of vectors $\mathbf{v}_1, \mathbf{v}_2, \dots, \mathbf{v}_k$ from a vector space is said to be linearly dependent if there exist scalars $a_1, a_2, \dots, a_k$, not all zero, such that $a_1\mathbf{v}_1 + a_2\mathbf{v}_2 + \cdots + a_k\mathbf{v}_k = \mathbf{0}$, where $\mathbf{0}$ denotes the zero vector. This implies that at least one of the scalars is nonzero, say $a_1\ne 0$, and ...
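For coordinate vectors, linear dependence can be tested by row reduction: the vectors are independent exactly when the matrix having them as rows has rank equal to the number of vectors. The sketch below assumes vectors with integer or rational entries and uses exact `Fraction` arithmetic to avoid rounding; the function names `rank` and `is_linearly_independent` are illustrative.

```python
from fractions import Fraction

def rank(vectors):
    """Rank of the matrix whose rows are the given vectors, by Gaussian elimination."""
    rows = [[Fraction(x) for x in v] for v in vectors]
    n_cols = len(rows[0]) if rows else 0
    rank_count = 0
    for col in range(n_cols):
        # Find a row at or below the current pivot position with a nonzero entry.
        pivot = next(
            (r for r in range(rank_count, len(rows)) if rows[r][col] != 0), None
        )
        if pivot is None:
            continue  # no pivot in this column
        rows[rank_count], rows[pivot] = rows[pivot], rows[rank_count]
        # Eliminate the entries below the pivot.
        for r in range(rank_count + 1, len(rows)):
            factor = rows[r][col] / rows[rank_count][col]
            rows[r] = [a - factor * b for a, b in zip(rows[r], rows[rank_count])]
        rank_count += 1
    return rank_count

def is_linearly_independent(vectors):
    """True when no nontrivial linear combination of the vectors is zero."""
    return rank(vectors) == len(vectors)

print(is_linearly_independent([(1, 0), (0, 1)]))
# The third vector is the sum of the first two, so this set is dependent.
print(is_linearly_independent([(1, 1, 0), (0, 1, 1), (1, 2, 1)]))
```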

Linear Map

In mathematics, and more specifically in linear algebra, a linear map (also called a linear mapping, linear transformation, vector space homomorphism, or in some contexts linear function) is a mapping $V \to W$ between two vector spaces that preserves the operations of vector addition and scalar multiplication. The same names and the same definition are also used for the more general case of modules over a ring; see Module homomorphism. If a linear map is a bijection then it is called a linear isomorphism. In the case where $V = W$, a linear map is called a (linear) endomorphism. Sometimes the term "linear operator" refers to this case, but the term can have different meanings for different conventions: for example, it can be used to emphasize that $V$ and $W$ are real vector spaces (not necessarily with $V = W$), or it can be used to emphasize that $V$ is a function space, which is a common convention in functional analysis. Some ...

Diagonalizable Matrix

In linear algebra, a square matrix $A$ is called diagonalizable or non-defective if it is similar to a diagonal matrix, i.e., if there exists an invertible matrix $P$ and a diagonal matrix $D$ such that $P^{-1}AP = D$, or equivalently $A = PDP^{-1}$. (Such $P$ and $D$ are not unique.) For a finite-dimensional vector space $V$, a linear map $T:V\to V$ is called diagonalizable if there exists an ordered basis of $V$ consisting of eigenvectors of $T$. These definitions are equivalent: if $T$ has a matrix representation $A = PDP^{-1}$ as above, then the column vectors of $P$ form a basis consisting of eigenvectors of $T$, and the diagonal entries of $D$ are the corresponding eigenvalues of $T$; with respect to this eigenvector basis, $T$ is represented by $D$. Diagonalization is the process of finding the above $P$ and $D$. Diagonalizable matrices and maps are especially easy for computations, once their eigenvalues and eigenvectors are known. One can raise a diagonal matrix $D$ to a power by simply raising the diagonal entries to that power, and the determi ...
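As a small worked illustration of why a factorization $A = PDP^{-1}$ simplifies computation, the sketch below uses a hand-picked 2×2 example (not taken from the excerpt): $A$ has eigenvalues 3 and 1 with eigenvectors $(1, 1)$ and $(1, -1)$, which form the columns of $P$. Powers of $A$ then follow from $A^k = PD^kP^{-1}$. The helper names are illustrative, and exact fractions are used so the equalities hold without rounding.

```python
from fractions import Fraction

def matmul(X, Y):
    """Product of two matrices given as lists of rows."""
    return [
        [sum(X[i][k] * Y[k][j] for k in range(len(Y))) for j in range(len(Y[0]))]
        for i in range(len(X))
    ]

def diag_power(D, k):
    """Raise a diagonal matrix to the k-th power entry by entry."""
    n = len(D)
    return [[D[i][j] ** k if i == j else 0 for j in range(n)] for i in range(n)]

# Hand-picked example: columns of P are eigenvectors of A.
A = [[2, 1], [1, 2]]
P = [[1, 1], [1, -1]]
D = [[3, 0], [0, 1]]
half = Fraction(1, 2)
P_inv = [[half, half], [half, -half]]

def power_via_diagonalization(k):
    """Compute A**k as P * D**k * P_inv."""
    return matmul(matmul(P, diag_power(D, k)), P_inv)

# Reconstructing A from its factors, then cubing it two different ways.
print(matmul(matmul(P, D), P_inv) == A)
print(power_via_diagonalization(3) == matmul(A, matmul(A, A)))
```

Only the two diagonal entries are exponentiated, regardless of $k$; the repeated matrix products of the direct approach are replaced by two fixed multiplications with $P$ and $P^{-1}$.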

Inner Product

In mathematics, an inner product space (or, rarely, a Hausdorff pre-Hilbert space) is a real vector space or a complex vector space with an operation called an inner product. The inner product of two vectors in the space is a scalar, often denoted with angle brackets such as in $\langle a, b \rangle$. Inner products allow formal definitions of intuitive geometric notions, such as lengths, angles, and orthogonality (zero inner product) of vectors. Inner product spaces generalize Euclidean vector spaces, in which the inner product is the dot product or scalar product of Cartesian coordinates. Inner product spaces of infinite dimension are widely used in functional analysis. Inner product spaces over the field of complex numbers are sometimes referred to as unitary spaces. The first usage of the concept of a vector space with an inner product is due to Giuseppe Peano, in ...