
Elementary Row Operations

In mathematics, an elementary matrix is a matrix which differs from the identity matrix by a single elementary row operation. The elementary matrices generate the general linear group $\mathrm{GL}_n(F)$ when $F$ is a field. Left multiplication (pre-multiplication) by an elementary matrix represents an elementary row operation, while right multiplication (post-multiplication) represents an elementary column operation. Elementary row operations are used in Gaussian elimination to reduce a matrix to row echelon form. They are also used in Gauss–Jordan elimination to further reduce the matrix to reduced row echelon form. There are three types of elementary matrices, which correspond to three types of row operations (respectively, column operations). Row switching: a row within the matrix can be switched with another row, written $R_i \leftrightarrow R_j$. Row multiplication: each element in a row can be multiplied by a non-zero constant. It is also known as scaling a ...
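The two operations named in the excerpt can be sketched in plain Python. This is a minimal illustration, not a library API: the helper names `identity`, `matmul`, `row_switch`, and `row_scale` are made up for this example, and rows are indexed from 0.

```python
# Hypothetical helpers: each elementary matrix is the identity with one
# elementary row operation applied to it.

def identity(n):
    """Return the n x n identity matrix as a list of rows."""
    return [[1 if i == j else 0 for j in range(n)] for i in range(n)]

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def row_switch(n, i, j):
    """Elementary matrix for R_i <-> R_j."""
    e = identity(n)
    e[i], e[j] = e[j], e[i]
    return e

def row_scale(n, i, c):
    """Elementary matrix that multiplies row i by the non-zero constant c."""
    if c == 0:
        raise ValueError("the scaling constant must be non-zero")
    e = identity(n)
    e[i][i] = c
    return e

A = [[1, 2], [3, 4]]
swapped_rows = matmul(row_switch(2, 0, 1), A)  # left multiplication acts on rows
swapped_cols = matmul(A, row_switch(2, 0, 1))  # right multiplication acts on columns
scaled_row = matmul(row_scale(2, 1, 5), A)     # second row multiplied by 5
```

Multiplying on the left by the switching matrix exchanges the rows of `A`, while multiplying on the right by the same matrix exchanges its columns, which matches the row/column distinction described above.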

Mathematics

Mathematics is an area of knowledge that includes the topics of numbers, formulas and related structures, shapes and the spaces in which they are contained, and quantities and their changes. These topics are represented in modern mathematics with the major subdisciplines of number theory, algebra, geometry, and analysis, respectively. There is no general consensus among mathematicians about a common definition for their academic discipline. Most mathematical activity involves the discovery of properties of abstract objects and the use of pure reason to prove them. These objects consist of either abstractions from nature or, in modern mathematics, entities that are stipulated to have certain properties, called axioms. A proof consists of a succession of applications of deductive rules to already established results. These results include previously proved theorems, axioms, and, in the case of abstraction from nature, some basic properties that are considered true starting poin ...

Diagonal Matrix

In linear algebra, a diagonal matrix is a matrix in which the entries outside the main diagonal are all zero; the term usually refers to square matrices. Elements of the main diagonal can be either zero or nonzero. An example of a 2×2 diagonal matrix is $\begin{bmatrix} 3 & 0 \\ 0 & 2 \end{bmatrix}$, while an example of a 3×3 diagonal matrix is $\begin{bmatrix} 6 & 0 & 0 \\ 0 & 0 & 0 \\ 0 & 0 & 0 \end{bmatrix}$. An identity matrix of any size, or any multiple of it (a scalar matrix), is a diagonal matrix. A diagonal matrix is sometimes called a scaling matrix, since matrix multiplication with it results in changing scale (size). Its determinant is the product of its diagonal values. As stated above, a diagonal matrix is a matrix in which all off-diagonal entries are zero. That is, the matrix $D = (d_{i,j})$ with $n$ columns and $n$ rows is diagonal if $\forall i,j \in \{1, 2, \ldots, n\},\ i \ne j \implies d_{i,j} = 0$. However, the main diagonal entries are unrestricted. The term diagonal matrix ma ...
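As a small sketch of the definition and of the determinant and scaling remarks above, the following Python snippet checks the off-diagonal condition and multiplies the diagonal entries. The function names are illustrative only, and the two matrices are the 2×2 and 3×3 examples from the excerpt.

```python
def is_diagonal(m):
    """True if every entry off the main diagonal is zero."""
    return all(m[i][j] == 0
               for i in range(len(m))
               for j in range(len(m[i]))
               if i != j)

def diagonal_determinant(m):
    """Product of the diagonal entries; valid only for a square diagonal matrix."""
    if not is_diagonal(m):
        raise ValueError("matrix is not diagonal")
    product = 1
    for i in range(len(m)):
        product *= m[i][i]
    return product

def apply_diagonal(m, vector):
    """Multiply a diagonal matrix by a vector: each coordinate is scaled separately."""
    return [m[i][i] * vector[i] for i in range(len(vector))]

D2 = [[3, 0], [0, 2]]
D3 = [[6, 0, 0], [0, 0, 0], [0, 0, 0]]

det_D2 = diagonal_determinant(D2)   # 3 * 2
det_D3 = diagonal_determinant(D3)   # 6 * 0 * 0
scaled = apply_diagonal(D2, [1, 1]) # x stretched by 3, y by 2
```

The zero diagonal entries of the 3×3 example are allowed by the definition; they simply make its determinant zero.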

LU Decomposition

In numerical analysis and linear algebra, lower–upper (LU) decomposition or factorization factors a matrix as the product of a lower triangular matrix and an upper triangular matrix (see matrix decomposition). The product sometimes includes a permutation matrix as well. LU decomposition can be viewed as the matrix form of Gaussian elimination. Computers usually solve square systems of linear equations using LU decomposition, and it is also a key step when inverting a matrix or computing the determinant of a matrix. The LU decomposition was introduced by the Polish mathematician Tadeusz Banachiewicz in 1938. Let $A$ be a square matrix. An LU factorization refers to the factorization of $A$, with proper row and/or column orderings or permutations, into two factors, a lower triangular matrix $L$ and an upper triangular matrix $U$: $A = LU$. In the lower triangular matrix all elements above the diagonal are zero; in the upper triangular matrix, all ...
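One way to see LU decomposition as the matrix form of Gaussian elimination is the sketch below. It makes two assumptions that the excerpt does not state: it uses the Doolittle convention (ones on the diagonal of $L$), and it performs no row exchanges, so it fails on a zero pivot where a practical routine would bring in a permutation matrix. The names `lu_decompose` and `matmul` are hypothetical.

```python
from fractions import Fraction

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

def lu_decompose(a):
    """Return (L, U) with A = L U, L unit lower triangular, U upper triangular.

    Sketch only: no pivoting, exact arithmetic with Fraction.
    """
    n = len(a)
    L = [[Fraction(int(i == j)) for j in range(n)] for i in range(n)]
    U = [[Fraction(x) for x in row] for row in a]
    for k in range(n):
        if U[k][k] == 0:
            raise ZeroDivisionError("zero pivot: this sketch does no row exchanges")
        for i in range(k + 1, n):
            factor = U[i][k] / U[k][k]   # multiplier used to eliminate U[i][k]
            L[i][k] = factor             # the multiplier is recorded in L
            for j in range(k, n):
                U[i][j] -= factor * U[k][j]
    return L, U

A = [[4, 3], [6, 3]]
L, U = lu_decompose(A)
```

Each elimination multiplier is stored in $L$, so multiplying the two factors back together recovers the original matrix.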

Matrix (mathematics)

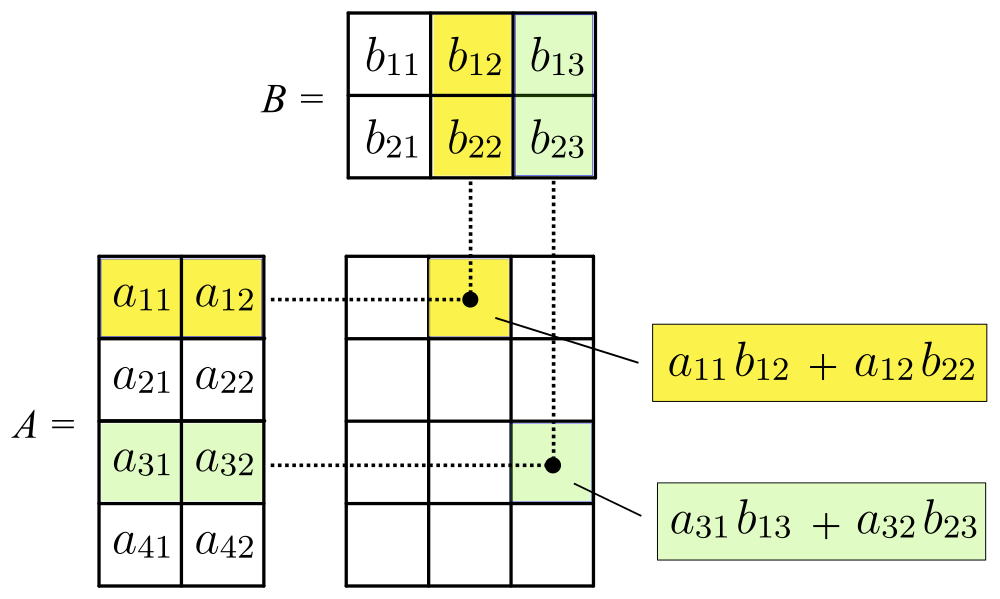

In mathematics, a matrix (plural matrices) is a rectangular array or table of numbers, symbols, or expressions, arranged in rows and columns, which is used to represent a mathematical object or a property of such an object. For example, $\begin{bmatrix} 1 & 9 & -13 \\ 20 & 5 & -6 \end{bmatrix}$ is a matrix with two rows and three columns. This is often referred to as a "two by three matrix", a "$2 \times 3$ matrix", or a matrix of dimension $2 \times 3$. Without further specifications, matrices represent linear maps, and allow explicit computations in linear algebra. Therefore, the study of matrices is a large part of linear algebra, and most properties and operations of abstract linear algebra can be expressed in terms of matrices. For example, matrix multiplication represents composition of linear maps. Not all matrices are related to linear algebra. This is, in particular, the case in graph theory, of incidence matrices, and adjacency matrices. This article focuses on matrices related to linear algebra, an ...

System Of Linear Equations

In mathematics, a system of linear equations (or linear system) is a collection of one or more linear equations involving the same variables. For example, $\begin{cases} 3x+2y-z=1 \\ 2x-2y+4z=-2 \\ -x+\frac{1}{2}y-z=0 \end{cases}$ is a system of three equations in the three variables $x, y, z$. A solution to a linear system is an assignment of values to the variables such that all the equations are simultaneously satisfied. A solution to the system above is given by the ordered triple $(x,y,z)=(1,-2,-2)$, since it makes all three equations valid. The word "system" indicates that the equations are to be considered collectively, rather than individually. In mathematics, the theory of linear systems is the basis and a fundamental part of linear algebra, a subject which is used in most parts of modern mathematics. Computational algorithms for finding the solutions are an important part of numerical linear algebra, and play a prominent role in engineering, physics, chemistry, computer science, and economics. ...
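The claim that $(1,-2,-2)$ solves the example system can be checked mechanically. The sketch below assumes the coefficient of $y$ in the third equation is $\frac{1}{2}$, which is the value consistent with the stated solution; the helper name `satisfies` is made up for this example.

```python
from fractions import Fraction

# One row of coefficients per equation, in the variable order (x, y, z).
coefficients = [
    [3, 2, -1],
    [2, -2, 4],
    [-1, Fraction(1, 2), -1],  # assumed coefficient 1/2 on y
]
right_hand_side = [1, -2, 0]

def satisfies(coeffs, rhs, candidate):
    """True if the candidate makes every equation hold simultaneously."""
    return all(
        sum(c * v for c, v in zip(row, candidate)) == b
        for row, b in zip(coeffs, rhs)
    )

is_solution = satisfies(coefficients, right_hand_side, (1, -2, -2))
origin_is_solution = satisfies(coefficients, right_hand_side, (0, 0, 0))
```

The check treats the equations collectively: a candidate that satisfies only some of them is rejected.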

Linear Algebra

Linear algebra is the branch of mathematics concerning linear equations such as $a_1x_1+\cdots +a_nx_n=b$, linear maps such as $(x_1, \ldots, x_n) \mapsto a_1x_1+\cdots +a_nx_n$, and their representations in vector spaces and through matrices. Linear algebra is central to almost all areas of mathematics. For instance, linear algebra is fundamental in modern presentations of geometry, including for defining basic objects such as lines, planes and rotations. Also, functional analysis, a branch of mathematical analysis, may be viewed as the application of linear algebra to spaces of functions. Linear algebra is also used in most sciences and fields of engineering, because it allows modeling many natural phenomena, and computing efficiently with such models. For nonlinear systems, which cannot be modeled with linear algebra, it is often used for dealing with first-order approximations, using the fact that the differential of a multivariate function at a point is the line ...

Steinberg Relations

In algebraic K-theory, a field of mathematics, the Steinberg group $\operatorname{St}(A)$ of a ring $A$ is the universal central extension of the commutator subgroup of the stable general linear group of $A$. It is named after Robert Steinberg, and it is connected with lower K-groups, notably $K_2$ and $K_3$. Abstractly, given a ring $A$, the Steinberg group $\operatorname{St}(A)$ is the universal central extension of the commutator subgroup of the stable general linear group (the commutator subgroup is perfect and so has a universal central extension). A concrete presentation using generators and relations is as follows. Elementary matrices, i.e. matrices of the form $e_{pq}(\lambda) := \mathbf{1} + a_{pq}(\lambda)$, where $\mathbf{1}$ is the identity matrix, $a_{pq}(\lambda)$ is the matrix with $\lambda$ in the $(p,q)$-entry and zeros elsewhere, and $p \neq q$, satisfy the following relations, called the Steinberg relations: ...

Triangular Matrix

In mathematics, a triangular matrix is a special kind of square matrix. A square matrix is called lower triangular if all the entries above the main diagonal are zero. Similarly, a square matrix is called upper triangular if all the entries below the main diagonal are zero. Because matrix equations with triangular matrices are easier to solve, they are very important in numerical analysis. By the LU decomposition algorithm, an invertible matrix may be written as the product of a lower triangular matrix $L$ and an upper triangular matrix $U$ if and only if all its leading principal minors are non-zero. A matrix of the form $L = \begin{bmatrix} \ell_{1,1} & & & & 0 \\ \ell_{2,1} & \ell_{2,2} & & & \\ \ell_{3,1} & \ell_{3,2} & \ddots & & \\ \vdots & \vdots & \ddots & \ddots & \\ \ell_{n,1} & \ell_{n,2} & \ldots & \ell_{n,n-1} & \ell_{n,n} \end{bmatrix}$ is called a lower triangular matrix or left triangular matrix, ...
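The remark that triangular systems are easier to solve can be illustrated with forward substitution, a standard technique that the excerpt does not name explicitly. The sketch below solves $Lx = b$ for a lower triangular $L$ one unknown at a time; the function name and the sample data are invented for illustration.

```python
from fractions import Fraction

def forward_substitution(L, b):
    """Solve L x = b for a lower triangular matrix L with non-zero diagonal.

    Row i involves only x[0..i], so each unknown follows from the ones
    already computed.
    """
    x = []
    for i in range(len(L)):
        if L[i][i] == 0:
            raise ZeroDivisionError("zero on the diagonal: the matrix is singular")
        known = sum(L[i][j] * x[j] for j in range(i))  # contribution of solved unknowns
        x.append((Fraction(b[i]) - known) / L[i][i])
    return x

L = [[2, 0, 0],
     [1, 3, 0],
     [4, -1, 5]]
b = [4, 11, 10]
x = forward_substitution(L, b)
```

No elimination step is needed, which is why a general system is often first reduced to triangular factors.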

Shear Mapping

In plane geometry, a shear mapping is a linear map that displaces each point in a fixed direction, by an amount proportional to its signed distance from the line that is parallel to that direction and goes through the origin. This type of mapping is also called shear transformation, transvection, or just shearing. An example is the mapping that takes any point with coordinates (x,y) to the point (x + 2y,y). In this case, the displacement is horizontal by a factor of 2 where the fixed line is the x-axis, and the signed distance is the y coordinate. Note that points on opposite sides of the reference line are displaced in opposite directions. Shear mappings must not be confused with rotations. Applying a shear map to a set of points of the plane will change all angles between them (except straight angles), and the length of any line segment that is not parallel to the direction of displacement. Therefore, it will usually distort the shape of a geometric figure, for example t ...
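The example mapping $(x, y) \mapsto (x + 2y, y)$ is short enough to write out directly. In this sketch the function name `shear_x` and its `factor` parameter are illustrative choices; the default factor of 2 is the one used in the excerpt.

```python
def shear_x(point, factor=2):
    """Horizontal shear: shift x by factor times the signed distance y."""
    x, y = point
    return (x + factor * y, y)

above = shear_x((1, 1))     # point above the x-axis moves right
below = shear_x((1, -1))    # point below the x-axis moves left
on_axis = shear_x((5, 0))   # points on the x-axis do not move

# Corners of the unit square, listed counter-clockwise.
square = [(0, 0), (1, 0), (1, 1), (0, 1)]
sheared_square = [shear_x(p) for p in square]
```

The bottom edge of the square stays in place while the top edge slides sideways, so the right angles at the corners are lost even though every point keeps its height.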

Scalar (mathematics)

A scalar is an element of a field which is used to define a vector space. In linear algebra, real numbers or generally elements of a field are called scalars and relate to vectors in an associated vector space through the operation of scalar multiplication (defined in the vector space), in which a vector can be multiplied by a scalar in the defined way to produce another vector. Generally speaking, a vector space may be defined by using any field instead of real numbers (such as complex numbers). Then scalars of that vector space will be elements of the associated field (such as complex numbers). A scalar product operation (not to be confused with scalar multiplication) may be defined on a vector space, allowing two vectors to be multiplied in the defined way to produce a scalar. A vector space equipped with a scalar product is called an inner product space. A quantity described by multiple scalars, such as having both direction and magnitude, is calle ...

Determinant

In mathematics, the determinant is a scalar value that is a function of the entries of a square matrix. It characterizes some properties of the matrix and the linear map represented by the matrix. In particular, the determinant is nonzero if and only if the matrix is invertible and the linear map represented by the matrix is an isomorphism. The determinant of a product of matrices is the product of their determinants (the preceding property is a corollary of this one). The determinant of a matrix $A$ is denoted $\det(A)$, $\det A$, or $|A|$. The determinant of a $2 \times 2$ matrix is $\begin{vmatrix} a & b \\ c & d \end{vmatrix} = ad - bc$, and the determinant of a $3 \times 3$ matrix is $\begin{vmatrix} a & b & c \\ d & e & f \\ g & h & i \end{vmatrix} = aei + bfg + cdh - ceg - bdi - afh$. The determinant of a matrix can be defined in several equivalent ways. Leibniz formula expresses the determinant as a sum of signed products of matrix entries such that each summand is the product of different entries, and the number of these summands is n!, the factorial o ...
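The explicit formulas and the Leibniz sum can be compared in a short sketch. All function names below are hypothetical, the sample matrices are invented, and the Leibniz version is written for clarity rather than speed, since it visits all n! permutations.

```python
from itertools import permutations

def det2(m):
    """ad - bc for a 2 x 2 matrix."""
    (a, b), (c, d) = m
    return a * d - b * c

def det3(m):
    """aei + bfg + cdh - ceg - bdi - afh for a 3 x 3 matrix."""
    (a, b, c), (d, e, f), (g, h, i) = m
    return a*e*i + b*f*g + c*d*h - c*e*g - b*d*i - a*f*h

def permutation_sign(p):
    """+1 for an even permutation, -1 for an odd one (counted by inversions)."""
    inversions = sum(1 for i in range(len(p))
                     for j in range(i + 1, len(p)) if p[i] > p[j])
    return -1 if inversions % 2 else 1

def det_leibniz(m):
    """Sum over all permutations of signed products, one entry per row and column."""
    n = len(m)
    total = 0
    for p in permutations(range(n)):
        term = permutation_sign(p)
        for row in range(n):
            term *= m[row][p[row]]
        total += term
    return total

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]

A = [[2, 0, 1], [1, 3, 2], [1, 1, 4]]
B = [[1, 2, 0], [0, 1, 1], [3, 0, 1]]
```

For these matrices the explicit 3×3 formula and the Leibniz sum agree, and the determinant of the product `matmul(A, B)` equals the product of the two determinants, as the multiplicative property states.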